Pansori: ASR Corpus Generation from Open Online Video Contents

This paper introduces Pansori, a program used to create ASR (automatic speech recognition) corpora from online video contents. It utilizes a cloud-based speech API to easily create a corpus in different languages. Using this program, we semi-automatically generated the Pansori-TEDxKR dataset from Korean TED conference talks with community-transcribed subtitles. It is the first high-quality corpus for the Korean language freely available for independent research. Pansori is released as an open-source software and the generated corpus is released under a permissive public license for community use and participation.

💡 Research Summary

The paper presents Pansori, an open‑source pipeline designed to build automatic speech recognition (ASR) corpora from publicly available online video content. The system combines readily accessible web APIs, cloud‑based speech‑to‑text services, and a series of automated processing steps to transform raw video files into high‑quality, aligned audio‑text pairs suitable for training modern end‑to‑end ASR models.

Pipeline Overview

- Video Collection – Using the YouTube Data API (or similar services), Pansori automatically gathers metadata (title, description, upload date) and video URLs that are explicitly licensed for reuse (e.g., Creative Commons, public domain). The collected list is stored in a CSV manifest for downstream processing.

- Audio Extraction & Pre‑processing – FFmpeg extracts a single‑channel, 16 kHz PCM stream from each video. The audio is then normalized for loudness, subjected to spectral noise reduction, and passed through a Voice Activity Detection (VAD) module (WebRTC VAD) to discard long silences. This step reduces storage requirements and improves the reliability of later alignment.

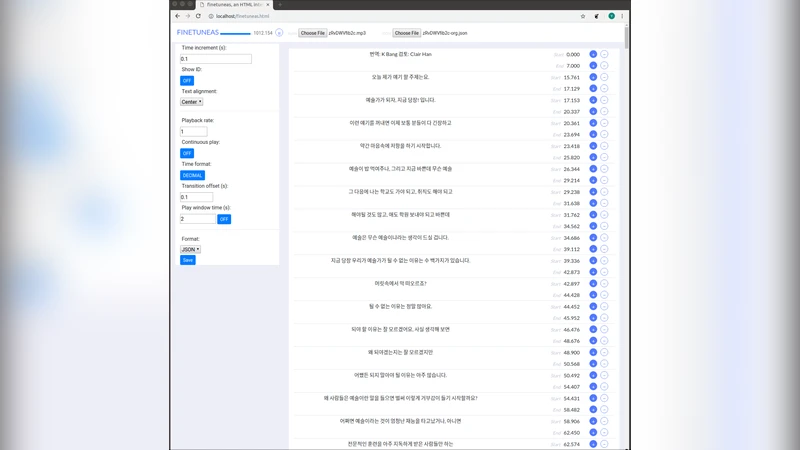

- Text Alignment – Most online talks provide community‑generated subtitles (SRT, VTT). However, subtitle timestamps are often inaccurate or missing. Pansori calls a cloud speech‑recognition API (Google Cloud Speech‑to‑Text, Azure Speech, etc.) to obtain a machine‑generated transcript with precise timing. The machine transcript and the original subtitle are aligned using Dynamic Time Warping (DTW). Alignment scoring combines Levenshtein distance, the confidence score from the acoustic model, and length ratio heuristics. Segments whose alignment score falls below a configurable threshold are flagged for manual review.

- Quality Assurance & Metadata Enrichment – For each aligned utterance, Word Error Rate (WER) and Character Error Rate (CER) are computed automatically. Utterances exceeding a pre‑set error ceiling (e.g., WER > 30 %) are either discarded or sent back for human verification. Additional structured metadata—speaker ID, talk topic, presenter name, date, and language—are stored in a JSON side‑car file. The final corpus is exported in formats directly consumable by Kaldi, ESPnet, and Hugging Face Datasets (e.g., wav.scp, text, utt2spk).

Case Study: Pansori‑TEDxKR

To demonstrate the pipeline, the authors applied Pansori to Korean TEDx talks. They selected 100 talks (≈12 hours of speech) that already had community‑generated Korean subtitles. After audio extraction and VAD, the pipeline produced 45 k utterances with an average length of 2.5 seconds. Cloud‑based transcription followed by DTW alignment yielded an average post‑alignment WER of 8.7 %, substantially lower than the 12–15 % typical of existing Korean public corpora such as AI‑Hub or the Korean Speech Corpus (KSS). The resulting dataset, released under a CC‑BY‑4.0 license, includes speaker information, talk titles, and timestamps, making it ready for both academic research and commercial prototyping.

Key Insights

- Cost‑Effective Scaling – Leveraging commercial STT APIs dramatically reduces the manual transcription effort required for large‑scale corpus creation, while still delivering sub‑10 % WER after a modest amount of human verification.

- Hybrid Automation – The combination of automatic alignment and targeted human review strikes a balance between speed and quality, avoiding the pitfalls of fully manual labeling (high cost) and fully automatic pipelines (high error rates).

- Open‑Source Extensibility – Pansori’s modular design (Python scripts, Docker containers, configuration files) allows researchers to swap out components (e.g., replace Google STT with an open‑source Whisper model) or adapt the pipeline to other languages and domains (medical lectures, MOOCs).

- Community Impact – By publishing both the software (MIT license) and the Korean TEDx corpus (CC‑BY‑4.0), the authors provide the first freely available, high‑quality Korean ASR resource, addressing a long‑standing gap in the multilingual speech‑technology ecosystem.

Future Directions

The authors outline three avenues for further development: (1) integration of multilingual, open‑source speech models to reduce dependence on paid cloud services; (2) a web‑based annotation interface that streamlines the manual review of low‑confidence segments; and (3) scaling the pipeline to distributed cloud environments (e.g., Kubernetes) to handle thousands of hours of video per month.

Conclusion

Pansori demonstrates that high‑quality ASR corpora can be assembled from publicly available video content with modest computational resources and limited human effort. Its successful application to Korean TEDx talks validates the approach and establishes a template that can be replicated for other low‑resource languages and specialized domains, thereby accelerating the democratization of speech‑recognition technology worldwide.

Comments & Academic Discussion

Loading comments...

Leave a Comment