GP-CNAS: Convolutional Neural Network Architecture Search with Genetic Programming

Convolutional neural networks (CNNs) are effective at solving difficult problems like visual recognition, speech recognition and natural language processing. However, performance gain comes at the cost of laborious trial-and-error in designing deeper CNN architectures. In this paper, a genetic programming (GP) framework for convolutional neural network architecture search, abbreviated as GP-CNAS, is proposed to automatically search for optimal CNN architectures. GP-CNAS encodes CNNs as trees where leaf nodes (GP terminals) are selected residual blocks and non-leaf nodes (GP functions) specify the block assembling procedure. Our tree-based representation enables easy design and flexible implementation of genetic operators. Specifically, we design a dynamic crossover operator that strikes a balance between exploration and exploitation, which emphasizes CNN complexity at early stage and CNN diversity at later stage. Therefore, the desired CNN architecture with balanced depth and width can be found within limited trials. Moreover, our GP-CNAS framework is highly compatible with other manually-designed and NAS-generated block types as well. Experimental results on the CIFAR-10 dataset show that GP-CNAS is competitive among the state-of-the-art automatic and semi-automatic NAS algorithms.

💡 Research Summary

The paper introduces GP‑CNAS, a novel neural architecture search (NAS) framework that leverages Genetic Programming (GP) to automatically discover high‑performing convolutional neural network (CNN) architectures. Instead of the common graph‑based or binary‑string encodings used in many evolutionary NAS methods, GP‑CNAS represents each candidate CNN as a tree. The leaf nodes (terminals) are four pre‑defined residual blocks (b1‑b4) taken from the literature, while the internal nodes (primitive functions) consist of four operators: “^2” (double the number of filters), “^3” (triple the number of filters), “+” (concatenate two blocks, increasing depth) and “str” (double the stride, performing down‑sampling). By traversing the tree from the root to each leaf, the cumulative effect of these operators determines the final depth, width, and down‑sampling pattern of the network, allowing a compact yet expressive description of a wide variety of CNN topologies.

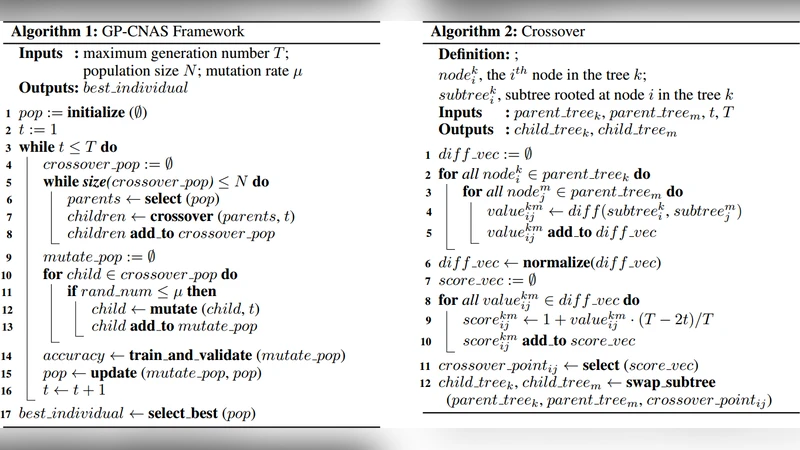

The evolutionary process begins with a population generated by the classic “ramped‑half‑and‑half” method, ensuring a diverse set of trees with varying depths. Selection uses a dynamic tournament size that grows with the generation number, gradually shifting pressure from random exploration toward exploitation of the best individuals. The core innovation is a dynamic crossover operator. For each generation, the algorithm computes a score for every possible pair of crossover points based on the current generation index and the size difference of the two sub‑trees. Early generations assign high scores to pairs with large size differences, encouraging the creation of deeper and wider networks (complexity‑driven search). In later generations the scoring favours pairs with similar sizes, promoting subtle structural variations and diversity (exploration‑driven search). This adaptive mechanism balances the need to quickly reach sufficient model capacity with the later requirement to fine‑tune architecture.

Mutation is applied with a fixed probability; a randomly chosen leaf node is replaced by a newly grown subtree using the “grow” method, injecting fresh structural motifs while keeping the overall search space tractable. Fitness is measured as the validation accuracy on a held‑out split of CIFAR‑10 after a short training schedule, and an elitism strategy guarantees that the best individuals survive to the next generation.

Experiments on CIFAR‑10 demonstrate that GP‑CNAS can locate architectures achieving over 94 % test accuracy while evaluating only a few hundred candidates—a budget comparable to or smaller than many reinforcement‑learning‑based NAS approaches (e.g., MetaQNN, BlockQNN) and evolutionary baselines (e.g., Regularized Evolution). The discovered models are competitive with state‑of‑the‑art hand‑crafted and automatically‑designed networks, confirming the effectiveness of the tree‑based representation and the dynamic crossover scheme. Moreover, because the terminals are simply residual blocks, the framework can be extended to incorporate other manually designed blocks or blocks generated by separate NAS processes, offering high modularity.

The authors highlight three main contributions: (1) a tree‑based encoding that naturally captures both depth and width variations; (2) a generation‑aware crossover operator that transitions the search focus from model complexity to architectural diversity; and (3) a semi‑automatic NAS system that remains compatible with a broad range of block types. Limitations include the computational cost of evaluating each candidate (still GPU‑intensive) and potential inefficiencies when trees become very deep, which may affect crossover and mutation speed. Future work is suggested to integrate weight‑sharing or surrogate models to reduce evaluation overhead and to test the method on larger datasets and more diverse search spaces. Overall, GP‑CNAS showcases how classic GP concepts, when adapted with dynamic operators, can provide a powerful and flexible alternative to existing NAS methodologies.

Comments & Academic Discussion

Loading comments...

Leave a Comment