Sound Event Localization and Detection of Overlapping Sources Using Convolutional Recurrent Neural Networks

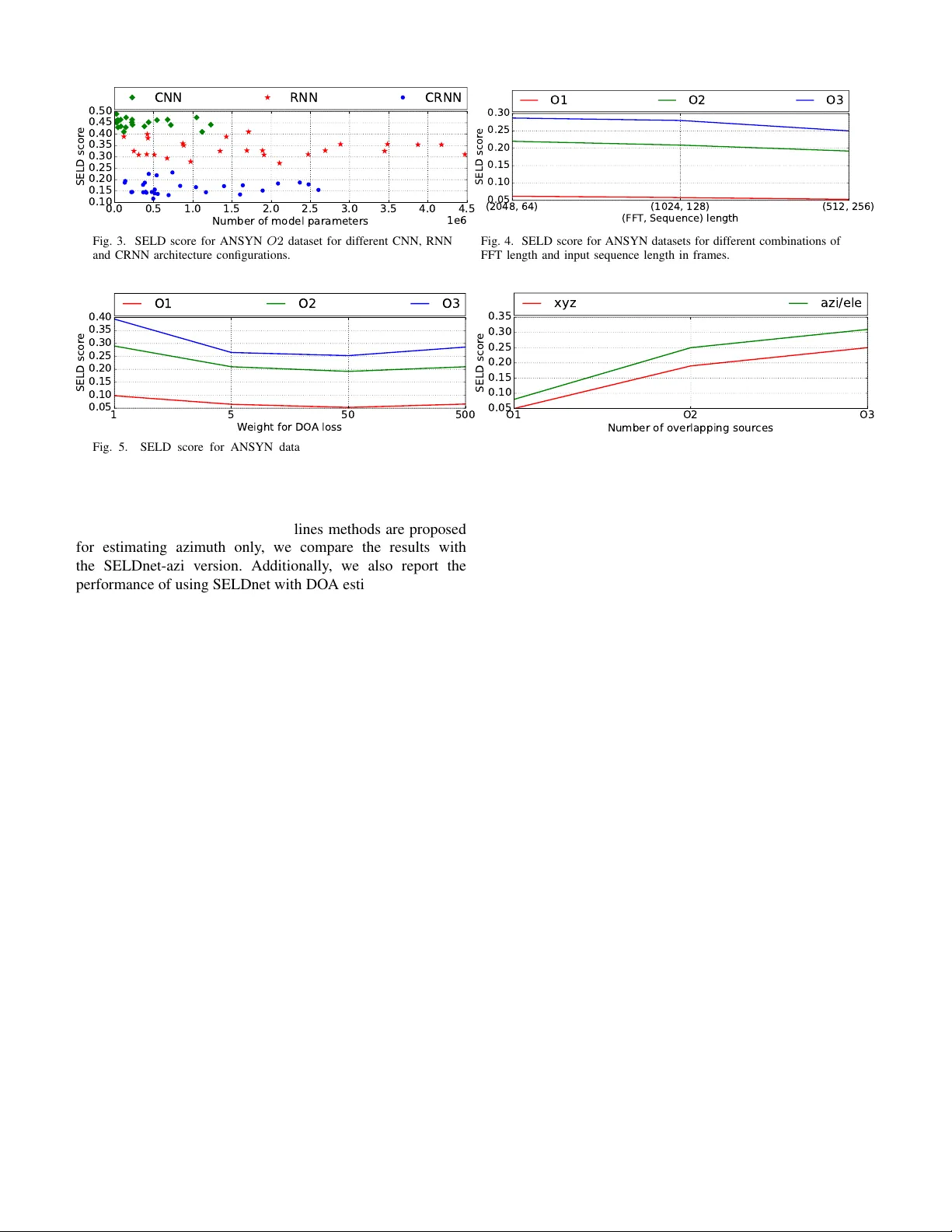

In this paper, we propose a convolutional recurrent neural network for joint sound event localization and detection (SELD) of multiple overlapping sound events in three-dimensional (3D) space. The proposed network takes a sequence of consecutive spec…

Authors: Sharath Adavanne, Archontis Politis, Joonas Nikunen