Systematic Parsing of X.509: Eradicating Security Issues with a Parse Tree

X.509 certificate parsing and validation is a critical task that has proven consistently unreliable, with practical attacks reported at a steady rate over the last 10 years. In this work we analyze the X.509 standard and provide a grammar description of it amenable to the automated generation of a parser with strong termination guarantees, providing unambiguous input parsing. We report the results of analyzing an 11M X.509 certificate dump of the HTTPS servers running on the entire IPv4 space, showing that 21.5% of the certificates in use are syntactically invalid. We compare the results of our parsing against 7 widely used TLS libraries, showing that 631k to 1,156k syntactically incorrect certificates are deemed valid by them (5.7%–10.5%), including instances with security-critical mis-parsings. We demonstrate the criticality of such mis-parsings by exploiting one of the syntactic flaws found in existing certificates to perform an impersonation attack.

💡 Research Summary

The paper addresses the long‑standing problem that X.509 certificate parsing and validation remain error‑prone despite a decade of reported attacks. Existing TLS implementations rely on handcrafted parsers for ASN.1 structures, which leads to ambiguous, non‑deterministic handling of many syntactic constructs (e.g., OPTIONAL fields, SET ordering, ANY DEFINED BY, size constraints). The authors propose a systematic, language‑theoretic approach: they translate the X.509 specification, originally expressed in ASN.1, into an equivalent Extended Backus‑Naur Form (EBNF) grammar that is as close to a right‑linear (regular) grammar as possible while still covering all valid certificates up to 4 GiB.
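
To make the parsing problem concrete, here is a minimal sketch of a strict DER tag-length-value header reader. It is not the authors' generated parser. The names `DerError` and `read_tlv` are hypothetical, and the 4-byte cap on the length field is an assumption chosen to mirror the 4 GiB bound mentioned above. The point is that every permissive choice a handwritten parser makes here (non-minimal lengths, indefinite lengths, overruns) opens an ambiguity.

```python
from typing import Tuple


class DerError(ValueError):
    """Raised when the input is not strict DER (hypothetical helper type)."""


def read_tlv(data: bytes, offset: int = 0) -> Tuple[int, bytes, int]:
    """Return (tag, value, next_offset) for the TLV that starts at `offset`."""
    if offset + 2 > len(data):
        raise DerError("truncated header")
    tag = data[offset]
    if tag & 0x1F == 0x1F:
        raise DerError("high-tag-number form is not handled in this sketch")

    first = data[offset + 1]
    pos = offset + 2
    if first < 0x80:
        # Short form: the byte itself is the length.
        length = first
    elif first == 0x80:
        raise DerError("indefinite length is forbidden in DER")
    else:
        n = first & 0x7F
        if n > 4:  # assumption: cap the length field at 4 bytes
            raise DerError("length field wider than 4 bytes")
        if pos + n > len(data):
            raise DerError("truncated length field")
        length_bytes = data[pos:pos + n]
        if length_bytes[0] == 0:
            raise DerError("length has a leading zero byte")
        length = int.from_bytes(length_bytes, "big")
        if length < 0x80:
            raise DerError("long form used where short form is required")
        pos += n

    end = pos + length
    if end > len(data):
        raise DerError("value runs past the end of the input")
    return tag, data[pos:end], end
```

A lenient reader would accept `30 81 03 ...` as a three-byte SEQUENCE. The strict reader above rejects it because DER requires the shortest possible length encoding.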

Key technical contributions include:

- Formal analysis of X.509 – The authors identify which parts of the standard make the language at least context‑sensitive, and they show how to refactor those parts (e.g., by fixing ordering of SET elements, replacing dynamic ANY constructs with static sub‑grammars, and encoding size ranges as bounded repetitions) to obtain a decidable grammar.

- Predicated grammar design – By adding semantic predicates that enforce constraints such as “SIZE(1..MAX)” or “MIN/MAX” bounds, the grammar remains unambiguous and can be processed by deterministic parsers.

-

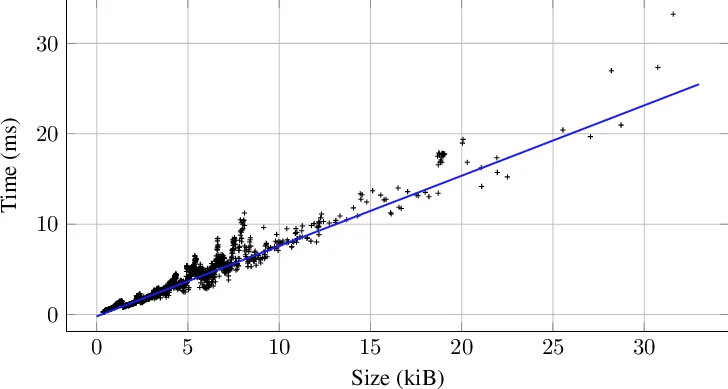

Automatic parser generation – Using ANTLR4, they generate a parser that operates on a byte alphabet (256 symbols). All productions are right‑linear or bounded, guaranteeing constant‑space auxiliary memory and linear‑time parsing for any certificate that fits the size limit.

- Large‑scale empirical evaluation – The authors scan the entire IPv4 address space for HTTPS servers, collect 11 million X.509 certificates, and run their parser on the whole set. They discover that 21.5 % of the certificates are syntactically invalid according to the formal grammar.

- Comparison with seven mainstream TLS libraries – The same certificate corpus is fed to OpenSSL, BoringSSL, GnuTLS, LibreSSL, NSS, Java’s JSSE, and WolfSSL. Depending on the library, between 631 k and 1 156 k syntactically malformed certificates (5.7 %–10.5 % of the total) are nevertheless accepted as valid. The discrepancies arise from differing handling of optional extensions, length fields, and ASN.1 encoding quirks.

- Demonstration of real‑world impact – The authors exploit one of the identified parsing flaws (incorrect handling of the BasicConstraints or KeyUsage bitmask) to craft a forged certificate that is accepted by OpenSSL and BoringSSL. By presenting this certificate during a TLS handshake, they achieve successful impersonation of an arbitrary domain, effectively a man‑in‑the‑middle attack.
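
As an illustration of the kind of syntactic rule a bitmask field such as KeyUsage is subject to, the sketch below checks the content octets of a DER BIT STRING that carries named bits. It applies two general DER rules: unused bits must be zero, and trailing zero bits must be removed. It is not claimed to reproduce the specific flaw the authors exploited, and the function name `check_named_bit_string` is hypothetical.

```python
def check_named_bit_string(value: bytes) -> int:
    """Validate DER BIT STRING content octets for a named-bit list.

    `value` is the content of the BIT STRING (after tag and length).
    Returns the number of significant bits, or raises ValueError.
    """
    if not value:
        raise ValueError("BIT STRING needs at least the unused-bits octet")
    unused = value[0]
    bits = value[1:]
    if unused > 7:
        raise ValueError("unused-bits count must be in 0..7")
    if not bits:
        if unused != 0:
            raise ValueError("empty bit string must declare 0 unused bits")
        return 0

    last = bits[-1]
    # DER: the padding bits at the end of the last octet must all be zero.
    if last & ((1 << unused) - 1):
        raise ValueError("unused bits must be zero in DER")
    # DER, named bits: trailing zero bits are removed before encoding,
    # so the last significant bit has to be 1.
    if not (last >> unused) & 1:
        raise ValueError("trailing zero bits must be removed in DER")
    return len(bits) * 8 - unused
```

For example, a KeyUsage with only keyCertSign and cRLSign set (bits 5 and 6) has the canonical content octets `01 06`. The variant `00 06` describes the same bits, but a strict parser rejects it because it is not the unique DER encoding.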

The paper’s findings have several implications. First, they show that syntactic validation alone, when performed with a rigorously defined grammar, can catch a substantial fraction of certificates that current libraries mistakenly accept. Second, the work shows that many security incidents attributed to “X.509 bugs” are not cryptographic weaknesses but parsing ambiguities that can be eliminated by formal methods. Third, the generated parser’s linear‑time, constant‑memory profile makes it practical for deployment in high‑throughput servers without sacrificing performance.
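
The first implication rests on a differential comparison: count the inputs that the reference parser rejects but a given library accepts. A minimal sketch of that bookkeeping follows. The function `false_accepts` and the stand-in predicates in the usage example are hypothetical toys, not the authors' tooling and not real library bindings.

```python
from typing import Callable, Dict, Iterable

# A verdict function takes raw certificate bytes and returns True if accepted.
Verdict = Callable[[bytes], bool]


def false_accepts(
    certs: Iterable[bytes],
    reference: Verdict,
    libraries: Dict[str, Verdict],
) -> Dict[str, int]:
    """Count, per library, inputs rejected by `reference` but accepted by it."""
    counts = {name: 0 for name in libraries}
    for der in certs:
        if reference(der):
            continue  # syntactically valid: not a false accept for anyone
        for name, accepts in libraries.items():
            if accepts(der):
                counts[name] += 1
    return counts


# Toy usage with stand-in predicates (hypothetical, for illustration only).
def strict(der: bytes) -> bool:
    return len(der) >= 2 and der[0] == 0x30 and der[1] < 0x80


def lenient(der: bytes) -> bool:
    return len(der) >= 1 and der[0] == 0x30


sample = [b"\x30\x00", b"\x30\x80", b"\x31\x00"]
result = false_accepts(sample, strict, {"lenient": lenient, "strict": strict})
```

Dividing each count by the corpus size gives per-library proportions of the kind reported in the summary.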

Finally, the authors outline future directions: integrating policy‑level constraints (e.g., name‑constraints, path‑building rules) into the grammar, extending the approach to other PKI formats such as OpenPGP, and encouraging TLS library developers to adopt formally generated parsers as a baseline. By bridging formal language theory with real‑world PKI engineering, the paper provides a concrete path toward more robust and interoperable certificate validation across the Internet.
