Markov modeling of online inter-arrival times

In this paper, we investigate the communication patterns arising on social media, in particular the series of events generated by a single user. While the distribution of inter-event times is often approximated by power-law density functions, a debate persists on the nature of the underlying model that explains the observed distribution. In the present work, we propose an intuitive explanation for the observed dependence between subsequent waiting times. Our contribution is twofold. First, we separate the short waiting times, which fall outside the scope of power-law distributions, from the long ones. Second, we enhance the model by introducing a two-state Markovian process to incorporate memory.

💡 Research Summary

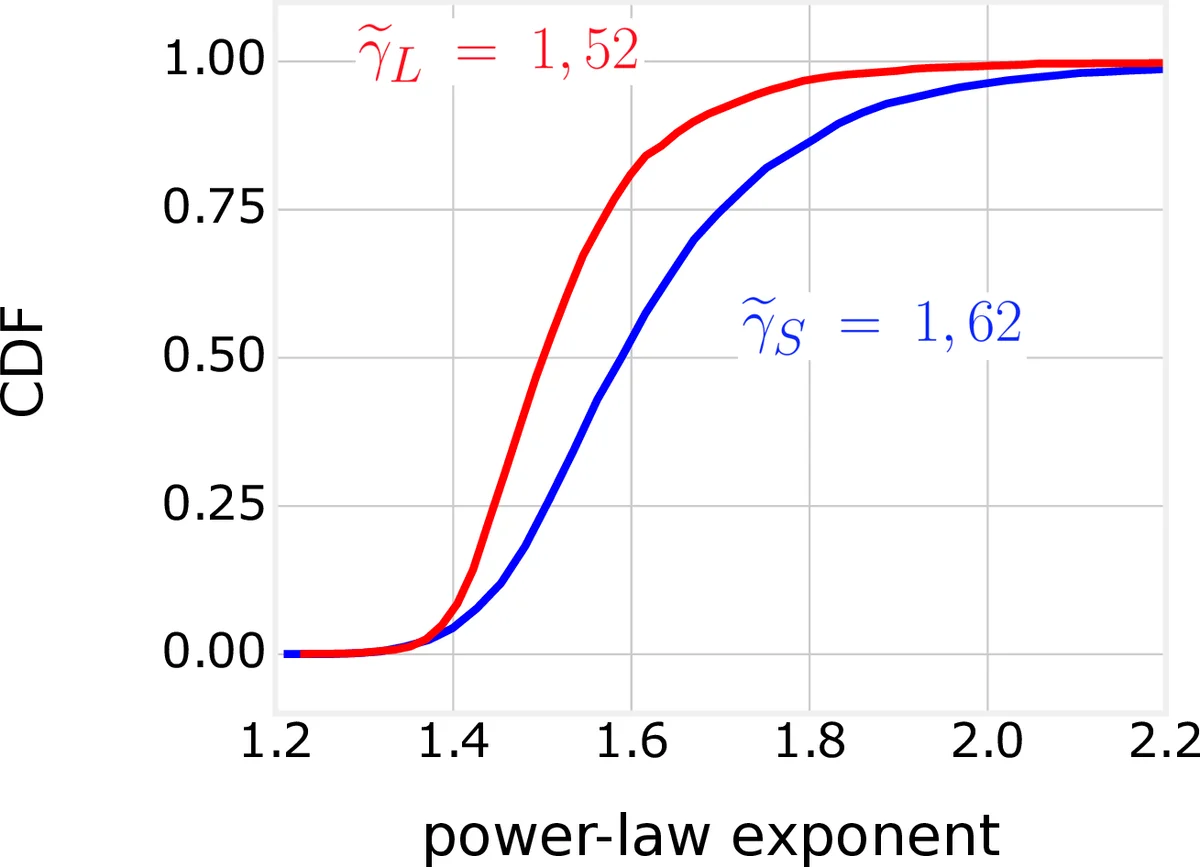

The paper investigates temporal patterns of user activity on social media, focusing on the inter‑event times (the intervals between consecutive posts or comments). While many previous studies have modeled these intervals with heavy‑tailed power‑law distributions, the authors argue that such models overlook a crucial dependence between successive waiting times. They propose a two‑stage approach. First, they introduce a threshold t_{thres} that separates short waiting times (below the threshold) from long waiting times (above the threshold). Second, they model the sequence of short and long intervals as a two‑state Markov chain, where the state S represents an “intensive” period (short intervals) and L represents an “occasional” period (long intervals).
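The two stages can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the 60-second threshold, and the sample intervals are hypothetical, and treating an interval exactly equal to the threshold as long is an assumption. The transition probabilities are estimated by simple counting of consecutive state pairs.

```python
from collections import Counter

def classify(intervals, t_thres):
    """Label each inter-event time 'S' (short) if below t_thres, else 'L' (long).

    Assumption: an interval exactly equal to t_thres counts as long.
    """
    return ["S" if t < t_thres else "L" for t in intervals]

def transition_matrix(states):
    """Estimate P(next | current) by counting consecutive state pairs.

    Returns a dict keyed by (current, next); a row with no observations
    is filled with NaN.
    """
    counts = Counter(zip(states, states[1:]))
    matrix = {}
    for cur in "SL":
        total = counts[(cur, "S")] + counts[(cur, "L")]
        for nxt in "SL":
            matrix[(cur, nxt)] = counts[(cur, nxt)] / total if total else float("nan")
    return matrix

# Made-up inter-event times in seconds, with a hypothetical 60 s threshold.
intervals = [5, 8, 3, 400, 6, 4, 900, 1200, 7, 2]
states = classify(intervals, t_thres=60)
P = transition_matrix(states)

for cur in "SL":
    for nxt in "SL":
        print(f"p_{{{nxt}|{cur}}} = {P[(cur, nxt)]:.3f}")
```

In this toy sequence, four of the six transitions leaving S return to S, so the estimated probability of staying in the intensive state is 2/3. Each row of the estimated matrix sums to 1 by construction.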

Data were collected from Twitter and Reddit over a six‑month period (April–September 2016). Only highly active users (≥ 1000 events) were retained, and activity outside 08:00–22:00 was discarded to reduce circadian effects. This yielded 4,796 Twitter users and 3,081 Reddit users. For each user‑day pair the authors computed the series of inter‑event times T₁,…,Tₙ. Empirically, they observed that short intervals tend to follow other short intervals more often than would be expected under independence; they quantified this with a ratio r = p_{SS}/(p_S·p_S) > 1.
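As a rough illustration of the ratio r, the sketch below estimates p_S as the fraction of short intervals and p_{SS} as the fraction of consecutive pairs in which both intervals are short. These estimators, the function name, the threshold value, and the sample data are assumptions made for the example; the summary does not specify how the authors pooled the counts across users and days.

```python
def short_short_ratio(intervals, t_thres):
    """Return r = p_SS / (p_S * p_S) for one series of inter-event times.

    p_S  : fraction of intervals shorter than t_thres (assumed estimator)
    p_SS : fraction of consecutive pairs in which both intervals are short
    r > 1 means short intervals follow short intervals more often than
    independence would predict.
    """
    if len(intervals) < 2:
        raise ValueError("need at least two intervals to form a pair")
    is_short = [t < t_thres for t in intervals]
    p_s = sum(is_short) / len(is_short)
    if p_s == 0:
        return float("nan")  # no short intervals: the ratio is undefined
    pairs = list(zip(is_short, is_short[1:]))
    p_ss = sum(a and b for a, b in pairs) / len(pairs)
    return p_ss / (p_s * p_s)

# Made-up series (seconds) in which short intervals cluster together.
intervals = [3, 5, 4, 6, 2, 500, 800, 900, 4, 3]
r = short_short_ratio(intervals, t_thres=60)
print(f"r = {r:.3f}")
```

Here 7 of 10 intervals are short and 5 of 9 consecutive pairs are short-short, so r = (5/9) / 0.49, which is above 1 and consistent with the clustering the authors report.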

The Markov model is defined as follows. Let X_i∈{S,L} denote the type of interval T_i (short or long). Transition probabilities are collected in a matrix P with entries p_{S|S}, p_{L|S}, p_{S|L}, p_{L|L}. Conditional on the current state, the next interval’s distribution is modeled by a mixture: for a short‑state the next interval is drawn either from a uniform distribution on
