Learning Dynamic Embeddings from Temporal Interactions

Modeling a sequence of interactions between users and items (e.g., products, posts, or courses) is crucial in domains such as e-commerce, social networking, and education to predict future interactions. Representation learning presents an attractive solution to model the dynamic evolution of user and item properties, where each user/item can be embedded in a Euclidean space and its evolution can be modeled by dynamic changes in its embedding. However, existing embedding methods either generate static embeddings, treat users and items independently, or are not scalable. Here we present JODIE, a coupled recurrent model to jointly learn the dynamic embeddings of users and items from a sequence of user-item interactions. JODIE has three components. First, the update component updates the user and item embeddings after each interaction from their previous embeddings, using two mutually recursive recurrent neural networks. Second, a novel projection component is trained to forecast the embedding of users at any future time. Finally, the prediction component directly predicts the embedding of the item in a future interaction. For models that learn from a sequence of interactions, traditional training data batching cannot be done due to complex user-user dependencies. Therefore, we present a novel batching algorithm called t-Batch that generates time-consistent batches of training data that can run in parallel, giving massive speed-up. We conduct six experiments on two prediction tasks (future interaction prediction and state change prediction) using four real-world datasets. We show that JODIE outperforms six state-of-the-art algorithms in these tasks by up to 22.4%. Moreover, we show that JODIE is highly scalable and up to 9.2x faster than comparable models. As an additional experiment, we illustrate that JODIE can predict student drop-out from courses five interactions in advance.

💡 Research Summary

The paper introduces JODIE (Joint Dynamic User‑Item Embeddings), a novel framework for learning time‑evolving representations of both users and items from a continuous stream of interactions. Existing approaches either produce static embeddings, treat users and items independently, or cannot scale to large interaction logs. JODIE addresses these shortcomings with three tightly integrated components.

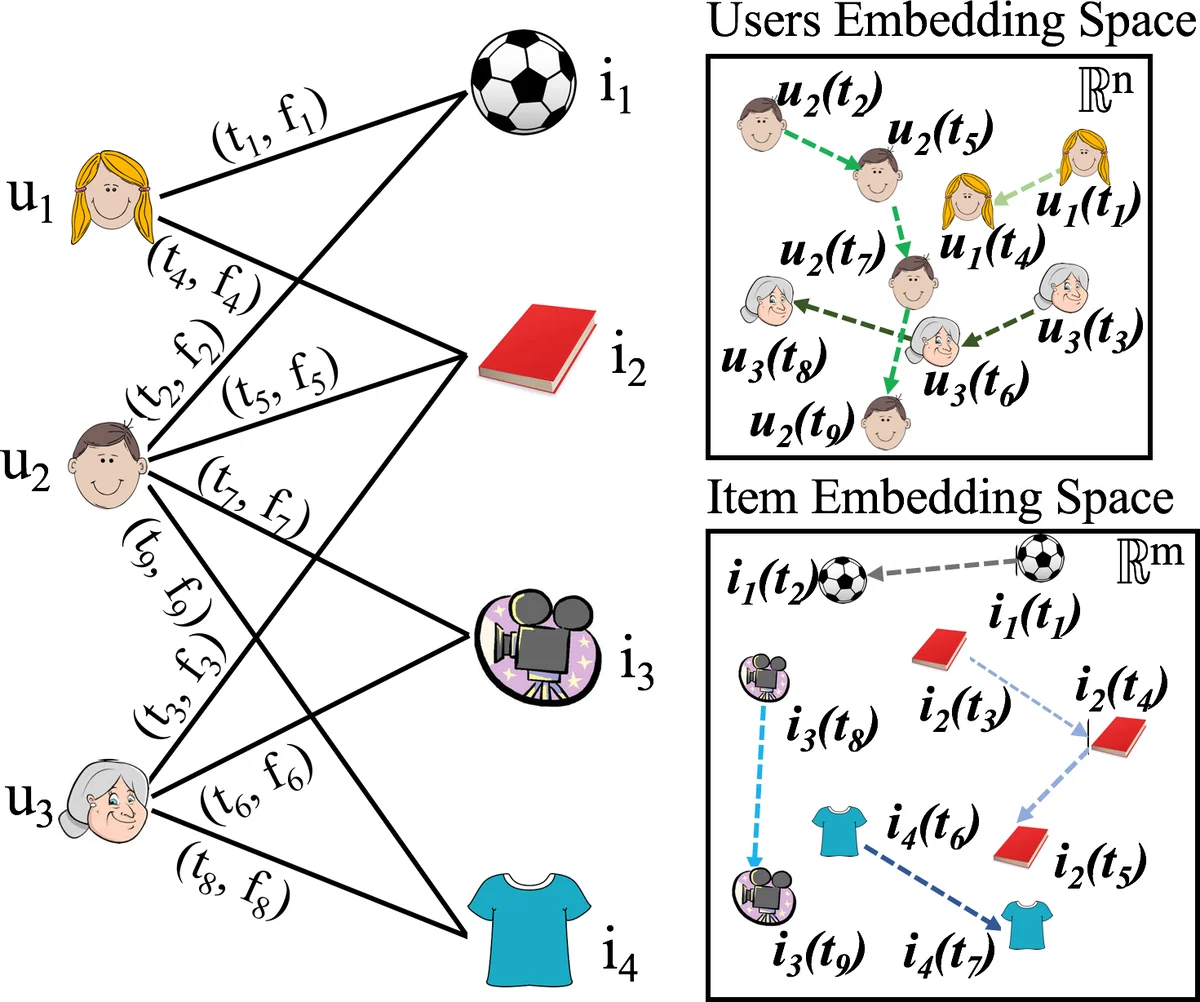

First, the update component consists of two mutually‑recursive recurrent neural networks (RNNs): a user RNN and an item RNN. When an interaction (user u, item i, feature vector f, timestamp t) occurs, the user RNN updates the user’s dynamic embedding using the embedding of item i from just before the interaction and the interaction features, while the item RNN updates the item’s embedding using the user’s embedding from just before the interaction and the same features. This bidirectional coupling explicitly models the influence that each entity’s state exerts on the other.
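The coupled update can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the authors' implementation: the plain tanh RNN cell, the embedding sizes, the random weights, and all function names (`rnn_step`, `coupled_update`) are hypothetical. It follows the abstract's description, in which both updates read the embeddings from just before the interaction.

```python
import math
import random

def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def rnn_step(W_self, W_other, W_feat, own, other, feat):
    """One plain RNN step: tanh(W_self*own + W_other*other + W_feat*feat)."""
    a, b, c = matvec(W_self, own), matvec(W_other, other), matvec(W_feat, feat)
    return [math.tanh(x + y + z) for x, y, z in zip(a, b, c)]

def random_matrix(rows, cols, rng):
    """Small random weights standing in for trained parameters (assumption)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def coupled_update(params, user_emb, item_emb, feat):
    """Update both embeddings from one interaction.

    Each RNN sees its own previous embedding, the *other* entity's
    previous embedding, and the interaction features.
    """
    new_user = rnn_step(*params["user"], user_emb, item_emb, feat)
    new_item = rnn_step(*params["item"], item_emb, user_emb, feat)
    return new_user, new_item

rng = random.Random(0)
DIM, FEAT = 4, 2  # hypothetical embedding and feature sizes
params = {
    name: (random_matrix(DIM, DIM, rng),   # weights on own embedding
           random_matrix(DIM, DIM, rng),   # weights on the other entity
           random_matrix(DIM, FEAT, rng))  # weights on interaction features
    for name in ("user", "item")
}

user_emb = [0.1] * DIM
item_emb = [-0.2] * DIM
new_user, new_item = coupled_update(params, user_emb, item_emb, [1.0, 0.0])
```

Because the item update takes the user embedding as an input (and vice versa), changing one entity's state changes the other's next embedding, which is the coupling the paragraph above describes.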

Second, JODIE introduces a projection function ρ that can forecast a user’s embedding at any future time point. Between two observed interactions of a user, the model estimates how the user’s latent state drifts as a function of the elapsed time Δ. This mechanism is analogous to the prediction step of a Kalman filter and enables real‑time recommendation even when a user has been idle for a while.
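As a rough illustration of time-dependent drift, the sketch below scales each coordinate of the user embedding by a factor that grows linearly with the elapsed time. The specific form `(1 + w_k * delta) * u_k`, the drift-rate vector `w`, and the function name `project_user` are assumptions made for this example; the summary above does not specify the exact parameterization of ρ.

```python
def project_user(user_emb, delta, w):
    """Project a user embedding forward by `delta` time units.

    Each coordinate is scaled by (1 + w_k * delta), so delta == 0
    returns the embedding unchanged and larger gaps cause more drift.
    `w` stands in for learned per-coordinate drift rates (assumption).
    """
    return [(1.0 + wk * delta) * uk for uk, wk in zip(user_emb, w)]

u = [0.5, -0.2, 0.1]
w = [0.01, -0.03, 0.0]  # hypothetical learned drift rates

same = project_user(u, 0.0, w)    # no elapsed time: embedding unchanged
later = project_user(u, 10.0, w)  # idle for 10 time units: embedding drifts
```

A useful sanity property of any such projection is that it reduces to the identity when no time has elapsed, which this form satisfies by construction.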

Third, the prediction component directly outputs the embedding of the item that the user is most likely to interact with next, rather than scoring every candidate item. After projecting the user’s embedding to time t + Δ, a predictor ψ generates a target item embedding j̃. The final recommendation is obtained by a nearest‑neighbor search in the item embedding space. Because only one forward pass of the predictor is required, the number of model evaluations per query drops from O(|I|) to O(1), a substantial scalability gain over prior methods that must score every item; the remaining per‑query cost is the nearest‑neighbor lookup itself.
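The predict-then-search step might look like the following minimal sketch. The linear predictor, the identity weights, the item names, and the exhaustive scan are all assumptions for illustration. Note that the scan shown here is still linear in the number of items; a sub-linear lookup would need an approximate nearest-neighbor index, which is not shown.

```python
def predict_item_embedding(W, b, projected_user):
    """Single forward pass: a linear layer standing in for the predictor."""
    return [sum(w * x for w, x in zip(row, projected_user)) + bias
            for row, bias in zip(W, b)]

def nearest_item(pred, item_embs):
    """Return the id of the item whose embedding is closest to `pred` (L2)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(item_embs, key=lambda item_id: sq_dist(pred, item_embs[item_id]))

# Hypothetical item embeddings and predictor weights.
item_embs = {
    "item_a": [1.0, 0.0],
    "item_b": [0.0, 1.0],
    "item_c": [-1.0, -1.0],
}
W = [[1.0, 0.0], [0.0, 1.0]]  # identity weights, for illustration only
b = [0.0, 0.0]

pred = predict_item_embedding(W, b, [0.1, 0.8])
best = nearest_item(pred, item_embs)  # "item_b" is closest to [0.1, 0.8]
```

The model is evaluated once per query regardless of catalog size; only the distance comparison touches every item.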

Training on sequential interaction data is challenging because (i) interactions involving the same item create complex user‑to‑user dependencies, and (ii) the temporal order must be respected. To overcome this, the authors propose t‑Batch, a batching algorithm that constructs time‑consistent mini‑batches where each user and each item appear at most once per batch and the batches respect the monotonic increase of timestamps for each entity. This design enables parallel processing of interactions within a batch while preserving all dependency constraints, yielding speed‑ups of 7–9× for both JODIE and the comparable DeepCoevolve model.
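One way to build batches that satisfy both constraints is a single greedy pass over the time-sorted log: each interaction goes into the batch immediately after the latest batch that already contains its user or its item. The sketch below illustrates that idea under the assumption that the input is sorted by timestamp; the function name `t_batch` and the tuple layout are hypothetical, and this is not claimed to be the authors' exact procedure.

```python
def t_batch(interactions):
    """Group time-sorted (user, item, timestamp) tuples into batches.

    Guarantees:
      * each user and each item appears at most once per batch;
      * for any user or item, its interactions land in strictly
        increasing batch indices, in timestamp order.
    """
    last_user_batch = {}  # user -> index of the last batch containing it
    last_item_batch = {}  # item -> index of the last batch containing it
    batches = []
    for user, item, ts in interactions:
        # Earliest batch that comes after both entities' previous batches.
        idx = max(last_user_batch.get(user, -1),
                  last_item_batch.get(item, -1)) + 1
        if idx == len(batches):
            batches.append([])
        batches[idx].append((user, item, ts))
        last_user_batch[user] = idx
        last_item_batch[item] = idx
    return batches

log = [
    ("u1", "i1", 1),
    ("u2", "i2", 2),
    ("u1", "i2", 3),
    ("u3", "i1", 4),
    ("u2", "i3", 5),
]
batches = t_batch(log)
# batches[0] holds the interactions at t=1 and t=2;
# batches[1] holds the interactions at t=3, t=4, and t=5.
```

Interactions within one batch share no user or item, so their embedding updates do not depend on each other and can be computed in parallel, while batches themselves are processed in order.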

The authors evaluate JODIE on four real‑world datasets (Reddit, Wikipedia, LastFM, and a Massive Open Online Course (MOOC) platform) across two tasks: (1) next‑interaction prediction and (2) user state‑change prediction (e.g., user bans, student drop‑out). Six strong baselines are considered: RRN, Time‑LSTM, LatentCross, CTDNE, IGE, and DeepCoevolve. Results show that JODIE consistently outperforms all baselines, achieving up to 22.4% higher accuracy on interaction prediction and up to 4.5% improvement on state‑change prediction. Moreover, JODIE can anticipate student drop‑out up to five interactions before it occurs, demonstrating its potential for early intervention.

In summary, JODIE makes four key contributions: (1) a coupled recurrent architecture that learns joint dynamic embeddings for users and items, (2) a learned projection function that estimates future user embeddings at arbitrary times, (3) the t‑Batch algorithm that enables efficient parallel training while preserving temporal dependencies, and (4) extensive empirical evidence of superior predictive performance and scalability. Limitations include the focus on bipartite user‑item interactions; extending the framework to multi‑relational or hypergraph settings remains an open direction for future work.
