A survey on software testability

Context: Software testability is the degree to which a software system or a unit under test supports its own testing. To predict and improve software testability, practitioners and researchers have proposed a large number of techniques and metrics over the last several decades. Reviewing and getting an overview of the entire state of the art and state of the practice in this area is often challenging for a practitioner or a new researcher.

Objective: Our objective is to summarize the body of knowledge in this area and to benefit readers (both practitioners and researchers) in preparing, measuring, and improving software testability.

Method: To address this need, we conducted a survey in the form of a systematic literature mapping (classification) to find out what we, as a community, know about this topic. After compiling an initial pool of 303 papers and applying a set of inclusion/exclusion criteria, our final pool included 208 papers.

Results: The area of software testability has been comprehensively studied by researchers and practitioners. Approaches for measuring and improving testability are the most frequently addressed topics in the papers. The two most often mentioned factors affecting testability are observability and controllability. Common ways to improve testability are testability transformation, improving observability, adding assertions, and improving controllability.

Conclusion: This paper serves both researchers and practitioners as an “index” to the vast body of knowledge on testability. The results could help practitioners measure and improve software testability in their projects.

💡 Research Summary

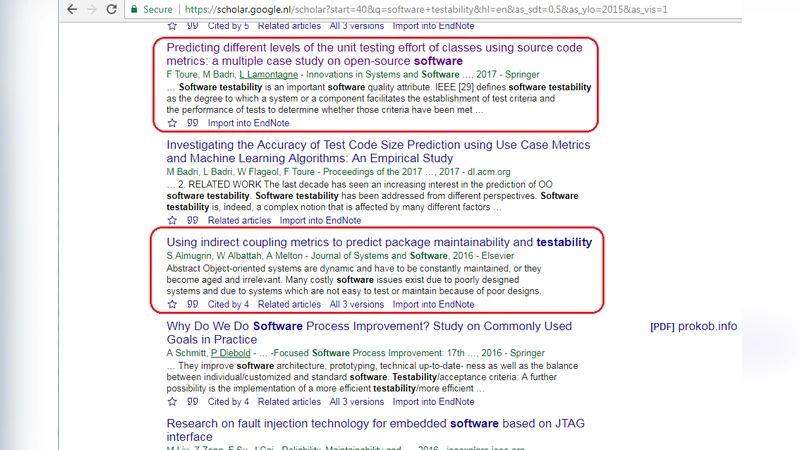

This paper presents a comprehensive systematic literature mapping (SLM) of software testability research spanning from 1982 to 2023. The authors began with 303 candidate papers retrieved from major digital libraries (IEEE Xplore, ACM DL, Scopus, Web of Science) using keywords such as “software testability,” “observability,” and “controllability.” After they applied inclusion and exclusion criteria (focus on testability, English language, peer-reviewed venue, and publication after 1980), 208 primary studies remained for analysis.
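As a rough illustration of the screening step, the four inclusion criteria listed above can be written as a predicate over candidate records. This is a minimal sketch under assumptions: the `Candidate` fields, the example records, and the idea that relevance to testability is a single yes/no flag are all hypothetical. In the actual study, such judgments were made by human reviewers, not by code.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A hypothetical record for one paper in the initial pool."""
    title: str
    year: int
    language: str
    peer_reviewed: bool
    about_testability: bool  # assumption: a reviewer's yes/no relevance judgment


def passes_criteria(paper: Candidate) -> bool:
    """Apply the inclusion criteria named in the summary."""
    return (
        paper.about_testability
        and paper.language == "English"
        and paper.peer_reviewed
        and paper.year > 1980  # "publication after 1980"
    )


def screen(pool: list[Candidate]) -> tuple[list[Candidate], list[Candidate]]:
    """Split the pool into included and excluded papers."""
    included = [p for p in pool if passes_criteria(p)]
    excluded = [p for p in pool if not passes_criteria(p)]
    return included, excluded


# Hypothetical pool: only the first record satisfies every criterion.
pool = [
    Candidate("Paper A", 1995, "English", True, True),
    Candidate("Paper B", 2010, "German", True, True),
    Candidate("Paper C", 2005, "English", False, True),
    Candidate("Paper D", 1979, "English", True, True),
]
included, excluded = screen(pool)
print(len(included), len(excluded))  # 1 3
```

Keeping the criteria in one predicate makes each exclusion decision traceable to a single rule. This mirrors the kind of audit trail a mapping study needs, though real screening also involves reading abstracts and full texts.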

The mapping classifies each study along four dimensions: (1) contribution type (measurement metrics, improvement techniques, frameworks, tools, case studies, surveys, or meta-analyses), (2) research method (quantitative experiments, qualitative surveys, case studies, theoretical modeling, or mixed methods), (3) testability factors addressed, and (4) application domain (embedded, web, mobile, cloud, AI/ML, etc.). The authors also extracted demographic information such as author affiliation (academia 55%, industry 30%) and citation impact, identifying a handful of highly cited works that introduced seminal testability metrics and transformation tools.
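A classification scheme like this is essentially a small record per study plus frequency counts per dimension. The sketch below is a hypothetical illustration: the field names, the three example studies, and the assumption that contribution types and factors are multi-valued (one study can count under several values) are ours, not taken from the paper.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class StudyClassification:
    """One hypothetical row of the mapping's classification scheme."""
    study_id: str
    contribution_types: list[str]  # assumption: multi-valued facet
    research_method: str
    factors: list[str] = field(default_factory=list)
    domain: str = "unspecified"


def tally(studies: list[StudyClassification], dimension: str) -> Counter:
    """Count how many studies fall under each value of one dimension."""
    counts: Counter = Counter()
    for study in studies:
        value = getattr(study, dimension)
        if isinstance(value, list):
            counts.update(value)  # one count per listed value
        else:
            counts[value] += 1
    return counts


# Hypothetical classified studies, for illustration only.
studies = [
    StudyClassification("S1", ["metric"], "case study",
                        ["observability", "controllability"], "embedded"),
    StudyClassification("S2", ["technique", "tool"], "experiment",
                        ["controllability"], "web"),
    StudyClassification("S3", ["metric"], "survey", ["observability"], "web"),
]
print(tally(studies, "factors"))
print(tally(studies, "domain"))
```

Because multi-valued facets are counted once per listed value, percentages computed from such tallies can sum to more than 100%. This is one reason mapping studies usually report per-category frequencies rather than a single partition.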

Key findings reveal that the majority of the literature (over 60%) concentrates on measuring and improving testability. Two factors are the most frequently cited determinants of testability, appearing in roughly two-thirds of the papers: observability (the ability to inspect a program's outputs and internal state during testing) and controllability (the ability to drive inputs to the system under test and put it into the state a test requires). Other supporting factors include reusability, modularity, and documentation quality.
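To make the two factors concrete, the hypothetical Python functions below contrast a design with poor controllability and observability against a refactored one. The greeting example and all names are illustrative only and do not come from the surveyed papers. Injecting the clock is just one common way to raise controllability; mocking the clock would be another.

```python
from datetime import datetime
from typing import Callable


# Harder to test: the input (the current hour) comes from the system clock,
# which a test cannot set (low controllability), and the result is only
# printed to stdout, so a test must capture output to see it (low observability).
def greet_hard() -> None:
    hour = datetime.now().hour
    print("Good morning" if hour < 12 else "Good afternoon")


# Easier to test: the clock is an injectable parameter (controllability) and
# the decision is returned to the caller (observability).
def greet(now: Callable[[], datetime] = datetime.now) -> str:
    hour = now().hour
    return "Good morning" if hour < 12 else "Good afternoon"


# A test can now drive both branches deterministically with fake clocks.
assert greet(lambda: datetime(2020, 1, 1, 9, 0)) == "Good morning"
assert greet(lambda: datetime(2020, 1, 1, 15, 0)) == "Good afternoon"
```

Production callers still use `greet()` with the real clock. Only tests pass a replacement, so the refactoring improves testability without changing normal behavior.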

Improvement techniques cluster into four principal categories:

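- Testability Transformation – Source-to-source program transformations that make code easier to test, notably for automated test-data generation (e.g., removing boolean flag variables that hide branch conditions from a search), together with refactoring, interface simplification, and insertion of test hooks to boost observability and controllability at the same time. Several automated tools (e.g., Testability Transformer, EvoSuite-based extensions) have been proposed.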

- Observability Enhancement – Adding logging, tracing points, and runtime monitoring dashboards, especially important for embedded systems where hardware‑level instrumentation is required.

- Assertion Insertion – Embedding pre‑conditions, post‑conditions, and invariants (Design‑by‑Contract style) to increase fault detection probability.

- Controllability Improvement – Using mocking frameworks, test harnesses, and input-control points so that tests can set inputs and system state directly and reproducibly.
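One classic form of testability transformation in the literature is flag removal for search-based test-data generation. The transformed program is used only to guide the search, and the inputs it finds are then run against the original code. The Python sketch below illustrates this idea with a deliberately tiny, hypothetical function and a naive hill climber. It is not the algorithm of any specific tool named above.

```python
from typing import Optional


# Original code: the branch depends on a boolean flag. A search-based test
# generator that observes only the flag sees True or False, so the fitness
# landscape is flat and gives no guidance toward the "target" branch.
def classify(a: int, b: int) -> str:
    flag = a == 2 * b
    if flag:
        return "target"
    return "other"


# Transformed code (used only for test generation): the flag is inlined,
# so the branch condition is visible and a distance to it can be measured.
def classify_transformed(a: int, b: int) -> str:
    if a == 2 * b:
        return "target"
    return "other"


def branch_distance(a: int, b: int) -> int:
    """How far the inputs are from satisfying `a == 2 * b` (0 means taken)."""
    return abs(a - 2 * b)


def hill_climb(b: int, start: int = 0, max_steps: int = 1000) -> Optional[int]:
    """Search for an `a` that reaches the target branch, guided by distance."""
    a = start
    for _ in range(max_steps):
        if branch_distance(a, b) == 0:
            return a
        # Move to whichever neighbouring value is closer to the target branch.
        a = min((a - 1, a + 1), key=lambda x: branch_distance(x, b))
    return None


a = hill_climb(b=7)
print(a)  # 14
# The transformation preserves behavior, so the generated input also
# reaches the target branch in the original code.
assert classify(a, 7) == classify_transformed(a, 7) == "target"
```

Real tools apply such transformations automatically and use richer search strategies. The key design point is the same, however: the transformed code exposes a graded distance where the original exposed only a boolean.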

Measurement approaches are split between static quantitative metrics (code complexity, coupling, interface count, coverage ratios) and qualitative assessments (expert surveys, checklists). Recent work leverages machine‑learning models trained on these static metrics to predict testability scores, indicating a shift toward data‑driven evaluation.
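To show what a static, quantitative metric looks like in practice, the sketch below approximates McCabe's cyclomatic complexity for each Python function using the standard-library `ast` module. The set of counted node types is a simplifying assumption: comprehension filters and `match` cases are ignored, and nested functions are also counted in their enclosing function. The ML-based predictors mentioned above would consume metrics like this as input features, but no such model is reproduced here.

```python
import ast

# Node types counted as one decision point each in this simplified version.
DECISION_NODES = (ast.If, ast.IfExp, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler)


def cyclomatic_complexity(func: ast.AST) -> int:
    """Approximate McCabe complexity: 1 + number of decision points."""
    complexity = 1
    for node in ast.walk(func):
        if isinstance(node, DECISION_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra short-circuit branches.
            complexity += len(node.values) - 1
    return complexity


def complexity_per_function(source: str) -> dict[str, int]:
    """Map each function name in `source` to its approximate complexity."""
    tree = ast.parse(source)
    return {
        node.name: cyclomatic_complexity(node)
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }


SAMPLE = """
def simple(x):
    return x + 1

def branchy(x, y):
    if x > 0 and y > 0:
        return 1
    for i in range(x):
        if i % 2:
            y += i
    return y
"""

print(complexity_per_function(SAMPLE))  # {'simple': 1, 'branchy': 5}
```

Higher values indicate more independent paths, and therefore more test cases needed for branch-level coverage. This is why complexity commonly appears among the static predictors of testability.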

Empirical studies within the mapping often involve industrial case studies; the typical system under test (SUT) ranges from 10K to 200K lines of code and is primarily written in Java or C++. The authors note that most empirical evidence comes from domains such as embedded systems, web applications, and mobile apps, with growing interest in DevOps and continuous integration pipelines.

The discussion highlights practical implications: transformation techniques and observability/controllability enhancements are highly cost-effective, making them attractive for small-to-medium enterprises that lack extensive testing infrastructure. The authors also identify research gaps: most existing work focuses on static analysis, leaving dynamic, cloud-native, and AI-driven environments under-explored. They further point out the need for testability metrics that capture the non-deterministic behavior typical of machine-learning components.

Threats to validity include keyword and database selection bias, potential subjectivity in study inclusion decisions, and exclusion of non‑peer‑reviewed sources such as technical blogs or white papers. To mitigate these threats, the study employed multiple reviewers, voting procedures, and cross‑validation of extracted data.

In conclusion, the paper serves as an “index” of the testability knowledge base, summarizing state-of-the-art measurement methods, improvement strategies, and influencing factors. It provides practitioners with a roadmap for selecting appropriate metrics and transformation tools, and it offers researchers a clear view of under-addressed areas (particularly dynamic environments, AI systems, and integration with modern DevOps pipelines) where future contributions can have substantial impact.
