Anomaly Detection for Network Connection Logs

We leverage a streaming architecture based on ELK, Spark and Hadoop in order to collect, store, and analyse database connection logs in near real-time. The proposed system investigates outliers using unsupervised learning; widely adopted clustering and classification algorithms for log data, highlighting the subtle variances in each model by visualisation of outliers. Arriving at a novel solution to evaluate untagged, unfiltered connection logs, we propose an approach that can be extrapolated to a generalised system of analysing connection logs across a large infrastructure comprising thousands of individual nodes and generating hundreds of lines in logs per second.

💡 Research Summary

The paper presents a comprehensive streaming architecture for real‑time collection, storage, and analysis of database connection logs generated by CERN’s massive computing infrastructure. Using Apache Flume connectors, each database instance streams connection events into a Kafka buffer. From Kafka, the data follows two parallel paths: short‑term storage in Elasticsearch for immediate visualization with Kibana, and long‑term archival in HDFS using the Parquet file format to satisfy compression and query‑performance requirements.

Performance benchmarks compare Flume‑driven versus Kafka‑driven messaging and Parquet versus Avro storage. Kafka demonstrates higher throughput (thousands of events per second) and lower latency (tens of milliseconds), while Parquet outperforms Avro in scan speed and storage efficiency, making it the preferred choice for large‑scale analytical workloads.

The raw logs contain roughly 30 fields (user ID, host IP, database instance, timestamp, SQL type, etc.). After standard preprocessing—type casting, missing‑value handling, and categorical encoding—the authors apply dimensionality reduction via Principal Component Analysis (PCA) and Singular Value Decomposition (SVD), compressing the high‑dimensional feature space to 2–3 dimensions. This step mitigates the curse of dimensionality, reduces computational load, and enables effective visual exploration of the data.

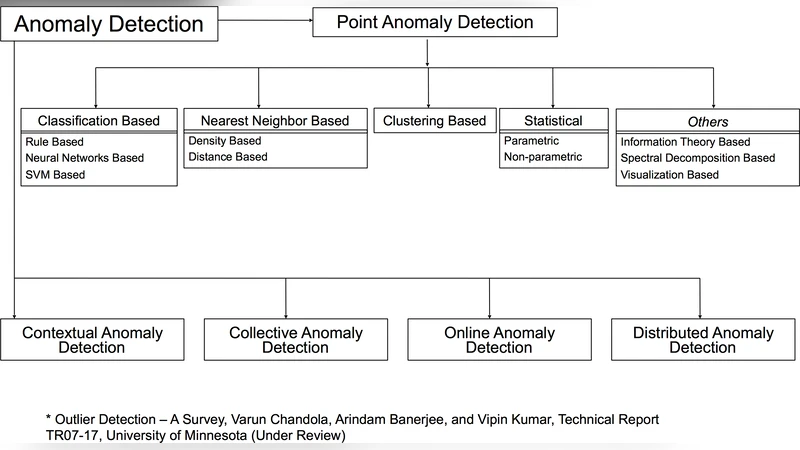

Four unsupervised anomaly‑detection algorithms are evaluated:

- k‑Nearest Neighbors (k‑NN) – distance‑based, using Euclidean distance to a fixed number of neighbours; contamination set to 2 % to filter false positives.

- Isolation Forest – tree‑based isolation mechanism with near‑linear time complexity, well‑suited for high‑dimensional data.

- Local Outlier Factor (LOF) – density‑based, measuring how isolated a point is relative to its local neighbourhood.

- One‑Class Support Vector Machine (OCSVM) – kernel‑based boundary learner that creates a soft decision surface for unlabeled data.

Each model is trained on the same preprocessed dataset, with contamination parameters explored between 2 % and 5 %. Because labeled ground truth is unavailable, evaluation relies on silhouette scores (average ≈ 0.35 for the best models) and manual cross‑validation against known security incidents. The authors report successful detection of three concrete anomaly categories: (a) malware‑induced reconnection spikes, (b) atypical user logins on holidays or off‑hours, and (c) anomalous multi‑resource requests lacking historical precedent.

The entire pipeline is containerized and orchestrated with Docker/Kubernetes, allowing dynamic scaling of Kafka partitions and Spark streaming batch sizes to accommodate thousands of databases and hundreds of gigabytes per second of log traffic. Monitoring and alerting are integrated throughout the stack, providing operators with immediate notifications of detected outliers.

While the implementation is tailored to CERN’s environment, the authors argue that the combination of a robust data lake, real‑time streaming, and unsupervised anomaly detection is readily transferable to other large‑scale IT domains such as cloud service providers, financial institutions, or telecom networks. Future work is suggested to incorporate semi‑supervised techniques, enrich the feature set, and automate remediation actions based on detected anomalies.

Comments & Academic Discussion

Loading comments...

Leave a Comment