Voice Disorder Detection Using Long Short Term Memory (LSTM) Model

Automated detection of voice disorders with computational methods is a recent research area in the medical domain since it requires a rigorous endoscopy for the accurate diagnosis. Efficient screening methods are required for the diagnosis of voice disorders so as to provide timely medical facilities in minimal resources. Detecting Voice disorder using computational methods is a challenging problem since audio data is continuous due to which extracting relevant features and applying machine learning is hard and unreliable. This paper proposes a Long short term memory model (LSTM) to detect pathological voice disorders and evaluates its performance in a real 400 testing samples without any labels. Different feature extraction methods are used to provide the best set of features before applying LSTM model for classification. The paper describes the approach and experiments that show promising results with 22% sensitivity, 97% specificity and 56% unweighted average recall.

💡 Research Summary

The paper addresses the problem of automated detection of voice disorders, which traditionally requires costly and time‑consuming endoscopic examinations. The authors propose a deep learning approach based on a Long Short‑Term Memory (LSTM) recurrent neural network to classify voice recordings as either normal or pathological. The study uses the Far Eastern Memorial Hospital (FEMH) voice disorder detection challenge dataset. The training set consists of 200 audio samples (50 normal, 150 pathological) recorded as 3‑second sustained vowel sounds at 44.1 kHz, 16‑bit resolution. An additional 400 unlabeled recordings are provided for testing.

Feature extraction combines four acoustic descriptors: 13 Mel‑frequency cepstral coefficients (MFCC), 1 spectral centroid value, 12 chroma features, and 7 spectral contrast measures, yielding a 33‑dimensional feature vector per sample. These features capture timbral, spectral, and harmonic characteristics of the voice signal and are intended to provide a rich representation for the LSTM.

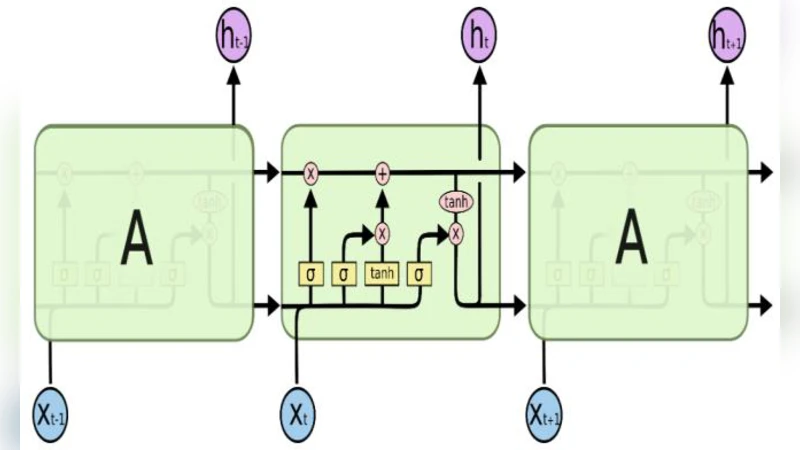

The network architecture comprises an input layer receiving the 33‑dimensional vector, two hidden LSTM layers (first with 128 units, second with 32 units), and an output layer with four neurons corresponding to three disorder categories (phonotrauma, glottic neoplasm, vocal palsy) plus a normal class. The model is trained using categorical cross‑entropy loss and the Adam optimizer. Hyper‑parameters such as batch size and number of epochs are varied to seek optimal performance. Two experimental phases are reported: Phase I with 500 training epochs and Phase II with 5,000 epochs.

Results show a high specificity (95.7 % in Phase I, 97.1 % in Phase II) but low sensitivity (30 % in Phase I, 22 % in Phase II). The unweighted average recall (UAR) improves modestly from 54 % to 56 % when the number of epochs is increased, indicating that the model learns to recognize normal voices reliably while struggling to detect pathological cases. The authors acknowledge that the approach “works fine” but requires further optimization.

Critical analysis reveals several methodological limitations. First, the test set is unlabeled, yet the authors report sensitivity, specificity, and UAR for it, leaving the evaluation procedure ambiguous. Second, the dataset is small and imbalanced (25 % normal vs. 75 % pathological), which predisposes the model to bias toward the majority class and hampers generalization. Third, the paper does not discuss regularization techniques (dropout, weight decay, early stopping) or data augmentation strategies that are standard for mitigating overfitting in deep learning. The decrease in sensitivity despite a tenfold increase in epochs suggests possible overfitting to the training data.

Despite these shortcomings, the work contributes a novel application of LSTM to voice disorder detection, a domain where most prior studies employed support vector machines, Gaussian mixture models, or conventional deep feed‑forward networks. The comprehensive acoustic feature set is appropriate for capturing temporal dynamics, and the network design is reasonable for the task. However, to achieve clinically relevant performance, future research should (1) obtain a fully labeled validation and test set for reliable metric computation, (2) employ techniques to address class imbalance such as weighted loss functions or synthetic minority oversampling, (3) explore hyper‑parameter optimization (different numbers of LSTM layers, unit sizes, learning rates), and (4) incorporate regularization and data augmentation (e.g., noise injection, pitch shifting) to improve robustness.

In summary, the study demonstrates that an LSTM‑based model can achieve very high specificity in distinguishing normal from pathological voice recordings, but the low sensitivity and modest UAR indicate that the current implementation is insufficient for reliable disorder detection. With methodological refinements and larger, balanced datasets, LSTM networks hold promise for developing low‑cost, rapid screening tools for voice disorders in resource‑limited clinical settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment