Particle identification in ground-based gamma-ray astronomy using convolutional neural networks

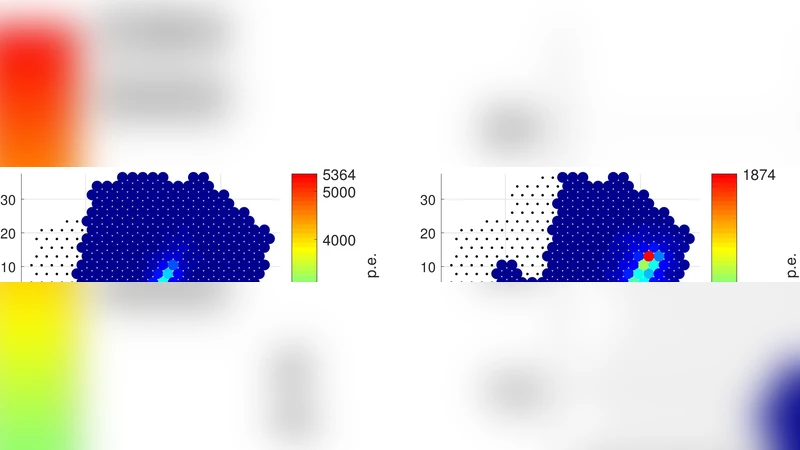

Modern detectors of cosmic gamma rays are a special type of imaging telescope (imaging air Cherenkov telescopes) equipped with cameras that contain a relatively large number of photomultiplier-based pixels. For example, the camera of the TAIGA-IACT telescope has 560 pixels arranged in a hexagonal structure. Images from such cameras can be analysed with deep learning techniques to extract physical and geometrical parameters and/or to identify the incoming particle. A convolutional neural network (CNN), the most powerful deep learning technique for image analysis, was implemented in this study. Two open-source machine learning libraries, PyTorch and TensorFlow, were tested as possible software platforms for particle identification in imaging air Cherenkov telescopes. Monte Carlo simulation was performed to analyse images of gamma rays and background particles (protons) and to estimate the identification accuracy. Further steps in implementing and improving this technique are discussed.

💡 Research Summary

This paper investigates the use of convolutional neural networks (CNNs) for particle identification in ground‑based gamma‑ray astronomy, focusing on images recorded by imaging atmospheric Cherenkov telescopes (IACTs). The authors concentrate on the TAIGA‑IACT instrument, whose camera comprises 560 hexagonal photomultiplier pixels. Because the pixel layout is not a conventional rectangular grid, the first technical challenge is to transform the raw hexagonal data into a format suitable for standard CNN architectures. The authors adopt a “hex‑to‑square” mapping that interpolates the hexagonal layout onto a 28 × 28 square lattice while preserving relative distances and adjacency relationships. After mapping, each image is normalized to the
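To make the idea of placing hexagonally arranged pixels on a 28 × 28 square lattice concrete, here is a minimal sketch in plain Python. It is not the authors' mapping: the summary describes an interpolating scheme, whereas this example uses a simpler nearest-cell rasterization. The helper names `hex_centers` and `rasterize` are hypothetical, and so is the camera geometry, an idealized hexagon of 13 rings with 547 pixels rather than the real 560-pixel TAIGA-IACT layout.

```python
import math


def hex_centers(rings):
    """Pixel centers of an idealized hexagonal camera (unit pixel spacing).

    Hypothetical geometry for illustration only, built from axial
    coordinates (q, r); it does not reproduce the real TAIGA-IACT camera.
    """
    centers = []
    for q in range(-rings, rings + 1):
        r_lo = max(-rings, -q - rings)
        r_hi = min(rings, -q + rings)
        for r in range(r_lo, r_hi + 1):
            x = q + 0.5 * r                 # odd rows are shifted by half a pixel
            y = (math.sqrt(3) / 2) * r      # row spacing of a hexagonal lattice
            centers.append((x, y))
    return centers


def rasterize(centers, amplitudes, size=28):
    """Assign each hexagonal pixel to the square cell containing its center.

    Amplitudes that fall into the same cell are summed, so the total
    signal is conserved. One scale factor is used for both axes, which
    keeps relative distances undistorted.
    """
    xs = [c[0] for c in centers]
    ys = [c[1] for c in centers]
    x_min, y_min = min(xs), min(ys)
    span = max(max(xs) - x_min, max(ys) - y_min)
    grid = [[0.0] * size for _ in range(size)]
    for (x, y), amp in zip(centers, amplitudes):
        col = min(size - 1, int((x - x_min) / span * size))
        row = min(size - 1, int((y - y_min) / span * size))
        grid[row][col] += amp
    return grid


centers = hex_centers(13)
image = rasterize(centers, [1.0] * len(centers))
print(len(centers), len(image), len(image[0]))
```

Because the spacing between hexagonal rows is smaller than the height of a square cell at this resolution, a few neighbouring pixels share a cell. Summing their amplitudes keeps the total signal unchanged but blurs adjacency slightly, which is one reason an interpolating scheme may be preferable in practice.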
