Variational Bayes In Private Settings (VIPS)

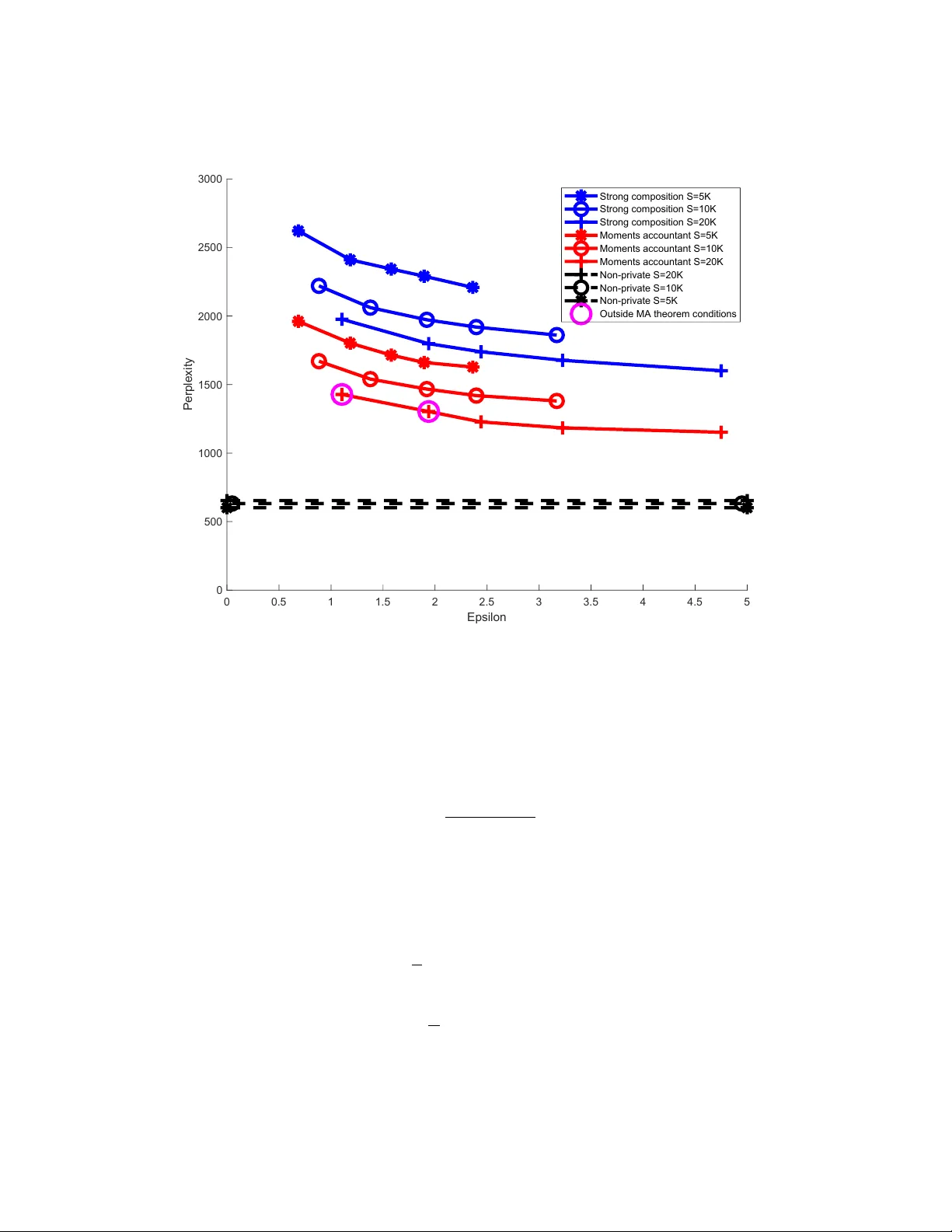

Many applications of Bayesian data analysis involve sensitive information, motivating methods which ensure that privacy is protected. We introduce a general privacy-preserving framework for Variational Bayes (VB), a widely used optimization-based Bay…

Authors: Mijung Park, James Foulds, Kamalika Chaudhuri