A Distributed Augmented Reality System for Medical Training and Simulation

Augmented Reality (AR) systems are the class of systems that use computers to overlay virtual information on the real world. AR environments allow the development of promising tools in several application domains. In medical training and simulation, the learning potential of AR is significantly amplified by the system’s capability to present 3D medical models in real time at remote locations. Furthermore, the applicability to simulation is broadened by the use of real-time deformable medical models. This work presents a distributed medical training prototype designed to train medical practitioners’ hand-eye coordination when performing endotracheal intubations. The system accomplishes this task with the help of AR paradigms. The prototype can be extended to medical simulations by employing deformable medical models. Shared-state maintenance in the collaborative AR environment is assured through a novel adaptive synchronization algorithm (ASA) that increases the sense of presence among participants and facilitates their interactivity in spite of infrastructure delays. The system will allow paramedics, pre-hospital personnel, and students to practice their skills without touching a real patient and will provide them with visual feedback they could not otherwise obtain. Such a distributed AR training tool has the potential to allow an instructor to simultaneously train local and remotely located students, and to allow students to actually “see” the internal anatomy and therefore better understand their actions on a human patient simulator (HPS).

💡 Research Summary

The paper presents a distributed augmented reality (AR) platform designed to train medical personnel in endotracheal intubation (ETI) while overcoming the limitations of traditional mannequins, human cadavers, and early virtual‑reality simulators. The authors argue that ETI is a life‑saving but technically demanding procedure, with high complication rates and a critical need for effective training, especially for pre‑hospital providers. To address this, they integrate a lightweight optical see‑through head‑mounted display (HMD) with an optical tracking system (Polaris™ NDI) and three custom‑built LED tracking probes attached to the HMD, the human patient simulator (HPS), and the endotracheal tube (ETT). The tracking system supplies six‑degree‑of‑freedom pose data at roughly 30 Hz, enabling real‑time overlay of virtual anatomy onto the physical mannequin.

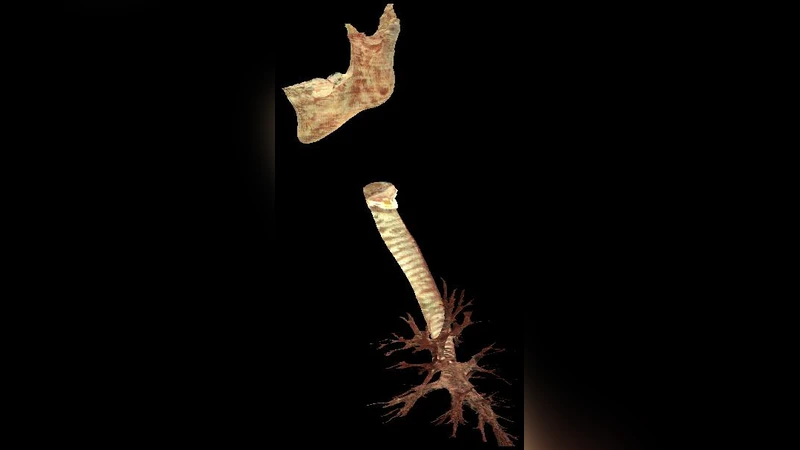

The virtual anatomy initially consists of a low‑resolution trachea‑and‑lung model (≈665 KB) that can be rendered interactively. The authors are extending the system with high‑fidelity models derived from the Visible Human dataset, which contain hundreds of thousands to over a million polygons. These detailed models improve registration accuracy and allow future incorporation of deformable structures. Registration between real and virtual objects is performed using a least‑squares pose estimation algorithm based on four manually identified landmarks on the mannequin’s ribcage and corresponding points on the virtual model. The rotation and translation components are solved via singular value decomposition (SVD), yielding a transformation matrix that aligns the virtual models with the physical HPS.
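The registration step described above can be illustrated with a minimal sketch of SVD-based least-squares rigid alignment (often called the Kabsch method). This is not the authors' code: the function names, the use of NumPy, and the four landmark coordinates are assumptions chosen only for illustration, and the paper may differ in detail.

```python
import numpy as np


def estimate_rigid_transform(real_pts, virtual_pts):
    """Least-squares rotation R and translation t with real ~= R @ virtual + t.

    Hypothetical helper: both inputs are (N, 3) arrays of corresponding
    landmarks, N >= 3 and not all collinear.
    """
    real = np.asarray(real_pts, dtype=float)
    virt = np.asarray(virtual_pts, dtype=float)

    # Centre both point sets so that only the rotation remains to be solved.
    real_c = real.mean(axis=0)
    virt_c = virt.mean(axis=0)

    # 3x3 cross-covariance between the centred landmark sets.
    H = (virt - virt_c).T @ (real - real_c)

    U, _, Vt = np.linalg.svd(H)

    # Guard against a reflection (determinant -1) in the SVD solution.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = real_c - R @ virt_c
    return R, t


def to_homogeneous(R, t):
    """Pack R and t into a 4x4 transformation matrix."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


# Illustrative data only: four made-up landmarks on the virtual model (metres).
virtual_landmarks = np.array([
    [0.0, 0.0, 0.0],
    [0.2, 0.0, 0.0],
    [0.0, 0.3, 0.0],
    [0.1, 0.15, 0.05],
])

# Synthetic "measured" landmarks: rotate 30 degrees about z, then translate.
angle = np.radians(30.0)
R_true = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle),  np.cos(angle), 0.0],
    [0.0,            0.0,           1.0],
])
t_true = np.array([0.5, -0.1, 1.2])
real_landmarks = virtual_landmarks @ R_true.T + t_true

R, t = estimate_rigid_transform(real_landmarks, virtual_landmarks)
T = to_homogeneous(R, t)
residual = np.linalg.norm(virtual_landmarks @ R.T + t - real_landmarks)
print("alignment residual:", residual)
```

With noise-free synthetic landmarks the recovered transform matches the one used to generate them. With manually picked landmarks, the residual gives a rough indication of how well the virtual model fits the mannequin.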

A major contribution is the Adaptive Synchronization Algorithm (ASA), which maintains a consistent “dynamic shared state” across geographically dispersed participants despite network latency and jitter. ASA dynamically adjusts the update frequency of each client and employs quaternion‑based error correction to keep the orientation of shared objects synchronized. Preliminary quantitative evaluation shows that average positional error stays below 2 cm, and subjective presence questionnaires indicate a noticeable improvement in users’ sense of immersion when ASA is active.
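The summary does not specify how ASA works internally, so the following is only a hedged sketch of the two ingredients it names: measuring orientation drift with quaternions and adapting the update interval in response. All function names, the halving/growth factors, and the interval bounds are hypothetical and should not be read as the published algorithm.

```python
import math


def quat_normalize(q):
    """Return q = (w, x, y, z) scaled to unit length."""
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)


def quat_angle_error(q_local, q_remote):
    """Angle in radians between two unit quaternions' orientations."""
    dot = abs(sum(a * b for a, b in zip(q_local, q_remote)))
    return 2.0 * math.acos(min(1.0, dot))


def slerp(q0, q1, alpha):
    """Spherical interpolation from q0 (alpha=0) to q1 (alpha=1)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q encode the same rotation; take the shorter arc.
        q1 = tuple(-c for c in q1)
        dot = -dot
    theta = math.acos(min(1.0, dot))
    if theta < 1e-6:
        # Nearly identical orientations: linear blend is accurate enough.
        return quat_normalize(tuple(a + alpha * (b - a) for a, b in zip(q0, q1)))
    s = math.sin(theta)
    w0 = math.sin((1.0 - alpha) * theta) / s
    w1 = math.sin(alpha * theta) / s
    return quat_normalize(tuple(w0 * a + w1 * b for a, b in zip(q0, q1)))


def adapt_update_interval(interval_s, error_rad, tolerance_rad,
                          min_s=1.0 / 60.0, max_s=0.5):
    """Assumed policy: send updates more often when drift exceeds the
    tolerance, and back off gradually when the replicas agree."""
    if error_rad > tolerance_rad:
        interval_s *= 0.5
    else:
        interval_s *= 1.25
    return max(min_s, min(max_s, interval_s))


# Illustrative use: a remote replica lags the reference by 90 degrees about z.
identity = (1.0, 0.0, 0.0, 0.0)
quarter_turn_z = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))

drift = quat_angle_error(identity, quarter_turn_z)
corrected = slerp(identity, quarter_turn_z, 0.5)   # move halfway to reference
next_interval = adapt_update_interval(0.1, drift, tolerance_rad=math.radians(2.0))
print(drift, corrected, next_interval)
```

Blending toward the reference orientation, rather than snapping to it, is one common way to hide corrections from the user. Whether ASA corrects orientation this way is not stated in the summary.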

The system supports remote instruction: an instructor and multiple trainees, each wearing identical HMDs, can view the same augmented scene, observe each other’s actions, and exchange verbal feedback. The authors also discuss the integration of a physics‑based deformable lung model, which simulates breathing motions and pathological conditions, thereby adding realism to the training scenario.

In the discussion, the authors highlight the educational benefits of a distributed AR trainer: reduced travel costs, increased training frequency, and the ability to provide visual feedback that is impossible with conventional mannequins alone. They acknowledge current challenges, such as handling large high‑resolution meshes, improving real‑time deformation algorithms, and conducting more extensive user studies. Future work includes optimizing the rendering pipeline for complex models, extending ASA to support more participants, and applying the same framework to other medical procedures like vascular access or surgical incision.

Overall, the paper demonstrates a viable prototype that combines off‑the‑shelf hardware, custom tracking, sophisticated registration, and adaptive network synchronization to create a collaborative, immersive training environment for a critical emergency procedure. The approach promises to enhance medical education by delivering realistic visual cues and enabling remote expert guidance without the need for physical patient contact.
