Delay-Aware Coded Caching for Mobile Users


Authors: Emre Ozfatura, Thomas Rarris, Deniz Gunduz

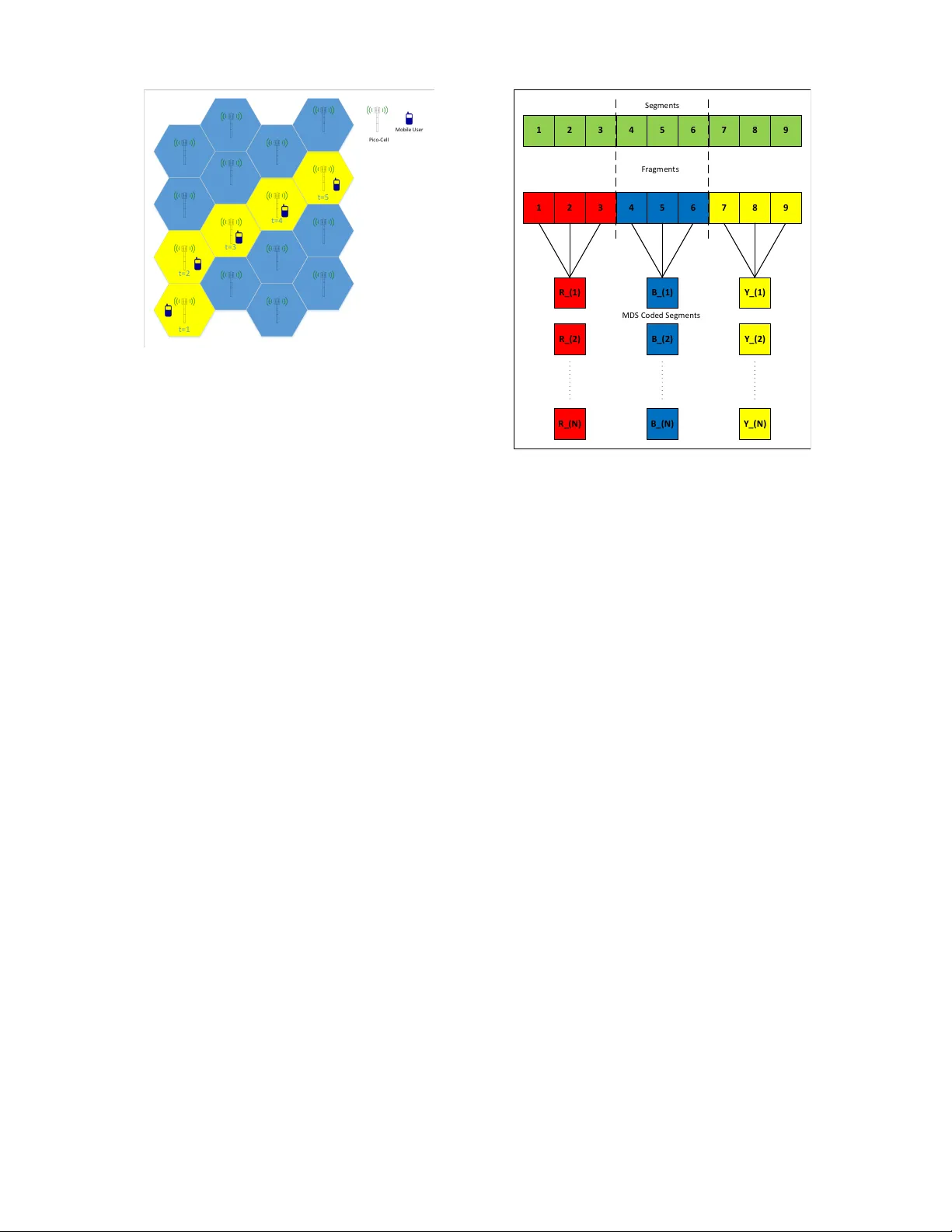

Emre Ozfatura∗, Thomas Rarris∗, Deniz Gündüz∗, and Ozgur Ercetin†
∗Information Processing and Communications Lab, Department of Electrical and Electronic Engineering, Imperial College London, {m.ozfatura, thomas.rarris14, d.gunduz}@imperial.ac.uk
†Sabanci University, Turkey, oercetin@sabanciuniv.edu

Abstract: In this work, we study the trade-off between the cache capacity and the user delay for a cooperative Small Base Station (SBS) coded caching system with mobile users. First, we introduce a delay-aware coded caching policy that takes into account the popularity of the files and the maximum re-buffering delay, and minimizes the average re-buffering delay of a mobile user under a given cache capacity constraint. Subsequently, we address a scenario in which some files are served by the macro-cell base station (MBS) because the cache capacity of the SBSs is not sufficient to store all the files in the library. For this scenario, we develop a coded caching policy that minimizes the average amount of data served by the MBS under an average re-buffering delay constraint.

I. INTRODUCTION

During the last decade, on-demand video streaming applications have dominated the bulk of Internet traffic. In 2016, YouTube alone was responsible for 21% of the mobile Internet traffic in North America [1]. According to the Cisco Visual Networking Index report [2], Internet video traffic will be four times larger by the year 2021. This rapid increase in Internet video traffic calls for a paradigm shift in the design of cellular networks. A recent trend is to store popular content at the network edge, closer to the user, in order to mitigate the excessive video traffic in the backbone. In heterogeneous cellular networks, SBSs can be equipped with storage devices containing popular video files to reduce the latency as well as the transmission cost.
In a network of densely deployed SBSs, there may be more than one SBS that can serve the content requested by a mobile user (MU). This flexibility in user assignment is exploited in the design of cooperative caching policies [3]–[5], whose main objective is to minimize the transmission cost of serving user requests. It has also been shown that storing the contents in coded form, particularly using maximum distance separable (MDS) codes, utilizes the local storage more efficiently, thereby increasing the amount of data served locally [6], [7].

However, the aforementioned works seek an optimal cooperative caching policy for a given static user access topology, in which the closest SBS to a user does not change over time. In ultra-dense networks (UDNs), due to the limited coverage area of SBSs, user access patterns are usually dynamic, and the mobility patterns of users have a significant impact on the amount of data that can be delivered locally [8]. To this end, mobility-aware cooperative caching policies have recently been studied in [9], [10]. In these works, the goal is to maximize the amount of data served locally while satisfying a given content downloading delay constraint. However, when the contents are stored in coded form as in [9], [10], a user cannot start displaying the video content before collecting all the parity bits, which may cause significant initial buffering delays in video streaming applications.

This work was supported in part by the Marie Sklodowska-Curie Actions SCAVENGE (grant agreement no. 675891) and TACTILENet (grant agreement no. 690893), and by the European Research Council (ERC) Starting Grant BEACON (grant agreement no. 725731).
Proactive content caching for the continuous video display scenario, in which users can start displaying video content before downloading all the video fragments, has been previously studied in [11], where SBSs fetch the content dynamically in advance, prior to user arrivals, using instantaneous user mobility information. Instead of a dynamic content fetching policy, in this paper we consider a static caching policy, similarly to [9] and [10], and focus on the continuous display of video.

II. SYSTEM MODEL AND PROBLEM FORMULATION

Consider a heterogeneous cellular network that consists of one MBS and $N$ SBSs, denoted by $\mathrm{SBS}_1, \dots, \mathrm{SBS}_N$, with disjoint coverage areas of the same size. Each SBS is equipped with a cache memory of size $C$ bits. Due to the disjoint coverage, an MU is served by only one SBS at any particular time. We assume that time is divided into equal-length time slots, and that the duration of a time slot corresponds to the minimum time that an MU remains in the coverage area of the same SBS. We also assume that each SBS is capable of transmitting $B$ bits to an MU within its coverage area in a single time slot.

For user requests, we consider a library of $K$ video files $\mathcal{V} = \{v_1, \dots, v_K\}$, each of size $F$ bits. Video files in the library are indexed according to their popularity, such that $v_k$ is the $k$th most popular video file, with a request probability of $p_k$. Since the size of a video file is $F$ bits and the transmission rate of an SBS is $B$ bits per time slot, an MU can download a single video file in at least $T = F/B$ time slots. For the sake of simplicity, we assume that $T$ is an integer, and we call the $T$ time slots following a request a video downloading session.

[Fig. 1: A sample mobility path under the high mobility assumption for T = 5 (time slots t = 1, ..., 5; legend: Pico-Cell, Mobile User).]
Although an MU is connected to only one SBS at each time slot, due to mobility it may connect to multiple SBSs within a video downloading session. Due to the limited cache memory size, it may not be possible to store all the video files in the library at the SBSs; in that case, requests for the uncached video files are offloaded to the MBS.

A. User Mobility

The mobility path of an MU is defined as the sequence of SBSs visited within a video downloading session. For instance, for $T = 5$, $(\mathrm{SBS}_1, \mathrm{SBS}_3, \mathrm{SBS}_4, \mathrm{SBS}_5, \mathrm{SBS}_6)$ is a possible mobility path. We consider a high mobility scenario, in which an MU does not stay connected to the same SBS for more than one time slot: at the end of each time slot, the MU moves to one of the neighboring cells, as illustrated in Figure 1. Under this assumption, a mobility path is a sequence of $T$ distinct SBSs.

B. Delay-aware coded caching

Before proceeding with the problem formulation, we explain the coding scheme used to encode the video files. First, each video file is divided into $T$ disjoint video segments of $B$ bits each, i.e., $v_k = \{s_k^{(1)}, \dots, s_k^{(T)}\}$. Second, these segments are grouped into $M_k$ disjoint fragments $f_k^{(1)}, \dots, f_k^{(M_k)}$; that is,
$$v_k = \bigcup_{m=1}^{M_k} f_k^{(m)}, \quad \text{and} \quad f_k^{(i)} \cap f_k^{(j)} = \emptyset, \tag{1}$$
for any $i, j \in \{1, \dots, M_k\}$ with $i \neq j$. Then, the segments in each fragment are jointly encoded using a $(|f_k^{(m)}|, N)$ MDS code, and each coded segment is cached by a different SBS. Hence, any fragment $f_k^{(m)}$ can be recovered from any $|f_k^{(m)}| B$ parity bits collected from any $|f_k^{(m)}|$ different SBSs within $|f_k^{(m)}|$ time slots.

[Fig. 2: Illustration of the employed coded caching strategy for a video download session of T = 9 time slots (rows: Segments, Fragments, MDS Coded Segments; the coded segments of the three fragments are labelled R_(1), ..., R_(N), B_(1), ..., B_(N) and Y_(1), ..., Y_(N)).]
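The "any $|f_k^{(m)}|$ out of $N$" recovery property of the MDS code can be illustrated with a toy construction. The sketch below is not the authors' implementation: it assumes a Reed–Solomon-style code (polynomial evaluation over the prime field GF(257), a standard way to obtain an MDS code), models each segment as a single field symbol, and uses hypothetical function names.

```python
# Toy (k, N) MDS code in the Reed-Solomon style over the prime field GF(P).
# ASSUMPTION: each segment is modelled as one field symbol; a real B-bit
# segment would be split into many symbols and coded symbol by symbol.
from itertools import combinations

P = 257  # hypothetical field size; needs N < P


def lagrange_eval(xs, ys, x):
    """Evaluate, at x, the polynomial of degree < len(xs) through (xs, ys) mod P."""
    total = 0
    for j, (xj, yj) in enumerate(zip(xs, ys)):
        num, den = 1, 1
        for i, xi in enumerate(xs):
            if i != j:
                num = num * (x - xi) % P
                den = den * (xj - xi) % P
        total = (total + yj * num * pow(den, P - 2, P)) % P  # Fermat inverse
    return total


def mds_encode(fragment, n_sbs):
    """Map k segment symbols to n_sbs coded symbols; coded symbol i goes to SBS_i."""
    xs = list(range(1, len(fragment) + 1))  # segments are the values at x = 1..k
    return [lagrange_eval(xs, fragment, x) for x in range(1, n_sbs + 1)]


def mds_decode(received, k):
    """Recover the k segment symbols from any k pairs (sbs_index, coded_symbol)."""
    xs = [i for i, _ in received]
    ys = [y for _, y in received]
    return [lagrange_eval(xs, ys, x) for x in range(1, k + 1)]


fragment = [10, 20, 30]                 # a fragment of |f| = 3 segments
coded = mds_encode(fragment, n_sbs=6)   # N = 6 SBSs
for sbs_subset in combinations(range(1, 7), 3):  # any 3 distinct SBSs on a path
    received = [(i, coded[i - 1]) for i in sbs_subset]
    assert mds_decode(received, k=3) == fragment
```

Because the segments are taken as the polynomial values at $x = 1, \dots, k$, this particular construction is systematic ($\mathrm{SBS}_1, \dots, \mathrm{SBS}_k$ hold the uncoded segments); any other MDS code would serve the same purpose in the scheme.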
The video encoding strategy is illustrated by the following example for $T = F/B = 9$. A video file is first divided into $T = 9$ segments, which are then grouped into three fragments of three segments each (each fragment is represented by a different color in Figure 2). The three segments in each fragment are jointly encoded using a $(3, N)$ MDS code to obtain $N$ different coded segments of $B$ bits each. Then, each coded segment is cached by a different SBS, e.g., the $i$th coded segment of each fragment is cached by $\mathrm{SBS}_i$. The overall coded caching procedure is illustrated in Figure 2. The reason for constructing $N$ coded segments is to ensure that, on any possible path, an MU does not receive the same coded segment multiple times. We remark that, for a given $T$, certain cells cannot be visited in the same mobility path; hence, depending on $T$, fewer than $N$ coded segments might be sufficient to prevent multiple receptions of the same coded segment [12].

Definition 1. A coded caching policy $\mathcal{X}$ defines how each file $v_k$ is divided into fragments, i.e., $\mathcal{X} \triangleq \{X_k\}_{k=1}^{K}$, where $X_k = \{f_k^{(1)}, \dots, f_k^{(M_k)}\}$.

Note that since the cache capacity of an SBS is $C$ bits and the size of each coded segment is $B$ bits, a feasible caching policy must satisfy $\sum_{k=1}^{K} M_k B \le C$.

C. Continuous video display and delay analysis

The video display rate, $\lambda$, defines the average amount of data (bits) required to display a unit duration (normalized to one time slot) of a video file¹. In this work, we consider the scenario in which the service rate of the SBSs and the video display rate of MUs are approximately equal, i.e., $B \approx \lambda$. Hence, at each time slot an MU displays one segment and downloads one coded segment. In order to display a segment, it must be available in the buffer in uncoded form. If the corresponding segment is not available in the buffer, the user waits until it becomes available.
This waiting time is called the re-buffering delay. The cumulative re-buffering delay for file $v_k$ under policy $X_k$ is denoted by $D_k(X_k)$; it is equal to the sum of the re-buffering delays experienced within a video streaming session.

For the delay analysis, let us consider a particular file that is divided into $M$ fragments, $f^{(1)}, \dots, f^{(M)}$. The display duration of a fragment is the number of segments in it; e.g., if there is only one fragment, then its display duration is equal to the video duration. Let $d^{(m)}$ denote the display duration of the $m$th fragment, i.e., $d^{(m)} = |f^{(m)}| B/\lambda \approx |f^{(m)}|$. Furthermore, let $t_d^{(m)}$ and $t_p^{(m)}$ denote the time instants at which the $m$th fragment is downloaded and starts to be displayed, respectively. If $t_p^{(m)} \ge t_d^{(m)}$, the user displays the $m$th fragment without experiencing a stalling event; however, if $t_d^{(m)} > t_p^{(m)}$, then the user enters a re-buffering period and stops displaying the video until $t_d^{(m)}$. Accordingly, the re-buffering duration for the $m$th fragment, $\Delta^{(m)}$, can be formulated as
$$\Delta^{(m)} = \max\left\{ t_d^{(m)} - t_p^{(m)},\, 0 \right\}. \tag{2}$$
Note that $t_p^{(m)}$ is equal to the sum of the display times and re-buffering delays experienced by the previously displayed fragments, i.e.,
$$t_p^{(m)} = \sum_{i=1}^{m-1} \left( \Delta^{(i)} + d^{(i)} \right). \tag{3}$$
Similarly, assuming that the fragments are downloaded in order, $t_d^{(m)}$ is the total download time of the first $m$ fragments, i.e.,
$$t_d^{(m)} = \sum_{i=1}^{m} d^{(i)}. \tag{4}$$

¹ In general, video files are variable bit rate (VBR) encoded, and the display rate varies over time. However, $\lambda$ can be considered as the minimum value satisfying $\lambda t \ge \lambda_c(t)$ for all $t$, where $\lambda_c(t)$ is the cumulative display rate of a VBR-encoded video. Hence, the delay requirements can be satisfied at a constant rate of $\lambda$.

Hence, substituting (3) and (4) into (2), the re-buffering duration can be rewritten as
$$\Delta^{(m)} = \max\left\{ d^{(m)} - \sum_{i=1}^{m-1} \Delta^{(i)},\, 0 \right\}. \tag{5}$$
We observe that if $\Delta^{(m)} > 0$, then the following equality holds:
$$\sum_{i=1}^{m} \Delta^{(i)} = d^{(m)}. \tag{6}$$
Let $D$ be the cumulative re-buffering delay experienced over all fragments of the video, which is characterized by the following lemma.

Lemma 1. The cumulative re-buffering delay $D$ is equal to the display duration of the largest fragment, i.e.,
$$D = \sum_{m=1}^{M} \Delta^{(m)} = \max\left\{ d^{(1)}, \dots, d^{(M)} \right\}. \tag{7}$$
Lemma 1 can be easily proved by induction using equality (6) and the fact that $\Delta^{(1)} = d^{(1)}$. Note that if the first fragment has the largest display duration, then $D = \Delta^{(1)}$, i.e., the cumulative re-buffering delay is equal to the initial buffering delay.

D. Problem formulation

In this work, we aim to find the optimal coded caching policy $\mathcal{X}$ that minimizes the cumulative re-buffering delay averaged over all files, i.e., $D_{\mathrm{avg}}(\mathcal{X}) = \sum_{k=1}^{K} p_k D_k(X_k)$. Before presenting the problem formulation, we focus on a particular file and highlight the delay-cache capacity trade-off with an example. If the number of fragments is equal to the number of segments, i.e., $f^{(m)} = \{s^{(m)}\}$ for all $m \in \{1, \dots, T\}$, then each SBS caches all the segments. This requires a memory of $F = TB$ bits for the corresponding file. On the other hand, if there is only one fragment containing all the segments, i.e., $f^{(1)} = \{s^{(1)}, \dots, s^{(T)}\}$, then all the segments are jointly encoded, and each SBS caches only $B$ bits for the corresponding file. Although the download time of the content is $T$ slots in both cases, in the first case each fragment can be displayed right after it is downloaded, whereas in the second case no segment can be displayed until all the $F = TB$ parity bits are collected, since all the segments are encoded jointly. Equivalently, the cumulative re-buffering delay is equal to one time slot in the first case and $T$ slots in the second.
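The recursion in (2)–(4) and the claim of Lemma 1 can be checked numerically. The sketch below is an illustration under the stated assumptions ($B \approx \lambda$, so a fragment of $d$ segments takes $d$ slots to download and $d$ slots to display, and fragments are downloaded back to back in order); the function name is hypothetical.

```python
# Numerical check of Eqs. (2)-(4) and Lemma 1, assuming B ~ lambda so that a
# fragment of d segments takes d slots to download and d slots to display, and
# fragments are downloaded back to back in order.
from itertools import product


def rebuffering_delays(d):
    """Return [Delta^(1), ..., Delta^(M)] for display durations d (in slots)."""
    deltas = []
    t_p = 0  # Eq. (3): sum of Delta^(i) + d^(i) over the earlier fragments
    t_d = 0  # Eq. (4): total download time of the first m fragments
    for d_m in d:
        t_d += d_m
        delta = max(t_d - t_p, 0)  # Eq. (2)
        deltas.append(delta)
        t_p += delta + d_m
    return deltas


# Lemma 1: the cumulative re-buffering delay equals the largest display duration.
for d in product(range(1, 5), repeat=4):
    assert sum(rebuffering_delays(d)) == max(d)

print(rebuffering_delays([3, 3, 3]))  # [3, 0, 0]: all delay is initial buffering
print(rebuffering_delays([1, 4]))     # [1, 3]
```

The second call shows why fragment order does not change $D$ but does change where the stall happens: placing the larger fragment later turns part of the initial buffering delay into a mid-stream re-buffering event.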
Next, we introduce a general mathematical model for the delay-cache capacity trade-off. The required cache size for a file depends only on the number of fragments $M$, and is equal to $MB$ bits. However, the cumulative re-buffering delay is equal to the display time (the number of segments) of the largest fragment. Hence, for a given $M$, the cumulative re-buffering delay is minimized by choosing approximately equal fragment sizes, i.e., $|d^{(i)} - d^{(j)}| \le 1$ for any $i, j \in \{1, \dots, M\}$ with $i \neq j$. Consequently, for a given memory constraint of $MB$ bits, the minimum achievable cumulative re-buffering delay is $\lceil T/M \rceil$ time slots. To capture this relationship mathematically, we introduce the delay-cache capacity function
$$\Omega(M) \triangleq \lceil T/M \rceil,$$
which maps the number of fragments in a file to the minimum achievable re-buffering delay $D$. $\Omega(M)$ is a monotonically non-increasing step function, illustrated in Figure 3 for $T = 10$.

[Fig. 3: Delay-cache capacity function $\Omega(M)$ and its piecewise linear approximation $\tilde{\Omega}(M)$ for T = 10 (x-axis: number of fragments; y-axis: cumulative re-buffering delay).]

To analyze $\Omega(M)$, we introduce two new parameters: the delay level and the decrement point. Any possible value of $\Omega(M)$ is called a delay level and is denoted by $D^{(l)}$. For the example illustrated in Figure 3, there are $L = 6$ delay levels, i.e., $D^{(1)} = 10$, $D^{(2)} = 5$, $D^{(3)} = 4$, $D^{(4)} = 3$, $D^{(5)} = 2$, $D^{(6)} = 1$. A decrement point $m^{(l)}$ is the minimum value of $M$ that satisfies $\Omega(M) = D^{(l)}$. In the given example, $m^{(1)} = 1$, $m^{(2)} = 2$, $m^{(3)} = 3$, $m^{(4)} = 4$, $m^{(5)} = 5$, $m^{(6)} = 10$. Recall that the popularities of the files are not identical, which implies that the re-buffering delay of the popular files has a larger impact on the average re-buffering delay. Hence, for each file $v_k$, we consider a weighted delay-cache capacity function $\Omega_k(M_k) \triangleq p_k \lceil T/M_k \rceil$.
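The delay-cache capacity function, its delay levels and decrement points, and the near-equal fragment split that achieves $\lceil T/M \rceil$ can be computed directly. The sketch below reproduces the $T = 10$ example of Figure 3; the function names, and the choice to put the larger fragments first when $T$ is not divisible by $M$, are illustrative assumptions.

```python
# Delay-cache capacity function Omega(M) = ceil(T / M), its delay levels D^(l)
# and decrement points m^(l), plus a near-equal split of T segments into M
# contiguous fragments. Function names are hypothetical.


def omega(M, T):
    """Minimum cumulative re-buffering delay with M fragments (in slots)."""
    return -(-T // M)  # integer ceiling of T / M


def split_into_fragments(T, M):
    """Group segments 1..T into M contiguous fragments whose sizes differ by <= 1."""
    base, extra = divmod(T, M)
    fragments, start = [], 1
    for i in range(M):
        size = base + (1 if i < extra else 0)  # larger fragments first
        fragments.append(list(range(start, start + size)))
        start += size
    return fragments


def levels_and_points(T):
    """Delay levels D^(1) > ... > D^(L) and decrement points m^(1) < ... < m^(L)."""
    levels, points = [], []
    for M in range(1, T + 1):
        D = omega(M, T)
        if not levels or D < levels[-1]:  # smallest M reaching a new level
            levels.append(D)
            points.append(M)
    return levels, points


def weighted_omega(M, T, p):
    """Omega_k(M_k) = p_k * ceil(T / M_k)."""
    return p * omega(M, T)


# The largest fragment of the near-equal split has exactly ceil(T / M) segments.
for T in range(1, 13):
    for M in range(1, T + 1):
        assert max(len(f) for f in split_into_fragments(T, M)) == omega(M, T)

print(split_into_fragments(9, 3))  # [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
print(levels_and_points(10))       # ([10, 5, 4, 3, 2, 1], [1, 2, 3, 4, 5, 10])
```

Putting the larger fragments first means that, by the remark after Lemma 1, the whole delay $\lceil T/M \rceil$ is incurred as initial buffering rather than as mid-stream stalls.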
Note that for a given number of fragments $M$, we know the optimal caching decision, i.e., the number of segments in each fragment. Hence, from now on, we use $\mathbf{M} \triangleq (M_1, \dots, M_K)$ to denote the caching policy instead of $\mathcal{X}$. The average re-buffering delay can then be rewritten as $D_{\mathrm{avg}}(\mathbf{M}) = \sum_{k=1}^{K} \Omega_k(M_k)$. Eventually, we have the following optimization problem:
$$\textbf{P1:} \quad \min_{\mathbf{M}} \; D_{\mathrm{avg}}(\mathbf{M})$$
subject to:
$$D_k(M_k) \le D_{\max}, \quad \forall k, \tag{8}$$
$$\sum_{k=1}^{K} M_k B \le C, \tag{9}$$
where (8) is the fairness constraint, which ensures that the cumulative re-buffering delay does not exceed $D_{\max}$ for any video file, and (9) is the cache capacity constraint.

III. SOLUTION APPROACH

Let us denote by $l_{\min}$ the minimum $l$ that satisfies $D^{(l)} \le D_{\max}$ in P1. Then, P1 can be reformulated as
$$\textbf{P2:} \quad \min_{\mathbf{M}} \; D_{\mathrm{avg}}(\mathbf{M})$$
subject to:
$$M_k \ge m^{(l_{\min})}, \quad \forall k, \tag{10}$$
$$\sum_{k=1}^{K} M_k \le C/B. \tag{11}$$
Note that we have simply converted the delay constraint into a cache capacity constraint, such that each file requires a cache capacity of at least $m^{(l_{\min})} B$ bits. Hence, P2 has a feasible solution only if the cache capacity $C$ is at least $K m^{(l_{\min})} B$ bits. In the following subsection, we first solve P2 assuming that this condition holds; we consider the other case in the subsequent subsection. Note that if (10) cannot be satisfied for all files, then some of the least popular files are not cached at all, and an MU requesting one of these files is offloaded to the MBS, causing additional overhead. We show later how this overhead is modeled. We define a caching strategy as cost-free if all the video files are cached by the SBSs.

A. Cost-free delay minimization

P2 can be shown to be NP-hard, as it is a knapsack-type problem. However, if we use a piecewise linear approximation of the delay-cache capacity function $\Omega_k(M_k)$, denoted by $\tilde{\Omega}_k(M_k)$, then the objective function becomes a sum of monotonically decreasing piecewise linear functions.
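The conversion of the fairness constraint (8) into the per-file lower bound $M_k \ge m^{(l_{\min})}$, the feasibility condition $C/B \ge K m^{(l_{\min})}$, and an exhaustive reference solver for P2 can be sketched as follows. The brute force is exponential in $K$ and is only meant for checking heuristics on tiny instances; it is an illustration, not part of the proposed method, and all names are hypothetical.

```python
# Reduction of the fairness constraint (8) to M_k >= m^(l_min) (problem P2) and an
# exhaustive reference solver for tiny instances. The search is exponential in K,
# so it is only useful for checking heuristics. Names are hypothetical.
from itertools import product


def omega(M, T):
    return -(-T // M)  # ceil(T / M)


def min_fragments(T, D_max):
    """m^(l_min): the smallest M whose delay level ceil(T / M) is at most D_max."""
    for M in range(1, T + 1):
        if omega(M, T) <= D_max:
            return M
    raise ValueError("D_max must be at least 1 slot")


def p2_is_feasible(K, T, D_max, cap):
    """P2 is feasible only if C/B >= K * m^(l_min); cap stands for C/B."""
    return K * min_fragments(T, D_max) <= cap


def solve_p2_brute_force(p, T, D_max, cap):
    """Minimise sum_k p_k * ceil(T / M_k) s.t. M_k >= m^(l_min), sum_k M_k <= cap.

    Returns (None, inf) when P2 is infeasible."""
    m_min = min_fragments(T, D_max)
    best_M, best_val = None, float("inf")
    for M in product(range(m_min, T + 1), repeat=len(p)):
        if sum(M) <= cap:
            val = sum(pk * omega(Mk, T) for pk, Mk in zip(p, M))
            if val < best_val:
                best_M, best_val = M, val
    return best_M, best_val


best_M, best_val = solve_p2_brute_force([0.5, 0.3, 0.2], T=4, D_max=4, cap=6)
print(best_M)  # (2, 2, 2)
```

Because $\Omega$ is non-increasing, the smallest $M$ with $\Omega(M) \le D_{\max}$ is exactly the decrement point $m^{(l_{\min})}$, which is why `min_fragments` can scan $M$ directly instead of enumerating delay levels.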
Let $\gamma_{k,l}$ be the slope of $\tilde{\Omega}_k(M_k)$ in the interval $(m^{(l)}, m^{(l+1)}]$. Then, it is easy to observe that $|\gamma_{k,l}| \ge |\gamma_{k,l+1}|$ holds for all $l$, i.e., each $\tilde{\Omega}_k$ is convex. (Equality can occur: for $T = 10$, the slopes are $-5p_k, -p_k, -p_k, -p_k, -p_k/5$.) Hence, if the objective function is replaced by $\tilde{D}_{\mathrm{avg}}(\mathbf{M}) = \sum_{k=1}^{K} \tilde{\Omega}_k(M_k)$, we obtain the following convex optimization problem:
$$\textbf{P3:} \quad \min_{\mathbf{M}} \; \tilde{D}_{\mathrm{avg}}(\mathbf{M}) = \sum_{k=1}^{K} \tilde{\Omega}_k(M_k)$$
subject to:
$$M_k \ge m^{(l_{\min})}, \quad \forall k, \tag{12}$$
$$\sum_{k=1}^{K} M_k \le C/B. \tag{13}$$
Note that the solution of P3 is not equivalent to the solution of the original problem P2. However, we will show that, with a small perturbation of the cache size $C$, the solutions of P2 and P3 become identical. Since the objective is a sum of convex piecewise linear functions, we follow a strategy similar to the one used in [10]. The proposed algorithm first allocates to each file a cache memory of $m^{(l_{\min})} B$ bits, which corresponds to the delay level $D^{(l_{\min})}$. After this initial phase, it searches for the $\tilde{\Omega}_k(M_k)$ with the minimum slope (i.e., the most negative slope), updates the delay level of file $v_k$ to the next one, i.e., from $D^{(l)}$ to $D^{(l+1)}$, and updates $M_k$ accordingly. The procedure is repeated until (13) is satisfied with equality. The overall coded caching strategy is detailed in Algorithm 1.

Algorithm 1: Cost-free delay minimization
Input: $B$, $C$, $\{\{\gamma_{k,l}\}_{l=1}^{L}\}_{k=1}^{K}$ (with $\gamma_{k,L} \triangleq 0$)
Output: $\mathbf{M}$
$M_k \leftarrow m^{(l_{\min})}$, $k \in \{1, \dots, K\}$;
$\gamma_k \leftarrow \gamma_{k,l_{\min}}$, $k \in \{1, \dots, K\}$;
$l_k \leftarrow l_{\min}$, $k \in \{1, \dots, K\}$;
$\tilde{C} \leftarrow C/B - K m^{(l_{\min})}$;
while $\tilde{C} > 0$ and $\min_k \gamma_k < 0$ do
    $\acute{k} \leftarrow \arg\min \{\gamma_1, \dots, \gamma_K\}$;
    if $\tilde{C} \ge m^{(l_{\acute{k}}+1)} - m^{(l_{\acute{k}})}$ then
        $l_{\acute{k}} \leftarrow l_{\acute{k}} + 1$;
        $\gamma_{\acute{k}} \leftarrow \gamma_{\acute{k}, l_{\acute{k}}}$;
        $M_{\acute{k}} \leftarrow m^{(l_{\acute{k}})}$;
        $\tilde{C} \leftarrow \tilde{C} - (m^{(l_{\acute{k}})} - m^{(l_{\acute{k}}-1)})$;
    else
        $M_{\acute{k}} \leftarrow M_{\acute{k}} + \tilde{C}$;
        $\tilde{C} \leftarrow 0$;
    end
end

Note that $\Omega(m^{(l)}) = \tilde{\Omega}(m^{(l)})$ at any decrement point $m^{(l)}$ by construction, as illustrated in Figure 3. Hence, if for each $k$ the equality $M_k = m^{(l_k)}$ holds for some $l_k \in \{l_{\min}, \dots, L\}$, then $D_{\mathrm{avg}}(\mathbf{M})$ is equal to $\tilde{D}_{\mathrm{avg}}(\mathbf{M})$. Equivalently, if Algorithm 1 terminates in the if branch, then the resulting caching policy $\mathbf{M}$ satisfies $D_{\mathrm{avg}}(\mathbf{M}) = \tilde{D}_{\mathrm{avg}}(\mathbf{M})$. Now recall that, by construction, $\tilde{D}_{\mathrm{avg}}$ is a lower bound on $D_{\mathrm{avg}}$; since Algorithm 1 greedily solves the convex problem P3, this implies that $\mathbf{M}$ is also the optimal solution of the original problem P2. If Algorithm 1 terminates in the else branch, then the obtained policy is a suboptimal solution of P2. Nevertheless, it is always possible to ensure that the last cache allocation is made in the if branch by increasing the cache size $C$ by at most $F/2$ bits, since $(m^{(l+1)} - m^{(l)}) B \le BT/2 = F/2$ for any $l$.

B. Average delay constrained cost minimization

In some cases, it may not be possible to satisfy the $D_{\max}$ constraint for all files in the library due to the limited cache capacity. Furthermore, the average re-buffering delay can be a predefined system parameter, denoted by $D_{\mathrm{avgMax}}$, in order to offer a certain QoS to the users; however, the average delay obtained from the solution of P2 may not satisfy this requirement. As a result, some of the least popular files are not cached at all, and the requests for these files are offloaded to the MBS. Let $\mathcal{A} = \{k : M_k > 0\}$ be the set of cached videos, and denote by $\Theta$ the average amount of data (normalized by the file size) that needs to be downloaded from the MBS; then $\Theta = \sum_{k \notin \mathcal{A}} p_k$. Our goal is to find the coded caching policy $\mathbf{M}$ that minimizes $\Theta$; thus, we have the following optimization problem:
$$\textbf{P4:} \quad \min_{\mathbf{M}} \; \Theta(\mathbf{M}) = \sum_{k \notin \mathcal{A}} p_k$$
subject to:
$$D_{\mathrm{avg}}(\mathbf{M}) \le D_{\mathrm{avgMax}}, \tag{14}$$
$$M_k \ge m^{(l_{\min})}, \quad \forall k \in \mathcal{A}, \tag{15}$$
$$\sum_{k=1}^{K} M_k \le C/B. \tag{16}$$
Constraint (14) is the maximum average delay requirement, (15) is the fairness constraint for the locally cached files, and (16) imposes the cache capacity constraint.

Algorithm 2: Delay constrained cost minimization
Input: $B$, $C$, $D_{\mathrm{avgMax}}$
Output: $\mathbf{M}$
$M_k \leftarrow 0$, $k \in \{1, \dots, K\}$;
$\tilde{C} \leftarrow C/B$;
for $k \in \{1, \dots, K\}$ do
    if $\tilde{C} \ge m^{(l_{\min})}$ then
        $M_k \leftarrow m^{(l_{\min})}$;
        $\tilde{C} \leftarrow \tilde{C} - m^{(l_{\min})}$;
    end
end
execute Algorithm 1 on the cached files;
while $D_{\mathrm{avg}} > D_{\mathrm{avgMax}}$ do
    $k \leftarrow \arg\min \{p_i : i \in \{1, \dots, K\},\ M_i > 0\}$;
    $M_k \leftarrow 0$;
    execute Algorithm 1 on the cached files;
end

Due to constraint (15), at most $\acute{K} = \min\left(\lfloor C/(B\, m^{(l_{\min})}) \rfloor, K\right)$ different files can be stored in the SBS caches. If the $\acute{K}$ most popular files are cached according to the delay constraint $D_{\max}$, with $m^{(l_{\min})} B$ bits allocated to each file, then the cache memory size and fairness constraints are satisfied. If constraint (14) is also satisfied (e.g., when $D_{\mathrm{avgMax}} \ge D_{\max}$), then this assignment is optimal and no further steps are needed. Otherwise, in order to decrease the average delay $D_{\mathrm{avg}}$, the least popular file in $\mathcal{A}$ is removed, and Algorithm 1 is applied to find the optimal cache allocation for the remaining files. The use of Algorithm 1 ensures that the allocation yields the minimum possible average cumulative re-buffering delay for the given cache capacity constraint. Using this procedure, we increase the average cost by the least possible amount while decreasing the average delay by the largest possible amount. This step is repeated until all the constraints are satisfied. The overall procedure is given in Algorithm 2.

IV. NUMERICAL RESULTS

A. Simulation setup

In this section, we evaluate the performance of the coded caching policies described in Algorithms 1 and 2. For the simulations, we consider a video library of 10000 files. The popularity of the files is modeled using a Zipf distribution with parameter $w$, which adjusts its skewness. In the simulations, we consider $w \in \{0.75, 0.85, 0.95\}$ and $T = 10$, and we set $D_{\max} = 10$. We consider two different scenarios.
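For experimenting with Algorithms 1 and 2 from Section III, the following is a minimal Python sketch, not the authors' code. It assumes that $C/B$ is an integer (passed as `cap`), that the popularities `p` are sorted in decreasing order, that `l_min` is a 0-based level index, and that the average delay is summed over the cached files only; all function names are hypothetical.

```python
# Greedy sketch of Algorithm 1 and Algorithm 2 (Section III).
# ASSUMPTIONS (not from the paper): cap = C/B is an integer, p is sorted in
# decreasing order of popularity, l_min is a 0-based level index, and the
# average delay is summed over cached files only. Names are hypothetical.


def omega(M, T):
    return -(-T // M)  # ceil(T / M)


def levels_and_points(T):
    levels, points = [], []
    for M in range(1, T + 1):
        D = omega(M, T)
        if not levels or D < levels[-1]:
            levels.append(D)
            points.append(M)
    return levels, points


def algorithm1(p, T, l_min, cap, files):
    """Greedy cost-free delay minimisation on the piecewise-linear relaxation."""
    D, m = levels_and_points(T)
    L = len(D)

    def slope(k, l):
        # gamma_{k,l}: slope of p_k * Omega~ on (m^(l), m^(l+1)]; 0 once fully stored
        if l + 1 >= L:
            return 0.0
        return p[k] * (D[l + 1] - D[l]) / (m[l + 1] - m[l])

    M = {k: m[l_min] for k in files}
    lev = {k: l_min for k in files}
    left = cap - m[l_min] * len(files)  # capacity left after the initial phase
    while left > 0 and files:
        k = min(files, key=lambda i: slope(i, lev[i]))  # most negative slope
        if slope(k, lev[k]) == 0.0:
            break  # every cached file is already stored in full
        step = m[lev[k] + 1] - m[lev[k]]
        if left >= step:  # "if" branch: move file k to its next decrement point
            lev[k] += 1
            M[k] = m[lev[k]]
            left -= step
        else:  # "else" branch: spend the remaining capacity on file k
            M[k] += left
            left = 0
    return M


def avg_delay(p, T, M):
    """Sum of p_k * ceil(T / M_k) over the cached files in M."""
    return sum(p[k] * omega(Mk, T) for k, Mk in M.items())


def algorithm2(p, T, l_min, cap, D_avg_max):
    """Delay-constrained cost minimisation: drop least popular files as needed."""
    _, m = levels_and_points(T)
    files = list(range(min(cap // m[l_min], len(p))))  # K' most popular files
    M = algorithm1(p, T, l_min, cap, files)
    while files and avg_delay(p, T, M) > D_avg_max:
        files.pop()  # remove the least popular cached file
        M = algorithm1(p, T, l_min, cap, files)
    cost = sum(p[k] for k in range(len(p)) if k not in M)  # Theta
    return M, cost
```

For example, with `p = [0.5, 0.3, 0.2]`, `T = 4`, `l_min = 0` and `cap = 6`, `algorithm1` returns $M_k = 2$ for all three files, every step ends in the if branch, and the result coincides with the exhaustive optimum of P2 for this tiny instance. With the same inputs and `D_avg_max = 1.5`, `algorithm2` drops the least popular file and returns `{0: 4, 1: 2}` with cost $\Theta = 0.2$.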
In the first scenario, we consider cache sizes, normalized over the library size, of $\hat{C} \in [0.1, 0.7]$. For these cache sizes, the maximum delay constraint $D_{\max}$ can be satisfied for every video file; hence, in the first part of the simulations we analyze the average cumulative re-buffering delay. In the second scenario, we consider $\hat{C} = 0.08$, for which the maximum delay constraint $D_{\max}$ cannot be satisfied for all the files; thus, in the second part of the simulations we analyze the trade-off between the average cost and the average cumulative re-buffering delay.

[Fig. 4: Cumulative buffering delay versus cache size for D_max = 10 slots. Panels: (a) w = 0.75, (b) w = 0.85, (c) w = 0.95. x-axis: cache size normalised over video library size; y-axis: average buffering delay (in time slots). Curves: Delay Aware Caching, Caching Most Popular files, Caching files equally.]

B. Simulation results

In the simulations, we consider two benchmarks, namely most popular file caching (MPFC) and equal file caching (EFC). In MPFC, a cache size sufficient to satisfy $D_{\max}$ is initially allocated to all files; then, starting from the most popular file, the allocated cache size is made equal to the file size until no space is left in the caches of the SBSs. In EFC, we use the same initial cache size allocation; then, starting from the most popular file, the allocated cache size is increased to the next decrement point.
Once the cache size of each file has been aligned to the next decrement point, we go back to the most popular file and repeat the process until no empty space is left in the caches.

In the first simulation scenario, the cost-free delay minimization algorithm is executed, and the results are shown in Figure 4. The average cumulative re-buffering delay of the system is plotted against the available cache size for the proposed caching scheme and the two benchmarks, for three different values of $w$. The proposed caching policy outperforms both benchmarks in all the scenarios, and at some cache sizes the average delay is reduced by up to 35% with respect to the best-performing benchmark at that cache size. Figure 4 also shows that for highly skewed distributions (libraries with a few very popular videos), MPFC performs closer to the proposed algorithm, while for less skewed distributions, EFC is closer.

In the second simulation scenario, in which the cache size is not sufficient to satisfy the delay constraint $D_{\max}$ for all files, Algorithm 2 is executed, and its performance is compared with the MPFC and EFC policies in Figure 5. MPFC with a given $D_{\mathrm{avgMax}}$ constraint is executed according to the following strategy: first, the $\lfloor C/(B\, m^{(l_{\min})}) \rfloor$ most popular files are cached according to the maximum allowed delay $D_{\max}$. If the average delay constraint is not satisfied, i.e., $D_{\max} > D_{\mathrm{avgMax}}$, then the least popular cached file is removed, and the freed cache memory is used for the most popular file that is not yet cached up to the maximum level. This procedure is repeated until the average delay constraint $D_{\mathrm{avgMax}}$ is satisfied for all the cached files. For the EFC benchmark, again after the initial step, if the average delay constraint $D_{\mathrm{avgMax}}$ is not satisfied, then the least popular file in the cache is removed.
The equal file caching algorithm described above is then applied to the files that remain in the cache. This procedure is repeated until the average delay constraint is satisfied for all the cached files. The three plots in Figure 5 show the relationship between the average cost and the average delay constraint $D_{\mathrm{avgMax}}$. The proposed solution exhibits a significant improvement compared with the benchmark policies. For example, for $w = 0.95$ and $D_{\mathrm{avgMax}} = 2$, the average cost is reduced by 30% and 44% with respect to EFC and MPFC, respectively. As expected, the tighter the average delay constraint $D_{\mathrm{avgMax}}$, the higher the cost. Lastly, for all three caching policies, the cost decreases as the skewness coefficient $w$ increases. This is attributed to the fact that, for more skewed distributions, the less popular files have even lower popularity.

[Fig. 5: Average cost versus maximum average delay constraint for highly mobile users and T = 10 slots. Panels: (a) w = 0.75, (b) w = 0.85, (c) w = 0.95. x-axis: D_avgMax (in time slots); y-axis: average cost normalised over file size. Curves: Delay Aware Caching, Caching Most Popular files, Caching files equally.]

V. CONCLUSION

We studied the cache capacity-delay trade-off in heterogeneous networks, with a focus on continuous video display for streaming applications. We first proposed a caching policy that minimizes the average cumulative re-buffering delay under the high mobility assumption. We then considered a scenario in which the average cumulative re-buffering delay is a given system requirement, and introduced a caching policy that minimizes the amount of data downloaded from the MBS while satisfying this requirement. Numerical simulations have been presented, showcasing the improved performance of the proposed caching policy in comparison with other benchmark caching policies. General user mobility patterns will be studied as a future extension of this work.

REFERENCES

[1] Sandvine, “2016 Global internet phenomena report: LATIN AMERICA & NORTH AMERICA,” White Paper, June 2016.
[2] Cisco, “Cisco visual networking index: Forecast and methodology, 2016-2021,” White Paper, June 2017.
[3] W. Jiang, G. Feng, and S. Qin, “Optimal cooperative content caching and delivery policy for heterogeneous cellular networks,” IEEE Trans. Mobile Comput., vol. 16, no. 5, pp. 1382–1393, May 2017.
[4] M. Dehghan, B. Jiang, A. Seetharam, T. He, T. Salonidis, J. Kurose, D. Towsley, and R. Sitaraman, “On the complexity of optimal request routing and content caching in heterogeneous cache networks,” IEEE/ACM Trans. Netw., vol. 25, no. 3, pp. 1635–1648, June 2017.
[5] K. Poularakis, G. Iosifidis, and L. Tassiulas, “Approximation algorithms for mobile data caching in small cell networks,” IEEE Trans. Commun., vol. 62, no. 10, pp. 3665–3677, Oct. 2014.
[6] K. Shanmugam, N. Golrezaei, A. G. Dimakis, A. F. Molisch, and G. Caire, “FemtoCaching: Wireless content delivery through distributed caching helpers,” IEEE Trans. Inf. Theory, vol. 59, no. 12, pp. 8402–8413, Dec. 2013.
[7] J. Liao, K. K. Wong, Y. Zhang, Z. Zheng, and K. Yang, “Coding, multicast, and cooperation for cache-enabled heterogeneous small cell networks,” IEEE Trans. Wireless Commun., vol. 16, no. 10, pp. 6838–6853, Oct. 2017.
[8] R. Wang, X. Peng, J. Zhang, and K. B. Letaief, “Mobility-aware caching for content-centric wireless networks: modeling and methodology,” IEEE Commun. Mag., vol. 54, no. 8, pp. 77–83, Aug. 2016.
[9] K. Poularakis and L. Tassiulas, “Code, cache and deliver on the move: A novel caching paradigm in hyper-dense small-cell networks,” IEEE Trans. Mobile Comput., vol. 16, no. 3, pp. 675–687, Mar. 2017.
[10] E. Ozfatura and D. Gündüz, “Mobility and popularity-aware coded small-cell caching,” IEEE Commun. Lett., vol. 22, no. 2, pp. 288–291, Feb. 2018.
[11] K. Kanai, T. Muto, J. Katto, S. Yamamura, T. Furutono, T. Saito, H. Mikami, K. Kusachi, T. Tsuda, W. Kameyama, Y. J. Park, and T. Sato, “Proactive content caching for mobile video utilizing transportation systems and evaluation through field experiments,” IEEE J. Sel. Areas Commun., vol. 34, no. 8, pp. 2102–2114, Aug. 2016.
[12] E. Ozfatura and D. Gündüz, “Mobility-aware coded storage and delivery,” in ITG Workshop on Smart Antennas (WSA), Mar. 2018.
