Creating a contemporary corpus of similes in Serbian by using natural language processing

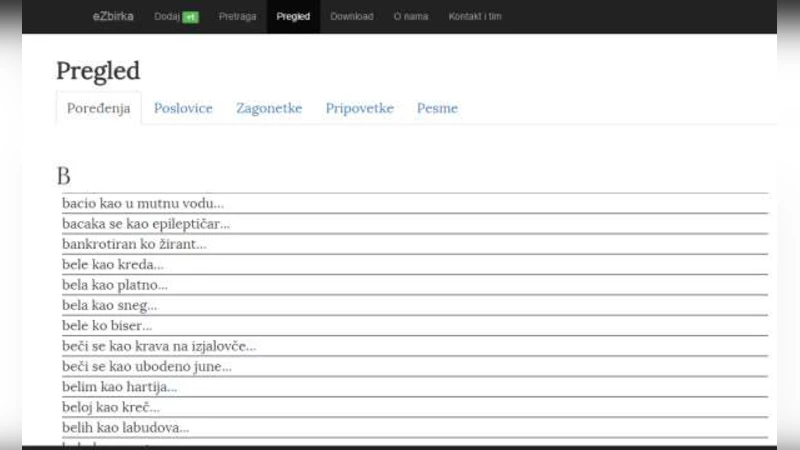

A simile is a figure of speech that compares two things through the use of connecting words, where the comparison is not intended to be taken literally. Similes are often used in everyday communication, but they are also part of the linguistic cultural heritage. In this paper we present a methodology for the semi-automated collection of similes from the World Wide Web using text mining and machine learning techniques. We expanded an existing corpus, collected by Vuk Stefanović Karadžić and containing 333 similes, by adding 442 similes collected from the internet. We also introduce crowdsourcing to the collection of figures of speech, which helped us build a corpus containing 787 unique similes.

💡 Research Summary

The paper presents a comprehensive methodology for building a contemporary Serbian simile corpus by leveraging web mining, natural language processing (NLP), machine learning, and crowdsourcing. The authors begin by designing a custom web crawler that harvests large volumes of Serbian‑language text from diverse online sources such as news portals, blogs, forums, and literary websites. After collecting roughly 12 TB of raw HTML, they perform rigorous preprocessing: UTF‑8 normalization, HTML tag stripping, duplicate page removal, and language detection to ensure only Serbian content remains.
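The preprocessing steps described above can be illustrated with a minimal sketch using only the Python standard library. This is not the authors' implementation: the class and function names (`TextExtractor`, `html_to_text`, `deduplicate`) are hypothetical, exact-hash deduplication is an assumed simplification, and language detection is left out because it would need an external model.

```python
import hashlib
import unicodedata
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text and skips the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.parts.append(data)


def html_to_text(raw_html):
    """Strip tags, normalize Unicode to NFC, and collapse whitespace."""
    parser = TextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = unicodedata.normalize("NFC", " ".join(parser.parts))
    return " ".join(text.split())


def deduplicate(pages):
    """Keep the first page for each distinct cleaned text (exact match only)."""
    seen = set()
    unique = []
    for raw_html in pages:
        text = html_to_text(raw_html)
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if text and digest not in seen:
            seen.add(digest)
            unique.append(text)
    return unique


pages = [
    "<p>Brz kao zec</p>",
    "<div>Brz  kao zec</div><script>var x = 1;</script>",
    "<p>Jak kao bik</p>",
]
cleaned = deduplicate(pages)
```

Hashing the cleaned text rather than the raw HTML means that pages differing only in markup collapse into one entry; near-duplicate detection would need a fuzzier technique.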

Next, the cleaned text undergoes tokenization and part‑of‑speech tagging using a Serbian morphological analyzer. The core of the candidate extraction stage relies on the observation that similes in Serbian are typically introduced by the connective “kao” (or its orthographic variants “ka”, “k’o”). The authors define regular‑expression patterns that capture both the classic “noun + kao + noun/adjective” construction and more flexible forms such as “verb + kao + phrase”. Applying these patterns to the entire corpus yields about 85 000 candidate sentences, many of which are ordinary comparisons or noisy matches.
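A surface-level version of the pattern-based extraction might look like the sketch below. The summary does not give the exact expressions, so the regular expression, the naive sentence splitter, and the name `extract_candidates` are all assumptions. The sketch also ignores part-of-speech tags, which the described patterns rely on.

```python
import re

# Connective "kao" and the variants mentioned above; both straight and
# typographic apostrophes are accepted for "k'o".
CONNECTIVE = r"(?:kao|k['’]o|ka)"

# One word before the connective, then one or two words after it.
PATTERN = re.compile(
    rf"(?P<left>\w+)\s+(?P<conn>{CONNECTIVE})\s+(?P<right>\w+(?:\s+\w+)?)",
    re.IGNORECASE,
)


def extract_candidates(text):
    """Return (sentence, left, connective, right) for each matching sentence."""
    sentences = re.split(r"(?<=[.!?])\s+", text)
    candidates = []
    for sentence in sentences:
        match = PATTERN.search(sentence)
        if match:
            candidates.append(
                (
                    sentence.strip(),
                    match.group("left"),
                    match.group("conn"),
                    match.group("right"),
                )
            )
    return candidates


sample = "On je brz kao zec. Danas pada kiša. Radi k'o mašina!"
found = extract_candidates(sample)
```

A pattern this loose will also match literal comparisons and the preposition "ka" ("toward"), which is consistent with the summary's remark that many candidates are noisy and need a later filtering step.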

To filter out false positives, the authors train supervised classifiers on a manually labeled set of 500 examples (positive similes vs. non‑similes). Feature engineering includes n‑grams, dependency‑tree paths, POS sequences surrounding the connective, and semantic similarity scores derived from a Serbian Word2Vec model. They compare Support Vector Machines and Random Forests, finding that the Random Forest achieves the best performance (precision = 0.91, recall = 0.84, F1 = 0.87) in 10‑fold cross‑validation. Applying this model reduces the candidate set to roughly 12 000 high‑confidence simile candidates.
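As a quick consistency check on the reported scores, the sketch below computes F1 from precision and recall and shows how a 10-fold split over 500 labeled examples partitions the data. The function names are hypothetical, the folds are not shuffled or stratified (a real evaluation would normally do both), and no classifier is trained here.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for k contiguous folds."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [
        n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)
    ]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


reported_f1 = f1_score(0.91, 0.84)   # about 0.8736
folds = list(k_fold_indices(500, 10))
```

With precision 0.91 and recall 0.84 the harmonic mean rounds to 0.87, matching the stated F1, and each of the ten folds holds out 50 examples while training on the remaining 450.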

Recognizing that automated methods inevitably miss nuanced linguistic cues, the authors introduce a crowdsourcing platform for human validation. Each candidate sentence is evaluated by at least three contributors, who decide whether it truly functions as a simile and, if necessary, edit or enrich the entry. Disagreements trigger a review by expert linguists (Serbian language teachers and researchers). This human‑in‑the‑loop stage adds 442 newly verified similes to the corpus.
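The validation rule described here can be sketched as a small aggregation function. The summary does not state how disagreement is defined, so this sketch assumes that any non-unanimous set of votes is escalated to experts; the status strings and the name `aggregate_votes` are hypothetical.

```python
def aggregate_votes(votes, min_votes=3):
    """Decide the status of one candidate from boolean contributor votes.

    votes: list of bool, True meaning "this is a simile".
    Assumption: any disagreement is escalated to expert review.
    """
    if len(votes) < min_votes:
        return "needs_more_votes"
    if all(votes):
        return "accepted"
    if not any(votes):
        return "rejected"
    return "expert_review"


statuses = {
    "brz kao zec": aggregate_votes([True, True, True]),
    "radi kao konobar": aggregate_votes([False, False, False]),
    "hladan kao led": aggregate_votes([True, False, True]),
    "jak kao bik": aggregate_votes([True, True]),
}
```

A majority-vote rule would send fewer items to experts; the unanimous rule used here is the stricter reading of "disagreements trigger a review".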

The final corpus combines these 442 modern entries with the historic collection compiled by Vuk Stefanović Karadžić, which contained 333 similes. Together these account for 775 entries; the abstract reports 787 unique Serbian similes for the corpus built with the help of crowdsourcing. Quality assessment shows strong inter‑annotator agreement (Cohen’s κ = 0.78) for the crowdsourced judgments and confirms the robustness of the automated pipeline (overall precision = 0.91, recall = 0.84). The authors argue that the corpus is valuable for computational linguistics tasks such as metaphor detection, text generation, and sentiment analysis, as well as for cultural and literary studies that examine figurative language as part of intangible heritage.
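For readers unfamiliar with the agreement statistic, the sketch below computes Cohen's κ for two annotators from first principles. The labels are made-up toy data, not the study's annotations, and the function name is hypothetical. Cohen's κ is defined for a pair of raters, so how it was applied to three or more contributors per item is not specified in the summary.

```python
def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement by chance, from each annotator's label frequencies.
    categories = set(labels_a) | set(labels_b)
    expected = sum(
        (labels_a.count(c) / n) * (labels_b.count(c) / n) for c in categories
    )
    if expected == 1.0:
        return 1.0
    return (observed - expected) / (1 - expected)


# Toy data: 1 = simile, 0 = not a simile.
annotator_a = [1, 1, 1, 0, 0, 0, 1, 0, 1, 1]
annotator_b = [1, 1, 0, 0, 0, 0, 1, 0, 1, 1]
kappa = cohens_kappa(annotator_a, annotator_b)
```

In this toy case the annotators agree on 9 of 10 items (observed 0.9), chance agreement is 0.5, and κ comes out at 0.8.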

The paper’s contributions are fourfold: (1) a reproducible end‑to‑end pipeline that integrates web crawling, linguistic preprocessing, pattern‑based candidate extraction, and machine‑learning filtering; (2) a substantial expansion of the Serbian simile resource, increasing its size by a factor of roughly 2.4 (from 333 to 787 entries); (3) the incorporation of crowdsourcing as a quality‑control mechanism, demonstrating that non‑expert annotators can reliably contribute to figurative‑language annotation when given clear guidelines and expert arbitration; and (4) the release of the resulting 787‑entry corpus for public use, encouraging further research in Slavic figurative language processing.

Future work outlined by the authors includes exploring deep‑learning architectures such as BERT‑based models fine‑tuned for metaphor detection, extending the methodology to related South‑Slavic languages (Croatian, Bosnian, Montenegrin) to enable cross‑linguistic comparative studies, and integrating the corpus into educational tools that teach figurative language to learners of Serbian. The authors also plan to host the dataset on an open‑access repository with a web interface that allows researchers to query, download, and contribute additional examples, thereby fostering a collaborative community around the preservation and computational analysis of Serbian cultural heritage.
