Limits on Sparse Data Acquisition: RIC Analysis of Finite Gaussian Matrices

One of the key issues in the acquisition of sparse data by means of compressed sensing (CS) is the design of the measurement matrix. Gaussian matrices have been proven to be information-theoretically optimal in terms of minimizing the required number…

Authors: Ahmed Elzanaty, Andrea Giorgetti, Marco Chiani

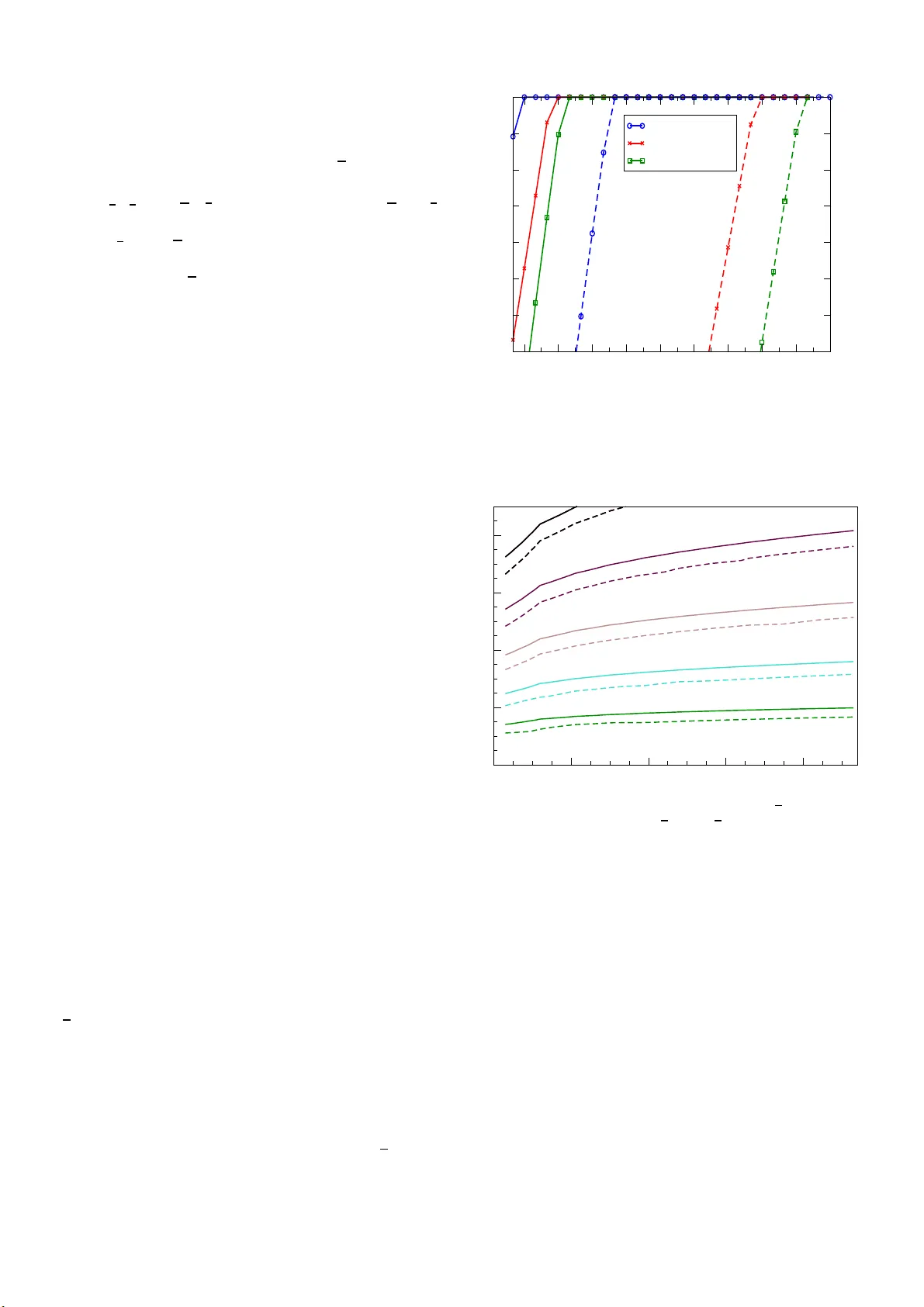

ACCEPTED FOR PUBLICATION IN IEEE TRANS. INF. THEORY

Ahmed Elzanaty, Student Member, IEEE, Andrea Giorgetti, Senior Member, IEEE, and Marco Chiani, Fellow, IEEE

Abstract: One of the key issues in the acquisition of sparse data by means of compressed sensing (CS) is the design of the measurement matrix. Gaussian matrices have been proven to be information-theoretically optimal in terms of minimizing the required number of measurements for sparse recovery. In this paper we provide a new approach for the analysis of the restricted isometry constant (RIC) of finite dimensional Gaussian measurement matrices. The proposed method relies on the exact distributions of the extreme eigenvalues for Wishart matrices. First, we derive the probability that the restricted isometry property is satisfied for a given sufficient recovery condition on the RIC, and propose a probabilistic framework to study both the symmetric and asymmetric RICs. Then, we analyze the recovery of compressible signals in noise through the statistical characterization of stability and robustness. The presented framework determines limits on various sparse recovery algorithms for finite size problems. In particular, it provides a tight lower bound on the maximum sparsity order of the acquired data allowing signal recovery with a given target probability. Also, we derive simple approximations for the RICs based on the Tracy-Widom distribution.

Index Terms: Data acquisition, compressed sensing, restricted isometry property, Wishart matrices, Gaussian measurement matrices, sparse reconstruction, robust recovery.

I. INTRODUCTION

Compressed sensing (CS) is an acquisition technique for efficiently recovering a signal from a small set of linear measurements, provided that the sensed data is sparse, i.e., the number of its non-zero elements, $s$, is much less than its dimension $n$. If properly chosen, the number of measurements, $m$, can be much smaller than the signal dimension [1]–[7]. CS based techniques have been exploited to provide efficient solutions for several problems in signal processing and communication, e.g., source and channel coding, cryptography, random access, radar, channel estimation, and sub-Nyquist data acquisition [8]–[16]. The usability of such applications depends on the maximum sparsity order $s$ for which recovery is guaranteed with high probability for given $m$ and $n$. The three main approaches to find the maximum sparsity order $s$ guaranteeing recovery of all sparse vectors are based on restricted isometry property (RIP) analysis, geometric methods, and coherence analysis. The RIP describes how well a linear transformation preserves distances between sparse vectors, and is quantified by the so-called restricted isometry constant (RIC) [1].

This work was supported in part by the European Commission under the EuroCPS project and the EU-METALIC II project, within the framework of Erasmus Mundus Action 2. This paper was presented in part at the IEEE Statistical Signal Processing Workshop (SSP), Spain, June 2016. The authors are with the DEI, University of Bologna, Via Venezia 52, 47521 Cesena, ITALY (e-mail: {ahmed.elzanaty, andrea.giorgetti, marco.chiani}@unibo.it). Copyright (c) 2017 IEEE. Personal use of this material is permitted. However, permission to use this material for any other purposes must be obtained from the IEEE by sending a request to pubs-permissions@ieee.org.
In general, the smaller the RIC, the closer the transformation is to an isometry (a precise definition of the RIC is given later). Geometric methods are useful for the recovery analysis of exactly sparse signals via $\ell_1$-minimization in the noiseless case [17]–[20]. Sparse reconstruction can also be studied by looking at the coherence of the measurement matrix. However, the resulting bounds are too pessimistic compared to RIP-based bounds [21, eq. (6.9) and eq. (6.14)]. This significant gap justifies preferring the RIP-based analysis whenever bounding the RIC is feasible. Furthermore, non-uniform recovery guarantees, like those based on Gaussian widths, provide tight bounds for the reconstruction of a fixed sparse vector, in contrast to the RIP method, which considers the recovery of all sparse vectors (uniform recovery) [21], [22]. Moreover, the RIP theory is more general than the geometric approach, as it also covers stability for compressible signals and robustness to noise, under different measurement matrices, for a wider range of sparse recovery algorithms. In fact, sufficient conditions for exact recovery have been obtained for several algorithms in terms of the RIC (see, e.g., [1], [23]–[28] for $\ell_1$-minimization, [29], [30] for iterative hard thresholding (IHT), and [31]–[34] for greedy algorithms). It has been shown by information-theoretic methods that Gaussian random matrices with independent, identically distributed (i.i.d.) entries are optimal in terms of minimizing the number of measurements required for recovery [35]. Hence, precisely analyzing the RIP of such matrices is important. In fact, Gaussian matrices have been proved to satisfy the RIP with overwhelming probability [1], [3].
The two main tools adopted for the proof are the concentration of measure inequality for the distribution of the extreme eigenvalues of a Wishart matrix, and the union bound, which accounts for all possible signal supports. However, if the aim is to quantify the maximal allowable sparsity order $s$ for a given number of measurements, the use of the concentration inequalities leads to overly pessimistic results. In this regard, an improved analysis was presented in [36]–[38] by bounding the asymptotic behavior of the distributions given in [39] for the extreme eigenvalues of a Wishart matrix, instead of using the concentration inequalities. Explicit bounds for the RIC have been obtained in some specific asymptotic regions [38], but no bounds are known in the general non-asymptotic setting. In fact, for finite measurement matrices the asymptotic analysis of the eigenvalues in [36]–[38] gives approximations of the true distributions; therefore, they cannot provide guaranteed bounds for a particular problem dimension $(s, m, n)$.

This paper provides an accurate statistical analysis of the RIC for finite dimensional Gaussian measurement matrices, supporting the design of real CS applications (which always involve finite size problems) with guaranteed recovery probability. In particular, we calculate the tightest, to our knowledge, lower bound on the probability of satisfying the RIP for an arbitrary condition on the RIC. For a specified number of measurements, the maximal sparsity order can then be found such that perfect recovery is feasible for all $s$-sparse vectors, i.e., the matrix satisfies the RIP, with a target probability $1-\epsilon$ of successful recovery over a random draw of the measurement matrix.
In contrast, the usually adopted asymptotic setting considers that this probability tends to 1 (overwhelming probability). To get better estimates on the maximal sparsity order, tight lower bounds on the cumulative distribution functions (CDFs) of the asymmetric RICs (ARICs) are derived, based on the exact probability that the extreme singular values of a Gaussian submatrix are within a range. Hence, starting from the derived CDFs, we can find thresholds below which the ARICs lie with a predefined probability. These percentiles allow us to calculate a lower bound on the maximal recoverable signal sparsity order for several reconstruction methods, such as $\ell_1$-minimization, greedy, and IHT algorithms. The new analysis is used in conjunction with recovery conditions relaxed to asymmetric boundaries, as suggested in [36], to prove exact recovery for signals with larger sparsity orders. In this regard, we relax the symmetric RIC based condition in [28] to a weaker asymmetric one. Additionally, we provide approximations for the RIC CDFs based on the Tracy-Widom (TW) distribution, along with a convergence investigation. In comparison with previous literature, the proposed analysis gives, for finite dimensional problems, a better estimate of the signal sparsity allowing guaranteed recovery.

The contributions of this paper can be summarized as follows:

• Accurate symmetric and asymmetric RIC analysis for finite dimensional problems, accounting for the exact distribution of finite Gaussian matrices (unlike previous methods based on the asymptotic behavior of the distributions or on loose concentration of measure bounds).
• Limits on compressive data acquisition in terms of the maximum achievable sparsity order guaranteeing an arbitrary target reconstruction probability (instead of the common overwhelming probability approach) via various recovery algorithms.
• Accurate study of stable and robust recovery of compressible signals with tight bounds on the reconstruction error.
• Simple approximations for the RICs based on the TW laws.

Throughout this paper, we indicate with $\det(\cdot)$ the determinant of a matrix, with $\mathrm{card}(\cdot)$ the cardinality of a set, with $\|\cdot\|_q = \left(\sum_{i=1}^{n} |x_i|^q\right)^{1/q}$ the $\ell_q$ norm of an $n$-dimensional vector, with $\|\cdot\|$ the $\ell_2$ norm, with $\Gamma(\cdot)$ the gamma function, with $\gamma(a; x, y) = \int_x^y t^{a-1} e^{-t}\,dt$ the generalized incomplete gamma function, with $P(a, x) = \frac{1}{\Gamma(a)}\gamma(a; 0, x)$ the regularized lower incomplete gamma function, with $P(a; x, y) = \frac{1}{\Gamma(a)}\int_x^y t^{a-1} e^{-t}\,dt = P(a, y) - P(a, x)$ the generalized regularized incomplete gamma function, and with $\mathcal{N}(\mu, \sigma^2)$ the Gaussian distribution with mean $\mu$ and variance $\sigma^2$.

II. MATHEMATICAL BACKGROUND

Compressed sensing allows recovering a signal from a small number of linear measurements, under some constraints on both the sensed signal and the sensing system. More precisely, assume that we have

$$y = Ax \tag{1}$$

where $y \in \mathbb{R}^m$ and $A \in \mathbb{R}^{m \times n}$ are known, the number of equations is $m < n$, and $x \in \mathbb{R}^n$ is the unknown. Since $m < n$, we can think of $y$ as a compressed version of $x$. Without other constraints, the system is underdetermined, and there are infinitely many distinct solutions of (1). If we assume that at most $s < m$ elements of $x$ are non-zero (i.e., the vector is $s$-sparse), then there is a unique $s$-sparse solution (the right one) to (1), provided that all possible submatrices consisting of $2s$ columns of $A$ have maximum rank. The solution can be found by solving the following $\ell_0$-minimization [1]

$$\hat{x} = \arg\min \|x\|_0 \quad \text{subject to} \quad y = Ax \tag{2}$$

where $\|x\|_0$ is the number of non-zero elements of $x$.
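To make the combinatorial nature of (2) concrete, the sketch below solves it by exhaustive search over supports of increasing size, fitting each candidate support by least squares. It is an illustration only, assuming NumPy; the function name `l0_exhaustive` and the tolerance are our own choices, not part of the paper. The number of supports tried grows like $\binom{n}{s}$, which is exactly why (2) is impractical at realistic dimensions.

```python
import itertools

import numpy as np


def l0_exhaustive(A, y, s_max, rtol=1e-9):
    """Brute-force solver for the l0 problem (2): sparsest x with A x = y.

    Tries every support S of size 1, 2, ..., s_max and returns the first
    (hence sparsest) x whose restriction A_S reproduces y. Returns None if
    no support of size <= s_max fits.
    """
    m, n = A.shape
    if np.linalg.norm(y) == 0.0:
        return np.zeros(n)
    for k in range(1, s_max + 1):
        for S in itertools.combinations(range(n), k):
            cols = list(S)
            A_S = A[:, cols]
            c, *_ = np.linalg.lstsq(A_S, y, rcond=None)  # best fit on this support
            if np.linalg.norm(A_S @ c - y) <= rtol * np.linalg.norm(y):
                x = np.zeros(n)
                x[cols] = c
                return x
    return None


# Tiny example: a 2-sparse x in R^16 observed through 8 Gaussian measurements.
rng = np.random.default_rng(0)
m, n = 8, 16
A = rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n))  # entries ~ N(0, 1/m)
x_true = np.zeros(n)
x_true[[3, 11]] = [1.5, -2.0]
x_hat = l0_exhaustive(A, A @ x_true, s_max=3)
```

Here every set of $2s = 4$ columns of the $8 \times 16$ Gaussian matrix has full rank with probability one, so the true support is the only 2-column support that fits $y$.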
However, even when the maximum rank condition is satisfied, the solution of (2) is computationally prohibitive for dimensions of practical interest. A much easier problem is to find the $\ell_1$-minimization solution. It is proved in [1], under some conditions on $A$, that the solution provided by the $\ell_1$-minimization

$$\hat{x} = \arg\min \|x\|_1 \quad \text{subject to} \quad y = Ax \tag{3}$$

is the same as that of (2). The conditions on $A$ are given in terms of the RIC.

Definition 1 (The RIC [1]). The RIC of order $s$ of $A$, $\delta_s(A)$, is the smallest constant, larger than zero, such that the inequalities

$$1 - \delta_s(A) \le \frac{\|A_S c\|^2}{\|c\|^2} \le 1 + \delta_s(A) \tag{4}$$

are simultaneously satisfied for every $c \in \mathbb{R}^s$ and every $m \times s$ submatrix $A_S$ of $A$ with columns indexed by $S \subset \Omega \triangleq \{1, 2, \ldots, n\}$ with $\mathrm{card}(S) = s$. Under this condition, the matrix $A$ is said to satisfy the RIP of order $s$ with constant $\delta_s(A)$.

Specifically, the importance of the RIP in CS comes from the possibility of using the computationally feasible $\ell_1$-minimization instead of the impractical $\ell_0$ one, under some constraints on the RIC. For example, it was shown that the $\ell_1$ and the $\ell_0$ solutions coincide for every $s$-sparse vector $x$ if $\delta_s(A) < \delta$ with $\delta = 1/3$ [28].

The next question is how to design a matrix $A$ with a prescribed RIC. One possible way to design $A$ consists simply in randomly generating its entries according to some statistical distribution. In this case, for given $n$, $s$ and $\delta$, the target is to find a way to generate $A$ such that the probability $\mathbb{P}\{\delta_s(A) < \delta\}$ is close to one. An optimal choice is to build the measurement matrix $A$ with i.i.d. entries $a_{i,j} \sim \mathcal{N}(0, 1/m)$ [1], [35].
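Unlike (2), the $\ell_1$ problem (3) is a linear program. A minimal sketch, assuming SciPy's `scipy.optimize.linprog` with the HiGHS backend: write $x = u - v$ with $u, v \ge 0$, so that $\sum_i (u_i + v_i)$ equals $\|x\|_1$ at the optimum. The helper name `basis_pursuit` is ours, and the measurement matrix follows the i.i.d. $\mathcal{N}(0, 1/m)$ construction described above.

```python
import numpy as np
from scipy.optimize import linprog


def basis_pursuit(A, y):
    """Solve (3), min ||x||_1 s.t. A x = y, as a linear program.

    Writes x = u - v with u, v >= 0; minimizing sum(u) + sum(v) under
    A u - A v = y gives ||x||_1 at the optimum.
    """
    m, n = A.shape
    cost = np.ones(2 * n)
    A_eq = np.hstack([A, -A])
    res = linprog(cost, A_eq=A_eq, b_eq=y, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x[:n] - res.x[n:]


# Example: m = 40 Gaussian measurements of a 5-sparse vector in R^100.
rng = np.random.default_rng(1)
m, n, s = 40, 100, 5
A = rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n))  # a_ij ~ N(0, 1/m)
x_true = np.zeros(n)
support = rng.choice(n, size=s, replace=False)
x_true[support] = rng.normal(size=s)
x_hat = basis_pursuit(A, A @ x_true)
```

At these dimensions a single random draw typically recovers $x$ exactly; the RIC analysis in this paper is what turns "typically" into a quantified probability for all $s$-sparse vectors.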
Then, in order to find the number of measurements $m$ needed, we start by using the Rayleigh quotient inequality for a fixed $S$

$$\lambda_{\min}(W) \le \frac{\|A_S c\|^2}{\|c\|^2} \le \lambda_{\max}(W) \tag{5}$$

where $W = A_S^T A_S$, and $\lambda_{\min}(W)$ and $\lambda_{\max}(W)$ are its minimum and maximum eigenvalues, respectively. Considering that the inequalities in (4) should be satisfied for all the $s$-column submatrices of $A$, the RIC can be written as

$$\delta_s(A) = \max\left\{1 - \min_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\min}(W),\ \max_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\max}(W) - 1\right\}. \tag{6}$$

Hence, the probability that the measurement matrix satisfies the RIP with a RIC at most $\delta$, denoted as $\beta(\delta) \triangleq \mathbb{P}\{\delta_s(A) \le \delta\}$, is

$$\beta(\delta) = \mathbb{P}\left\{\min_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\min}(W) \ge 1 - \delta,\ \max_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\max}(W) \le 1 + \delta\right\}. \tag{7}$$

The union bound gives a lower bound for the probability of satisfying the RIP as

$$\beta(\delta) \ge 1 - \binom{n}{s}\left[1 - P_{\mathrm{sw}}(\delta)\right] \tag{8}$$

where $\binom{n}{s}$ is the binomial coefficient and $P_{\mathrm{sw}}(\delta)$ is the probability that $A_S$ is well conditioned, defined as

$$P_{\mathrm{sw}}(\delta) \triangleq \mathbb{P}\{1 - \delta \le \lambda_{\min}(W),\ \lambda_{\max}(W) \le 1 + \delta\}. \tag{9}$$

The probability $P_{\mathrm{sw}}(\delta)$ is of fundamental importance, since it determines the performance of CS. In the next section, an approach for exactly calculating (9) for Gaussian matrices is proposed.

III. EIGENVALUES STATISTICS

In this section, we start by recalling the known concentration inequality based bound on $1 - P_{\mathrm{sw}}(\delta)$, which is the approach used in [1], [2]. Then, an alternative method to find $P_{\mathrm{sw}}(\delta)$ for Gaussian measurement matrices is provided. The proposed technique relies on the exact probability that the eigenvalues of $W$ are within a predefined interval.

A. Eigenvalues Statistics Based on the Concentration Inequality

Deviation bounds for the largest and the smallest eigenvalues of the Wishart matrix $W$ are obtained using the concentration of measure inequality [1], [2], as

$$\mathbb{P}\left\{\sqrt{\lambda_{\max}(W)} \ge 1 + \sqrt{s/m} + o(1) + t\right\} \le e^{-mt^2/2} \tag{10}$$

and

$$\mathbb{P}\left\{\sqrt{\lambda_{\min}(W)} \le 1 - \sqrt{s/m} + o(1) - t\right\} \le e^{-mt^2/2} \tag{11}$$

where $t > 0$ and $o(1)$ is a small term tending to zero as $m$ increases, which will be neglected in the following. Using the inequality $\mathbb{P}\{A^c \cap B^c\} \ge 1 - \mathbb{P}\{A\} - \mathbb{P}\{B\}$, where $A$, $B$ are arbitrary events and $A^c$, $B^c$ are their complements (i.e., the union bound), we get

$$P_{\mathrm{sw}}(\delta) \ge 1 - e^{-\frac{m}{2}\left[\left(-1 - \sqrt{s/m} + \sqrt{1+\delta}\right)^+\right]^2} - e^{-\frac{m}{2}\left[\left(1 - \sqrt{s/m} - \sqrt{1-\delta}\right)^+\right]^2} \tag{12}$$

where $(x)^+ = \max\{0, x\}$. We will see later that this bound, which we use as a benchmark, is far from the exact probability.

B. Exact Eigenvalues Statistics

We propose a method to compute exactly the probability that a Wishart matrix is well conditioned, i.e., its eigenvalues are within a predefined interval. The method is based on the following recent result [40].

Theorem 1. The probability that all non-zero eigenvalues of the real Wishart matrix $M = G_S^T G_S$, where $G_S$ is an $m \times s$ matrix with entries $g_{i,j} \sim \mathcal{N}(0, 1)$, are within the interval $[a, b] \subset [0, \infty)$ is

$$\psi_{ms}(a, b) = \mathbb{P}\{a \le \lambda_{\min}(M),\ \lambda_{\max}(M) \le b\} = K' \sqrt{\det\left(Q(a, b)\right)} \tag{13}$$

with the constant

$$K' = \frac{\pi^{s^2/2}}{2^{sm/2}\,\Gamma_s(m/2)\,\Gamma_s(s/2)}\; 2^{\alpha s + s(s+1)/2} \prod_{\ell=1}^{s} \Gamma(\alpha + \ell)$$

where $\Gamma_s(a) \triangleq \pi^{s(s-1)/4} \prod_{i=1}^{s} \Gamma\left(a - (i-1)/2\right)$, and $\alpha = \frac{m - s - 1}{2}$. In (13), when $s$ is even the elements of the $s \times s$ skew-symmetric matrix $Q(a, b)$ are

$$q_{i,j} = \left[P\left(\alpha_j, \tfrac{b}{2}\right) + P\left(\alpha_j, \tfrac{a}{2}\right)\right] P\left(\alpha_i; \tfrac{a}{2}, \tfrac{b}{2}\right) - \frac{2}{\Gamma(\alpha_i)} \int_{a/2}^{b/2} x^{\alpha + i - 1} e^{-x} P(\alpha_j, x)\, dx \tag{14}$$

for $i, j = 1, \ldots, s$, where $\alpha_\ell = \alpha + \ell$.
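The benchmark (12) and the union bound (8) are simple enough to evaluate directly. The sketch below does so with the Python standard library only; the function names are ours, and the $o(1)$ terms are dropped exactly as in the text. It also shows how quickly $\binom{n}{s}$ makes (8) vacuous when it is fed with (12).

```python
import math


def psw_concentration_lb(m, s, delta):
    """Lower bound (12) on P_sw(delta), neglecting the o(1) terms as in the text."""
    if not 0.0 <= delta <= 1.0:
        raise ValueError("delta must lie in [0, 1]")
    r = math.sqrt(s / m)
    t_max = max(0.0, -1.0 - r + math.sqrt(1.0 + delta))  # (.)^+ term for lambda_max
    t_min = max(0.0, 1.0 - r - math.sqrt(1.0 - delta))   # (.)^+ term for lambda_min
    return 1.0 - math.exp(-0.5 * m * t_max**2) - math.exp(-0.5 * m * t_min**2)


def rip_union_lb(n, s, p_sw):
    """Union bound (8): beta(delta) >= 1 - C(n, s) * (1 - P_sw(delta))."""
    return 1.0 - math.comb(n, s) * (1.0 - p_sw)


p = psw_concentration_lb(m=1000, s=10, delta=0.5)
beta_lb = rip_union_lb(n=2000, s=10, p_sw=p)
```

With $m = 1000$, $s = 10$, $\delta = 0.5$, the bound (12) already exceeds 0.999, yet multiplying its complement by $\binom{2000}{10}$ drives the union bound (8) far below zero, which is one way to see how pessimistic the concentration route is.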
When $s$ is odd, the elements of the $(s+1) \times (s+1)$ skew-symmetric matrix $Q(a, b)$ are as in (14), with the additional elements

$$\begin{aligned} q_{i,s+1} &= P\left(\alpha_i; \tfrac{a}{2}, \tfrac{b}{2}\right), & i &= 1, \ldots, s \\ q_{s+1,j} &= -q_{j,s+1}, & j &= 1, \ldots, s \\ q_{s+1,s+1} &= 0. \end{aligned} \tag{15}$$

Moreover, the elements $q_{i,j}$ can be computed iteratively, without numerical integration or series expansion [40, Algorithm 1].

[Fig. 1 plot: $\lambda^*_{\min}(m, s, \eta)$ and $\lambda^*_{\max}(m, s, \eta)$ versus $s/m$ (from 0.1 to 0.4) for $m = 200$, $300$, $400$.]

Fig. 1. The asymmetric extreme eigenvalue thresholds of the Wishart matrix $W$ as a function of $s/m$, for $\eta = 10^{-10}$. The lower threshold $\lambda^*_{\min}(m, s, \eta)$ and the upper threshold $\lambda^*_{\max}(m, s, \eta)$ are represented by dashed and solid lines, respectively.

Considering that in our case the entries of $A_S$ are distributed as $\mathcal{N}(0, 1/m)$, so that $W = M/m$, the exact probability that $A_S$ is well conditioned is calculated from Theorem 1 as

$$P_{\mathrm{sw}}(\delta) = \mathbb{P}\{\lambda_{\min}(W) \ge 1 - \delta,\ \lambda_{\max}(W) \le 1 + \delta\} = \psi_{ms}\left(m[1 - \delta],\, m[1 + \delta]\right) \tag{16}$$

where $\psi_{ms}(a, b)$ can now be computed exactly. The exact expression (16) is computationally easy for moderate matrix dimensions (we used it up to $m = 10^5$ and $s = 150$).

C. Asymmetric Nature of the Extreme Eigenvalues

Clearly, the RIC in (6) depends on the deviation of the extreme eigenvalues from unity. It has been shown that the smallest and the largest eigenvalues of Wishart matrices asymptotically deviate asymmetrically from 1 [36]. Hence, the symmetric RIC cannot efficiently describe the RIP of Gaussian matrices. It is therefore essential to determine whether such asymmetric behavior is still valid for finite measurement matrices.
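The exact value (16) requires the Pfaffian-type construction of Theorem 1 and [40, Algorithm 1], which we do not reproduce here. As a cross-check only, $P_{\mathrm{sw}}(\delta)$ can be estimated by Monte Carlo: draw $A_S$ with i.i.d. $\mathcal{N}(0, 1/m)$ entries, form $W = A_S^T A_S$, and count how often both extreme eigenvalues fall in $[1-\delta, 1+\delta]$. This sketch assumes NumPy; the function name and the trial counts are our own choices. A sampling estimate cannot resolve failure probabilities much smaller than 1/trials, which is precisely why an exact expression matters once $1 - P_{\mathrm{sw}}(\delta)$ is multiplied by $\binom{n}{s}$ in (8).

```python
import numpy as np


def psw_monte_carlo(m, s, delta, trials=2000, seed=0):
    """Monte Carlo estimate of P_sw(delta) = P{1-delta <= lambda_min(W), lambda_max(W) <= 1+delta}.

    A_S has i.i.d. N(0, 1/m) entries and W = A_S^T A_S. This is a sampling
    stand-in, not the exact computation of Theorem 1.
    """
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(trials):
        A_S = rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, s))
        eig = np.linalg.eigvalsh(A_S.T @ A_S)  # eigenvalues of W, ascending order
        if eig[0] >= 1.0 - delta and eig[-1] <= 1.0 + delta:
            hits += 1
    return hits / trials


p_hat = psw_monte_carlo(m=1000, s=10, delta=0.5, trials=500)
```

For $m = 1000$, $s = 10$, $\delta = 0.5$ the estimate is close to 1, consistent with the concentration bound (12), which already exceeds 0.999 there.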
In this regard, we propose to find the two percentiles $\lambda^*_{\min}(m, s, \eta)$ and $\lambda^*_{\max}(m, s, \eta)$ for the extreme eigenvalues of $W$ such that $\mathbb{P}\{\lambda_{\min}(W) \le \lambda^*_{\min}(m, s, \eta)\} = \mathbb{P}\{\lambda_{\max}(W) \ge \lambda^*_{\max}(m, s, \eta)\} = \eta$. Such percentiles can be calculated from the exact eigenvalue distribution in Theorem 1 as

$$\lambda^*_{\min}(m, s, \eta) = \psi^{-1}_{\min}(1 - \eta), \qquad \lambda^*_{\max}(m, s, \eta) = \psi^{-1}_{\max}(1 - \eta) \tag{17}$$

where $\psi^{-1}_{\min}(y)$ and $\psi^{-1}_{\max}(y)$ are the inverses of $\psi_{ms}(mx, \infty)$ and $\psi_{ms}(0, mx)$, respectively, as functions of $x$. In Fig. 1 we report the thresholds $\lambda^*_{\min}(m, s, \eta)$ and $\lambda^*_{\max}(m, s, \eta)$ as a function of $s/m$, for some finite values of $m$ and a fixed exceeding probability $\eta = 10^{-10}$. We can see that they deviate asymmetrically from unity, as already observed for asymptotically large matrices in [36]. Additionally, since for small values of $m$ the deviation of the extreme eigenvalues from unity is more significant, i.e., the RIC should be larger, the asymptotic tail behavior of the eigenvalue distributions in [36]–[38] cannot be used for upper bounding the RICs in the finite case.

Definition 2 (ARIC [24], [36]). The lower RIC (LRIC) of order $s$ of $A$, $\underline{\delta}_s(A)$, is defined as the smallest constant larger than zero that satisfies

$$1 - \underline{\delta}_s(A) \le \frac{\|A_S c\|^2}{\|c\|^2} \quad \forall c \in \mathbb{R}^s,\ \forall S \subset \Omega : \mathrm{card}(S) = s \tag{18}$$

and the upper RIC (URIC) of order $s$ of $A$, $\overline{\delta}_s(A)$, is defined as the smallest constant larger than zero that satisfies

$$\frac{\|A_S c\|^2}{\|c\|^2} \le 1 + \overline{\delta}_s(A) \quad \forall c \in \mathbb{R}^s,\ \forall S \subset \Omega : \mathrm{card}(S) = s. \tag{19}$$

Clearly, the relation with the symmetric RIC is $\delta_s(A) = \max\{\underline{\delta}_s(A), \overline{\delta}_s(A)\}$. Moreover, from Definition 2 and (5), we can represent the ARICs as

$$\underline{\delta}_s(A) = 1 - \min_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\min}(W) \tag{20}$$

$$\overline{\delta}_s(A) = \max_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\max}(W) - 1. \tag{21}$$

IV. SYMMETRIC AND ASYMMETRIC RICS

The symmetric and asymmetric RICs of a Gaussian matrix can be seen as functions of the extreme eigenvalues of Wishart matrices as in (20) and (21), and hence are themselves random variables (r.v.s). In this section, we first derive lower bounds on the probability of satisfying the RIP for finite dimensional Gaussian random matrices using the exact eigenvalue distribution, and then a probabilistic upper bound on the RIC. Additionally, the CDFs of the ARICs are lower bounded using the CDFs of the extreme eigenvalues. Finally, thresholds for the ARICs that are not exceeded with a target probability are deduced. In the following, the analysis derived from the exact eigenvalue statistics (16) will be referred to as the exact eigenvalues distribution (EED) based approach.

A. RIP Analysis for Gaussian Matrices

A Gaussian matrix is said to satisfy the RIP of order $s$ if its RIC, $\delta_s(A)$, is less than a constant $\delta$ with high probability on a random draw of $A$; in other words, if a sufficient condition for perfect reconstruction using a sparse recovery algorithm is satisfied with high probability. This probability can be lower bounded from (8) and (16) as

$$\beta(\delta, m, n, s) \ge 1 - \binom{n}{s}\left[1 - \psi_{ms}\left(m[1 - \delta],\, m[1 + \delta]\right)\right]. \tag{22}$$

The expression (22) gives, to the best of our knowledge, the tightest lower bound on the probability of satisfying the RIP, $\beta(\delta)$, for finite dimensional Gaussian matrices. This is attributed to employing the exact joint distribution of the extreme eigenvalues of Wishart matrices, which provides quantitatively sharper estimates than the concentration bound and the asymptotic approaches.

When applying CS, it is important to estimate the RIC to assess the recovery property of the measurement matrix.
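For very small matrices, (20), (21), and $\delta_s(A) = \max\{\underline{\delta}_s(A), \overline{\delta}_s(A)\}$ can be evaluated directly by enumerating all $\binom{n}{s}$ supports. The sketch below (NumPy assumed, helper name ours) does exactly that; clipping at zero follows the "smallest constant larger than zero" wording of Definitions 1 and 2. The enumeration cost is what makes the union bound in (22) the practical route for realistic dimensions.

```python
import itertools

import numpy as np


def brute_force_rics(A, s):
    """Return (lower, upper, symmetric) RICs of order s via (20), (21) and (6).

    Enumerates every s-column submatrix A_S, so the cost is C(n, s)
    eigen-decompositions of s x s matrices: only feasible for small n.
    """
    n = A.shape[1]
    lam_min, lam_max = np.inf, -np.inf
    for S in itertools.combinations(range(n), s):
        A_S = A[:, list(S)]
        eig = np.linalg.eigvalsh(A_S.T @ A_S)  # eigenvalues of W, ascending
        lam_min = min(lam_min, eig[0])
        lam_max = max(lam_max, eig[-1])
    lower = max(0.0, 1.0 - lam_min)  # LRIC, (20)
    upper = max(0.0, lam_max - 1.0)  # URIC, (21)
    return lower, upper, max(lower, upper)


rng = np.random.default_rng(2)
m, n = 20, 30
A = rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n))  # entries ~ N(0, 1/m)
low2, up2, sym2 = brute_force_rics(A, 2)
low3, up3, sym3 = brute_force_rics(A, 3)
```

By eigenvalue interlacing, both ARICs are non-decreasing in the order $s$, so the order-3 values never fall below the order-2 ones.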
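Let us define $\delta^*_{s,\min}(m, n, \epsilon)$ as the RIC which is exceeded with probability $\epsilon$, such that

$$\mathbb{P}\{\delta_s(A) \le \delta^*_{s,\min}(m, n, \epsilon)\} = 1 - \epsilon. \tag{23}$$

Using (22) we can upper bound this value as

$$\delta^*_{s,\min}(m, n, \epsilon) \le \delta^*_s(m, n, \epsilon) \triangleq \psi^{-1}_{ms}\left(1 - \epsilon \Big/ \binom{n}{s}\right) \tag{24}$$

where $\psi^{-1}_{ms}(y)$ is the inverse of $\psi_{ms}\left(m[1 - x],\, m[1 + x]\right)$ as a function of $x$. In the following we will refer to $\delta^*_s(m, n, \epsilon)$ in (24) as the RIC threshold (RICt), where from (23) and (24) we have

$$\mathbb{P}\{\delta_s(A) \le \delta^*_s(m, n, \epsilon)\} \ge 1 - \epsilon. \tag{25}$$

B. Asymmetric RIP Analysis for Gaussian Matrices

Let $\underline{\delta}_s(A)$ be the LRIC as defined in (20). The CDF of the LRIC, $F_{\ell\mathrm{RIC}}(x)$, is lower bounded as

$$\mathbb{P}\{\underline{\delta}_s(A) \le x\} \ge 1 - \binom{n}{s}\left[1 - \psi_{ms}\left(m[1 - x],\, \infty\right)\right]. \tag{26}$$

In fact, from (20) the CDF of the LRIC $\underline{\delta}_s(A)$ is

$$F_{\ell\mathrm{RIC}}(x) = \mathbb{P}\left\{1 - \min_{S \subset \Omega,\ \mathrm{card}(S) = s} \lambda_{\min}(W) \le x\right\} \ge 1 - \binom{n}{s}\mathbb{P}\{\lambda_{\min}(W) \le 1 - x\} = 1 - \binom{n}{s}\left[1 - \psi_{ms}\left(m[1 - x],\, \infty\right)\right]. \tag{27}$$

Let us define $\underline{\delta}^*_{s,\min}(m, n, \epsilon)$ as the LRIC which is exceeded with probability $\epsilon$, such that $\mathbb{P}\{\underline{\delta}_s(A) \le \underline{\delta}^*_{s,\min}(m, n, \epsilon)\} = 1 - \epsilon$. This quantity is upper bounded as follows

$$\underline{\delta}^*_{s,\min}(m, n, \epsilon) \le \underline{\delta}^*_s(m, n, \epsilon) = \psi^{-1}_{ms,\mathrm{lower}}\left(1 - \epsilon \Big/ \binom{n}{s}\right) \tag{28}$$

where $\psi^{-1}_{ms,\mathrm{lower}}(y)$ is the inverse of $\psi_{ms}\left(m[1 - x],\, \infty\right)$. In the following we will refer to $\underline{\delta}^*_s(m, n, \epsilon)$ as the LRIC threshold (LRICt). Similarly, for the CDF of the URIC, $F_{u\mathrm{RIC}}(x)$, we have

$$\mathbb{P}\{\overline{\delta}_s(A) \le x\} \ge 1 - \binom{n}{s}\mathbb{P}\{\lambda_{\max}(W) \ge 1 + x\} = 1 - \binom{n}{s}\left[1 - \psi_{ms}\left(0,\, m[1 + x]\right)\right]. \tag{29}$$

Then, we can compute a threshold $\overline{\delta}^*_{s,\min}(m, n, \epsilon)$ such that $\mathbb{P}\{\overline{\delta}_s(A) \le \overline{\delta}^*_{s,\min}(m, n, \epsilon)\} = 1 - \epsilon$, which leads to

$$\overline{\delta}^*_{s,\min}(m, n, \epsilon) \le \overline{\delta}^*_s(m, n, \epsilon) = \psi^{-1}_{ms,\mathrm{upper}}\left(1 - \epsilon \Big/ \binom{n}{s}\right) \tag{30}$$

where $\psi^{-1}_{ms,\mathrm{upper}}(y)$ is the inverse of $\psi_{ms}\left(0,\, m[1 + x]\right)$. In the following we will refer to $\overline{\delta}^*_s(m, n, \epsilon)$ as the URIC threshold (URICt).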
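Because $\psi^{-1}_{ms}$ in (24) is the inverse of a monotone function of $x$, a threshold of this kind can be obtained by one-dimensional bisection. The sketch below shows that inversion but, as an explicit assumption, it substitutes the concentration bound (12) for the exact $\psi_{ms}$ (which we do not implement), so it returns the looser concentration-based analogue of $\delta^*_s(m, n, \epsilon)$, not the EED value. It works with the failure term $\binom{n}{s}[1 - P_{\mathrm{sw}}]$ directly, because $1 - \epsilon/\binom{n}{s}$ rounds to 1.0 in double precision for realistic $\binom{n}{s}$. Function names are ours.

```python
import math


def union_failure_bound(m, n, s, delta):
    """C(n, s) times the concentration bound (12) on 1 - P_sw(delta).

    Hypothetical stand-in for C(n, s) * [1 - psi_ms(m(1-delta), m(1+delta))];
    it is non-increasing in delta.
    """
    r = math.sqrt(s / m)
    t_max = max(0.0, math.sqrt(1.0 + delta) - 1.0 - r)
    t_min = max(0.0, 1.0 - r - math.sqrt(1.0 - delta))
    fail = math.exp(-0.5 * m * t_max**2) + math.exp(-0.5 * m * t_min**2)
    return math.comb(n, s) * fail


def ric_threshold(m, n, s, eps, iters=100):
    """Smallest delta in [0, 1] with union_failure_bound(...) <= eps, by bisection."""
    lo, hi = 0.0, 1.0
    if union_failure_bound(m, n, s, hi) > eps:
        raise ValueError("no threshold below 1 for this bound")
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if union_failure_bound(m, n, s, mid) <= eps:
            hi = mid  # mid already meets the target: search lower
        else:
            lo = mid
    return hi


delta_star = ric_threshold(m=2000, n=4000, s=5, eps=0.01)
```

For $m = 2000$, $n = 4000$, $s = 5$, $\epsilon = 0.01$ this concentration-based threshold comes out at roughly 0.57. Since the exact $P_{\mathrm{sw}}(\delta)$ in (16) is never below (12), the EED threshold (24) can be no larger.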
Note that, while previously known approaches refer to infinite dimensional matrices, our analysis accounts for the (always finite) true dimensions of the problem.

V. CONDITIONS FOR PERFECT RECOVERY

In this section, the estimated thresholds for the RICs (both symmetric and asymmetric) of finite matrices are used to quantify the maximum allowed signal sparsity order for various recovery algorithms.

Definition 3 (The maximum sparsity order). Let $A$ be a random $m \times n$ measurement matrix, $s$ be the signal sparsity order, and $0 < \epsilon < 1$ be an arbitrary constant. The maximum sparsity order, $s^*$, is the value such that every $s$-sparse vector with $s < s^*$ can be recovered perfectly with probability $P_{\mathrm{PR}}$ at least $1 - \epsilon$ on a random draw of $A$. Then the maximum oversampling ratio, a finite regime version of the asymptotic phase transition function, is defined as $s^*/m$.

The maximum sparsity order is used to compare the performance of different recovery algorithms and their associated sufficient conditions. As mentioned before, the perfect reconstruction conditions for many sparse recovery algorithms are stated in terms of the RICs [1], [23], [25]–[34]. We now exploit these conditions to provide a probabilistic framework for the recovery problem.

A. Symmetric RIC Based Sparse Recovery

For the symmetric RIC, the sufficient condition for perfect signal recovery via $\ell_1$-minimization can be represented in a generic form as $\delta_{ks}(A) < \delta$, where $k$ is a positive integer and $\delta$ is a constant. As a consequence, the probability of perfect recovery can be bounded as

$$P_{\mathrm{PR}} \ge \mathbb{P}\{\delta_{ks}(A) < \delta\} = \beta(\delta, m, n, ks) \tag{31}$$

where $\beta(\delta, m, n, ks)$ is lower bounded by the proposed (22). Sufficient recovery conditions of this class are, e.g., $\delta_s(A) < 1/3$ [28] and $\delta_{2s}(A) < 0.6246$ [21].
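The inverse problem (32) of the next paragraph reduces to a scan over $s$ once a lower bound on $\beta(\delta, m, n, ks)$ is available. The sketch below keeps the scan generic, taking the bound as a callable. For a runnable example it plugs in a hypothetical stand-in, the union bound (8) fed with the concentration bound (12), because the exact $\psi_{ms}$ of (22) is not implemented here; the resulting $s^*$ is therefore more conservative than the EED-based one. Names and parameter values are illustrative assumptions.

```python
import math


def max_sparsity_order(beta_lb, target, s_max):
    """s* = max{s : beta_lb(s) >= target} for s = 1, ..., s_max, following (32).

    beta_lb(s) should return a lower bound on beta(delta, m, n, k*s), e.g. (22).
    Returns 0 when no s in the range qualifies.
    """
    s_star = 0
    for s in range(1, s_max + 1):
        if beta_lb(s) >= target:
            s_star = s
    return s_star


def beta_concentration(m, n, s, delta, k=1):
    """Hypothetical stand-in for (22): union bound (8) with (12) replacing psi_ms."""
    ks = k * s
    r = math.sqrt(ks / m)
    t_max = max(0.0, math.sqrt(1.0 + delta) - 1.0 - r)
    t_min = max(0.0, 1.0 - r - math.sqrt(1.0 - delta))
    fail = math.exp(-0.5 * m * t_max**2) + math.exp(-0.5 * m * t_min**2)
    return 1.0 - math.comb(n, ks) * fail


# Condition delta_s(A) < 1/3 (k = 1) with target 1 - epsilon = 0.99.
s_star = max_sparsity_order(
    lambda s: beta_concentration(m=20000, n=40000, s=s, delta=1 / 3), 0.99, s_max=20
)
```

Scanning the whole range, rather than stopping at the first failure, matches the $\max$ in (32) even if a particular bound is not monotone in $s$.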
The inverse problem is the calculation of the maximum sparsity order, for a given $m$ and a given $n$, such that $P_{\mathrm{PR}}$ is at least $1 - \epsilon$. For this target we have

$$s^* = \max\{s : \beta(\delta, m, n, ks) \ge 1 - \epsilon\}. \tag{32}$$

B. Asymmetric RIC Based Sparse Recovery

Although the asymmetric RICs are less investigated, it is known that conditions stated in terms of them lead to tighter bounds for the maximum sparsity order [36]. This is attributed to the asymmetric behavior of the extreme eigenvalues of Wishart matrices, as analyzed in Section III-C. A general class of sufficient recovery conditions based on the ARICs has the form

$$\mu(s, A) \triangleq f\left(\underline{\delta}_{k_1 s}(A), \overline{\delta}_{k_2 s}(A)\right) < 1 \tag{33}$$

where $k_1$ and $k_2$ are arbitrary positive integers and $f\left(\underline{\delta}_{k_1 s}(A), \overline{\delta}_{k_2 s}(A)\right)$ is a non-decreasing function in both $\underline{\delta}_{k_1 s}(A)$ and $\overline{\delta}_{k_2 s}(A)$. In this regard, we propose a generalization of the symmetric RIC based condition, $\delta_s(A) < \frac{1}{3}$, to an asymmetric one. In particular, it is possible to prove that if the following condition is satisfied

$$\mu_{\mathrm{ECG}}(s, A) \triangleq 2\underline{\delta}_s(A) + \overline{\delta}_s(A) < 1 \tag{34}$$

then all $s$-sparse vectors can be recovered perfectly using $\ell_1$-minimization.¹ Other sufficient conditions in the form of (33) are found in [24], [41]. For example, it is shown in [24] that if

$$\mu_{\mathrm{FL}}(s, A) \triangleq \frac{1}{4}\left(1 + \sqrt{2}\right)\left(\frac{1 + \overline{\delta}_{2s}(A)}{1 - \underline{\delta}_{2s}(A)} - 1\right) < 1 \tag{35}$$

and in [41] that if $\mu_{\mathrm{BT}}(s, A) < 1$, where $\mu_{\mathrm{BT}}(s, A)$ combines asymmetric RICs of orders $2s$ and $6s$ in the form $\delta_{2s}(A) + \delta_{6s}(A) + \delta_{6s}(A)/4$ (see [41] for which terms are lower and which are upper RICs), then perfect reconstruction is also guaranteed.

Therefore, for random measurement matrices, the probability of perfect recovery by incorporating the ARICs can be bounded as

$$P_{\mathrm{PR}} \ge \mathbb{P}\{\mu(s, A) < 1\}. \tag{36}$$

For the design problem of calculating the maximum sparsity order, by exploiting the monotonicity of the function $f(\cdot, \cdot)$, we have

$$\begin{aligned} \mathbb{P}\{\mu(s, A) < 1\} &\ge \mathbb{P}\left\{\underline{\delta}_{k_1 s}(A) \le \underline{\delta}^*_{k_1 s},\ \overline{\delta}_{k_2 s}(A) \le \overline{\delta}^*_{k_2 s}\right\} \\ &\ge 1 - \mathbb{P}\left\{\underline{\delta}_{k_1 s}(A) \ge \underline{\delta}^*_{k_1 s}\right\} - \mathbb{P}\left\{\overline{\delta}_{k_2 s}(A) \ge \overline{\delta}^*_{k_2 s}\right\} \end{aligned} \tag{37}$$

for any $\underline{\delta}^*_{k_1 s}$ and $\overline{\delta}^*_{k_2 s}$ such that $f\left(\underline{\delta}^*_{k_1 s}, \overline{\delta}^*_{k_2 s}\right) < 1$. Equation (37) follows from the union bound, (20), and (21). Setting the bound (37) to $1 - \eta$ and distributing the probability equally between the lower and upper RICs, we get

$$\mathbb{P}\left\{\overline{\delta}_{k_2 s}(A) \le \overline{\delta}^*_{k_2 s}\right\} = \mathbb{P}\left\{\underline{\delta}_{k_1 s}(A) \le \underline{\delta}^*_{k_1 s}\right\} = 1 - \frac{\eta}{2}. \tag{38}$$

Finally, the maximum sparsity order $s^*$ is the maximum $s$ compatible with $f\left(\underline{\delta}^*_{k_1 s}, \overline{\delta}^*_{k_2 s}\right) < 1$, where $\underline{\delta}^*_{k_1 s}$ and $\overline{\delta}^*_{k_2 s}$ are calculated from (28) and (30) with $\epsilon = \eta/2$ to satisfy (38). Then, every sparse vector with $s < s^*$ can be perfectly recovered with probability at least $1 - \eta$ on a random draw of $A$.

Although we focused on $\ell_1$-minimization based recovery, the same approach can be used to estimate the maximum sparsity order using greedy or thresholding algorithms. For example, sufficient conditions on the RIC for perfect recovery using compressive sampling matching pursuit (CoSaMP), orthogonal matching pursuit (OMP), and IHT are $\delta_{4s}(A) < 0.4782$ [21], $\delta_{13s}(A) < 0.1666$ [21], [34], and $\delta_{3s}(A) < 0.5773$ [42], respectively. Additionally, asymmetric RIC based conditions have been obtained in [43] for CoSaMP, IHT, and subspace pursuit (SP). For example,

$$\mu_{\mathrm{BCTT}}(s, A) \triangleq 2\sqrt{2}\, \frac{\underline{\delta}_{3s}(A) + \overline{\delta}_{3s}(A)}{2 + \overline{\delta}_{3s}(A) - \underline{\delta}_{3s}(A)} < 1$$

is a sufficient condition for perfect recovery using IHT [43].

¹ The proof is obtained by reformulating equations (33) and (34) in [28] to account for the asymmetric RICs.

VI. ROBUST RECOVERY OF COMPRESSIBLE SIGNALS

Up to now, we have studied the case of perfect recovery of sparse data in the noiseless setting. However, in practice signals may not be exactly sparse, but rather compressible, i.e., well approximated by a sparse signal. Moreover, noise can be present during the acquisition process. A measure of the discrepancy between a compressible signal and its sparse representation is the $\ell_1$-error of best $s$-term approximation $\sigma_s(x)_1$, defined as

$$\sigma_s(x)_1 \triangleq \inf\left\{\|x - x_s\|_1 : x_s \in \mathbb{R}^n \text{ is } s\text{-sparse}\right\}. \tag{39}$$

Hence, a signal is well approximated by an $s$-sparse vector if $\sigma_s(x)_1$ is small [21]. Besides considering compressible signals, we can also include the measurement noise in the model, so that the measured vector can be written as

$$y = Ax + z \tag{40}$$

where $z$ is a bounded noise with $\|z\| \le \kappa$. Assuming $\kappa$ is known, we can account for the noise term by modifying the constraint in the $\ell_1$-minimization problem (3) as

$$\hat{x} = \arg\min \|x\|_1 \quad \text{subject to} \quad \|y - Ax\| \le \kappa. \tag{41}$$

This algorithm is called quadratically constrained $\ell_1$-minimization [44]. There are also other algorithms for sparse recovery in noisy cases, e.g., the Dantzig selector [45], basis pursuit denoising [46], denoising-orthogonal approximate message passing [47], etc.

For the model in (40), we cannot guarantee perfect signal recovery; rather, an approximate reconstruction with bounded error can be assured. For example, it was shown in [28] that if $\delta_s(A) < 1/3$, the error after recovery can be bounded by a weighted combination of $\kappa$ and $\sigma_s(x)_1$, i.e.,

$$\|\hat{x} - x\| \le C_1 \kappa + C_2 \frac{\sigma_s(x)_1}{\sqrt{s}} \tag{42}$$

where

$$C_1(\delta_s(A)) = \frac{\sqrt{8\left(1 + \delta_s(A)\right)}}{1 - 3\delta_s(A)} \tag{43}$$

$$C_2(\delta_s(A)) = \frac{\sqrt{8}\left(2\delta_s(A) + \sqrt{\left(1 - 3\delta_s(A)\right)\delta_s(A)}\right)}{1 - 3\delta_s(A)} + 2. \tag{44}$$

The constants $C_1$ and $C_2$ give insight into the robustness (ability to handle noise) and the stability (ability to handle compressible signals) of the recovery algorithm, respectively.

When $A$ is a random matrix, both $C_1$ and $C_2$ are random variables. To characterize their statistical distribution, we propose to find a bound on the threshold $C^*_{i,\min}$, with $i = 1, 2$, which is exceeded with a predefined probability $\epsilon_i$, i.e.,

$$\mathbb{P}\left\{C_i(\delta_s(A)) \le C^*_{i,\min}\right\} = 1 - \epsilon_i. \tag{45}$$

Noting that $C_i(\delta_s(A))$ is monotonically increasing in $\delta_s(A)$, we have

$$\mathbb{P}\left\{C_i(\delta_s(A)) \le C_i\left(\delta^*_s(m, n, \epsilon_i)\right)\right\} = \mathbb{P}\left\{\delta_s(A) \le \delta^*_s(m, n, \epsilon_i)\right\} \ge 1 - \epsilon_i \tag{46}$$

where the RICt $\delta^*_s(m, n, \epsilon_i)$ can be calculated from (24). Consequently, from (45) and (46) we upper bound $C^*_{i,\min}$ as

$$C^*_{i,\min} \le C^*_i \triangleq C_i\left(\delta^*_s(m, n, \epsilon_i)\right). \tag{47}$$

The inverse problem is finding the maximum sparsity order, for a given $m$ and a given $n$, such that the r.v. $C_i$, with $i = 1, 2$, is less than a target constant $c_i$ with probability at least $1 - \epsilon_i$. For this aim we have $s^* = \max\{s : C_i(\delta^*_s(m, n, \epsilon_i)) \le c_i\}$.

Analogous results relating the recovery error to $\sigma_s(x)_1$ and $\kappa$ have been obtained for different algorithms under suitable symmetric and asymmetric RIC based sufficient conditions [24], [43], [48]–[50]. By following the same approach, the proposed methodology can be applied to describe the statistics of the stability and robustness constants for these cases as well.

VII. TRACY-WIDOM BASED RIC ANALYSIS

Although the proposed framework based on the exact distribution of the eigenvalues (16) provides tight bounds on the RICs, it can be computationally expensive for large matrices, for which simpler approaches are preferred.
In this section, we derive approximations for the RICs of finite matrices based on the TW distribution. These are much tighter than those obtained from concentration of measure inequalities. We also study how fast the distributions of the extreme eigenvalues converge to the TW based ones, by exploiting the small deviation analysis of the extreme eigenvalues around their mean. In particular, we prove that TW based distributions approximate the eigenvalue statistics of finite Gaussian matrices with an error that is exponentially small in $m$. This leads to an accurate estimation of the RICs.

It is well known that the distributions of the smallest and largest eigenvalues of Wishart matrices tend, under some conditions, to properly scaled and shifted TW distributions [51]–[57]. Specifically, for the real Wishart matrix $\mathbf{M}$, when $m, s \to \infty$ and $m/s \to \gamma \in (0, \infty)$, it has been shown that

$$\frac{\lambda_{\max}(\mathbf{M}) - \mu_{ms}}{\sigma_{ms}} \xrightarrow{\mathcal{D}} \mathrm{TW}_1 \tag{48}$$

where:

- $\mathrm{TW}_1$ is a Tracy-Widom r.v. of order 1 with complementary CDF (CCDF) $\Psi_{\mathrm{TW}_1}(t)$;
- $\mu_{ms} = (\sqrt{m} + \sqrt{s})^2$;
- $\sigma_{ms} = \sqrt{\mu_{ms}}\,(1/\sqrt{s} + 1/\sqrt{m})^{1/3}$ [53].

More precisely, from the definition of convergence in distribution and letting $\rho \triangleq s/m$, we have

$$\lim_{m\to\infty} P\left\{\lambda_{\max}(\mathbf{M}) \ge \mu_{ms} + t\,\sigma_{ms}\right\} = \lim_{m\to\infty} P\left\{\lambda_{\max}(\mathbf{W}) \ge (1+\sqrt{\rho})^2 + t\, m^{-2/3}\rho^{-1/6}(1+\sqrt{\rho})^{4/3}\right\} = \Psi_{\mathrm{TW}_1}(t). \tag{49}$$

Similarly, for the smallest eigenvalue, when $m, s \to \infty$ and $m/s \to \gamma \in (1, \infty)$ [56],

$$-\frac{\ln \lambda_{\min}(\mathbf{M}) - v_{ms}}{\tau_{ms}} \xrightarrow{\mathcal{D}} \mathrm{TW}_1 \tag{50}$$

with scaling and centering parameters

$$\tau_{ms} = \frac{\left[(s - 1/2)^{-1/2} - (m - 1/2)^{-1/2}\right]^{1/3}}{\sqrt{m - 1/2} - \sqrt{s - 1/2}}, \qquad v_{ms} = 2\ln\left(\sqrt{m - 1/2} - \sqrt{s - 1/2}\right) + \frac{1}{8}\tau_{ms}^2.$$

For the RIC analysis of finite Gaussian matrices, let $\overline{\delta}^*_s(m,n,\epsilon)$, $\underline{\delta}^*_s(m,n,\epsilon)$, and $\delta^*_s(m,n,\epsilon)$ be the RIC thresholds defined in (30), (28), and (24), respectively.
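The centering and scaling constants in (48)–(50) are elementary closed forms. The following minimal Python sketch evaluates them. The function names are hypothetical and are ours, not the authors'. The sketch also checks numerically that $\sigma_{ms}/m$ coincides with the factor $m^{-2/3}\rho^{-1/6}(1+\sqrt{\rho})^{4/3}$ in (49). This agrees with reading (49) as (48) rescaled by $\mathbf{W} = \mathbf{M}/m$, which is our assumption about the normalization.

```python
import math


def tw_largest_params(m, s):
    """Centering mu_ms and scaling sigma_ms of lambda_max(M) in (48)."""
    mu = (math.sqrt(m) + math.sqrt(s)) ** 2
    sigma = math.sqrt(mu) * (1 / math.sqrt(s) + 1 / math.sqrt(m)) ** (1 / 3)
    return mu, sigma


def tw_smallest_params(m, s):
    """Centering v_ms and scaling tau_ms of -ln lambda_min(M) in (50); needs m > s."""
    a, b = math.sqrt(m - 0.5), math.sqrt(s - 0.5)
    tau = ((s - 0.5) ** -0.5 - (m - 0.5) ** -0.5) ** (1 / 3) / (a - b)
    v = 2 * math.log(a - b) + tau ** 2 / 8
    return v, tau


def normalized_scale(m, s):
    """The factor m^(-2/3) rho^(-1/6) (1 + sqrt(rho))^(4/3) appearing in (49)."""
    rho = s / m
    return m ** (-2 / 3) * rho ** (-1 / 6) * (1 + math.sqrt(rho)) ** (4 / 3)


m, s = 4000, 40
mu, sigma = tw_largest_params(m, s)
v, tau = tw_smallest_params(m, s)
# Centering of lambda_max(W): mu_ms / m equals (1 + sqrt(rho))^2
print(mu / m, (1 + math.sqrt(s / m)) ** 2)
# Scaling in (49): sigma_ms / m equals the closed-form factor
print(sigma / m, normalized_scale(m, s))
# exp(v_ms) is close to the classical lower edge (sqrt(m) - sqrt(s))^2
print(math.exp(v), (math.sqrt(m) - math.sqrt(s)) ** 2)
```

The $-1/2$ shifts in $\tau_{ms}$ and $v_{ms}$ are finite-size corrections. For $m = 4000$ and $s = 40$, they move $e^{v_{ms}}$ by about $0.1\%$ from $(\sqrt{m}-\sqrt{s})^2$.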
We will show that these thresholds can be approximated as

$$\overline{\delta}^*_s(m,n,\epsilon) \simeq \overline{\delta}^*_{\mathrm{TW}} \triangleq m^{-2/3}\rho^{-1/6}(1+\sqrt{\rho})^{4/3}\,\Psi^{-1}_{\mathrm{TW}_1}\!\left(\epsilon\Big/\binom{n}{s}\right) + \rho + 2\sqrt{\rho} \tag{51}$$

$$\underline{\delta}^*_s(m,n,\epsilon) \simeq \underline{\delta}^*_{\mathrm{TW}} \triangleq 1 - \frac{1}{m}\exp\left(v_{ms} - \tau_{ms}\,\Psi^{-1}_{\mathrm{TW}_1}\!\left(\epsilon\Big/\binom{n}{s}\right)\right) \tag{52}$$

$$\delta^*_s(m,n,\epsilon) \simeq \delta^*_{\mathrm{TW}} \triangleq \tilde{P}^{-1}_{\mathrm{sw}}\!\left(1 - \epsilon\Big/\binom{n}{s}\right) \tag{53}$$

for $\overline{\delta}^*_{\mathrm{TW}}$, $\underline{\delta}^*_{\mathrm{TW}}$, and $\delta^*_{\mathrm{TW}}$ less than one. Here $\Psi^{-1}_{\mathrm{TW}_1}(y)$ is the inverse of the TW CCDF, and $\tilde{P}^{-1}_{\mathrm{sw}}(y)$ is the inverse of

$$\tilde{P}_{\mathrm{sw}}(x) \triangleq 1 - \Psi_{\mathrm{TW}_1}\!\left(\frac{v_{ms} - \ln\left(m[1-x]\right)}{\tau_{ms}}\right) - \Psi_{\mathrm{TW}_1}\!\left(\frac{m[1+x] - \mu_{ms}}{\sigma_{ms}}\right). \tag{54}$$

To prove these formulas, we first establish how fast the extreme eigenvalue distributions converge to the TW based ones. For the URIC, it has been shown in [58, Theorem 2] that there exists a constant $c > 0$, depending only on $\rho$, such that

$$P\left\{\lambda_{\max}(\mathbf{M}) \ge \mu_{ms}[1 + z]\right\} \le c\,\exp\left(-\frac{1}{c}\, s\, z^{3/2}\right) \tag{55}$$

for all $m > s \ge 1$ and $0 < z \le 1$. This small deviation analysis provides tighter bounds than two earlier tools used for large $m$ in [36]–[38]: the concentration inequality (10) and the Edelman bound [39, Lemma 4.2]. From (55), the L.H.S. of (49) can be tightly bounded for finite $m$ and for $t \le m^{2/3}\rho^{1/6}(1+\sqrt{\rho})^{2/3}$ as

$$P\left\{\lambda_{\max}(\mathbf{W}) \ge (1+\sqrt{\rho})^2 + t\, m^{-2/3}\rho^{-1/6}(1+\sqrt{\rho})^{4/3}\right\} \le c\,\exp\left(-c_1 t^{3/2}\right) \tag{56}$$

where $c_1 \triangleq c^{-1}\rho^{3/4}(1+\sqrt{\rho})^{-1}$. For the R.H.S., for sufficiently large $t$ we have

$$\Psi_{\mathrm{TW}_1}(t) \le c_2\exp\left(-c_3 t^{3/2}\right) \tag{57}$$

where $c_2 > 0$ and $c_3 > 0$ are constants [59, eq. (2)], [60]. The error in using the TW distribution can then be bounded as

$$\left|P\left\{\lambda_{\max}(\mathbf{W}) \ge (1+\sqrt{\rho})^2 + t\, m^{-2/3}\rho^{-1/6}(1+\sqrt{\rho})^{4/3}\right\} - \Psi_{\mathrm{TW}_1}(t)\right| \le c_4\exp\left(-c_5 t^{3/2}\right) \tag{58}$$

where $c_4 = \max\{c, c_2\}$ and $c_5 = \min\{c_1, c_3\}$.
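The step from (58) to (59) is a change of variables. We spell it out here for convenience, as our own expansion of the step using only the quantities defined above. Set $1 + x = (1+\sqrt{\rho})^2 + t\, m^{-2/3}\rho^{-1/6}(1+\sqrt{\rho})^{4/3}$ and use $(1+\sqrt{\rho})^2 - 1 = 2\sqrt{\rho} + \rho$. This gives

$$\begin{aligned}
t &= (x - 2\sqrt{\rho} - \rho)\, m^{2/3}\rho^{1/6}(1+\sqrt{\rho})^{-4/3},\\
c_5\, t^{3/2} &= m\,(x - 2\sqrt{\rho} - \rho)^{3/2}\, c_5\,\rho^{1/4}(1+\sqrt{\rho})^{-2},\\
t \le m^{2/3}\rho^{1/6}(1+\sqrt{\rho})^{2/3} &\iff x - 2\sqrt{\rho} - \rho \le (1+\sqrt{\rho})^2 \iff x \le 2(1+\sqrt{\rho})^2 - 1.
\end{aligned}$$

The first two lines turn (58) into (59). The last line translates the range of validity of (56) into the condition on $x$ that accompanies (59). Positive $t$ corresponds to $x > 2\sqrt{\rho} + \rho$.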
Therefore, the error due to approximating $P\{\lambda_{\max}(\mathbf{W}) \ge 1 + x\}$ in (29) by its TW counterpart can be bounded from (58) as

$$\left|P\left\{\lambda_{\max}(\mathbf{W}) \ge 1 + x\right\} - \Psi_{\mathrm{TW}_1}\!\left((x - 2\sqrt{\rho} - \rho)\, m^{2/3}\rho^{1/6}(1+\sqrt{\rho})^{-4/3}\right)\right| \le c_4\exp\left(-m\,(x - 2\sqrt{\rho} - \rho)^{3/2}\, c_5\,\rho^{1/4}(1+\sqrt{\rho})^{-2}\right) \tag{59}$$

for $x \le 2(1+\sqrt{\rho})^2 - 1$.² Hence, the absolute error in approximating the exact probability by the TW based one is exponentially small in $m$, and the URICt can be approximated by (51). Similar reasoning yields the thresholds for the lower and symmetric RICs (the proof is omitted for the sake of conciseness).

Finally, Tracy-Widom based approaches could be used not only for Wishart ensembles, but also for a wider class of matrices, such as those drawn from some sub-Gaussian distributions, e.g., Rademacher and Bernoulli measurement matrices. This is motivated by the universality of the TW laws for the extreme eigenvalues of large random matrices [61], [62]. Further research is required to investigate such extensions.

VIII. NUMERICAL RESULTS

In this section, we present numerical results comparing the proposed exact and TW approaches with the concentration inequalities for analyzing the probability that the RIP is satisfied. We also investigate the following quantities:

- the statistics of the RICs;
- the probability of perfect reconstruction;
- the maximum sparsity order for various recovery algorithms;
- the robustness and stability constants.

Fig. 2 shows upper bounds on the probability of not satisfying the RIP, $P\{\delta_s(\mathbf{A}) \ge 1/3\}$, obtained with three approaches:

- the EED based approach (22);
- the TW approximation (8), (54);
- the concentration bound (8), (12).

Note that when the sparsity level exceeds a certain threshold, the probability of not satisfying the RIP rapidly increases from zero to one.
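The sharp transition can be reproduced qualitatively with a short Python sketch of the TW curve. The sketch applies the union bound over the $\binom{n}{s}$ column subsets (our reading of (8)) to the ill-conditioning probability $1 - \tilde{P}_{\mathrm{sw}}(\delta)$ of (54). The two tail terms are summed directly, which avoids computing $1 - \tilde{P}_{\mathrm{sw}}$ by cancellation. The Python standard library has no Tracy-Widom CCDF, so the sketch assumes a shifted-gamma surrogate for $\mathrm{TW}_1$ whose parameters match the first three moments of $\mathrm{TW}_1$. The surrogate's right tail decays only exponentially, unlike the $\exp(-\tfrac{2}{3}t^{3/2})$ decay of $\mathrm{TW}_1$. The numbers are therefore indicative and are not those of Fig. 2. All function names are hypothetical.

```python
import math

# Assumption: TW1 is approximated by Gamma(K, THETA) - ALPHA, with parameters matched to
# the mean (-1.2065), variance (1.6078) and skewness (0.2935) of TW1.
K, THETA, ALPHA = 46.446, 0.186054, 9.84801


def upper_reg_gamma(a, x):
    """Regularized upper incomplete gamma function Q(a, x)."""
    if x <= 0.0:
        return 1.0
    log_pref = a * math.log(x) - x - math.lgamma(a)
    if x < a + 1.0:  # series for P(a, x), then Q = 1 - P
        term = total = 1.0 / a
        n = 0
        while term > 1e-17 * total:
            n += 1
            term *= x / (a + n)
            total += term
        return max(0.0, 1.0 - total * math.exp(log_pref))
    tiny = 1e-300  # modified Lentz continued fraction for Q(a, x)
    b = x + 1.0 - a
    c, d = 1.0 / tiny, 1.0 / b
    h = d
    for i in range(1, 1000):
        an = -i * (i - a)
        b += 2.0
        d = an * d + b
        d = d if abs(d) > tiny else tiny
        c = b + an / c
        c = c if abs(c) > tiny else tiny
        d = 1.0 / d
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < 1e-15:
            break
    return math.exp(log_pref) * h


def tw1_ccdf(t):
    """Surrogate for Psi_TW1(t) = P{TW1 >= t}."""
    return upper_reg_gamma(K, (t + ALPHA) / THETA)


def prob_ill_conditioned(x, m, s):
    """TW approximation of 1 - P_sw(x), i.e. the two tail terms of (54), summed directly."""
    mu = (math.sqrt(m) + math.sqrt(s)) ** 2
    sigma = math.sqrt(mu) * (1 / math.sqrt(s) + 1 / math.sqrt(m)) ** (1 / 3)
    a, b = math.sqrt(m - 0.5), math.sqrt(s - 0.5)
    tau = ((s - 0.5) ** -0.5 - (m - 0.5) ** -0.5) ** (1 / 3) / (a - b)
    v = 2 * math.log(a - b) + tau ** 2 / 8
    lower_tail = tw1_ccdf((v - math.log(m * (1 - x))) / tau)  # lambda_min(W) <= 1 - x
    upper_tail = tw1_ccdf((m * (1 + x) - mu) / sigma)  # lambda_max(W) >= 1 + x
    return lower_tail + upper_tail


def rip_failure_bound(delta, m, n, s):
    """Union bound min{1, C(n, s) [1 - P_sw(delta)]} on P{delta_s(A) >= delta}."""
    q = prob_ill_conditioned(delta, m, s)
    if q <= 0.0:
        return 0.0
    log_binom = math.lgamma(n + 1) - math.lgamma(s + 1) - math.lgamma(n - s + 1)
    log_bound = log_binom + math.log(q)  # work in logs: C(n, s) overflows quickly
    return 1.0 if log_bound >= 0.0 else math.exp(log_bound)


n, m = 30_000, 12_000  # n = 3e4 and m/n = 0.4, as for the dashed curves of Fig. 2
for s in range(5, 31, 5):
    print(s, rip_failure_bound(1 / 3, m, n, s))
```

The printed bound stays negligible for the smallest $s$ and saturates at one well before $s = 30$. This reproduces the abrupt zero-to-one behavior described above. Swapping `tw1_ccdf` for an exact $\mathrm{TW}_1$ evaluator would bring the curve closer to the TW approximation in Fig. 2.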
Fig. 2 also shows the maximum sparsity ratio that still permits satisfying the RIP with a target probability. The EED based approach indicates higher sparsity ratios (less sparse vectors) than the well-known concentration bound. The increase exceeds 220% in $s/n$ when the probability is $10^{-14}$ and $m/n = 0.4$. In fact, the concentration inequality is quite loose in bounding the probability that a submatrix is ill conditioned, $1 - P_{\mathrm{sw}}(\delta)$, and consequently in analyzing the RIP.

Regarding the ARICs, Fig. 3 plots the upper RIC thresholds $\overline{\delta}^*_s(m,n,\epsilon)$, computed by means of (30) and (51), for an excess probability $\epsilon = 10^{-3}$. The thresholds are shown as a function of the compression ratio $m/n$ and the oversampling ratio $s/m$. In this figure, we set $m = 4000$ and vary $n$ from $2\cdot 10^5$ to $4000$. As can be seen, the TW approximation is quite accurate.

² Note that $x \le 1$ is a stronger condition than $x \le 2(1+\sqrt{\rho})^2 - 1$.

[Figure 2: $P\{\delta_s(\mathbf{A}) \ge 1/3\}$ (log scale, $10^{-14}$ to $1$) versus $s/n$ ($\times 10^{-4}$); curves: concentration bound, EED approach, TW approximation.]

Fig. 2. Symmetric RIP: upper bounds on the probability of not satisfying the RIP, $P\{\delta_s(\mathbf{A}) \ge 1/3\}$, for $m/n = 0.1$ (solid) and $m/n = 0.4$ (dashed). The signal dimension is $n = 3\cdot 10^4$. Curves are obtained through the concentration bound, (8) and (12), the EED, (22), and the TW approximation, (8) and (54).

[Figure 3: level sets of the upper RIC threshold; axes $m/n$ and $s/m$ ($\times 10^{-3}$).]

Fig. 3. Level sets of the upper RIC threshold $\overline{\delta}^*_s(m,n,\epsilon) \in \{0.3, 0.4, 0.5, 0.6, 0.7\}$ such that $P\{\overline{\delta}_s(\mathbf{A}) \ge \overline{\delta}^*_s(m,n,\epsilon)\} \le \epsilon$, using the EED (solid) and TW (dashed), for $m = 4000$ and $\epsilon = 10^{-3}$.
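The TW approximations (51) and (52), behind the dashed curves of Fig. 3, only require the inverse $\mathrm{TW}_1$ CCDF evaluated at $\epsilon/\binom{n}{s}$. Combined with the equal split $\epsilon = \eta/2$ in (38), they also give a quick estimate of the maximum sparsity order for IHT under $\mu_{\mathrm{BCTT}}(s,\mathbf{A}) < 1$. The Python sketch below is our illustration, not the authors' code. It makes the following choices and assumptions:

- It replaces $\mathrm{TW}_1$ with a moment-matched shifted-gamma surrogate. Deep in the tail the surrogate is heavier than $\mathrm{TW}_1$, so the thresholds come out conservative there.
- It works with logarithms, because $\binom{n}{s}$ overflows quickly.
- It inverts the CCDF by bisection.
- It assumes that $\mu_{\mathrm{BCTT}}$ grows with $s$, so a linear scan can stop at the first failure.

All function names are hypothetical.

```python
import math

# Assumption: shifted-gamma surrogate TW1 ~ Gamma(K, THETA) - ALPHA (moment matched).
K, THETA, ALPHA = 46.446, 0.186054, 9.84801


def log_upper_reg_gamma(a, x):
    """ln Q(a, x), Q being the regularized upper incomplete gamma function."""
    if x <= 0.0:
        return 0.0
    log_pref = a * math.log(x) - x - math.lgamma(a)
    if x < a + 1.0:  # series for P(a, x); Q = 1 - P is not small in this region
        term = total = 1.0 / a
        n = 0
        while term > 1e-17 * total:
            n += 1
            term *= x / (a + n)
            total += term
        return math.log1p(-total * math.exp(log_pref))
    tiny = 1e-300  # modified Lentz continued fraction for Q(a, x)
    b = x + 1.0 - a
    c, d = 1.0 / tiny, 1.0 / b
    h = d
    for i in range(1, 1000):
        an = -i * (i - a)
        b += 2.0
        d = an * d + b
        d = d if abs(d) > tiny else tiny
        c = b + an / c
        c = c if abs(c) > tiny else tiny
        d = 1.0 / d
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < 1e-15:
            break
    return log_pref + math.log(h)


def tw1_log_ccdf(t):
    """Surrogate for ln Psi_TW1(t)."""
    return log_upper_reg_gamma(K, (t + ALPHA) / THETA)


def tw1_inv_ccdf(log_y):
    """Solve ln Psi_TW1(t) = log_y (< 0) for t by bisection; Psi_TW1 is decreasing."""
    lo, hi = -ALPHA, 10.0
    while tw1_log_ccdf(hi) > log_y:
        hi *= 2.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if tw1_log_ccdf(mid) > log_y:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def ric_thresholds_tw(m, n, s, eps):
    """(upper, lower) RIC thresholds of order s from (51) and (52)."""
    rho = s / m
    log_binom = math.lgamma(n + 1) - math.lgamma(s + 1) - math.lgamma(n - s + 1)
    t = tw1_inv_ccdf(math.log(eps) - log_binom)
    upper = (m ** (-2 / 3) * rho ** (-1 / 6) * (1 + math.sqrt(rho)) ** (4 / 3) * t
             + rho + 2 * math.sqrt(rho))
    a, b = math.sqrt(m - 0.5), math.sqrt(s - 0.5)
    tau = ((s - 0.5) ** -0.5 - (m - 0.5) ** -0.5) ** (1 / 3) / (a - b)
    v = 2 * math.log(a - b) + tau ** 2 / 8
    lower = 1 - math.exp(v - tau * t) / m
    return upper, lower


def mu_bctt(lower, upper):
    """IHT functional mu_BCTT of [43], evaluated at lower/upper RICs of order 3s."""
    return 2 * math.sqrt(2) * (lower + upper) / (2 + upper - lower)


def max_sparsity_iht(m, n, eta):
    """Largest s with mu_BCTT < 1, each RIC threshold using eps = eta / 2 as in (38)."""
    s_star, s = 0, 1
    while 3 * s < m:
        upper, lower = ric_thresholds_tw(m, n, 3 * s, eta / 2)
        if upper >= 1 or mu_bctt(lower, upper) >= 1:
            break
        s_star, s = s, s + 1
    return s_star


print(ric_thresholds_tw(4000, 40_000, 30, 1e-3))
print(max_sparsity_iht(4000, 40_000, 1e-3))
```

The same scan works for any of the conditions compared in Fig. 4 once `mu_bctt` and the RIC orders are replaced accordingly. Because of the gamma surrogate, the resulting $s^*$ should be read as a rough estimate and not as a reproduction of the EED curves.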
To further investigate the RIC bounds, Table I reports both the LRIC and URIC thresholds for different $m/n$ using various approaches:

- the proposed EED (28), (30);
- the TW approximation (52), (51);
- the empirical lower bounds in [63];
- the asymptotic bounds in [36], [37].

The upper bounds on the RICs obtained from the EED approach are sharp. They differ only slightly from the empirical lower bounds reported in [63], which are averaged over 100 different realizations.

TABLE I
RIC thresholds using the EED bound and the TW approximation for $\epsilon = 10^{-2}$, the empirical averaged lower bounds [63], and the BCT [36] and BT [37] approaches, for $m = 2000$ and $s = 4$. For each $m/n$, the two rows give the upper and lower RIC. The EED, TW, and empirical columns refer to finite matrices; the BCT and BT columns are asymptotic.

m/n   RIC     EED (30), (28)   TW (51), (52)   Empirical [63]   BCT [36]   BT [37]
0.4   upper   0.3071           0.3395          0.2703           0.3408     0.3402
      lower   0.2561           0.2846          0.2322           0.2777     0.2772
0.6   upper   0.3000           0.3304          0.2626           0.3344     0.3337
      lower   0.2512           0.2778          0.2268           0.2734     0.2729
0.8   upper   0.2949           0.3239          0.2580           0.3297     0.3291
      lower   0.2477           0.2729          0.2214           0.2703     0.2698

Fig. 4 compares different sufficient recovery conditions for the $\ell_1$-minimization, IHT, and CoSaMP algorithms. It reports the maximum oversampling ratio $s^*/m$ such that $P_{\mathrm{PR}} \ge 0.999$. All curves have been obtained using the EED based approach. The conditions considered are:

- for $\ell_1$-minimization: the symmetric RIC condition $\delta_s(\mathbf{A}) \le 1/3$ [28], its relaxed asymmetric extension $\mu_{\mathrm{ECG}}(s,\mathbf{A}) < 1$ proposed in Section V-B, $\delta_{2s}(\mathbf{A}) < 0.624$ [21], $\mu_{\mathrm{FL}}(s,\mathbf{A}) < 1$ [24], and $\mu_{\mathrm{BT}}(s,\mathbf{A}) < 1$ [41];
- for IHT: $\delta_{3s}(\mathbf{A}) < 0.5773$ [42] and $\mu_{\mathrm{BCTT}}(s,\mathbf{A}) < 1$ [43];
- for CoSaMP: $\delta_{4s}(\mathbf{A}) < 0.4782$ [21].

The asymmetric conditions provide higher estimates of the sparsity that compressed sensing can handle than the symmetric conditions do (more than 40% increase in $s$). As expected, the $\ell_1$-minimization and IHT algorithms allow higher oversampling ratios than the CoSaMP algorithm.

[Figure 4: $s^*/m$ ($\times 10^{-3}$) versus $m/n$ for the recovery algorithms and sufficient conditions listed above.]

Fig. 4. The maximum oversampling ratio, $s^*/m$, for various recovery algorithms and their associated sufficient conditions using the proposed EED based approach, for $m = 4000$ and $P_{\mathrm{PR}} \ge 0.999$ ($\eta = 10^{-3}$).

Fig. 5 shows the maximum oversampling ratio for uniform recovery indicated by our proposed approach, for finite matrices with $m = 4000$ and $P_{\mathrm{PR}} \ge 0.5$. It is compared with the ratios obtained from the following analyses:

- polytope [20];
- null space [21, Theorem 9.29];
- geometric functional [18, Theorem 4.1];
- RIP [21, Theorem 9.27].

Note, however, that the polytope based approach suggests tighter bounds on the maximum sparsity order, as it fully exploits the geometry of $\ell_1$-minimization for signal recovery from Gaussian measurements.
On the other hand, the RIP is suitable for analyzing robust and stable reconstruction with several classes of sparse recovery algorithms, such as optimization, greedy, and thresholding algorithms.

[Figure 5: $s^*/m$ ($\times 10^{-3}$, log scale) versus $m/n$; curves: RIP analysis (EED) with $\mu_{\mathrm{BT}}(s,\mathbf{A}) < 1$, polytope analysis [20], geometric functional analysis [18], null space property [21], RIP analysis [21] with $\delta_{2s}(\mathbf{A}) < 0.624$.]

Fig. 5. The maximum oversampling ratio, $s^*/m$, for perfect recovery via $\ell_1$-minimization, estimated by the proposed RIP based approach (EED) along with the RIP [21], polytope [20], null space [21], and geometric functional [18] analyses, for $m = 4000$ and $P_{\mathrm{PR}} \ge 0.5$.

Finally, for compressible signals in noise, Fig. 6 shows the contours of the robustness and stability thresholds $C^*_1$ and $C^*_2$. For small $s/m$ the thresholds are small: the sparser the signal, the more robust and stable the reconstruction process. Therefore, a compromise between sparsity and robustness/stability should be considered when designing the acquisition system. Fig. 6 also gives the maximum oversampling ratio for given $m$ and $n$ such that the minimization program (41) can approximately recover the measured signal within a predefined discrepancy.

[Figure 6: level sets in the plane of $m/n$ and $s/m$ ($\times 10^{-4}$).]

Fig. 6. Level sets of the robustness and stability thresholds in Section VI, $C^*_1$ (solid) and $C^*_2$ (dashed), with $C^*_1, C^*_2 \in \{4, 5, 6, 7, 8, 9\}$, for $m = 2\cdot 10^4$ and $\epsilon_1 = \epsilon_2 = 10^{-3}$, using the EED based approach.

IX. CONCLUSION

For sparse data acquisition, we have found that the concentration of measure inequality provides a loose upper bound on the probability that a measurement submatrix is ill conditioned. In some cases, the maximum sparsity ratio indicated by the proposed exact eigenvalue based approach is more than 220% higher than the one given by the concentration inequality. For finite matrices, we have tightly bounded the symmetric and asymmetric RICs. This provides the best current lower bound on the maximum sparsity order guaranteeing successful recovery for various sparse reconstruction algorithms. For stable and robust recovery of compressible data, we have observed that the discrepancy between the recovered and original signals decreases as the sparsity order decreases. Finally, we have shown that simple approximations for the RICs can be obtained from TW distributions.

REFERENCES

[1] E. Candes and T. Tao, "Decoding by linear programming," IEEE Trans. Inf. Theory, vol. 51, no. 12, pp. 4203–4215, Dec. 2005.
[2] D. Donoho, "Compressed sensing," IEEE Trans. Inf. Theory, vol. 52, no. 4, pp. 1289–1306, April 2006.
[3] D. Donoho, "For most large underdetermined systems of linear equations the minimal $\ell_1$-norm solution is also the sparsest solution," Comm. on Pure and Applied Math., vol. 59, no. 6, pp. 797–829, 2006.
[4] E. Candes and T. Tao, "Near-optimal signal recovery from random projections: Universal encoding strategies?" IEEE Trans. Inf. Theory, vol. 52, no. 12, pp. 5406–5425, Dec. 2006.
[5] E. Candes and M. Wakin, "An introduction to compressive sampling," IEEE Signal Process. Mag., vol. 25, no. 2, pp. 21–30, March 2008.
[6] Y. Eldar, P. Kuppinger, and H. Bolcskei, "Block-sparse signals: Uncertainty relations and efficient recovery," IEEE Trans. Signal Process., vol. 58, no. 6, pp. 3042–3054, June 2010.
[7] Y. Eldar and G. Kutyniok, Compressed Sensing: Theory and Applications. Cambridge University Press, 2012.
[8] G. Coluccia, A. Roumy, and E.
Magli, "Operational rate-distortion performance of single-source and distributed compressed sensing," IEEE Trans. Commun., vol. 62, no. 6, pp. 2022–2033, June 2014.
[9] D. Valsesia, G. Coluccia, and E. Magli, "Graded quantization for multiple description coding of compressive measurements," IEEE Trans. Commun., vol. 63, no. 5, pp. 1648–1660, May 2015.
[10] H. Schepker, C. Bockelmann, and A. Dekorsy, "Efficient detectors for joint compressed sensing detection and channel decoding," IEEE Trans. Commun., vol. 63, no. 6, pp. 2249–2260, June 2015.
[11] A. Kipnis, G. Reeves, Y. Eldar, and A. Goldsmith, "Fundamental limits of compressed sensing under optimal quantization," in Proc. IEEE Inter. Sympos. on Information Theory (ISIT), June 2017.
[12] J. Mota, N. Deligiannis, and M. Rodrigues, "Compressed sensing with prior information: Strategies, geometry, and bounds," IEEE Trans. Inf. Theory, vol. 63, no. 7, pp. 4472–4496, July 2017.
[13] L. Potter, E. Ertin, J. Parker, and M. Cetin, "Sparsity and compressed sensing in radar imaging," Proc. of the IEEE, vol. 98, no. 6, pp. 1006–1020, June 2010.
[14] D. Dorsch and H. Rauhut, "Refined analysis of sparse MIMO radar," J. of Fourier Anal. and Appl., vol. 23, no. 3, pp. 485–529, June 2017.
[15] G. Taubock, F. Hlawatsch, D. Eiwen, and H. Rauhut, "Compressive estimation of doubly selective channels in multicarrier systems: Leakage effects and sparsity-enhancing processing," IEEE J. Sel. Topics Signal Process., vol. 4, no. 2, pp. 255–271, April 2010.
[16] M. Mishali and Y. Eldar, "From theory to practice: Sub-Nyquist sampling of sparse wideband analog signals," IEEE J. Sel. Topics Signal Process., vol. 4, no. 2, pp. 375–391, April 2010.
[17] D. Donoho and J. Tanner, "Precise undersampling theorems," Proc. IEEE, vol. 98, no. 6, pp. 913–924, May 2010.
[18] M. Rudelson and R.
Vershynin, "On sparse reconstruction from Fourier and Gaussian measurements," Comm. on Pure and Applied Math., vol. 61, no. 8, pp. 1025–1045, 2008.
[19] M. Bayati, M. Lelarge, and A. Montanari, "Universality in polytope phase transitions and message passing algorithms," The Annals of Applied Probability, vol. 25, no. 2, pp. 753–822, March 2015.
[20] D. Donoho and J. Tanner, "Exponential bounds implying construction of compressed sensing matrices, error-correcting codes, and neighborly polytopes by random sampling," IEEE Trans. Inf. Theory, vol. 56, no. 4, pp. 2002–2016, April 2010.
[21] S. Foucart and H. Rauhut, A Mathematical Introduction to Compressive Sensing. Springer, 2013.
[22] V. Chandrasekaran, B. Recht, P. Parrilo, and A. Willsky, "The convex geometry of linear inverse problems," Found. of Computational Math., vol. 12, no. 6, pp. 805–849, 2012.
[23] E. Candès, "The restricted isometry property and its implications for compressed sensing," Comptes Rendus Mathematique, vol. 346, no. 9, pp. 589–592, May 2008.
[24] S. Foucart and M.-J. Lai, "Sparsest solutions of underdetermined linear systems via $\ell_q$-minimization for $0 < q \le 1$," Appl. Comput. Harmon. Anal., vol. 26, no. 3, pp. 395–407, 2009.
[25] S. Foucart, "A note on guaranteed sparse recovery via $\ell_1$-minimization," Appl. Comput. Harmon. Anal., vol. 29, no. 1, pp. 97–103, 2010.
[26] T. Cai, L. Wang, and G. Xu, "New bounds for restricted isometry constants," IEEE Trans. Inf. Theory, vol. 56, no. 9, pp. 4388–4394, Sept. 2010.
[27] Q. Mo and S. Li, "New bounds on the restricted isometry constant $\delta_{2k}$," Appl. Comput. Harmon. Anal., vol. 31, no. 3, pp. 460–468, Nov. 2011.
[28] T. Cai and A. Zhang, "Sharp RIP bound for sparse signal and low-rank matrix recovery," Appl. Comput. Harmon. Anal., vol. 35, no. 1, pp. 74–93, 2013.
[29] T. Blumensath and M. Davies, "Iterative thresholding for sparse approximations," J.
Fourier Anal. Appl., vol. 14, no. 5-6, pp. 629–654, Sept. 2008.
[30] S. Foucart, "Sparse recovery algorithms: Sufficient conditions in terms of restricted isometry constants," Approximation Theory XIII, San Antonio 2010, vol. 13, pp. 65–77, 2012.
[31] D. Needell and J. Tropp, "CoSaMP: Iterative signal recovery from incomplete and inaccurate samples," Applied and Computational Harmonic Analysis, vol. 26, no. 3, pp. 301–321, 2009.
[32] W. Dai and O. Milenkovic, "Subspace pursuit for compressive sensing signal reconstruction," IEEE Trans. Inf. Theory, vol. 55, no. 5, pp. 2230–2249, May 2009.
[33] D. Needell and R. Vershynin, "Signal recovery from incomplete and inaccurate measurements via regularized orthogonal matching pursuit," IEEE J. Sel. Topics Signal Process., vol. 4, no. 2, pp. 310–316, April 2010.
[34] T. Zhang, "Sparse recovery with orthogonal matching pursuit under RIP," IEEE Trans. Inf. Theory, vol. 57, no. 9, pp. 6215–6221, Sept. 2011.
[35] W. Wang, M. Wainwright, and K. Ramchandran, "Information-theoretic limits on sparse signal recovery: Dense versus sparse measurement matrices," IEEE Trans. Inf. Theory, vol. 56, no. 6, pp. 2967–2979, June 2010.
[36] J. Blanchard, C. Cartis, and J. Tanner, "Compressed sensing: How sharp is the restricted isometry property," SIAM Review, vol. 53, no. 1, pp. 105–125, 2011.
[37] B. Bah and J. Tanner, "Improved bounds on restricted isometry constants for Gaussian matrices," SIAM J. Matrix Anal. Appl., vol. 31, no. 5, pp. 2882–2898, 2010.
[38] B. Bah and J. Tanner, "Bounds of restricted isometry constants in extreme asymptotics: formulae for Gaussian matrices," Linear Algebra and Its Applications, vol. 441, pp. 88–109, Jan. 2014.
[39] A. Edelman, "Eigenvalues and condition numbers of random matrices," SIAM J. Matrix Anal. Appl., vol. 9, no.
4, pp. 543–560, 1988.
[40] M. Chiani, "On the probability that all eigenvalues of Gaussian, Wishart, and double Wishart random matrices lie within an interval," IEEE Trans. Inf. Theory, vol. 63, no. 7, pp. 4521–4531, July 2017.
[41] J. Blanchard and A. Thompson, "On support sizes of restricted isometry constants," Appl. Comput. Harmon. Anal., vol. 29, no. 3, pp. 382–390, 2010.
[42] S. Foucart, "Hard thresholding pursuit: An algorithm for compressive sensing," SIAM J. Numer. Anal., vol. 49, no. 6, pp. 2543–2563, 2011.
[43] J. Blanchard, C. Cartis, J. Tanner, and A. Thompson, "Phase transitions for greedy sparse approximation algorithms," Appl. Comput. Harmon. Anal., vol. 30, no. 2, pp. 188–203, 2011.
[44] R. Tibshirani, "Regression shrinkage and selection via the LASSO," J. R. Stat. Soc. Series B (Methodological), pp. 267–288, 1996.
[45] E. Candes and T. Tao, "The Dantzig selector: Statistical estimation when $p$ is much larger than $n$," The Annals of Statistics, vol. 35, no. 6, pp. 2313–2351, Dec. 2007.
[46] S. Chen and D. Donoho, "Basis pursuit," in Proc. of the Twenty-Eighth Asilomar Conf. on Signals, Systems and Computers, Pacific Grove, CA, USA, vol. 1, Oct. 1994, pp. 41–44.
[47] Z. Xue, J. Ma, and X. Yuan, "D-OAMP: A denoising-based signal recovery algorithm for compressed sensing," in Proc. IEEE Global Conf. on Signal and Inf. Process. (GlobalSIP), Dec. 2016, pp. 267–271.
[48] R. Saab, R. Chartrand, and O. Yilmaz, "Stable sparse approximations via nonconvex optimization," in Proc. IEEE International Conf. on Acoustics, Speech and Sig. Process. (ICASSP), Las Vegas, USA, March 2008, pp. 3885–3888.
[49] L. Jacques, D. Hammond, and J. Fadili, "Weighted $\ell_p$ constraints in noisy compressed sensing," in Proc. Sig. Process. with Adaptive Sparse Structured Representations Workshop, Edinburgh, Scotland, UK, June 2011, p. 49.
[50] W. Zeng, H. So, and X.
Jiang, "Outlier-robust greedy pursuit algorithms in $\ell_p$-space for sparse approximation," IEEE Trans. Signal Process., vol. 64, no. 1, pp. 60–75, Jan. 2016.
[51] C. Tracy and H. Widom, "Level-spacing distributions and the Airy kernel," Comm. Math. Phys., vol. 159, no. 1, pp. 151–174, Dec. 1994.
[52] K. Johansson, "Shape fluctuations and random matrices," Comm. in Math. Physics, vol. 209, no. 2, pp. 437–476, 2000.
[53] M. Johnstone, "On the distribution of the largest eigenvalue in principal components analysis," The Annals of Statistics, vol. 29, no. 2, pp. 295–327, 2001.
[54] C. Tracy and H. Widom, "The distributions of random matrix theory and their applications," New Trends in Mathematical Physics, V. Sidoravicius (ed.), pp. 753–765, 2009.
[55] F. Bornemann, "On the numerical evaluation of distributions in random matrix theory: a review," J. Markov Process. Related Fields, vol. 16, pp. 803–866, 2010.
[56] Z. Ma, "Accuracy of the Tracy-Widom limits for the extreme eigenvalues in white Wishart matrices," Bernoulli, vol. 18, no. 1, pp. 322–359, Feb. 2012.
[57] E. Basor, Y. Chen, and L. Zhang, "PDEs satisfied by extreme eigenvalues distributions of GUE and LUE," Random Matrices: Theory and Applications, vol. 01, no. 01, p. 1150003, 2012.
[58] M. Ledoux and B. Rider, "Small deviations for beta ensembles," Electron. J. Prob., vol. 15, pp. 1319–1343, 2010.
[59] G. Aubrun, "A sharp small deviation inequality for the largest eigenvalue of a random matrix," in Séminaire de Probabilités XXXVIII. Springer, 2005, pp. 320–337.
[60] L. Dumaz and B. Virág, "The right tail exponent of the Tracy-Widom distribution," Ann. l'Institut Henri Poincaré Prob. Stat., vol. 49, no. 4, pp. 915–923, 2011.
[61] O. Feldheim and S. Sodin, "A universality result for the smallest eigenvalues of certain sample covariance matrices," Geometric and Functional Analysis, vol. 20, no.
1, pp. 88–123, 2010.
[62] S. Péché, "Universality results for the largest eigenvalues of some sample covariance matrix ensembles," Probability Theory and Related Fields, vol. 143, no. 3, pp. 481–516, 2009.
[63] C. Dossal, G. Peyré, and J. Fadili, "A numerical exploration of compressed sampling recovery," Linear Algebra Appl., vol. 432, no. 7, pp. 1663–1679, 2010.

Ahmed Elzanaty (S'13) received the B.Sc. (with honors) and M.Sc. degrees in Electronics and Communications Engineering from Port Said University, Egypt, in 2008 and 2013, respectively. He received the Ph.D. degree (excellent cum laude) in Electronics, Telecommunications, and Information Technology from the University of Bologna, Italy, in 2018. He was a recipient of a doctoral scholarship from the EU-METALIC II project, within the framework of Erasmus Mundus Action 2. Currently, he is a research fellow at the University of Bologna. He has participated in several national and European projects, such as GRETA and EuroCPS. His research interests include statistical signal processing and digital communications, with particular emphasis on compressed sensing and sparse source coding. He was the recipient of the best paper award at the IEEE Int. Conf. on Ubiquitous Wireless Broadband (ICUWB 2017). Dr. Elzanaty was a member of the Technical Program Committee of the European Signal Processing Conf. (EUSIPCO 2017 and 2018). He is also a representative of the IEEE Communications Society's Radio Communications Technical Committee for several international conferences.

Andrea Giorgetti (S'98–M'04–SM'13) received the Dr. Ing. degree (summa cum laude) in electronic engineering and the Ph.D. degree in electronic engineering and computer science from the University of Bologna, Italy, in 1999 and 2003, respectively. From 2003 to 2005, he was a Researcher with the National Research Council, Italy.
He joined the Department of Electrical, Electronic, and Information Engineering "Guglielmo Marconi," University of Bologna, as an Assistant Professor in 2006 and was promoted to Associate Professor in 2014. In spring 2006, he was with the Laboratory for Information and Decision Systems (LIDS), Massachusetts Institute of Technology (MIT), Cambridge, MA, USA. Since then, he has been a frequent visitor to the Wireless Information and Network Sciences Laboratory at MIT, where he presently holds a Research Affiliate appointment. His research interests include ultrawide bandwidth communication systems, active and passive localization, wireless sensor networks, and cognitive radio. He has co-authored the book Cognitive Radio Techniques: Spectrum Sensing, Interference Mitigation, and Localization (Artech House, 2012). He was the Technical Program Co-Chair of several symposia at the IEEE Int. Conf. on Commun. (ICC) and the IEEE Global Commun. Conf. (Globecom). He has been an Editor for the IEEE COMMUNICATIONS LETTERS and for the IEEE TRANSACTIONS ON WIRELESS COMMUNICATIONS. He has been elected Chair of the IEEE Communications Society's Radio Communications Technical Committee.

Marco Chiani (M'94–SM'02–F'11) received the Dr. Ing. degree (summa cum laude) in electronic engineering and the Ph.D. degree in electronic and computer engineering from the University of Bologna, Italy, in 1989 and 1993, respectively. He is a Full Professor in Telecommunications at the University of Bologna. During summer 2001, he was a Visiting Scientist at AT&T Research Laboratories, Middletown, NJ. Since 2003 he has been a frequent visitor at the Massachusetts Institute of Technology (MIT), Cambridge, where he presently holds a Research Affiliate appointment.
His research interests are in the areas of communications theory, wireless systems, and statistical signal processing, including MIMO statistical analysis, codes on graphs, wireless multimedia, cognitive radio techniques, and ultra-wideband radios. In 2012 he was appointed Distinguished Visiting Fellow of the Royal Academy of Engineering, UK. He is the past chair (2002–2004) of the Radio Communications Committee of the IEEE Communication Society and past Editor of Wireless Communication (2000–2007) for the journal IEEE TRANSACTIONS ON COMMUNICATIONS. He received the 2011 IEEE Communications Society Leonard G. Abraham Prize in the Field of Communications Systems, the 2012 IEEE Communications Society Fred W. Ellersick Prize, and the 2012 IEEE Communications Society Stephen O. Rice Prize in the Field of Communications Theory.
