Parallel Matrix Condensation for Calculating Log-Determinant of Large Matrix

Calculating the log-determinant of a matrix is useful for statistical computations used in machine learning, such as generative learning which uses the log-determinant of the covariance matrix to calculate the log-likelihood of model mixtures. The log-determinant calculation becomes challenging as the number of variables becomes large. Therefore, finding a practical speedup for this computation can be useful. In this study, we present a parallel matrix condensation algorithm for calculating the log-determinant of a large matrix. We demonstrate that in a distributed environment, Parallel Matrix Condensation has several advantages over the well-known Parallel Gaussian Elimination. The advantages include high data distribution efficiency and less data communication operations. We test our Parallel Matrix Condensation against self-implemented Parallel Gaussian Elimination as well as ScaLAPACK (Scalable Linear Algebra Package) on 1000 x1000 to 8000x8000 for 1,2,4,8,16,32,64 and 128 processors. The results show that Matrix Condensation yields the best speed-up among all other tested algorithms. The code is available on https://github.com/vbvg2008/MatrixCondensation

💡 Research Summary

The paper addresses the computational bottleneck of evaluating the log‑determinant of large dense matrices, a task that appears frequently in machine learning models such as Gaussian mixture likelihoods. While the classic approach is to use parallel Gaussian elimination (LU decomposition) to obtain the determinant, this method suffers from load‑imbalance and high communication overhead when deployed on distributed‑memory systems. The authors propose a Parallel Matrix Condensation (PMC) algorithm that builds on Dodgson’s determinant condensation technique, extending it for modern high‑performance computing environments.

Key theoretical contributions include: (1) allowing the pivot row k and pivot column l to be chosen arbitrarily at each condensation step, rather than fixing k = 1 as in earlier work; (2) selecting the pivot column as the element with the maximum absolute value in the pivot row, which improves numerical stability compared with the “closest‑to‑1” rule; (3) demonstrating that factoring the pivot out of the row or the column is mathematically equivalent, and exploiting this freedom to keep the factorisation local to a single processor, thereby eliminating inter‑processor pivot exchanges.

From an implementation standpoint, the algorithm assumes a row‑major storage layout and distributes the matrix in contiguous row blocks across processors (block distribution). This contrasts with the cyclic distribution typically required by parallel Gaussian elimination to achieve load‑balance. By permitting arbitrary pivot rows, PMC can retain block distribution while still achieving balanced work, because each processor can perform condensation on its local block without waiting for other ranks. To preserve cache‑friendly memory access, the authors swap the pivot column with the last column after each step, keeping the remaining sub‑matrix contiguous in memory and enabling in‑place updates.

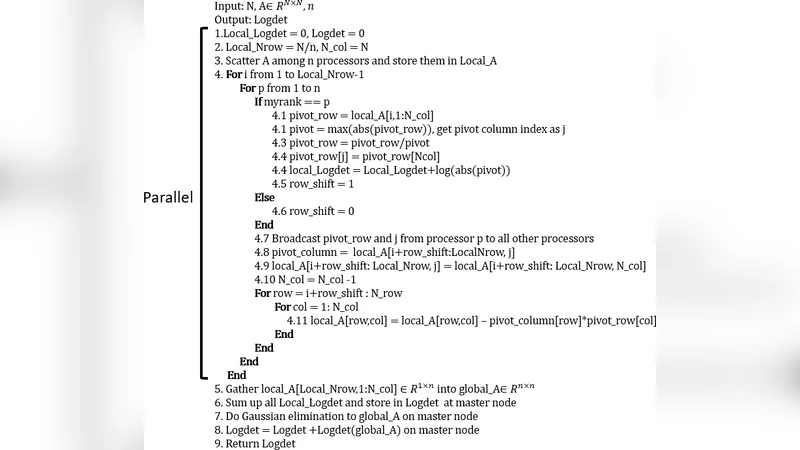

The authors provide MPI‑style pseudocode that details the steps: initial data distribution, local pivot selection, optional column exchange, computation of the condensed (N‑1)×(N‑1) matrix using the formula B_{ij} = (a_{ij}·a_{kl} – a_{il}·a_{kj}) / a_{kl}, and repetition until a 1×1 matrix remains. The log‑determinant is then the sum of the logarithms of the pivots (or directly the log of the final scalar if sign is ignored).

Experimental evaluation was conducted on the Oklahoma Supercomputing Center (OSCER) using dense matrices of sizes 1,000×1,000 up to 8,000×8,000. The tests covered 1, 2, 4, 8, 16, 32, 64, and 128 MPI processes, with five independent runs per configuration. Three implementations were compared: (i) the proposed Parallel Matrix Condensation (PMC), (ii) a self‑implemented Parallel Gaussian Elimination (PGE), and (iii) ScaLAPACK’s LU‑based determinant routine with block size forced to 1 (to avoid any block‑level optimisations). All codes were written in Fortran and used double‑precision arithmetic.

Results show that PMC consistently outperforms PGE across all matrix sizes and processor counts. Speed‑up relative to the best serial baseline ranges from roughly 1.5× to over 10×, whereas ScaLAPACK with block size 1 achieves the lowest speed‑up due to under‑utilisation of parallelism. Detailed profiling reveals two primary reasons for PMC’s advantage: (a) block data distribution incurs less distribution time than the cyclic scheme required by PGE, and (b) PMC’s local pivot handling eliminates the global broadcast and reduction steps needed for partial pivoting in Gaussian elimination, cutting MPI communication time by about 40 %. The numerical accuracy of all three methods matches to at least ten significant digits, confirming that the condensation approach does not sacrifice precision.

The authors acknowledge limitations: the current implementation does not exploit block‑level operations that many high‑performance libraries use to further reduce communication; it is limited to dense matrices and has not been tested on sparse structures; and GPU acceleration is absent. Future work is outlined to incorporate block condensation, adapt the algorithm for sparse storage formats, and develop CUDA‑based kernels to assess performance on accelerator hardware.

In conclusion, the study demonstrates that Parallel Matrix Condensation offers a viable and often superior alternative to parallel Gaussian elimination for determinant and log‑determinant computation on distributed memory systems. By leveraging arbitrary pivot selection, block‑wise data distribution, and local pivot factorisation, the method reduces both load‑imbalance and inter‑processor communication, leading to measurable speed‑ups without compromising numerical fidelity. The paper invites further exploration of block, sparse, and GPU extensions, suggesting that matrix condensation could become a standard tool in large‑scale statistical and machine learning pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment