Boosting the Robustness Verification of DNN by Identifying the Achilles's Heel

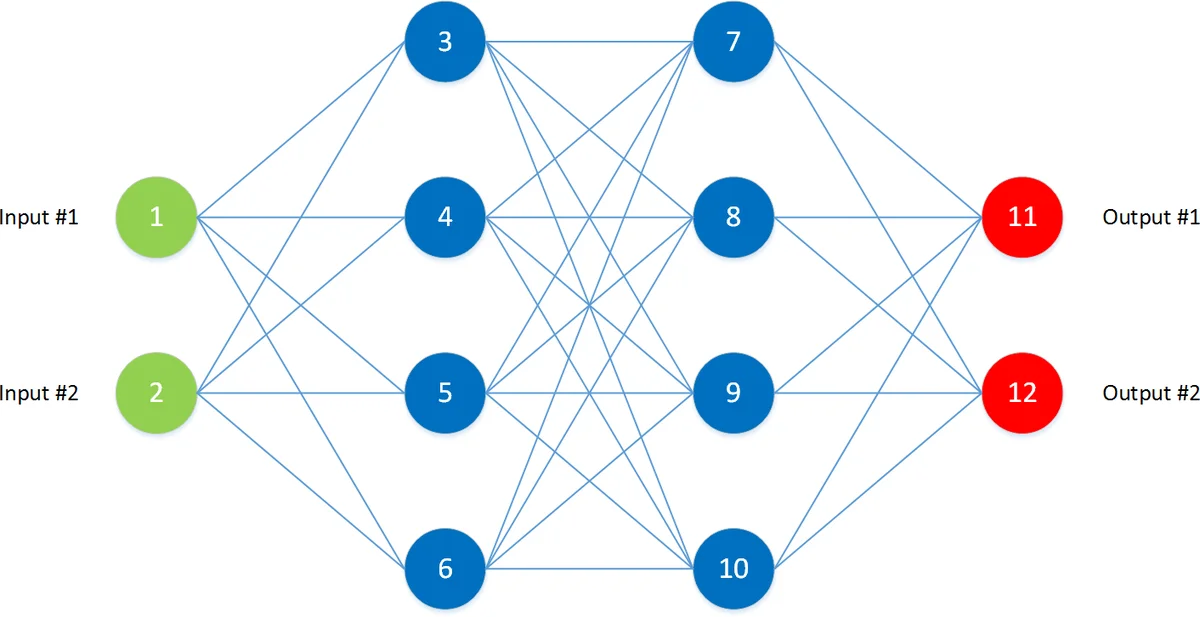

Deep neural networks (DNNs) are a widely used deep learning technique. Ensuring the safety of DNN-based systems is a critical problem for the research and application of DNNs. Robustness is an important safety property of a DNN. However, existing approaches to verifying DNN robustness are time-consuming and hard to scale to large DNNs. In this paper, we propose a boosting method for DNN robustness verification that aims to find counter-examples earlier. Our observation is that different inputs of a DNN differ in how likely counter-examples are to exist around them, and that an input with a small difference between its largest and second-largest output values tends to be the Achilles's heel of the DNN. We have implemented our method and applied it to Reluplex, a state-of-the-art DNN verification tool, and to four DNN attack methods. The results of extensive experiments on two benchmarks indicate the effectiveness of our boosting method.

💡 Research Summary

The paper addresses the scalability problem of robustness verification for deep neural networks (DNNs) that use ReLU activations. Existing verification tools, such as Reluplex, often spend a large amount of time searching for counter‑examples (adversarial inputs) and their performance heavily depends on the choice of the initial input point and the perturbation radius δ. The authors observe that inputs whose two largest output logits are close to each other are more likely to be “Achilles’ heels” of the network: a tiny perturbation can flip the predicted class.

To formalize this intuition, the authors first prove that the ReLU function is continuous and that continuity is preserved under addition, scalar multiplication, and composition. Consequently, any feed‑forward ReLU network defines a continuous mapping from inputs to outputs. Because the outputs vary continuously with the input, when the gap Nₙd(x) = V₁(N,x) – V₂(N,x) (largest minus second‑largest logit) is small for an input x, only a small shift in the logits is needed to change their ordering, so a small perturbation of x is more likely to cause a misclassification.
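The gap can be illustrated with a minimal NumPy sketch. The toy network, its weights, and the helper names `forward` and `logit_gap` are hypothetical and chosen only for illustration; they are not taken from the paper.

```python
import numpy as np

def relu(z):
    # Element-wise ReLU, continuous everywhere.
    return np.maximum(z, 0.0)

def forward(weights, biases, x):
    """Feed-forward ReLU network; the final layer is linear and returns logits."""
    a = np.asarray(x, dtype=float)
    for W, b in zip(weights[:-1], biases[:-1]):
        a = relu(W @ a + b)
    return weights[-1] @ a + biases[-1]

def logit_gap(logits):
    """Largest logit minus second-largest logit."""
    top2 = np.sort(np.asarray(logits, dtype=float))[-2:]
    return float(top2[1] - top2[0])

# Hypothetical toy network: 2 inputs, 3 hidden units, 3 output classes.
weights = [np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]), np.eye(3)]
biases = [np.zeros(3), np.zeros(3)]

x_confident = np.array([2.0, 0.0])   # logits [2, 0, 0], gap 2.0
x_ambiguous = np.array([1.0, 0.9])   # logits [1, 0.9, 0], gap about 0.1

gap_confident = logit_gap(forward(weights, biases, x_confident))
gap_ambiguous = logit_gap(forward(weights, biases, x_ambiguous))

# A perturbation of 0.06 per coordinate flips the label of the ambiguous input.
x_perturbed = x_ambiguous + np.array([-0.06, 0.06])
label_before = int(np.argmax(forward(weights, biases, x_ambiguous)))
label_after = int(np.argmax(forward(weights, biases, x_perturbed)))
```

In this toy case the same perturbation leaves the label of `x_confident` unchanged, which is the behaviour the gap is meant to predict.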

Empirical validation on MNIST and the ACAS‑Xu benchmark confirms the hypothesis: images with the smallest Nₙd are visually ambiguous, and Reluplex reports SAT (i.e., finds a counter‑example) far more often for inputs with low Nₙd. Moreover, UNSAT cases (where the network is proven robust) take significantly longer than SAT cases, indicating that focusing verification on promising inputs can dramatically reduce overall verification time.

Based on these insights, the authors propose a two‑stage “boosting” framework.

- Input Evaluation – Compute Nₙd for a large set of candidate inputs (e.g., the training set) and select those with the smallest values as potential weak points. This step requires only a forward pass through the network and is therefore cheap.

- Pre‑analysis Greedy Search – For each selected candidate, perform a lightweight local search within a small radius ε. The search iteratively generates new points that further reduce Nₙd, using either random sampling or a gradient‑like approximation. If a counter‑example is found during this pre‑analysis, it is reported immediately, bypassing the expensive SMT solving. If not, the best candidate is handed to the full verification engine (Reluplex).
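The two stages above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the authors' implementation: it uses the random-sampling variant of the local search, an L∞ ball of radius `eps`, and hypothetical names (`rank_by_gap`, `greedy_search`, `predict`). Handing the surviving candidate to Reluplex is not shown.

```python
import numpy as np

def logit_gap(logits):
    """Largest logit minus second-largest logit."""
    top2 = np.sort(logits)[-2:]
    return float(top2[1] - top2[0])

def rank_by_gap(predict, inputs, k):
    """Stage 1: indices of the k inputs with the smallest logit gap."""
    gaps = np.array([logit_gap(predict(x)) for x in inputs])
    return np.argsort(gaps)[:k]

def greedy_search(predict, x0, eps, steps=200, rng=None):
    """Stage 2: greedy random search in the L-inf ball of radius eps around x0.

    Returns (counterexample or None, best point found). A candidate is kept
    only if it lowers the gap; a label change ends the search immediately.
    """
    rng = np.random.default_rng(0) if rng is None else rng
    label = int(np.argmax(predict(x0)))
    best, best_gap = x0.copy(), logit_gap(predict(x0))
    for _ in range(steps):
        cand = best + rng.uniform(-eps / 4, eps / 4, size=x0.shape)
        cand = np.clip(cand, x0 - eps, x0 + eps)  # stay inside the ball
        out = predict(cand)
        if int(np.argmax(out)) != label:
            return cand, cand                     # counter-example found
        gap = logit_gap(out)
        if gap < best_gap:
            best, best_gap = cand, gap
    return None, best                             # pass `best` to the verifier

# Hypothetical toy model: the logits are simply the two input coordinates.
predict = lambda x: np.asarray(x, dtype=float)
inputs = np.array([[2.0, 0.0], [1.0, 0.9], [0.0, 3.0]])

weakest = rank_by_gap(predict, inputs, k=1)
cex, best = greedy_search(predict, inputs[weakest[0]], eps=0.2)
no_cex, _ = greedy_search(predict, inputs[0], eps=0.2)
```

For the large-gap input `inputs[0]` no point inside the ball can change the label, so the search returns `None` and a full verifier would still be needed to prove robustness.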

The authors integrate this boosting method with Reluplex and with four popular adversarial attack algorithms: FGSM, PGD, Carlini‑Wagner (CW), and DeepFool. Experiments on the two benchmarks show that, compared with a random input selection baseline, the boosted approach achieves at least an order of magnitude speed‑up in finding the same number of counter‑examples. Under a fixed time budget, it discovers roughly ten times more counter‑examples. Moreover, the success rate of the four attack methods improves on average by a factor of 3.2, demonstrating that many attacks fail simply because they start from non‑promising inputs.

The contributions are threefold: (i) a rigorous continuity‑based justification for using the logit gap Nₙd as a weakness indicator, (ii) a lightweight pre‑analysis algorithm that can prune the verification search space, and (iii) extensive empirical evidence that the method dramatically accelerates both formal verification (Reluplex) and heuristic attack generation.

The paper concludes by noting several avenues for future work: extending the analysis to networks with non‑ReLU activations, handling multi‑objective robustness specifications, scaling the approach to very deep architectures such as ResNets and Transformers, and employing more sophisticated optimization techniques (e.g., Bayesian optimization or meta‑learning) for the pre‑analysis stage. Overall, the work provides a compelling bridge between theoretical properties of neural networks and practical improvements in safety‑critical verification pipelines.
