EdgeSpeechNets: Highly Efficient Deep Neural Networks for Speech Recognition on the Edge

Despite showing state-of-the-art performance, deep learning for speech recognition remains challenging to deploy in on-device edge scenarios such as mobile and other consumer devices. Recently, there have been greater efforts in the design of small, …

Authors: Zhong Qiu Lin, Audrey G. Chung, Alex

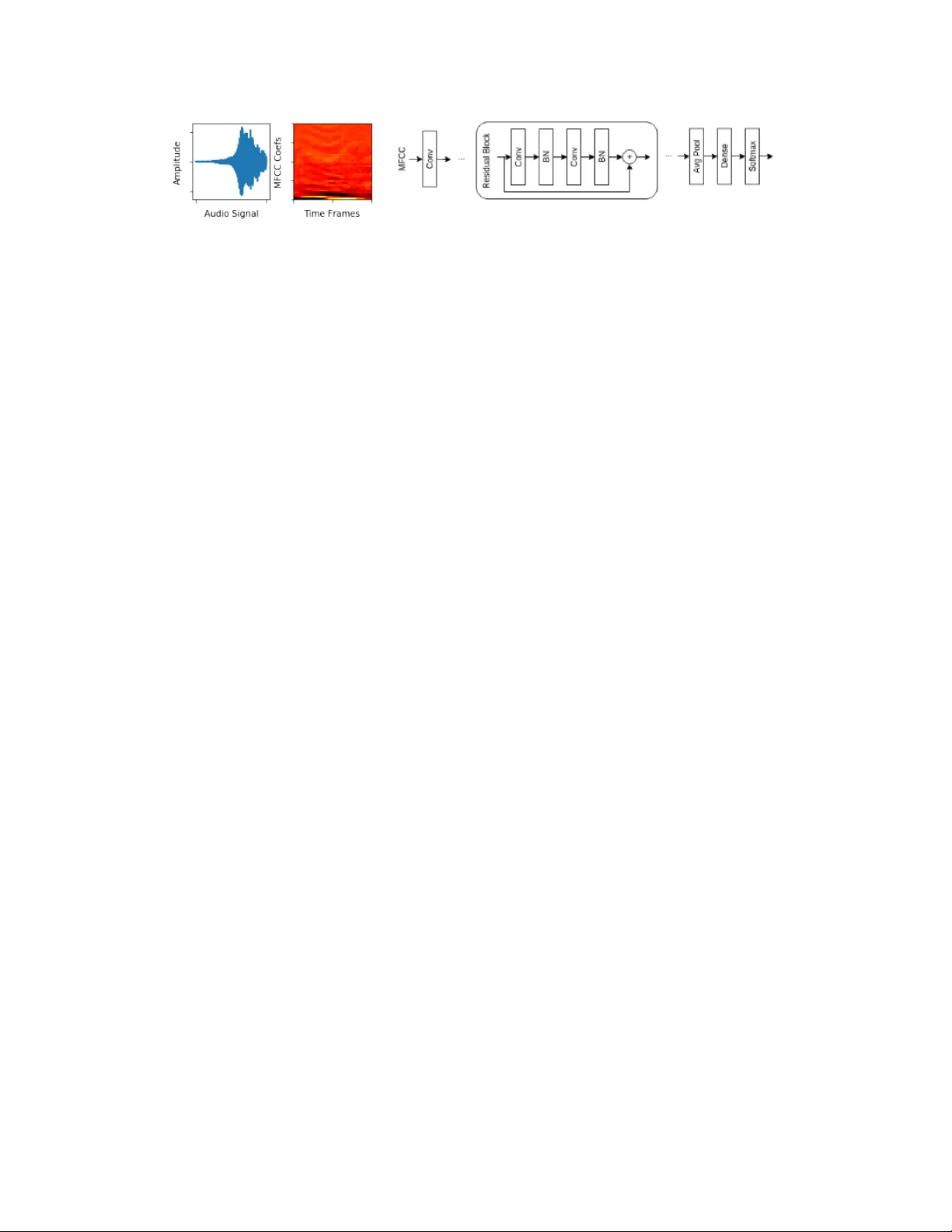

EdgeSpeechNets: Highly Efficient Deep Neural Networks f or Speech Recognition on the Edge Zhong Qiu Lin ∗ , 1 , 3 , A udrey G. Chung 1 , 2 , 3 , Alexander W ong 1 , 2 , 3 1 V ision and Image Processing Research Group, Univ ersity of W aterloo, W aterloo, ON, Canada 2 W aterloo Artificial Intelligence Institute, Uni versity of W aterloo, W aterloo, ON, Canada 3 DarwinAI Corp., W aterloo, ON, Canada ∗ zq2lin@edu.uwaterloo.ca Abstract Despite sho wing state-of-the-art performance, deep learning for speech recognition remains challenging to deploy in on-de vice edge scenarios such as mobile and other consumer devices. Recently , there hav e been greater efforts in the design of small, low-footprint deep neural networks (DNNs) that are more appropriate for edge devices, with much of the focus on design principles for hand-crafting efficient network architectures. In this study , we explore a human-machine collaborative design strategy for b uilding low-footprint DNN architectures for speech recogni- tion through a marriage of human-dri ven principled network design prototyping and machine-driv en design exploration. The efficacy of this design strategy is demonstrated through the design of a family of highly-ef fi cient DNNs (nicknamed EdgeSpeechNets ) for limited-vocab ulary speech recognition. Experimental results using the Google Speech Commands dataset for limited-vocab ulary speech recog- nition showed that EdgeSpeechNets ha ve higher accuracies than state-of-the-art DNNs (with the best EdgeSpeechNet achieving ∼ 97% accuracy), while achie ving significantly smaller network sizes (as much as 7 . 8 × smaller) and lower computa- tional cost (as much as 36 × fewer multiply-add operations, 10 × lower prediction latency , and 16 × smaller memory footprint on a Motorola Moto E phone), making them very well-suited for on-de vice edge voice interface applications. 1 Introduction Deep learning has seen widespread interest in recent years, and has been demonstrated to achiev e state-of-the-art performance for a wide range of applications in speech recognition. In particular , limited-vocab ulary speech recognition [ 1 ], also known as ke yword spotting, has recently seen significant interest as an important application of deep learning for mobile, IoT , and other edge devices. The ability to rapidly recognize specific ke ywords from a stream of v erbal utterances can enable voice interfaces with which the user can interact in a natural, verbal manner without the need for cloud computing, which is particularly important in scenarios where pri vac y and internet connectivity are of concern. Despite the promises, deep learning for speech recognition tasks such as limited-vocab ulary speech recognition remains challenging to deploy in on-de vice edge scenarios such as mobile and other consumer de vices due to computational and memory requirements. As such, there has been greater recent ef forts to design small, low-footprint deep neural network (DNN) architectures that are more appropriate for edge devices, with much of the focus on design principles for hand-crafting efficient network architectures [ 1 – 3 ]. In this study , we explore a human-machine collaborative design strategy for building lo w-footprint DNN architectures for speech recognition through a marriage of human- driv en principled network design prototyping and machine-driv en design exploration via generati ve synthesis [ 4 ]. More specifically , a family of highly-ef ficient DNNs (nicknamed EdgeSpeechNets ) are designed for limited-vocab ulary speech recognition using this strategy . 32nd Conference on Neural Information Processing Systems (NIPS 2018), Montréal, Canada. Figure 1: (a) Audio signal; (b) MFCC representations; (c) initial design prototype. 2 Methods The human-machine collaborati ve design strate gy presented in this study for building EdgeSpeech- Nets (low-footprint DNN architectures for limited-v ocabulary speech recognition) comprises of two main steps. First, design principles are leveraged to construct an initial design prototype catered to- wards the task of limited-vocab ulary speech recognition. Second, machine-driv en design exploration is performed based on the constructed initial design prototype and a set of design requirements to generate a set of alternative highly-ef ficient DNN designs appropriate to the problem space. As such, the goal of this strategy is to combine human ingenuity with the meticulousness of a machine. Details of these two steps are described belo w . 2.1 Human-driven Design Pr ototyping The first step of the presented design strate gy is to lev erage design principles to construct an initial design prototype catered towards the task of limited-vocab ulary speech recognition. Based on past literature, a very ef fectiv e strategy for le veraging deep learning for limited-v ocabulary speech recognition is to first transform the input audio signal into mel-frequency cepstrum coefficient (MFCC) representations. Inspired by [ 5 ], the input layer of the design prototype tak es in a two-dimensional stack of MFCC representations using a 30ms windo w with a 10ms time shift across a one-second band-pass filtered (cutoff from 20Hz to 4kHz for reducing noise) audio sample. For the intermediate representation layers of the initial design prototype, we le verage the concept of deep residual learning [ 6 ] and specify the use of residual blocks comprised of alternating con volution and batch normalization layers, with skip connections between residual blocks. Networks b uilt around deep residual stacks hav e been shown to enable easier learning of DNNs with greater representation capabilities, and has been previously demonstrated to enable state-of-the-art speech recognition performance [ 3 ]. After the intermediate representation layers, av erage pooling is specified in the initial design prototype, followed by a dense layer . Finally , a softmax layer is defined as the output of the initial design prototype to indicate which of the ke ywords was detected from the verbal utterance. Driv en by these design principles, the initial design prototype is shown in Figure 1. 2.2 Machine-driven Design Exploration The second step in the presented design strategy is to perform machine-dri ven design exploration based on the initial design prototype, while considering a set of design requirements that ensure the generation of a set of alternativ e highly-efficient DNN designs appropriate for on-de vice limited- vocab ulary speech recognition. Previous literature [ 3 ] has le veraged manual design e xploration for designing low-footprint DNNs for speech recognition, where high-lev el parameters such as the width and depth of a network are varied to observe the trade-of fs between resource usage and accuracy in a similar vein as [ 7 , 8 ]. Howe ver , the coarse-grained nature of such a design e xploration strategy can be quite limiting in terms of the di versity in the network architectures that can be found. Motiv ated to ov ercome these limitations, we instead take advantage of a highly fle xible machine-driven design exploration strate gy in the form of generativ e synthesis [ 4 ]. Briefly , the goal of generative synthesis is to learn a generator G that, giv en a set of seeds S , can generate DNNs { N s | s ∈ S } that maximize a univ ersal performance function U (e.g., [ 9 ]) while satisfying requirements defined by an indicator function 1 r ( · ) , i.e., G = max G U ( G ( s )) subject to 1 r ( G ( s )) = 1 , ∀ s ∈ S. (1) An approximate solution to this optimization problem is found in an progressi ve manner , with the generator initialized based on prototype ϕ , U , and 1 r ( · ) and a number of successive generators being constructed. W e take full adv antage of this interesting phenomenon by lev eraging this set of generators to synthesize a family of EdgeSpeechNets that satisfies these requirements. More 2 specifically , we configure the indicator function 1 r ( · ) such that the validation accuracy ≥ 95% on the Google Speech Commands dataset [10]. 2.3 Final Architectur e Designs The architecture design of the EdgeSpeechNets produced using the presented human-machine collaborativ e strategy are summarized in T ables 1 and 2. It can be observed that the architecture designs produced ha ve di verse architectural diff erences that can only be achie ved via fine-grained machine-driv en design exploration. T able 1: Network architectures of EdgeSpeechNet-A (left) and EdgeSpeechNet-B (right) T ype m r n Params con v 3 3 39 351 con v 3 3 20 7020 con v 3 3 39 7020 con v 3 3 15 5265 con v 3 3 39 5265 con v 3 3 25 8775 con v 3 3 39 8775 con v 3 3 22 7722 con v 3 3 39 7722 con v 3 3 22 7722 con v 3 3 39 7722 con v 3 3 25 8775 con v 3 3 39 8775 con v 3 3 45 15795 avg-pool - - - - dense - - 12 540 softmax - - - - T otal - - - 107K T ype m r n Params con v 3 3 30 270 con v 3 3 8 2160 con v 3 3 30 2160 con v 3 3 9 2430 con v 3 3 30 2430 con v 3 3 11 2970 con v 3 3 30 2970 con v 3 3 10 2700 con v 3 3 30 2700 con v 3 3 8 2160 con v 3 3 30 2160 con v 3 3 11 2970 con v 3 3 30 2970 con v 3 3 45 12150 avg-pool - - - - dense - - 12 540 softmax - - - - T otal - - - 43.7K T able 2: Network architectures of EdgeSpeechNet-C (left) and EdgeSpeechNet-D (right) T ype m r n Params con v 3 3 24 216 con v 3 3 6 1296 con v 3 3 24 1296 con v 3 3 9 1944 con v 3 3 24 1944 con v 3 3 12 2592 con v 3 3 24 2592 con v 3 3 6 1296 con v 3 3 24 1296 con v 3 3 5 1080 con v 3 3 24 1080 con v 3 3 6 1296 con v 3 3 24 1296 con v 3 3 2 432 con v 3 3 24 432 con v 3 3 45 9720 avg-pool - - - - dense - - 12 540 softmax - - - - T otal - - - 30.3K T ype m r n Params con v 3 3 45 405 avg-pool - - - - con v 3 3 30 12150 con v 3 3 45 12150 con v 3 3 33 13365 con v 3 3 45 13365 con v 3 3 35 14175 con v 3 3 45 14175 avg-pool - - - - dense - - 12 540 softmax - - - - T otal - - - 80.3k 3 Results and Discussion T able 3: T est accuracy of EdgeSpeechNets in comparison to trad-fpool13 [ 2 ], tpool2 [ 2 ], res15 [ 3 ], and res15-narro w [ 3 ], NetScores, and model sizes in terms of number of parameters and multiply-add operations. All results are the mean across 5 runs. Best results are in bold . Model T est Accuracy NetScore Params Mult-Adds trad-fpool13 [2] 90 . 5% 85 . 93 1.37M 125M tpool2 [2] 91 . 7% 87 . 99 1.09M 103M res15 [3] 95 . 8% 85 . 98 238K 894M res15-narrow [3] 94 . 0% 100 . 59 42.6K 160M EdgeSpeechNet-A 96.8 % 93 . 75 107K 343M EdgeSpeechNet-B 96 . 3% 101 . 82 43.7K 126M EdgeSpeechNet-C 96 . 2% 105 . 12 30.3K 83.5M EdgeSpeechNet-D 95 . 8% 106.67 80.3K 24.5M 3 The efficac y of the produced EdgeSpeechNets were ev aluated using the Google Speech Commands dataset [ 10 ] 1 . The Speech Commands Dataset was designed for limited-v ocabulary speech recog- nition and contains 65,000 one-second samples of 30 short words and background noise samples. For comparison purposes, the results for two state-of-the-art deep neural networks presented in [ 3 ] (res15 and res15-narrow) and the Google netw orks presented in [ 2 ] (trad-fpool13, tpool2) were also presented. As sho wn in T able 3, the produced EdgeSpeechNets had higher accuracies at much smaller sizes and lo wer computational costs than state-of-the-art deep neural networks. In terms of best accuracy , EdgeSpeechNet-A achiev ed 1% higher accuracy compared to the state-of-the-art res15 [ 3 ] while ha ving >2.2 × fewer parameters and requiring >2.6 × fewer multiply-add operations. In fact, the best of 5 runs for EdgeSpeechNet-A achiev ed a test accuracy reaching ∼ 97% , thus noticeably outperforming pre viously published results. More interesting, EdgeSpeechNet-B still achie ved higher accuracy ( 0.5% higher) compared to res15 while having >5.4 × fewer parameters and requiring ∼ 7.1 × fewer multiply-add operations. In terms of smallest size, EdgeSpeechNet-C achieved higher accuracy ( 0.4% higher) compared to res15 b ut has >7.8 × fewer parameters and requiring >10.7 × fewer multiply-add operations. In terms of lowest computational cost, EdgeSpeechNet-D achie ved the same accuracy compared to res15 but requires ∼ 36.5 × fewer multiply-add operations. When compared to the Google netw ork tpool2 [ 2 ], EdgeSpeechNet-D achie ved 4.1% higher accurac y while having >13.5 × fewer parameters and requiring ∼ 4.2 × fewer multiply-add operations. In terms of the highest NetScore, EdgeSpeechNet-D achie ved a NetScore that is > 20 points than res15, which demonstrates a strong balance between accurac y , computational cost, and size. Finally , running on a 1.4 GHz Cortex-A53 mobile processor in a Motorola Moto E phone using T ensorFlo w Mobile, EdgeSpeechNet-D ran with an av erage prediction latency of 34ms and memory footprint of ∼ 1MB ( >10 × lower latency and >16.5 × smaller memory footprint than res15). These results demonstrate that the EdgeSpeechNets were able to achie ve state-of-the-art performance while still being noticeably smaller and requiring significantly fewer computations, making them v ery well-suited for on-device edge voice interface applications. Giv en the promising prospects of the presented human-machine collaborativ e design strategy , we aim to further explore this strategy for designing highly-ef ficient deep neural networks in other applications such as visual perception and natural language processing. Acknowledgements This work was supported by NSERC, Canada Research Chairs Program, and DarwinAI Corp. References [1] P . W arden, “Speech commands: A dataset for limited-vocab ulary speech recognition, ” arXiv preprint arXiv:1804.03209 , 2018. [2] T . N. Sainath and C. Parada, “Conv olutional neural networks for small-footprint ke yword spotting, ” in Sixteenth Annual Confer ence of the International Speech Communication Association , 2015. [3] R. T ang and J. Lin, “Deep residual learning for small-footprint keyword spotting, ” arXiv preprint arXiv:1710.10361 , 2017. [4] A. W ong, M. J. Shafiee, B. Chwyl, and F . Li, “Ferminets: Learning generative machines to generate efficient neural netw orks via generativ e synthesis, ” arXiv pr eprint arXiv:1809.05989 , 2018. [5] R. T ang and J. Lin, “Honk: A pytorch reimplementation of con volutional neural networks for keyword spotting, ” arXiv pr eprint arXiv:1710.06554 , 2017. [6] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition, ” in Pr oceedings of the IEEE confer ence on computer vision and pattern recognition , 2016, pp. 770–778. [7] A. G. Howard, M. Zhu, B. Chen, D. Kalenichenko, W . W ang, T . W eyand, M. Andreetto, and H. Adam, “Mobilenets: Efficient con volutional neural networks for mobile vision applications, ” arXiv preprint arXiv:1704.04861 , 2017. [8] M. Sandler , A. Howard, M. Zhu, A. Zhmoginov , and L.-C. Chen, “Mobilenetv2: Inv erted residuals and linear bottlenecks, ” in Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , 2018, pp. 4510–4520. [9] A. W ong, “Netscore: T ow ards universal metrics for large-scale performance analysis of deep neural networks for practical usage, ” arXiv pr eprint arXiv:1806.05512 , 2018. [10] P . W arden, “Launching the speech commands dataset, ” in Google Researc h Blog , 2017. 1 https://research.googleblog.com/2017/08/ launching-speech-commands-dataset.html 4

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment