Characterizing machine learning process: A maturity framework

Academic literature on machine learning modeling fails to address how to make machine learning models work for enterprises. For example, existing machine learning processes cannot address how to define business use cases for an AI application, how to convert business requirements from offering managers into data requirements for data scientists, how to continuously improve AI applications in terms of accuracy and fairness, or how to customize general-purpose machine learning models with industry-, domain-, and use-case-specific data to make them more accurate for specific situations. Making AI work for enterprises requires special considerations, tools, methods, and processes. In this paper we present a maturity framework for machine learning model lifecycle management for enterprises. Our framework is a re-interpretation of the software Capability Maturity Model (CMM) for the machine learning model development process. We present a set of best practices from our personal experience of building large-scale real-world machine learning models to help organizations achieve higher levels of maturity independent of their starting point.

💡 Research Summary

The paper “Characterizing machine learning process: A maturity framework” proposes a comprehensive maturity model for managing the lifecycle of machine learning (ML) models in enterprise settings. Recognizing that academic ML research focuses mainly on algorithmic development and neglects the practical challenges faced by businesses, the authors reinterpret the classic Software Capability Maturity Model (CMM) for the ML domain. They argue that traditional software development processes are deterministic, whereas ML models are probabilistic, data‑driven, and require continuous monitoring for accuracy, fairness, transparency, and explainability. Consequently, a new set of roles, tools, and governance mechanisms is needed.

The authors first contrast traditional software development with ML model development, highlighting differences in data handling (structured vs. unstructured, multi‑stage pipelines), the need for data acquisition, annotation, quality checks, and augmentation, and the emergence of ethical concerns such as bias and explainability. They then identify concrete enterprise‑level gaps: defining business use cases, translating business requirements into data requirements, continuously improving model performance and fairness, and customizing generic models with domain‑specific data.

To address these gaps, the paper outlines an eight‑step ML service lifecycle, each associated with a dedicated role:

- Model Goal Setting and Offering Management – An Offering Manager defines business goals, performance thresholds, fairness criteria, and service‑level agreements, and monitors model performance across versions.

- Content Management Strategy – A Content Manager identifies legal data sources, negotiates contracts, manages budgets, and ensures data lineage.

- Data Pipeline – A Data Lead oversees data collection, labeling, quality assurance, augmentation, and lineage tracking.

- Feature Preparation – Feature engineers create domain‑specific tokenizers, parsers, phonetic dictionaries, etc., depending on the service (e.g., NLU or speech‑to‑text).

- Model Training – A Training Lead selects algorithms, frameworks, and hyper‑parameters, conducts experiments, and performs error analysis.

- Testing and Benchmarking – A Test Lead evaluates the model against internal test sets and competitor services, and conducts detailed error analysis.

- Model Deployment – A Deployment Lead decides on infrastructure sizing, containerization, and production‑grade packaging.

- AI Operations Management – An AI‑Ops team monitors deployed models for drift, bias, and performance degradation, and orchestrates continuous retraining cycles.
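The eight steps and their owners can be captured as a small data structure, which may help when wiring the lifecycle into tooling. The following is a minimal sketch, not the authors' implementation: the names `LifecycleStep`, `LIFECYCLE`, and `next_step` are hypothetical, and the wrap-around from the last step back to the first is an assumption about how the iterative loop closes.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class LifecycleStep:
    """One stage of the ML service lifecycle and the role that owns it."""
    name: str
    owner: str


# Step names and owners follow the list above; the structure is illustrative.
LIFECYCLE: List[LifecycleStep] = [
    LifecycleStep("Model Goal Setting and Offering Management", "Offering Manager"),
    LifecycleStep("Content Management Strategy", "Content Manager"),
    LifecycleStep("Data Pipeline", "Data Lead"),
    LifecycleStep("Feature Preparation", "Feature Engineers"),
    LifecycleStep("Model Training", "Training Lead"),
    LifecycleStep("Testing and Benchmarking", "Test Lead"),
    LifecycleStep("Model Deployment", "Deployment Lead"),
    LifecycleStep("AI Operations Management", "AI-Ops Team"),
]


def next_step(current: str, feedback_to: Optional[str] = None) -> LifecycleStep:
    """Return the step that follows `current`.

    If `feedback_to` names the current or an earlier step, return that step
    instead, modelling a feedback loop (for example, error analysis sending
    work back to the data pipeline).
    """
    names = [step.name for step in LIFECYCLE]
    index = names.index(current)
    if feedback_to is not None:
        target = names.index(feedback_to)
        if target > index:
            raise ValueError("feedback must point to the current or an earlier step")
        return LIFECYCLE[target]
    # Assumption: after AI operations, the cycle restarts at goal setting.
    return LIFECYCLE[(index + 1) % len(LIFECYCLE)]
```

For instance, `next_step("Testing and Benchmarking", feedback_to="Data Pipeline")` hands control back to the Data Lead rather than moving on to deployment.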

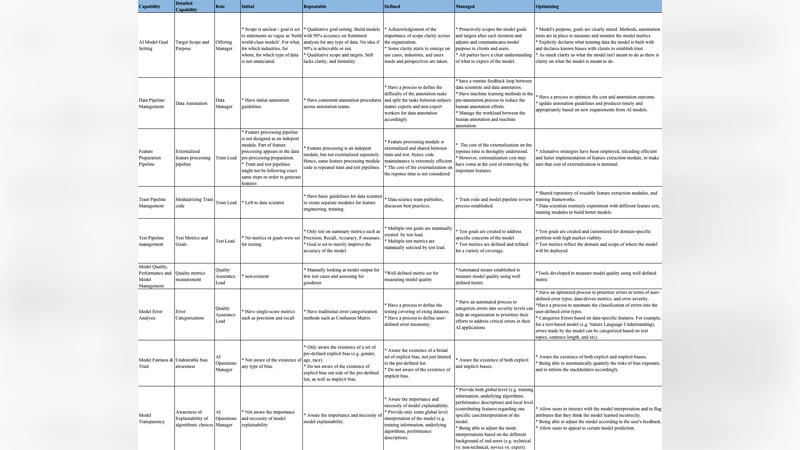

Each step is iterative, feeding back into earlier stages to enable continuous improvement. The authors map these steps onto the five CMM levels—Initial, Repeatable, Defined, Managed, Optimizing—defining the characteristics, artifacts, and recommended tools for each level. For example, at the Managed level, organizations should have quantitative KPIs (e.g., model accuracy improvement rate, data labeling quality scores) and automated fairness checks; at the Optimizing level, they should employ advanced MLOps platforms, automated drift detection, and a culture of systematic experimentation.
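To make the Managed-level idea of quantitative KPIs and automated fairness checks concrete, here is a minimal sketch of a release gate. It is an illustration under stated assumptions rather than anything the paper specifies: the function names, the choice of demographic parity gap as the fairness metric, and the default thresholds (`min_accuracy=0.8`, `max_gap=0.1`) are all hypothetical.

```python
from typing import Dict, Hashable, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def demographic_parity_gap(y_pred: Sequence[int], groups: Sequence[Hashable]) -> float:
    """Largest difference in positive-prediction rate between any two groups.

    Predictions are assumed to be binary (0 or 1).
    """
    rates: Dict[Hashable, float] = {}
    for group in set(groups):
        preds = [p for p, g in zip(y_pred, groups) if g == group]
        rates[group] = sum(preds) / len(preds)
    return max(rates.values()) - min(rates.values())


def release_gate(
    y_true: Sequence[int],
    y_pred: Sequence[int],
    groups: Sequence[Hashable],
    min_accuracy: float = 0.8,  # hypothetical KPI threshold
    max_gap: float = 0.1,       # hypothetical fairness threshold
) -> bool:
    """Pass only if both the accuracy KPI and the fairness check are met."""
    return (
        accuracy(y_true, y_pred) >= min_accuracy
        and demographic_parity_gap(y_pred, groups) <= max_gap
    )
```

A gate like this could run on every candidate model version, so that a version that improves accuracy but widens the gap between groups is blocked automatically instead of relying on manual review.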

The related‑work section surveys software CMM, big‑data maturity models, and knowledge‑discovery processes, positioning this paper as the first to adapt a maturity framework specifically for ML pipelines. The authors provide a brief excerpt of a questionnaire for assessing maturity in an appendix, suggesting a practical way for organizations to self‑evaluate.
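A questionnaire-based self-assessment could be scored along the following lines. This is a hedged sketch only: the paper's actual questions and scoring rule are not reproduced here, and the staged rule used below (an organization holds the highest level for which every practice at that level and all lower levels is in place) is an assumption borrowed from how staged maturity models are commonly read. The names `MaturityLevel` and `assess` are hypothetical.

```python
from enum import IntEnum
from typing import Dict, List


class MaturityLevel(IntEnum):
    """The five CMM levels named in the summary."""
    INITIAL = 1
    REPEATABLE = 2
    DEFINED = 3
    MANAGED = 4
    OPTIMIZING = 5


def assess(answers: Dict[MaturityLevel, List[bool]]) -> MaturityLevel:
    """Return the highest level whose practices are all satisfied.

    `answers` maps a level to yes/no responses for that level's questions.
    Every organization is at least INITIAL; a level with no answers, or
    with any "no", stops the climb.
    """
    achieved = MaturityLevel.INITIAL
    for level in sorted(MaturityLevel):
        if level is MaturityLevel.INITIAL:
            continue
        responses = answers.get(level, [])
        if responses and all(responses):
            achieved = level
        else:
            break
    return achieved


# Example with made-up responses: one Managed-level practice is missing.
example = {
    MaturityLevel.REPEATABLE: [True, True],
    MaturityLevel.DEFINED: [True],
    MaturityLevel.MANAGED: [True, False],
    MaturityLevel.OPTIMIZING: [True],
}
print(assess(example).name)
```

Under this rule, satisfying Optimizing-level practices does not raise the score while a Managed-level practice is still missing, which reflects the staged reading assumed above.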

In the conclusion, the paper claims that adopting the proposed framework can bridge the gap between business objectives and technical execution, improve model quality and fairness, and reduce operational risk. It also outlines future work: gathering empirical case studies, defining quantitative metrics for each maturity level, and integrating the framework with existing ITSM/DevOps processes.

Overall, the contribution is a structured, role‑based, and stage‑wise maturity model that translates the abstract concept of “ML lifecycle management” into actionable practices for enterprises. Its strengths lie in the clear mapping of CMM levels to ML-specific activities and the detailed role definitions. However, the paper lacks real‑world validation, quantitative evidence of benefits, and guidance on tailoring the model to organizations of different sizes. Adding case studies, KPI definitions, and integration pathways with existing governance frameworks would strengthen its practical impact.
