Fast Path Localization on Graphs via Multiscale Viterbi Decoding

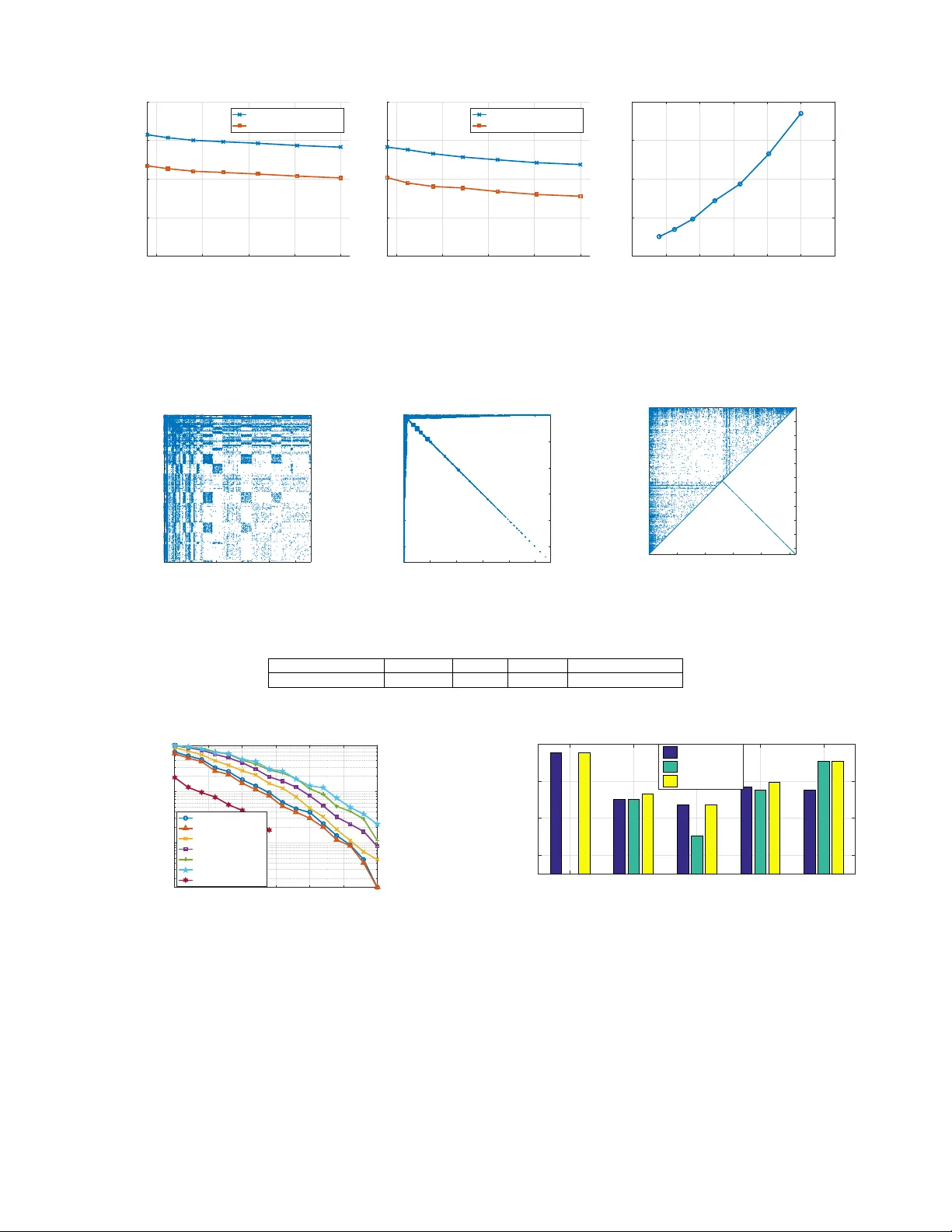

We consider the problem of localizing a path-signal that evolves over time on a graph. A path-signal can be viewed as the trajectory of a moving agent on a graph over several consecutive time points. Combining dynamic programming and graph partitioning, …
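The abstract is truncated, so the following is only a hedged illustration of the dynamic-programming ingredient it names: plain, single-scale Viterbi decoding of a path on a graph. It does not implement the graph-partitioning or multiscale parts of the paper. The model is an assumption: at each time step the agent either stays put or moves to a neighbor, `obs[t][v]` is a per-node observation score at time `t`, and the decoded path maximizes the summed score. The names `viterbi_path`, `adj`, and `obs` are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple


def viterbi_path(
    adj: Dict[int, List[int]],
    obs: Sequence[Sequence[float]],
) -> Tuple[float, List[int]]:
    """Hypothetical sketch: best path under a stay-or-move-to-neighbor model.

    adj[u] lists the neighbors of node u (nodes are 0..n-1).
    obs[t][v] is the observed score of node v at time t.
    Returns (total score, node sequence) maximizing sum_t obs[t][path[t]].
    """
    n = len(obs[0])
    # score[v]: best total score of any feasible path ending at v at the current time
    score = list(obs[0])
    back: List[List[int]] = []  # back[t-1][v]: predecessor of v at time t on the best path
    for t in range(1, len(obs)):
        new_score = [float("-inf")] * n
        ptr = [-1] * n
        for u in range(n):
            # Assumed transition model: stay at u or move to any neighbor of u
            for v in [u, *adj.get(u, [])]:
                cand = score[u] + obs[t][v]
                if cand > new_score[v]:
                    new_score[v] = cand
                    ptr[v] = u
        score = new_score
        back.append(ptr)
    # Backtrack from the best final node to recover the full path
    end = max(range(n), key=lambda v: score[v])
    path = [end]
    for ptr in reversed(back):
        path.append(ptr[path[-1]])
    path.reverse()
    return score[end], path


# Usage: a path graph 0-1-2-3 with an isolated spike at node 3 at t = 1
adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
obs = [
    [5.0, 0.0, 0.0, 0.0],
    [0.0, 4.0, 0.0, 6.0],
    [0.0, 0.0, 3.0, 0.0],
]
print(viterbi_path(adj, obs))  # (12.0, [0, 1, 2])
```

The spike at node 3 is ignored because jumping from node 0 to node 3 in one step is infeasible under the assumed model. Each step of this exhaustive recursion touches every node and edge, so its cost is O(T(|V| + |E|)) for T time points.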

Authors: Yaoqing Yang, Siheng Chen, Mohammad Ali Maddah-Ali