PerformanceNet: Score-to-Audio Music Generation with Multi-Band Convolutional Residual Network

Music creation is typically composed of two parts: composing the musical score, and then performing the score with instruments to make sounds. While recent work has made much progress in automatic music generation in the symbolic domain, few attempts…

Authors: Bryan Wang, Yi-Hsuan Yang

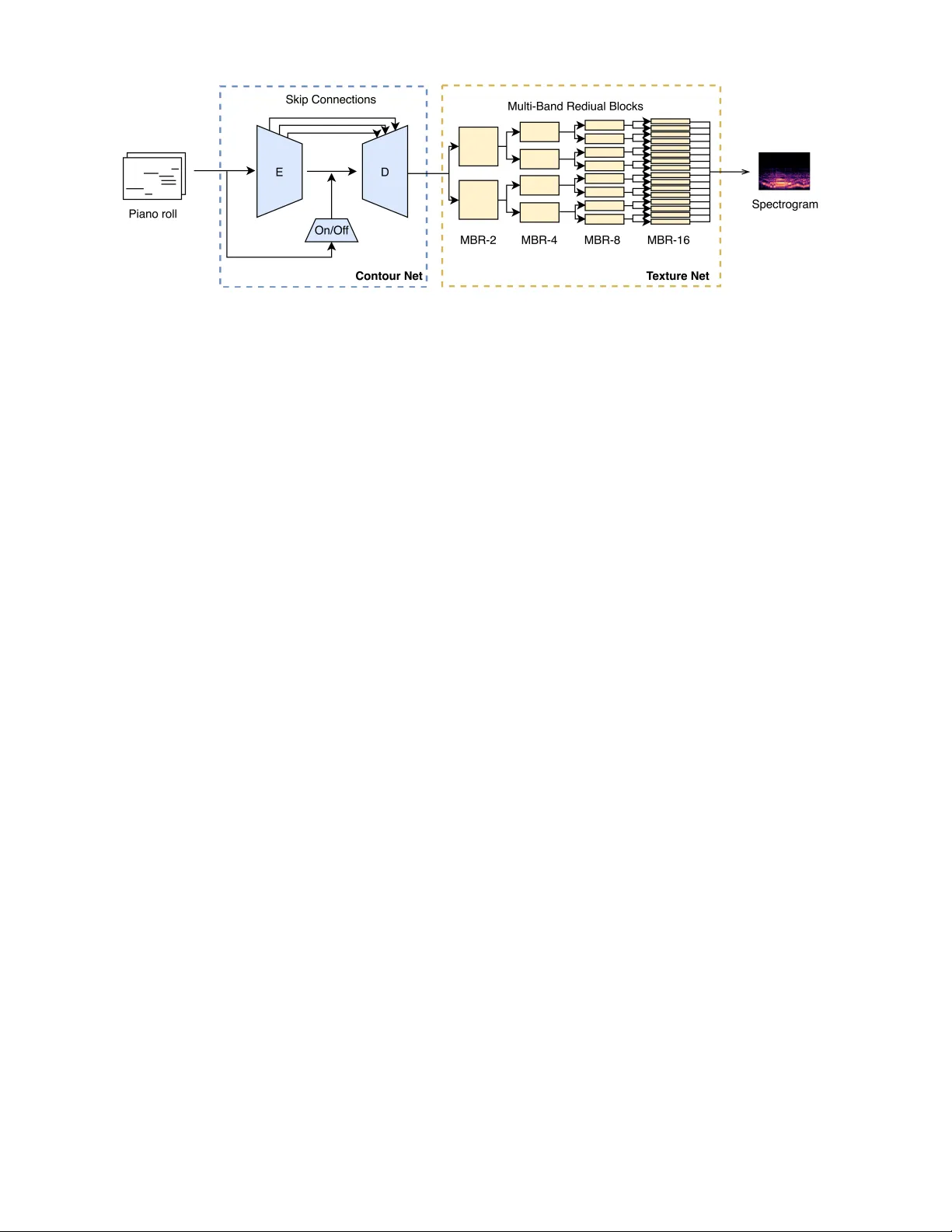

Bryan Wang and Yi-Hsuan Yang
Research Center for Information Technology Innovation, Academia Sinica, Taipei, Taiwan
{bryanw, yang}@citi.sinica.edu.tw

Abstract

Music creation is typically composed of two parts: composing the musical score, and then performing the score with instruments to make sounds. While recent work has made much progress in automatic music generation in the symbolic domain, few attempts have been made to build an AI model that can render realistic music audio from musical scores. Directly synthesizing audio with sound sample libraries often leads to mechanical and deadpan results, since musical scores do not contain performance-level information, such as subtle changes in timing and dynamics. Moreover, while the task may sound like a text-to-speech synthesis problem, there are fundamental differences since music audio has rich polyphonic sounds. To build such an AI performer, we propose in this paper a deep convolutional model that learns in an end-to-end manner the score-to-audio mapping between a symbolic representation of music called the pianorolls and an audio representation of music called the spectrograms. The model consists of two subnets: the ContourNet, which uses a U-Net structure to learn the correspondence between pianorolls and spectrograms and to give an initial result; and the TextureNet, which further uses a multi-band residual network to refine the result by adding the spectral texture of overtones and timbre. We train the model to generate music clips of the violin, cello, and flute, with a dataset of moderate size. We also present the result of a user study that shows our model achieves higher mean opinion score (MOS) in naturalness and emotional expressivity than a WaveNet-based model and two off-the-shelf synthesizers.
We open our source code at https://github.com/bwang514/PerformanceNet

Introduction

Music is a universal language used to express emotions and feelings beyond words. We can easily enumerate a number of commonalities between music and language. For example, the musical score and text are both symbolic transcripts of their analog counterparts, music and speech, which manifest themselves as sounds organized in time.

However, a fundamental difference that distinguishes music from every other language is that the essence of music exists mainly in its audio performance (Widmer 2016). Humans can comprehend the meaning of language by reading text. But we cannot fully understand the meaning of a music piece by merely reading its musical score. Music performance is necessary to give music meanings and feelings. In addition, a musical score only specifies which notes to play, not how to play them. Musicians can leverage this freedom to interpret the score and add expressiveness in their own ways, to render unique pieces of sound and to "bring the music to life" (Raphael 2009).

Copyright © 2019, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

The space left for human interpretation gives music its aesthetic idiosyncrasy, but it also makes automated music synthesis, the process of converting a musical score to audio by machine, a challenging task. While the modern music production industry has developed sophisticated digital systems for music synthesis, using the default synthesis parameters still often leads to deadpan results. One has to fine-tune the synthesis parameters for each individual note, sometimes with laborious trial-and-error experiments, to produce a realistic music clip (Riionheimo and Välimäki 2003). These parameters include dynamics attributes such as velocities and timing, as well as timbre attributes such as effects.
This requires a great amount of time and domain expertise.

One approach to automate the fine-tuning process is to train a machine learning model to predict the expressive parameters for the synthesizers (Macret and Pasquier 2014). However, such an auto-parameterization approach may work better for instruments that use relatively simple mechanisms to produce sounds, e.g., striking instruments such as the piano and drums. Instruments that produce sounds with more complicated methods, such as the bowed string instruments and the aerophones, are harder to parameterize comprehensively with pre-defined attributes.

To address this problem, we propose to directly model the end-to-end mapping between musical scores and their audio realizations with a deep neural network, forcing the model to learn on its own which audio attributes are important and how to fine-tune them. We believe that this is a core task in building an "AI performer": learning how a human performer renders a musical score into audio. Other important tasks include modeling the style and mental processes of the human performers (e.g., the emotion they try to express), and using these personal attributes to condition the audio generation process. We attempt to learn only the score-to-audio mapping here, leaving the ability to condition the generation as future work.

Figure 1: The spectrogram of a music clip (right) and its corresponding pianoroll (left).

Text-to-speech (TTS) generation has been widely studied in the literature, with WaveNet and its variants being the state of the art (Oord et al. 2016; Shen et al. 2018). However, music is different from speech in that music is polyphonic (while speech is monophonic), and in that the musical score can be viewed as a time-frequency representation (while the text transcript for speech cannot). Since music is polyphonic, we cannot directly apply a TTS model to score-to-audio generation.
But, since the score contains time-frequency information, we can exploit this property to design a new model for our task.

A musical score can be viewed as a time-frequency representation when it is represented by pianorolls (Dong, Hsiao, and Yang 2018). As exemplified in Figure 1, a pianoroll is a binary, scoresheet-like matrix representing the presence of notes over different time steps for a single instrument. When there are multiple instruments, we can extend it to a tensor, leading to a multitrack pianoroll, i.e., a set of pianorolls, one for each track (instrument). On the other hand, we can represent an audio signal by the spectrogram, a real-valued matrix representing the spectrum of frequencies of sounds over time. Figure 1 shows the close correspondence between a (single-track) pianoroll and its spectrogram: the presence of a note in the pianoroll gives rise to a harmonic series (i.e., the fundamental frequency and its overtones) in the spectrogram.

Given paired data of scores and audio, we can exploit this correspondence to build a deep convolutional network that converts one matrix (i.e., the pianoroll) into another matrix (i.e., the spectrogram), to realize score-to-audio generation. This is the core idea of the proposed method.

A straightforward implementation of the above idea, however, does not work well in practice, because the pianorolls are much smaller than the spectrograms in size. The major technical contribution of the paper is a new model that combines convolutional encoders/decoders and multi-band residual blocks to address this issue. We present the details of the proposed model in the Methodology section.

To the best of our knowledge, this work represents the first attempt to achieve score-to-audio generation using fully convolutional neural networks. Compared to WaveNet, the proposed model is less data-hungry and is much faster to train.
For evaluation, we train our model to generate the sounds of three different instruments and conduct a user study. The ratings from 156 participants show that our model performs better than a WaveNet-based model (Manzelli et al. 2018) and two off-the-shelf synthesizers in the mean opinion scores of naturalness and emotional expressivity.

Figure 2: Illustration of the encoder/decoder network for different tasks: (a) score-to-score model, (b) audio-to-audio model, (c) score-to-audio model, (d) AI composer + AI performer. (Notation: E, D, z, and c denote the encoder, decoder, latent code, and condition code, respectively.)

Background

Music Generation

Algorithmic composition of music has been studied for decades. It has garnered rapidly increasing attention in recent years along with the resurgence of AI research. Many deep learning models have been proposed to build an AI composer. Among existing works, MelodyRNN (Waite et al. 2016) and DeepBach (Hadjeres, Pachet, and Nielsen 2017) are arguably the most well-known. They claim that they can generate realistic melodies and Bach chorales, respectively. Follow-up research has been made to generate duets (Roberts et al. 2016), lead sheets (Roberts et al. 2018), multitrack pianorolls (Dong et al. 2018; Simon et al. 2018), or lead sheet arrangement (Liu and Yang 2018), to name a few. Many of these models are based on either generative adversarial networks (GAN) (Goodfellow et al. 2014) or variational autoencoders (VAE) (Kingma and Welling 2014).

Although exciting progress is being made, we note that the majority of recent work on music generation concerns only symbolic-domain data, not the audio performance. There are attempts to generate melodies with performance attributes, such as the PerformanceRNN (Simon and Oore 2017), but that work is still in the symbolic domain and concerns only the piano.
Broadly speaking, such score-to-score models involve learning the latent representation (or latent code) of symbolic scores, as illustrated in Figure 2(a).

A few attempts have been made to generate musical audio. A famous example is NSynth (Engel et al. 2017), which uses VAE to learn a latent space of musical notes from different instruments. The model can create new sounds by interpolating the latent codes. There are some other models that are based on WaveNet (Dieleman, Oord, and Simonyan 2018) or GAN (Donahue, McAuley, and Puckette 2018; Wu et al. 2018). Broadly speaking, we can illustrate such audio-to-audio models with Figure 2(b).

The topic addressed in this paper is expressive music audio generation (or synthesis) from musical scores, which involves a score-to-audio mapping illustrated in Figure 2(c). Expressive music synthesis has a long tradition of research (Widmer and Goebl 2004), but few attempts have been made to apply deep learning to score-to-audio generation for arbitrary instruments. For example, (Hawthorne et al. 2018) deals with the piano only. One notable exception is the work presented by (Manzelli et al. 2018), which uses WaveNet to render pianorolls. However, WaveNet is a general-purpose model for generating any sounds. We expect that our model can perform better, since it is designed for music.

Figure 2(d) shows that the score-to-score symbolic music generation model (dubbed the AI composer) can be combined with the score-to-audio generation model (dubbed the AI performer) to mimic the way human beings generate music. This figure also shows that, besides the latent codes, we can add the so-called "condition codes" (Radford, Metz, and Chintala 2016) to condition the generation process. For the symbolic domain, such conditions can be the target musical genre.
For the audio domain, such conditions can be some "personal attributes" of the AI performer, such as its personality, style, or the emotion it intends to express or convey in the performance. We treat the realization of such a complete model as future work.

Deep Encoder/Decoder Networks

We adopt a deep encoder/decoder network structure in this work for score-to-audio generation. We present some basics of such a network structure below.

The most fundamental and well-known encoder/decoder architecture is the autoencoder (AE) (Masci et al. 2011). In an AE, the input and target output are the same. The goal is to compress the input into a low-dimensional latent code, and then uncompress the latent code to reconstruct the original input, as shown in Figures 2(a) and (b). The data compression is achieved with a stack of downsampling layers known collectively as the encoder, and the uncompression is made with a few upsampling layers, the decoder. The encoder and decoder can be implemented with fully-connected, convolutional, or recurrent layers. When the input is a noisy version of the target output, the network is called a denoising AE. When we further regularize the distribution of the latent code to follow a Gaussian distribution (which facilitates sampling and accordingly data generation), the network is called a VAE (Kingma and Welling 2014).

A widely-used design for an encoder/decoder network is to add links between layers of the encoder and the decoder, by concatenating the output of an encoding layer to that of a decoding layer. While training the model, the gradients can be propagated directly through such skip connections. This helps mitigate the vanishing gradient problem and helps train deeper networks. An encoder/decoder network that has skip connections is also referred to as a U-net (Ronneberger, Fischer, and Brox 2015).
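
To make the data flow of skip connections concrete, the following is a toy sketch in plain Python, not the authors' implementation. It assumes feature maps stored as `[channel][time]` nested lists, and it replaces the learned layers with fixed stand-ins: `downsample` (pairwise averaging) stands in for a strided convolution and `upsample` (frame repetition) for a transposed convolution. All function names are hypothetical. Only the shapes and the concatenation pattern are meant to be illustrative.

```python
# Toy 1-D feature maps stored as [channel][time] nested lists.

def downsample(x):
    """Halve the time axis by averaging neighbouring frames
    (a stand-in for a learned strided convolution)."""
    return [[(ch[i] + ch[i + 1]) / 2 for i in range(0, len(ch) - 1, 2)]
            for ch in x]

def upsample(x):
    """Double the time axis by repeating every frame
    (a stand-in for a learned transposed convolution)."""
    return [[v for v in ch for _ in range(2)] for ch in x]

def concat_channels(a, b):
    """Skip connection: stack two feature maps along the channel axis.
    Both maps must have the same time length."""
    assert len(a[0]) == len(b[0]), "time lengths must match"
    return a + b

def shape(x):
    """Return (channels, time steps)."""
    return (len(x), len(x[0]))

# Input with 2 channels and 8 time steps.
x = [[0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0],
     [1.0, 1.0, 0.0, 0.0, 1.0, 1.0, 0.0, 0.0]]

e1 = downsample(x)                      # encoder level 1: (2, 4)
z = downsample(e1)                      # bottleneck:      (2, 2)
d1 = concat_channels(upsample(z), e1)   # decoder sees e1 directly: (4, 4)
d0 = concat_channels(upsample(d1), x)   # decoder sees the input:   (6, 8)

print(shape(e1), shape(z), shape(d1), shape(d0))
```

In a real U-net, each concatenation would be followed by further learned layers that mix the stacked channels; the sketch omits them so that the path from encoder to decoder is easy to follow.
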
Methodology

Overview of the Proposed PerformanceNet Model

A main challenge of score-to-audio generation using a convolutional network is that the pianorolls are smaller than the spectrograms in size. For example, in our implementation, the size of a (single-track) pianoroll is 128 × 860, whereas the size of the corresponding spectrogram is 1,025 × 860. Moreover, a pianoroll is a binary-valued matrix with few 1's (i.e., it is fairly sparse), whereas a spectrogram is a dense, real-valued matrix.

To make an analogy to computer vision problems, learning the mapping between pianorolls and spectrograms can be thought of as an image-to-image translation task (Gatys, Ecker, and Bethge 2016; Liu, Breuel, and Kautz 2017), which aims to map a sketch-like binary matrix to an image-like real-valued matrix. On the other hand, the challenges involved in converting a low-dimensional pianoroll to a high-dimensional spectrogram can also be found in image super-resolution problems (Dong et al. 2014; Ledig et al. 2017). In other words, we need to address image-to-image translation and image super-resolution at the same time.

To deal with this challenge, we propose a new network architecture that consists of two subnets, one for image-to-image translation and the other for image super-resolution. The model architecture is illustrated in Figure 3.

• The first subnet, dubbed the ContourNet, deals with the image-to-image translation part and aims to generate an initial result. It uses a convolutional encoder/decoder structure to learn the correspondence between pianorolls and spectrograms shown in Figure 1.
• The second subnet, dubbed the TextureNet, deals with image super-resolution and aims to enhance the quality of the initial result by adding the spectral texture of overtones and timbre.
• After TextureNet, we use the classic Griffin-Lim algorithm (Griffin and Lim 1984) to estimate the phase of the spectrogram and then create the audio waveforms.

Specifically, motivated by the success of the progressive generative adversarial network (PGGAN) in generating high-resolution images (Karras et al. 2018), we attempt to improve the resolution of the generated spectrogram layer by layer in the TextureNet with a few residual blocks (He et al. 2016). However, unlike PGGAN, we use a multi-band design in the TextureNet and aim to progressively improve only the frequency resolution (but not the temporal resolution). This design is based on the assumption that the ContourNet has learned the temporal characteristics of the target and hence the TextureNet can focus on improving the spectral texture of overtones. Such a multi-band design has recently been shown effective for blind audio source separation (Takahashi and Mitsufuji 2017).

Moreover, to further encode the exact timing and duration of the musical notes, we add an additional encoder whose output is connected to the bottleneck layer of the ContourNet to provide the onset and offset information of the pianorolls.

Figure 3: System diagram of the proposed model architecture for score-to-audio generation. (Notation: On/Off and MBR-k denote the onset/offset encoder and a multi-band residual block that divides a (log-scaled) spectrogram into k bands, respectively.)

In what follows, we present the details of the two component subnets, the ContourNet and the TextureNet.

Data Representation

The input data of our model is a pianoroll for an instrument, and the target output is the spectrogram of the corresponding audio clip. A pianoroll is a binary matrix representing the presence of notes over time. It can be derived from a MIDI file (Dong, Hsiao, and Yang 2018). We consider N = 128 notes for the pianorolls in this work.
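
As an illustration of this representation, the sketch below builds a binary N × T pianoroll from a list of notes. It is a minimal example rather than the pipeline used in the paper, which converts MIDI files with the pypianoroll package. The `(pitch, start_step, end_step)` tuple format and the function name `notes_to_pianoroll` are assumptions made for this example.

```python
N_PITCHES = 128  # number of notes (MIDI pitches) considered in the paper

def notes_to_pianoroll(notes, n_steps):
    """Build a binary N_PITCHES x n_steps pianoroll.

    `notes` is a list of (pitch, start_step, end_step) tuples with an
    exclusive end step. This tuple format is an assumption made for
    illustration; it is not taken from the paper.
    """
    roll = [[0] * n_steps for _ in range(N_PITCHES)]
    for pitch, start, end in notes:
        # Clip the note to the time range covered by the roll.
        for t in range(max(start, 0), min(end, n_steps)):
            roll[pitch][t] = 1
    return roll

# Three notes (C4, E4, G4), two of them overlapping in time.
notes = [(60, 0, 4), (64, 2, 6), (67, 6, 8)]
roll = notes_to_pianoroll(notes, n_steps=8)

print(roll[60])  # row of pitch 60
print(roll[64])  # row of pitch 64
```

Each row corresponds to one pitch and each column to one time step, so overlapping notes simply activate several rows in the same column.
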
The spectrogram of an audio clip, on the other hand, is the magnitude part of its short-time Fourier transform (STFT). Its size depends on the window size and hop size of the STFT, and on the length of the audio clip. In our implementation, the pianorolls and spectrograms are time-aligned. When the size of a pianoroll is N × T, the size of the corresponding spectrogram is F × T, where F denotes the number of frequency bins in the STFT. Typically, N ≪ F. Since a pianoroll may have variable length, we adopt a fully-convolutional design (Oquab et al. 2015) and use no pooling layer at all in our network. Yet, for the convenience of training the models with mini-batches, in the training stage we cut the waveforms in the training set into fixed-length chunks of the same T.

ContourNet

Convolutional U-net with an Asymmetric Structure

As said, we use a convolutional encoder/decoder structure for the ContourNet. Specifically, as illustrated in Figure 3, we add skip connections between the encoder ('E') and the decoder ('D') to make it a U-net structure. We find that such a U-net structure works well in learning the correspondence between a pianoroll and a spectrogram. In particular, it helps the encoder pass to the decoder information regarding the pitch and duration of notes, which would otherwise be lost or vague in the latent code. Such detailed pitch information is needed to render coherent music audio.

Moreover, since the dimensions of the pianorolls and spectrograms do not match, we adopt an asymmetric design for the U-net so as to increase the frequency dimension gradually from N to F. Specifically, we use a U-Net with a depth of 5 layers. In the encoder, we use 1D convolutional filters to compress the input pianoroll along the time axis and gradually increase the number of channels (i.e., the number of feature maps) by a factor of 2 per layer, from N = 128 up to 4,096 to reach the bottleneck layer. In the decoder, we use 1D deconvolutional filters to gradually uncompress the latent code along the time axis, but decrease the number of channels from 4,096 to F. Once the number of channels is equal to F for a layer in the decoder, we no longer decrease the number of channels for the subsequent layers, to ensure the output has the frequency dimension we desire.

Onset/offset Encoder

Though the model presented above can already generate non-noisy, music-like audio, in our pilot studies on generating cello sounds, we find that it tends to perform legato for a note sequence, even though we can see clear boundaries or silent intervals between the notes in the pianoroll. In other words, this model always plays the notes smoothly and connected, suggesting that it has no sense of the offsets and does not know when to stop the notes.

To address this issue, we improve the model by adding an encoder to incorporate the onset and offset information of each note from the pianoroll. This encoder is represented by the block marked 'On/Off' in Figure 3. It is implemented by two convolutional layers. Its input is an 'onset/offset roll' we compute from the pianoroll: we use +1 to represent the time of an onset, −1 to represent the offset, and 0 otherwise. The onset/offset roll is even sparser than the pianoroll, but it makes onset/offset information explicit. Moreover, we also use skip connections here and concatenate the output of these two layers to the beginning layers of the U-Net decoder, to inform the decoder of the note boundaries during the early stage of spectrogram generation.

Our pilot study shows that the information of the note boundaries greatly helps the ContourNet to learn how to play a note.
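
The onset/offset roll described above can be sketched as follows. This is a minimal illustration, not the authors' code. The text does not specify which frame carries the −1, so the sketch assumes the offset is marked at the first silent frame after a note; a note that is still sounding in the last frame therefore receives no offset mark. The function name `onset_offset_roll` is hypothetical.

```python
def onset_offset_roll(pianoroll):
    """Compute an onset/offset roll from a binary pianoroll.

    +1 marks the first active frame of a note (onset), -1 marks the first
    silent frame after a note (offset; this placement is an assumption),
    and 0 is used everywhere else.
    """
    n_steps = len(pianoroll[0])
    out = [[0] * n_steps for _ in pianoroll]
    for p, row in enumerate(pianoroll):
        prev = 0
        for t, cur in enumerate(row):
            if cur and not prev:
                out[p][t] = 1      # silence -> note: onset
            elif prev and not cur:
                out[p][t] = -1     # note -> silence: offset
            prev = cur
    return out

# One pitch row containing three separate notes.
pianoroll = [[0, 1, 1, 0, 0, 1, 0, 1]]
print(onset_offset_roll(pianoroll))  # [[0, 1, 0, -1, 0, 1, -1, 1]]
```

Because a binary pianoroll cannot distinguish one long note from two notes repeated without a gap, this sketch treats any uninterrupted run of 1's as a single note.
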
When playing the violin, for example, the sound at the onset time of the note (i.e., when the bow contacts the string) is different from the sound for the rest of the time (i.e., during the time period the note is played). We can divide the sound of the note into three stages: it first starts with a thin timbre and low volume, gradually develops its velocity (i.e., energy) to the climax with an obvious bowing sound, and then vanishes. The onset/offset encoder marks these different stages and makes it easier for the decoder to learn the subtle dynamics of music performance.

Figure 4: Illustration of a multi-band residual (MBR) block in the proposed TextureNet. In each MBR block, the input spectrogram is split into a specific number of frequency bands. We then feed each band individually to identical sub-blocks consisting of the following five layers: 1D-convolution, instance norm (Ulyanov, Vedaldi, and Lempitsky 2017), leaky ReLU, 1D-convolution, and instance norm. The outputs of all the sub-blocks are then concatenated along the frequency dimension and summed with the input of the MBR block. We then pass the output to the next MBR block.

TextureNet

While the ContourNet alone can already generate audio with temporal dependency and coherence, it fails to generate the details of the overtones, leading to unnatural sounds with low audio quality. We attribute this to the fact that we use the same convolutional filters for all the frequency bins in the ContourNet. As a result, it cannot capture the various local spectral textures in different frequency bins. For instance, the sounds from the high-frequency bins may need to be sharper than those from the low-frequency bins.

To address this issue, we propose a progressive multi-band residual learning method to better capture the spectral features of musical overtones.
The basic idea is to divide the F × T spectrogram along the frequency axis into several smaller matrices of size F′ × T, each corresponding to a frequency band. To learn the different characteristics in different bands, we use different convolutional filters for different bands, and finally concatenate the results from the different bands to recover the original size. Instead of doing this multi-band processing once, we do it progressively: we divide the spectrogram into fewer bands in the earlier layers of the TextureNet, and into more bands in the later layers, as Figure 3 shows. In this way, we can gradually improve the result at different frequency resolutions.

Specifically, similar to PGGAN (Karras et al. 2018), we train different layers of the TextureNet with residual learning (He et al. 2016). As illustrated in Figure 4, this is implemented by adding a skip connection between the input and output of a multi-band residual block (MBR), and using element-wise addition to combine the input with the result of the band-wise processing inside an MBR. With this skip connection, the model may choose to ignore the band-wise processing inside an MBR. Consequently, we can use several MBRs for progressive learning, with each MBR dividing the spectrogram into a certain number of bands.

We argue that the proposed design has the following two advantages. First, compared to separately generating the frequency bands, residual learning reduces the divergence among the generated bands, since the result of the band-wise processing inside an MBR is integrated with the original input of the MBR, which has stronger coherence across the whole spectrogram. Second, by progressively dividing the spectrogram into smaller bands, the receptive field of each band becomes smaller. This helps the convolutional kernels in the TextureNet capture the spectral features first from a wider range and then from a narrower range.
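
The structure of a multi-band residual block can be sketched in plain Python as follows. This is a hedged illustration of the split, per-band processing, concatenation, and residual sum only. The function names are hypothetical, the near-equal band boundaries are an assumption (the text does not say how band edges are chosen), and `scale` is a trivial stand-in for the learned sub-block of convolution, instance norm, and leaky ReLU layers.

```python
def split_bands(spec, k):
    """Split the rows (frequency bins) of spec into k contiguous bands
    of near-equal size. The boundary rule is an assumption."""
    f = len(spec)
    bounds = [i * f // k for i in range(k + 1)]
    return [spec[bounds[i]:bounds[i + 1]] for i in range(k)]

def mbr_block(spec, band_fns):
    """Multi-band residual block: process each band with its own function,
    concatenate along frequency, then add the block input element-wise."""
    bands = split_bands(spec, len(band_fns))
    processed = [fn(band) for fn, band in zip(band_fns, bands)]
    merged = [row for band in processed for row in band]
    return [[a + b for a, b in zip(row_in, row_out)]
            for row_in, row_out in zip(spec, merged)]

def scale(c):
    """Stand-in for a band's learned sub-block: multiply every value by c."""
    return lambda band: [[c * v for v in row] for row in band]

# A 4 x 2 'spectrogram' (4 frequency bins, 2 time steps).
spec = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]

# Two bands: the low band contributes nothing (the block is effectively
# ignored there), the high band adds a copy of itself to the input.
out = mbr_block(spec, [scale(0.0), scale(1.0)])
print(out)  # [[1.0, 2.0], [3.0, 4.0], [10.0, 12.0], [14.0, 16.0]]

# Progressive use: a later block splits the result into more bands.
out2 = mbr_block(out, [scale(0.0)] * 4)
```

The low band in the example shows the property noted above: when the band-wise processing outputs zeros, the residual sum passes the input through unchanged, so a block can effectively be skipped.
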
The coarse-to-fine process is analogous to the way human painters paint: oftentimes, painters first draw a coarse sketch, then gradually add the details and put on the colors layer by layer.

We note that the idea of multi-band processing has been shown effective by (Takahashi and Mitsufuji 2017) for blind audio source separation, whose goal is to recover the sounds of the audio sources that compose an audio mixture. However, they do not use a progressive-growing strategy. On the other hand, PGGAN (Karras et al. 2018) progressively enhances the quality of an image by improving the resolution along the two axes simultaneously. We propose to do so along the frequency dimension only, so that the ContourNet and the TextureNet can have different focuses.

Given that processing different bands separately may lead to artifacts at the boundary of the bands, (Takahashi and Mitsufuji 2017) propose to split the frequency bands with overlaps and apply a Hamming window to concatenate the overlapping areas. We do not find that this improves our result much.

Dataset

We train our model with the MusicNet dataset (Thickstun, Harchaoui, and Kakade 2017), which provides time-aligned MIDIs and audio clips for more than 34 hours of chamber music. We convert the MIDIs to pianorolls with the pypianoroll package (Dong, Hsiao, and Yang 2018). The audio clips are sampled at 44.1 kHz. To have fixed-length input for our training data, we cut the clips into 5-second chunks. We compute the log-scaled spectrogram with a window size of 2,048 and a hop size of 256 using the librosa package (McFee et al. 2015), leading to a 1,025 × 860 spectrogram and a 128 × 860 pianoroll for each input/output data pair. We remark that it is important to use a small hop size for the audio quality of the generated result.

Due to the differences in timbre, we need to train a model for each instrument.
From MusicNet, we can find solo clips for the following four instruments: piano, cello, violin, and flute. Considering that expressive performance synthesis for the piano has been addressed in prior art (Simon and Oore 2017; Hawthorne et al. 2018), we decide to experiment with the other three instruments here. This reduces the total duration of the dataset to approximately 87 minutes, with 49, 30, and 8 minutes for the cello, violin, and flute, respectively.

As the scale of the training set is small, we use the following simple data augmentation method to increase the data size: when cutting an audio clip into 5-second chunks, we largely increase the temporal overlaps between these chunks. Specifically, the overlaps between chunks are set to 4, 4.5, and 4.75 seconds for the cello, violin, and flute, respectively. Although the augmented dataset contains a large number of similar chunks, it still helps the model learn better, especially when we use a small hop size in the STFT to compute the spectrograms.

We remark that the training data we have for the flute is composed of only 3 audio clips that are 8 minutes long in total. It is interesting to test whether our model can learn to play the flute with such a small dataset.

Experiment

In training the model, we reserve 1/5 of the clips as the validation set for parameter tuning. We implement the model in PyTorch. The model for the cello converges after only 8 hours of training on a single NVIDIA 1080 Ti GPU.

We conduct two runs of subjective listening tests to evaluate the performance of the proposed model. 156 adults participated in both runs; 41 of them are music professionals or students majoring in music. In the first run, we compare the result of our model with that of two MIDI synthesizers.
Synthesizer 1 is an open-source one called Aria Maestosa, whereas Synthesizer 2 is Logic Pro, a commercial synthesizer. The first synthesizer uses audio synthesized from digital signals, while the second one uses a library of real recordings. Subjects are asked to listen to three 45-second audio pieces for each model (i.e., ours and the two synthesizers), without knowing what the model is. Each piece is the result for one instrument, and the three pianorolls used are not in the training set. The subjects are recruited from the Internet and are asked to rate the pieces in terms of the following metrics on a five-point Likert scale:

• Timbre: does the timbre sound like real instruments?
• Naturalness: whether the performance is generated by a human, not by a machine?
• Quality: how good is the audio quality?
• Emotion: whether the performance expresses emotion?
• Overall: the overall perception of the piece.

We average the ratings for the three pieces and consider that as the assessment of a subject for the result of each model.

Figure 5: The result of subjective evaluation for the first run, comparing the generated samples for three different instruments by our model and by two synthesizers.

Figure 5 shows the mean opinion scores (MOS). The following observations are made. First, and perhaps most importantly, the proposed model performs better than the two synthesizers in terms of naturalness and emotion. This shows that our model indeed learns the expressive attributes in music performance. In terms of naturalness, the proposed model outperforms Synthesizer 2 (i.e., Logic Pro) by 0.29 in MOS. Second, in terms of timbre, the MOS of the proposed model falls between those of the two synthesizers. This shows that the timbre of our model is better than that of the soft synth, but inferior to that of real recordings. Third, there is still room to improve the sound quality of our model. Both synthesizers perform better, especially Synthesizer 2.
This also affects the overall ratings. From the comments of the subjects, our model works much better in generating performance attributes like velocities and emotion, but the audio quality has to be further improved.

In the second run, we additionally compare our model with the WaveNet-based model proposed by (Manzelli et al. 2018). Because they only show the generated cello clips for two pianorolls on their demo website, we use our model to generate audio for the same two pianorolls for comparison. Figure 6 shows that our model performs consistently better than this prior art in all the metrics. This result demonstrates how challenging it is for a neural network to generate realistic audio performance, but it also shows that our model represents a big step forward.

Figure 6: The result of subjective evaluation for the second run, comparing the generated samples for the cello by our model and an existing model (Manzelli et al. 2018).

Conclusion and Discussion

In this paper, we present a new deep convolutional model for score-to-audio music generation. The model takes as input a pianoroll and generates as output an audio clip playing that pianoroll. The model is trained with audio recordings of real performances, so it learns to render the pianorolls in an expressive way. Because the training is data-driven, the model can learn to play an instrument in different styles and flavors, provided a training set of corresponding audio recordings. Moreover, as our model is fully convolutional, the training is fast and does not require much training data. The user study shows that our model achieves higher mean opinion scores in naturalness and emotional expressivity than a WaveNet-based model and two off-the-shelf synthesizers, for generating solo performances of the cello, violin, and flute.

We discuss a few directions of future research below.
First, the user study shows that our model does not perform well for timbre and audio quality. We may improve this by GAN training or a WaveNet-based decoder.

We can extend the U-Net+Encoder architecture of the ContourNet to better condition the generation process. For example, we can use multiple encoders to incorporate different side information, such as the second-by-second instrument activity (Hung and Yang 2018) when the input has multiple instruments, and the intended playing technique for each note. In addition, the use of datasets with performance-level annotations (e.g., Xi et al. 2018) also makes it possible to condition and better control the generation process.

To facilitate learning the score-to-audio mapping, it is important to have some ways to objectively evaluate the generated result. We plan to explore the following metrics: i) a pre-trained instrument classifier for the timbre aspect of the result, ii) a pre-trained pitch detector for the pitch aspect, and iii) psychoacoustic features (Cabrera 1999) such as spectral tonalness/noisiness for the perceptual audio quality.

The proposed multi-band residual (MBR) blocks can be used for other audio generation tasks such as audio-to-audio, text-to-audio or image/video-to-audio generation. Furthermore, the proposed ‘translation + refinement’ architecture may find its application in translation tasks in other domains. Compared to existing two-stage methods (Wang et al. 2018; Karras et al. 2018), the proposed model may have some advantages since the training is done end-to-end.

Because the MIDI/audio files of the MusicNet dataset are aligned, our model does not learn the timing aspect of real performances. We also do not generate multi-instrument music yet. These are to be addressed in the future.

Finally, there are many important tasks to realize an “AI performer,” e.g., to model the personality, style, and intended emotion of a performer.
We expect relevant research to flourish in the near future.

References

Cabrera, D. 1999. Psysound: A computer program for psychoacoustical analysis. In Proc. Australian Acoustical Society Conf., 47–54.
Dieleman, S.; Oord, A. v. d.; and Simonyan, K. 2018. The challenge of realistic music generation: modelling raw audio at scale. arXiv preprint arXiv:1806.10474.
Donahue, C.; McAuley, J.; and Puckette, M. 2018. Synthesizing audio with generative adversarial networks. arXiv preprint arXiv:1802.04208.
Dong, C.; Loy, C. C.; He, K.; and Tang, X. 2014. Learning a deep convolutional network for image super-resolution. In Proc. ECCV.
Dong, H.-W.; Hsiao, W.-Y.; Yang, L.-C.; and Yang, Y.-H. 2018. MuseGAN: Symbolic-domain music generation and accompaniment with multi-track sequential generative adversarial networks. In Proc. AAAI.
Dong, H.-W.; Hsiao, W.-Y.; and Yang, Y.-H. 2018. Pypianoroll: Open source Python package for handling multitrack pianoroll. In Proc. ISMIR. Late-breaking paper; [Online] https://github.com/salu133445/pypianoroll.
Engel, J.; Resnick, C.; Roberts, A.; Dieleman, S.; Eck, D.; Simonyan, K.; and Norouzi, M. 2017. Neural audio synthesis of musical notes with WaveNet autoencoders. arXiv preprint arXiv:1704.01279.
Gatys, L. A.; Ecker, A. S.; and Bethge, M. 2016. Image style transfer using convolutional neural networks. In Proc. CVPR, 2414–2423.
Goodfellow, I. J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; and Bengio, Y. 2014. Generative adversarial nets. In Proc. NIPS.
Griffin, D., and Lim, J. 1984. Signal estimation from modified short-time Fourier transform. IEEE Trans. Acoustics, Speech, and Signal Processing 32(2):236–243.
Hadjeres, G.; Pachet, F.; and Nielsen, F. 2017. DeepBach: A steerable model for Bach chorales generation. In Proc. ICML, 1362–1371.
Hawthorne, C.; Stasyuk, A.; Roberts, A.; Simon, I.; Huang, C.-Z. A.; Dieleman, S.; Elsen, E.; Engel, J.; and Eck, D. 2018. Enabling factorized piano music modeling and generation with the MAESTRO dataset. arXiv preprint arXiv:1810.12247.
He, K.; Zhang, X.; Ren, S.; and Sun, J. 2016. Deep residual learning for image recognition. In Proc. CVPR, 770–778.
Hung, Y.-N., and Yang, Y.-H. 2018. Frame-level instrument recognition by timbre and pitch. In Proc. ISMIR.
Karras, T.; Aila, T.; Laine, S.; and Lehtinen, J. 2018. Progressive growing of GANs for improved quality, stability, and variation. In Proc. ICLR.
Kingma, D. P., and Welling, M. 2014. Auto-encoding variational Bayes. In Proc. ICLR.
Ledig, C.; Theis, L.; Huszar, F.; Caballero, J.; Cunningham, A.; Acosta, A.; Aitken, A.; Tejani, A.; Totz, J.; Wang, Z.; and Shi, W. 2017. Photo-realistic single image super-resolution using a generative adversarial network. In Proc. CVPR.
Liu, H.-M., and Yang, Y.-H. 2018. Lead sheet generation and arrangement by conditional generative adversarial network. In Proc. ICMLA.
Liu, M.-Y.; Breuel, T.; and Kautz, J. 2017. Unsupervised image-to-image translation networks. In Guyon, I.; Luxburg, U. V.; Bengio, S.; Wallach, H.; Fergus, R.; Vishwanathan, S.; and Garnett, R., eds., Proc. NIPS, 700–708.
Macret, M., and Pasquier, P. 2014. Automatic design of sound synthesizers as Pure Data patches using coevolutionary mixed-typed Cartesian genetic programming. In Proc. GECCO.
Manzelli, R.; Thakkar, V.; Siahkamari, A.; and Kulis, B. 2018. Conditioning deep generative raw audio models for structured automatic music. In Proc. ISMIR. http://people.bu.edu/bkulis/projects/music/index.html.
Masci, J.; Meier, U.; Cireşan, D.; and Schmidhuber, J. 2011. Stacked convolutional auto-encoders for hierarchical feature extraction. In Proc. ICANN.
McFee, B.; Raffel, C.; Liang, D.; Ellis, D. P.; McVicar, M.; Battenberg, E.; and Nieto, O. 2015. librosa: Audio and music signal analysis in Python. In Proc. SciPy, 18–25. https://librosa.github.io/librosa/.
Oord, A. v. d.; Dieleman, S.; Zen, H.; Simonyan, K.; Vinyals, O.; Graves, A.; Kalchbrenner, N.; Senior, A.; and Kavukcuoglu, K. 2016. WaveNet: A generative model for raw audio. arXiv preprint.
Oquab, M.; Bottou, L.; Laptev, I.; and Sivic, J. 2015. Is object localization for free? Weakly-supervised learning with convolutional neural networks. In Proc. CVPR, 685–694.
Radford, A.; Metz, L.; and Chintala, S. 2016. Unsupervised representation learning with deep convolutional generative adversarial networks. In Proc. ICLR.
Raphael, C. 2009. Representation and synthesis of melodic expression. In Proc. IJCAI.
Riionheimo, J., and Välimäki, V. 2003. Parameter estimation of a plucked string synthesis model using a genetic algorithm with perceptual fitness calculation. EURASIP J. Applied Signal Processing 791–805.
Roberts, A.; Engel, J.; Hawthorne, C.; Simon, I.; Waite, E.; Oore, S.; Jaques, N.; Resnick, C.; and Eck, D. 2016. Interactive musical improvisation with Magenta. In Proc. NIPS.
Roberts, A.; Engel, J.; Raffel, C.; Hawthorne, C.; and Eck, D. 2018. A hierarchical latent vector model for learning long-term structure in music. In Proc. ICML, 4361–4370.
Ronneberger, O.; Fischer, P.; and Brox, T. 2015. U-net: Convolutional networks for biomedical image segmentation. In Proc. MICCAI, 234–241. Springer.
Shen, J.; Pang, R.; Weiss, R. J.; Schuster, M.; Jaitly, N.; Yang, Z.; Chen, Z.; Zhang, Y.; Wang, Y.; Skerry-Ryan, R. J.; Saurous, R. A.; Agiomyrgiannakis, Y.; and Wu, Y. 2018. Natural TTS synthesis by conditioning WaveNet on Mel spectrogram predictions. In Proc. ICASSP.
Simon, I., and Oore, S. 2017. Performance RNN: Generating music with expressive timing and dynamics. https://magenta.tensorflow.org/performance-rnn.
Simon, I.; Roberts, A.; Raffel, C.; Engel, J.; Hawthorne, C.; and Eck, D. 2018. Learning a latent space of multitrack measures. In Proc. ISMIR.
Takahashi, N., and Mitsufuji, Y. 2017. Multi-scale multi-band densenets for audio source separation. In Proc. WASPAA, 21–25.
Thickstun, J.; Harchaoui, Z.; and Kakade, S. M. 2017. Learning features of music from scratch. In Proc. ICLR. https://homes.cs.washington.edu/~thickstn/musicnet.html.
Ulyanov, D.; Vedaldi, A.; and Lempitsky, V. 2017. Improved texture networks: Maximizing quality and diversity in feed-forward stylization and texture synthesis. In Proc. CVPR.
Waite, E.; Eck, D.; Roberts, A.; and Abolafia, D. 2016. Project Magenta: Generating long-term structure in songs and stories. https://magenta.tensorflow.org/blog/2016/07/15/lookback-rnn-attention-rnn/.
Wang, T.-C.; Liu, M.-Y.; Zhu, J.-Y.; Tao, A.; Kautz, J.; and Catanzaro, B. 2018. High-resolution image synthesis and semantic manipulation with conditional GANs. In Proc. CVPR.
Widmer, G., and Goebl, W. 2004. Computational models of expressive music performance: The state of the art. J. New Music Research 33(3):203–216.
Widmer, G. 2016. Getting closer to the essence of music: The Con Espressione Manifesto. ACM Trans. Intelligent Systems and Technology 8(2):19:1–19:13.
Wu, C.-W.; Liu, J.-Y.; Yang, Y.-H.; and Jang, J.-S. R. 2018. Singing style transfer using cycle-consistent boundary equilibrium generative adversarial networks. In Proc. Joint Workshop on Machine Learning for Music.
Xi, Q.; Bittner, R. M.; Pauwels, J.; Ye, X.; and Bello, J. P. 2018. GuitarSet: A dataset for guitar transcription. In Proc. ISMIR, 453–460.
