Music Generation by Deep Learning - Challenges and Directions

In addition to traditional tasks such as prediction, classification and translation, deep learning is receiving growing attention as an approach for music generation, as witnessed by recent research groups such as Magenta at Google and CTRL (Creator …

Authors: Jean-Pierre Briot, François Pachet

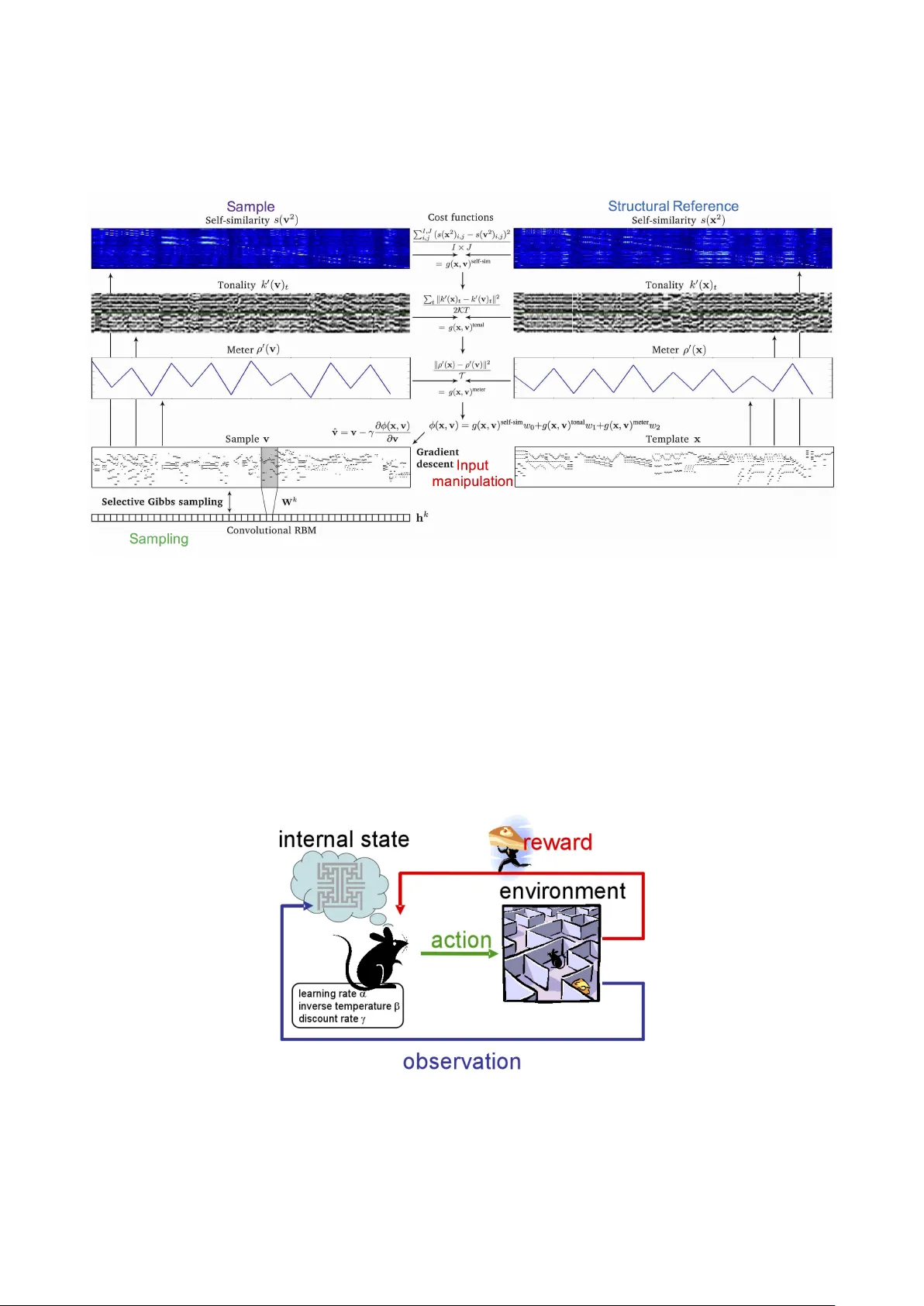

Music Generation by Deep Learning – Challenges and Directions ∗

Jean-Pierre Briot †, François Pachet ‡
† Sorbonne Universités, UPMC Univ Paris 06, CNRS, LIP6, Paris, France, Jean-Pierre.Briot@lip6.fr
‡ Spotify Creator Technology Research Lab, Paris, France, francois@spotify.com

Abstract: In addition to traditional tasks such as prediction, classification and translation, deep learning is receiving growing attention as an approach for music generation, as witnessed by recent research groups such as Magenta at Google and CTRL (Creator Technology Research Lab) at Spotify. The motivation is in using the capacity of deep learning architectures and training techniques to automatically learn musical styles from arbitrary musical corpora and then to generate samples from the estimated distribution. However, a direct application of deep learning to generate content rapidly reaches limits as the generated content tends to mimic the training set without exhibiting true creativity. Moreover, deep learning architectures do not offer direct ways for controlling generation (e.g., imposing some tonality or other arbitrary constraints). Furthermore, deep learning architectures alone are autistic automata which generate music autonomously without human user interaction, far from the objective of interactively assisting musicians to compose and refine music. Issues such as: control, structure, creativity and interactivity are the focus of our analysis. In this paper, we select some limitations of a direct application of deep learning to music generation, analyze why the issues are not fulfilled and how to address them by possible approaches. Various examples of recent systems are cited as examples of promising directions.

1 Introduction

1.1 Deep Learning

Deep learning has become a fast growing domain and is now used routinely for classification and prediction tasks, such as image and voice recognition, as well as translation.
It emerged about 10 years ago, when a deep learning architecture significantly outperformed standard techniques using handcrafted features on an image classification task [HOT06]. We may explain this success and reemergence of artificial neural network architectures and techniques by the combination of:

1. technical progress, such as: convolutions, which provide motif translation invariance [CB98], and LSTM (Long Short-Term Memory), which resolved inefficient training of recurrent neural networks [HS97];
2. availability of multiple data sets;
3. availability of efficient and cheap computing power, e.g., offered by graphics processing units (GPU).

There is no consensual definition of deep learning. It is a repertoire of machine learning (ML) techniques, based on artificial neural networks¹. The common ground is the term deep, which means that there are multiple layers processing multiple levels of abstractions, which are automatically extracted from data, as a way to express complex representations in terms of simpler representations.

Main applications of deep learning are within the two traditional machine learning tasks of classification and prediction, as a testimony of the initial DNA of neural networks: logistic regression and linear regression. But a growing area of application of deep learning techniques is the generation of content: text, images, and music, the focus of this article.

∗ To appear in Special Issue on Deep learning for music and audio, Neural Computing & Applications, Springer Nature, 2018.
¹ With many variants such as convolutional networks, recurrent networks, autoencoders, restricted Boltzmann machines, etc. [GBC16].

1.2 Deep Learning for Music Generation

The motivation for using deep learning, and more generally machine learning techniques, to generate musical content is its generality.
As opposed to handcrafted models for, e.g., grammar-based [Ste84] or rule-based music generation systems [Ebc88], a machine-learning-based generation system can automatically learn a model, a style, from an arbitrary corpus of music. Generation can then take place by using prediction (e.g., to predict the pitch of the next note of a melody) or classification (e.g., to recognize the chord corresponding to a melody), based on the distribution and correlations learnt by the deep model, which represent the style of the corpus.

As stated by Fiebrink and Caramiaux in [FC16], benefits are: 1) it can make creation feasible when the desired application is too complex to be described by analytical formulations or manual brute force design; 2) learning algorithms are often less brittle than manually-designed rule sets and learned rules are more likely to generalize accurately to new contexts in which inputs may change.

1.3 Challenges

A direct application of deep learning architectures and techniques to generation, although it could produce impressive results², suffers from some limitations. We consider here³:

• Control, e.g., tonality conformance, maximum number of repeated notes, rhythm, etc.;
• Structure, versus wandering music without a sense of direction;
• Creativity, versus imitation and risk of plagiarism;
• Interactivity, versus automated single-step generation.

1.4 Related Work

A comprehensive survey and analysis by Briot et al. of deep learning techniques to generate musical content is available in a book [BHP18]. In [HCC17], Herremans et al. propose a function-oriented taxonomy for various kinds of music generation systems. Examples of surveys of AI-based methods for algorithmic music composition are by Papadopoulos and Wiggins [PW99] and by Fernández and Vico [FV13], as well as books by Cope [Cop00] and by Nierhaus [Nie09].
In [Gra14], Graves analyses the application of recurrent neural network architectures to generate sequences (text and music). In [FC16], Fiebrink and Caramiaux address the issue of using machine learning to generate creative music. We are not aware of a comprehensive analysis dedicated to deep learning (and artificial neural network techniques) that systematically analyzes limitations and challenges, solutions and directions, in other words that is problem-oriented and not just application-oriented.

1.5 Organization

The article is organized as follows. Section 1 (this section) introduces the general context of deep learning-based music generation and lists some important challenges. It also includes a comparison to some related work. The following sections analyze each challenge and some solutions, illustrated through examples of actual systems: control (Section 2), structure (Section 3), creativity (Section 4) and interactivity (Section 5).

2 Control

Musicians usually want to adapt ideas and patterns borrowed from other contexts to their own objective, e.g., transposition to another key, minimizing the number of notes. In practice this means the ability to control generation by a deep learning architecture.

2.1 Dimensions of control strategies

Such arbitrary control is actually a difficult issue for current deep learning architectures and techniques, because standard neural networks are not designed to be controlled. As opposed to Markov models, which have an operational model where one can attach constraints onto their internal operational structure in order to control the generation⁴, neural networks do not offer such an operational entry point. Moreover, the distributed nature of their representation does not provide a direct correspondence to the structure of the content generated.
As a result, the strategies for controlling deep learning generation that we will analyze have to rely on some external intervention at various entry points (hooks), such as:

• Input;
• Output;
• Encapsulation/reformulation.

² Music difficult to distinguish from the original corpus.
³ Additional challenges are analyzed in [BHP18].
⁴ Two examples are Markov constraints [PRB11] and factor graphs [PPR17].

2.2 Sampling

Sampling a model⁵ to generate content may be an entry point for control if we introduce constraints on the output generation (this is called constraint sampling). This is usually implemented by a generate-and-test approach, where valid solutions are picked from a set of generated random samples from the model⁶. As we will see, a key issue is how to guide the sampling process in order to fulfill the objectives (constraints), so sampling will often be combined with other strategies.

2.3 Conditioning

The strategy of conditioning (sometimes also named conditional architecture) is to condition the architecture on some extra conditioning information, which could be arbitrary, e.g., a class label or data from other modalities. Examples are:

• a bass line or a beat structure, in the rhythm generation system [MKPKK17];
• a chord progression, in the MidiNet architecture [YCY17];
• a musical genre or an instrument, in the WaveNet architecture [vdODZ+16];
• a set of positional constraints, in the Anticipation-RNN architecture [HN17].

In practice, the conditioning information is usually fed into the architecture as an additional input layer. Conditioning is a way to have some degree of parameterized control over the generation process.

2.3.1 Example 1: WaveNet Audio Speech and Music Generation

The WaveNet architecture by van den Oord et al. [vdODZ+16] is aimed at generating raw audio waveforms.
The architecture is based on a convolutional feedforward network without pooling layer⁷. It has been experimented on generation for three audio domains: multi-speaker, text-to-speech (TTS) and music.

The WaveNet architecture uses conditioning as a way to guide the generation, by adding an additional tag as a conditioning input. Two options are considered: global conditioning or local conditioning, depending on whether the conditioning input is shared for all time steps or is specific to each time step.

An example of application of conditioning WaveNet for a text-to-speech application domain is to feed linguistic features (e.g., North American English or Mandarin Chinese speakers) in order to generate speech with a better prosody. The authors also report preliminary experiments on conditioning music models to generate music given a set of tags specifying, e.g., genre or instruments.

2.3.2 Example 2: Anticipation-RNN Bach Melody Generation

Hadjeres and Nielsen propose a system named Anticipation-RNN [HN17] for generating melodies with unary constraints on notes (to enforce a given note at a given time position to have a given value). The limitation when using a standard note-to-note iterative strategy for generation by a recurrent network is that enforcing the constraint at a certain time step may retrospectively invalidate the distribution of the previously generated items, as shown in [PRB11]. The idea is to condition the recurrent network (RNN) on some information summarizing the set of further (in time) constraints as a way to anticipate oncoming constraints, in order to generate notes with a correct distribution.
Therefore, a second RNN architecture⁸, named Constraint-RNN, is used. It functions backward in time and its outputs are used as additional inputs of the main RNN (named Token-RNN), resulting in the architecture shown in Figure 1, with:

• c_i is a positional constraint;
• o_i is the output at index i (after i iterations) of Constraint-RNN: it summarizes constraint information from step i to the final step (end of the sequence) N. It will be concatenated (⊕) to input s_{i−1} of Token-RNN in order to predict the next item s_i.

⁵ The model can be stochastic, such as a restricted Boltzmann machine (RBM) [GBC16], or deterministic, such as a feedforward or a recurrent network. In that latter case, it is common practice to sample from the softmax output in order to introduce variability for the generated content [BHP18].
⁶ Note that this may be a very costly process and moreover with no guarantee to succeed.
⁷ An important specificity of the architecture (not discussed here) is the notion of dilated convolution, where convolution filters are incrementally dilated in order to provide very large receptive fields with just a few layers, while preserving input resolution and computational efficiency [vdODZ+16].
⁸ Both are 2-layer LSTMs [HS97].

Figure 1: Anticipation-RNN architecture. Reproduced from [HN17] with permission of the authors

Figure 2: Examples of melodies generated by Anticipation-RNN. Reproduced from [HN17] with permission of the authors

The architecture has been tested on a corpus of melodies taken from J. S. Bach chorales. Three examples of melodies generated with the same set of positional constraints (indicated with notes in green within a rectangle) are shown in Figure 2. The model is indeed able to anticipate each positional constraint by adjusting its direction towards the target (lower-pitched or higher-pitched note).
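
The data flow between Constraint-RNN and Token-RNN can be sketched as follows. This is only a minimal illustration under stated assumptions, not the implementation of [HN17]: the toy tanh cell `rnn_step` stands in for the 2-layer LSTMs, the weights are random and untrained, and all names and dimensions are hypothetical. It shows the backward pass that produces each summary o_i and its concatenation to the previous token s_{i−1}.

```python
import numpy as np

rng = np.random.default_rng(0)

def rnn_step(x, h, W_x, W_h):
    """One step of a toy tanh recurrent cell (assumed stand-in for an LSTM)."""
    return np.tanh(x @ W_x + h @ W_h)

def constraint_summaries(constraints, W_x, W_h):
    """Run the constraint network backward in time.

    constraints has shape (N, c_dim), one positional constraint c_i per step.
    The result o has shape (N, h_dim); o[i] depends only on c_i .. c_{N-1}.
    """
    n_steps = constraints.shape[0]
    h = np.zeros(W_h.shape[0])
    o = np.zeros((n_steps, W_h.shape[0]))
    for i in reversed(range(n_steps)):
        h = rnn_step(constraints[i], h, W_x, W_h)
        o[i] = h
    return o

def token_rnn_inputs(prev_tokens, o):
    """Concatenate each previous token s_{i-1} with the summary o_i."""
    return np.concatenate([prev_tokens, o], axis=1)

# Hypothetical sizes: 8 time steps, one constraint set at position 5.
N, c_dim, h_dim, s_dim = 8, 4, 6, 5
constraints = np.zeros((N, c_dim))
constraints[5, 2] = 1.0
W_x = rng.normal(size=(c_dim, h_dim))
W_h = rng.normal(size=(h_dim, h_dim))

o = constraint_summaries(constraints, W_x, W_h)
prev_tokens = rng.normal(size=(N, s_dim))  # placeholder for embedded tokens
x = token_rnn_inputs(prev_tokens, o)
```

In this toy run, the summaries after position 5 stay at zero (no constraint lies ahead), while every earlier position receives a non-zero summary, which is the information the main network would use to anticipate the constraint.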
2.4 Input Manipulation

The strategy of input manipulation has been pioneered for images by DeepDream [MOT15]. The idea is that the initial input content, or a brand new (randomly generated) input content, is incrementally manipulated in order to match a target property. Note that control of the generation is indirect, as it is not being applied to the output but to the input, before generation. Examples are:

• maximizing the activation of a specific unit, to exaggerate some visual element specific to this unit, in DeepDream [MOT15];
• maximizing the similarity to a given target, to create a consonant melody, in DeepHear [Sun17];
• maximizing both the content similarity to some initial image and the style similarity to a reference style image, to perform style transfer [GEB15];
• maximizing the similarity of structure to some reference music, to perform style imposition [LGW16].

Interestingly, this is done by reusing standard training mechanisms, namely back-propagation to compute the gradients, as well as gradient descent to minimize the cost.

Figure 3: Generation in DeepHear. Extension of a figure reproduced from [Sun17] with permission of the author

2.4.1 Example 1: DeepHear Ragtime Melody Accompaniment Generation

The DeepHear architecture by Sun [Sun17] is aimed at generating ragtime jazz melodies. The architecture is a 4-layer stacked autoencoder (that is, 4 hierarchically nested autoencoders), with a decreasing number of hidden units, down to 16 units.

At first, the model is trained⁹ on a corpus of 600 measures of Scott Joplin's ragtime music, split into 4-measure long segments. Generation is performed by inputting random data as the seed into the 16 bottleneck hidden layer units and then by feedforwarding it into the chain of decoders to produce an output (in the same 4-measure long format of the training examples), as shown in Figure 3.
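
The generation step (a random seed fed into the bottleneck and pushed through the chain of decoders) can be sketched as below. This is a hedged illustration only: the intermediate layer sizes, the piano-roll output shape, the sigmoid activation and the 0.5 threshold are all assumptions, and random weights stand in for decoders that would normally come from training on the corpus.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical sizes: 16 bottleneck units, three intermediate decoder layers,
# and a 4-measure piano roll of 64 time steps by 88 pitches as output.
N_STEPS, N_PITCHES = 64, 88
sizes = [16, 32, 64, 128, N_STEPS * N_PITCHES]

# Random (untrained) weights used as placeholders for the 4 trained decoders.
decoders = [
    (rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out))
    for n_in, n_out in zip(sizes[:-1], sizes[1:])
]

def generate(seed, decoders):
    """Feed a 16-value seed forward through the chain of decoders."""
    x = seed
    for W, b in decoders:
        x = sigmoid(x @ W + b)
    return x.reshape(N_STEPS, N_PITCHES)

seed = rng.random(16)                   # random data for the bottleneck units
note_probs = generate(seed, decoders)   # per-step, per-pitch activations
piano_roll = note_probs > 0.5           # assumed thresholding into notes
```

Because only the 16 seed values are free, the same `generate` function is also the natural entry point for the input manipulation strategy: a cost defined on its output can be minimized with respect to `seed`.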
In addition to the generation of new melodies, DeepHear is used with a different objective: to harmonize a melody, while using the same architecture as well as what has already been learnt¹⁰. The idea is to find a label instance of the set of features, i.e., a set of values for the 16 units of the bottleneck hidden layer of the stacked autoencoders, which will result in some decoded output matching as much as possible a given melody. A simple distance function is defined to represent the dissimilarity between two melodies (in practice, the number of unmatched notes). Then a gradient descent is conducted onto the variables of the embedding, guided by the gradients corresponding to the distance function, until finding a sufficiently similar decoded melody. Although this is not a real counterpoint but rather the generation of a similar (consonant) melody, the results do produce some naive counterpoint with a ragtime flavor.

2.4.2 Example 2: VRAE Video Game Melody Generation

Note that input manipulation of the hidden layer units of an autoencoder (or stacked autoencoders) bears some analogy with variational autoencoders¹¹, such as for instance the VRAE (Variational Recurrent Auto-Encoder) architecture of Fabius and van Amersfoort [FvA15]. Indeed in both cases, there is some exploration of possible values for the hidden units (latent variables) in order to generate variations of musical content by the decoder (or the chain of decoders). The important difference is that in the case of variational autoencoders, the exploration of values is user-directed, although it could be guided by some principle, for example an interpolation to create a medley of two songs, or the addition or subtraction of an attribute vector capturing a given characteristic (e.g., high density of notes as in Figure 4).
In the case of input manipulation, the exploration of values is automatically guided by the gradient following mechanism, the user having previously specified a cost function to be minimized or an objective to be maximized.

2.4.3 Example 3: Image and Audio Style Transfer

Style transfer has been pioneered by Gatys et al. [GEB15] for images. The idea, summarized in Figure 5, is to use a deep learning architecture to independently capture:

• the features of a first image (named the content),
• and the style (the correlations between features) of a second image (named the style),
• and then, to use gradient following to guide the incremental modification of an initially random third image, with the double objective of matching both the content and the style descriptions¹².

⁹ Autoencoders are trained with the same data as input and output and therefore have to discover significative features in order to be able to reconstruct the compressed data.
¹⁰ Note that this is a simple example of transfer learning [GBC16], with a same domain and a same training, but for a different task.
¹¹ A variational autoencoder (VAE) [KW14] is an autoencoder with the added constraint that the encoded representation (its latent variables) follows some prior probability distribution (usually a Gaussian distribution). Therefore, a variational autoencoder is able to learn a "smooth" latent space mapping to realistic examples.

Figure 4: Example of melody generated (bottom) by MusicVAE by adding a "high note density" attribute vector to the latent space of an existing melody (top). Reproduced from [RER+18a] with permission of the authors

Transposing this style transfer technique to music was a natural direction and it has been experimented independently for audio, e.g., in [UL16] and [FYR16], both using a spectrogram (and not a direct wave signal) as input.
The result is effective, but not as interesting as in the case of painting style transfer, being somehow more similar to a sound merging of the style and of the content. We believe that this is because of the anisotropy¹³ of global music content representation.

2.4.4 Example 4: C-RBM Mozart Sonata Generation

The C-RBM architecture proposed by Lattner et al. [LGW16] uses a restricted Boltzmann machine (RBM) to learn the local structure, seen as the musical texture, of a corpus of musical pieces (in practice, Mozart sonatas). The architecture is convolutional (only) on the time dimension, in order to model temporally invariant motives, but not pitch invariant motives, which would break the notion of tonality.

The main idea is in imposing by constraints onto the generated piece some more global structure (form, e.g., AABA, as well as tonality), seen as a structural template inspired from the reference of an existing musical piece. This is called structure imposition¹⁴, also coined as templagiarism (short for template plagiarism) by Hofstadter [Hof01].

Generation is done by sampling from the RBM with three types of constraints:

• Self-similarity, to specify a global structure (e.g., AABA) in the generated music piece. This is modeled by minimizing the distance between the self-similarity matrices of the reference target and of the intermediate solution;
• Tonality constraint, to specify a key (tonality). To estimate the key in a given temporal window, the distribution of pitch classes is compared with the key profiles of the reference;
• Meter constraint, to impose a specific meter (also named a time signature, e.g., 4/4) and its related rhythmic pattern (e.g., accent on the third beat). The relative occurrence of note onsets within a measure is constrained to follow that of the reference.
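
To make the self-similarity constraint more concrete, here is a small sketch of a cost comparing the self-similarity matrix of a candidate with that of a reference. It is an illustration under assumptions and not the formulation of [LGW16]: the use of one pitch-class vector per section, cosine similarity, and a mean squared difference are choices made here, and the helper names `chord`, `self_similarity` and `structure_cost` are hypothetical.

```python
import numpy as np

def chord(pitch_classes):
    """12-dimensional binary pitch-class vector (toy feature for one section)."""
    v = np.zeros(12)
    v[list(pitch_classes)] = 1.0
    return v

def self_similarity(features):
    """Cosine self-similarity matrix of a (T, D) sequence of feature vectors."""
    norms = np.linalg.norm(features, axis=1, keepdims=True)
    unit = features / np.maximum(norms, 1e-12)
    return unit @ unit.T

def structure_cost(candidate, reference):
    """Mean squared difference between the two self-similarity matrices."""
    diff = self_similarity(candidate) - self_similarity(reference)
    return float(np.mean(diff ** 2))

# Reference with an AABA form, built from C major (A) and F major (B) triads.
A, B = chord([0, 4, 7]), chord([5, 9, 0])
reference = np.stack([A, A, B, A])

# Candidates in another key: G major and D major triads.
G, D = chord([7, 11, 2]), chord([2, 6, 9])
same_form = np.stack([G, G, D, G])    # AABA again, different notes
other_form = np.stack([G, D, G, D])   # ABAB

cost_same = structure_cost(same_form, reference)
cost_other = structure_cost(other_form, reference)
```

The candidate that reuses the AABA form gets a cost of (numerically) zero even though it shares almost no notes with the reference, whereas the ABAB candidate is penalized. This is why such a cost constrains the form rather than the notes themselves.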
¹² Note that one may balance between content and style objectives through some α and β parameters in the L_total combined loss function shown at the top of Figure 5.
¹³ In the case of an image, the correlations between visual elements (pixels) are equivalent whatever the direction (horizontal axis, vertical axis, diagonal axis or any arbitrary direction), in other words correlations are isotropic. In the case of a global representation of musical content (see, e.g., Figure 12), where the horizontal dimension represents time and the vertical dimension represents the notes, horizontal correlations represent temporal correlations and vertical correlations represent harmonic correlations, which have very different nature.
¹⁴ Note that this is also some kind of style transfer [DZX18], although of a high-level structure and not a low-level timbre as in Section 2.4.3.

Figure 5: Style transfer full architecture/process. Reproduced with permission of the authors

Generation is performed via constrained sampling, a mechanism to restrict the set of possible solutions in the sampling process according to some pre-defined constraints. The principle of the process (illustrated in Figure 6) is as follows. At first, a sample is randomly initialized, following the standard uniform distribution. A step of constrained sampling is composed of n runs of gradient descent to impose the high-level structure, followed by p runs of selective Gibbs sampling to selectively realign the sample onto the learnt distribution. A simulated annealing algorithm is applied in order to decrease exploration in relation to a decrease of variance over solutions.

Results are quite convincing. However, as discussed by the authors, their approach is not exact, unlike, for instance, the Markov constraints approach proposed in [PRB11].
2.5 Reinforcement

The strategy of reinforcement is to reformulate the generation of musical content as a reinforcement learning problem, while using the output of a trained recurrent network as an objective and adding user defined constraints, e.g., some tonality rules according to music theory, as an additional objective.

Let us first briefly recall the basic concepts of reinforcement learning, illustrated in Figure 7:

• An agent sequentially selects and performs actions within an environment;
• Each action performed brings it to a new state,
• with the feedback (by the environment) of a reward (reinforcement signal), which represents some adequation of the action to the environment (the situation).
• The objective of reinforcement learning is for the agent to learn a near optimal policy (sequence of actions) in order to maximize its cumulated rewards (named its gain).

Generation of a melody may be formulated as follows (as in Figure 8): the state s represents the musical content (a partial melody) generated so far and the action a represents the selection of the next note to be generated.

Figure 6: C-RBM Architecture

Figure 7: Reinforcement learning (Conceptual model). Reproduced from [DU05]

Figure 8: RL-Tuner architecture

2.5.1 Example: RL-Tuner Melody Generation

The reinforcement strategy has been pioneered by the RL-Tuner architecture by Jaques et al. [JGTE16]. The architecture, illustrated in Figure 8, consists of two reinforcement learning architectures, named Q Network and Target Q Network¹⁵, and two recurrent network (RNN) architectures, named Note RNN and Reward RNN. After training Note RNN on the corpus, a fixed copy named Reward RNN is used as a reference for the reinforcement learning architecture.
The reward r of Q Network is defined as a combination of two objectives:

• Adherence to what has been learnt, by measuring the similarity of the action selected (next note to be generated) to the note predicted by Reward RNN in a similar state (partial melody generated so far);
• Adherence to user-defined constraints (e.g., consistency with current tonality, avoidance of excessive repetitions...), by measuring how well they are fulfilled.

Although preliminary, results are convincing. Note that this strategy has the potential for adaptive generation by incorporating feedback from the user.

2.6 Unit Selection

The unit selection strategy relies on querying successive musical units (e.g., a melody within a measure) from a database and on concatenating them in order to generate some sequence according to some user characteristics.

2.6.1 Example: Unit Selection and Concatenation Melody Generation

This strategy has been pioneered by Bretan et al. [BWH16] and is actually inspired by a technique commonly used in text-to-speech (TTS) systems and adapted in order to generate melodies (the corpus used is diverse and includes jazz, folk and rock). The key process here is unit selection (in general each unit is one measure long), based on two criteria: semantic relevance and concatenation cost. The architecture includes one autoencoder and two LSTM recurrent networks.

The first preparation phase is feature extraction of musical units. 10 manually handcrafted features are considered, following a bag-of-words (BOW) approach (e.g., counts of a certain pitch class, counts of a certain pitch class rhythm tuple, if first note is tied to previous measure, etc.), resulting in 9,675 actual features.

The key of the generation is the process of selection of a best (or at least, very good) successor candidate to a given musical unit.
Two criteria are considered:

• Successor semantic relevance: it is based on a model of transition between units, as learnt by a LSTM recurrent network. In other words, that relevance is based on the distance to the (ideal) next unit as predicted by the model;
• Concatenation cost: it is based on another model of transition¹⁶, this time between the last note of the unit and the first note of the next unit, as learnt by another LSTM recurrent network.

The combination of the two criteria (illustrated in Figure 9) is handled by a heuristic-based dynamic ranking process. As for a recurrent network, generation is iterated in order to create, unit by unit (measure by measure), an arbitrary length melody.

¹⁵ They use a deep learning implementation of the Q-learning algorithm. Q Network is trained in parallel to Target Q Network, which estimates the value of the gain [vHGS15].
¹⁶ At a more fine-grained level, note-to-note level, than the previous one.

Figure 9: Unit selection based on semantic cost

Note that the unit selection strategy actually provides entry points for control, as one may extend the selection framework based on two criteria (successor semantic relevance and concatenation cost) with user defined constraints/criteria.

3 Structure

Another challenge is that most existing systems have a tendency to generate music with "no sense of direction". In other words, although the style of the generated music corresponds to the corpus learnt, the music lacks some structure and appears to wander without some higher organization, as opposed to human composed music, which usually exhibits some global organization (usually named a form) and identified components, such as:

• Overture, Allegro, Adagio or Finale for classical music;
• AABA or AAB in Jazz;
• Refrain, Verse or Bridge for songs.

Note that there are various possible levels of structure.
For instance, an example of finer grain structure is at the level of melodic patterns that can be repeated, often transposed in order to adapt to a new harmonic structure.

Reinforcement (as used by RL-Tuner in Section 2.5.1) and structure imposition (as used by C-RBM in Section 2.4.4) are approaches to enforce some constraints, possibly high-level, onto the generation. An alternative top-down approach is followed by the unit selection strategy (see Section 2.6), by incrementally generating an abstract sequence structure and filling it with musical units, although the structure is currently flat. Therefore, a natural direction is to explicitly consider and process different levels (hierarchies) of temporality and of structure.

3.1 Example: MusicVAE Multivoice Generation

Roberts et al. propose a hierarchical architecture named MusicVAE [RER+18b] following the principles of a variational autoencoder encapsulating recurrent networks (RNNs, in practice LSTMs) such as VRAE introduced in Section 2.4.2, with two differences:

• the encoder is a bidirectional RNN;
• the decoder is a hierarchical 2-level RNN composed of:
  – a high-level RNN named the Conductor producing a sequence of embeddings;
  – a bottom-layer RNN using each embedding as an initial state¹⁷ and also as an additional input concatenated to its previously generated token to produce each subsequence.

Figure 10: MusicVAE architecture. Reproduced from [RER+18b] with permission of the authors

The resulting architecture is illustrated in Figure 10. The authors report that an equivalent "flat" (without hierarchy) architecture, although accurate in modeling the style in the case of 2-measure long examples, turned out inaccurate in the case of 16-measure long examples, with a 27% error increase for the autoencoder reconstruction.
Some preliminary evaluation has also been conducted with a comparison by listeners of three versions: flat architecture, hierarchical architecture and real music for three types of music: melody, trio and drums, showing a very significant gain with the hierarchical architecture.

4 Creativity

The issue of the creativity of the music generated is not only an artistic issue but also an economic one, because it raises a copyright issue¹⁸.

One approach is a posteriori, by ensuring that the generated music is not too similar (e.g., in not having recopied a significant amount of notes of a melody) to an existing piece of music. To this aim, existing tools to detect similarities in texts may be used.

Another approach, more systematic but more challenging, is a priori, by ensuring that the music generated will not recopy a given portion of music from the training corpus¹⁹. A solution for music generation from Markov chains has been proposed [PRP14]. It is based on a variable order Markov model and constraints over the order of the generation through some min order and max order constraints, in order to attain some sweet spot between junk and plagiarism. However, there is not yet an equivalent solution for deep learning architectures.

¹⁷ In order to prioritize the Conductor RNN over the bottom layer RNN, its initial state is reinitialized with the decoder generated embedding for each new subsequence.
¹⁸ On this issue, see a recent paper [Del17].
¹⁹ Note that this addresses the issue of avoiding a significant recopy from the training corpus, but it does not prevent reinventing an existing piece of music outside of the training corpus.

Figure 11: Creative adversarial networks (CAN) architecture

4.1 Conditioning

4.1.1 Example: MidiNet Melody Generation

The MidiNet architecture by Yang et al. [YCY17], inspired by WaveNet (see Section 2.3.1), is based on generative adversarial networks (GAN) [GPAM+14] (see Section 4.2).
It includes a conditioning mec hanism incorp orating history information (melo dy as well as chords) from previous measures. The authors discuss tw o metho ds to con trol creativity: • by restricting the conditioning by inserting the conditioning data only in the intermediate conv olution la yers of the generator architecture; • by decreasing the v alues of the tw o con trol parameters of feature matching regularization, in order to less enforce the distributions of real and generated data to b e close. These exp eriments are interesting although the approach remains at the level of some ad ho c tuning of some h yp er-parameters of the architecture. 4.2 Creativ e Adv ersarial Net w orks Another more systematic and conceptual direction is the concept of creative adversarial netw orks (CAN) pro- p osed by El Gammal et al. [ELEM17], as an extension of generative adversarial netw orks (GAN) architecture, b y Go o dfellow et al. [GP AM + 14] whic h trains simultaneously tw o netw orks: • a Gener ative mo del (or gener ator ) G, whose ob jectiv e is to transform random noise vectors into faked samples , whic h resemble real samples dra wn from a distribution of real images; and • a Discriminative mo del (or discriminator ) D, that estimates the probability that a sample came from the training data rather than from G. The generator is then able to pro duce user-app ealing synthetic samples (e.g., images or music) from noise v ectors. The discriminator may then b e discarded. Elgammal et al. 
prop ose in [ELEM17] to extend a GAN architecture into a cr e ative adversarial networks (CAN) architecture, sho wn at Figure 11, where the generator receiv es from the discriminator not just one but two signals : • the first signal, analog to the case of the standard GAN, sp ecifies how the discriminator b elieves that the generated item comes from the training dataset of real art pieces; • the second signal is about ho w easily the discriminator can classify the generated item into establishe d styles . If there is some strong am biguity (i.e., the v arious classes are equiprobable), this means that the generated item is difficult to fit within the existing art styles. These tw o signals are thus contradictory forces and push the generator to explore the space for generating items that are at the same time close to the distribution of existing art pieces and with some st yle originality . Note that this approach assumes the existence of a prior st yle classification and it also reduces the idea of creativit y to exploring new st yles (which indeed has some grounding in the art history). 12 Figure 12: Strategies for instantiating notes during generation 5 In teractivit y In most of existing systems, the generation is automated, with little or no inter activity . As a result, lo cal mo dification and regeneration of a musical conten t is usually not supp orted, the only a v ailable option b eing a whole regeneration (and the loss of previous attempt). This is in contrast to the w ay a musician works, with successiv e partial refinemen t and adaptation of a comp osition 20 . Therefore, some requisites for interactivit y are the incremen tality and the lo cality of the generation, i.e. the wa y the v ariables of the musical conten t are instan tiated. 5.1 Instan tiation Strategies Let us consider the example of the generation of a melo dy . 
The two most common strategies (illustrated in Figure 12)²¹ for instantiating the notes of the melody are:

• Single-step/Global – A global representation including all time steps is generated in a single step by a feedforward architecture. An example is DeepHear [Sun17] in Section 2.4.1.
• Iterative/Time-slice – A time slice representation corresponding to a single time step is iteratively generated by a recurrent architecture (RNN). An example is Anticipation-RNN [HN17] in Section 2.3.2.

Let us now consider an alternative strategy, incremental variable instantiation. It relies on a global representation including all time steps. But, as opposed to single-step/global generation, generation is done incrementally by progressively instantiating and refining values of variables (notes), in a non-deterministic order. Thus, it is possible to generate or to regenerate only an arbitrary part of the musical content, for a specific time interval and/or for a specific subset of voices (shown as selective regeneration in Figure 12), without regenerating the whole content.

²⁰ An example of an interactive composition environment is FlowComposer [PRP16]. It is based on various techniques such as Markov models, constraint solving and rules.
²¹ The representation shown is of type piano roll with two simultaneous voices (tracks). Parts already processed are in light grey; parts currently being processed have a thick line and are labelled “current”; notes to be played are in blue.

5.2 Example: DeepBach Chorale Generation

This incremental instantiation strategy has been used by Hadjeres et al. in the DeepBach architecture [HPN17] for generation of Bach chorales²². The architecture, shown in Figure 13, combines two recurrent and two feedforward networks. As opposed to the standard use of recurrent networks, where a single time direction is considered, the DeepBach architecture considers the two directions, forward in time and backwards in time. Therefore, two recurrent networks (more precisely, LSTMs) are used, one summing up past information and another summing up information coming from the future, together with a non-recurrent network for notes occurring at the same time. Their three outputs are merged and passed as the input of a final feedforward neural network. The first 4 lines of the example data on top of Figure 13 correspond to the 4 voices²³. Actually this architecture is replicated 4 times, one for each voice (4 in a chorale).

²² J. S. Bach chose various given melodies for a soprano and composed the three additional ones (for alto, tenor and bass) in a counterpoint manner.

Figure 13: DeepBach architecture

    Create four lists V = (V_1; V_2; V_3; V_4) of length L
    Initialize them with random notes drawn from the ranges of the corresponding voices
    for m from 1 to max number of iterations do
        Choose voice i uniformly between 1 and 4
        Choose time t uniformly between 1 and L
        Re-sample V_i^t from p_i(V_i^t | V_{\i,t}, θ_i)
    end for

Figure 14: DeepBach incremental generation/sampling algorithm

Training, as well as generation, is not done in the conventional way for neural networks. The objective is to predict the value of the current note for a given voice (shown with a red ? on top center of Figure 13), using as information the surrounding contextual notes. The training set is formed on-line by repeatedly randomly selecting a note in a voice from an example of the corpus and its surrounding context. Generation is done by sampling, using a pseudo-Gibbs sampling incremental and iterative algorithm (shown in Figure 14, see details in [HPN17]) to produce a set of values (each note) of a polyphony, following the distribution that the network has learnt. The advantage of this method is that generation may be tailored.
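The sampling loop of Figure 14, and the way it can be restricted to part of the score, can be sketched in Python as follows. This is an illustration under stated assumptions, not the DeepBach code: the trained per-voice networks p_i are replaced by a hypothetical stand-in, `conditional_distribution`, which merely favours pitches close to the temporal neighbours in the same voice, and the pitch ranges, the `editable` argument and the function names are invented for the example.

```python
import math
import random

# Hypothetical MIDI pitch ranges for soprano, alto, tenor and bass.
VOICE_RANGES = [(60, 81), (53, 74), (48, 69), (36, 64)]


def conditional_distribution(voices, i, t):
    """Stand-in for p_i(V_i^t | all other notes, theta_i).

    NOT a trained network: it only favours pitches near the notes just
    before and after time t in voice i. Returns (pitches, probabilities).
    """
    low, high = VOICE_RANGES[i]
    pitches = list(range(low, high + 1))
    line = voices[i]
    neighbours = [line[u] for u in (t - 1, t + 1) if 0 <= u < len(line)]
    weights = [math.exp(-sum(abs(p - n) for n in neighbours) / 4.0)
               for p in pitches]
    total = sum(weights)
    return pitches, [w / total for w in weights]


def pseudo_gibbs(length, n_iterations, rng, voices=None, editable=None):
    """Re-sample one (voice, time) variable per iteration, in random order."""
    if voices is None:
        # Initialize each voice with random notes drawn from its range.
        voices = [[rng.randint(lo, hi) for _ in range(length)]
                  for lo, hi in VOICE_RANGES]
    if editable is None:
        # By default every position may be re-sampled (Figure 14).
        editable = [(i, t) for i in range(len(voices)) for t in range(length)]
    for _ in range(n_iterations):
        i, t = rng.choice(editable)  # uniform choice of voice and time
        pitches, probs = conditional_distribution(voices, i, t)
        voices[i][t] = rng.choices(pitches, weights=probs, k=1)[0]
    return voices


# Full generation, then partial regeneration of alto, tenor and bass
# for time steps 4..7 only, leaving the soprano untouched.
rng = random.Random(0)
chorale = pseudo_gibbs(length=16, n_iterations=2000, rng=rng)
region = [(i, t) for i in (1, 2, 3) for t in range(4, 8)]
chorale = pseudo_gibbs(16, 500, rng, voices=chorale, editable=region)
```

Restricting the set of positions that may be re-sampled is all that is needed to turn whole-piece generation into local regeneration, since every update only conditions on the current values of the other notes.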
For example, if the user changes only one or two measures of the soprano voice, they can resample only the corresponding counterpoint voices for these measures.

The user interface of DeepBach, shown in Figure 15, allows the user to interactively select and control global or partial (re)generation of chorales. It opens up new ways of composing Bach-like chorales for non-experts in an interactive manner, similarly to what is proposed by FlowComposer for lead sheets [PRP16]. It is implemented as a plugin for the MuseScore music editor.

²³ The two bottom lines correspond to metadata (fermata and beat information), not detailed here.

Figure 15: DeepBach user interface

6 Conclusion

The use of deep learning architectures and techniques for the generation of music (as well as other artistic content) is a growing area of research. However, there remain open challenges, such as control, structure, creativity and interactivity, that standard techniques do not directly address. In this article, we have discussed a list of challenges, introduced some strategies to address them and illustrated them through examples of actual architectures²⁴. We hope that the analysis presented in this article will help towards a better understanding of the issues and possible solutions and may therefore contribute to the general research agenda of deep learning-based music generation.

²⁴ A more complete survey and analysis is [BHP18].

Acknowledgements

We thank Gaëtan Hadjeres and Pierre Roy for related discussions. This research was partly conducted within the Flow Machines project which received funding from the European Research Council under the European Union Seventh Framework Programme (FP/2007-2013) / ERC Grant Agreement n. 291156.

References

[BHP18] Jean-Pierre Briot, Gaëtan Hadjeres, and François Pachet. Deep Learning Techniques for Music Generation. Computational Synthesis and Creative Systems. Springer Nature, 2018.
[BWH16] Mason Bretan, Gil Weinberg, and Larry Heck. A unit selection methodology for music generation using deep neural networks, December 2016.
[CB98] Yann Le Cun and Yoshua Bengio. Convolutional networks for images, speech, and time-series. In The handbook of brain theory and neural networks, pages 255–258. MIT Press, Cambridge, MA, USA, 1998.
[Cop00] David Cope. The Algorithmic Composer. A-R Editions, 2000.
[Del17] Jean-Marc Deltorn. Deep creations: Intellectual property and the automata. Frontiers in Digital Humanities, 4, February 2017. Article 3.
[DU05] Kenji Doya and Eiji Uchibe. The Cyber Rodent project: Exploration of adaptive mechanisms for self-preservation and self-reproduction. Adaptive Behavior, 13(2):149–160, 2005.
[DZX18] Shuqi Dai, Zheng Zhang, and Gus Guangyu Xia. Music style transfer issues: A position paper, March 2018.
[Ebc88] Kemal Ebcioğlu. An expert system for harmonizing four-part chorales. Computer Music Journal (CMJ), 12(3):43–51, Autumn 1988.
[ELEM17] Ahmed Elgammal, Bingchen Liu, Mohamed Elhoseiny, and Marian Mazzone. CAN: Creative adversarial networks generating “art” by learning about styles and deviating from style norms, June 2017.
[FC16] Rebecca Fiebrink and Baptiste Caramiaux. The machine learning algorithm as creative musical tool, November 2016.
[FV13] Jose David Fernández and Francisco Vico. AI methods in algorithmic composition: A comprehensive survey. Journal of Artificial Intelligence Research (JAIR), 48:513–582, 2013.
[FvA15] Otto Fabius and Joost R. van Amersfoort. Variational Recurrent Auto-Encoders, June 2015.
[FYR16] Davis Foote, Daylen Yang, and Mostafa Rohaninejad. Audio style transfer – Do androids dream of electric beats?, December 2016. https://audiostyletransfer.wordpress.com.
[GBC16] Ian Goodfellow, Yoshua Bengio, and Aaron Courville. Deep Learning. MIT Press, 2016.
[GEB15] Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge. A neural algorithm of artistic style, September 2015.
[GPAM+14] Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets, June 2014.
[Gra14] Alex Graves. Generating sequences with recurrent neural networks, June 2014.
[HCC17] Dorien Herremans, Ching-Hua Chuan, and Elaine Chew. A functional taxonomy of music generation systems. ACM Computing Surveys (CSUR), 50(5), September 2017.
[HN17] Gaëtan Hadjeres and Frank Nielsen. Interactive music generation with positional constraints using Anticipation-RNN, September 2017.
[Hof01] Douglas Hofstadter. Staring Emmy straight in the eye–and doing my best not to flinch. In David Cope, editor, Virtual Music – Computer Synthesis of Musical Style, pages 33–82. MIT Press, 2001.
[HOT06] Geoffrey E. Hinton, Simon Osindero, and Yee-Whye Teh. A fast learning algorithm for deep belief nets. Neural Computation, 18(7):1527–1554, July 2006.
[HPN17] Gaëtan Hadjeres, François Pachet, and Frank Nielsen. DeepBach: a steerable model for Bach chorales generation, June 2017.
[HS97] Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural Computation, 9(8):1735–1780, 1997.
[JGTE16] Natasha Jaques, Shixiang Gu, Richard E. Turner, and Douglas Eck. Tuning recurrent neural networks with reinforcement learning, November 2016.
[KW14] Diederik P. Kingma and Max Welling. Auto-encoding variational Bayes, May 2014.
[LGW16] Stefan Lattner, Maarten Grachten, and Gerhard Widmer. Imposing higher-level structure in polyphonic music generation using convolutional restricted Boltzmann machines and constraints, December 2016.
[MKPKK17] Dimos Makris, Maximos Kaliakatsos-Papakostas, Ioannis Karydis, and Katia Lida Kermanidis. Combining LSTM and feed forward neural networks for conditional rhythm composition. In Giacomo Boracchi, Lazaros Iliadis, Chrisina Jayne, and Aristidis Likas, editors, Engineering Applications of Neural Networks: 18th International Conference, EANN 2017, Athens, Greece, August 25–27, 2017, Proceedings, pages 570–582. Springer Nature, 2017.
[MOT15] Alexander Mordvintsev, Christopher Olah, and Mike Tyka. Deep Dream, 2015. https://research.googleblog.com/2015/06/inceptionism-going-deeper-into-neural.html.
[Nie09] Gerhard Nierhaus. Algorithmic Composition: Paradigms of Automated Music Generation. Springer Nature, 2009.
[PPR17] François Pachet, Alexandre Papadopoulos, and Pierre Roy. Sampling variations of sequences for structured music generation. In Proceedings of the 18th International Society for Music Information Retrieval Conference (ISMIR 2017), Suzhou, China, October 23–27, 2017, pages 167–173, 2017.
[PRB11] François Pachet, Pierre Roy, and Gabriele Barbieri. Finite-length Markov processes with constraints. In Proceedings of the 22nd International Joint Conference on Artificial Intelligence (IJCAI 2011), pages 635–642, Barcelona, Spain, July 2011.
[PRP14] Alexandre Papadopoulos, Pierre Roy, and François Pachet. Avoiding plagiarism in Markov sequence generation. In Proceedings of the 28th AAAI Conference on Artificial Intelligence (AAAI 2014), pages 2731–2737, Québec, PQ, Canada, July 2014.
[PRP16] Alexandre Papadopoulos, Pierre Roy, and François Pachet. Assisted lead sheet composition using FlowComposer. In Michel Rueher, editor, Principles and Practice of Constraint Programming: 22nd International Conference, CP 2016, Toulouse, France, September 5-9, 2016, Proceedings, pages 769–785. Springer Nature, 2016.
[PW99] George Papadopoulos and Geraint Wiggins. AI methods for algorithmic composition: A survey, a critical view and future prospects. In AISB 1999 Symposium on Musical Creativity, pages 110–117, April 1999.
[RER+18a] Adam Roberts, Jesse Engel, Colin Raffel, Curtis Hawthorne, and Douglas Eck. A hierarchical latent vector model for learning long-term structure in music, June 2018.
[RER+18b] Adam Roberts, Jesse Engel, Colin Raffel, Curtis Hawthorne, and Douglas Eck. A hierarchical latent vector model for learning long-term structure in music. In Proceedings of the 35th International Conference on Machine Learning (ICML 2018). ACM, Montréal, PQ, Canada, July 2018.
[Ste84] Mark Steedman. A generative grammar for Jazz chord sequences. Music Perception, 2(1):52–77, 1984.
[Sun17] Felix Sun. DeepHear – Composing and harmonizing music with neural networks, Accessed on 21/12/2017. https://fephsun.github.io/2015/09/01/neural-music.html.
[UL16] Dmitry Ulyanov and Vadim Lebedev. Audio texture synthesis and style transfer, December 2016. https://dmitryulyanov.github.io/audio-texture-synthesis-and-style-transfer/.
[vdODZ+16] Aäron van den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew Senior, and Koray Kavukcuoglu. WaveNet: A generative model for raw audio, December 2016.
[vHGS15] Hado van Hasselt, Arthur Guez, and David Silver. Deep reinforcement learning with double Q-learning, December 2015.
[YCY17] Li-Chia Yang, Szu-Yu Chou, and Yi-Hsuan Yang. MidiNet: A convolutional generative adversarial network for symbolic-domain music generation. In Proceedings of the 18th International Society for Music Information Retrieval Conference (ISMIR 2017), Suzhou, China, October 2017.
