A Novel Study of the Relation Between Students Navigational Behavior on Blackboard and their Learning Performance in an Undergraduate Networking Course

This paper provides an overview of an analysis of student behavior on a learning management system (LMS), Blackboard (Bb) Learn, for a core data communications course in the undergraduate IT program of the Information Sciences and Technology (IST) Department at George Mason University (GMU). The study attempts to understand students' navigational behavior on Blackboard Learn and to relate it to their overall performance. In total, 160 undergraduate students participated in the study. A large amount of student activity data was collected across all four sections of the course. All sections have similar content, assessment design, and instruction methods. To evaluate student engagement on Blackboard Learn, a correlation analysis was performed between the different assessment methods and key variables such as total student time, total number of logins, and various other factors. Our findings can help instructors efficiently identify students' strengths and weaknesses and fine-tune their courses for better student engagement and performance.

💡 Research Summary

This paper investigates the relationship between undergraduate students’ navigational behavior on the Blackboard Learning Management System (LMS) and their academic performance in a core networking course (IT 341) at George Mason University. Data were collected from four sections of the course (01, 02, 04, DL) during the Spring 2017 semester, encompassing 160 students. For each student, 59 variables were extracted from Blackboard logs, including total time spent on the LMS each day of the week, total number of logins, frequency of accessing lecture slides, homework assignments, lab sessions, and skills assessments. The authors removed records with missing values, resulting in a clean dataset for analysis.

The study first performed exploratory correlation analysis. Pearson correlation coefficients were computed between the amount of time spent on each day of the week (Sunday through Saturday) and the students’ mid‑term exam scores. Saturday showed the strongest positive correlation with the score, followed by Friday and Monday. Conversely, Sunday and Tuesday displayed weak negative correlations, suggesting that time spent on those days was associated with lower scores. A second correlation matrix examined the relationship between specific course‑content accesses (e.g., “Ch4Slides”, “Ch6Slides”) and the mid‑term score; unsurprisingly, the slides most heavily weighted in the exam (Ch4, Ch5, Ch6) had the highest positive correlations.

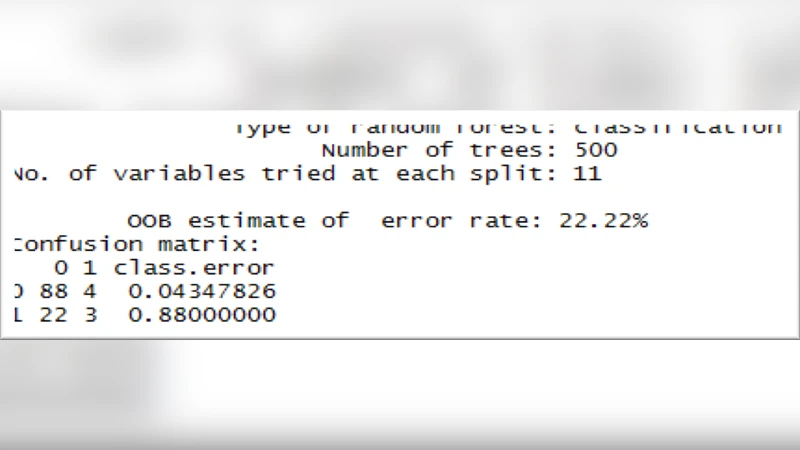
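The exploratory correlation step described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the `pearson` helper, the `minutes_by_day` dictionary, and all numbers in it are hypothetical toy values chosen only to show the calculation, and only two days are included for brevity.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-deviations and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical toy data (NOT from the paper): minutes each of six students
# spent on the LMS on a given weekday, and their mid-term scores.
minutes_by_day = {
    "Saturday": [120, 45, 200, 10, 90, 150],
    "Sunday": [30, 80, 20, 95, 60, 25],
}
midterm = [85, 62, 93, 55, 74, 88]

# One coefficient per day, ranked from most positive to most negative.
correlations = {day: pearson(mins, midterm) for day, mins in minutes_by_day.items()}
ranked = sorted(correlations.items(), key=lambda kv: kv[1], reverse=True)

for day, r in ranked:
    print(f"{day}: r = {r:+.2f}")
```

With real data, the same loop would run over all seven day-of-week columns and, separately, over the content-access counts. A coefficient near zero or based on few students should be read cautiously, since correlation alone says nothing about causation.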

To move beyond simple association, the authors built a predictive classification model using the Random Forest algorithm. They encoded the final grades as a binary target: 0 for C/D/F and 1 for A/B. The model comprised 500 decision trees, with a random subset of 11 features considered as candidates at each split. Using the out‑of‑bag (OOB) estimate, the model achieved an overall accuracy of 77.78 % (error rate 22.22 %). Variable importance analysis revealed that “Saturday” was the most influential predictor, followed by “Tuesday”, “Study Guide”, “Monday”, and several slide‑access variables (Ch4Slides, Ch6Slides, etc.). The confusion matrix indicated that the model was particularly adept at identifying students likely to receive a low grade (95.6 % of class 0 cases classified correctly), though its ability to correctly predict high‑performing students was comparatively weaker.
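To make the relationship between overall accuracy and per-class accuracy concrete, the sketch below encodes letter grades into the binary target and derives both figures from a confusion matrix. It is an illustration under stated assumptions: the function names are hypothetical, the handling of plus/minus grades is a guess, and the label lists are invented counts that do not come from the paper.

```python
def encode_grade(letter):
    """Map a letter grade to the binary target: A/B -> 1, C/D/F -> 0.

    Assumption: plus/minus suffixes (e.g. "B+") are ignored by looking only
    at the first character; the summary does not say how they were handled.
    """
    first = letter.strip().upper()[:1]
    if first in ("A", "B"):
        return 1
    if first in ("C", "D", "F"):
        return 0
    raise ValueError(f"unrecognized grade: {letter!r}")


def confusion_matrix(actual, predicted):
    """Return counts[actual_class][predicted_class] for binary labels 0/1."""
    counts = [[0, 0], [0, 0]]
    for a, p in zip(actual, predicted):
        counts[a][p] += 1
    return counts


def per_class_accuracy(counts):
    """Fraction of each actual class that was predicted correctly."""
    return [counts[c][c] / sum(counts[c]) for c in (0, 1)]


def overall_accuracy(counts):
    """Fraction of all cases on the diagonal of the confusion matrix."""
    correct = counts[0][0] + counts[1][1]
    total = sum(sum(row) for row in counts)
    return correct / total


# Hypothetical labels (NOT the paper's results): 40 low-grade students and
# 20 high-grade students, with most errors falling on the high-grade class.
actual = [0] * 40 + [1] * 20
predicted = [0] * 38 + [1] * 2 + [0] * 12 + [1] * 8

counts = confusion_matrix(actual, predicted)
class_acc = per_class_accuracy(counts)
overall = overall_accuracy(counts)

print("confusion matrix:", counts)
print(f"class 0 accuracy: {class_acc[0]:.1%}")
print(f"class 1 accuracy: {class_acc[1]:.1%}")
print(f"overall accuracy: {overall:.1%}")
```

The toy numbers show the pattern the summary reports: when the low-grade class is larger, a model can score well overall and on class 0 while still misclassifying many high-performing students.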

The authors interpret the prominence of Saturday usage as reflecting either assignment deadlines that fall on weekends or a pattern of students dedicating weekend time to intensive study. They argue that early and consistent engagement with key lecture materials (as captured by slide‑access counts) also contributes positively to performance. However, the paper acknowledges several limitations: the dataset is modest in size and originates from a single course, the class distribution is imbalanced (more low‑grade than high‑grade cases), and the handling of missing data by outright deletion may introduce bias. Moreover, the study does not report cross‑validation or external test‑set performance, leaving the generalizability of the model uncertain.

In conclusion, the research demonstrates that LMS interaction metrics—particularly day‑of‑week usage patterns and specific content accesses—can be leveraged to predict student performance with reasonable accuracy. The authors suggest that such models could be used for early warning systems, allowing instructors to intervene with at‑risk students before major assessments. Future work is proposed to expand the dataset across multiple courses, address class imbalance with techniques such as SMOTE or cost‑sensitive learning, and explore separate models for temporal versus content‑based predictors. Overall, the paper contributes to the growing field of learning analytics by showing how granular Blackboard logs can inform pedagogical decisions and support student success.
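As a rough illustration of the cost-sensitive idea mentioned above, the sketch below computes inverse-frequency class weights and a weighted error rate. This is one common heuristic, not a method the summary attributes to the authors; the function names and the label lists are hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


def weighted_error(actual, predicted, weights):
    """Error rate in which each case counts in proportion to its class weight."""
    total = sum(weights[a] for a in actual)
    wrong = sum(weights[a] for a, p in zip(actual, predicted) if a != p)
    return wrong / total


# Hypothetical imbalanced labels (NOT from the paper): 40 low-grade cases
# (class 0) and 20 high-grade cases (class 1).
actual = [0] * 40 + [1] * 20
predicted = [0] * 38 + [1] * 2 + [0] * 12 + [1] * 8

weights = balanced_class_weights(actual)
plain_error = sum(a != p for a, p in zip(actual, predicted)) / len(actual)
cost_sensitive_error = weighted_error(actual, predicted, weights)

print("class weights:", weights)
print(f"unweighted error: {plain_error:.3f}")
print(f"weighted error:   {cost_sensitive_error:.3f}")
```

Because the minority class receives the larger weight, its misclassifications raise the weighted error more than the plain error rate suggests, which is the signal a cost-sensitive learner would be trained to reduce.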
