Phonetic-attention scoring for deep speaker features in speaker verification

Recent studies have shown that frame-level deep speaker features can be derived from a deep neural network with the training target set to discriminate speakers by a short speech segment. By pooling the frame-level features, utterance-level represent…

Authors: Lantian Li, Zhiyuan Tang, Ying Shi

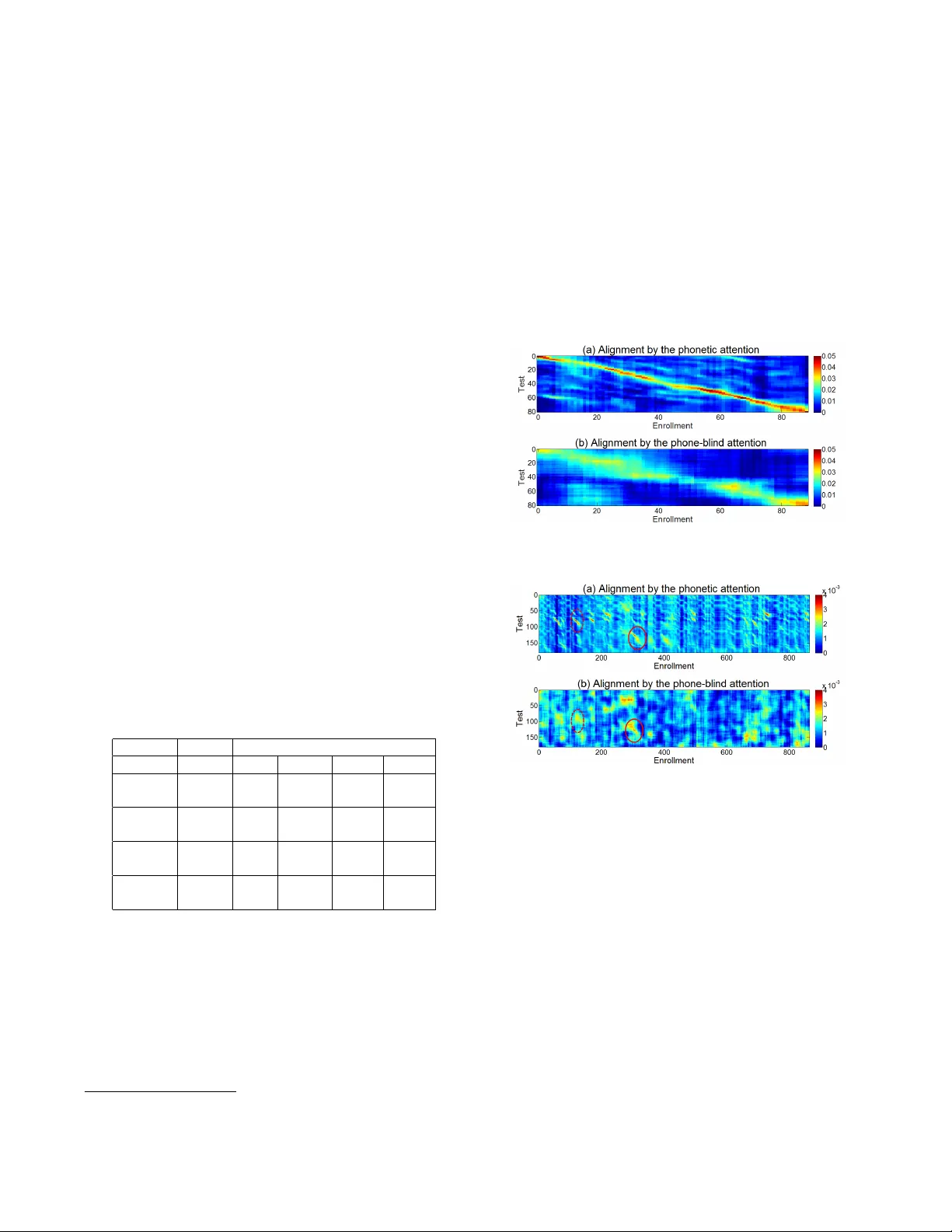

PHONETIC-A TTENTION SCORING FOR DEEP SPEAKER FEA TURES IN SPEAKER VERIFICA TION Lantian Li, Zhiyuan T ang, Y ing Shi, Dong W ang CSL T , Tsinghua Uni versity , Beijing, 100084, China ABSTRA CT Recent studies ha ve shown that frame-le vel deep speaker fea- tures can be deriv ed from a deep neural network with the training target set to discriminate speakers by a short speech segment. By pooling the frame-lev el features, utterance-le vel representations, called d-vectors, can be derived and used in the automatic speaker verification (ASV) task. This simple av erage pooling, howe ver , is inherently sensitive to the pho- netic content of the utterance. An interesting idea borrowed from machine translation is the attention-based mechanism, where the contrib ution of an input word to the translation at a particular time is weighted by an attention score. This score reflects the relev ance of the input word and the present trans- lation. W e can use the same idea to align utterances with different phonetic contents. This paper proposes a phonetic-attention scoring ap- proach for d-vector systems. By this approach, an attention score is computed for each frame pair . This score reflects the similarity of the two frames in phonetic content, and is used to weigh the contribution of this frame pair in the utterance- based scoring. This new scoring approach emphasizes the frame pairs with similar phonetic contents, which essentially provides a soft alignment for utterances with any phonetic contents. Experimental results show that compared with the naiv e a verage pooling, this phonetic-attention scoring ap- proach can deliver consistent performance improvement in ASV tasks of both text-dependent and te xt-independent. Index T erms — speaker recognition, deep neural net- work, attention 1. INTRODUCTION Automatic speaker verification (ASV) is an important bio- metric authentication technology and has a broad range of applications. The current ASV approach can be categorized into two groups: the statistical model approach and the neu- ral model approach. The most famous statistical models for ASV in volv e the Gaussian mixture model-universal back- ground model (GMM-UBM) [1], the joint factor analysis This work was supported by the National Natural Science Foundation of China under Grant No. 61371136 and 61633013. Dong W ang is the corre- sponding author (wangdong99@mails.tsinghua.edu.cn). model [2] and the i-vector model [3, 4, 5]. As for the neural model approach, Ehsan et al. proposed the first successful implementation [6], where frame-lev el speaker features were extracted from a deep neural network (DNN), and utterance- lev el speaker representations (‘d-vectors’) were deriv ed by av eraging the frame-lev el features, i.e., a verage pooling. This work was follo wed by a b unch of researchers [7, 8, 9, 10]. The neural-based approach is essentially a feature learn- ing approach, i.e., learning frame-le v el speaker features from raw speech. In previous work, we found that by this feature learning, speakers can be discriminated by a speech segment as short as 0 . 3 seconds [10], either a word or a cough [11]. Howe v er , with the con ventional d-vector pipeline, this bril- liant frame-le vel discriminatory power cannot be fully uti- lized by the utterance-le vel ASV , due to the simple average pooling. This shortage was quickly identified by researchers, and hence almost all the studies after Ehsan et al. [6] chose to learn representations of segments rather than frames, the so- called end-to-end approach [8, 12, 13, 14]. Howe ver , frame- lev el feature learning possesses its own advantages in both generalizability and ease of training [15], and meets our long- term desire of deciphering speech signals [16]. An ideal ap- proach, therefore, is to keep the feature learning framework but solv e the problem caused by av erage pooling. T o understand the problem of av erage pooling, first notice that feature pooling is equiv alent to score pooling. T o make the presentation clear , we consider the simple inner product score: ~ s u · ~ s u 0 = 1 | u | X f ∈ u ~ v f · 1 | u 0 | X f 0 ∈ u 0 ~ v f 0 , where u and u 0 are two utterances in test, f denotes frames; ~ v f and ~ s u are frame-le vel speak er features and utterance-le vel d-vectors, respecti vely . A simple arrangement leads to: ~ s u · ~ s u 0 = 1 | u | 1 | u 0 | X f ∈ u X f 0 ∈ u 0 ~ v f · ~ v f 0 . This formula indicates that with a verage pooling, the utterance- lev el score ~ s u · ~ s u 0 is the average of the frame-level scores ~ v f · ~ v f 0 . Most importantly , the scores of all the frame pairs ( f , f 0 ) are equally weighted, which is obviously suboptimal, as the reliability of scores from dif ferent frame pairs may be substantially different. In particular , a pair of frames in the same phonetic context may result in a much more reliable frame-lev el score compared to a pair in dif ferent phonetic context, as demonstrated by the f act that te xt-dependent ASV generally outperforms text-independent ASV . This indicates that a ke y problem of the a verage pooling method is that phonetic variation may cause serious performance degrada- tion. This partly explains why d-vector systems are mostly successful in text-dependent tasks. A simple idea is to discriminate frame pairs in similar / different phonetic contents, and put more emphasis on the frame pairs in similar phones. This can be formulated by: ~ s u · ~ s u 0 = 1 | u | 1 | u 0 | X f ∈ u X f 0 ∈ u 0 α ( f , f 0 ) · ~ v f · ~ v f 0 , (1) where α ( f , f 0 ) represents the weight for the frame pair ( f , f 0 ) , computed from the similarity of their phonetic con- tents. This is essentially a soft-alignment approach that aligns two utterances with respect to phonetic contents, where α ( f , f 0 ) represents the alignment de gree of frames f and f 0 , deriv ed from the phonetic information of the two frames. The idea of soft-alignment w as motiv ated by the attention mechanism in neural machine translation (NMT) [17], where the contrib ution of an input word to the translation at a partic- ular time is weighted by an attention score, and this attention score reflects the rele vance of the input word and the present translation. W e therefore name our new scoring model by Eq. (1) as phonetic-attention scoring . By paying more at- tention to frame pairs in similar phonetic contents, this new scoring approach essentially turns a te xt-independent task to a text-dependent task, hence partly solving the problem caused by phone variation with the nai ve a verage pooling . In the next section, we will briefly describe the attention mechanism. The phonetic-attention scoring approach will be presented in Section 3, and the experiments will be reported in Section 5. The entire paper will be concluded in Section 6. 2. A TTENTION MECHANISM The attention mechanism w as firstly proposed by [17] in the framew ork of sequence to sequence learning, and was applied to NMT . Recently , this model has been widely used in man y sequential learning tasks, e.g., speech recognition [18]. In a nutshell, the attention approach looks up all the input ele- ments (e.g., words in a sentence or frames in an utterance) at each decoding time, and computes an attention weight for each element that reflects the relev ance of that element with the present decoding. Based on these attention weights, the information of the input elements is collected and used to guide decoding. As shown in Fig. 1, at decoding time t , the attention weight α t,i is computed for each input element ~ x i (more precisely , the annotation of ~ x i , denoted by ~ h i ), formally written as: α t,i = σ ( g ( ~ z t − 1 , ~ h i )) where ~ z t − 1 is the decoding status at time t , and g is a value function that can be in any form. σ is a normalization function (usually softmax) that ensures P i α t,i = 1 . The decoding for ~ y t is then formally written as: ~ y t = g 0 ( ~ z t − 1 , ~ y t − 1 , X i α t,i ~ h i ) , where g 0 is the decoding model. In the con ventional setting, g is a parametric function, e.g., a neural net, whose parameters are jointly optimized with other parts of the model, e.g., the decoding model g 0 . z t - 1 y t - 1 z t y t h 1 x 1 h 2 x 2 h T - 1 x T - 1 h T x T ,2 t ,1 t ,1 tT , tT A t t e n t i o n Fig. 1 . Attention mechanism in sequence to sequence model. 3. PHONETIC-A TTENTION SCORING W e borrow the architecture shown in Fig. 1 to build our phonetic-attention model in Eq. (1). Since our purpose is to align two existing sequences rather than sequence to sequence generation, the structure can be largely simplified. For ex- ample, the recurrent connection in both the input and output sequence can be omitted. Secondly , in Fig. 1, the value func- tion g is learned from data; for our scoring model, we have a clear goal to align utterances by phonetic content, so we can design the v alue function by hand (although function learning with prior may help). This leads to the phonetic-attention model shown in Fig. 2. E nr o l l T es t p ' t - 1 s ' t - 1 y t - 1 p ' t s ' t y t p ' t + 1 s ' t + 1 y t + 1 p 1 s 1 x 1 p 2 s 2 x 2 p T - 1 s T - 1 x T - 1 p T s T x T ,2 t ,1 t ,1 tT , tT Fig. 2 . Diagram of the phonetic-attention model. The architecture and the associated scoring method can be summarized into the following four steps: (1) For both the enrollment and test utterances, compute the frame-lev el speaker features from a speaker recognition DNN, denoted by S = [ ~ s 1 , ~ s 2 , ..., ~ s T ] and S 0 = [ ~ s 0 1 , ~ s 0 2 , ..., ~ s 0 T 0 ] . Additionally , compute the frame-le vel phonetic features from a speech recognition DNN, denoted by P = [ ~ p 1 , ~ p 2 , ..., ~ p T ] and P 0 = [ ~ p 0 1 , ~ p 0 2 , ..., ~ p 0 T 0 ] . (2) F or each frame t in the test utterance, compute the atten- tion weight α t,i for each frame i in the enrollment utterance. α t,i = KL − 1 ( p 0 t , p i ) P i KL − 1 ( p 0 t , p i ) , where the KL − 1 ( · , · ) denotes the reciprocal of KL distance. This step is represented by the red dashed line in Fig. 2. (3) Compute the matching score of frame t in the test utter- ance as follows: d t = X i α t,i · cos ( s 0 t , s i ) . This step is represented by the green solid line in Fig. 2. (4) Compute the matching score of the two utterances by av- eraging the frame-lev el matching score: d = 1 T X t d t = 1 T X t X i α t,i · cos ( s 0 t , s i ) . 4. RELA TED WORK The attention mechanism has been studied by sev eral authors in ASV , e.g., [13, 19, 20]. Howe ver , most of the propos- als used the attention mechanism to produce a better frame pooling, while we use it to produce a better utterance align- ment. In essence, these methods learn which frame should contribute to the speaker embedding, while our approach learn which frame-pair should contribute to the matching score. Moreov er , most of these studies do not use phonetic knowledge e xplicitly , except [13]. Another work relev ant to ours is the segmental dynamic time warping (SDTW) approach proposed by Mohamed et al. [21]. This work holds the same idea as ours in align- ing frame-level speaker features, howe ver their alignment is based on local temporal continuity , while ours is based on global phonetic contents. 5. EXPERIMENTS 5.1. Data 5.1.1. T raining data The data used to train the d-vector systems is the CSL T -7500 database, which was collected by CSL T@Tsinghua Univer - sity . It consists of 7 , 500 speakers and 1 , 532 , 766 utterances. The sampling rate is 16 kHz and the precision is 16 -bit. Data augmentation is applied to cov er more acoustic conditions, for which the MUSAN corpus [22] is used to provide additi ve noise, and the room impulse responses (RIRS) corpus [23] is used to generate rev erberated samples. 5.1.2. Evaluation data (1) CIIH : a dataset contains short commands used in the in- telligent home scenario. It contains recordings of 10 short commands from 100 speakers, and each command consists of 2 ∼ 5 Chinese characters. For each speak er , e very command is recorded 15 times, amounting to 150 utterances per speaker . This dataset is used to ev aluate the text-dependent (TD) task. (2) DSDB : a dataset inv olving digital strings. It contains 1 , 099 speakers, each speaking 15 ∼ 20 Chinese digital strings. Each string contains 8 Chinese digits, and is about 2 ∼ 3 sec- onds. F or each speaker , 5 utterances are randomly sampled as enrollment, and the rest are used for test. This dataset is used to ev aluate the text-prompted (TP) task. (3) ALI-WILD : a dataset collected by the Ali crowdsource platform. It covers unlimited real-world scenarios, and con- tains 669 speakers and 27 , 861 speech segments. W e designed two test conditions: a short-duration scenario Ali(S) where the duration of the enrollment is 15 seconds and the test is 3 seconds, and a long-duration scenario Ali(L) where the dura- tion of the enrollment is 30 seconds and the test is 15 seconds. This dataset is used to e valuate the te xt-independent (TI) task. 5.2. Settings The DNN model to produce frame-level speaker features is a 9-layer time-delay neural network (TDNN), where the slicing parameters are { t - 2 , t - 1 , t , t + 1 , t + 2 } , { t - 2 , t + 2 } , { t } , { t - 1 , t + 1 } , { t } , { t - 2 , t + 2 } , { t } , { t - 4 , t + 4 } , { t } . Except the last hidden layer that inv olves 400 neurons, the size of all other layers is 1 , 000 . Once the DNN has been fully trained, 400 -dimensional deep speaker features were extracted from the last hidden layer . The model was trained using the Kaldi toolkit [24]. Based on this model, we built a standard d-vector system with the naiv e average pooling, denoted by Baseline . The phonetic-attention model requires frame-lev el pho- netic features. W e built a DNN-HMM hybrid system using Kaldi following the WSJ S5 recipe. The training used 500 hours of Chinese speech data. The model is a TDNN, and each layer contains 512 nodes. The output layer contains 463 units, corresponding to the number of GMM senones. Once the model was trained, 463 -dimensional phone poste- riors were derived from the output layer and were used as phonetic features. The phonetic-attention system based on the phone posteriors is denoted by Att-P ost . Another type of phonetic features can be deri ved from the final af fine layer . T o compress the size of the feature vector , the Singular V alue De- composition (SVD) was applied to decompose the final affine matrix into two low-rank matrices, where the rank was set to 100 . The 100 -dimensional activ ations were read from the low-rank layer of the decomposed matrix, which we call bot- tleneck features. The phonetic-attention system based on the bottleneck features is denoted by Att-BN . Finally , we built a phone-blind attention system where the attention weight is computed from the speaker feature itself, rather than phonetic features. This approach is similar to the work in [19, 20], though the attention function is not trained. This system is denoted by Att-Spk . 5.3. Results The results in terms of the equal error rate (EER) are shown in T able 1, where the baseline system is based on the naiv e av erage pooling, while the three attention-based systems use attention models based on dif ferent features. For each system, it reports results with two frame-lev el metrics: cosine distance and cosine distance after LD A. The LDA model was trained on CSL T -7500, and the dimensionality of its projection space was set to 150 . There are four tasks in total: the TD task on CIIH, the TP task on DSDB, the TI short-duration task on Ali(S), and the TI long-duration task on Ali(L). The best performance is marked in bold face. From these results, it can be seen that on all these tasks, the attention-based systems outperform the baseline system, indicating that the naiv e average pooling is indeed problem- atic. When comparing these three attention-based systems, we find they perform quite different on different tasks. On the TD task CIIH and TP task DSDB, the phone-blind at- tention system Att-Spk seems slightly superior , while on the TI task Ali(S) and Ali(L), the two phonetic-attention systems are clearly better . This observation is understandable, as on the TD or the TP tasks, the phonetic variation in enrollment and test utterances are lar gely identical, so the appropriate alignment can be easily found by ev en a phone-blind atten- tion. On the TI tasks, howe ver , the phonetic variation is much more complex, for which additional phonetic information is required to align the enrollment and test utterances. Finally , comparing the two phonetic-attention systems, the Att-BN is consistently better . This indicates that the bottleneck feature is a more compact representation for the phonetic content. T able 1 . Performance of different systems on dif ferent tasks. Systems Metric EER(%) CIIH DSDB Ali(S) Ali(L) Baseline Cosine 3.71 1.02 9.24 4.95 LD A 2.49 0.70 5.84 2.44 Att-Spk Cosine 3.27 0.95 9.07 4.95 LD A 2.11 0.65 5.80 2.50 Att-Post Cosine 3.28 0.97 9.12 4.85 LD A 2.22 0.69 5.76 2.32 Att-BN Cosine 3.20 0.98 9.11 4.84 LD A 2.18 0.70 5.69 2.31 5.4. Analysis T o better understand the difference behavior of the phone- blind attention and the phonetic attention, we draw the align- ment produced by them on two samples from the TD and TI tasks respectively . The figures are sho wn in Fig. 3 and Fig. 4. 1 It can be seen that on the TD task, two attention approaches produce similar alignments, while the alignment produced by phonetic attention is more concentrated. This is not surpris- ing, as the phonetic features are short-term and change more 1 The observations of the TD and TP tasks are quite similar, so here the figure on the TP task is omitted. quickly than the speaker features. Actually , this might be a key problem of the present implementation of the phonetic attention, as the concentration means less frames in one utter - ance being aligned for each frame in the other utterance, lead- ing to unreliable scores. Nev ertheless, the explicit phonetic information does provide much more accurate alignments in the TI scenario, where the phonetic variation is complex and phone-blind attention may produce rather poor alignments. This can be seen from Fig. 4 that the aligned segments pro- duced by the phonetic attention show clear slopped patterns, which is more realistic than the flat patterns produced by the phone-blind attention. Fig. 3 . Alignment produced by the phone-blind and phonetic attentions on the TD task. Fig. 4 . Alignment produced by the phone-blind and phonetic attentions on the TI task. 6. CONCLUSIONS This paper proposed a phonetic-attention scoring approach for the d-vector speaker recognition system. This approach uses frame-lev el phonetic information to produce a soft align- ment between the enrollment and test utterances, and com- putes the matching score by emphasizing the aligned frame pairs. W e tested the method on text-dependent, text-prompted and text-independent tasks, and found that it delivered con- sistent performance improvement over the baseline system. The phonetic attention was also compared with a naive phone- blind attention, and the results showed that the phone-blind at- tention worked well in text-dependent and text-prompt tasks, but failed in text-independent tasks. Analysis was conducted to explain the observation. In the further work, we will study speaker features that change more slo wly . e.g., vo wel-only feature. It is also interesting to learn the value function. 7. REFERENCES [1] Douglas A Reynolds, Thomas F Quatieri, and Robert B Dunn, “Speaker verification using adapted Gaussian mixture models, ” Digital signal pr ocessing , vol. 10, no. 1-3, pp. 19–41, 2000. [2] Patrick Kenny , Gilles Boulianne, Pierre Ouellet, and Pierre Dumouchel, “Joint factor analysis versus eigen- channels in speaker recognition, ” IEEE T ransactions on Audio, Speech, and Language Processing , vol. 15, no. 4, pp. 1435–1447, 2007. [3] Najim Dehak, Patrick J Kenny , R ´ eda Dehak, Pierre Du- mouchel, and Pierre Ouellet, “Front-end factor analysis for speaker verification, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 19, no. 4, pp. 788–798, 2011. [4] Serge y Ioffe, “Probabilistic linear discriminant analy- sis, ” Computer V ision–ECCV , pp. 531–542, 2006. [5] Y un Lei, Nicolas Scheffer , Luciana Ferrer , and Mitchell McLaren, “ A novel scheme for speaker recognition using a phonetically-aware deep neural network, ” in ICASSP . IEEE, 2014, pp. 1695–1699. [6] Ehsan V ariani, Xin Lei, Erik McDermott, Ignacio Lopez Moreno, and Javier Gonzalez-Dominguez, “Deep neu- ral networks for small footprint text-dependent speaker verification, ” in ICASSP . IEEE, 2014, pp. 4052–4056. [7] Y uan Liu, Y anmin Qian, Nanxin Chen, T ianfan Fu, Y a Zhang, and Kai Y u, “Deep feature for te xt-dependent speaker verification, ” Speech Communication , vol. 73, pp. 1–13, 2015. [8] David Snyder , Pe gah Ghahremani, Daniel Povey , Daniel Garcia-Romero, Y ishay Carmiel, and Sanjeev Khudan- pur , “Deep neural network-based speaker embeddings for end-to-end speaker verification, ” in Spoken Lan- guage T echnology W orkshop . IEEE, 2016, pp. 165–170. [9] David Snyder , Daniel Garcia-Romero, Daniel Pov ey , and Sanjeev Khudanpur , “Deep neural network embed- dings for text-independent speaker verification, ” in In- terspeech , 2017, pp. 999–1003. [10] Lantian Li, Y ixiang Chen, Y ing Shi, Zhiyuan T ang, and Dong W ang, “Deep speaker feature learning for text- independent speaker verification, ” in Interspeec h , 2017, pp. 1542–1546. [11] Miao Zhang, Y ixiang Chen, Lantian Li, and Dong W ang, “Speaker recognition with cough, laugh and” wei”, ” arXiv pr eprint arXiv:1706.07860 , 2017. [12] Georg Heigold, Ignacio Moreno, Samy Bengio, and Noam Shazeer , “End-to-end text-dependent speaker verification, ” in ICASSP . IEEE, 2016, pp. 5115–5119. [13] Shi-Xiong Zhang, Zhuo Chen, Y ong Zhao, Jinyu Li, and Y ifan Gong, “End-to-end attention based text- dependent speaker verification, ” in Spoken Language T echnology W orkshop . IEEE, 2016, pp. 171–178. [14] Chunlei Zhang and Kazuhito Koishida, “End-to-end text-independent speaker verification with triplet loss on short utterances, ” in Interspeech , 2017. [15] Dong W ang, Lantian Li, Zhiyuan T ang, and Thomas Fang Zheng, “Deep speaker verification: Do we need end to end?, ” arXiv preprint , 2017. [16] Lantian Li, Dong W ang, Y ixiang Chen, Y ing Shi, Zhiyuan T ang, and Thomas Fang Zheng, “Deep fac- torization for speech signal, ” in ICASSP . IEEE, 2018. [17] Dzmitry Bahdanau, Kyunghyun Cho, and Y oshua Ben- gio, “Neural machine translation by jointly learning to align and translate, ” in ICLR , 2015. [18] W illiam Chan, Navdeep Jaitly , Quoc Le, and Oriol V inyals, “Listen, attend and spell: A neural network for large vocab ulary con versational speech recognition, ” in ICASSP . IEEE, 2016, pp. 4960–4964. [19] F A Rezaur rahman Chowdhury , Quan W ang, Igna- cio Lopez Moreno, and Li W an, “ Attention-based mod- els for text-dependent speaker v erification, ” in ICASSP . IEEE, 2018, pp. 5359–5363. [20] Y i Liu, Liang He, W eiwei Liu, and Jia Liu, “Explori ng a unified attention-based pooling framework for speaker verification, ” arXiv preprint , 2018. [21] Mohamed Adel, Mohamed Afify , and Akram Gaballah, “T ext-independent speaker verification based on deep neural networks and segmental dynamic time warping, ” arXiv pr eprint arXiv:1806.09932 , 2018. [22] David Snyder , Guoguo Chen, and Daniel Povey , “MU- SAN: A Music, Speech, and Noise Corpus, ” 2015, [23] T om K o, V ijayaditya Peddinti, Daniel Pove y , Michael L Seltzer , and Sanjee v Khudanpur , “ A study on data augmentation of rev erberant speech for robust speech recognition, ” in ICASSP . IEEE, 2017, pp. 5220–5224. [24] Daniel Pove y , Arnab Ghoshal, Gilles Boulianne, Lukas Burget, Ondrej Glembek, Nagendra Goel, Mirko Han- nemann, Petr Motlicek, Y anmin Qian, Petr Schwarz, et al., “The kaldi speech recognition toolkit, ” in W ork- shop on automatic speech r ecognition and understand- ing . IEEE Signal Processing Society , 2011.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment