Exploring Speech Enhancement with Generative Adversarial Networks for Robust Speech Recognition

We investigate the effectiveness of generative adversarial networks (GANs) for speech enhancement, in the context of improving noise robustness of automatic speech recognition (ASR) systems. Prior work demonstrates that GANs can effectively suppress …

Authors: Chris Donahue, Bo Li, Rohit Prabhavalkar

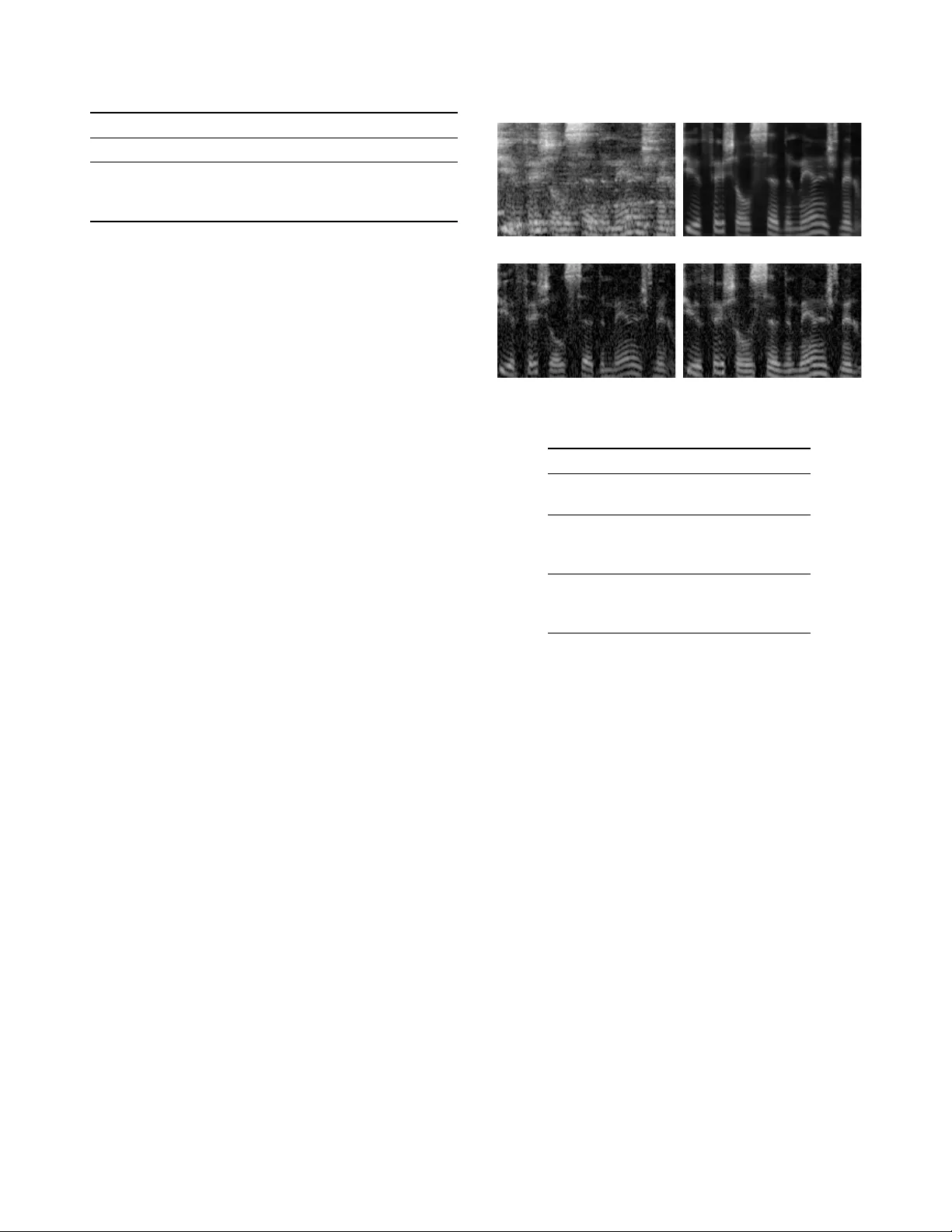

EXPLORING SPEECH ENHANCEMENT WITH GENERA TIVE AD VERSARIAL NETWORKS FOR R OBUST SPEECH RECOGNITION Chris Donahue ∗ † UC San Diego Department of Music cdonahue@ucsd.edu Bo Li, Rohit Prabhavalkar Google { boboli,prabhavalkar } @google.com ABSTRA CT W e in vestigate the effecti veness of generativ e adversarial networks (GANs) for speech enhancement, in the context of improving noise robustness of automatic speech recognition (ASR) systems. Prior work [1] demonstrates that GANs can effecti vely suppress additiv e noise in raw wa veform speech signals, improving perceptual quality metrics; howe ver this technique was not justified in the context of ASR. In this work, we conduct a detailed study to measure the effecti ve- ness of GANs in enhancing speech contaminated by both additiv e and rev erberant noise. Motiv ated by recent advances in image processing [2], we propose operating GANs on log- Mel filterbank spectra instead of wa veforms, which requires less computation and is more robust to reverberant noise. While GAN enhancement improves the performance of a clean-trained ASR system on noisy speech, it falls short of the performance achieved by con ventional multi-style train- ing (MTR). By appending the GAN-enhanced features to the noisy inputs and retraining, we achieve a 7 % WER improve- ment relativ e to the MTR system. Index T erms — Speech enhancement, spectral feature mapping, automatic speech recognition, generativ e adversar - ial networks, deep learning 1. INTRODUCTION Speech enhancement techniques aim to improv e the quality of speech by reducing noise. They are crucial components, either explicitly [3] or implicitly [4, 5], in ASR systems for noise robustness. Even with state-of-the-art deep learning- based ASR models, noise reduction techniques can still be beneficial [6]. Besides the conv entional enhancement tech- niques [3], deep neural networks have been widely adopted to either directly reconstruct clean speech [7, 8] or estimate masks [9, 10, 11] from the noisy signals. Different types of ∗ W ork performed as an intern at Google. † Copyright (c) 2017 IEEE. Personal use of this material is permitted. Howe ver , permission to reprint/republish this material for advertising or pro- motional purposes or for creating new collecti ve works for resale or redistri- bution to servers or lists, or to reuse an y copyrighted component of this work in other works must be obtained from the IEEE. networks ha ve also been inv estigated in the literature for en- hancement, such as denoising autoencoders [12], con volution networks [13] and recurrent networks [14]. In their limited history , GANs [15] hav e attracted atten- tion for their ability to synthesize con vincing images when trained on corpora of natural images. Refinements to netw ork architecture hav e improved the fidelity of the synthetic im- ages [16]. Isola et al. [2] demonstrate the effecti veness of GANs for image “translation” tasks, mapping images in one domain to related images in another . In spite of the success of GANs for image synthesis, e xploration on audio has been limited. Pascual et al. [1] demonstrate promising performance of GANs for speech enhancement in the presence of additiv e noise, posing enhancement as a translation task from noisy signals to clean ones. Their method, speech enhancement GAN (SEGAN), yields improvements to perceptual speech quality metrics over the noisy data and traditional enhance- ment baselines. Their inv estigation seeks to improve speech quality for telephony rather than ASR. In this work, we study the benefit of GAN-based speech enhancement for ASR. In order to limit the confounding fac- tors in our study , we use an existing ASR model trained on clean speech data to measure the ef fectiveness of GAN-based enhancement. T o gauge performance under more-realistic ASR conditions, we consider re verberation in addition to ad- ditiv e noise. W e first train a SEGAN model to map simulated noisy speech to the original clean speech in the time domain. Then, we measure the performance of the ASR model on noisy speech before and after enhancement by SEGAN. Our experiment indicates that SEGAN does not improve ASR performance under these noise conditions. T o address this, we refine the SEGAN method to operate on a time-frequency representation, specifically , log-Mel fil- terbank spectra. W ith this spectral featur e mapping (SFM) approach, we can pass the output of our enhancement model directly to the ASR model (Figure 1). While deep learning has previously been applied to SFM for ASR [17, 18, 19], our work is the first to use GANs for this task. Michelsanti et al. [20] employ GANs for SFM, b ut target speaker verifi- cation rather than ASR. Our frequency-domain approach im- prov es ASR performance dramatically , though performance Feature Extractor GAN-based Spectral Enhancer ASR Model Transcript Hypothesis Fig. 1 : System overvie w . is comparable to the same enhancement model trained with an L1 reconstruction loss. Anecdotally speaking, the GAN- enhanced spectra appear more realistic than the L1-enhanced spectra when visualized (Figure 3), s uggesting that ASR mod- els may not benefit from the fine-grained details that GAN enhancement produces. State-of-the-art ASR systems use MTR [21] to achiev e robustness to noise at inference time. While this strategy is known to be effecti ve, a resultant model may still benefit from enhancement as a preprocessing stage. T o measure this ef- fect, we also use an existing ASR model trained with MTR and compare its performance on noisy speech with and with- out enhancement. W e find that GAN-based enhancement de- grades performance of this model, e ven with retraining. Ho w- ev er , retraining the MTR model with both noisy and enhanced features in its input representation improv es performance. 2. GENERA TIVE AD VERSARIAL NETWORKS Generativ e adversarial networks (GANs) are unsupervised generativ e models that learn to produce realistic samples of a giv en dataset from low-dimensional, random latent vec- tors [15]. GANs consist of two models (usually neural net- works), a generator and a discriminator . The generator G maps latent v ectors drawn from some known prior p z to sam- ples: G : z 7→ ˆ y , where z ∼ p z . The discriminator D is tasked with determining if a giv en sample is real ( y ∼ p data , a sample from the real dataset) or fake ( G ( z ) ∼ p G , where p G is the implicit distrib ution of the generator when z ∼ p z ). The two models are pitted against each other in an adv ersarial framew ork. Real-world datasets often contain additional information associated with each example, e.g. the type of object depicted in an image. Conditional GANs (cGANs) [22] use this infor - mation x by providing it as input to the generator , typically in a one-hot representation: G : { x , z } 7→ ˆ y . After train- ing, we can sample from the generator’ s implicit posterior p G ( ˆ y | x ) by fixing x and sampling z ∼ p z . T o accom- plish this, G is trained to minimize the following objecti ve, while D is trained to maximize it: L cGAN ( G, D ) = E x,y ∼ p data [log D ( x , y )]+ E x ∼ p data , z ∼ p z [log(1 − D ( x , G ( x , z )))] . (1) Recently , researchers have used full-resolution images as conditioning information. Isola et al. [2] propose a cGAN ap- proach to address image-to-image “translation” tasks, where appropriate datasets consist of matched pairs of images ( x , y ) in two different domains. Their approach, dubbed pix2pix , uses a con volutional generator that recei ves as input an image x , a latent v ector z and produces G ( x , z ) : an image of iden- tical resolution to x . A conv olutional discriminator is sho wn pairs of images stacked along the channel axis and is trained to determine if the pair is real ( x , y ) or fake ( x , G ( x , z )) . For conditional image synthesis, prior w ork [23] demon- strates the ef fectiveness of combining the GAN objectiv e with an unstructured loss. Noting this, Isola et al. [2] use a hybrid objectiv e to optimize their generator, penalizing it for L1 re- construction error in addition to the adversarial objecti ve: min G max D V ( G, D ) = L cGAN ( G, D ) + 100 · L L 1 ( G ) , where L L 1 ( G ) = E x,y ∼ p data , z ∼ p z [ k y − G ( x , z ) k 1 ] . (2) 3. METHOD W e describe our approach to spectral feature mapping us- ing GANs, beginning by outlining the related time-domain SEGAN approach. 3.1. SEGAN Pascual et al. [1] propose SEGAN, a technique for enhanc- ing speech in the time domain. The SEGAN method is a 1D adaptation of the 2D pix2pix [2] approach. The fully- con volutional generator receiv es second-long ( 16384 samples at 16 kHz ) windo ws of noisy speech as input and is trained to output clean speech. During inference, the generator is used to enhance longer segments of speech by repeated application on one-second windows without o verlap. The generator’ s encoder consists of 11 layers of stride- 2 con volution with increasing depth, resulting in a feature map at the bottle-neck of 8 timesteps with depth 1024 . Here, the authors append a latent noise vector z of the same dimension- ality along the channel axis. The resultant 8 x 2048 matrix is input to an 11 -layer upsampling decoder , with skip connec- tions from corresponding input feature maps. As a departure from pix2pix, the authors remove batch normalization from the generator . Furthermore, they use 1D filters of width 31 instead of 2D filters of size 4 x 4 . They also substitute the tra- ditional GAN loss function with the least squares GAN ob- jectiv e [24]. In agreement with observations from [25], we found that the SEGAN generator learned to ignore z . W e hypothesize that latent vectors may be unnecessary giv en the presence of noise in the input. W e removed the latent vector from the gen- Fig. 2 : T ime-frequency FSEGAN enhancement strategy . G (composition of encoder G e and decoder G d ) maps stereo, noisy spectra x to enhanced G ( x ) . D recei ves as input either ( x , y ) or ( x , G ( x )) and decides if the pair is real or enhanced. erator altogether; the resultant deterministic model demon- strated improv ed performance in our experiments. 3.2. FSEGAN It is common practice in ASR to preprocess time-domain speech data into time-frequency spectral representations. Phase information of the signal is typically discarded; hence, an enhancement model only needs to reconstruct the magni- tude information of the time-frequency representation. W e hypothesize that performing GAN enhancement in the fre- quency domain will be more effecti ve than in the time do- main. W ith this motivation, we propose a frequency-domain SEGAN (FSEGAN), which performs spectral feature map- ping using an approach similar to pix2pix [2]. FSEGAN ingests time-windowed spectra of noisy speech and is trained to output clean speech spectra. The fully-con volutional FSEGAN generator contains 7 encoder and 7 decoder layers ( 4 x 4 filters with stride 2 and increasing depth), and features skip connections across the bottleneck between corresponding layers. The final decoder layer has linear activ ation and outputs a single channel. As with SEGAN, we exclude both batch normalization and la- tent codes z from our generator , resulting in a deterministic model. The discriminator contains 4 con volutional layers with 4 x 4 filters and a stride of 2 . A final 1 x 8 layer (stride 1 with sigmoid nonlinearity) aggregates the acti vations from 8 frequency bands into a single decision for each of 8 timesteps. W e train FSEGAN with the objecti ve in Equation 2. Other ar - chitectural details are identical to pix2pix [2]. 1 The FSEGAN approach is depicted in Figure 2. 1 W e modify the following open-source implementation: https://github.com/affinelayer/pix2pix-tensorflow 4. EXPERIMENTS 4.1. Dataset W e use the W all Street Journal (WSJ) corpus [26] as our source of clean speech data. Specifically , we train on the 16 kHz , speaker -independent SI-284 set ( 81 hours, 284 speakers, 37k utterances). W e perform validation on the dev93 set and e v aluate on the ev al92 set. W e use lar ge, stereo datasets of musical and ambient sig- nals as our additiv e noise sources for MTR. This data is col- lected from Y ouT ube and recordings of daily life en viron- ments. During training, we use discrete mixtures ranging from 0 dB to 30 dB SNR, a veraging 11 dB . At test time, the SNRs are slightly offset, ranging from 0 . 2 dB to 30 . 2 dB . As a source of rev erberation for MTR, we use a room sim- ulator as described in [27]. The simulator randomizes the po- sitions of the speech and noise sources, the position of a vir- tual stereo microphone, the T 60 of the re verberation, and the room geometry . Through this process, our monaural speech data becomes stereo. Room configurations for training and testing are drawn from distinct sets; they are randomized dur - ing training and fixed during testing. 4.2. ASR Model W e train a monaural listen, attend and spell (LAS) model [28] on the clean WSJ training data as described in Section 4.1, performing early stopping by the WER of the model on the validation set. T o compare the effecti veness of GAN-based enhancement to MTR, we also train the same model using MTR as described in Section 4.1, using only one channel of the noisy speech. W e refer to the clean-trained model as ASR- Clean and the MTR-trained model as ASR-MTR. T o preprocess the time-domain data, we first apply the short-time Fourier transform with a window size of 32 ms and a hop size of 10 ms . W e retain the magnitude spectrum of the output and discard the phase. Then, we calculate triangu- lar windo ws for a bank of 128 filters, where filter center fre- quencies are equally spaced on the Mel scale between 125 Hz and 7500 Hz . After applying this transform to the magnitude spectrum, we take the logarithm of the output and normalize each frequency bin to ha ve zero mean and unit v ariance. T o process these features, our LAS encoder contains two con volutional layers with filter sizes: 1) 3 x 5 x 1 x 32 , and 2) 3 x 3 x 32 x 32 . The activ ations of the second layer are passed to a bidirectional, con volutional LSTM layer [29, 30], fol- lowed by three bidirectional LSTM layers. The decoder contains a unidirectional LSTM with additi ve attention [31] whose outputs are fed to a softmax over characters. 4.3. GAN For our GAN experiments, we generate multi-style, matched pairs of noisy and clean speech in the manner described in T est Set Enhancer ASR-Clean WER ASR-MTR WER Clean None 11 . 9 14 . 3 MTR None 72 . 2 20 . 3 SEGAN 80 . 7 52 . 8 FSEGAN 33 . 3 25 . 4 T able 1 : Results of GAN enhancement experiments. Section 4.1. For our FSEGAN experiments, we transform these pairs into time-frequency spectra in a manner identical to that of the ASR model described in Section 4.2. W e frame the pairs into 1 . 28 s windows with 50% overlap and train with random minibatches of size 100 . The resultant SEGAN inputs are 20480 samples long and the FSEGAN inputs are 128 x 128 . In alignment with [1], we use no overlap during ev aluation. W e perform early stopping based on the WER of ASR-Clean on the enhanced validation set. 5. RESUL TS W e compute the WER of ASR-Clean and ASR-MTR on both the clean and MTR test sets. W e also compute the WER of both models on the MTR test set enhanced by SEGAN and FSEGAN. Results are sho wn in T able 1. While the WER of ASR-Clean ( 11 . 9% ) is not state-of-the-art, we focus more on relativ e performance with enhancement. Our previous work [32] has shown that LAS can approach state-of-the-art performance when trained on larger amounts of data. The SEGAN method degrades performance of ASR- Clean on the MTR test set by 12% relative. T o verify the accuracy of this result, we also ran an experiment to remove only additi ve noise with SEGAN: the conditions in the orig- inal paper . Under that condition, we found that SEGAN improv ed performance of ASR-Clean by 21% relativ e, indi- cating that SEGAN struggles to suppress rev erberation. In contrast, our FSEGAN method improv es the per- formance of ASR-Clean by 54% relati ve. While this is a dramatic improvement, it does not exceed the performance achiev ed with MTR training ( 33% vs. 20% WER). Fur- thermore, FSEGAN degraded performance for ASR-MTR, consistent with observations in [33]. W e sho w a visualization of FSEGAN enhancement in Fig- ure 3. The procedure appears to reduce both the presence of additiv e noise and rev erberant smearing. Despite this, the pro- cedure degrades performance of ASR-MTR. W e hypothesize that the enhancement process may be introducing hitherto- unseen distortions that compromise performance. 5.1. Retraining Experiments Hoping to improv e performance beyond that of MTR tr aining alone, we retrain ASR-MTR using FSEGAN-enhanced fea- tures. T o examine the effecti veness of the adv ersarial compo- nent of FSEGAN, we also experiment with training the same (a) Noisy speech input x (b) L1-trained output G ( x ) (c) Clean speech target y (d) FSEGAN output G ( x ) Fig. 3 : Noisy utterance enhanced by FSEGAN. Model WER (%) MTR Baseline * 20 . 3 + Stereo 19 . 0 MTR + FSEGAN Enhancer * 25 . 4 + Retraining 21 . 0 + Hybrid Retraining 17 . 6 MTR + L1-trained Enhancer * 21 . 4 + Retraining 18 . 0 + Hybrid Retraining 17 . 1 T able 2 : Results of ASR-MTR retraining. Ro ws marked with * are the same model under different enhancement conditions. enhancement model using only the L1 portion of the hybrid loss function ( L L 1 ( G ) from Equation 2). Considering that the model may benefit from knowledge of both the enhanced and noisy features, we also train a model to ingest these two representations stacked along the chan- nel axis. W e initialize this new hybrid model from the exist- ing ASR-MTR checkpoint, setting the additional parameters to zero to ensure identical performance at the start of train- ing. T o ensure that the hybrid model is not strictly benefiting from increased parametrization, we train an LAS model from scratch with stereo MTR input. Results for these experiments appear in T able 2. Retraining ASR-MTR with FSEGAN-enhanced features improv es performance by 17% relative to nai vely feeding them, b ut still falls short of MTR training. Hybrid retraining with both the original noisy and enhanced features improves performance further , exceeding the performance of stereo MTR training alone by 7% relati ve. Our results indicate that training the same enhancer with the L1 objectiv e achie ves better ASR performance than an adversarial approach, sug- gesting limited usefulness of GANs in this context. 6. CONCLUSIONS W e ha ve introduced FSEGAN, a GAN-based method for per- forming speech enhancement in the frequency domain, and demonstrated improvements in ASR performance o ver a prior time-domain approach. W e provide e vidence that, with re- training, FSEGAN can improve the performance of existing MTR-trained ASR systems. Our experiments indicate that, for ASR, simpler regression approaches may be preferable to GAN-based enhancement. FSEGAN appears to produce plausible spectra and may be more useful for telephonic ap- plications if paired with an in vertible feature representation. 7. A CKNO WLEDGEMENTS The authors would like to thank Arun Narayanan, Chanwoo Kim, Ke vin Wilson, and Rif A. Saurous for helpful comments and suggestions on this work. 8. REFERENCES [1] Santiago Pascual, Antonio Bonafonte, and Joan Serr ` a, “SEGAN: Speech Enhancement Generativ e Adversarial Net- work, ” in Pr oc. INTERSPEECH , 2017. [2] Phillip Isola, Jun-Y an Zhu, Tinghui Zhou, and Alex ei A Efros, “Image-to-image translation with conditional adversarial net- works, ” in Pr oc. CVPR , 2017. [3] Jingdong Chen, Jacob Benesty , Y iteng Arden Huang, and Eric J Diethorn, “Fundamentals of noise reduction, ” in Springer Handbook of Speech Pr ocessing , pp. 843–872. Springer , 2008. [4] Y ong Xu, Jun Du, Li-Rong Dai, and Chin-Hui Lee, “ An ex- perimental study on speech enhancement based on deep neural networks, ” IEEE Signal processing letters , v ol. 21, no. 1, pp. 65–68, 2014. [5] Bo Li, T ara N Sainath, Ron J W eiss, Ke vin W W ilson, and Michiel Bacchiani, “Neural Network Adaptive Beamforming for Robust Multichannel Speech Recognition, ” in Pr oc. IN- TERSPEECH , 2016. [6] Jinyu Li, Y an Huang, and Y ifan Gong, “Improved cepstra minimum-mean-square-error noise reduction algorithm for ro- bust speech recognition, ” in Proc. ICASSP . IEEE, 2017. [7] Xue Feng, Y aodong Zhang, and James Glass, “Speech feature denoising and dere verberation via deep autoencoders for noisy rev erberant speech recognition, ” in Proc. ICASSP . IEEE, 2014. [8] Y ong Xu, Jun Du, Li-Rong Dai, and Chin-Hui Lee, “ A regres- sion approach to speech enhancement based on deep neural networks, ” IEEE/ACM T ransactions on ASLP , vol. 23, no. 1, pp. 7–19, 2015. [9] DeLiang W ang and Jitong Chen, “Supervised speech separa- tion based on deep learning: an overvie w , ” , 2017. [10] Bo Li and Khe Chai Sim, “ A spectral masking approach to noise-robust speech recognition using deep neural networks, ” IEEE/A CM T ransactions on ASLP , vol. 22, no. 8, pp. 1296– 1305, 2014. [11] Arun Narayanan and DeLiang W ang, “Ideal ratio mask estima- tion using deep neural networks for rob ust speech recognition, ” in Pr oc. ICASSP . IEEE, 2013. [12] Xugang Lu, Y u Tsao, Shigeki Matsuda, and Chiori Hori, “Speech enhancement based on deep denoising autoencoder ., ” in Pr oc. INTERSPEECH , 2013. [13] Szu-W ei Fu, Y u Tsao, and Xugang Lu, “Snr -aware con volu- tional neural network modeling for speech enhancement., ” in Pr oc. INTERSPEECH , 2016. [14] Felix W eninger, Hakan Erdogan, and et al. , “Speech enhance- ment with lstm recurrent neural networks and its application to noise-robust asr , ” in International Confer ence on Latent V ariable Analysis and Signal Separation . Springer , 2015, pp. 91–99. [15] Ian Goodfello w , Jean Pouget-Abadie, and et al. , “Generativ e adversarial nets, ” in Pr oc. NIPS , 2014. [16] Alec Radford, Luke Metz, and Soumith Chintala, “Unsuper - vised representation learning with deep con volutional genera- tiv e adversarial networks, ” in Pr oc. ICLR , 2016. [17] Andrew L Maas, Quoc V Le, and et al. , “Recurrent neural networks for noise reduction in robust ASR, ” in Pr oc. INTER- SPEECH , 2012. [18] Kun Han, Y anzhang He, Deblin Bagchi, Eric Fosler -Lussier , and DeLiang W ang, “Deep neural network based spectral fea- ture mapping for robust speech recognition, ” in Pr oc. INTER- SPEECH , 2015. [19] Arun Narayanan and DeLiang W ang, “Impro ving robustness of deep neural network acoustic models via speech separation and joint adaptive training, ” IEEE/ACM transactions on ASLP , vol. 23, no. 1, pp. 92–101, 2015. [20] Daniel Michelsanti and Zheng-Hua T an, “Conditional gener- ativ e adversarial networks for speech enhancement and noise- robust speak er verification, ” in Pr oc. INTERSPEECH , 2017. [21] R Lippmann, Edw ard Martin, and D P aul, “Multi-style training for robust isolated-word speech recognition, ” in Pr oc. ICASSP . IEEE, 1987. [22] Mehdi Mirza and Simon Osindero, “Conditional generati ve adversarial nets, ” , 2014. [23] Deepak Pathak, Philipp Krahenbuhl, Jeff Donahue, Tre vor Darrell, and Alexei A Efros, “Context encoders: Feature learn- ing by inpainting, ” in Pr oc. CVPR , 2016. [24] Xudong Mao, Qing Li, and et al. , “Least squares generativ e adversarial networks, ” , 2016. [25] Michael Mathieu, Camille Couprie, and Y ann LeCun, “Deep multi-scale video prediction beyond mean square error, ” in Pr oc. ICLR , 2016. [26] Douglas B Paul and Janet M Bak er, “The design for the W all Street Journal-based CSR corpus, ” in Pr oceedings of the work- shop on Speech and Natural Language . Association for Com- putational Linguistics, 1992, pp. 357–362. [27] Chanwoo Kim, Ananya Misra, K ean Chin, Thad Hughes, Arun Narayanan, T ara Sainath, and Michiel Bacchiani, “Gener- ation of large-scale simulated utterances in virtual rooms to train deep-neural networks for far -field speech recognition in Google Home, ” in Pr oc. INTERSPEECH , 2017. [28] W illiam Chan, Navdeep Jaitly , Quoc V Le, and Oriol V inyals, “Listen, attend and spell, ” , 2015. [29] Y u Zhang, W illiam Chan, and Navdeep Jaitly , “V ery deep con volutional networks for end-to-end speech recognition, ” in Pr oc. ICASSP . IEEE, 2017. [30] SHI Xingjian, Zhourong Chen, Hao W ang, Dit-Y an Y eung, W ai-Kin W ong, and W ang-chun W oo, “Conv olutional LSTM network: A machine learning approach for precipitation now- casting, ” in Pr oc. NIPS , 2015. [31] Dzmitry Bahdanau, Kyunghyun Cho, and Y oshua Bengio, “Neural machine translation by jointly learning to align and translate, ” in Pr oc. ICLR , 2015. [32] Rohit Prabha valkar , Kanishka Rao, T ara N Sainath, Bo Li, Leif Johnson, and Navdeep Jaitly , “ A comparison of sequence-to- sequence models for speech recognition, ” in Pr oc. INTER- SPEECH , 2017. [33] Arun Narayanan and DeLiang W ang, “Joint noise adaptiv e training for robust automatic speech recognition, ” in Proc. ICASSP . IEEE, 2014.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment