Robust Learning of Fixed-Structure Bayesian Networks

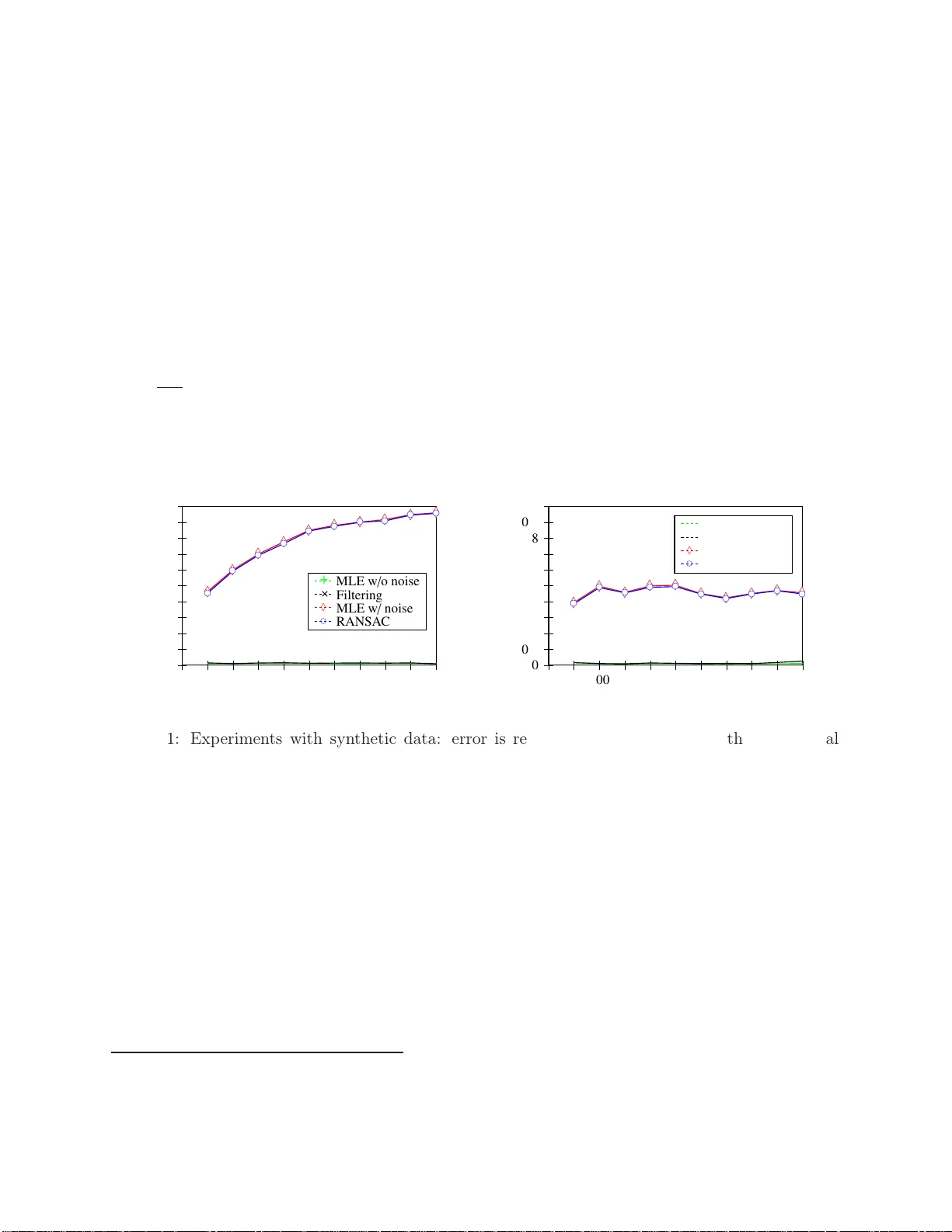

We investigate the problem of learning Bayesian networks in a robust model where an $\epsilon$-fraction of the samples are adversarially corrupted. In this work, we study the fully observable discrete case where the structure of the network is given.…
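To make the corruption model concrete, here is a minimal sketch, not the authors' algorithm, of what an $\epsilon$-fraction of adversarially replaced samples does to the naive empirical estimate of a conditional probability. The two-node network `X1 -> X2`, its parameters, and the helper names `corrupt` and `empirical_conditional` are all hypothetical choices made for illustration.

```python
import random


def corrupt(samples, eps, bad_sample):
    """Replace an eps-fraction of the samples with one adversary-chosen point."""
    n_bad = int(eps * len(samples))
    return [bad_sample] * n_bad + samples[n_bad:]


def empirical_conditional(samples, parent_value):
    """Naive (non-robust) estimate of Pr[X2 = 1 | X1 = parent_value]."""
    matching = [x2 for x1, x2 in samples if x1 == parent_value]
    return sum(matching) / len(matching)


# Hypothetical fixed structure X1 -> X2 over binary variables (assumed values):
# Pr[X1 = 1] = 0.05 and Pr[X2 = 1 | X1] = 0.5 for both values of X1.
rng = random.Random(0)


def draw():
    x1 = 1 if rng.random() < 0.05 else 0
    x2 = 1 if rng.random() < 0.5 else 0
    return (x1, x2)


clean = [draw() for _ in range(20000)]

# The adversary spends its whole budget on the rare parent value X1 = 1.
noisy = corrupt(clean, eps=0.05, bad_sample=(1, 1))

clean_est = empirical_conditional(clean, 1)
noisy_est = empirical_conditional(noisy, 1)
print(f"clean estimate: {clean_est:.3f}, corrupted estimate: {noisy_est:.3f}")
```

Because the parent value `X1 = 1` is itself rare, the corrupted points make up a large share of the samples that condition on it, so the naive conditional estimate can shift by far more than $\epsilon$. This is one way to see why the robust setting calls for more than per-entry empirical frequencies.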

Authors: Yu Cheng, Ilias Diakonikolas, Daniel Kane