5Gperf: signal processing performance for 5G

The 5Gperf project was conducted by Huawei research teams in 2016-17. It was concerned with the acceleration of signal-processing algorithms for a 5G base-station prototype. It improved on already optimized SIMD-parallel CPU algorithms and designed a new software tool for higher programmer productivity when converting MATLAB code to optimized C.

💡 Research Summary

The paper reports on the 5Gperf project carried out by Huawei’s research teams in 2016‑2017, whose goal was to accelerate the signal‑processing chain of a 5G base‑station prototype. The prototype consists of a massive‑MIMO front‑end with 64 transceivers covering a 100 MHz band, driven by five Huawei E9000 blade servers interconnected via InfiniBand. Each CPU core runs an instance of a two‑pipeline processing chain; each stage of the pipeline implements a specialized algorithm originally designed in MATLAB. Converting these MATLAB models to high‑performance C code is traditionally a labor‑intensive process, often requiring a man‑month per algorithm, and the resulting kernels are small enough that naïve SIMD vectorization yields limited gains.

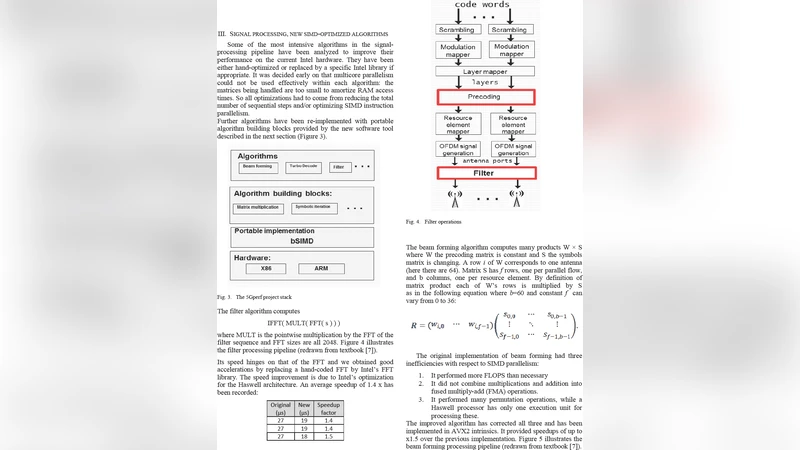

The project tackled two complementary challenges. First, it applied aggressive SIMD‑parallel optimizations to the most compute‑intensive stages—FFT, channel estimation, bit‑interleaving, and turbo decoding. By carefully aligning data, unrolling loops, and exploiting the full width of vector registers on both x86 and ARM architectures, the team achieved speed‑ups ranging from 20 % to 100 % across the pipeline. Turbo decoding, in particular, saw a 1.7‑to‑1.9× acceleration, allowing the number of CPU cores required for parallel multi‑stream execution to drop from 20 to 12, which translates directly into energy savings.
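To make the kind of loop shaping described above concrete, here is a minimal sketch in portable C. It is not the project's code. It assumes an add-compare-select step of the sort found in max-log-MAP turbo decoders, and the function name `acs_int16` and the `LANES` constant are illustrative. The inner loop has a fixed trip count of eight 16-bit values, which matches one 128-bit vector register, so an optimizing compiler can usually unroll it and emit vector add and max instructions on either x86 or ARM.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define LANES 8  /* eight 16-bit values fill one 128-bit vector register */

/* Add-compare-select over n metrics:
 *   out[i] = max(a[i] + ga[i], b[i] + gb[i])
 * n must be a multiple of LANES; callers pad their buffers to satisfy this.
 * Sums are truncated back to 16 bits; a production decoder would normally
 * use saturating arithmetic instead (assumption, not taken from the paper). */
void acs_int16(int16_t *restrict out,
               const int16_t *restrict a, const int16_t *restrict ga,
               const int16_t *restrict b, const int16_t *restrict gb,
               size_t n)
{
    assert(n % LANES == 0);
    for (size_t i = 0; i < n; i += LANES) {
        /* Fixed-trip-count inner loop: a candidate for full unrolling and
         * for mapping onto one register-wide add pair plus one max. */
        for (size_t j = 0; j < LANES; ++j) {
            int16_t p = (int16_t)(a[i + j] + ga[i + j]);
            int16_t q = (int16_t)(b[i + j] + gb[i + j]);
            out[i + j] = p > q ? p : q;
        }
    }
}
```

The `restrict` qualifiers tell the compiler that the buffers do not overlap, which is often what allows vectorization in the first place. Allocating the buffers on 16-byte boundaries (for example with `aligned_alloc`) lets the generated code use aligned loads.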

Second, the project introduced a novel software tool called the “Optimizer” that dramatically improves programmer productivity when porting MATLAB code to optimized C. The Optimizer works by recognizing special pragma annotations embedded in the C source. These pragmas specify which algorithmic building blocks (e.g., matrix multiplication, FFT, filtering) should replace the annotated code region. The tool consults a database of pre‑implemented, highly tuned SIMD kernels built on Numscale’s bSIMD library, selects the best implementation for the given matrix size and target architecture, and injects the generated code along with any required includes or helper functions. This approach reduces the manual conversion effort by a factor of five, while delivering performance comparable to hand‑tuned code. Moreover, because the underlying kernels are portable across ARM and x86, the same source can be compiled for heterogeneous hardware platforms without modification.
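The selection step can be pictured with a small sketch in C. Everything in it is hypothetical: the summary does not give the Optimizer's pragma syntax or the layout of its kernel database, so the annotation text, `kernel_db`, `select_kernel`, and `apply_weights` are invented for illustration. The sketch also looks the kernel up at run time, whereas the tool described above injects the chosen code into the source before compilation.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

typedef void (*matmul_fn)(const float *a, const float *b, float *c, size_t n);

/* Fallback: plain row-major n x n product, c = a * b. */
void matmul_generic(const float *a, const float *b, float *c, size_t n)
{
    for (size_t i = 0; i < n; ++i)
        for (size_t j = 0; j < n; ++j) {
            float acc = 0.0f;
            for (size_t k = 0; k < n; ++k)
                acc += a[i * n + k] * b[k * n + j];
            c[i * n + j] = acc;
        }
}

/* Stand-in for a tuned fixed-size kernel: constant trip counts let the
 * compiler unroll and vectorize. A real entry would wrap a SIMD routine. */
void matmul_4x4(const float *a, const float *b, float *c, size_t n)
{
    assert(n == 4);
    for (size_t i = 0; i < 4; ++i)
        for (size_t j = 0; j < 4; ++j)
            c[i * 4 + j] = a[i * 4 + 0] * b[0 * 4 + j]
                         + a[i * 4 + 1] * b[1 * 4 + j]
                         + a[i * 4 + 2] * b[2 * 4 + j]
                         + a[i * 4 + 3] * b[3 * 4 + j];
}

typedef struct {
    const char *block;  /* building-block name used in the annotation */
    size_t n;           /* matrix size this entry is tuned for; 0 = any */
    matmul_fn fn;
} kernel_entry;

/* Hypothetical database: most specific entries first, fallback last. */
static const kernel_entry kernel_db[] = {
    { "matmul", 4, matmul_4x4 },
    { "matmul", 0, matmul_generic },
};

matmul_fn select_kernel(const char *block, size_t n)
{
    for (size_t i = 0; i < sizeof kernel_db / sizeof kernel_db[0]; ++i)
        if (strcmp(kernel_db[i].block, block) == 0 &&
            (kernel_db[i].n == n || kernel_db[i].n == 0))
            return kernel_db[i].fn;
    return NULL;  /* no kernel registered for this building block */
}

/* Annotated call site. The annotation is shown as a comment because its
 * syntax is invented here:
 *     #pragma optimizer replace(matmul) size(4)
 */
void apply_weights(const float *w, const float *x, float *y)
{
    matmul_fn kernel = select_kernel("matmul", 4);
    kernel(w, x, y, 4);
}
```

Ordering the table from most to least specific keeps the lookup a single linear scan, and adding a kernel for a new size or architecture only means adding a row.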

Experimental validation was performed on the actual field‑test setup, which involved 26 cell phones connected to the antenna array. The optimized pipeline processed real traffic in continuous mode, confirming that the achieved speed‑ups are sufficient to meet the stringent latency and throughput requirements of 5G‑NR. The authors also note that memory bandwidth, rather than raw compute, remains a limiting factor for many of the small kernels, underscoring the importance of cache‑friendly data layouts and prefetching strategies employed in the SIMD optimizations.
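One common example of a cache-friendly layout for signal-processing kernels is to store complex samples in planar form (all real parts, then all imaginary parts) rather than interleaved. The sketch below illustrates that general technique in C. It is an assumption about what such a layout could look like, not a description of the layout used in 5Gperf, and the function names are illustrative.

```c
#include <assert.h>
#include <stddef.h>

/* Convert interleaved I/Q samples (re0, im0, re1, im1, ...) to planar arrays. */
void deinterleave(const float *iq, float *re, float *im, size_t n)
{
    assert(iq && re && im);
    for (size_t i = 0; i < n; ++i) {
        re[i] = iq[2 * i];
        im[i] = iq[2 * i + 1];
    }
}

/* Element-wise complex product on planar data. Every array is read or
 * written with unit stride, so vector loads need no shuffles and each
 * fetched cache line is fully used. */
void cmul_planar(const float *restrict a_re, const float *restrict a_im,
                 const float *restrict b_re, const float *restrict b_im,
                 float *restrict out_re, float *restrict out_im, size_t n)
{
    for (size_t i = 0; i < n; ++i) {
        out_re[i] = a_re[i] * b_re[i] - a_im[i] * b_im[i];
        out_im[i] = a_re[i] * b_im[i] + a_im[i] * b_re[i];
    }
}
```

The conversion costs one extra pass over the data, so it tends to pay off when several kernels in a row can work on the planar form before the result is interleaved again.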

In conclusion, the 5Gperf project demonstrates that a combination of low‑level SIMD engineering and high‑level automated code generation can yield substantial performance gains (20 %‑100 %) and significant reductions in development time for 5G base‑station signal processing. The Optimizer’s pragma‑driven workflow provides a scalable path for future algorithmic upgrades, and the SIMD‑accelerated kernels lay a solid foundation for upcoming 5G‑NR and massive‑MIMO deployments that demand real‑time processing at scale. The paper ends with acknowledgments to the contributors and a brief outlook on extending the toolchain to more complex algorithms and further power‑efficiency improvements.
