OS Scheduling Algorithms for Improving the Performance of Multithreaded Workloads

Major chip manufacturers have all introduced multicore microprocessors. Multi-socket systems built from these processors are used for running various server applications. However to the best of our knowledge current commercial operating systems are not optimized for multi-threaded workloads running on such servers. Cache-to-cache transfers and remote memory accesses impact the performance of such workloads. This paper presents a unified approach to optimizing OS scheduling algorithms for both cache-to-cache transfers and remote DRAM accesses that also takes cache affinity into account. By observing the patterns of local and remote cache-to-cache transfers as well as local and remote DRAM accesses for every thread in each scheduling quantum and applying different algorithms, we come up with a new schedule of threads for the next quantum taking cache affinity into account. This new schedule cuts down both remote cache-to-cache transfers and remote DRAM accesses for the next scheduling quantum and improves overall performance. We present two algorithms of varying complexity for optimizing cache-to-cache transfers. One of these is a new algorithm which is relatively simpler and performs better when combined with algorithms that optimize remote DRAM accesses. For optimizing remote DRAM accesses we present two algorithms. Though both algorithms differ in algorithmic complexity we find that for our workloads they perform equally well. We used three different synthetic workloads to evaluate these algorithms. We also performed sensitivity analysis with respect to varying remote cache-to-cache transfer latency and remote DRAM latency. We show that these algorithms can cut down overall latency by up to 16.79% depending on the algorithm used.

💡 Research Summary

The paper tackles a pressing performance problem in modern server platforms that combine multicore processors with multi‑socket NUMA architectures. While commercial operating systems excel at balancing CPU load and minimizing latency for single‑threaded or loosely coupled workloads, they largely ignore two sources of overhead that become dominant for tightly coupled multithreaded applications: (1) remote cache‑to‑cache transfers caused by the cache‑coherency protocol and (2) remote DRAM accesses that suffer from the non‑uniform memory latency of NUMA systems. Both phenomena increase execution time, especially when the application exhibits high inter‑thread communication or when a large fraction of memory references target a remote memory node.

Problem Formulation

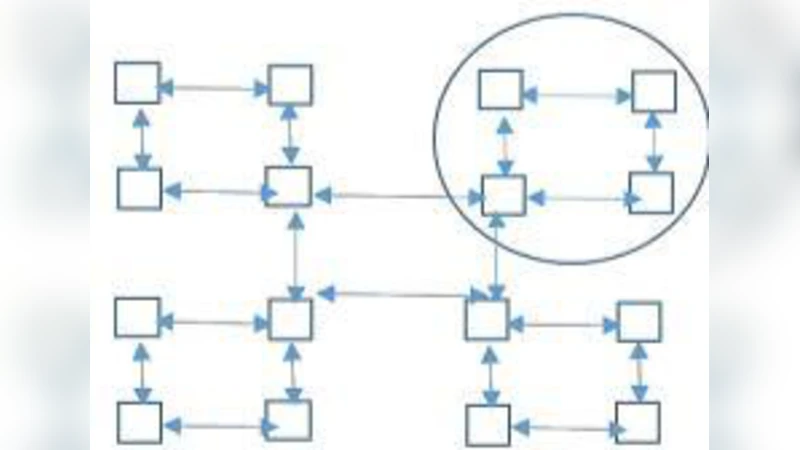

For each scheduling quantum the authors collect four counters for every thread i: local cache‑to‑cache transfers (C_i^L), remote cache‑to‑cache transfers (C_i^R), local DRAM accesses (M_i^L), and remote DRAM accesses (M_i^R). These counters are obtained via hardware performance events and page‑fault tracing, producing a thread‑to‑thread transfer graph (weights = number of cache lines exchanged) and a thread‑to‑memory affinity map (weights = number of accesses to each NUMA node). The goal for the next quantum is to assign each thread to a socket such that the total cost of remote cache traffic and remote memory traffic is minimized, while preserving cache affinity (i.e., avoiding unnecessary thread migrations that would evict useful cache lines).

Algorithms for Cache‑to‑Cache Optimization

Two strategies are explored.

- Greedy Pairing: Sort thread pairs by descending remote transfer volume and co‑locate each pair on the same socket if possible. This runs in (O(N \log N)) and provides a quick, baseline improvement.

- Hill‑Climbing Heuristic: Formulate the total remote transfer cost as (\sum_{i,j} w_{ij},d(s_i,s_j)) where (w_{ij}) is the transfer weight and (d(s_i,s_j)) is 0 for the same socket and 1 otherwise. Starting from the greedy solution, the algorithm repeatedly swaps the socket assignments of two threads if the swap reduces the objective. The process stops when no improving swap exists. Empirically the method converges in a few hundred iterations for up to a few hundred threads, yielding a near‑optimal placement with modest runtime overhead (≈0.8 ms for 100 threads).

Algorithms for Remote DRAM Optimization

Again two approaches are presented.

- Memory‑Affinity Placement: For each thread, identify the NUMA node that receives the majority of its accesses and pin the thread to the socket physically attached to that node. This eliminates most remote DRAM latency but may increase remote cache traffic.

- Mixed‑Cost Minimization: Define a combined objective

\

Comments & Academic Discussion

Loading comments...

Leave a Comment