DNN-based Source Enhancement to Increase Objective Sound Quality Assessment Score

We propose a training method for deep neural network (DNN)-based source enhancement to increase objective sound quality assessment (OSQA) scores such as the perceptual evaluation of speech quality (PESQ). In many conventional studies, DNNs have been …

Authors: Yuma Koizumi, Kenta Niwa, Yusuke Hioka

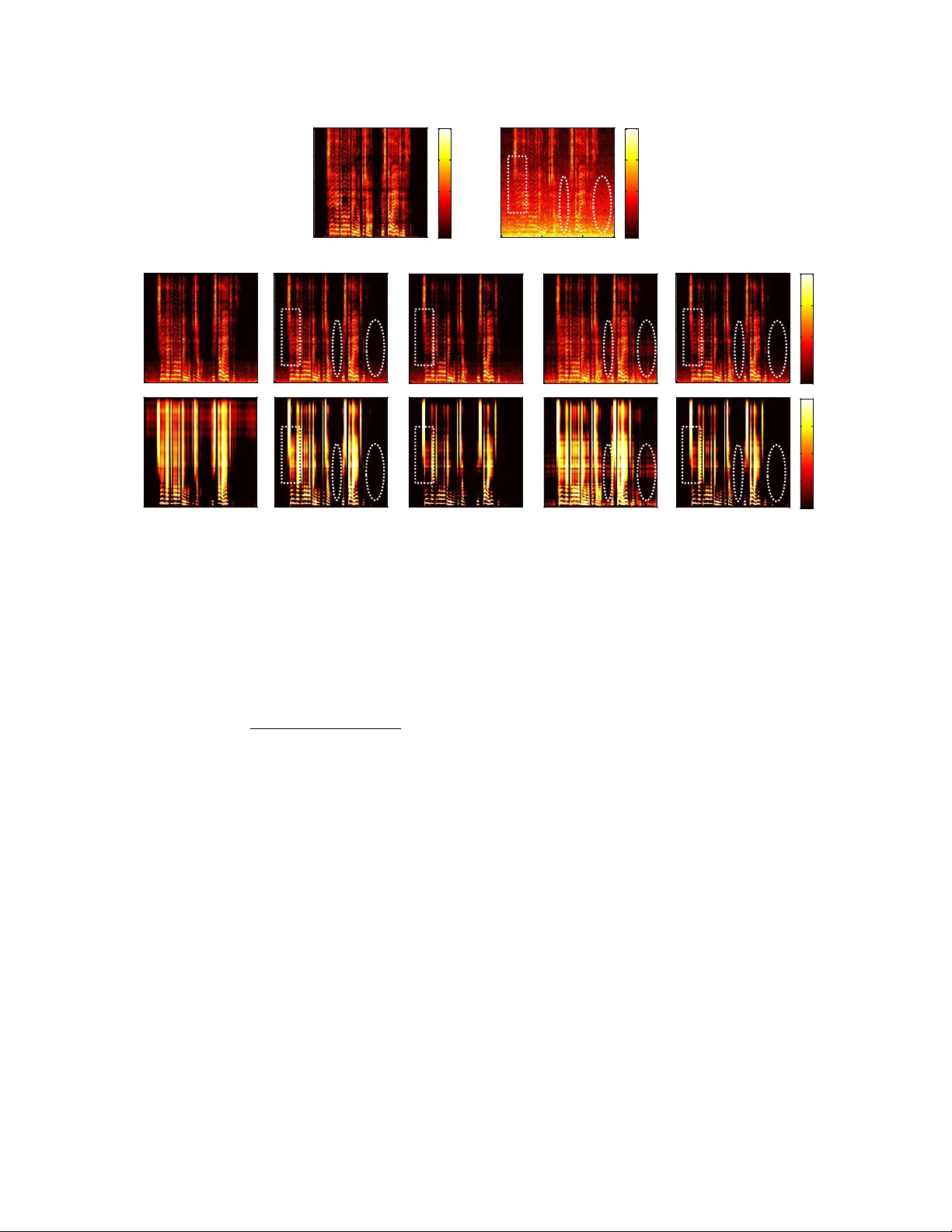

Yuma Koizumi¹, Member, IEEE, Kenta Niwa¹, Member, IEEE, Yusuke Hioka², Senior Member, IEEE, Kazunori Kobayashi¹, and Yoichi Haneda³, Senior Member, IEEE

Abstract: We propose a training method for deep neural network (DNN)-based source enhancement to increase objective sound quality assessment (OSQA) scores such as the perceptual evaluation of speech quality (PESQ). In many conventional studies, DNNs have been used as a mapping function to estimate time-frequency masks and trained to minimize an analytically tractable objective function such as the mean squared error (MSE). Since OSQA scores have been used widely for sound-quality evaluation, constructing DNNs to increase OSQA scores would be better than using the minimum-MSE to create high-quality output signals. However, since most OSQA scores are not analytically tractable, i.e., they are black boxes, the gradient of the objective function cannot be calculated by simply applying back-propagation. To calculate the gradient of the OSQA-based objective function, we formulated a DNN optimization scheme on the basis of black-box optimization, which is used for training a computer that plays a game. For a black-box-optimization scheme, we adopt the policy gradient method for calculating the gradient on the basis of a sampling algorithm. To simulate output signals using the sampling algorithm, DNNs are used to estimate the probability-density function of the output signals that maximize OSQA scores. The OSQA scores are calculated from the simulated output signals, and the DNNs are trained to increase the probability of generating the simulated output signals that achieve high OSQA scores. Through several experiments, we found that OSQA scores significantly increased by applying the proposed method, even though the MSE was not minimized.
Index Terms: Sound-source enhancement, time-frequency mask, deep learning, objective sound quality assessment (OSQA) score.

¹ NTT Media Intelligence Laboratories, NTT Corporation, Tokyo, Japan (e-mail: koizumi.yuma@ieee.org, {niwa.kenta, kobayashi.kazunori}@lab.ntt.co.jp)
² Department of Mechanical Engineering, University of Auckland, 20 Symonds Street, Auckland, 1010 New Zealand (e-mail: yusuke.hioka@ieee.org)
³ Department of Informatics, The University of Electro-Communications, Tokyo, Japan (e-mail: haneda.yoichi@uec.ac.jp)
Copyright (c) 2018 IEEE. This article is the "accepted" version. Digital Object Identifier: 10.1109/TASLP.2018.2842156

I. INTRODUCTION

Sound-source enhancement has been studied for many years [1]–[6] because of the high demand for its use in various practical applications such as automatic speech recognition [7]–[9], hands-free telecommunication [10], [11], hearing aids [12]–[15], and immersive audio field representation [16], [17]. In this study, we aimed at generating an enhanced target source with high listening quality because the processed sounds are assumed to be perceived by humans.

Recently, deep learning [18] has been successfully used for sound-source enhancement [8], [15], [19]–[35]. In many of these conventional studies, deep neural networks (DNNs) were used as a regression function to estimate time-frequency (T-F) masks [19]–[22] and/or amplitude spectra of the target source [23]–[31]. The parameters of the DNNs were trained using back-propagation [36] to minimize an analytically tractable objective function such as the mean squared error (MSE) between supervised outputs and DNN outputs.
In recent studies, advanced analytical objective functions were used, such as the maximum likelihood (ML) [31], [32], the combination of multiple types of MSE [25]–[27], the Kullback-Leibler and/or Itakura-Saito divergence [33], the modified short-time objective intelligibility (STOI) measure [22], the clustering cost [34], and the discriminative cost of a clean target source and output signal using a generative adversarial network (GAN) [35].

When output sound is perceived by humans, the objective function that reflects human perception may not be analytically tractable, i.e., it is a black-box function. In the past few years, objective sound quality assessment (OSQA) scores, such as the perceptual evaluation of speech quality (PESQ) [37] and STOI [38], have been commonly used to evaluate output sound quality. Thus, it might be better to construct DNNs to increase OSQA scores directly. However, since typical OSQA scores are not analytically defined (i.e., they are black-box functions), the gradient of the objective function cannot be calculated by simply applying back-propagation.

We previously proposed a DNN training method to estimate T-F masks and increase OSQA scores [39]. To overcome the problem that the objective function to maximize the OSQA scores is not analytically tractable, we developed a DNN-training method on the basis of the black-box optimization framework [40], as used in predicting the winning percentage of the game Go [41]. The basic idea of black-box optimization is to estimate a gradient from randomly simulated output. For example, in the training of a DNN for the Go-playing computer, the computer determines a "move" (where to put a Go stone) depending on the DNN output. Then, when the computer wins the game, a gradient is calculated to increase the selection probability of the selected "moves".
We adopt this strategy to increase the OSQA scores; some output signals are randomly simulated, and a DNN is trained to increase the generation probability of the simulated output signals that achieved high OSQA scores. For the first trial, we prepared a finite number of T-F mask templates and trained DNNs to select the best template that maximizes the OSQA score. Although we found that the OSQA scores increased using this method, the output performance could be improved further by extending the template-selection scheme to a more flexible T-F mask design scheme.

Fig. 1. Concept of proposed method.

In this study, to arbitrarily estimate T-F masks, we modified the DNN source enhancement architecture to estimate the latent parameters in a continuous probability density function (PDF) of the T-F-mask-processed output signals, as shown in Fig. 1. To calculate the gradient of the objective function, we adopt the policy gradient method [42] as a black-box optimization scheme. With our method, the estimated latent parameters construct a continuous PDF as the "policy" of T-F-mask estimation to increase OSQA scores. On the basis of this policy, the output signals are directly simulated using the sampling algorithm. Then, the gradient of the DNN is estimated to increase or decrease the generation probability of output signals with high or low OSQA scores, respectively. The sampling from a continuous PDF causes the estimate of the gradient to fluctuate, resulting in unstable training behavior. To avoid this problem, we additionally formulate two tricks: i) score normalization to reduce the variance in the estimated gradient, and ii) a sampling algorithm that simulates output signals satisfying the constraint of T-F mask processing.

The rest of this paper is organized as follows. Section II introduces DNN source enhancement based on the ML approach.
In Section III, we propose our DNN training method to increase OSQA scores on the basis of black-box optimization. After investigating the sound quality of output signals through several experiments in Section IV, we conclude this paper in Section V.

II. CONVENTIONAL METHOD

A. Sound source enhancement with time-frequency mask

Let us consider the problem of estimating a target source $S_{\omega,\tau} \in \mathbb{C}$, which is surrounded by ambient noise $N_{\omega,\tau} \in \mathbb{C}$. A signal observed with a single microphone $X_{\omega,\tau} \in \mathbb{C}$ is assumed to be modeled as

$$X_{\omega,\tau} = S_{\omega,\tau} + N_{\omega,\tau}, \tag{1}$$

where $\omega \in \{1, 2, ..., \Omega\}$ and $\tau \in \{1, 2, ..., T\}$ denote the frequency and time indices, respectively. In sound-source enhancement using T-F masks, the output signal $\hat{S}_{\omega,\tau}$ is obtained by multiplying a T-F mask by $X_{\omega,\tau}$ as

$$\hat{S}_{\omega,\tau} = G_{\omega,\tau} X_{\omega,\tau}, \tag{2}$$

where $0 \le G_{\omega,\tau} \le 1$ is a T-F mask. The ideal ratio mask (IRM) $G^{\mathrm{IRM}}_{\omega,\tau}$ [8] is an implementation of a T-F mask, which is defined by

$$G^{\mathrm{IRM}}_{\omega,\tau} = \frac{|S_{\omega,\tau}|}{|S_{\omega,\tau}| + |N_{\omega,\tau}|}. \tag{3}$$

The IRM maximizes the signal-to-noise ratio (SNR) when the phase spectrum of $S_{\omega,\tau}$ coincides with that of $N_{\omega,\tau}$. However, this assumption is rarely satisfied in practice. To compensate for this mismatch, the phase sensitive spectrum approximation (PSA) [19], [20] was proposed:

$$G^{\mathrm{PSA}}_{\omega,\tau} = \min\left(1, \max\left(0, \frac{|S_{\omega,\tau}|}{|X_{\omega,\tau}|} \cos\left(\theta^{(S)}_{\omega,\tau} - \theta^{(X)}_{\omega,\tau}\right)\right)\right), \tag{4}$$

where $\theta^{(S)}_{\omega,\tau}$ and $\theta^{(X)}_{\omega,\tau}$ are the phase spectra of $S_{\omega,\tau}$ and $X_{\omega,\tau}$, respectively. Since the PSA $G^{\mathrm{PSA}}_{\omega,\tau}$ is a T-F mask that minimizes the squared error between $S_{\omega,\tau}$ and $\hat{S}_{\omega,\tau}$ on the complex plane, we use this as a T-F masking scheme.

B. Maximum-likelihood-based DNN training for T-F mask estimation

In many conventional studies of DNN-based source enhancement, DNNs were used as a mapping function to estimate T-F masks. In this section, we explain DNN training based on ML estimation, on which the proposed method is based.
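To make the two mask definitions in (3) and (4) concrete, the following minimal Python sketch evaluates them for a single T-F bin. The function names `irm` and `psa` and the numerical values are illustrative assumptions rather than part of the original method description, and the sketch assumes a nonzero observation bin.

```python
import cmath
import math

def irm(s, n):
    """Ideal ratio mask (3) for one T-F bin (s: target, n: noise)."""
    return abs(s) / (abs(s) + abs(n))

def psa(s, x):
    """Phase-sensitive mask (4), truncated to [0, 1]. Assumes x != 0."""
    raw = abs(s) / abs(x) * math.cos(cmath.phase(s) - cmath.phase(x))
    return min(1.0, max(0.0, raw))

def squared_error(s, x, g):
    """Squared error on the complex plane between s and the masked output g*x."""
    return abs(s - g * x) ** 2

# Hypothetical single-bin example: the noise is 90 degrees out of phase.
s = 1.0 + 0.0j
n = 0.0 + 0.5j
x = s + n                      # observation model (1)

g_irm = irm(s, n)
g_psa = psa(s, x)
err_irm = squared_error(s, x, g_irm)
err_psa = squared_error(s, x, g_psa)
```

In this example the PSA mask gives a smaller squared error on the complex plane than the IRM, which is the property that motivates using the PSA as the masking scheme.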
Since the ML-based approach explicitly models the PDF of the target source, it becomes possible to simulate output signals by generating random numbers from the PDF.

In ML-based training, the DNNs are constructed to estimate the parameters of the conditional PDF of the target source given the observation, $p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta)$. Here, $\Theta$ denotes the DNN parameters. An example for a fully connected DNN is described later (after (16)). The target and observation source are assumed to be vectorized for all frequency bins as

$$\mathbf{S}_\tau := (S_{1,\tau}, ..., S_{\Omega,\tau})^\top, \tag{5}$$

$$\mathbf{X}_\tau := (X_{1,\tau}, ..., X_{\Omega,\tau})^\top, \tag{6}$$

where $\top$ is transposition. Then $\Theta$ is trained to maximize the expectation of the log-likelihood as

$$\Theta \leftarrow \arg\max_{\Theta} \mathcal{J}^{\mathrm{ML}}(\Theta), \tag{7}$$

where the objective function $\mathcal{J}^{\mathrm{ML}}(\Theta)$ is defined by

$$\mathcal{J}^{\mathrm{ML}}(\Theta) = \mathbb{E}_{\mathbf{S},\mathbf{X}}\left[\ln p(\mathbf{S} | \mathbf{X}, \Theta)\right], \tag{8}$$

and $\mathbb{E}_x[\cdot]$ denotes the expectation operator for $x$. However, since (8) is difficult to calculate analytically, the expectation calculation is replaced with the average over the training dataset as

$$\mathcal{J}^{\mathrm{ML}}(\Theta) \approx \frac{1}{T} \sum_{\tau=1}^{T} \ln p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta). \tag{9}$$

The back-propagation algorithm [36] is used in training $\Theta$ to maximize (9). When $p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta)$ is composed of differentiable functions with respect to $\Theta$, the gradient is calculated as

$$\nabla_\Theta \mathcal{J}^{\mathrm{ML}}(\Theta) \approx \frac{1}{T} \sum_{\tau=1}^{T} \nabla_\Theta \ln p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta), \tag{10}$$

where $\nabla_x$ is a partial differential operator with respect to $x$.

Fig. 2. ML-based DNN architecture used in T-F mask estimation.

To calculate (10), $p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta)$ is modeled by assuming that the estimation error of $S_{\omega,\tau}$ is independent for all frequency bins and follows the zero-mean complex Gaussian distribution with the variance $\sigma^2_{\omega,\tau}$. The assumption is based on state-of-the-art methods, which train DNNs to minimize the MSE between $S_{\omega,\tau}$ and $\hat{G}_{\omega,\tau} X_{\omega,\tau}$ on the complex plane [19], [20].
The minimum-MSE (MMSE) on the complex plane is equivalent to assuming that the errors are independent for all frequency bins and follow the zero-mean complex Gaussian distribution with variance 1. Our assumption relaxes the assumption of the conventional methods; the variances of each frequency bin vary according to the error values to maximize the likelihood. Thus, since $\hat{S}_{\omega,\tau}$ is given by $\hat{G}_{\omega,\tau} X_{\omega,\tau}$, $p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta)$ is modeled by the following complex Gaussian distribution:

$$p(\mathbf{S}_\tau | \mathbf{X}_\tau, \Theta) = \prod_{\omega=1}^{\Omega} \frac{1}{2\pi\sigma^2_{\omega,\tau}} \exp\left\{ -\frac{\left| S_{\omega,\tau} - \hat{G}_{\omega,\tau} X_{\omega,\tau} \right|^2}{2\sigma^2_{\omega,\tau}} \right\}. \tag{11}$$

In this model, the MSE between $S_{\omega,\tau}$ and $\hat{S}_{\omega,\tau}$ on the complex plane can be regarded as being extended to the likelihood of $S_{\omega,\tau}$ defined on the complex Gaussian distribution, the mean and variance parameters of which are $\hat{S}_{\omega,\tau}$ and $\sigma^2_{\omega,\tau}$, respectively. Equation (11) includes unknown parameters: the T-F mask $\hat{G}_{\omega,\tau}$ and error variance $\sigma^2_{\omega,\tau}$. Thus, we construct DNNs to estimate $\hat{G}_{\omega,\tau}$ and $\sigma^2_{\omega,\tau}$ from $\mathbf{X}_\tau$, as shown in Fig. 2. The vectorized T-F masks and error variances for all frequency bins are defined as

$$\mathbf{G}(\mathbf{x}_\tau) := \left(\hat{G}_{1,\tau}, ..., \hat{G}_{\Omega,\tau}\right)^\top, \tag{12}$$

$$\boldsymbol{\sigma}(\mathbf{x}_\tau) := \left(\sigma^2_{1,\tau}, ..., \sigma^2_{\Omega,\tau}\right)^\top. \tag{13}$$

Here $\mathbf{x}_\tau$ is the input vector of the DNNs, which is prepared by concatenating several frames of observations to account for the previous and future $Q$ frames as $\mathbf{x}_\tau = (\mathbf{X}_{\tau-Q}, ..., \mathbf{X}_\tau, ..., \mathbf{X}_{\tau+Q})^\top$, and $\mathbf{G}(\mathbf{x}_\tau)$ and $\boldsymbol{\sigma}(\mathbf{x}_\tau)$ are estimated by

$$\mathbf{G}(\mathbf{x}_\tau) \leftarrow \phi_g\left\{ \mathbf{W}^{(\mu)} \mathbf{z}^{(L-1)}_\tau + \mathbf{b}^{(\mu)} \right\}, \tag{14}$$

$$\boldsymbol{\sigma}(\mathbf{x}_\tau) \leftarrow \phi_\sigma\left\{ \mathbf{W}^{(\sigma)} \mathbf{z}^{(L-1)}_\tau + \mathbf{b}^{(\sigma)} \right\} + C_\sigma, \tag{15}$$

$$\mathbf{z}^{(l)}_\tau = \phi_h\left\{ \mathbf{W}^{(l)} \mathbf{z}^{(l-1)}_\tau + \mathbf{b}^{(l)} \right\}, \tag{16}$$

where $C_\sigma$ is a small positive constant that prevents the variance from becoming very small. Here, $l$, $L$, $\mathbf{W}^{(l)}$, and $\mathbf{b}^{(l)}$ are the layer index, number of layers, weight matrix, and bias vector, respectively. $\mathbf{W}^{(\mu)}$, $\mathbf{W}^{(\sigma)}$ are the weight matrices and $\mathbf{b}^{(\mu)}$, $\mathbf{b}^{(\sigma)}$ are the bias vectors to estimate the T-F mask and variance, respectively.
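As a small numerical illustration of the likelihood model in (11), the sketch below evaluates the log-likelihood of one frame for given masks and variances. The function name `log_likelihood` and the example values are hypothetical; the masks and variances would come from the DNN outputs in (14) and (15), which are simply passed in as lists here.

```python
import math

def log_likelihood(s, x, g, var):
    """ln p(S_tau | X_tau, Theta) under (11), summed over frequency bins.

    s, x : lists of complex target / observation bins
    g    : list of estimated T-F masks (real, in [0, 1])
    var  : list of estimated error variances (positive)
    """
    total = 0.0
    for s_w, x_w, g_w, v_w in zip(s, x, g, var):
        err2 = abs(s_w - g_w * x_w) ** 2
        total += -math.log(2.0 * math.pi * v_w) - err2 / (2.0 * v_w)
    return total

# Hypothetical one-bin frame; with a fixed variance, the mask with the
# smaller squared error also has the larger log-likelihood.
s = [1.0 + 0.0j]
x = [1.0 + 0.5j]
ll_good = log_likelihood(s, x, [0.8], [1.0])   # squared error 0.2
ll_poor = log_likelihood(s, x, [0.5], [1.0])   # squared error 0.3125
```

With the variance held constant, maximizing this log-likelihood ranks masks in the same order as minimizing the squared error, which matches the relation to MMSE training described above.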
The DNN parameters are composed of $\Theta = \{\mathbf{W}^{(\mu)}, \mathbf{b}^{(\mu)}, \mathbf{W}^{(\sigma)}, \mathbf{b}^{(\sigma)}, \mathbf{W}^{(l)}, \mathbf{b}^{(l)} \mid l \in (2, ..., L-1)\}$. The functions $\phi_g$, $\phi_\sigma$, and $\phi_h$ are nonlinear activation functions, and in conventional studies, sigmoid and exponential functions were used as implementations of $\phi_g$ [19], [20] and $\phi_\sigma$ [32], respectively. The input vector $\mathbf{x}_\tau$ is passed to the first layer of the network as $\mathbf{z}^{(1)}_\tau = \mathbf{x}_\tau$.

III. PROPOSED METHOD

Our proposed DNN-training method increases OSQA scores. With the proposed method, the policy gradient method [42] is used to statistically calculate the gradient with respect to $\Theta$ by using a sampling algorithm, even though the objective function is not differentiable. However, sampling-based gradient estimation frequently makes the DNN training behavior unstable. To avoid this problem, we introduce two tricks: i) score normalization that reduces the variance in the estimated gradient (Sec. III-B), and ii) a sampling algorithm that simulates output signals satisfying the constraint of T-F mask processing (Sec. III-C). Finally, the overall training procedure of the proposed method is summarized in Sec. III-D.

A. Policy gradient-based DNN training for T-F mask estimation

Let $B(\hat{\mathbf{S}}, \mathbf{X})$ be a scoring function that quantifies the sound quality of the estimated sound signal $\hat{\mathbf{S}} := (\hat{S}_1, ..., \hat{S}_\Omega)^\top$ defined by (2). The most straightforward way to implement $B(\hat{\mathbf{S}}, \mathbf{X})$ would be subjective evaluation. However, this would be difficult to use in practice because DNN training would require a massive amount of listening-test results. Thus, $B(\hat{\mathbf{S}}, \mathbf{X})$ quantifies the sound quality based on OSQA scores, as shown in Fig. 1, and the details of its implementation are discussed in Sec. III-B. We assume $B(\hat{\mathbf{S}}, \mathbf{X})$ is non-differentiable with respect to $\Theta$, because most OSQA scores are black-box functions.
Let us consider maximizing the expectation of $B(\hat{\mathbf{S}}, \mathbf{X})$ as a performance metric of sound-source enhancement that increases OSQA scores:

$$\mathbb{E}_{\hat{\mathbf{S}},\mathbf{X}}\left[B(\hat{\mathbf{S}}, \mathbf{X})\right] = \iint B(\hat{\mathbf{S}}, \mathbf{X})\, p(\hat{\mathbf{S}}, \mathbf{X})\, d\hat{\mathbf{S}}\, d\mathbf{X}. \tag{17}$$

Since the output signal $\hat{\mathbf{S}}$ is calculated from the observation $\mathbf{X}$, we decompose the joint PDF $p(\hat{\mathbf{S}}, \mathbf{X})$ into the conditional PDF of the output signal given the observation $p(\hat{\mathbf{S}} | \mathbf{X})$ and the marginal PDF of the observation $p(\mathbf{X})$ as $p(\hat{\mathbf{S}}, \mathbf{X}) = p(\hat{\mathbf{S}} | \mathbf{X})\, p(\mathbf{X})$. Then, (17) can be rewritten as

$$\mathbb{E}_{\hat{\mathbf{S}},\mathbf{X}}\left[B(\hat{\mathbf{S}}, \mathbf{X})\right] = \int p(\mathbf{X}) \int B(\hat{\mathbf{S}}, \mathbf{X})\, p(\hat{\mathbf{S}} | \mathbf{X})\, d\hat{\mathbf{S}}\, d\mathbf{X}. \tag{18}$$

We use DNNs to estimate the parameters of the conditional PDF of the output signal $p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)$, as in the case of ML-based training. For example, the complex Gaussian distribution in (11) can be used as $p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)$. To train $\Theta$, $\mathbb{E}_{\hat{\mathbf{S}},\mathbf{X}}[B(\hat{\mathbf{S}}, \mathbf{X})]$ is used as an objective function by replacing the conditional PDF $p(\hat{\mathbf{S}} | \mathbf{X})$ with $p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)$ as

$$\mathcal{J}(\Theta) = \mathbb{E}_{\hat{\mathbf{S}},\mathbf{X}}\left[B(\hat{\mathbf{S}}, \mathbf{X})\right] \tag{19}$$

$$= \int p(\mathbf{X}) \int B(\hat{\mathbf{S}}, \mathbf{X})\, p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)\, d\hat{\mathbf{S}}\, d\mathbf{X}. \tag{20}$$

Since $B(\hat{\mathbf{S}}, \mathbf{X})$ is non-differentiable with respect to $\Theta$, the gradient of (20) cannot be analytically obtained by simply applying back-propagation. Hence, we apply the policy-gradient method [42], which can statistically calculate the gradient of a black-box objective function. By assuming that the function form of $B(\hat{\mathbf{S}}, \mathbf{X})$ is smooth, $B(\hat{\mathbf{S}}, \mathbf{X})$ is a continuous function and its derivative exists. In addition, we assume $p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)$ is composed of differentiable functions with respect to $\Theta$. Then, the gradient of (20) can be calculated using the log-derivative trick [42] $\nabla_x p(x) = p(x) \nabla_x \ln p(x)$ as

$$\nabla_\Theta \mathcal{J}(\Theta) = \int p(\mathbf{X}) \int B(\hat{\mathbf{S}}, \mathbf{X})\, \nabla_\Theta p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)\, d\hat{\mathbf{S}}\, d\mathbf{X} \tag{21}$$

$$= \mathbb{E}_{\mathbf{X}}\left[ \mathbb{E}_{\hat{\mathbf{S}}|\mathbf{X}}\left[ B(\hat{\mathbf{S}}, \mathbf{X})\, \nabla_\Theta \ln p(\hat{\mathbf{S}} | \mathbf{X}, \Theta) \right] \right]. \tag{22}$$

Since the expectation in (22) cannot be analytically calculated, the expectation with respect to $\mathbf{X}$ is approximated by averaging over the training data, and the average over $\hat{\mathbf{S}}$ is calculated using the sampling algorithm as

$$\nabla_\Theta \mathcal{J}(\Theta) \approx \frac{1}{T} \sum_{\tau=1}^{T} \frac{1}{K} \sum_{k=1}^{K} B(\hat{\mathbf{S}}^{(k)}_\tau, \mathbf{X}_\tau)\, \nabla_\Theta \ln p(\hat{\mathbf{S}}^{(k)}_\tau | \mathbf{X}_\tau, \Theta), \tag{23}$$

$$\hat{\mathbf{S}}^{(k)}_\tau \sim p(\hat{\mathbf{S}} | \mathbf{X}_\tau, \Theta), \tag{24}$$

where $\hat{\mathbf{S}}^{(k)}_\tau$ is the $k$-th simulated output signal and $K$ is the number of samplings, which is assumed to be sufficiently large. The superscript $(k)$ represents the variable of the $k$-th sampling, and $\sim$ is a sampling operator from the right-side distribution. The details of the sampling process for (24) are described in Sec. III-C.

Most OSQA scores, such as PESQ, are designed to be calculated using several time frames, such as one utterance of a speech sentence. Since $B(\hat{\mathbf{S}}^{(k)}_\tau, \mathbf{X}_\tau)$ cannot be obtained for every time frame $\tau$, the gradient cannot be calculated by (23). Thus, instead of using the average over $\tau$, we use the average over $I$ utterances. We define the observation of the $i$-th utterance as $\mathbf{X}^{(i)} := (\mathbf{X}^{(i)}_1, ..., \mathbf{X}^{(i)}_{T^{(i)}})$, and the $k$-th output signal of the $i$-th utterance as $\hat{\mathbf{S}}^{(i,k)} := (\hat{\mathbf{S}}^{(i,k)}_1, ..., \hat{\mathbf{S}}^{(i,k)}_{T^{(i)}})$. Then the gradient can be calculated as

$$\nabla_\Theta \mathcal{J}(\Theta) \approx \frac{1}{I} \sum_{i=1}^{I} \nabla_\Theta \mathcal{J}^{(i)}(\Theta), \tag{25}$$

$$\nabla_\Theta \mathcal{J}^{(i)}(\Theta) \approx \sum_{k=1}^{K} \frac{B(\hat{\mathbf{S}}^{(i,k)}, \mathbf{X}^{(i)})}{K\, T^{(i)}} \sum_{\tau=1}^{T^{(i)}} \nabla_\Theta \ln p(\hat{\mathbf{S}}^{(i,k)}_\tau | \mathbf{X}^{(i)}_\tau, \Theta), \tag{26}$$

where $T^{(i)}$ is the number of frames of the $i$-th utterance, and we assume that the output signal of each time frame is calculated independently. The details of the derivation of (25) are described in Appendix A.

B. Scoring-function design for stable training

We now introduce a design of a scoring function $B(\hat{\mathbf{S}}, \mathbf{X})$ to stabilize the training process.
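The sampling-based estimate in (23), and the fluctuation that motivates this subsection, can be illustrated with a toy one-dimensional problem. The sketch below is not the paper's DNN setup: it assumes a scalar Gaussian policy whose mean `mu` plays the role of $\Theta$, and a hypothetical stand-in `score` for the black-box OSQA score.

```python
import random

def score(y):
    """Hypothetical black-box score; only its values are used, never its gradient."""
    return -(y - 0.7) ** 2

def policy_gradient(mu, sigma, num_samples, rng):
    """Monte Carlo estimate of d/dmu E[score(y)] for y ~ N(mu, sigma^2).

    Uses the log-derivative trick: the gradient is the average of
    score(y) * d/dmu ln N(y; mu, sigma^2) over sampled y.
    """
    total = 0.0
    for _ in range(num_samples):
        y = rng.gauss(mu, sigma)
        grad_log_p = (y - mu) / sigma ** 2
        total += score(y) * grad_log_p
    return total / num_samples

rng = random.Random(0)
grad_large_k = policy_gradient(0.2, 0.1, 100000, rng)
# Five independent estimates with only K = 20 samples each.
grad_small_k = [policy_gradient(0.2, 0.1, 20, rng) for _ in range(5)]
```

For this toy score the exact gradient is $-2(\mu - 0.7) = 1.0$ at $\mu = 0.2$. The large-sample estimate should be close to that value, whereas the estimates with $K = 20$ scatter noticeably around it, which is the kind of instability that the score normalization below is meant to reduce.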
Because the expectation for the gradient calculation in (22) is approximated using the sampling algorithm, the training may become unstable. One reason for unstable training behavior is that the variance in the estimated gradient becomes large in accordance with the large variance in the scoring-function output [42]. To stabilize the training, instead of directly using a raw OSQA score as $B(\hat{\mathbf{S}}, \mathbf{X})$, a normalized OSQA score is used to reduce its variance. Hereafter, a raw OSQA score calculated from $\mathbf{S}$, $\mathbf{X}$, and $\hat{\mathbf{S}}$ is written as $Z(\hat{\mathbf{S}}, \mathbf{X})$ to distinguish between a raw OSQA score $Z(\hat{\mathbf{S}}, \mathbf{X})$ and a normalized OSQA score $B(\hat{\mathbf{S}}, \mathbf{X})$.

Fig. 3. T-F mask sampling procedure of proposed method on complex plane. The black, red, blue, and green points represent $X^{(i)}_{\omega,\tau}$, $\hat{G}^{(i)}_{\omega,\tau} X^{(i)}_{\omega,\tau}$, $\tilde{S}^{(i,k)}_{\omega,\tau}$, and $\hat{G}^{(i,k)}_{\omega,\tau} X^{(i)}_{\omega,\tau}$, respectively. First, the parameters of $p(\hat{S}_{\omega,\tau} | X^{(i)}_{\omega,\tau}, \Theta)$, i.e., the T-F mask $\hat{G}^{(i)}_{\omega,\tau}$ and the variance, are estimated using a DNN. Then, $\tilde{S}^{(i,k)}_{\omega,\tau}$ is sampled from $p(\hat{S}_{\omega,\tau} | X^{(i)}_{\omega,\tau}, \Theta)$ by using a typical sampling algorithm, which is shown as arrow-(i). Finally, the simulated T-F mask $\hat{G}^{(i,k)}_{\omega,\tau}$ is calculated by (29) to minimize the MSE between $\tilde{S}^{(i,k)}_{\omega,\tau}$ and the simulated output signal $\hat{G}^{(i,k)}_{\omega,\tau} X^{(i)}_{\omega,\tau}$, which is shown as arrow-(ii).

From (25) and (26), the total gradient $\nabla_\Theta \mathcal{J}(\Theta)$ is a weighted sum of the $i$-th gradient of the log-likelihood function, and $B(\hat{\mathbf{S}}, \mathbf{X})$ is used as its weight. Since typical OSQA scores vary not only with the performance of source enhancement but also with the SNRs of each input signal $\mathbf{X}^{(1,...,I)}$, $\nabla_\Theta \mathcal{J}(\Theta)$ also varies with the OSQA scores and SNRs of $\mathbf{X}^{(1,...,I)}$. To reduce the variance in the estimate of the gradient, it would be better to remove such external factors according to the input conditions of each input signal, e.g., input SNRs.
As a possible solution, the external factors involved in the OSQA score could be estimated by calculating the expectation of the OSQA score of the input signal. Thus, subtracting the conditional expectation of $Z(\hat{\mathbf{S}}, \mathbf{X})$ given each input signal, $\mathbb{E}_{\hat{\mathbf{S}}|\mathbf{X}}[Z(\hat{\mathbf{S}}, \mathbf{X})]$, from $Z(\hat{\mathbf{S}}, \mathbf{X})$ might be effective in reducing the variance:

$$B(\hat{\mathbf{S}}, \mathbf{X}) = Z(\hat{\mathbf{S}}, \mathbf{X}) - \mathbb{E}_{\hat{\mathbf{S}}|\mathbf{X}}\left[ Z(\hat{\mathbf{S}}, \mathbf{X}) \right]. \tag{27}$$

This implementation is known as "baseline-subtraction" [42], [43]. Here, $\mathbb{E}_{\hat{\mathbf{S}}|\mathbf{X}}[Z(\hat{\mathbf{S}}, \mathbf{X})]$ cannot be analytically calculated, so we replace the expectation with the average of OSQA scores. Then the scoring function is designed as

$$B(\hat{\mathbf{S}}^{(i,k)}, \mathbf{X}^{(i)}) = Z(\hat{\mathbf{S}}^{(i,k)}, \mathbf{X}^{(i)}) - \frac{1}{K} \sum_{j=1}^{K} Z(\hat{\mathbf{S}}^{(i,j)}, \mathbf{X}^{(i)}). \tag{28}$$

C. Sampling algorithm to simulate T-F-mask-processed output signal

The sampling operator used in (24) is an intuitive method that uses a typical pseudo random number generator such as the Mersenne-Twister [44]. However, this sampling operator would in fact be difficult to use because typical sampling algorithms simulate output signals that do not satisfy the constraint of real-valued T-F-mask processing defined by (2). To avoid this problem, we calculate the T-F mask $\hat{G}^{(i,k)}_{\omega,\tau}$ and output signal $\hat{S}^{(i,k)}_{\omega,\tau}$ from the temporary output signal $\tilde{S}^{(i,k)}_{\omega,\tau}$ simulated by a typical sampling algorithm, so that $\hat{G}^{(i,k)}_{\omega,\tau}$ and $\hat{S}^{(i,k)}_{\omega,\tau}$ satisfy the constraint of T-F-mask processing and minimize the squared error between $\hat{S}^{(i,k)}_{\omega,\tau}$ and $\tilde{S}^{(i,k)}_{\omega,\tau}$.

Figure 3 illustrates the overview of the problem and the proposed solution on the complex plane. In this study, we use the real-valued T-F mask within the range of $0 \le G_{\omega,\tau} \le 1$. Thus, the output signal is constrained to exist on the dotted line in Fig. 3, i.e., T-F mask processing affects only the norm of $\hat{S}^{(i,k)}_{\omega,\tau}$.
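The baseline subtraction in (28) amounts to centering the $K$ raw scores of each utterance. The following minimal sketch illustrates this; the function name `normalized_scores` and the score values are hypothetical, chosen only to show that an input-dependent offset (such as the effect of the input SNR) cancels out.

```python
def normalized_scores(raw_scores):
    """Scoring function (28): subtract the per-utterance mean of the K raw scores."""
    baseline = sum(raw_scores) / len(raw_scores)
    return [z - baseline for z in raw_scores]

# Hypothetical raw scores Z for K = 4 simulated outputs of two utterances.
# The second utterance has the same relative differences but a higher
# overall level, e.g. because its input SNR is higher.
low_snr_scores = [40.0, 42.0, 38.0, 44.0]
high_snr_scores = [z + 30.0 for z in low_snr_scores]

b_low = normalized_scores(low_snr_scores)
b_high = normalized_scores(high_snr_scores)
```

Both utterances yield the same normalized scores, so under this assumption the gradient weights in (26) depend only on how each simulated output compares with the other outputs for the same input.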
However, since $p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)$ is modeled by a continuous PDF such as the complex Gaussian distribution in (11), a typical sampling algorithm possibly generates output signals that do not satisfy the T-F-mask constraint, i.e., the phase spectrum of $\tilde{S}^{(i,k)}_{\omega,\tau}$ does not coincide with that of $X^{(i)}_{\omega,\tau}$. To solve this problem, we formulate the PSA-based T-F-mask re-calculation. First, a temporary output signal $\tilde{S}^{(i,k)}_{\omega,\tau}$ is sampled using a sampling algorithm (Fig. 3, arrow-(i)). Then, the T-F mask $\hat{G}^{(i,k)}_{\omega,\tau}$ that minimizes the squared error between $\tilde{S}^{(i,k)}_{\omega,\tau}$ and $\hat{G}^{(i,k)}_{\omega,\tau} X^{(i)}_{\omega,\tau}$ is calculated using the PSA equation as

$$\hat{G}^{(i,k)}_{\omega,\tau} = \min\left(1, \max\left(0, \frac{|\tilde{S}^{(i,k)}_{\omega,\tau}|}{|X^{(i)}_{\omega,\tau}|} \cos\left(\theta^{(\tilde{S}^{(i,k)})}_{\omega,\tau} - \theta^{(X^{(i)})}_{\omega,\tau}\right)\right)\right), \tag{29}$$

where $\theta^{(\tilde{S}^{(i,k)})}_{\omega,\tau}$ and $\theta^{(X^{(i)})}_{\omega,\tau}$ are the phase spectra of $\tilde{S}^{(i,k)}_{\omega,\tau}$ and $X^{(i)}_{\omega,\tau}$, respectively. Then, the output signal is calculated by

$$\hat{S}^{(i,k)}_{\omega,\tau} = \hat{G}^{(i,k)}_{\omega,\tau} X^{(i)}_{\omega,\tau}, \tag{30}$$

as shown with arrow-(ii) in Fig. 3.

D. Training procedure

We describe the overall training procedure of the proposed method, as shown in Fig. 4. Hereafter, to simplify the sampling algorithm, we use the complex Gaussian distribution as $p(\hat{\mathbf{S}} | \mathbf{X}, \Theta)$ described in (11)–(16). First, the $i$-th observation utterance $\mathbf{X}^{(i)}$ is simulated by (1) using a randomly selected target-source file and a noise source with equal frame size from the training dataset. Next, the T-F mask $\mathbf{G}(\mathbf{x}^{(i)}_\tau)$ and variance $\boldsymbol{\sigma}(\mathbf{x}^{(i)}_\tau)$ are estimated by (11)–(16).
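The PSA-based re-calculation in (29) and (30) can be sketched for a single T-F bin as follows. The sketch assumes the Gaussian model of (11), with independent real and imaginary parts of variance `var`; the function name `resample_mask`, the seed, and the numerical values are illustrative assumptions, and the observation bin is assumed to be nonzero.

```python
import cmath
import math
import random

def resample_mask(x, g_mean, var, rng):
    """Draw one simulated mask and output for a single T-F bin.

    x      : complex observation bin (assumed nonzero)
    g_mean : mask estimated by the DNN (mean of the policy)
    var    : estimated error variance
    """
    mean = g_mean * x
    std = math.sqrt(var)
    # Arrow-(i): temporary output sampled around g_mean * x on the complex plane.
    s_tmp = complex(rng.gauss(mean.real, std), rng.gauss(mean.imag, std))
    # Arrow-(ii): PSA re-calculation (29), truncated to [0, 1].
    raw = abs(s_tmp) / abs(x) * math.cos(cmath.phase(s_tmp) - cmath.phase(x))
    g_k = min(1.0, max(0.0, raw))
    # Output signal (30): shares the phase of x by construction.
    return g_k, g_k * x

rng = random.Random(0)
x = 1.0 + 1.0j
draws = [resample_mask(x, 0.6, 0.05, rng) for _ in range(200)]
```

Every simulated output is a real multiple of the observation with a gain in $[0, 1]$, so it stays on the line through the origin and $X$, which is the constraint of T-F-mask processing discussed above.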
Then, to simulate the $k$-th output signal $\hat{\mathbf{S}}^{(i,k)}$, the temporary output signal $\tilde{S}^{(i,k)}_{\omega,\tau}$ is sampled from the complex Gaussian distribution using a pseudo random number generator, such as the Mersenne-Twister [44], as

$$\begin{pmatrix} \Re(\tilde{S}^{(i,k)}_{\omega,\tau}) \\ \Im(\tilde{S}^{(i,k)}_{\omega,\tau}) \end{pmatrix} \sim \mathcal{N}\left( \hat{G}^{(i)}_{\omega,\tau} \begin{pmatrix} \Re(X^{(i)}_{\omega,\tau}) \\ \Im(X^{(i)}_{\omega,\tau}) \end{pmatrix},\ \sigma^2_{\omega,\tau} \mathbf{I} \right), \tag{31}$$

where $\mathbf{I}$ is the $2 \times 2$ identity matrix, and $\Re$ and $\Im$ denote the real and imaginary parts of the complex number, respectively. After that, the T-F mask $\hat{G}^{(i,k)}_{\omega,\tau}$ is calculated using (29). To accelerate the algorithm convergence, we additionally use the $\epsilon$-greedy algorithm to calculate $\hat{G}^{(i,k)}_{\omega,\tau}$. With probability $1 - \epsilon$ applied to each time-frequency bin, the maximum a posteriori (MAP) T-F mask $\hat{G}^{(i)}_{\omega,\tau}$ estimated using DNNs is used instead of $\hat{G}^{(i,k)}_{\omega,\tau}$ as

$$\hat{G}^{(i,k)}_{\omega,\tau} \leftarrow \begin{cases} \hat{G}^{(i,k)}_{\omega,\tau} & (\text{with prob. } \epsilon) \\ \hat{G}^{(i)}_{\omega,\tau} & (\text{otherwise}) \end{cases}. \tag{32}$$

In addition, a large gradient value $\nabla_\Theta \mathcal{J}(\Theta)$ leads to unstable training. One reason for the large gradient is that the gradient of the log-likelihood $\nabla_\Theta \ln p(\hat{\mathbf{S}}^{(i,k)}_\tau | \mathbf{X}^{(i)}_\tau, \Theta)$ in (26) becomes large. To reduce the gradient of the log-likelihood, the difference between the mean T-F mask $\hat{G}^{(i)}_{\omega,\tau}$ and simulated T-F mask $\hat{G}^{(i,k)}_{\omega,\tau}$ is truncated to confine it within the range of $[-\lambda, \lambda]$ as

$$\Delta\hat{G}^{(i,k)}_{\omega,\tau} \leftarrow \hat{G}^{(i,k)}_{\omega,\tau} - \hat{G}^{(i)}_{\omega,\tau}, \tag{33}$$

$$\Delta\hat{G}^{(i,k)}_{\omega,\tau} \leftarrow \begin{cases} \lambda & (\Delta\hat{G}^{(i,k)}_{\omega,\tau} > \lambda) \\ \Delta\hat{G}^{(i,k)}_{\omega,\tau} & (-\lambda \le \Delta\hat{G}^{(i,k)}_{\omega,\tau} \le \lambda) \\ -\lambda & (\Delta\hat{G}^{(i,k)}_{\omega,\tau} < -\lambda) \end{cases}, \tag{34}$$

$$\hat{G}^{(i,k)}_{\omega,\tau} \leftarrow \hat{G}^{(i)}_{\omega,\tau} + \Delta\hat{G}^{(i,k)}_{\omega,\tau}. \tag{35}$$

Then, the output signal $\hat{\mathbf{S}}^{(i,k)}$ is calculated by T-F-mask processing (30), and the OSQA scores $Z(\hat{\mathbf{S}}^{(i,k)}, \mathbf{X}^{(i)})$ and $B(\hat{\mathbf{S}}^{(i,k)}, \mathbf{X}^{(i)})$ are calculated by (28). After applying these procedures for $I$ utterances, $\Theta$ is updated using the back-propagation algorithm with the gradient calculated by (25).

IV. EXPERIMENTS

We conducted objective experiments to evaluate the performance of the proposed method. The experimental conditions are described in Sec. IV-A. To investigate whether a DNN source-enhancement function can be trained to increase OSQA scores, we first investigated the relationship between the number of updates and OSQA scores (Sec. IV-B). Second, the source enhancement performance of the proposed method was compared with those of conventional methods by using several objective measurements (Sec. IV-C). Finally, subjective evaluations for sound quality and intelligibility were conducted (Sec. IV-D). For comparison, we used four DNN source-enhancement methods: two T-F-mask mapping functions trained using an MMSE-based objective function [19] and the ML-based objective function described in Sec. II-B, and two T-F-mask selection functions trained for increasing the PESQ and STOI [39].

A. Experimental conditions

1) Dataset: The ATR Japanese speech database [45] was used as the training dataset of the target source. The dataset consists of 6640 utterances spoken by 11 males and 11 females. The utterances were randomly separated into 5976 for the development set and 664 for the validation set. As the training dataset of noise, a noise dataset of CHiME-3 was used that consisted of four types of background noise files including noise in cafes, street junctions, public transport, and pedestrian areas [46]. The noisy-mixture dataset was generated by mixing clean speech utterances with various noise and SNR conditions using the following procedure: i) the noise is randomly selected from the noise dataset, ii) the amplitude of the noise is adjusted to give the desired SNR level, and iii) the speech and noise sources are added in the time domain.
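Steps ii) and iii) of the mixing procedure can be sketched as below. The paper does not state how the SNR is measured, so this sketch assumes the ratio of average powers over the whole utterance; the function name `mix_at_snr` and the sample values are hypothetical.

```python
import math

def mix_at_snr(speech, noise, snr_db):
    """Scale `noise` so that the speech-to-noise power ratio equals snr_db,
    then add it to `speech` sample by sample in the time domain.

    Assumes equal-length sequences with nonzero noise power.
    Returns the mixture and the scaled noise.
    """
    p_speech = sum(v * v for v in speech) / len(speech)
    p_noise = sum(v * v for v in noise) / len(noise)
    gain = math.sqrt(p_speech / (p_noise * 10.0 ** (snr_db / 10.0)))
    scaled_noise = [gain * v for v in noise]
    mixture = [s + n for s, n in zip(speech, scaled_noise)]
    return mixture, scaled_noise

# Hypothetical short signals standing in for a speech and a noise file.
speech = [0.5, -0.5, 0.25, -0.25]
noise = [1.0, -1.0, 1.0, -1.0]
mixture, scaled_noise = mix_at_snr(speech, noise, 6.0)

p_s = sum(v * v for v in speech) / len(speech)
p_n = sum(v * v for v in scaled_noise) / len(scaled_noise)
achieved_snr_db = 10.0 * math.log10(p_s / p_n)
```

Under this power-based definition, `achieved_snr_db` recovers the requested 6 dB; other SNR definitions (for example, computed on active speech segments only) would need a different gain.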
As the test dataset, a Japanese speech database consisting of 300 utterances spoken by 3 males and 3 females was used as the target-source dataset, and an ambient noise database recorded at airports (Airp.), amusement parks (Amuse.), offices (Office), and party rooms (Party) was used as the noise dataset. All samples were recorded at a sampling rate of 16 kHz. The SNR levels of the training/test datasets were -6, 0, 6, and 12 dB.

Fig. 4. Training procedure of proposed method.

TABLE I
Experimental conditions

| Parameter | Value |
| --- | --- |
| Parameters for signal processing | |
| Sampling rate | 16.0 kHz |
| FFT length | 512 pts |
| FFT shift length | 256 pts |
| # of mel-filterbanks | 64 |
| Smoothing parameter $\beta$ | 0.3 |
| Lower threshold $G_{\min}$ | 0.158 (= -16 dB) |
| Training SNR (dB) | -6, 0, 6, 12 |
| DNN architecture | |
| # of hidden layers for DNNs | 3 |
| # of hidden units for DNNs | 1024 |
| Activation function (T-F mask, $\phi_g$) | sigmoid |
| Activation function (variance, $\phi_\sigma$) | exponential |
| Activation function (hidden, $\phi_h$) | ReLU |
| Context window size $Q$ | 5 |
| Variance regularization parameter $C_\sigma$ | $10^{-4}$ |
| Parameters for MMSE and ML-based DNN training | |
| Initial step-size | $10^{-4}$ |
| Step-size threshold for early-stopping | $10^{-7}$ |
| Dropout probability (input layer) | 0.2 |
| Dropout probability (hidden layer) | 0.5 |
| $L_2$ normalization parameter | $10^{-4}$ |
| Parameters for T-F mask selection | |
| # of T-F mask templates | 128 |
| $\epsilon$-greedy parameter | 0.01 |
| Parameters for proposed DNN training | |
| Step-size | $10^{-6}$ |
| # of utterances $I$ | 10 |
| # of T-F mask samplings $K$ | 20 |
| Clipping parameter $\lambda$ | 0.05 |
| $\epsilon$-greedy parameter | 0.05 |

2) DNN architecture and setup: For the proposed and all conventional methods, a fully connected DNN was used that has 3 hidden layers and 1024 hidden units. All input vectors were mean-and-variance normalized using the training data statistics.
The activation functions for the T-F mask $\phi_g$, variance $\phi_\sigma$, and hidden units $\phi_h$ were the sigmoid function, exponential function, and rectified linear unit (ReLU), respectively. The context window size was $Q = 5$, and the variance regularization parameter in (15) was $C_\sigma = 10^{-4}$ ¹. The Adam method [47] was used as a gradient method. To avoid over-fitting, input vectors and DNN outputs, i.e., the T-F masks and error variances, were compressed using a $B = 64$ Mel-transformation matrix, and the estimated T-F masks and error variances were transformed into a linear frequency domain using the Mel-transform's pseudo-inverse [48].

A PSA objective function [19], [20] was used as the MMSE-based objective function. Since the PSA objective function does not use the variance parameter $\boldsymbol{\sigma}(\mathbf{x}_\tau)$, DNNs estimate only T-F masks $\mathbf{G}(\mathbf{x}_\tau)$. For the ML-based objective function, we used (9) with the complex Gaussian distribution described in Sec. II-B. To train both methods, the dropout algorithm was used, and the DNN parameters were initialized by layer-by-layer pre-training [49]. An early-stopping algorithm [17] was used for fine-tuning with the initial step-size $10^{-4}$ and the step-size threshold $10^{-7}$, and $L_2$ normalization with the parameter $10^{-4}$ was used as a regularization algorithm.

For the T-F-mask selection-based method [39], to improve the flexibility of T-F-mask selection, we used 128 T-F-mask templates. The DNN architecture, except for the output layer, is the same as that of the MMSE- and ML-based methods.

For the proposed method, DNN parameters were initialized by ML-based training, and their step-size was $10^{-6}$. To calculate $\nabla_\Theta \mathcal{J}(\Theta)$, the iteration parameters $I = 10$ and $K = 20$ were used. The $\epsilon$-greedy parameter $\epsilon$ was 0.05, and the clipping parameter $\lambda$ was determined as 0.05 according to preliminary informal experiments ². As the OSQA scores, we used the PESQ, which is a speech quality measure, and the STOI, which is a speech intelligibility measure.
To avoid adjusting the step-size of the gradient method for each OSQA, we normalized the OSQA scores to a common range. In this experiment, each OSQA score was normalized so that its maximum and minimum values were 100 and 0, respectively, as

$$Z_{\mathrm{PESQ}}(\hat{S}, X) = 20.0 \times \left( \mathrm{PESQ}(\hat{S}, X) + 0.5 \right),$$

$$Z_{\mathrm{STOI}}(\hat{S}, X) = 100.0 \times \mathrm{STOI}(\hat{S}, X).$$

¹ In preliminary experiments using candidate values $C_\sigma \in \{10^{-2}, 10^{-3}, 10^{-4}\}$, there were no distinct differences in training stability and results. Thus, to minimize the effect of regularization, we used the smallest of the candidate values.

² We tested some possible combinations of these parameters by grid search and found that the listed parameters achieved stable training and a realistic computational time (2 days using an Intel Xeon Processor E5-2630 v3 CPU and a Tesla M-40 GPU).

Fig. 5. OSQA score improvement depending on number of updates. X-axis shows number of updates, and y-axis shows average difference between OSQA score of proposed method and that of observed signal. Solid lines and gray areas are the average and standard error, respectively. (Panels: input SNR of -6, 0, 6, and 12 dB, and the average; rows: STOI improvement [%] and PESQ improvement.)
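The score normalization described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function names are hypothetical, and it assumes that raw PESQ lies in [-0.5, 4.5] and raw STOI in [0, 1], which is what makes the stated 0 to 100 range come out.

```python
def normalize_pesq(pesq: float) -> float:
    """Map a raw PESQ score (assumed range [-0.5, 4.5]) to [0, 100]."""
    return 20.0 * (pesq + 0.5)


def normalize_stoi(stoi: float) -> float:
    """Map a raw STOI score (assumed range [0, 1]) to [0, 100]."""
    return 100.0 * stoi


# Both normalized scores now share one scale, so a single step-size
# can be used regardless of which OSQA drives the training.
z_pesq = normalize_pesq(2.37)
z_stoi = normalize_stoi(0.834)
```

With both scores on the same scale, the reward passed to the gradient estimator has a comparable magnitude for either OSQA.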
Fig. 6. Mean squared error (MSE) depending on number of updates. OSQA scores used for training of proposed method were (a) PESQ and (b) STOI. X-axis shows number of updates, and y-axis shows MSE. Solid lines and gray areas are the average and standard error, respectively. (Panels: input SNR of -6, 0, 6, and 12 dB, and the average.)

The training algorithm was stopped after the whole parameter update process shown in Fig. 4 had been executed 10,000 times.

3) Other conditions: It is known that T-F-mask processing causes artificial distortion, so-called musical noise [50]. For all methods, to reduce musical noise, flooring [6], [51] and smoothing [52], [53] were applied to $\hat{G}_{\omega,\tau}$ before T-F-mask processing as

$$\hat{G}_{\omega,\tau} \leftarrow \max\left( G_{\min}, \hat{G}_{\omega,\tau} \right), \tag{36}$$

$$\hat{G}_{\omega,\tau} \leftarrow \beta \hat{G}_{\omega,\tau} + (1 - \beta) \hat{G}_{\omega,\tau-1}, \tag{37}$$

where we used the lower threshold of the T-F mask $G_{\min} = 0.158$ and the smoothing parameter $\beta = 0.3$. The frame size of the short-time Fourier transform (STFT) was 512, and the frame was shifted by 256 samples. All the above-mentioned conditions are summarized in Table I.

TABLE II
Correlation coefficients between MSE and OSQA score improvements

| OSQA | -6 dB | 0 dB | 6 dB | 12 dB | Average |
|---|---|---|---|---|---|
| PESQ | −0.120 | −0.081 | 0.020 | 0.089 | −0.020 |
| STOI | 0.756 | −0.672 | −0.951 | −0.980 | 0.482 |

B.
Investigation of relationship between number of updates and OSQA score

To investigate whether the DNN source-enhancement function can be trained to increase OSQA scores, we first investigated the relationship between the number of updates and the improvement of the OSQA scores. We define "OSQA score improvement" as the difference in the score value from the baseline OSQA score. For the baseline, we use the OSQA score obtained from the observed signal. Since the DNN parameters of the proposed method were initialized by ML-based training, each OSQA score was compared with the OSQA score that had zero updates. Thus, if the DNN parameters were successfully trained with the proposed method, the OSQA score improvement would increase in accordance with the number of updates.

TABLE III
Evaluation results on three objective measurements. Asterisks indicate scores significantly higher than those of MMSE and ML in paired one-sided t-test. Gray cells indicate the highest score in same noise and input SNR condition.

Input SNR: -6 dB

| Method | SDR [dB] Airp. | SDR Amuse. | SDR Office | SDR Party | SDR Ave. | PESQ Airp. | PESQ Amuse. | PESQ Office | PESQ Party | PESQ Ave. | STOI [%] Airp. | STOI Amuse. | STOI Office | STOI Party | STOI Ave. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| OBS | −4.28 | −6.98 | −5.64 | −1.50 | −4.6 | 1.24 | 1.38 | 1.33 | 1.14 | 1.27 | 72.1 | 76.7 | 73.8 | 69.1 | 72.9 |
| MMSE | 3.22 | 5.87 | 4.66 | 3.77 | 4.38 | 1.66 | 1.89 | 1.80 | 1.48 | 1.71 | 68.9 | 73.6 | 71.0 | 66.7 | 70.1 |
| ML | 3.31 | 6.12 | 4.87 | 3.63 | 4.48 | 1.68 | 1.95 | 1.80 | 1.54 | 1.74 | 69.2 | 74.3 | 72.0 | 64.9 | 70.1 |
| C-PESQ | −0.28 | 1.38 | −0.03 | 1.67 | 0.69 | 1.55 | 1.77 | 1.64 | 1.44 | 1.60 | ∗72.2 | ∗76.4 | ∗73.4 | ∗70.4 | ∗73.2 |
| C-STOI | 0.21 | 2.02 | 0.68 | 2.17 | 1.27 | 1.48 | 1.64 | 1.56 | 1.34 | 1.50 | ∗75.0 | ∗79.8 | ∗76.6 | ∗71.1 | ∗75.6 |
| P-PESQ | 3.13 | ∗6.34 | 4.72 | 3.50 | 4.42 | ∗1.78 | ∗2.07 | ∗1.91 | ∗1.57 | ∗1.83 | ∗71.0 | ∗76.0 | ∗72.4 | ∗67.9 | ∗71.8 |
| P-STOI | 2.18 | ∗6.60 | 3.90 | ∗4.15 | 4.21 | 1.63 | 1.93 | 1.73 | ∗1.59 | 1.72 | ∗74.9 | ∗80.1 | ∗76.6 | ∗71.3 | ∗75.7 |
| P-MIX | 2.93 | ∗6.20 | 4.39 | 3.49 | 4.25 | ∗1.77 | ∗2.08 | ∗1.89 | ∗1.59 | ∗1.83 | ∗72.1 | ∗77.4 | ∗73.8 | ∗68.2 | ∗72.9 |

Input SNR: 0 dB

| Method | SDR [dB] Airp. | SDR Amuse. | SDR Office | SDR Party | SDR Ave. | PESQ Airp. | PESQ Amuse. | PESQ Office | PESQ Party | PESQ Ave. | STOI [%] Airp. | STOI Amuse. | STOI Office | STOI Party | STOI Ave. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| OBS | 1.67 | −1.19 | 0.36 | 4.46 | 1.32 | 1.71 | 1.88 | 1.81 | 1.54 | 1.73 | 84.5 | 87.8 | 85.2 | 82.9 | 85.1 |
| MMSE | 8.03 | 10.0 | 9.55 | 8.44 | 9.00 | 2.17 | 2.36 | 2.27 | 2.09 | 2.22 | 80.7 | 84.7 | 83.1 | 80.1 | 82.1 |
| ML | 8.62 | 10.4 | 9.97 | 8.66 | 9.40 | 2.20 | 2.42 | 2.30 | 2.14 | 2.27 | 82.5 | 86.4 | 84.6 | 79.6 | 83.3 |
| C-PESQ | 6.36 | 7.08 | 6.49 | 7.89 | 6.95 | 2.11 | 2.33 | 2.23 | 2.00 | 2.16 | ∗83.7 | 86.2 | 84.0 | ∗82.7 | ∗84.2 |
| C-STOI | 7.30 | 8.07 | 7.18 | 8.70 | 7.81 | 2.03 | 2.18 | 2.10 | 1.89 | 2.05 | ∗86.8 | ∗89.9 | ∗87.4 | ∗84.7 | ∗87.2 |
| P-PESQ | 8.40 | 10.3 | 9.77 | 8.28 | 9.19 | ∗2.30 | ∗2.55 | ∗2.41 | ∗2.20 | ∗2.37 | ∗82.7 | 86.4 | 84.1 | ∗80.3 | ∗83.4 |
| P-STOI | 8.45 | ∗11.2 | 9.52 | ∗9.74 | ∗9.74 | 2.12 | 2.36 | 2.21 | 2.11 | 2.20 | ∗86.7 | ∗90.0 | ∗87.5 | ∗85.0 | ∗87.3 |
| P-MIX | 8.09 | 9.85 | 9.12 | 8.11 | 8.79 | ∗2.31 | ∗2.57 | ∗2.41 | ∗2.23 | ∗2.38 | ∗84.2 | ∗87.8 | ∗85.5 | ∗81.6 | ∗84.7 |

Input SNR: 6 dB

| Method | SDR [dB] Airp. | SDR Amuse. | SDR Office | SDR Party | SDR Ave. | PESQ Airp. | PESQ Amuse. | PESQ Office | PESQ Party | PESQ Ave. | STOI [%] Airp. | STOI Amuse. | STOI Office | STOI Party | STOI Ave. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| OBS | 7.67 | 4.96 | 6.29 | 10.5 | 7.34 | 2.18 | 2.33 | 2.28 | 2.02 | 2.20 | 92.2 | 93.8 | 92.7 | 91.8 | 92.6 |
| MMSE | 12.1 | 13.6 | 13.4 | 12.6 | 12.9 | 2.54 | 2.68 | 2.63 | 2.49 | 2.58 | 88.9 | 91.2 | 90.4 | 88.6 | 89.8 |
| ML | 13.1 | 14.2 | 14.1 | 13.5 | 13.7 | 2.59 | 2.77 | 2.69 | 2.54 | 2.65 | 91.1 | 93.0 | 92.2 | 89.8 | 91.5 |
| C-PESQ | 11.5 | 11.9 | 11.4 | 12.6 | 11.9 | 2.54 | 2.75 | 2.69 | 2.45 | 2.61 | 90.5 | 91.8 | 90.9 | 89.9 | 90.8 |
| C-STOI | 13.2 | 13.6 | 13.1 | 14.3 | 13.5 | 2.50 | 2.62 | 2.57 | 2.38 | 2.52 | ∗93.4 | ∗94.8 | ∗93.9 | ∗92.8 | ∗93.8 |
| P-PESQ | 12.6 | 13.8 | 13.6 | 12.6 | 13.2 | ∗2.70 | ∗2.89 | ∗2.80 | ∗2.64 | ∗2.76 | 90.2 | 92.1 | 91.2 | 89.1 | 90.6 |
| P-STOI | ∗13.4 | ∗15.3 | ∗14.3 | ∗14.8 | ∗14.4 | 2.49 | 2.69 | 2.60 | 2.45 | 2.56 | ∗93.4 | ∗94.9 | ∗94.0 | ∗92.8 | ∗93.8 |
| P-MIX | 11.5 | 12.3 | 12.1 | 11.6 | 11.9 | ∗2.69 | ∗2.90 | ∗2.79 | ∗2.66 | ∗2.76 | ∗91.5 | ∗93.1 | ∗92.3 | ∗90.4 | ∗91.8 |

Input SNR: 12 dB

| Method | SDR [dB] Airp. | SDR Amuse. | SDR Office | SDR Party | SDR Ave. | PESQ Airp. | PESQ Amuse. | PESQ Office | PESQ Party | PESQ Ave. | STOI [%] Airp. | STOI Amuse. | STOI Office | STOI Party | STOI Ave. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| OBS | 13.6 | 11.0 | 12.3 | 16.4 | 13.3 | 2.61 | 2.76 | 2.72 | 2.47 | 2.64 | 96.1 | 96.9 | 96.4 | 96.2 | 96.4 |
| MMSE | 15.9 | 16.9 | 16.8 | 16.3 | 16.5 | 2.84 | 2.95 | 2.92 | 2.77 | 2.87 | 93.5 | 94.7 | 94.4 | 93.2 | 94.0 |
| ML | 17.5 | 18.0 | 18.0 | 18.1 | 17.9 | 2.95 | 3.09 | 3.03 | 2.88 | 2.98 | 95.5 | 96.3 | 96.0 | 94.9 | 95.7 |
| C-PESQ | 15.5 | 15.8 | 15.3 | 16.3 | 15.7 | 2.95 | ∗3.14 | ∗3.08 | 2.86 | ∗3.01 | 94.2 | 94.9 | 94.4 | 94.0 | 94.4 |
| C-STOI | ∗18.2 | ∗18.6 | ∗18.2 | ∗19.0 | ∗18.5 | 2.94 | 3.05 | 3.01 | 2.81 | 2.95 | ∗96.7 | ∗97.4 | ∗97.0 | ∗96.6 | ∗96.9 |
| P-PESQ | 16.5 | 17.2 | 17.1 | 16.6 | 16.8 | ∗3.04 | ∗3.19 | ∗3.12 | ∗2.97 | ∗3.08 | 94.4 | 95.2 | 94.9 | 93.8 | 94.6 |
| P-STOI | ∗18.2 | ∗19.5 | ∗18.8 | ∗19.7 | ∗19.1 | 2.85 | 3.02 | 2.96 | 2.78 | 2.90 | ∗96.8 | ∗97.5 | ∗97.1 | ∗96.7 | ∗97.0 |
| P-MIX | 13.6 | 13.9 | 13.9 | 13.8 | 13.8 | ∗3.01 | ∗3.18 | ∗3.10 | ∗2.97 | ∗3.07 | 95.3 | 96.0 | 95.7 | 94.7 | 95.4 |

Figure 5 shows the OSQA score improvements evaluated on the test dataset. Both OSQA score improvements increased as the number of updates increased for all SNR conditions. These results suggest that the proposed method is effective at increasing arbitrary OSQA scores, such as the PESQ and STOI.

We also investigated the relationship between the number of updates and MSE using the test dataset. Figure 6 shows the MSE depending on the number of updates.
Under most SNR conditions, the MSE did not decrease despite the OSQA scores increasing. Table II shows the correlation coefficients between OSQA score improvements and MSE values. There was little correlation between PESQ improvement and MSE, and the correlation between STOI improvement and MSE depended on the input SNR condition. Thus, these results suggest that minimization of MSE does not necessarily maximize OSQA scores.

TABLE IV
Objective scores of example results shown in Fig. 7.

| Method | SDR [dB] | PESQ | STOI [%] |
|---|---|---|---|
| OBS | 2.36 | 1.79 | 81.5 |
| MMSE | 9.31 | 2.32 | 80.0 |
| ML | 11.3 | 2.48 | 82.1 |
| P-PESQ | 10.7 | 2.55 | 81.4 |
| P-STOI | 11.2 | 2.40 | 86.3 |
| P-MIX | 11.2 | 2.55 | 83.4 |

Fig. 7. Examples of estimated T-F mask and output signal. Top figures show spectrograms of target source $S_{\omega,\tau}$ (left) and observed signal $X_{\omega,\tau}$ (right). Middle figures show spectrograms of output signal $\hat{S}_{\omega,\tau}$, and bottom figures show estimated T-F mask $\hat{G}_{\omega,\tau}$. White dotted boxes and circles mark areas where noise reduction was made stronger or weaker by the training of P-PESQ and P-STOI, respectively. (a) MMSE, (b) ML, (c) P-PESQ, (d) P-STOI, and (e) P-MIX. (Axes: time [s] and frequency [kHz]; color scale in dB.)

C. Objective evaluation

The source-enhancement performance of the proposed method was compared with those of conventional methods
using three objective measurements: the signal-to-distortion ratio (SDR), PESQ, and STOI. The SDR was defined as

$$\mathrm{SDR\ [dB]} := 10 \log_{10} \frac{\sum_{\tau=1}^{T} \sum_{\omega=1}^{\Omega} |S_{\omega,\tau}|^2}{\sum_{\tau=1}^{T} \sum_{\omega=1}^{\Omega} |S_{\omega,\tau} - \hat{S}_{\omega,\tau}|^2}, \tag{38}$$

and calculated using the BSS-Eval toolbox [54]. These measurements were evaluated on the observed signal (OBS), the MMSE- and ML-based DNN training (MMSE and ML), a T-F-mask selection method to increase the PESQ and STOI [39] (C-PESQ and C-STOI), and the proposed method to increase the PESQ and STOI (P-PESQ and P-STOI). To investigate whether the proposed method enables training of a DNN to increase a metric that consists of multiple OSQA scores, we also trained a DNN to increase a mixed-OSQA score (P-MIX). As a first trial, we mixed the PESQ and the STOI. The mixed-OSQA is defined as

$$Z_{\mathrm{MIX}}(\hat{S}, X) = \gamma Z_{\mathrm{PESQ}}(\hat{S}, X) + (1 - \gamma) Z_{\mathrm{STOI}}(\hat{S}, X).$$

In this trial, to confirm whether multiple OSQA scores increase simultaneously, we set the mixing coefficient to $\gamma = 0.5$ so that both OSQA scores contributed equally to $Z_{\mathrm{MIX}}(\hat{S}, X)$.

Table III lists the evaluation results of each objective measurement for four noise types and four input SNR conditions. The asterisk indicates that the score was significantly higher than both MMSE and ML in a paired one-sided t-test ($\alpha = 0.05$). Under low SNR conditions, the SDRs tended to be higher with the conventional MMSE/ML-based objective functions than with the proposed method. The PESQ of P-PESQ and the STOI of P-STOI were higher than those of MMSE and ML. For these methods, the PESQ and STOI improved by around 0.1 and 2–5%, respectively, and significant differences were observed for all noise and SNR conditions. These results suggest that the proposed method was able to train the DNN source-enhancement function to directly increase black-box OSQA scores.
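The SDR definition in (38) and the mixed-OSQA score can be sketched as follows. This is only an illustration under stated assumptions: the function names are hypothetical, spectrograms are represented as nested lists of complex STFT coefficients, and the code evaluates (38) directly, so its values need not match the output of the BSS-Eval toolbox used in the experiments.

```python
import math


def sdr_db(target, estimate):
    """Evaluate (38): target/estimate are [frame][frequency] lists of complex values."""
    signal_power = 0.0
    error_power = 0.0
    for target_frame, estimate_frame in zip(target, estimate):
        for s, s_hat in zip(target_frame, estimate_frame):
            signal_power += abs(s) ** 2
            error_power += abs(s - s_hat) ** 2
    if error_power == 0.0:
        return math.inf       # perfect reconstruction
    if signal_power == 0.0:
        return -math.inf      # silent target with a non-zero error
    return 10.0 * math.log10(signal_power / error_power)


def mixed_osqa(z_pesq, z_stoi, gamma=0.5):
    """Convex combination of two normalized OSQA scores (both on a 0-100 scale)."""
    return gamma * z_pesq + (1.0 - gamma) * z_stoi


# Toy example: the estimate is the target scaled by 0.9, i.e. a 10 % amplitude error.
target = [[1 + 0j, 0 + 1j], [2 + 0j, 0j]]
estimate = [[0.9 * s for s in frame] for frame in target]
example_sdr = sdr_db(target, estimate)
```

In the toy example the error energy is 1 % of the signal energy, so the SDR is about 20 dB. With `gamma=0.5`, `mixed_osqa` weights both normalized scores equally, as in the P-MIX trial.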
In the mixed-OSQA experiments, both the PESQ and STOI of P-MIX were higher than those of MMSE and ML under almost all noise and SNR conditions. Compared with the single-OSQA results (i.e., P-PESQ and P-STOI), P-MIX achieved almost the same or a slightly lower PESQ than P-PESQ and almost the same or a slightly lower STOI than P-STOI. In addition, P-MIX achieved a higher STOI than P-PESQ and a higher PESQ than P-STOI. These results suggest that the use of the mixed-OSQA would be an effective way to increase multiple perceptual qualities.

Table III also shows that the proposed method outperformed the T-F mask selection-based methods [39] in terms of the target OSQA under almost all noise types and SNR conditions. These favorable results are likely due to the flexibility of the T-F mask estimation achieved by the proposed method. In this experiment, the number of T-F mask templates (= 128) was larger than that used in the previous work (= 32) [39]. However, since the T-F masks were generated by a combination of a finite number of templates, the patterns of the T-F mask were still limited. These results suggest that adopting the policy-gradient method to optimize the parameters of a continuous PDF of the T-F mask processing improved the flexibility of the T-F mask estimation.

Fig. 8. Evaluation results of sound-quality test according to ITU-T P.835 (S-MOS, N-MOS, and G-MOS for ML, P-PESQ, and P-STOI). Bar graphs and error bars indicate the average and standard error, respectively. Asterisks indicate significant differences observed in a paired one-sided t-test.

Fig. 9. Evaluation results of word-intelligibility test (intelligibility score [%] for ML, P-PESQ, and P-STOI). Asterisks indicate significant differences observed in an unpaired one-sided t-test.
Figure 7 shows examples of the estimated T-F masks and output signals, and Table IV lists their objective scores. The SNR of the observed signal was adjusted to 0 dB using amusement park noise. Figure 7 shows that the estimated T-F masks reflect the characteristics of each objective function. In comparison to the results of MMSE and ML, which reduced the distortion of the target source on average, the T-F mask estimated by P-PESQ strongly reduced the residual noise, even when it distorted the target sound at middle/high frequencies (e.g., Fig. 7, white dotted box), and achieved the best PESQ. In contrast, the T-F mask estimated by P-STOI reduced noise only weakly to avoid distorting the target source, even when the noise remained in the non-speech frames (e.g., Fig. 7, white dotted circle), and achieved the best STOI. This may be because the residual noise degrades the sound quality and the distortion of the target source degrades speech intelligibility. The T-F mask estimated by P-MIX combined both characteristics and mitigated the disadvantages of P-PESQ and P-STOI, and both OSQA scores were higher than those of ML and MMSE. Namely, speech distortion at middle/high frequencies was reduced (e.g., Fig. 7, white dotted box), and residual noise in the non-speech frames was reduced (e.g., Fig. 7, white dotted circle).

D. Subjective evaluation

1) Sound quality evaluation: To investigate the sound quality of the output signals, subjective speech-quality tests were conducted according to ITU-T P.835 [55]. In the tests, the participants rated three different factors in the samples:

• Speech mean-opinion-score (S-MOS): the speech sample was rated 5–not distorted, 4–slightly distorted, 3–somewhat distorted, 2–fairly distorted, or 1–very distorted.
• Subjective noise MOS (N-MOS): the background of the sample was 5–not noticeable, 4–slightly noticeable, 3–noticeable but not intrusive, 2–somewhat intrusive, or 1–very intrusive.
• Overall MOS (G-MOS): the sound quality of the sample was 5–excellent, 4–good, 3–fair, 2–poor, or 1–bad.

Sixteen participants evaluated the sound quality of the output signals of ML, P-PESQ, and P-STOI. The participants evaluated 20 files for each method; the 20 files consisted of five randomly selected files from the test dataset for each of the four types of noise. The input SNR was 6 dB. Figure 8 shows the results of the subjective tests. For all factors, P-PESQ achieved a higher score than ML, and statistically significant differences from ML were observed in a paired one-sided t-test ($p < 0.05$). This result suggests that participants may have perceived a degradation of speech quality caused by both the speech distortion and the residual noise in speech frames in the output signal of ML. In addition, although there was no statistically significant difference between P-PESQ and P-STOI in terms of the S-MOS score, the N-MOS score of P-STOI was significantly lower than that of P-PESQ. Thus, the G-MOS score of P-STOI was also lower than that of P-PESQ. This is likely because P-STOI reduced noise only weakly to avoid distorting the target source, even when the noise remained in the non-speech frames, as shown in Sec. IV-C.

2) Speech intelligibility test: We conducted a word-intelligibility test to investigate speech intelligibility. We selected 50 low-familiarity words from the familiarity-controlled word lists 2003 (FW03) [56] as the speech test dataset. The selected dataset consisted of Japanese four-mora words whose accent type was Low-High-High-High. The noisy test dataset was created by adding randomly selected noise at an SNR of 6 dB from the noisy dataset that was used in the objective evaluation. Sixteen participants attempted to write a phonetic transcription for the output signals of ML, P-PESQ, and P-STOI. The percentage of correct answers was used as the intelligibility score.
Figure 9 shows the intelligibility score of each method. P-STOI achieved the highest score. In addition, statistically significant differences from ML were observed in an unpaired one-sided t-test ($p < 0.05$).

From both the sound-quality and speech-intelligibility tests, we found that the proposed method could improve the specific hearing quality corresponding to the OSQA score used as the objective function.

V. CONCLUSIONS

We proposed a training method for the DNN-based source-enhancement function to increase OSQA scores such as the PESQ. The difficulty is that the gradient of OSQA scores cannot be calculated analytically by simply applying the back-propagation algorithm because most OSQA scores are black boxes. To calculate the gradient of the OSQA-based objective function, we formulated a DNN-optimization scheme on the basis of the policy-gradient method. The experiments revealed that 1) the DNN-based source-enhancement function can be trained using the gradient of the OSQA obtained with the policy-gradient method, and 2) the OSQA score and the specific hearing quality corresponding to the OSQA score used as the objective function improved. Therefore, it can be concluded that this method makes it possible to use not only analytical objective functions but also black-box functions for the training of the DNN-based source-enhancement function.

Although we focused on maximization of OSQA in this study, the proposed method can potentially increase other black-box measurements. In the future, we will aim to adopt the proposed method to increase other black-box objective measures such as the subjective score obtained from a "human-in-the-loop" audio system [57] and the word accuracy of a black-box automatic-speech-recognition system [58]. We found that both the PESQ and STOI could increase simultaneously by mixing multiple OSQA scores as an objective function.
In the future, we will also investigate the optimality of the OSQA score and its mixing ratio for the proposed method.

References

[1] J. Benesty, S. Makino, and J. Chen, Eds., “Speech enhancement,” Springer, 2005.
[2] Y. Ephraim and D. Malah, “Speech enhancement using a minimum mean-square error short-time spectral amplitude estimator,” IEEE Trans. Audio, Speech and Language Processing, pp. 1109–1121, 1984.
[3] R. Zelinski, “A microphone array with adaptive post-filtering for noise reduction in reverberant rooms,” in Proc. ICASSP, pp. 2578–2581, 1988.
[4] Y. Hioka, K. Furuya, K. Kobayashi, K. Niwa, and Y. Haneda, “Underdetermined sound source separation using power spectrum density estimated by combination of directivity gain,” IEEE Trans. Audio, Speech and Language Processing, pp. 1240–1250, 2013.
[5] K. Niwa, Y. Hioka, and K. Kobayashi, “Optimal Microphone Array Observation for Clear Recording of Distant Sound Sources,” IEEE/ACM Trans. Audio, Speech and Language Processing, pp. 1785–1795, 2016.
[6] L. Lightburn, E. D. Sena, A. Moore, P. A. Naylor, and M. Brookes, “Improving the perceptual quality of ideal binary masked speech,” in Proc. ICASSP, 2017.
[7] T. Yoshioka, A. Sehr, M. Delcroix, K. Kinoshita, R. Maas, T. Nakatani, and W. Kellermann, “Making machines understand us in reverberant rooms: robustness against reverberation for automatic speech recognition,” IEEE Signal Processing Magazine, pp. 114–126, 2012.
[8] A. Narayanan and D. Wang, “Ideal ratio mask estimation using deep neural networks for robust speech recognition,” in Proc. ICASSP, 2013.
[9] T. Ochiai, S. Watanabe, T. Hori, and J. R. Hershey, “Multichannel End-to-end Speech Recognition,” in Proc. ICML, 2017.
[10] K. Kobayashi, Y. Haneda, K. Furuya, and A. Kataoka, “A hands-free unit with noise reduction by using adaptive beamformer,” IEEE Trans. on Consumer Electronics, Vol. 54-1, 2008.
[11] Y. Hioka, K. Furuya, K. Kobayashi, S.
Sakauchi, and Y. Haneda, “Angular region-wise speech enhancement for hands-free speakerphone,” IEEE Trans. on Consumer Electronics, Vol. 58-4, 2012.
[12] B. C. J. Moore, “Speech processing for the hearing-impaired: successes, failures, and implications for speech mechanisms,” Speech Communication, Vol. 41, Issue 1, pp. 81–91, 2003.
[13] D. L. Wang, “Time-frequency masking for speech separation and its potential for hearing aid design,” Trends in Amplification, Vol. 12, pp. 332–353, 2008.
[14] T. Zhang, F. Mustiere, and C. Micheyl, “Intelligent Hearing Aids: The Next Revolution,” in Proc. EMBC, 2016.
[15] Y. Zhao, D. Wang, I. Merks, and T. Zhang, “DNN-based enhancement of noisy and reverberant speech,” in Proc. ICASSP, 2016.
[16] R. Oldfield, B. Shirley, and J. Spille, “Object-based audio for interactive football broadcast,” Multimedia Tools and Applications, Vol. 74, pp. 2717–2741, 2015.
[17] Y. Koizumi, K. Niwa, Y. Hioka, K. Kobayashi, and H. Ohmuro, “Informative acoustic feature selection to maximize mutual information for collecting target sources,” IEEE/ACM Trans. Audio, Speech and Language Processing, pp. 768–779, 2017.
[18] Y. LeCun, Y. Bengio, and G. Hinton, “Deep Learning,” Nature, 521, pp. 436–444, 2015.
[19] F. Weninger, H. Erdogan, S. Watanabe, E. Vincent, J. L. Roux, J. R. Hershey, and B. Schuller, “Speech Enhancement with LSTM Recurrent Neural Networks and its Application to Noise-Robust ASR,” in Proc. LVA/ICA, 2015.
[20] H. Erdogan, J. R. Hershey, S. Watanabe, and J. L. Roux, “Phase-sensitive and recognition-boosted speech separation using deep recurrent neural networks,” in Proc. ICASSP, 2015.
[21] D. S. Williamson and D. L. Wang, “Time-frequency masking in the complex domain for speech dereverberation and denoising,” IEEE/ACM Trans. Audio, Speech and Language Processing, 2017.
[22] Y. Zhao, B. Xu, R. Giri, and T.
Zhang, “Perceptually Guided Speech Enhancement using deep neural networks,” in Proc. ICASSP, 2018.
[23] Y. Xu, J. Du, L. R. Dai, and C. H. Lee, “An experimental study on speech enhancement based on deep neural networks,” IEEE Signal Processing Letters, pp. 65–68, 2014.
[24] Y. Xu, J. Du, L. R. Dai, and C. H. Lee, “A regression approach to speech enhancement based on deep neural networks,” IEEE/ACM Trans. Audio, Speech and Language Processing, pp. 7–19, 2015.
[25] Y. Xu, J. Du, Z. Huang, L. R. Dai, and C. H. Lee, “Multi-objective learning and mask-based post-processing for deep neural network based speech enhancement,” in Proc. INTERSPEECH, 2015.
[26] T. Gao, J. Du, L. R. Dai, and C. H. Lee, “SNR-Based Progressive Learning of Deep Neural Network for Speech Enhancement,” in Proc. INTERSPEECH, 2016.
[27] Q. Wang, J. Du, L. R. Dai, and C. H. Lee, “A multiobjective learning and ensembling approach to high-performance speech enhancement with compact neural network architectures,” IEEE/ACM Trans. Audio, Speech and Language Processing, pp. 1181–1193, 2018.
[28] T. Kawase, K. Niwa, K. Kobayashi, and Y. Hioka, “Application of neural network to source PSD estimation for Wiener filter based sound source separation,” in Proc. IWAENC, 2016.
[29] K. Niwa, Y. Koizumi, T. Kawase, K. Kobayashi, and Y. Hioka, “Supervised Source Enhancement Composed of Non-negative Auto-Encoders and Complementarity Subtraction,” in Proc. ICASSP, 2017.
[30] P. Smaragdis and S. Venkataramani, “A Neural Network Alternative to Non-Negative Audio Models,” in Proc. ICASSP, 2017.
[31] L. Chai, J. Du, and Y. Wang, “Gaussian Density Guided Deep Neural Network For Single-Channel Speech Enhancement,” in Proc. MLSP, 2017.
[32] K. Kinoshita, M. Delcroix, A. Ogawa, T. Higuchi, and T. Nakatani, “Deep Mixture Density Network for Statistical Model-based Feature Enhancement,” in Proc. ICASSP, 2017.
[33] A. A. Nugraha, A. Liutkus, and E.
Vincent, “Multichannel Audio Source Separation With Deep Neural Networks,” IEEE/ACM Trans. Audio, Speech and Language Processing, 2016.
[34] J. Hershey, Z. Chen, J. L. Roux, and S. Watanabe, “Deep clustering: Discriminative embeddings for segmentation and separation,” in Proc. ICASSP, 2016.
[35] S. Pascual, A. Bonafonte, and J. Serra, “SEGAN: Speech Enhancement Generative Adversarial Network,” in Proc. INTERSPEECH, 2017.
[36] D. E. Rumelhart, G. E. Hinton, and R. J. Williams, “Learning representations by back-propagating errors,” Nature, 323, pp. 533–536, 1986.
[37] ITU-T Recommendation P.862, “Perceptual evaluation of speech quality (PESQ): An objective method for end-to-end speech quality assessment of narrow-band telephone networks and speech codecs,” 2001.
[38] C. H. Taal, R. C. Hendriks, R. Heusdens, and J. Jensen, “An Algorithm for Intelligibility Prediction of Time-Frequency Weighted Noisy Speech,” IEEE Transactions on Audio, Speech and Language Processing, Vol. 19, pp. 2125–2136, 2011.
[39] Y. Koizumi, K. Niwa, Y. Hioka, K. Kobayashi, and Y. Haneda, “DNN-based Source Enhancement Self-optimized by Reinforcement Learning using Sound Quality Measurements,” in Proc. ICASSP, 2017.
[40] R. S. Sutton and A. G. Barto, “Reinforcement Learning: An Introduction,” A Bradford Book, 1998.
[41] D. Silver, A. Huang, C. J. Maddison, A. Guez, L. Sifre, G. Driessche, J. Schrittwieser, I. Antonoglou, V. Panneershelvam, M. Lanctot, S. Dieleman, D. Grewe, J. Nham, N. Kalchbrenner, I. Sutskever, T. Lillicrap, M. Leach, K. Kavukcuoglu, T. Graepel, and D. Hassabis, “Mastering the game of Go with deep neural networks and tree search,” Nature, pp. 484–489, 2016.
[42] R. J. Williams, “Simple Statistical Gradient-Following Algorithms for Connectionist Reinforcement Learning,” Machine Learning, Vol. 8, 1992.
[43] R. S. Sutton, D. McAllester, S. Singh, and Y.
Mansour, “Policy Gradient Methods for Reinforcement Learning with Function Approximation,” in Proc. NIPS, 1999.
[44] M. Matsumoto and T. Nishimura, “Mersenne Twister: A 623-dimensionally Equidistributed Uniform Pseudorandom Number Generator,” ACM Trans. on Modeling and Computer Simulations, 1998.
[45] A. Kurematsu, K. Takeda, Y. Sagisaka, S. Katagiri, H. Kuwabara, and K. Shikano, “ATR Japanese speech database as a tool of speech recognition and synthesis,” Speech Communication, pp. 357–363, 1990.
[46] J. Barker, R. Marxer, E. Vincent, and S. Watanabe, “The third ‘CHiME’ speech separation and recognition challenge: dataset, task and baseline,” in Proc. ASRU, 2015.
[47] D. P. Kingma and J. Ba, “Adam: A Method for Stochastic Optimization,” in Proc. ICLR, 2015.
[48] F. Weninger, J. R. Hershey, J. L. Roux, and B. Schuller, “Discriminatively Trained Recurrent Neural Networks for Single-Channel Speech Separation,” in Proc. GlobalSIP, 2014.
[49] F. Seide, G. Li, X. Chen, and D. Yu, “Feature engineering in context-dependent deep neural networks for conversational speech transcription,” in Proc. ASRU, pp. 24–29, 2011.
[50] R. Miyazaki, H. Saruwatari, T. Inoue, Y. Takahashi, K. Shikano, and K. Kondo, “Musical-Noise-Free Speech Enhancement Based on Optimized Iterative Spectral Subtraction,” IEEE Transactions on Audio, Speech and Language Processing, Vol. 20, pp. 2080–2094, 2012.
[51] I. Cohen, “Optimal Speech Enhancement Under Signal Presence Uncertainty Using Log-Spectral Amplitude Estimator,” IEEE Signal Processing Letters, Vol. 9, pp. 113–116, 2002.
[52] E. Vincent, “An Experimental Evaluation of Wiener Filter Smoothing Techniques Applied to Under-Determined Audio Source Separation,” in Proc. LVA/ICA, 2010.
[53] K. Niwa, Y. Hioka, and K. Kobayashi, “Post-Filter Design for Speech Enhancement in Various Noisy Environments,” in Proc. IWAENC, 2014.
[54] E. Vincent, R. Gribonval, and C.
Fevotte, “Performance measurement in blind audio source separation,” IEEE Trans. Audio, Speech and Language Processing, 14(4), pp. 1462–1469, 2006.
[55] ITU-T Recommendation P.835, “Subjective test methodology for evaluating speech communication systems that include noise suppression algorithm,” 2003.
[56] S. Amano, S. Sakamoto, T. Kondo, and Y. Suzuki, “Development of familiarity-controlled word lists 2003 (FW03) to assess spoken-word intelligibility in Japanese,” Speech Communication, pp. 76–82, 2009.
[57] K. Niwa, K. Ohtani, and K. Takeda, “Music Staging AI,” in Proc. ICASSP, 2017.
[58] S. Watanabe and J. L. Roux, “Black Box Optimization for Automatic Speech Recognition,” in Proc. ICASSP, 2014.

Appendix

A. Derivation of (25)

We describe the derivation of (25). First, as with (19) and (20), the objective function is defined as the expectation of $B(\hat{S}, X)$ as

$$J(\Theta) = \mathbb{E}_{\hat{S}, X}\left[ B(\hat{S}, X) \right], \tag{39}$$

$$= \int p(X) \int B(\hat{S}, X)\, p(\hat{S} \mid X, \Theta)\, d\hat{S}\, dX. \tag{40}$$

Then, the gradient of (40) can be calculated using the log-derivative trick as

$$\nabla_\Theta J(\Theta) = \mathbb{E}_{X}\left[ \mathbb{E}_{\hat{S} \mid X}\left[ B(\hat{S}, X)\, \nabla_\Theta \ln p(\hat{S} \mid X, \Theta) \right] \right]. \tag{41}$$

By approximating the expectation over $X$ by the average over $I$ utterances and that over $\hat{S}$ by the average over $K$ samplings, (41) can be calculated as

$$\nabla_\Theta J(\Theta) \approx \frac{1}{I} \sum_{i=1}^{I} \frac{1}{K} \sum_{k=1}^{K} B(\hat{S}^{(i,k)}, X^{(i)})\, \nabla_\Theta \ln p(\hat{S}^{(i,k)} \mid X^{(i)}, \Theta). \tag{42}$$

We assume that the output signal in each time frame is calculated independently. Then, $\ln p(\hat{S} \mid X, \Theta)$ can be rewritten as

$$\ln p(\hat{S} \mid X, \Theta) = \sum_{\tau=1}^{T} \ln p(\hat{S}_\tau \mid X_\tau, \Theta), \tag{43}$$

and its gradient can be calculated by

$$\nabla_\Theta \ln p(\hat{S}^{(i,k)} \mid X^{(i)}, \Theta) = \sum_{\tau=1}^{T^{(i)}} \nabla_\Theta \ln p(\hat{S}^{(i,k)}_\tau \mid X^{(i)}_\tau, \Theta), \tag{44}$$

$$\approx \frac{1}{T^{(i)}} \sum_{\tau=1}^{T^{(i)}} \nabla_\Theta \ln p(\hat{S}^{(i,k)}_\tau \mid X^{(i)}_\tau, \Theta). \tag{45}$$

To normalize the difference in frame length $T^{(i)}$, we multiplied the original gradient by $1/T^{(i)}$.
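As an illustration of the log-derivative trick in (41) and its sampling approximation in (42), the following minimal sketch estimates the gradient of an expected black-box reward for a toy problem. It is not the paper's training procedure: the "policy" here is a scalar Gaussian whose mean `mu` is the only parameter (standing in for $\Theta$), the reward function is a hypothetical stand-in for the OSQA-based $B(\hat{S}, X)$, and all names are assumptions made for this example.

```python
import random


def score_function_gradient(mu, sigma, reward, num_samples, rng):
    """Monte Carlo estimate of d/dmu E_{s ~ N(mu, sigma^2)}[reward(s)].

    Uses grad_mu ln p(s | mu) = (s - mu) / sigma^2, so the reward itself
    is only evaluated, never differentiated (it may be a black box).
    """
    total = 0.0
    for _ in range(num_samples):
        s = rng.gauss(mu, sigma)              # sample an "output" from the policy
        grad_log_p = (s - mu) / sigma ** 2    # score function of the Gaussian
        total += reward(s) * grad_log_p
    return total / num_samples


def black_box_reward(s):
    """Hypothetical reward, highest at s = 1; treated as non-differentiable."""
    return -(s - 1.0) ** 2


rng = random.Random(0)  # fixed seed for reproducibility
estimate = score_function_gradient(0.0, 0.5, black_box_reward, 200_000, rng)
```

For this toy reward, the expectation is $-((\mu - 1)^2 + \sigma^2)$, so the analytic gradient at $\mu = 0$ is 2, and the sampled estimate should land close to that value. The estimator has high variance, which is one reason many samples (here the inner loop, in the paper the $I \times K$ samplings) are averaged.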
The log-likelihood function $\ln p(\hat{S}^{(i,k)}_{\tau} \mid X^{(i)}_{\tau}, \Theta)$ can be expanded as

$$\ln p(\hat{S}^{(i,k)}_{\tau} \mid X^{(i)}_{\tau}, \Theta) \overset{c}{=} -\sum_{\omega=1}^{\Omega} \left\{ \ln (\sigma^{2}_{\omega,\tau})^{(i)} + \frac{L^{(i,k)}_{\Re,\omega,\tau} + L^{(i,k)}_{\Im,\omega,\tau}}{2 (\sigma^{2}_{\omega,\tau})^{(i)}} \right\}, \tag{46}$$

$$L^{(i,k)}_{\Re,\omega,\tau} = \left( \hat{G}^{(i,k)}_{\omega,\tau}\, \Re\!\left(X^{(i)}_{\omega,\tau}\right) - \hat{G}^{(i)}_{\omega,\tau}\, \Re\!\left(X^{(i)}_{\omega,\tau}\right) \right)^{2}, \tag{47}$$

$$L^{(i,k)}_{\Im,\omega,\tau} = \left( \hat{G}^{(i,k)}_{\omega,\tau}\, \Im\!\left(X^{(i)}_{\omega,\tau}\right) - \hat{G}^{(i)}_{\omega,\tau}\, \Im\!\left(X^{(i)}_{\omega,\tau}\right) \right)^{2}, \tag{48}$$

where $\overset{c}{=}$ denotes equality up to a constant, $\hat{G}^{(i)}_{\omega,\tau}$ and $(\sigma^{2}_{\omega,\tau})^{(i)}$ are estimated by forward-propagation of the DNN as in (12)–(16), and $\hat{G}^{(i,k)}_{\omega,\tau}$ is given by the sampling algorithm of the proposed method. With the above procedure, $\nabla_{\Theta} J(\Theta)$ can be calculated by simply applying back-propagation with respect to $\hat{G}^{(i)}_{\omega,\tau}$ and $(\sigma^{2}_{\omega,\tau})^{(i)}$. Please note that since the simulated output signal $\hat{S}^{(i,k)}_{\tau}$ is treated as “label data”, back-propagation is not applied to $\hat{G}^{(i,k)}_{\omega,\tau}$.

Yuma Koizumi (M’15) received the B.S. and M.S. degrees from Hosei University, Tokyo, in 2012 and 2014, and the Ph.D. degree from the University of Electro-Communications in 2017. Since joining the Nippon Telegraph and Telephone Corporation (NTT) in 2014, he has been researching acoustic signal processing and machine learning. He was awarded the IPSJ Yamashita SIG Research Award from the Information Processing Society of Japan (IPSJ) in 2014 and the Awaya Prize from the Acoustical Society of Japan (ASJ) in 2017. He is a member of the ASJ and the Institute of Electronics, Information and Communication Engineers (IEICE).

Kenta Niwa (M’09) received his B.E., M.E., and Ph.D. in information science from Nagoya University in 2006, 2008, and 2014. Since joining NTT in 2008, he has been engaged in research on microphone array signal processing as a research engineer at NTT Media Intelligence Laboratories. Since 2017, he has also been a visiting researcher at Victoria University of Wellington, New Zealand. He was awarded the Awaya Prize by the ASJ in 2010.
He is a member of the ASJ and the IEICE.

Yusuke Hioka (S’04-M’05-SM’12) received his B.E., M.E., and Ph.D. degrees in engineering in 2000, 2002, and 2005 from Keio University, Yokohama, Japan. From 2005 to 2012, he was with the NTT Cyber Space Laboratories (now NTT Media Intelligence Laboratories), NTT, in Tokyo. From 2010 to 2011, he was also a visiting researcher at Victoria University of Wellington, New Zealand. In 2013, he permanently moved to New Zealand and was appointed as a Lecturer at the University of Canterbury, Christchurch. Then, in 2014, he joined the Department of Mechanical Engineering, the University of Auckland, Auckland, where he is currently a Senior Lecturer. His research interests include audio and acoustic signal processing, especially microphone arrays, room acoustics, human auditory perception, and psychoacoustics. He is a Senior Member of IEEE and a Member of the Acoustical Society of New Zealand, the ASJ, and the IEICE.

Kazunori Kobayashi received the B.E., M.E., and Ph.D. degrees in Electrical and Electronic System Engineering from Nagaoka University of Technology in 1997, 1999, and 2003. Since joining NTT in 1999, he has been engaged in research on microphone arrays, acoustic echo cancellers, and hands-free systems. He is now a Senior Research Engineer at NTT Media Intelligence Laboratories. He is a member of the ASJ and the IEICE.

Yoichi Haneda (M’97-SM’06) received the B.S., M.S., and Ph.D. degrees from Tohoku University, Sendai, in 1987, 1989, and 1999. From 1989 to 2012, he was with NTT, Japan. In 2012, he joined the University of Electro-Communications, where he is a Professor. His research interests include modeling of acoustic transfer functions, microphone arrays, loudspeaker arrays, and acoustic echo cancellers. He received paper awards from the ASJ and from the IEICE of Japan in 2002. Dr. Haneda is a senior member of IEICE, and a member of AES, ASA, and ASJ.
