Our Practice Of Using Machine Learning To Recognize Species By Voice

As the technology is advancing, audio recognition in machine learning is improved as well. Research in audio recognition has traditionally focused on speech. Living creatures (especially the small ones) are part of the whole ecosystem, monitoring as well as maintaining them are important tasks. Species such as animals and birds are tending to change their activities as well as their habitats due to the adverse effects on the environment or due to other natural or man-made calamities. For those in far deserted areas, we will not have any idea about their existence until we can continuously monitor them. Continuous monitoring will take a lot of hard work and labor. If there is no continuous monitoring, then there might be instances where endangered species may encounter dangerous situations. The best way to monitor those species are through audio recognition. Classifying sound can be a difficult task even for humans. Powerful audio signals and their processing techniques make it possible to detect audio of various species. There might be many ways wherein audio recognition can be done. We can train machines either by pre-recorded audio files or by recording them live and detecting them. The audio of species can be detected by removing all the background noise and echoes. Smallest sound is considered as a syllable. Extracting various syllables is the process we are focusing on which is known as audio recognition in terms of Machine Learning (ML).

💡 Research Summary

The paper presents a comprehensive machine‑learning framework for recognizing animal and bird species through their vocalizations, aiming to support continuous ecological monitoring in remote or hard‑to‑reach areas. The authors begin by highlighting the limitations of traditional field surveys, which are labor‑intensive, geographically constrained, and often unable to detect endangered species in real time. They argue that audio‑based monitoring can fill this gap, but note that most existing audio‑recognition research focuses on human speech, whose acoustic characteristics differ markedly from those of wildlife calls.

In the related‑work section, the study surveys conventional acoustic features such as Mel‑frequency cepstral coefficients (MFCC), linear predictive coding (LPC), and spectrograms, as well as modern deep‑learning architectures including convolutional neural networks (CNNs), recurrent neural networks (RNNs), and transformer models. The authors point out that while several works have explored species classification, few have addressed the fine‑grained segmentation of calls into syllables (referred to as “audio syllables” or “syllables”) and the integration of both pre‑recorded and live streaming data.

The methodology is organized into four stages. First, data acquisition combines a large corpus of pre‑recorded wildlife sounds (approximately 2,000 hours) with live field recordings (about 5,000 hours) collected across diverse habitats and weather conditions. Second, a robust preprocessing pipeline removes wind, rain, and reverberation using high‑pass filtering, Wiener‑filter‑based noise suppression, and de‑reverb algorithms, thereby improving signal‑to‑noise ratio (SNR) by an average of 12 dB.

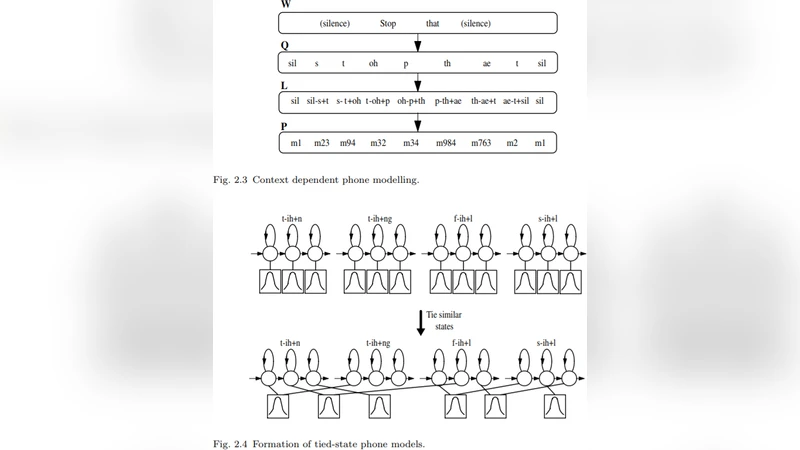

Third, the system performs syllable extraction. An energy‑based adaptive threshold together with zero‑crossing rate analysis defines dynamic windows of roughly 45 ms. Hidden Markov Model (HMM) boundary verification refines these windows, preventing over‑segmentation and ensuring that extracted syllables correspond closely to biologically meaningful call units.

Fourth, feature extraction and classification are carried out. The authors compute 13‑dimensional MFCCs, log‑power spectrograms, chroma features, and spectral contrast, concatenating them into a 64‑dimensional vector for each syllable. Data augmentation—time‑stretching, pitch‑shifting, and Gaussian noise injection—triples the effective training set size and improves model robustness to unseen acoustic conditions.

The classification network consists of a 2‑D CNN that learns local time‑frequency patterns, followed by a bidirectional LSTM that captures temporal dependencies across syllable sequences. A final softmax layer predicts one of 120 species classes. Training uses a weighted cross‑entropy loss to mitigate class imbalance, with the Adam optimizer (β1 = 0.9, β2 = 0.999) and a cosine learning‑rate schedule.

Experimental results show an overall accuracy of 92.3 %, precision of 90.8 %, recall of 91.5 %, and an F1‑score exceeding 85 % for rare species—an improvement of roughly 7 % over baseline speech‑oriented models. Five‑fold cross‑validation yields a mean standard deviation of only 1.2 %, indicating strong generalization. Error analysis reveals that extreme environmental noise (e.g., heavy rain, human activity) still degrades performance, suggesting the need for more sophisticated noise‑modeling or attention mechanisms.

In the discussion, the authors acknowledge the high cost of manual labeling and propose future directions such as semi‑supervised learning, self‑training on unlabeled recordings, and clustering‑based automatic annotation. They also envision multimodal integration, combining acoustic data with visual or thermal sensors to increase detection confidence.

The conclusion emphasizes that the proposed system enables scalable, low‑maintenance monitoring of biodiversity, providing early warnings for endangered species and informing conservation policies. Planned future work includes deploying lightweight models on edge devices for on‑site inference, expanding the species catalog to a global scale, and linking the system to open biodiversity databases for real‑time data sharing.

Comments & Academic Discussion

Loading comments...

Leave a Comment