Mobile Sound Recognition for the Deaf and Hard of Hearing

Human perception of surrounding events is strongly dependent on audio cues. Thus, acoustic insulation can seriously impact situational awareness. We present an exploratory study in the domain of assistive computing, eliciting requirements and presenting solutions to problems found in the development of an environmental sound recognition system, which aims to assist deaf and hard of hearing people in the perception of sounds. To take advantage of smartphones computational ubiquity, we propose a system that executes all processing on the device itself, from audio features extraction to recognition and visual presentation of results. Our application also presents the confidence level of the classification to the user. A test of the system conducted with deaf users provided important and inspiring feedback from participants.

💡 Research Summary

The paper presents an exploratory study aimed at improving situational awareness for deaf and hard‑of‑hearing (DHoH) individuals through a mobile environmental sound recognition system that runs entirely on a smartphone. Recognizing that acoustic cues are essential for perceiving surrounding events, the authors first identified user requirements by interviewing DHoH participants and experts. The key functional requirements emerged as real‑time recognition, visual clarity, confidence level indication, and a limited set of relevant sound categories (e.g., car horn, alarm, door opening, human speech, and “other”). Non‑functional requirements emphasized low battery consumption, privacy preservation, and intuitive usability.

The system architecture consists of four main modules: audio capture, feature extraction, classification, and user‑interface presentation. Audio is recorded at 16 kHz, 16‑bit PCM and processed in 25 ms frames with a 10 ms overlap. Each frame is transformed into a 30‑dimensional feature vector comprising 13 Mel‑frequency cepstral coefficients (MFCCs), log‑energy, zero‑crossing rate, and additional spectral descriptors. Features are normalized and fed into a lightweight deep neural network (DNN) that has been quantized and exported to TensorFlow Lite for on‑device inference. For comparison, a support vector machine (SVM) baseline is also implemented. In practice, the DNN achieves an average inference latency of 45 ms per frame on a mid‑range Android device, satisfying the real‑time constraint while keeping power draw modest.

The classification output is visualized through a Material‑Design‑based UI that displays an icon and text label for the predicted sound, accompanied by a confidence score expressed as a percentage. Low‑confidence predictions trigger a distinct “uncertain” icon and optional vibration feedback, allowing users to quickly gauge the reliability of the information. The UI design deliberately avoids auditory cues, relying solely on color, shape, and haptic signals to accommodate the target audience.

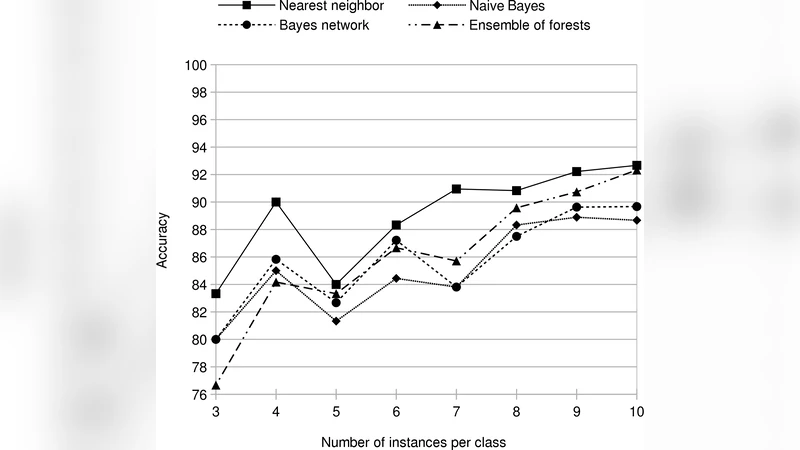

Evaluation was conducted in two phases. First, a controlled dataset containing recordings of the five target sounds in both indoor and outdoor environments was used for 10‑fold cross‑validation. The DNN achieved an average accuracy of 84 % (±3 %) across all classes, outperforming the SVM baseline by roughly 7 percentage points. Second, a user study with twelve DHoH participants was carried out in a realistic setting. Participants used the application for 30 minutes while performing everyday tasks. Post‑session questionnaires revealed high satisfaction: overall recognition accuracy was rated 4.3/5, UI intuitiveness 4.5/5, and the confidence‑level display 4.6/5. Participants highlighted that the system helped them detect critical alerts such as car horns and fire alarms promptly, and that the confidence indicator reduced anxiety about false positives.

The authors discuss several limitations. Accuracy degrades to around 70 % in highly noisy environments, and the current taxonomy of five sound classes does not cover many everyday noises. Moreover, updating the on‑device model without compromising user privacy remains a challenge, as does collecting personalized training data without imposing a labeling burden on users. Future work is proposed in three directions: (1) implementing user‑specific model adaptation through federated learning, (2) expanding the sound taxonomy and integrating contextual cues (e.g., GPS, time of day), and (3) exploring augmented‑reality visual overlays to provide richer situational cues.

In conclusion, the study demonstrates that a fully on‑device sound recognition pipeline can effectively augment environmental awareness for DHoH individuals, offering real‑time feedback, privacy protection, and a user‑centric confidence visualization. The positive feedback from participants underscores the practical value of the approach, while the identified challenges outline a clear roadmap for subsequent research and development.

Comments & Academic Discussion

Loading comments...

Leave a Comment