Bayesian prediction for physical models with application to the optimization of the synthesis of pharmaceutical products using chemical kinetics

Quality control in industrial processes is increasingly making use of prior scientific knowledge, often encoded in physical models that require numerical approximation. Statistical prediction, and subsequent optimization, is key to ensuring the proce…

Authors: Antony Overstall, David Woods, Kieran Martin

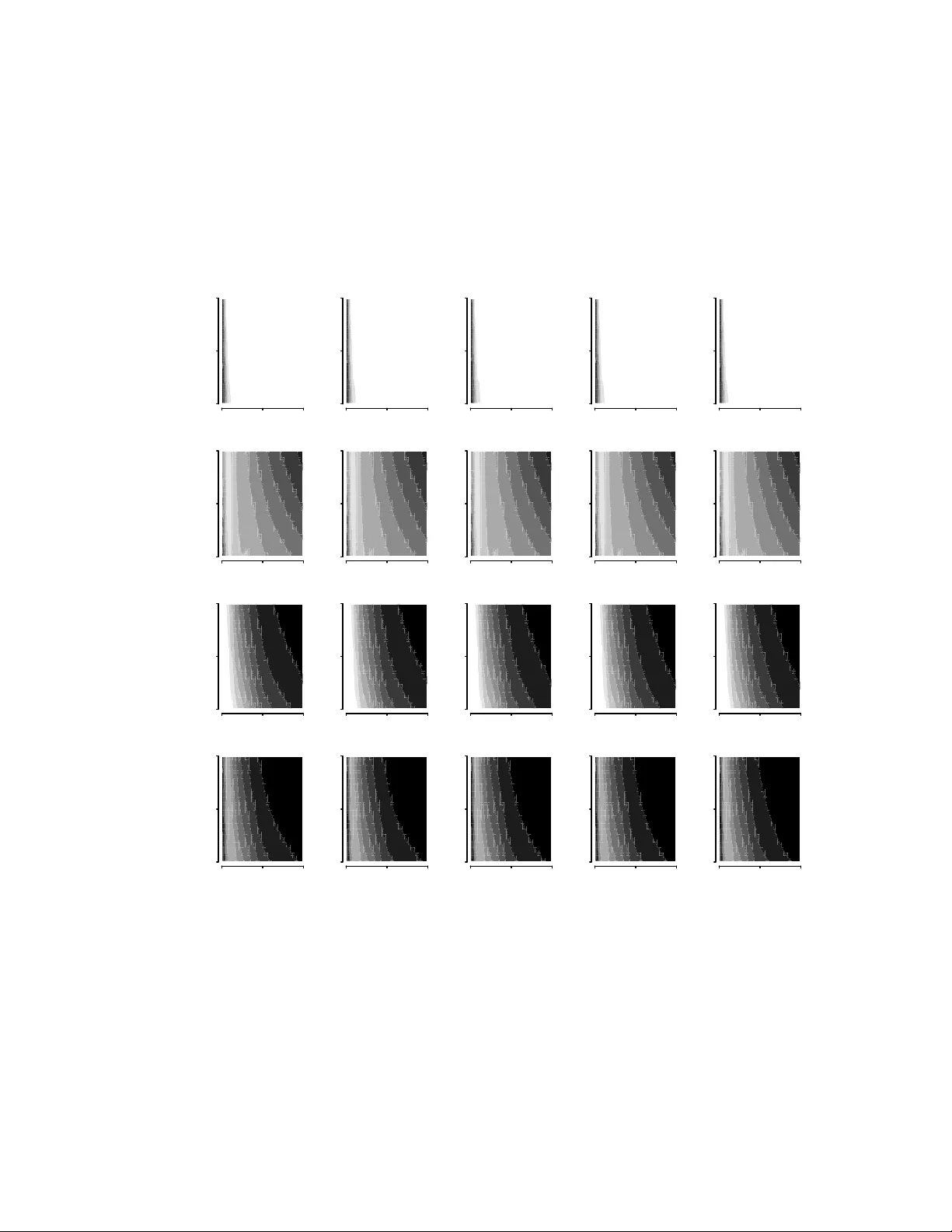

Ba y esian prediction for ph ysical mo dels with application to the optimization of the syn thesis of pharmaceutical pro ducts using c hemical kinetics An tony M. Overstall † ∗ , David C. W o ods † & Kieran J. Martin ‡ † Univ ersity of Southampton, Southampton SO17 1BJ, UK ‡ Ro c he, W elwyn Garden Cit y AL7 1TW, UK Qualit y con trol in industrial pro cesses is increasingly making use of prior scien tific kno wledge, often enco ded in physical mo dels that require n umerical approximation. Statistical prediction, and subsequent optimization, is k ey to ensuring the pro cess output meets a sp ecification target. How ever, the n umerical exp ense of appro ximating the mo dels p oses computational challenges to the iden tification of com binations of the pro cess factors where there is confidence in the qualit y of the response. Recen t w ork in Bay esian computation and statistical appro ximation (emulation) of exp ensiv e computational mo dels is exploited to dev elop a nov el strategy for optimizing the p osterior probability of a pro cess meeting sp ecification. The ensuing metho dology is motiv ated by , and demonstrated on, a chemical synthesis pro cess to manufacture a pharmaceutical pro duct, within whic h an initial set of substances evolv e according to chemical reactions, under certain pro cess conditions, in to a series of new substances. One of these substances is a target phar- maceutical pro duct and t wo are un wan ted by-products. The aim is to determine the combinations of process conditions and amounts of initial substances that maximize the probability of obtaining sufficien t target pharmaceutical pro duct whilst ensuring unw anted by-products do not exceed a given level. The relation- ship betw een the factors and amounts of substances of interest is theoretically described b y the solution to a system of ordinary differential equations incorporating temperature dependence. Using data from a small exp erimen t, it is shown ho w the metho dology can approximate the multiv ariate p osterior predictiv e distribution of the pharmaceutical target and by-products, and therefore identify suitable op erating v alues. Materials to replicate the analysis can b e found at www.github.com/amo105/chemicalkinetics . Keyw ords: Approximate coordinate exc hange; multiv ariate Gaussian pro cess; Gibbs sampling; parallel temp ering; Riemann manifold Langevin Metrop olis-Hastings 1 In tro duction Pharmaceutical pro ducts are often man ufactured b y chemical synthesis, with an initial set of c hemical substances evolving o ver time via a series of chemical reactions into a substance or substances of in terest. One (or more) of these substances will be the target pharmaceutical product while the others will b e un wan ted or harmful by-pr o duct substances. In addition to a time dep endence, the chemical synthesis pro cess may b e hypothesised to dep end on v arious controllable factors. The aim of the c hemical engineers will b e to manipulate the controllable factors such that sp ecification limits on the final substances are satisfied. F or example, the quan tity of pharmaceutical product ma y b e required to exceed s ome sp ecified lev el whilst the amoun ts of un wan ted b y-pro ducts are corresp ondingly k ept b elo w a certain lev el. ∗ Corresponding author. Southampton Statistical Sciences Research Institute, Universit y of Southampton, Southampton, SO17 1BJ, UK. Email: A.M.Overstall@southampton.ac.uk 1 It is common for a physical mo del, for example derived from scientific theory as the solution to a set of ordinary differential equations (ODEs), to b e p ostulated to approximately describ e the dep endence of the synthesis process on the controllable factors (and time); see c hapters in am Ende (2011). In addition, often an experiment can be p erformed within whic h the amoun ts of the substances of in terest are measured for several differen t specifications of the controllable factors. These observed resp onses can then b e used to inform the relationship b et ween controllable factors and the syn thesis process, e.g. through the estimation of unknown tuning parameters. In this pap er, we develop and apply methodology for the statistical mo delling of suc h exp erimen ts, motiv ated by a pharmaceutical exemplar whic h measured three substances of in terest: the pharmaceutical pro duct and t wo unw anted by-products. The dynamics b ehind the synthesis pro cess are appro ximately describ ed b y a system of non-linear ODEs, the solution to whic h forms a ph ysical mo del for the amoun ts of the three substances at a given time. This mo del depends on a set of con trollable factors (including time) and unknown parameters. A small exp erimen t has b een conducted where the amounts of the substances of interest hav e b een observed for different controllable factors at certain time p oin ts. The aim is to use this observed data to estimate the unknown parameters and then to use these estimates to maximize the probabilit y of the predicted amounts satisfying kno wn sp ecification limits as a function of the controllable factors and time. The approach used here will b e Ba yesian, giving a coherent method of propagating uncertain ty on the unkno wn parameters (giv en the observ ed data) through to the predicted amoun ts. This approac h is closely aligned with the concept of “pharmaceutical design space” 1 , a set of combinations of v alues of the controllable factors that hav e b een demonstrated to provide assurance of qualit y . See, for example, P eterson (2008), P eterson and Y ah yah (2009) and Lebrun et al. (2013) for related Bay esian approac hes to the definition of a design space using linear statistical mo delling metho ds. Beyond pharmaceutical design space, in the wider field of pro cess optimization, see, for example, Chiao and Hamada (2001), Peterson (2004) and Del Castillo (2007). Despite the apparen t simplicit y and app ealing nature of the Ba yesian mo delling approach, several com- putational challenges remain. Firstly , the solution to the system of ODEs is analytically in tractable and so a computationally exp ensiv e numerical solution is required (e.g. Iserles, 2009). The computational exp ense of this appro ximation will impact the computing time required b oth for the estimation of the unknown pa- rameters through the ev aluation of their p osterior distribution and the predictions for new combinations of the controllable factors. T o reduce computational exp ense, we make use of (m ultiv ariate) Gaussian pro cess em ulators to accelerate b oth the Mark ov chain Monte Carlo (MCMC) algorithm emplo yed and the prediction of future resp onses. Secondly , some of the observ ations fall below a minimum threshold, χ , under whic h the actual amount cannot be measured reliably , resulting in left-censored observ ations. Instead of assuming that the true observ ations are equal to zero or χ with certain ty , w e assume that the true observ ations are unkno wn, sub ject to b eing in the in terv al ( 0 , χ ) , within a natural Bay esian formulation. Thirdly , we know that the ph ysical model deriv ed from the solution to the ODEs only pro vides an approximation to the true pro cess. W e account for an y p otential mismatch b y introducing an explicit model discrepancy term (c.f. Kennedy and O’Hagan, 2001). F ourthly , the function giving the probability of satisfying the specification limits for giv en con trollable factors will be noisy and computationally expensive to ev aluate making optimization non-trivial. The contribution of this pap er is the nov el application of a combination of mo dern computational and statistical metho dology to address these c hallenges, and the demonstration of this approac h on an imp ortant practical problem. The pap er is organised as follo ws. In Section 2 we describe the motiv ating experiment, and the ph ysical and statistical models for the observ ations in more detail. In Section 3 we describ e the methodology including construction of Gaussian process emulators. In Section 4 we presen t the results of applying the metho dology to the motiv ating exp erimen t, b efore concluding with a discussion in Section 5. 1 https://www.fda.gov/downloads/drugs/guidances/ucm073507.pdf 2 2 Bac kground 2.1 Chemical Reactions The motiv ating c hemical syn thesis process inv olves nine substances, lab elled A to I. The substances of in terest are E, F and H, where F constitutes the pharmaceutical pro duct with E and H b eing un wan ted by-product substances. Let A ( t ) denote the amount (in mols) of substance A at time t (in seconds), with similar notation for the remaining substances. A t time t = 0, initial amoun ts of A, D and E, denoted b y A 0 = A ( 0 ) , D 0 = D ( 0 ) and E 0 = E ( 0 ) , resp ectiv ely , are in tro duced into the lab oratory apparatus at a specified temp erature ( λ in Kelvins) and volume ( V , in litres). Let x = ( x 1 , . . . , x 5 ) = ( A 0 , D 0 , E 0 , λ, V ) b e an F × 1 vector ( F = 5) and assume that x ∈ X = ∏ F f = 1 [ x f ,min , x f ,max ] . The upper and lo wer limits, x f ,min and x f ,max , for eac h factor are given in T able 1. Definition Name Lo wer limit, x f ,min Upp er limit, x f ,max Units Initial amount of A x 1 = A 0 22.5 45 mols Initial amount of D x 2 = D 0 91.4 91.59 mols Initial amount of E x 3 = E 0 26.42 26.47 mols T emp erature x 4 = λ 298.15 313.15 Kelvins (K) V olume x 5 = V 31.28 32.56 litres (l) T able 1: Low er and upp er limits of the con trollable factors in the motiv ating exp erimen t. The chemical syn thesis pro cess is gov erned by the follo wing series of c hemical reactions: A k 1 Ð → 2B + C B + D + E k 2 ← → B + G + F F + B k 3 Ð → H + I (1) where k 1 , k 2 , and k 3 denote the chemical reaction rates. The second line denotes a reversible reaction and th us k 2 is split into k − 2 and k + 2 , for the forward and backw ard reactions, resp ectiv ely . Let [ A ]( t ) = A ( t ) V be the concentration (in mol/litre) at time t of A, let ˙ A ( t ) = d [ A ]( t ) d t b e the corresp onding time deriv ativ e, and assume similar definitions for the remaining substances; B to I. Chemical reactions (1) lead to the follo wing system of non-linear ODEs (suppressing the dep endence on t ): ˙ A = − k 1 [ A ] ˙ B = 2 k 1 [ A ] − k 3 [ F ][ B ] ˙ C = k 1 [ A ] ˙ D = − k − 2 [ B ][ D ][ E ] + k + 2 [ B ][ G ][ F ] ˙ E = − k − 2 [ B ][ D ][ E ] + k + 2 [ B ][ G ][ F ] ˙ F = k − 2 [ B ][ D ][ E ] − k + 2 [ B ][ G ][ F ] − k 3 [ F ][ B ] ˙ G = k − 2 [ B ][ D ][ E ] − k + 2 [ B ][ G ][ F ] ˙ H = k 3 [ F ][ B ] ˙ I = k 3 [ F ][ B ] for t ∈ T = [ 0 , 3000 ] seconds. (2) The initial concentrations of all nine substances are zero, except for A, D and E which are [ A ]( 0 ) = A 0 V , [ D ]( 0 ) = D 0 V and [ E ]( 0 ) = E 0 V , respectively . F or giv en c hemical reaction rates, the solution ([ A ]( t ) , . . . , [ I ]( t )) from (2) provides the concentrations of the nine substances for given com binations of the con trollable factors, x , and reac tion rates. The amoun ts of the nine substances are then given b y ( A ( t ) , . . . , I ( t )) = V × ([ A ]( t ) , . . . , [ I ]( t )) . Ho wev er, the solution is analytically intractable and hence we emplo y a computationally exp ensiv e numerical metho d to find an 3 appro ximate solution. In particular, w e use the v ariable coefficient ordinary differen tial equation solver (Bro wn et al., 1989) as implemented in the R (R Core T eam, 2018) pack age deSolve() function (So etaert et al., 2010). Ho wev er the methodology describ ed in Section 3 can be used in conjunction with any n umerical metho d. The first reaction is assumed to b e instantaneous, which the chemical engineers model by assuming k 1 = 10000. The remaining reaction rates, k − 2 , k + 2 and k 3 , are unkno wn, and the temperature dependence is incorp orated into the system of ODEs via the Arrehnius equation (see, for example, Laidler 1984). Hence, the general form for a reaction rate is k i = k ( r ) i exp E i G 1 λ − 1 λ ( r ) , i = 2 , 3 , where λ ( r ) is the reference temp erature (here 298.15K), k ( r ) i > 0 is the reaction rate at the reference tem- p erature, E i > 0 is the activ ation energy and G = 8 . 31445 Jmol − 1 K − 1 is the gas constan t. By definition, the activ ation energies for the reversible reactions are equal, i.e. E + 2 = E − 2 = E 2 . This means there are p = 5 unkno wn physical parameters, γ = ( γ 1 , . . . , γ 5 ) = k ( r ) − 2 , k ( r ) + 2 , k ( r ) 3 , E 2 , E 3 . T o increase computational effi- ciency of the metho dology described in Section 3, w e op erate on the logarithm scale, i.e. consider θ k = log γ k , for k = 1 , . . . , p , and let θ = ( θ 1 , . . . , θ p ) ∈ Θ = R p . The quality con trol question of interest is to make c hoices of initial amounts of A, D and E, temp erature, v olume and time suc h that the amoun t of the three substances of in terest satisfy the follo wing sp ecification limits (in mols): E ( t ) < 3 , F ( t ) > 20 , H ( t ) < 3 . (3) Recall that F is the pharmaceutical product and its amount needs to b e sufficien tly large to make the pro duction pro cess economically viable. How ever E and H represent unw anted by-products with upp er limits for safety reasons. 2.2 Exp erimen t and Ph ysical Mo del W e estimate the v alues of the unknown physical parameters θ using observ ations from a small experiment. The c hemical reactions (1) are observ ed for I ′ = 6 combinations of the con trollable factors x , giv en in T able 2. F or run i = 1 , . . . , I ′ , we observ e the amounts of E, F and H at a series of n i times t i 1 , . . . , t in i . The times range from 0.5 to 2902 seconds, with n i ranging from 17 to 20 time p oints p er run. In total, there are n = ∑ I ′ i = 1 n i = 109 observ ations of the amoun ts ov er all I ′ runs. Note how run 6 is a repetition of run 5, th us there are I = I ′ − 1 = 5 unique treatmen ts. Runs 5 and 6 hav e observ ations taken at 18 differen t time p oin ts. Ho wev er the last t w o time p oints for run 5 are at 1620 and 1825 seconds, whereas the last tw o time p oints for run 6 are tak en four seconds later. Therefore there are m = 93 unique com binations of controllable factors and time p oin ts. Let x ′ j , t j b e the v alues of the con trollable factors for eac h of these unique com binations ( j = 1 , . . . , m ). The exp eriment was not designed to b e statistically optimal for either the estimation of parameters θ or prediction for new treatmen ts. Let K = 3 b e the num b er of substances of in terest. W e denote by µ ( θ ; x , t ) = log ( E ( t ) , F ( t ) , H ( t )) the K × 1 v ector giving the log of the theoretical amoun t of the three substances of interest (E, F and H) obtained from the numerical solution to the system of ODEs at x , time t and parameters θ . Let Y i b e the n i × K matrix containing the log observ ed amoun ts of E, F and H for the i th run, for i = 1 , . . . , I ′ . The j th row of Y i , denoted b y y ij is a K × 1 containing the observed amounts for the j th time p oin t of the i th run, for i = 1 , . . . , I ′ and j = 1 , . . . , n i . Let Y b e the n × K matrix formed by stac king the matrices Y 1 , . . . , Y I ′ , i.e. Y = Y 1 ⋮ Y I ′ . 4 Initial amount (in mols) Run Sym b ol A 0 D 0 E 0 T emp erature ( λ , Kelvin) V olume ( V , litres) 1 x 1 22.50 91.59 26.47 298.15 31.31 2 x 2 45.00 91.59 26.47 298.15 32.56 3 x 3 22.50 91.50 26.45 313.15 31.28 4 x 4 45.00 91.50 26.45 313.15 32.53 5 x 5 33.75 91.40 26.42 305.65 31.88 6 x 6 33.75 91.40 26.42 305.65 31.88 T able 2: The six com binations of the con trollable factors in the experiment. The experimental apparatus cannot detect substance amoun ts lo wer than χ = 0 . 01 mols. Therefore, we ha ve censored v alues when the observ ed amount is less than χ . In total, there are zero censored observ ations in the first column of Y , and 5 and 45 in the second and third columns, resp ectiv ely . W e take accoun t of the censoring in our statistical modelling strategy described in Section 2.3. 2.3 Statistical Mo del W e assume that Y = G ( M ( θ ) + D ) + E . (4) In (4), M ( θ ) is the m × K matrix of unique model solutions, giv en by M ( θ ) = M 1 ( θ ) ⋮ M I ( θ ) , where M i ( θ ) , for i = 1 , . . . , I − 1, is the n i × K matrix with j th row given b y µ ( θ ; x i , t ij ) , and M I ( θ ) is the ( n I + 2 ) × k matrix where the j th row is giv en by µ ( θ ; x I , t I j ) , for j = 1 , . . . , n I , and the ( n I + 1 ) th and ( n I + 2 ) th ro ws are giv en b y µ ( θ ; x I , 1624 ) and µ ( θ ; x I , 1829 ) , resp ectiv ely (corresp onding to the t w o time p oin ts that differ b etw een runs 5 and 6). F urthermore, D is the m × K matrix of mo del discrepancy errors giv en by D = D 1 ⋮ D I , whic h represents the discrepancy betw een the ph ysical mo del and the true mean v alue of the pro cess (Kennedy and O’Hagan, 2001). The n × m binary incidence matrix G identifies the ro ws of M + D corresponding to the rows of Y . Finally , E is the n × K matrix of observ ational errors. The distribution of E is assumed to b e E ∼ MN ( 0 , Ω , T ) , where MN denotes the matrix normal distribution (e.g. Dawid, 1981), Ω is an unkno wn K × K column co v ariance matrix, and T is an n × n row correlation matrix. The matrix T = diag { T 1 , . . . , T I ′ } is blo c k- diagonal, with j l th element of T i giv en b y T i,j l = exp − ρ ( t ij − t il ) 2 j, l = 1 , . . . , n i , with time correlation parameter ρ > 0 assumed unkno wn. This cov ariance structure for E assumes that the correlation b etw een observ ations from the same run is dep enden t on the difference in time, but observ ations from different runs are indep enden t. 5 W e complete the mo del sp ecification by sp ecifying prior distributions for the discrepancy D and the mo del parameters. The discrepancy is assumed to follow a matrix normal distribution, D ∼ MN ( 0 , Σ , S ) , (5) where Σ is an unkno wn K × K co v ariance matrix and S is an m × m correlation matrix. The j l th element of S is giv en by S j l = exp − ψ 1 F f = 1 ( d j p − d lp ) 2 − ψ 2 ( t j − t l ) 2 , (6) for j, l = 1 , . . . , m and where the mo del discrepancy correlation parameters ψ 1 > 0 and ψ 2 > 0 are unkno wn. In (6), d j f = x ′ j f − x f ,min ( x f ,max − x f ,min ) is the scaled v alue of the f th controllable factor, for f = 1 , . . . , F and j = 1 , . . . , m , where x ′ j f is the f th elemen t of x ′ j . This correlation structure allo ws the differences in mo del discrepancy b et ween different treatments to dep end on the “distance” b et ween the controllable factors and time p oints for eac h treatment. The c hemical engineers hav e some limited prior kno wledge on the lo cation of θ . It is believed that the reaction rates are likely to lie b et ween 10 − 8 and 10 − 4 and the activ ation energies b et ween 10 2 and 10 6 . W e enco de this information by assuming that the reaction rates and activ ation energies hav e independent log- normal prior distributions with hyperparameters chosen so that there is probabilit y 0.95 that the v alue of in terest lies b etw e en the t wo limits abov e. This is achiev ed by setting the 0.025 and 0.975 quantiles to b e the low er and upper limits respectively . In summary , w e assume log θ ∼ N ( µ , ∆ ) , where µ = ( − 13 . 8 , − 13 . 8 , − 13 . 8 , 9 . 21 , 9 . 21 ) T and ∆ = 5 . 52 I p . There is no prior information av ailable for the remaining parameters, so we sp ecify v ague prior distributions that con tribute negligible information. The v ariance matrices Ω and Σ are b oth assumed to ha ve inv erse-Wishart prior distributions with ν = 4 degrees of freedom and an iden tity scale matrix (Gelman et al., 2014, Chapter 3). The correlation parameters ρ , ψ 1 and ψ 2 are giv en indep enden t exponential prior distributions with mean equal to one following the argumen ts of, for example, Ov erstall and W o o ds (2016). In Section 4 we discuss the results of a sensitivity analysis to assess the robustness to the c hoice of these v ague prior distributions. Let y = vec ( Y ) , m ( θ ) = vec ( M ( θ )) , d = vec ( D ) and e = vec ( E ) , with the v ec operator stac king columns of a matrix. Then a mo del sp ecification equiv alent to (4) is giv en by y = H ( m ( θ ) + d ) + e , (7) where H = I k ⊗ G , e ∼ N ( 0 , Ω ⊗ T ) , (8) d ∼ N ( 0 , Σ ⊗ S ) , (9) and ⊗ denotes the Kronec k er product. Let y S and y C denote the elemen ts of y whic h are fully observ ed (i.e. greater than log χ ) and censored (i.e. less than log χ ), resp ectively . Therefore, we need to ev aluate the posterior distribution (conditional on y S ) of the mo del parameters and censored observ ations. This distribution is giv en b y π ( θ , Ω , ρ, d , Σ , ψ , y C y S ) ∝ π ( y θ , d , Ω , ρ ) π ( d Σ , ψ ) π ( θ ) π ( Ω ) π ( ρ ) π ( Σ ) π ( ψ ) , (10) where π ( y θ , d , Ω , ρ ) is the complete data likelihoo d given b y (7) and (8), π ( d Σ , ψ ) is the density of the v ectorised mo del discrepancy (9), and π ( θ ) , π ( Ω ) , π ( ρ ) , π ( Σ ) and π ( ψ ) are prior densities for the model parameters. 6 2.4 Prediction Let y 0 b e a K × 1 v ector denoting the predicted log amounts of E, F and H for arbitary controllable factors x 0 ∈ X and t 0 ∈ T . The p osterior predictive distribution of y 0 is given b y π ( y 0 y S ) = π ( y 0 θ , D , ψ , Ω , Σ ) π ( θ , D , ψ , Ω , Σ y S ) d θ d D d ψ d Ω d Σ , (11) where y 0 θ , D , ψ , Ω , Σ ∼ N µ ( θ ; x , t ) + D T S − 1 s , 1 − s T S − 1 s Σ + Ω , and s is an m × 1 v ector with j th element giv en by s j = exp − ψ 1 F f = 1 ( d j f − d 0 f ) 2 − ψ 2 ( t j − t 0 ) 2 , with d 0 f = ( x 0 f − x f ,min ) ( x f ,max − x f ,min ) b eing the scaled v alue of each elemen t of x 0 , for f = 1 , . . . , F . The probability of E, F and H satisfying the specification limits (3) at p oin t ( x 0 , t 0 ) is given b y P ( y 0 ∈ Y y S ) = Y π ( y 0 y S ) d y 0 , , where Y = η ∈ R K η 1 < log 3 , η 2 > log 20 , η 3 < log 3 . 3 Metho dology The p osterior and predictive distributions giv en by (10) and (11), resp ectiv ely , will b e analytically in tractable. Our general approach to numerically approximating these distributions uses tw o phases. The Sampling Phase inv olves generating a sample from the p osterior distribution using MCMC metho ds. The Prediction Phase uses this MCMC sample to generate a sample from the p osterior predictive distribution and th us estimate the probability P ( y 0 ∈ Y y S ) by the proportion of sampled v alues satisfying the sp ecification limits. These phases make use of Gibbs sampling and parallel temp ering algorithms, and multiv ariate Gaussian pro cess em ulators for the n umerical solution to the ODEs. 3.1 Gibbs sampling and parallel temp ering W e use Gibbs sampling in conjunction with parallel temp ering to generate a sample from the joint p osterior distribution (10) of mo del parameters and censored observ ations. T o improv e the efficiency of the Gibbs sampler, we emplo y hierarchical cen tring (Papaspiliopoulos et al., 2003) and reparameterise mo del (7) as y = Hd ∗ + e , (12) where d ∗ ∼ N ( m ( θ ) , Σ ⊗ S ) . (13) Sampled v alues of d ∗ can easily b e transformed to v alues of d using d = d ∗ − m ( θ ) . The posterior distribution of mo del parameters and censored observ ations is no w given b y π ( θ , Ω , ρ, d ∗ , Σ , ψ , y C y S ) ∝ π ( y d ∗ , Ω , ρ ) π ( d ∗ θ , Σ , ψ ) π ( θ ) π ( Ω ) π ( ρ ) π ( Σ ) π ( ψ ) , where π ( y d ∗ , Ω , ρ ) is the complete-data lik eliho od defined by (12) and π ( d ∗ θ , Σ , ψ ) is defined by (13). The full conditional p osterior distributions of d ∗ , Ω and Σ are of known form and are giv en in App endix A. The full conditional posterior distribution of y C is a truncated multiv ariate normal distribution (see App endix A) and there exist efficient metho ds for generating v alues from such distributions (Gew eke, 1991). F or the remaining parameters θ , ρ and ψ , the full conditional distributions are not of kno wn form, so a Metrop olis-within-Gibbs step is employ ed. Sp ecifically , w e exploit the Riemann manifold Langevin 7 Metrop olis-Hastings (RMLMH) algorithm (Girolami and Calderhead, 2011) . This method uses deriv ative information on the p osterior surface to construct an efficient prop osal distribution. The algorithmic details are briefly described in App endix B and the comp onen ts necessary for its application to the full conditional p osterior distributions of θ , ρ and ψ are giv en in App endix C. P arallel temp ering (see, for example, Gelman et al., 2014, pp. 299-300) is used to impro ve the efficiency of sampling from a potentially multi-modal posterior distribution. It inv olves defining R distributions by a series of increasing “temperatures”, where the lo west temp erature distribution corresp onds to the p osterior distribution. An MCMC chain is initialized under each distribution, and the parallel temp ering scheme allo ws for swaps b et ween the states of the chains for distributions at different temp eratures. The low est temp erature MCMC chain is a sample from the p osterior distribution. Parallel temp ering allows larger mo ves (e.g. b et ween different areas of high p osterior density) made at the higher temp erature chains to b e passed down to the low er temperature chains, hence improving mixing. Let δ = ( θ , Ω , ρ, d ∗ , Σ , ψ , y C ) b e the collection of unkno wn parameters and censored observ ations. F or r = 1 , . . . , R , define the distribution for the r th chain to hav e densit y prop ortional to U r ( δ ) = exp log π ( y S δ ) + log π ( δ ) τ r , where 1 = τ 1 < ⋅ ⋅ ⋅ < τ R are a series of temp eratures. A parallel temp ering iteration inv olv es applying either i) a sampling step (with probability ω ); or ii) a swap step (with probabilit y 1 − ω ). In the sampling step, the curren t v alues δ c ( r ) in the r th chain are up dated to δ c + 1 ( r ) , for r = 1 , . . . , R , using the Gibbs sampling algorithm. In the swap step, tw o neigh b ouring c hains ( r and r + 1) are chosen at random. With probability min 1 , U r + 1 ( δ c ( r ) ) U r ( δ c ( r + 1 ) ) U r + 1 ( δ c ( r + 1 ) ) U r ( δ c ( r ) ) , set δ c + 1 ( r ) = δ c ( r + 1 ) and δ c + 1 ( r + 1 ) = δ c ( r ) . T o complete sampling step (i) for each distribution at temp erature t r , the full conditional and RMLMH components need to b e adjusted (see App endices A and B for details). 3.2 Amended Gibbs sampling and parallel temp ering One iteration of the RMLMH step in the Gibbs sampling algorithm outlined in Section 3.1 requires one ev al- uation of m ( θ ) to calculate the acceptance probability and one ev aluation each of sensitivities ∂ m ( θ ) ∂ θ and ∂ 2 m ( θ ) ∂ θ ∂ θ k to form the prop osal distribution. T o ev aluate m ( θ ) w e are required to ev aluate µ ( θ ; x i , t ij ) , for i = 1 , . . . , I and j = 1 , . . . , n i . The deriv ativ e terms require ev aluation of ∂ µ ( θ ; x i , t ij ) ∂ θ and ∂ 2 µ ( θ ; x i , t ij ) ∂ θ ∂ θ k , for i = 1 , . . . , I , j = 1 , . . . , n i and k = 1 , . . . , p . The system of ODEs can b e augmented with additional equations to n umerically solve for the first- and second-order sensitivities (V alko and V a jda, 1984; Girolami and Calderhead, 2011). How ever this will substan tially increase the computational burden of the numerical solution. Note that to generate a sample of size B using parallel temp ering with R chains will require B R ev aluations of the three functions for eac h x i and t ij , for i = 1 , . . . , I , j = 1 , . . . , n i and k = 1 , . . . , p . Once we ha ve generated a sample of size B from the p osterior distribution, to estimate the probability P ( y 0 ∈ Y y S ) , we require B further ev aluations of µ ( θ ; x , t ) , for eac h x ∈ X and t ∈ T at whic h w e wish to ev aluate this probabilit y . Making suc h a large num b er of ev aluations of the numerical solution is clearly computationally infeasible. Instead w e form an approximation to the numerical solution by the construction of a statistical emulator through a computer exp eriment (Sac ks et al., 1989). The n umerical solution to the ODEs is ev aluated at a selection of N combinations of parameters θ ; con trollable v ariables x ; and times t . These ev aluations are treated as data to whic h a statistical mo del, termed an emulator, is fitted. The emulator is essentially a predictiv e distribution (denoted b y Q ( θ , x , t ) ) from which fast predictions of the n umerical solution can be obtained. F or example, the predictiv e mean, ˆ µ ( θ ; x , t ) , will be used as a point prediction. F or more details on computer experiments and statistical em ulators, see, for example, Santner et al. (2003), F ang et al. (2006) and (Dean et al., 2015, Section V). 8 In the Sampling Phase, we apply the Gibbs sampling and parallel temp ering algorithm with the follo wing amendmen ts: 1. All ev aluations of sensitivities ∂ µ ( θ ; x , t ) ∂ θ and ∂ 2 µ ( θ ; x , t ) ∂ θ ∂ θ k are replaced b y ∂ ˆ µ ( θ ; x , t ) ∂ θ and ∂ 2 ˆ µ ( θ ; x , t ) ∂ θ ∂ θ k , resp ectiv ely , for k = 1 , . . . , p . 2. R + 1 parallel chains are constructed for temp eratures 1 = τ 0 = τ 1 < τ 2 < ⋅ ⋅ ⋅ < τ R . In chains r ≥ 1, all ev aluations of µ ( θ ; x , t ) in the ev aluation of U r ( δ ) in the acceptance probability are replaced by ev aluation of ˆ µ ( θ ; x , t ) . F or ev aluation of U 0 ( δ ) in c hain r = 0 with temperature τ 0 = 1, ev aluation of the actual µ ( θ ; x , t ) is retained. This mo difications results in the sample generated in chain r = 0 b eing from the true posterior distribution of δ but the o v erall sampling sc heme requiring only B ev aluations of µ ( θ ; x i , t ij ) for i = 1 , . . . , I and j = 1 , . . . , n i . In the Prediction Phase, to estimate the probabilit y of satisfying the sp ecification limits, all ev aluations of µ ( θ ; x , t ) are replaced by a v alue generated from Q ( θ , x , t ) . Sampling from this predictive distribution means the estimate of the probabilit y tak es accoun t of additional uncertaint y introduced by using an emulator appro ximation. 3.3 Multiv ariate Gaussian pro cess emulator In this pap er, we employ the m ultiv ariate Gaussian pro cess (MGP; Conti and O’Hagan 2010) em ulator. The n umerical solution to the ODEs, µ ( θ ; x , t ) , is ev aluated at eac h of the elemen ts of the set (or meta-design) ζ = {( θ ∗ 1 , x ∗ 1 , t ∗ 1 ) , . . . , ( θ ∗ N , x ∗ N , t ∗ N )} . The sup erscript ∗ notation has b een in tro duced to distinguish the meta- design from the design of the ph ysical exp erimen t. Let z i = µ ( θ ∗ i ; x ∗ i , t ∗ i ) , Z be the N × K matrix with i th ro w giv en b y z T i , and w i = ( θ ∗ i ; x ∗ i , t ∗ i ) b e the U × 1 v ector of all inputs, where U = p + F + 1 = 11. The central assumption of the MGP is that Z follows a matrix normal distribution, Z ∼ MN 1 N β T , Φ , P , where β is a K × 1 vector of common column means of Z , Φ is a K × K unstructured column cov ariance matrix, P is an N × N row correlation matrix and 1 N is the N × 1 v ector of ones. W e model the ij th element of P as P ij = exp − U u = 1 α u ( w iu − w j u ) 2 , where w iu is the u th element of w i and α u > 0 are correlation parameters, for u = 1 , . . . , U . Supp ose w e wish to predict z 0 = µ ( θ 0 ; x 0 , t 0 ) . Let ˆ β = 1 T N P − 1 Z 1 T N P − 1 1 N , (14) ˆ Ψ = Z T P − 1 I N − 1 N 1 T N P − 1 1 T N P − 1 1 N Z , (15) and w 0 = ( θ 0 , x 0 , t 0 ) , with I N the N × N identit y matrix. It can be sho wn that the predictiv e distribution of z 0 , i.e. Q ( θ , x , t ) , conditional on outputs z 1 , . . . , z N is given b y N ˆ µ ( θ ; x , t ) , ˆ Π ( θ ; x , t ) , (16) where ˆ µ ( θ ; x , t ) = ˆ β + p T 0 P − 1 Z − 1 N ˆ β T , (17) ˆ Π ( θ ; x , t ) = 1 − p T 0 P − 1 p 0 ˆ Ψ , (18) 9 with N × 1 v ector p 0 ha ving i th elemen t p 0 i = exp − U u = 1 α u ( w iu − w 0 u ) 2 , and w 0 u b eing the u th element of w 0 . The predictiv e distribution given by (16) is conditional on the correlation parameters, α = ( α 1 , . . . , α U ) . W e replace these v alues by their marginal p osterior mo de (e.g. Ov erstall and W o o ds, 2016) although a fully Ba yesian approac h could b e tak en with these parameters in tegrated out with respect to their p osterior distribution, conditional on Z . 3.3.1 Meta-design for the Sampling and Prediction Phases The tw o em ulators formed prior to the Sampling and Prediction Phases depend on the choice of the meta- design ζ . The n umerical solution, µ ( θ ; x , t ) , has three differen t inputs: θ , x and t . W e construct the meta-design as a cartesian product of designs for eac h of these three input types, ζ = ζ 1 × ζ 2 × ζ 3 , where ζ 1 = θ ∗ 1 , . . . , θ ∗ N 1 , ζ 2 = x ∗ 1 , . . . , x ∗ N 2 , ζ 3 = t ∗ 1 , . . . , t ∗ N 3 , and N = ∏ M i = 1 N i where M = 3 for our exp er- imen t. Separate designs in t and θ and x allo w us to exploit computational efficiencies in the computation of the n umerical solution to the ODEs; computing µ ( θ ; x , t 1 ) and µ ( θ ; x , t 2 ) , for t 1 ≠ t 2 , requires a negligible increase in computational effort o v er just computing µ ( θ ; x , t 1 ) . This structure of meta-design also allo ws the em ulator ro w correlation matrix, P , to be decomposed as P = M i = 1 P i = P 1 ⊗ P 2 ⊗ P 3 , where the ij th elemen ts of P 1 , P 2 and P 3 are P 1 ,ij = exp − ∑ p u = 1 α u θ ∗ iu − θ ∗ j u 2 for i, j = 1 , . . . , N 1 , P 2 ,ij = exp − ∑ F u = 1 α p + u x ∗ iu − x ∗ j u 2 for i, j = 1 , . . . , N 2 , P 3 ,ij = exp − α U t ∗ i − t ∗ j 2 for i, j = 1 , . . . , N 3 , resp ectiv ely . Hence, P − 1 = M i = 1 P − 1 i , significan tly reducing the computational burden of constructing the emulator and impro ving numerical sta- bilit y . Similarly , for a meta-design with this structure, (14), (15), (17) and (18) also simplify: ˆ β = M i = 1 1 T N i P − 1 i Z ∏ M i = 1 1 T N i P − 1 i 1 N i , ˆ Ψ = Z T M i = 1 P − 1 i − M i = 1 P − 1 i 1 N i 1 T N i P − 1 i Z , ˆ µ ( θ ; x , t ) = ˆ β + M i = 1 p T i P − 1 i Z − 1 N ˆ β T , ˆ Π ( θ ; x , t ) = 1 − M i = 1 p T i P − 1 i p i ˆ Ψ , where vectors p 1 , p 2 and p 3 ha ve j th elements giv en by p 1 j = exp − ∑ p u = 1 α u θ ∗ j u − θ u 2 , for j = 1 , . . . , N 1 , p 2 j = exp − ∑ p u = 1 α p + u x ∗ j u − x u 2 , for j = 1 , . . . , N 2 , p 3 j = exp − α U t ∗ j − t 2 , for j = 1 , . . . , N 3 , 10 resp ectiv ely . When we choose ζ to construct the em ulator for the Sampling Phase , w e choose the v alues of x and t to coincide with the design of the physical exp eriment, i.e. to b e equal to x 1 , . . . , x I and t 1 , . . . , t m . Hence uncertain ty in the prediction will only result from the differen t choice of parameter θ . F or our exp erimen t, this means fixing ζ 2 = { x 1 , . . . , x 5 } and ζ 3 = { t 1 , . . . , t m } , i.e. N 2 = I = 5 and N 3 = m . W e use the following exploratory algorithm to adaptively choose ζ 1 . This uses a h ybrid of the metho d- ologies proposed b y Rasm ussen (2003), Fielding et al. (2011) and Overstall and W o o ds (2013). Rasm ussen (2003) first proposed using MCMC metho ds to adaptively impro ve an emulator to an unnormalised p osterior densit y based on a computationally expensive function in ligh t of observ ed data. Fielding et al. (2011) ex- tended this metho dology b y using parallel temp ering, and Ov erstall and W o o ds (2013) prop osed to emulate the computationally exp ensiv e function, as opp osed to the posterior densit y , to make mo del criticism more efficien t. The follo wing explor atory algorithm iteratively improv es the accuracy of the emulator in the region of the parameter space Θ corresponding to high posterior densit y . 1. Generate the set ζ 0 1 = θ ∗ 1 , . . . , θ ∗ N 0 1 as a sample of size N 0 1 from the prior distribution of θ . 2. Let ζ 0 = ζ 0 1 × ζ 2 × ζ 3 and fit the MGP to the resulting ev aluations from µ ( θ ; x , t ) . 3. Let ζ = ζ 0 and rep eat the following steps un til µ ( θ ; x , t ) as b een ev aluated a total of N times. (a) Perform L iterations of the Gibbs sampling and parallel tempering sc heme (for chains r = 1 , . . . , R ) where ev aluation of µ ( θ ; x , t ) is replaced b y ev aluation of ˆ µ ( θ ; x , t ) in all instances. (b) Ev aluate ˜ z rij = µ ( θ ( r ) ; x i , t j ) , for r = 1 , . . . , R , i = 1 , . . . , I and j = 1 , . . . , n i , where θ ( r ) is the curren t v alue of θ in the r th c hain. Augment the matrix Z by ˜ z rij for r = 1 , . . . , R , i = 1 , . . . , I and j = 1 , . . . , n i . Refit the MGP . The meta-design for the Prediction Phase is constructed as the cartesian pro duct of three space-filling designs. Firstly , ζ 1 is constructed as a space-filling (e.g. Johnson et al., 1990) sub-sample of size N 1 , selected using the cover.design function in the R pack age Fields (Nyc hk a et al., 2015) using the sample generated from the p osterior distribution of θ in the Sampling Phase as a candidate list. Secondly , ζ 2 and ζ 3 are c hosen as Latin hypercub e designs in X and T of sizes N 2 and N 3 , resp ectiv ely (Santner et al., 2003, Ch. 5). 4 Results 4.1 Sampling Phase T o create the meta-design for the Sampling Phase, w e set N 0 1 = 50, N 1 = 100 and set up R = 5 parallel c hains. This results in ten iterations of the exploratory algorithm in Section 3.3.1 with L = 50. W e adapt the temp eratures of the parallel c hains using the methodology of Miaso jedow et al. (2013). Figure 1 sho ws the resulting v alues of θ ∗ in meta-design ζ 1 . It is clear that the exploratory algorithm selects points from a concen trated region of Θ. W e then obtain a sample of size B = 50000 from the p osterior distribution of δ using the amended Gibbs sampling and parallel temp ering algorithm presented in Section 3.2. Conv ergence was assessed informally via trace plots (not shown) which show ed that the chains had mixed adequately and each p osterior was unimo dal. Figure 2 shows plots of the prior and estimated p osterior densities for each elemen t of θ as well as the elements of ψ and ρ . Clearly the data has led to an increase in information from the prior to p osterior distributions. Diagnostics for assessing the adequacy of the fitted mo del (see, for example, Gelman et al., 2014, Chapter 6) indicated that there w ere no reasons to believe that the fit was inadequate. 11 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −20 −16 −12 −20 −16 −12 −8 θ 2 θ 1 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −18 −14 −10 −20 −16 −12 −8 θ 3 θ 1 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 14 −20 −16 −12 −8 θ 4 θ 1 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 −20 −16 −12 −8 θ 5 θ 1 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −18 −14 −10 −20 −16 −12 θ 3 θ 2 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 14 −20 −16 −12 θ 4 θ 2 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 −20 −16 −12 θ 5 θ 2 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 14 −18 −14 −10 θ 4 θ 3 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 −18 −14 −10 θ 5 θ 3 ● ● Initial sample from prior distribution V alues selected by e xploratory algorithm ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 4 6 8 10 12 4 6 8 10 12 14 θ 5 θ 4 Figure 1: V alues of θ in the meta-design ζ 1 for the Sampling Phase as found b y the exploratory algorithm in Section 3.3.1: grey p oin ts are the N 0 1 initial v alues generated from the prior distribution and black p oints are the v alues selected in step 3 of the exploratory algorithm. 12 −11.2 −11.0 −10.8 −10.6 0 1 2 3 4 θ 1 Density −16 −15 −14 −13 0.0 0.4 0.8 θ 2 Density −15.5 −15.0 −14.5 −14.0 0.0 0.5 1.0 1.5 θ 3 Density 10.6 10.8 11.0 11.2 11.4 11.6 0 1 2 3 4 θ 4 Density 9 10 11 12 0.0 0.4 0.8 1.2 θ 5 Density 0 2 4 6 8 10 0.0 0.2 0.4 0.6 ψ 1 Density 10 15 20 25 30 35 0.00 0.06 0.12 ψ 2 Density 2.0 2.5 3.0 3.5 4.0 4.5 0.0 0.5 1.0 1.5 ρ Density Prior distribution P osterior distr ib ution Figure 2: T race plots of the p osterior sample (left hand panels) and the estimated p osterior and prior densities (right hand panels) for each elemen t of θ . 13 4.2 Prediction Phase W e no w use the sample generated from the p osterior distribution of δ , and, in particular, θ , to construct an em ulator Q ( θ , x , t ) , as defined in (16), for the numerical solution to the ODEs. W e let N 1 = 20, N 2 = 50 and N 3 = 50. It could be noted that this v alue of N 1 is quite small compared to the rule of th umb of Lo eppky et al. (2009) that the sample size should be appro ximately ten times the n umber of input dimensions (in this case p = 5). How ev er, we found that, due to the concen tration of the p osterior, this v alue was sufficiently large to pro duce an adequate emulator and a void numerical instabilit y in the inv ersion of P 1 . T o assess the adequacy of the emulator, w e created a test meta-design of the same size (as abov e, a space filling sub-sample with a candidate list excluding the p oin ts from the original meta-design) and implemented the diagnostic metho ds of Overstall and W o o ds (2016). In particular, the ro ot mean squared errors b et w een the n umerical solution and the predictive mean were 3 . 4 × 10 − 2 , 0 . 19 and 0 . 40 for each of the K = 3 dimensions of µ ( θ ; x , t ) , resp ectiv ely . The o verall cov erage of the 95% predictive interv als, as assessed on the test design, was 89.0%. Name Sym b ol V alue Unit Initial amount of A A 0 x 1 30.52 mols Initial amount of D D 0 x 2 91.51 mols Initial amount of E E 0 x 3 26.47 mols T emp erature λ x 4 313.15 K V olume V x 5 31.28 litres Time t 199.10 s T able 3: Optimum v alues of the con trollable factors, x and t , as found by A CE. No w w e can use em ulator Q ( θ , x , t ) to approximate the probability , P ( y 0 ∈ Y y S ) , of satisfying the sp ecification limits for given x and t . W e need to maximize this probability o ver the space S = X × T . T o do this we use the approximate co ordinate exchange (A CE; Overstall and W o ods 2017) algorithm. ACE w as originally dev elop ed for finding Ba y esian optimal exp erimen tal designs where the ob jectiv e function is maximised ov er a design space. In these situations, the ob jectiv e function is usually analytically intractable. A CE uses a cyclic ascen t algorithm (e.g. Lange, 2013, Chapter 7) to maximise the ob jectiv e function via a univ ariate Gaussian pro cess em ulator based on ev aluations of a Mon te Carlo approximation to the ob jectiv e function. W e use the implementation of A CE given b y the R pack age acebayes (Ov erstall et al., 2017). W e use 100 random starts of A CE, where the initial v alue of ( x , t ) is uniformly generated in S × T . The optimum v alues of x and t , as found by A CE, are shown in T able 3. The approximate maxim um v alue of P ( y 0 ∈ Y y S ) attained was 0.77 (sub ject to Mon te Carlo error). W e in v estigated the sensitivity of the ab o v e approac h to the choice of prior distributions for ρ , ψ , Ω and Σ . W e did this b y rep eating the ab ov e analysis to find the optimal settings for x and t under different prior specifications. W e found the results to b e broadly robust to choice of prior distribution and details of this are pro vided in Appendix D. T o further explore how P ( y 0 ∈ Y y S ) dep ends on x and t , we fix the initial amount of E, temperature and v olume at 26.47 mols, 31.28 litres, and 313.5 K, respectively , i.e. the optim um v alues as found by ACE. W e then v ary the initial amounts of A and D, and time ov er the ranges identified in T able 1. The last ro w of Figure 3 shows P ( y 0 ∈ Y y S ) for time against the initial amount of A, with differen t columns for different initial amounts of D. The first three ro ws show corresp onding plots for the marginal probabilit y of satisfying eac h of the sp ecification limits (3) on E, F and H, respectively . The trade-off b et ween the ob jectives given b y the sp ecification limits can b e clearly seen. T o satisfy the limit E ( t ) < 3 mols, t has to b e large. This is in tuitively obvious since the initial amoun t of E is 26.47 mols so the pro cess will need to progress for some time b efore the amoun t of E has decreased enough to satisfy the limit. The opp osite is true for H (initial amoun t of 0 mols), where the optimum time to satisfy H ( t ) < 3 mols is for t to be small. This means that there is a very narrow window of v alues of t that will satisfy all three constrain ts, as shown in the last ro w of Figure 3. Note that the optim um v alues of x and t (as sho wn in T able 3) lie within this narro w window. 14 91.40 mols 91.45 mols 91.50 mols 91.54 mols 91.59 mols E F H All Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Time (s) Amount of A (mols) 0 1500 3000 22.5 33.75 45 Figure 3: The probabilit y of satisfying eac h of the three marginal constrain ts and the joint constrain ts for time (in s; x -axis) against initial amoun t of A (in mols; y -axis) for fiv e different initial amounts of D. The colours indicate the magnitude of the probabilit y , with black b eing 0 and white b eing 1. 15 5 Discussion This paper has dev elop ed and applied methodology for c ho osing combinations of v alues of the con trollable factors that produce a high probabilit y of meeting pro cess sp ecification when the pro cess is at least approx- imating determined b y a physical model. Our application was a chemical syn thesis pro cess used to pro duce a pharmaceutical pro duct when sp ecification was dete rmined by a) the amount of the substance constitut- ing the pharmaceutical pro duct b eing ab o ve some lev el; and b) the amounts of t wo unw anted by-product substances b eing below some lev els. The relationship betw een the controllable factors and the amoun ts of substances of interest is hypothesised to b e gov erned by the analytically intractable solution to a system of ODEs. Resp onses from a ph ysical exp erimen t are used to refine the kno wledge on this relationship and the probability of satisfying the constraints is maximized. Statistical em ulators for the numerical solution are constructed to accelerate the mo del-fitting pro cess and the estimation of the probability of satisfying the constraints. The metho dology pro duces computationally feasible results that take accoun t of differen t uncertain ties in volv ed in the exp erimen tal and mo delling pro cesses (measuremen t error, mo del inadequacy , em ulator error). The metho ds ha v e the p oten tial to b e applied to a v ariety of qualit y con trol and pharma- ceutical design space problems that in volv e the numerically expensive appro ximation to ph ysical mo dels. The mo delling and computational approac h taken in this pap er can b e mo dified in a v ariety of differ- en t wa ys to suit the application of interest. F or example, we hav e explicitly taken account of the mo del discrepancy . If the exp erimen ters b elieved the mo del giv en by the solution to the ODEs was a very close appro ximation to the true physical process then the mo del discrepancy could be discarded by setting D = 0 and omitting the steps for sampling from the full conditional distributions of Σ and ψ . Another example of mo dification is the introduction of noise in to the sp ecification of the con trollable factors. In this pap er, we assumed that the chemical engineers hav e complete con trol ov er the sp ecification of the controllable factors when w e perform the maximization of the probabilit y of satisfying the sp ecification limits given by (3). In some cases, the chemical engineer would not b e able to control these exactly . F ollo wing the approac h in this paper, it w ould b e straigh tforward to introduce v ariabilit y as an intermediate step b et ween specifying the controllable v ariables and ev aluating the Gaussian process em ulator. Lastly , w e employ ed the RMLMH algorithm to sample from the full conditional distributions of θ , ρ and ψ . It w ould also b e possible to use the metho dology in this pap er to employ Riemann Manifold Hamiltonian Mon te Carlo metho dology (Girolami and Calderhead, 2011). Ac kno wledgmen ts The authors would lik e to thank Drs John P eterson, Mohammad Y ahy ah and Neil Hodnett (GlaxoSmithK- line) for pro viding the original kinetics mo delling problem and data set. Additionally , the authors are grateful for the editor, asso ciate editor and tw o referees who provided v aluable feedback on earlier v ersions of the pap er. DCW was support by UK Engineering and Physical Sciences Research Council (EPSRC) F ellowship EP/J018317/1 and KJM was supp orted b y a PhD a ward from EPSR C and GlaxoSmithKline. A F ull-conditional distributions for Gibbs sampling A.1 F ull conditional distribution for mo del discrepancy F or the r th chain with temp erature τ r , the full-conditional distribution of d ∗ is d ∗ y , θ , Σ , Ω , ρ, ψ ∼ N ( µ d ∗ , τ r V d ∗ ) , where V d ∗ = H T Ω − 1 ⊗ T − 1 H + Σ − 1 ⊗ S − 1 − 1 , µ d ∗ = V d ∗ H T Ω − 1 ⊗ T − 1 y + Σ − 1 ⊗ S − 1 m ( θ ) . 16 A.2 F ull conditional distributions for co v ariance matrices F or the r th chain with temp erature τ r , the full-conditional distributions of Ω and Σ are giv en by Ω Y , D ∗ , ρ ∼ IW ν + n + K + 1 τ r − K − 1 , 1 τ r I K + ( Y − GD ∗ ) T T − 1 ( Y − GD ∗ ) , and Σ D ∗ , θ , ψ ∼ IW ν + m + K + 1 τ r − K − 1 , 1 τ r I k + ( D ∗ − M ( θ )) T S − 1 ( D ∗ − M ( θ )) , resp ectiv ely , where IW denotes the inv erse-Wishart distribution. A.3 F ull conditional distribution for censored observ ations Let A b e the n × n permutation matrix whic h re-orders the elemen ts of y such that w e can write Ay = y S y C ∼ N AHd ∗ , A ( Ω ⊗ T ) A T . Define b = AHd ∗ and L = A ( Ω ⊗ T ) A T . Let b = ( b S , b c ) T where b S and b ) C are the n S × 1 and n c × 1 sub-v ectors corresponding to y S and y C , resp ectiv ely . Similarly , partition L as L = L S S L S C L C S L C C . The full conditional distribution of y c is y C ∼ N b C + L C S L − 1 S S ( y S − b S ) , τ r L C C − L C S L − 1 S S L S C truncated to the hypercub e [ −∞ , log χ ] n c . B Riemann manifold Langevin Metrop olis-Hastings (RMLMH) Algorithm Consider generating a sample from the distribution of δ ∈ R p , conditional on y , having density π ( δ y ) ∝ ( π ( y δ ) π ( δ )) 1 / τ r , for temp erature τ r , using the Metrop olis-Hastings algorithm. Let h ( δ ) = log π ( y δ ) + log π ( δ ) and define ▽ h ( δ ) = ∂ log π ( y δ ) ∂ δ + ∂ log π ( δ ) ∂ δ , G ( δ ) = − E y ∣ δ ∂ log π ( y δ ) ∂ δ ∂ log π ( y δ ) ∂ δ T − ∂ 2 log π ( δ ) ∂ δ ∂ δ T . If δ c is the current v alue of δ , then under the RMLMH algorithm, the proposal distribution is N ( µ δ , τ r V δ ) , where µ δ = δ c + 2 2 G ( δ c ) − 1 ▽ h ( δ c ) − 2 n ( 1 ) + 2 2 n ( 2 ) , V δ = 2 G ( δ c ) − 1 , with n ( 1 ) and n ( 2 ) ha ving i th elemen ts n ( 1 ) i = p j = 1 G ( δ c ) − 1 ∂ G ( δ ) ∂ δ j δ = δ c G ( δ c ) − 1 ij , n ( 2 ) i = p j = 1 G ( δ c ) − 1 ij tr G ( δ c ) − 1 ∂ G ( δ ) ∂ δ j δ = δ c , resp ectiv ely . 17 C Comp onen ts required for Riemann manifold MH Algorithm C.1 Ph ysical parameters The log density of the full conditional distribution of θ is giv en by h ( θ ) ∝ − 1 2 ( d ∗ − m ( θ )) T Σ − 1 ⊗ S − 1 ( d ∗ − m ( θ )) − 1 2 ( θ − µ ) T ∆ − 1 ( θ − µ ) . The deriv ative with resp ect to θ is ▽ h ( θ ) = ( d ∗ − m ( θ )) T Σ − 1 ⊗ S − 1 ∂ m ( θ ) ∂ θ − ∆ − 1 ( θ − µ ) . The matrix tensor for θ is G ( θ ) = ∂ m ( θ ) ∂ θ T Σ − 1 ⊗ S − 1 ∂ m ( θ ) ∂ θ + ∆ − 1 , with deriv atives ∂ G ( θ ) ∂ θ k = 2 ∂ m ( θ ) ∂ θ T Σ − 1 ⊗ S − 1 ∂ 2 m ( θ ) ∂ θ ∂ θ k , for k = 1 , . . . , p . C.2 Correlation parameter for time dep endency Consider a log transformation of ρ , i.e. a = log ρ . The log density of the full conditional distribution of a is giv en b y h ( a ) ∝ − K 2 I ′ i = 1 log T i − 1 2 ( y − Hd ∗ ) T Ω − 1 ⊗ diag i = 1 ,...,I ′ T − 1 i ( y − Hd ∗ ) + a − exp ( a ) . The deriv ative with resp ect to a is ∂ h ( a ) ∂ a = − K 2 I ′ i = 1 tr T − 1 i T ia + 1 2 ( y − Hd ∗ ) T Ω − 1 ⊗ diag i = 1 ,...,I ′ T − 1 i T ia T − 1 i ( y − Hd ∗ ) + 1 − exp ( a ) , where T ia = ∂ T i ∂ a with j l th element − exp ( a )( t ij − t il ) 2 T i,j l . The tensor matrix for a is G ( a ) = K 2 tr T − 1 i T ia T − 1 i T ia − exp ( a ) , with deriv ative ∂ G ( a ) ∂ a = K tr T − 1 i T ia T − 1 i T iaa − T ia T − 1 i T ia − exp ( a ) , where T iaa = ∂ 2 T i ∂ a 2 with j l th element exp ( a )( t ij − t il ) 2 exp ( a )( t ij − t il ) 2 − 1 T i,j l . 18 C.3 Correlation parameters for mo del discrepancy Consider a log transformation of each elemen t of ψ , i.e. b i = log ψ i for i = 1 , 2. The log density of the full conditional distribution of b = ( b 1 , b 2 ) is given b y h ( b ) ∝ − K 2 log S − 1 2 ( d ∗ − m ( θ )) T Σ ⊗ S − 1 ( d ∗ − m ( θ )) + 2 i = 1 b i − exp ( b i ) . The deriv ative with resp ect to b i is ∂ h ( b ) ∂ b i = − K 2 tr S − 1 S i + 1 2 ( d ∗ − m ( θ )) T Σ ⊗ S − 1 S i S − 1 ( d ∗ − m ( θ )) + 1 − exp ( b i ) , where S i = ∂ S ∂ b i with j l th element giv en by − exp ( b 1 ) ∑ F f = 1 ( d j f − d lf ) 2 S j l , if i = 1 , − exp ( b 2 )( t j − t l ) 2 S j l , if i = 2 . The tensor matrix, G ( b ) has ij th element G ( b ) ij = K 2 tr S − 1 S i S − 1 S j − I ( i = j ) exp ( b i ) . The deriv atives of G ( b ) are ∂ G ( b ) ij ∂ b k = K 2 tr S − 1 S i S − 1 S j k − S − 1 S i S − 1 S k S − 1 S j + S − 1 S j S − 1 S ik − S − 1 S i S − 1 S j S − 1 S k − I ( i = j = k ) exp ( b i ) , where S ik = ∂ 2 S ∂ b i ∂ b k with j l th element giv en by exp ( b 1 ) ∑ F f = 1 ( d j f − d lf ) 2 exp ( b 1 ) ∑ F f = 1 ( d j f − d lf ) 2 − 1 S j l , if i = 1 and k = 1 , exp ( b 2 )( t j − t l ) 2 exp ( b 2 )( t j − t l ) 2 − 1 S j l , if i = 2 and k = 2 , exp ( b 1 + b 2 ) ∑ F f = 1 ( d j f − d lf ) 2 ( t j − t l ) 2 S j l , if i = 1 and k = 2 . D Prior sensitivit y analysis Supp ose ρ ∼ Gamma ( α, β ) Σ ∼ IW ( ν, I 3 ) ψ i ∼ Gamma ( α, β ) Ω ∼ IW ( ν, I 3 ) for i = 1 , 2, where the Gamma distribution is parameterised suc h that the expectation and v ariance are α β and α β 2 , resp ectiv ely , and the inv erse-Wishart such that the mode is I 3 ν . Let the prior distribution for ρ , ψ , Σ and Ω outlined in Section 2.3 b e denoted as Prior 0 where α = β = 1 and ν = 4. W e consider three different prior sp ecifications (denoted Prior 1, 2 and 3) giv en b y different com binations of α , β and ν , corresp onding to a sequence of increasingly diffuse prior distributions. F or each prior, the analysis described in Section 4 was undertaken and the optim um v alues of x and t were found b y ACE. T able 4 shows the v alues of α , β and ν for Priors 1, 2 and 3 as well as the optimum v alues of x and t . Also included is the probabilit y of satisfying the specification limits giv en by (3) under each prior distribution for the optim um v alues also found under each prior distribution. There is some v ariability with resp ect to initial amounts of A and D and, particularly , with resp ect to time. How ever the probability of satisfying the sp ecification limits remain broadly insensitiv e to these changes. W e conclude that the results are robust to c hoice of prior distribution. 19 Prior 0 1 2 3 α 1 0.500 0.250 0.125 β 1 0.500 0.250 0.125 ν 4 2 1 0.5 Optim um v alues for con trollable factors and time Initial amount of A 30.52 22.50 39.58 37.75 Initial amount of D 91.51 91.49 91.59 91.59 Initial amount of E 26.47 26.47 26.47 26.47 T emp erature 313.15 313.15 313.15 313.15 V olume 31.28 31.28 31.28 31.28 Time 199.10 453.36 169.59 169.59 Probabilit y of satisfying specification limits Prior 0 0.77 0.75 0.78 0.73 Prior 1 0.74 0.75 0.75 0.73 Prior 2 0.76 0.74 0.78 0.73 Prior 3 0.76 0.74 0.77 0.76 T able 4: F or Priors 0, 1 , 2 and 3: a) the v alues of α , β and ν sp ecifying the prior distributions for ψ , Σ and Ω ; b) the optim um v alues of x and t for maximizing the probability of satisfying the sp ecification limits; and c) the probability of satisfying the specification limits given by (3) under eac h prior distribution for the optim um v alues also found under each prior distribution. References am Ende, D.J. (Ed.), 2011. Chemical Engineering in the Pharmaceutical Industry . Wiley , Hoboken, NJ. Bro wn, P ., Byrne, G., Hindmarsh, A., 1989. V o de: A v ariable co efficien t o de solver. SIAM Journal on Scien tific and Statistical Computing 10, 1038–1051. Chiao, C., Hamada, M., 2001. Analyzing exp eriments with correlated multiple responses. Journal of Quality T echnology 33, 451–465. Con ti, S., O’Hagan, A., 2010. Bay esian em ulation of complex m ulti-output and dynamic computer mo dels. Journal of Statistical Planning and Inference 140, 640–651. Da wid, A., 1981. Some matrix-v ariate distribution theory: Notational considerations and a ba y esian appli- cation. Biometrik a 68, 265–274. Dean, A., Morris, M., J., S., Bingham, D.e. (Eds.), 2015. Handb o ok of Design and Analysis of Exp erimen ts. CR C Press, Boca Raton. Del Castillo, E., 2007. Pro cess Optimization – A Statistical Approac h. Springer, New Y ork. F ang, K., Li, R., Sudianto, A., 2006. Design and Mo delling for Computer Exp erimen ts. Chapman and Hall, Bo ca Raton. Fielding, M., Nott, D.J., Liong, S.Y., 2011. Efficient MCMC sc hemes for computationally expensive p osterior distributions. T echnometrics 53, 16–28. Gelman, A., Carlin, J.B., Stern, H.S., Dunson, D.B., V ehtari, A., Rubin, D.B., 2014. Bay esian Data Analysis. v olume 3rd. Chapman and Hall. Gew eke, J.F., 1991. Effcient simulation from the multiv ariate normal and student-t distributions sub ject to linear constraints, in: Computer Science and Statistics. Pro ceedings of the 23rd Symp osium on the In terface. 20 Girolami, M., Calderhead, B., 2011. Riemann manifold langevin and hamiltonian monte carlo metho ds (with discussion). Journal of the Ro yal Statistical So ciet y , Series B 73, 123–214. Iserles, A., 2009. A First Course in the Numerical Analysis of Differential Equations. 2nd ed., Cambridge Univ ersity Press. Johnson, M.E., Mo ore, L.M., Ylvisak er, D., 1990. Minimax and maximin distance designs. Journal of Statistical Planning and Inference 26, 131–148. Kennedy , M.C., O’Hagan, A., 2001. Bay esian calibration of computer mo dels (with discussion). Journal of the Roy al Statistical Society , Series B 63, 425–464. Laidler, K.J., 1984. The developmen t of the arrhenius equation. Journal of Chemical Education 61, 494. Lange, K., 2013. Optimization. 2nd ed., Springer, New Y ork. Lebrun, P ., Boulanger, B., Debrus, B., Lambert, P ., Hub ert, P ., 2013. A Bay esian design space for analytical metho ds based on multiv ariate mo dels and predictions. Journal of Biopharmaceutical Statistics 23, 1330– 1351. Lo eppky , J.L., Sac ks, J., W elch, W.J., 2009. Choosing the sample size of a computer exp erimen t: a practical guide. T echnometrics 51, 366–376. Miaso jedow, B., Moulines, E., Vihola, M., 2013. An adaptive parallel temp ering algorithm. Journal of Computational and Graphical Statistics 22, 649–664. Nyc hk a, D., F urrer, R., P aige, J., Sain, S., 2015. fields: T o ols for spatial data. URL: www.image.ucar.edu/ fields . R pack age version 8.15. Ov erstall, A.M., W o ods, D.C., 2013. A strategy for ba yesian inference for computationally expensive mo dels with application to the estimation of stem cell prop erties. Biometrics 69, 458–468. Ov erstall, A.M., W o ods, D.C., 2016. Multiv ariate emulation of computer simulators: mo del selection and diagnostics with application to a humanitarian relief model. Journal of the Roy al Statistical So ciet y C 65, 483–505. Ov erstall, A.M., W o o ds, D.C., 2017. Ba y esian design of exp erimen ts using appro ximate co ordinate exchange. T echnometrics 59, 458–470. Ov erstall, A.M., W o ods, D.C., Adamou, M., 2017. acebay es: An R pack age for Bay esian optimal design of exp erimen ts via approximate coordinate exc hange. arXiv:1705.08096 . P apaspiliop oulos, O., Roberts, G.O., Skold, M., 2003. Non-cen tered parameterizations for hierarchical mo dels and data augmentation, in: Bernardo, J.M., Bay arri, M.J., Berger, J.O., Dawid, A.P ., Heck erman, D., Smith, A.F.M., W est, M. (Eds.), Ba y esian Statistics 7, Oxford. P eterson, J., 2004. A p osterior predictive approach to multiple resp onse surface optimization. Journal of Qualit y T echnology 36, 139–153. P eterson, P .J., 2008. A Ba yesian approach to the ICH Q8 definition of design space. Journal of Biopharma- ceutical Statistics 18, 959–975. P eterson, P .J., Y ahy ah, M., 2009. A Bay esian design space approach to robustness and system suitability for pharmaceutical assays and other pro cesses. Statistics in Biopharmaceutical Researc h 1, 441–449. R Core T eam, 2018. R: A Language and En vironment for Statistical Computing. R F oundation for Statistical Computing. Vienna, Austria. URL: https://www.R- project.org/ . 21 Rasm ussen, C.E., 2003. Gaussian processes to sp eed up hybrid monte carlo for expensive bay esian in tegrals, in: Bernardo, J.M., Bay arri, M.J., Berger, J.O., Dawid, A.P ., Heck erman, D., Smith, A.F.M., W est, M. (Eds.), Bay esian Statistics 7, Oxford. Sac ks, J., W elch, W., Mitc hell, T., Wynn, H., 1989. Design and analysis of computer experiments. Statistical Science 4, 409–435. San tner, T.J., Williams, B.J., Notz, W.I., 2003. The Design and Analysis of Computer Exp eriments. Springer, New Y ork. So etaert, K., P etzoldt, T., W o odrow Setzer, R., 2010. Solving differential equations in R: Pac k age deSolv e. Journal of Statistical Softw are 33, 1–25. URL: http://www.jstatsoft.org/v33/i09 . V alko, P ., V a jda, S., 1984. An extended ODE solv er for sensitivit y calculations. Computers and Chemistry 8, 255–271. 22

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment