Packaging and Sharing Machine Learning Models via the Acumos AI Open Platform

Applying Machine Learning (ML) to business applications for automation usually faces difficulties when integrating diverse ML dependencies and services, mainly because of the lack of a common ML framework. In most cases, the ML models are developed for applications which are targeted for specific business domain use cases, leading to duplicated effort, and making reuse impossible. This paper presents Acumos, an open platform capable of packaging ML models into portable containerized microservices which can be easily shared via the platform’s catalog, and can be integrated into various business applications. We present a case study of packaging sentiment analysis and classification ML models via the Acumos platform, permitting easy sharing with others. We demonstrate that the Acumos platform reduces the technical burden on application developers when applying machine learning models to their business applications. Furthermore, the platform allows the reuse of readily available ML microservices in various business domains.

💡 Research Summary

The paper introduces Acumos, an open‑source platform designed to simplify the packaging, cataloguing, and distribution of machine‑learning (ML) models as portable micro‑services. The authors begin by outlining the practical challenges that organizations face when trying to embed ML models into production applications: diverse dependencies, multi‑stage pipelines, the need for expert knowledge, and the high cost of training resources. Existing solutions such as TensorFlow‑Serving, Clipper, ModelDB, and commercial ML‑as‑a‑Service offerings either focus solely on deployment or are locked into proprietary clouds, leaving a gap for a framework that both packages and shares models across heterogeneous environments.

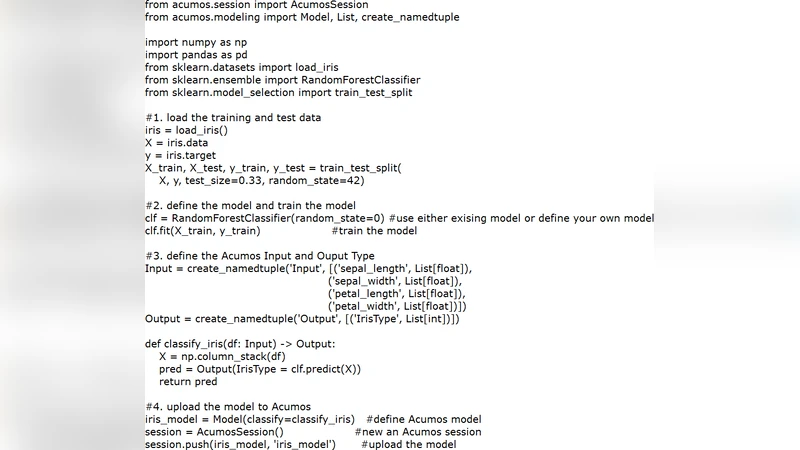

Acumos fills this gap with three core capabilities. First, it supports multiple programming languages (Python, R, Java) and popular ML libraries (scikit‑learn, TensorFlow, Spark MLlib, etc.). Data scientists can upload a pre‑trained model using language‑specific APIs; the platform automatically introspects the model to discover required libraries, eliminating the need for manual dependency lists. Second, Acumos isolates each model at the service level by containerising it into a Docker image. The container includes a generated RESTful API, enabling any consumer—whether on a public cloud, private data centre, or a local workstation—to run the model without additional configuration. The Docker image can be orchestrated with Kubernetes or other container‑management tools, providing scalability and portability. Third, Acumos maintains a searchable catalog where contributors publish metadata describing the model’s purpose, input/output schema, and domain. End‑users can discover suitable models by keyword or schema matching, download the Docker image, and integrate it directly into their applications.

The architecture consists of three stages: Uploading, Publishing, and Predicting. During uploading, the model is serialized, its dependencies are captured, and a protobuf‑based interface definition is generated to ensure cross‑language compatibility. Publishing stores the model in a private repository, attaches metadata, and makes it discoverable. Predicting packs the model into a Docker image, stores it in the platform’s registry, and provides the consumer with a ready‑to‑run service that can be invoked via HTTP.

Two case studies illustrate Acumos’s practical impact. In the first, a sentiment‑analysis model trained on 50,000 IMDB reviews is uploaded to Acumos. An end‑user with only an Amazon movie‑review dataset (500 test samples) downloads the pre‑trained service and achieves an AUC of 0.9376. By contrast, when the same user attempts to train a model solely on Amazon data, comparable performance requires at least 10,000 labelled reviews, incurring substantial labeling and compute costs. This demonstrates that Acumos enables model reuse across domains, reduces the data‑labeling burden, and protects the model owner’s proprietary data. The second case study showcases model‑level isolation: teams developing image‑processing and text‑classification models independently can each publish their services, and other teams can compose these services via REST calls without worrying about language or library incompatibilities.

The authors acknowledge limitations: current language and library support is not exhaustive, complex streaming pipelines are not natively handled, and operational concerns such as Docker image size, security scanning, and version management require additional tooling. Future work includes tighter integration with KubeFlow, enhanced multi‑tenant security, automated model update pipelines, and broader ecosystem support.

In conclusion, Acumos provides a pragmatic solution for the end‑to‑end lifecycle of ML models— from expert development to non‑expert consumption—by abstracting away dependency management, deployment complexity, and sharing logistics. By turning models into reusable, containerised services, Acumos lowers the technical barrier for businesses to adopt AI, encourages model reuse, and fosters an open marketplace for ML capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment