Second Thoughts on the Second Law

We speculate whether the second law of thermodynamics has more to do with Turing machines than steam pipes. It states the logical reversibility of reality as a computation, i.e., the fact that no information is forgotten: nature computes with Toffoli-, not NAND gates. On the way there, we correct Landauer’s erasure principle by directly linking it to lossless data compression, and we further develop that to a lower bound on the energy consumption and heat dissipation of a general computation.

💡 Research Summary

The paper “Second Thoughts on the Second Law” proposes a radical reinterpretation of the thermodynamic second law, claiming that it is fundamentally a statement about logical reversibility rather than about heat flow in steam engines. The authors argue that nature computes using reversible gates such as the Toffoli gate, so that no information is ever truly erased. In this view, the second law is equivalent to the principle that information loss does not occur in physical processes.

To support this claim, the authors first review the historical development of the second law (Carnot’s efficiency limit, Clausius’s formulation, Kelvin’s statements, and Boltzmann’s statistical interpretation), criticising each as being tied to specific macroscopic devices such as steam pipes. They then turn to quantum non‑locality, suggesting that a non‑counterfactual, algorithmic perspective on information may be more fundamental than classical causal structures.

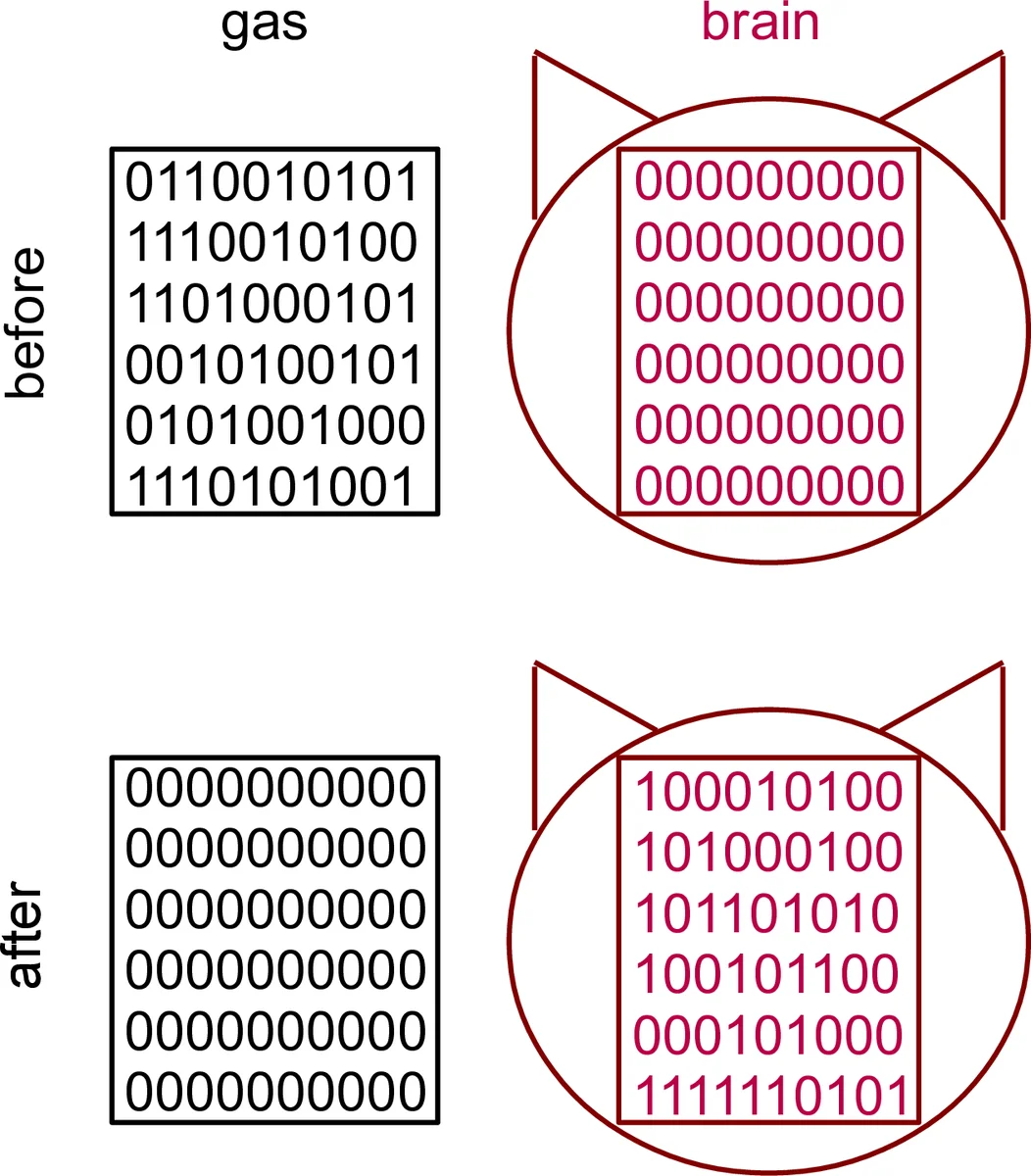

The core technical contribution is a reformulation of Landauer’s erasure principle using Kolmogorov (algorithmic) complexity. Landauer’s principle in its classical form states that erasing N bits costs at least kT ln 2 · N of free energy, which must be dissipated as heat; here k is Boltzmann’s constant and T is the temperature of the environment. The authors replace the naïve bit count N with the conditional Kolmogorov complexity K_U(S|X) of the string S to be erased, given side information X, relative to a universal Turing machine U. They propose a “compression‑then‑erase” model: first compress S (with X as a catalyst) into a shortest program P of length ≈K_U(S|X) using a reversible Turing machine, then erase P at the usual cost of kT ln 2 per bit. Consequently, the minimal erasure cost becomes kT ln 2 · K_U(S|X).
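To give a feel for the magnitudes involved, the following minimal sketch evaluates the kT ln 2 cost numerically. The temperature of 300 K, the 1 MB string size, and the value of 1000 bits standing in for K_U(S|X) are illustrative assumptions, not figures from the paper; the function name is hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def landauer_cost_joules(n_bits: float, temperature_kelvin: float = 300.0) -> float:
    """Landauer bound: minimum free energy (in joules) to erase n_bits at temperature T."""
    return n_bits * K_B * temperature_kelvin * math.log(2)


# Cost of erasing a single bit at the assumed temperature of 300 K.
per_bit = landauer_cost_joules(1)

# Naive count: a 1 MB string charged bit by bit (8 * 10**6 bits).
naive_cost = landauer_cost_joules(8 * 10**6)

# Assumed for illustration only: the same string has K_U(S|X) of about 1000 bits,
# so only the 1000-bit shortest program needs to be erased.
compressed_cost = landauer_cost_joules(1000)

print(f"per bit:          {per_bit:.3e} J")
print(f"naive (1 MB):     {naive_cost:.3e} J")
print(f"after compression: {compressed_cost:.3e} J")
```

At 300 K the per-bit cost is roughly 2.87 × 10⁻²¹ J, so the difference between the naïve and the complexity-based count is purely a matter of how many bits are charged.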

Because Kolmogorov complexity is uncomputable, the authors acknowledge that the exact bound cannot in general be computed, and hence cannot be attained systematically in practice. They argue, however, that any computable compression algorithm C provides an upper bound on the erasure cost EC_U(S|X): EC_U(S|X) ≤ len(C(S,X))·kT ln 2. This yields a sandwich inequality:

kT ln 2·K_U(S|X) ≤ EC_U(S|X) ≤ kT ln 2·len(C(S,X)).
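The upper bound in this inequality can be illustrated with any off-the-shelf compressor. The sketch below uses Python's `zlib` as a stand-in for the computable algorithm C, and passes the side information X as a preset dictionary (`zdict`) so that it plays a catalyst-like role: the decompressor needs X as well. The choice of zlib, the temperature of 300 K, the test string, and all function names are assumptions made for illustration; the paper does not prescribe a particular compressor.

```python
import math
import random
import zlib

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def compressed_bits(s: bytes, x: bytes = b"") -> int:
    """Length in bits of zlib's output for s, optionally using x as a preset dictionary."""
    if x:
        comp = zlib.compressobj(level=9, zdict=x)
    else:
        comp = zlib.compressobj(level=9)
    return 8 * len(comp.compress(s) + comp.flush())


def erasure_upper_bound_joules(s: bytes, x: bytes = b"",
                               temperature_kelvin: float = 300.0) -> float:
    """Upper bound len(C(S,X)) * kT ln 2, with zlib standing in for C."""
    return compressed_bits(s, x) * K_B * temperature_kelvin * math.log(2)


# A pseudo-random string: essentially incompressible without side information.
rng = random.Random(0)
s = bytes(rng.getrandbits(8) for _ in range(2000))

bound_without_x = erasure_upper_bound_joules(s)
# Extreme case of side information: X is a copy of S, so S is cheap to describe given X.
bound_with_x = erasure_upper_bound_joules(s, x=s)

print(f"without side information: {bound_without_x:.3e} J")
print(f"with X = S:               {bound_with_x:.3e} J")
```

The zlib output includes a small header and checksum, so the figure is a slightly loose but still valid instance of len(C(S,X)). A better compressor tightens the upper bound, while the lower bound kT ln 2·K_U(S|X) remains uncomputable.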

Extending this idea, they consider a general computation from input A to output B with side information X. The energy cost of the whole computation satisfies:

Cost_U(A→B|X) ≥
