SAIL: Machine Learning Guided Structural Analysis Attack on Hardware Obfuscation

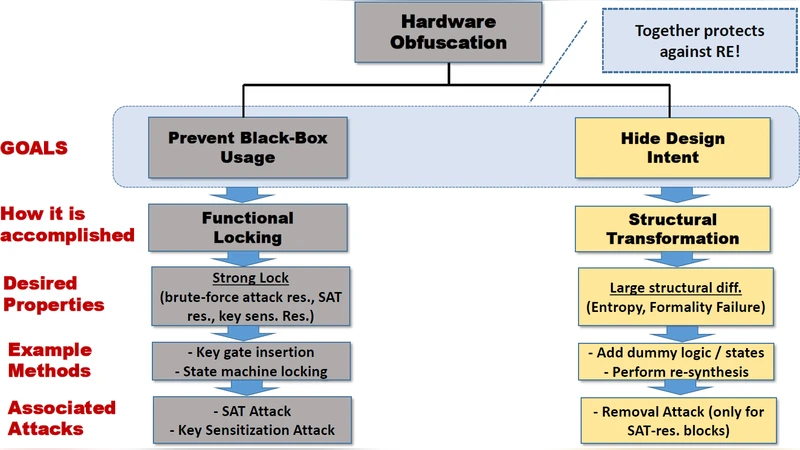

Obfuscation is a technique for protecting hardware intellectual property (IP) blocks against reverse engineering, piracy, and malicious modifications. Current obfuscation efforts mainly focus on functional locking of a design to prevent black-box usage. They do not directly address hiding design intent through structural transformations, which is an important objective of obfuscation. We note that current obfuscation techniques incorporate only (1) local and (2) predictable changes in circuit topology. In this paper, we present SAIL, a structural attack on obfuscation using machine learning (ML) models that exposes a critical vulnerability of these methods. Through this attack, we demonstrate that most of the gate-level structure of an obfuscated design can be retrieved through a systematic set of steps. The proposed attack is applicable to all forms of logic obfuscation and is significantly more powerful than existing attacks, e.g., SAT-based attacks, since it does not require the availability of golden functional responses (e.g., an unlocked IC). Evaluation on benchmark circuits shows that we can recover an average of around 84% (up to 95%) of the transformations introduced by obfuscation. We also show that this attack is scalable, flexible, and versatile.

💡 Research Summary

The paper introduces SAIL (Structural Analysis using Machine Learning), a novel attack that targets the structural aspect of hardware logic obfuscation rather than its functional locking. Traditional obfuscation techniques focus on inserting key‑controlled gates (typically XOR/XNOR) and then re‑synthesizing the netlist, which results in only local and deterministic changes to the circuit topology. The authors first perform a statistical study on several ISCAS‑85 benchmarks and discover that the majority of inserted key‑gate localities undergo either no change (Level‑1) or modest local modifications (Level‑2), while only a small fraction (≈6 %) experience deeper structural transformations (Level‑3). Moreover, they find that the synthesis tool uses a surprisingly limited set of transformation rules: about 90 % of all changes are governed by fewer than 180 rules, and the top six rules account for over 40 % of the modifications.
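The rule-coverage observation above can be checked with a simple frequency count. The following is a minimal sketch, assuming each observed structural change has already been reduced to a hashable rule identifier; the function name and the toy data are hypothetical and do not come from the paper.

```python
from collections import Counter

def rules_needed_for_coverage(observed_rules, target=0.9):
    """Count how many of the most frequent rules are needed to cover
    at least `target` (a fraction) of all observed changes."""
    counts = Counter(observed_rules)
    total = sum(counts.values())
    covered = 0
    for n, (_, count) in enumerate(counts.most_common(), start=1):
        covered += count
        if covered / total >= target:
            return n
    return len(counts)  # reached only for empty input

# Toy data: ten observed changes drawn from four hypothetical rules.
observed = ["r1"] * 5 + ["r2"] * 3 + ["r3"] + ["r4"]
needed = rules_needed_for_coverage(observed, target=0.75)
```

A skewed distribution like the one the summary describes would show up here as a small return value relative to the number of distinct rules.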

These observations motivate the use of machine learning to learn the deterministic mapping between pre‑ and post‑synthesis local sub‑graphs. SAIL consists of two complementary ML components:

- Change Prediction Model – a Random Forest classifier trained to predict whether a given locality (a sub‑graph of up to 10 gates surrounding a key gate) has been altered by synthesis. The model achieves an average accuracy of ~81 % across benchmarks, with higher accuracy for larger localities (up to ~98 % for size‑10 sub‑graphs on highly regular circuits).

- Reconstruction Model – a multi‑layer, multi‑channel neural network that, given a post‑synthesis locality, predicts the corresponding pre‑synthesis structure. Several networks are trained for different locality sizes (3‑10 gates) and combined via cumulative confidence voting to improve robustness.
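The summary does not spell out how cumulative confidence voting works, so the sketch below is one plausible reading rather than the authors' implementation: each per-size model emits a candidate label with a confidence, confidences are summed per candidate, and the highest total wins. The function name and the label encoding are assumptions made for illustration.

```python
from collections import defaultdict

def cumulative_confidence_vote(predictions):
    """Combine (label, confidence) pairs from several models.

    Confidences for the same label are summed; the label with the
    largest cumulative confidence is returned with its total score.
    Raises ValueError if `predictions` is empty.
    """
    scores = defaultdict(float)
    for label, confidence in predictions:
        scores[label] += confidence
    return max(scores.items(), key=lambda item: item[1])

# Hypothetical outputs of three models trained on different locality sizes.
votes = [("XOR+INV", 0.6), ("XNOR", 0.9), ("XOR+INV", 0.5)]
winner, score = cumulative_confidence_vote(votes)
```

In this toy case two moderately confident models outvote a single highly confident one, which is the kind of robustness the ensemble is meant to provide.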

A key practical challenge is the lack of a “golden” (unobfuscated) netlist for training. The authors solve this with a “Pseudo Self‑Referencing” scheme: the attacker treats the already obfuscated netlist as a pseudo‑golden circuit, applies an additional round of the same obfuscation process, and thus generates paired pre‑/post‑synthesis localities for training without ever accessing the original design.
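The pseudo self-referencing idea can be illustrated on a toy netlist. This is a sketch under heavy assumptions: the netlist is a dict mapping an output wire to `(gate_type, inputs)`, locking splices an XOR key gate onto randomly chosen wires, and `toy_resynthesize` is a placeholder that returns the netlist unchanged where a real attack would invoke a synthesis tool. All function names and the `k2_` key prefix are hypothetical.

```python
import random

def lock(netlist, n_keys, rng, tag="k2_"):
    """Toy XOR locking: splice a key-controlled XOR onto n_keys random wires."""
    locked = dict(netlist)
    targets = rng.sample(sorted(netlist), n_keys)
    for i, wire in enumerate(targets):
        hidden = f"{wire}_{tag}{i}"          # renamed output of the original gate
        locked[hidden] = locked.pop(wire)
        locked[wire] = ("XOR", [hidden, f"{tag}{i}"])
    return locked, targets

def locality(netlist, wire, depth=1):
    """Collect the gate driving `wire` plus its fan-in gates up to `depth` levels."""
    found, frontier = {}, [wire]
    for _ in range(depth + 1):
        next_frontier = []
        for w in frontier:
            if w in netlist and w not in found:
                found[w] = netlist[w]
                next_frontier.extend(netlist[w][1])
        frontier = next_frontier
    return found

def toy_resynthesize(netlist):
    """Placeholder for a real synthesis run; returns the netlist unchanged."""
    return dict(netlist)

def make_training_pairs(obfuscated, n_keys, seed=0):
    """Treat the obfuscated netlist as pseudo-golden, lock it again, and
    return (post-synthesis locality, pre-synthesis locality) pairs."""
    rng = random.Random(seed)
    relocked, key_wires = lock(obfuscated, n_keys, rng)
    resynthesized = toy_resynthesize(relocked)
    return [(locality(resynthesized, w), locality(relocked, w)) for w in key_wires]

# A tiny, already-locked netlist (key input "k0") standing in for the target.
obfuscated = {
    "n1": ("AND", ["a", "b"]),
    "n2": ("OR", ["n1", "k0"]),
    "out": ("XOR", ["n2", "c"]),
}
pairs = make_training_pairs(obfuscated, n_keys=2)
```

Because the placeholder synthesis is an identity, each pair here has equal halves; with a real synthesis step the two would differ, and wire names might not survive, so localities would have to be located through the key inputs instead.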

The attack pipeline proceeds as follows: (a) extract all key‑gate localities from the target obfuscated netlist; (b) use the Change Prediction Model to filter those that likely changed; (c) feed the filtered localities to the Reconstruction Model to obtain their pre‑synthesis forms; (d) optionally combine the reconstructed fragments to recover larger portions of the original netlist.
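Steps (a) through (c) can be sketched as a small driver that takes the two models as callables. The rule table, the tuple-of-gate-types encoding, and both toy models are assumptions for illustration; the paper's trained models would take their place.

```python
# Toy rule table (hypothetical): maps a post-synthesis locality, encoded as a
# tuple of gate types, to its assumed pre-synthesis form.
TOY_RULES = {
    ("XNOR",): ("XOR", "INV"),
    ("NAND",): ("AND", "INV"),
}

def toy_change_model(locality):
    """Stand-in for the Change Prediction Model: True if synthesis altered it."""
    return locality in TOY_RULES

def toy_reconstruction_model(locality):
    """Stand-in for the Reconstruction Model: look up the pre-synthesis form."""
    return TOY_RULES[locality]

def run_attack(localities, change_model, reconstruction_model):
    """Reconstruct localities predicted as changed; keep the rest as they are."""
    recovered = {}
    for key_id, loc in localities.items():
        if change_model(loc):
            recovered[key_id] = reconstruction_model(loc)
        else:
            recovered[key_id] = loc
    return recovered

# Step (a) is assumed done: one extracted locality per key input.
target = {"k0": ("XNOR",), "k1": ("XOR", "AND"), "k2": ("NAND",)}
result = run_attack(target, toy_change_model, toy_reconstruction_model)
```

Passing the models in as parameters keeps the driver independent of how they are trained, so the same loop works whether the reconstruction step is a lookup table or an ensemble of neural networks.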

Experimental evaluation on seven ISCAS‑85 benchmarks with key sizes ranging from 8 to 64 bits shows that SAIL can recover an average of 84.14 % of the structural transformations introduced by obfuscation, reaching up to 94.98 % on the largest benchmark (c7552). The approach works for both combinational and sequential designs and does not require any functional oracle or unlocked IC, unlike SAT‑based attacks. It also scales gracefully: accuracy and runtime increase modestly with key length and circuit size, and the method remains effective across different key‑gate insertion heuristics.

Compared to SAT‑based functional attacks, SAIL offers several advantages: (i) no need for golden responses, (ii) applicability to any Boolean function (SAT attacks can fail on specific functions like multipliers), (iii) suitability for sequential circuits, and (iv) deterministic, predictable performance. However, the current work focuses on XOR‑based key insertion and synthesis‑level structural changes; more sophisticated obfuscation techniques such as routing‑level camouflage, multi‑layer encryption, or non‑deterministic synthesis optimizations are not addressed. The authors suggest future work on extending the methodology to these richer threat models, reducing the reliance on the pseudo‑self‑referencing step, and exploring meta‑learning approaches that could generalize across different designs without per‑circuit retraining.

In summary, SAIL demonstrates that the structural side‑channel of logic obfuscation is vulnerable to data‑driven analysis. By learning the limited set of synthesis transformations, an attacker can effectively reverse‑engineer the original circuit topology, thereby undermining the primary goal of hiding design intent. This work highlights the need for obfuscation schemes that introduce more global, non‑deterministic structural changes or that combine functional locking with robust structural camouflage.
