OS Scheduling Algorithms for Memory Intensive Workloads in Multi-socket Multi-core servers

Major chip manufacturers have all introduced multicore microprocessors. Multi-socket systems built from these processors are routinely used to run server applications. Depending on the application, remote memory accesses can affect overall performance. This paper presents a new operating system (OS) scheduling optimization to reduce the impact of such remote memory accesses. By observing the pattern of local and remote DRAM accesses for every thread in each scheduling quantum and applying different algorithms, we derive a new schedule of threads for the next quantum. This new schedule can reduce remote DRAM accesses in the next scheduling quantum and improve overall performance. We present three new algorithms of varying complexity, followed by a fourth that adapts the Hungarian algorithm. We used three different synthetic workloads to evaluate the algorithms and performed a sensitivity analysis with respect to DRAM latency. We show that these algorithms can cut DRAM access latency by up to 55%, depending on the algorithm used. The benefit gained depends on the algorithm's complexity: in general, the higher the complexity, the higher the benefit. The Hungarian algorithm yields an optimal solution. We find that two of the four algorithms provide a good trade-off between performance and complexity for the workloads we studied.

💡 Research Summary

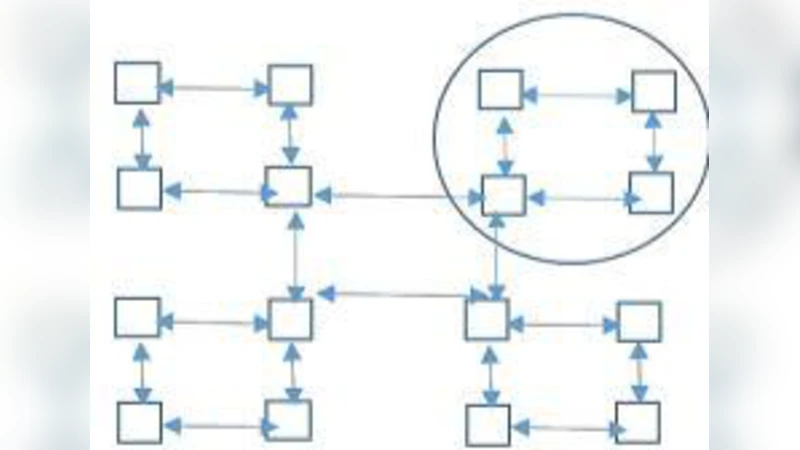

The paper addresses the performance penalty caused by remote DRAM accesses in multi‑socket, multi‑core servers that run memory‑intensive workloads on ccNUMA architectures. While modern operating systems schedule threads primarily based on CPU utilization and load balancing, they typically ignore the cost of accessing memory located on remote sockets. The authors propose a novel OS‑level scheduling optimization that observes, at the end of each scheduling quantum, the number of local and remote DRAM accesses generated by every thread. This information is collected using dedicated hardware performance counters that maintain a per‑thread, per‑node access count. Using these counts, the scheduler recomputes the thread‑to‑node mapping for the next quantum with the goal of minimizing future remote accesses.

Four scheduling algorithms are introduced. The first three are greedy heuristics of increasing sophistication, while the fourth adapts the Hungarian algorithm to obtain a globally optimal assignment.

Algorithm 1 (Global Greedy): All thread‑node access counts (N × L values, where N is the number of threads and L the number of nodes) are sorted in descending order. The highest‑count pair is assigned, after which the selected thread is removed from further consideration and the node’s capacity (four cores) is decremented. The process repeats until every core is filled. Complexity is O(N L log(N L)) dominated by the sort.
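The global greedy pass can be sketched in a few lines of Python. This is a minimal illustration, not the authors' C++ implementation: the function name `global_greedy`, the matrix layout `counts[t][n]` (accesses by thread `t` to memory on node `n`), and the tie-breaking order are all assumptions made for the example.

```python
def global_greedy(counts, cores_per_node=4):
    """Assign threads to nodes by walking all (thread, node) counts, highest first.

    counts[t][n] is assumed to hold the DRAM accesses of thread t to node n.
    Returns a dict mapping thread index -> node index.
    """
    num_threads = len(counts)
    num_nodes = len(counts[0])
    # Flatten the N x L matrix and sort by access count, descending.
    pairs = sorted(
        ((counts[t][n], t, n) for t in range(num_threads) for n in range(num_nodes)),
        key=lambda p: -p[0],
    )
    free = [cores_per_node] * num_nodes  # remaining cores on each node
    placement = {}
    for _, t, n in pairs:
        # Skip pairs whose thread is already placed or whose node is full.
        if t not in placement and free[n] > 0:
            placement[t] = n
            free[n] -= 1
    return placement


if __name__ == "__main__":
    # Hypothetical counts for 4 threads on 2 nodes with 2 cores each.
    counts = [[90, 10], [80, 20], [70, 30], [5, 95]]
    print(global_greedy(counts, cores_per_node=2))
```

The sketch assumes the number of threads does not exceed the total number of cores; any surplus threads would simply be left out of the returned mapping.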

Algorithm 2 (Node‑wise Greedy): For each node, the threads are locally sorted by their access count to that node. The top four threads are placed on the node, then removed from the global list, and the algorithm proceeds to the next node. Assuming parallel sorting across nodes, the dominant cost is O(N log N).
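A possible Python sketch of the node-wise variant follows. It visits nodes in index order and sorts sequentially rather than in parallel; the name `nodewise_greedy`, the `counts[t][n]` layout, and the tie-break on thread index are assumptions for illustration only.

```python
def nodewise_greedy(counts, cores_per_node=4):
    """Fill one node at a time with the threads that access it most.

    counts[t][n] is assumed to hold the DRAM accesses of thread t to node n.
    Returns a dict mapping thread index -> node index.
    """
    num_threads = len(counts)
    num_nodes = len(counts[0])
    unplaced = set(range(num_threads))
    placement = {}
    for n in range(num_nodes):
        # Rank the remaining threads by their access count to this node.
        ranked = sorted(unplaced, key=lambda t: (-counts[t][n], t))
        # Place the top threads here and drop them from the candidate pool.
        for t in ranked[:cores_per_node]:
            placement[t] = n
            unplaced.discard(t)
    return placement


if __name__ == "__main__":
    # Hypothetical counts for 3 threads on 2 nodes with 2 cores each.
    counts = [[50, 60], [40, 10], [45, 5]]
    print(nodewise_greedy(counts, cores_per_node=2))
```

In this hypothetical input, thread 0 prefers node 1 (60 accesses) but is claimed by node 0 first, because node 0 is filled before node 1 is considered. The outcome of this heuristic therefore depends on the order in which nodes are visited.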

Algorithm 3 (Combination Greedy): All possible 4‑thread groups (N choose 4 combinations) are generated. For each group, the total access count of its four threads to each node is computed, yielding L × (N choose 4) values. These are sorted, and the highest‑scoring group is assigned to its best node; the four threads are then eliminated from further consideration, and the node is full. This repeats until all threads are placed. Because (N choose 4) grows as N⁴, the complexity is O(L · N⁴ log(L · N⁴)), which is the most expensive of the greedy methods but also yields the greatest reduction in remote accesses among them.
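The combination-based heuristic might look like the following Python sketch. It scores each group by the summed accesses of its threads to a candidate node, which is one reading of the description above; the name `combination_greedy` and the `counts[t][n]` layout are assumptions, and the sketch expects the thread count to equal the number of nodes times the cores per node.

```python
from itertools import combinations


def combination_greedy(counts, cores_per_node=4):
    """Place whole groups of threads on nodes, best-scoring group first.

    counts[t][n] is assumed to hold the DRAM accesses of thread t to node n.
    Returns a dict mapping thread index -> node index.
    """
    num_threads = len(counts)
    num_nodes = len(counts[0])
    # Score every (group, node) pair: L * C(N, cores_per_node) values in total.
    scored = sorted(
        (
            (sum(counts[t][n] for t in group), group, n)
            for group in combinations(range(num_threads), cores_per_node)
            for n in range(num_nodes)
        ),
        key=lambda s: -s[0],
    )
    placement = {}
    used_nodes = set()
    for _, group, n in scored:
        # Skip groups that reuse a placed thread or target a filled node.
        if n in used_nodes or any(t in placement for t in group):
            continue
        for t in group:
            placement[t] = n
        used_nodes.add(n)
    return placement


if __name__ == "__main__":
    # Hypothetical counts for 4 threads on 2 nodes with 2 cores each.
    counts = [[90, 10], [80, 20], [70, 30], [5, 95]]
    print(combination_greedy(counts, cores_per_node=2))
```

For the 16-thread, 4-node configuration this enumerates 4 × 1820 = 7280 scores, which is small, but the count grows quickly with N.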

Algorithm 4 (Hungarian Matching): The scheduling problem is modeled as a bipartite assignment problem where each thread can be assigned to any of the L nodes (subject to per‑node core limits). The cost matrix contains the summed local + remote DRAM latency for each possible assignment. The Hungarian algorithm finds the minimum‑cost perfect matching in O(N³) time, delivering an optimal solution with respect to the defined cost metric.
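One way to adapt the Hungarian algorithm to per-node core limits is to expand each node into one column per core, so that the problem becomes a standard thread-to-core assignment. The Python sketch below takes that approach using a textbook O(n³) potentials-based implementation. The column expansion, the function names, and the default latencies of 100 and 150 cycles (taken from the base configuration reported in the evaluation) are assumptions for illustration; the summary does not specify how the authors adapted the algorithm.

```python
def hungarian(cost):
    """Minimum-cost assignment of rows to columns (rows <= columns).

    Returns a list where entry i is the column assigned to row i.
    """
    n, m = len(cost), len(cost[0])
    INF = float("inf")
    u = [0] * (n + 1)      # row potentials
    v = [0] * (m + 1)      # column potentials
    p = [0] * (m + 1)      # p[j] = row matched to column j (0 = none)
    way = [0] * (m + 1)    # back-pointers along the augmenting path
    for i in range(1, n + 1):
        p[0] = i
        j0 = 0
        minv = [INF] * (m + 1)
        used = [False] * (m + 1)
        while True:
            used[j0] = True
            i0, delta, j1 = p[j0], INF, 0
            for j in range(1, m + 1):
                if not used[j]:
                    cur = cost[i0 - 1][j - 1] - u[i0] - v[j]
                    if cur < minv[j]:
                        minv[j], way[j] = cur, j0
                    if minv[j] < delta:
                        delta, j1 = minv[j], j
            for j in range(m + 1):
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:
                break
        # Flip the matching along the augmenting path.
        while j0:
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    assignment = [0] * n
    for j in range(1, m + 1):
        if p[j]:
            assignment[p[j] - 1] = j - 1
    return assignment


def hungarian_schedule(counts, cores_per_node=4, local_lat=100, remote_lat=150):
    """Map threads to nodes by solving a thread-to-core-slot assignment."""
    num_slots = len(counts[0]) * cores_per_node
    cost = []
    for row in counts:
        total = sum(row)
        # Slot s belongs to node s // cores_per_node; cost is local + remote latency.
        cost.append([
            local_lat * row[s // cores_per_node]
            + remote_lat * (total - row[s // cores_per_node])
            for s in range(num_slots)
        ])
    slots = hungarian(cost)
    return {t: s // cores_per_node for t, s in enumerate(slots)}


if __name__ == "__main__":
    # Hypothetical counts for 4 threads on 2 nodes with 2 cores each.
    counts = [[90, 10], [80, 20], [70, 30], [5, 95]]
    print(hungarian_schedule(counts, cores_per_node=2))
```

Which core a thread receives within a node does not affect the cost in this model, so only the node index is returned.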

The authors implemented each algorithm as a standalone C++ program and evaluated them using three synthetic workloads (Synth1, Synth2, Synth3) designed to exhibit different patterns of remote/local DRAM accesses. The test platform consists of four sockets, each with four cores (total 16 threads). The base configuration uses a local DRAM latency of 100 cycles and a remote DRAM latency of 150 cycles; sensitivity analyses were performed with remote latencies of 200 and 300 cycles.
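To make the evaluation metric concrete, the following Python sketch computes total DRAM cycles for a given placement and the percentage reduction between two placements. It assumes a simple model in which every access costs either the local or a single remote latency, using the base values of 100 and 150 cycles; the function names and the example counts are hypothetical and are not data from the paper.

```python
def dram_cycles(counts, placement, local_lat=100, remote_lat=150):
    """Total DRAM cycles when thread t runs on node placement[t].

    counts[t][n] is assumed to hold the DRAM accesses of thread t to node n.
    """
    total = 0
    for t, row in enumerate(counts):
        local = row[placement[t]]      # accesses served by the thread's own node
        remote = sum(row) - local      # everything else crosses sockets
        total += local_lat * local + remote_lat * remote
    return total


def percent_reduction(before, after):
    """Percentage of DRAM cycles saved relative to the baseline."""
    return 100.0 * (before - after) / before


if __name__ == "__main__":
    # Hypothetical counts for 4 threads on 2 nodes.
    counts = [[90, 10], [80, 20], [70, 30], [5, 95]]
    baseline = {0: 1, 1: 1, 2: 0, 3: 0}    # a deliberately poor schedule
    optimized = {0: 0, 1: 0, 2: 1, 3: 1}
    before = dram_cycles(counts, baseline)
    after = dram_cycles(counts, optimized)
    print(before, after, round(percent_reduction(before, after), 2))
```

Raising `remote_lat` to 200 or 300 in this model widens the gap between good and poor placements, which is the effect the sensitivity analysis examines.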

Results (presented as percentage reduction in DRAM cycles) show that Algorithms 3 and 4 consistently achieve the highest savings, reaching up to 55 % reduction when remote latency is 300 cycles. Algorithm 2 also provides a solid trade‑off, delivering 30‑35 % savings with lower computational overhead. Algorithm 1, while simplest, yields the smallest improvements (≈13‑21 %). Across all workloads, the benefit diminishes as the variability of the access pattern increases (Synth1 → Synth2 → Synth3), confirming that the algorithms rely on the predictability of past behavior.

The paper positions its contribution relative to prior work. Earlier studies have explored OS scheduling policies for CPU‑bound workloads or have used page migration and replication to reduce remote accesses, but these approaches incur migration overhead and can suffer from coherence issues. In contrast, the proposed method proactively reassigns threads based on observed access patterns without moving pages, thereby avoiding migration costs. The authors also note that their approach does not yet account for other factors such as cache‑to‑cache transfers, cache affinity, or the overhead of reading performance counters, which are identified as avenues for future research.

In conclusion, the study demonstrates that modest OS‑level scheduling adjustments, guided by fine‑grained DRAM access statistics, can substantially cut remote memory latency in NUMA servers. The four algorithms provide a spectrum of options ranging from low‑complexity heuristics to optimal polynomial‑time matching, allowing system designers to select a strategy that balances implementation cost against performance gain. The work opens the door for integrating such scheduling intelligence into production kernels, potentially improving throughput and energy efficiency of data‑center servers running memory‑intensive parallel applications.
