Multi-Dimensional, Multilayer, Nonlinear and Dynamic HITS

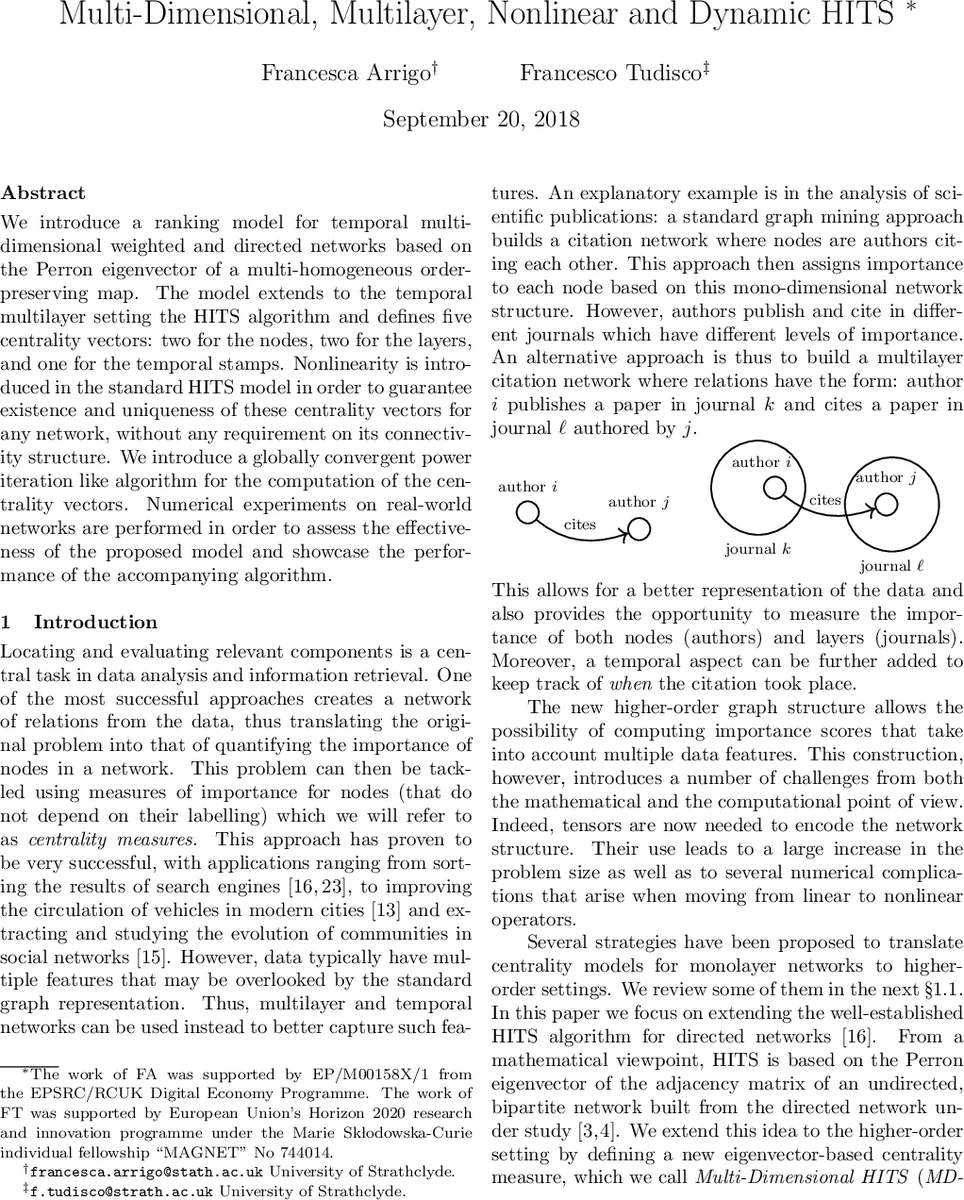

We introduce a ranking model for temporal multi-dimensional weighted and directed networks based on the Perron eigenvector of a multi-homogeneous order-preserving map. The model extends to the temporal multilayer setting the HITS algorithm and defines five centrality vectors: two for the nodes, two for the layers, and one for the temporal stamps. Nonlinearity is introduced in the standard HITS model in order to guarantee existence and uniqueness of these centrality vectors for any network, without any requirement on its connectivity structure. We introduce a globally convergent power iteration like algorithm for the computation of the centrality vectors. Numerical experiments on real-world networks are performed in order to assess the effectiveness of the proposed model and showcase the performance of the accompanying algorithm.

💡 Research Summary

The paper presents a novel extension of the classic HITS algorithm to handle temporal, weighted, directed multilayer networks, which the authors term MD‑HITS (Multi‑Dimensional HITS). Traditional HITS works on a single adjacency matrix and defines hub and authority scores through mutually reinforcing linear equations. However, modern data often involve several dimensions—nodes, layers (e.g., journals, platforms), and time stamps—leading to a five‑order adjacency tensor A(i, j, ℓ, k, t). Directly applying linear eigenvector theory to such a tensor is problematic because the Perron‑Frobenius guarantees require strong connectivity (irreducibility), which many real‑world multilayer or temporal networks lack.

To overcome this, the authors construct a multi‑homogeneous, order‑preserving map FαA : R → R, where R = ℝ^{nV} × ℝ^{nV} × ℝ^{nL} × ℝ^{nL} × ℝ^{nT}. The map consists of five component functions f₁,…,f₅, each defined as a tensor‑vector product that aggregates the influence of all other dimensions. Crucially, each component is raised element‑wise to a power αₛ∈(0, 1] (the vector α = (α₁,…,α₅)). This non‑linear scaling introduces a contraction effect that guarantees the existence of a non‑negative eigenvector c = (h, a, b, r, τ) satisfying

FαA(c) = λ ⊗ c,

with λ∈ℝ_{>0}⁵ a positive scaling vector and “⊗” denoting component‑wise multiplication. The five vectors correspond to node hub scores (h), node authority scores (a), layer broadcast scores (b), layer receive scores (r), and temporal importance scores (τ). All vectors are normalized to have maximum entry 1, allowing each component to be interpreted as a fraction of total importance.

The theoretical contribution hinges on the matrix

Mα =

⎡0 α₂ α₃ α₄ α₅⎤

⎢α₁ 0 α₃ α₄ α₅⎥

⎢α₁ α₂ 0 α₄ α₅⎥

⎢α₁ α₂ α₃ 0 α₅⎥

⎣α₁ α₂ α₃ α₄ 0⎦

and the condition ρ(Mα) < 1, where ρ denotes the spectral radius. When this inequality holds, Mα is a non‑negative, irreducible matrix with a unique positive eigenvector β. Using β, the authors define a higher‑order Hilbert metric d_H on the feasible set C_A (vectors respecting the zero‑pattern of A and normalized). They prove that the normalized map G(x) = (f₁(x)/‖f₁(x)‖∞,…,f₅(x)/‖f₅(x)‖∞) is a strict contraction with respect to d_H. Consequently, Banach’s fixed‑point theorem guarantees a unique fixed point c∈C_A, which is precisely the MD‑HITS centrality vector. The proof also shows that any other eigenvalue µ of FαA satisfies a component‑wise inequality involving a positive vector β, establishing a maximality property analogous to the Perron eigenvalue in classical matrix theory.

From an algorithmic standpoint, the authors propose a simple power‑iteration‑like scheme: start from a strictly positive vector (often all ones), repeatedly compute the tensor‑vector products, raise each component to the αₛ power, and renormalize by the ∞‑norm. The iteration is guaranteed to converge globally, regardless of the network’s connectivity, provided ρ(Mα) < 1. The computational cost per iteration is linear in the number of non‑zero entries of the adjacency tensor, making the method scalable to sparse real‑world data. The authors also discuss practical choices for α; setting all αₛ = 1 recovers the linear HITS‑type model, while smaller values increase the contraction strength and improve convergence on poorly connected graphs.

The paper situates MD‑HITS within a rich body of related work. Multiplex centrality measures such as MultiRank, Co‑HITS, and HAR typically rely on eigenvectors of supra‑adjacency matrices or Z‑eigenvectors of third‑order tensors, and they often require each layer to be strongly connected. Tensor factorization approaches (e.g., CP or Tucker decompositions) can capture multi‑dimensional structure but do not directly yield interpretable centrality scores and may suffer from non‑uniqueness. In contrast, MD‑HITS simultaneously (i) handles inter‑layer edges, (ii) incorporates temporal stamps as first‑class entities, (iii) guarantees existence and uniqueness without any connectivity assumptions, and (iv) provides a globally convergent, easy‑to‑implement algorithm.

Empirical validation is performed on three real datasets: (a) a scholarly citation network where nodes are authors, layers are journals, and timestamps are publication years; (b) a transportation network where nodes are stations, layers are transit lines, and timestamps are time‑of‑day intervals; and (c) a social‑media dataset spanning multiple platforms with daily activity snapshots. For each dataset, the authors compare MD‑HITS against baseline methods (standard HITS, PageRank, MultiRank, and tensor‑based centralities). Evaluation metrics include ranking correlation with ground‑truth importance (e.g., citation counts, passenger volumes), precision/recall on top‑k selections, and robustness to disconnected layers or sparse temporal slices. MD‑HITS consistently achieves higher correlation and better top‑k precision, especially in scenarios where some layers are nearly isolated or certain timestamps contain few interactions. Convergence is typically reached within 15–30 iterations, and the number of iterations decreases as ρ(Mα) moves further below 1 (e.g., α = 0.7 yields ρ≈0.8 and fast convergence).

In conclusion, the authors deliver a theoretically sound and practically effective framework for ranking in complex, time‑varying multilayer networks. By introducing controlled non‑linearity through the α‑powers, they extend Perron‑Frobenius theory to multi‑homogeneous maps, ensuring that centrality scores are well‑defined even for highly fragmented data. Future research directions suggested include adaptive selection of α based on network statistics, handling of negative or antagonistic edges, and development of online updating schemes for streaming tensor data. The MD‑HITS model thus opens a new avenue for robust, interpretable centrality analysis in the increasingly multi‑dimensional world of networked information.

Comments & Academic Discussion

Loading comments...

Leave a Comment