Attention as a Perspective for Learning Tempo-invariant Audio Queries

Current models for audio–sheet music retrieval via multimodal embedding space learning use convolutional neural networks with a fixed-size window for the input audio. Depending on the tempo of a query performance, this window captures more or less musical content, while notehead density in the score is largely tempo-independent. In this work we address this disparity with a soft attention mechanism, which allows the model to encode only those parts of an audio excerpt that are most relevant with respect to efficient query codes. Empirical results on classical piano music indicate that attention is beneficial for retrieval performance, and exhibits intuitively appealing behavior.

💡 Research Summary

The paper tackles a fundamental limitation of current audio‑sheet‑music retrieval systems that rely on fixed‑size audio windows. Because a fixed number of spectrogram frames contains varying amounts of musical material depending on the performance tempo, the learned cross‑modal embeddings become sensitive to tempo changes, while the corresponding sheet‑music snippets have a relatively constant note‑head density. To address this mismatch, the authors introduce a soft attention mechanism that allows the model to selectively focus on the most informative parts of an audio excerpt when constructing its embedding.

The overall architecture follows the previously proposed cross‑modal embedding framework: two convolutional pathways embed sheet‑music images and audio excerpts into a shared 32‑dimensional space, and a canonical correlation analysis (CCA) layer together with a pairwise ranking loss enforces that matching pairs are close while mismatched pairs are far apart. The novel component is an attention pathway h placed before the audio encoder g. For each spectrogram frame t, a softmax layer computes a weight a_t; the original frame is multiplied by this weight, effectively suppressing irrelevant temporal regions and amplifying salient ones. This “soft‑input‑attention” enables the network to read as much of the input as needed, regardless of the underlying tempo.
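The frame-weighting step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the function names `softmax` and `soft_input_attention` are hypothetical, and the per-frame scores are passed in directly as a stand-in for the output of the convolutional attention pathway h, whose layer configuration is not given here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def soft_input_attention(spectrogram, frame_scores):
    """Scale every spectrogram frame by its attention weight a_t.

    spectrogram:  list of T frames, each a list of frequency-bin magnitudes.
    frame_scores: list of T unnormalised scores, one per frame
                  (assumption: in the real model these come from pathway h).
    Returns (weights, weighted_spectrogram).
    """
    if len(spectrogram) != len(frame_scores):
        raise ValueError("need exactly one score per spectrogram frame")
    weights = softmax(frame_scores)  # weights sum to 1 over the time axis
    weighted = [[w * x for x in frame] for w, frame in zip(weights, spectrogram)]
    return weights, weighted


# Toy excerpt: 3 frames with 2 frequency bins each.
spec = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

# Equal scores give uniform attention; every frame is scaled by 1/3.
uniform_w, uniform_out = soft_input_attention(spec, [0.0, 0.0, 0.0])

# A dominant score concentrates almost all weight on the middle frame.
peaked_w, peaked_out = soft_input_attention(spec, [0.0, 10.0, 0.0])
```

The weighted spectrogram would then be passed to the audio encoder g. Because the weights are normalised over time, frames with low scores are attenuated rather than removed, which is what makes the attention "soft".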

Three model variants are evaluated on the MSMD dataset, a large collection of synthesized classical piano pieces with precise audio‑score alignments. (1) BL – the baseline without attention, using an 84‑frame (≈2 s) audio window; (2) BL + AT – identical architecture but with the attention module, still using 84 frames; (3) BL + AT + LC – the attention model with a larger temporal context of 168 frames (≈4 s). All models share the same sheet‑music image size (80 × 100 px) and are trained to produce 32‑dimensional embeddings.

Performance is measured with Recall@k (k = 1, 5, 25), Mean Reciprocal Rank (MRR), and Median Rank (MR). The baseline achieves R@1 = 41.4 %, R@5 = 63.8 %, R@25 = 77.2 %, MRR = 0.518, MR = 2. Adding attention (BL + AT) improves every metric (R@1 = 47.6 %, MRR = 0.571). Providing a larger temporal context together with attention (BL + AT + LC) yields the best results (R@1 = 55.5 %, MRR = 0.651, MR = 1). These gains indicate that the attention mechanism helps mitigate the tempo‑induced variability of the audio input, and that it can exploit a longer excerpt when one is available.
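For readers unfamiliar with these retrieval metrics, the sketch below shows how they are commonly computed from the rank of the correct sheet‑music snippet for each audio query. The function name `retrieval_metrics` and the toy rank list are illustrative assumptions; the numbers are not taken from the paper.

```python
from statistics import median


def retrieval_metrics(ranks, ks=(1, 5, 25)):
    """Compute Recall@k, MRR and median rank.

    ranks: 1-based rank of the correct item for each query
           (rank 1 means the correct item was retrieved first).
    Returns (recall_at_k dict, mean reciprocal rank, median rank).
    """
    if not ranks:
        raise ValueError("need at least one query")
    n = len(ranks)
    # Recall@k: fraction of queries whose correct item is in the top k.
    recall = {k: sum(1 for r in ranks if r <= k) / n for k in ks}
    # MRR: average of 1/rank over all queries.
    mrr = sum(1.0 / r for r in ranks) / n
    # MR: median of the ranks.
    mr = median(ranks)
    return recall, mrr, mr


# Toy example with five queries (made-up ranks).
toy_ranks = [1, 2, 1, 30, 4]
recall, mrr, mr = retrieval_metrics(toy_ranks)
```

For the toy ranks, two of five queries are answered at rank 1 (R@1 = 0.4), four of five within the top 5 and top 25 (R@5 = R@25 = 0.8), and the median rank is 2. Higher Recall@k and MRR are better, while a lower MR is better.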

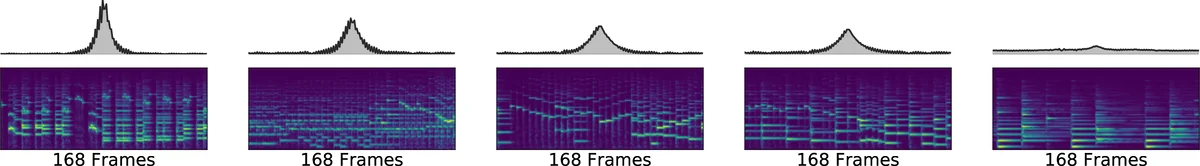

Qualitative analysis of the learned attention weights further supports the quantitative findings. Visualizations show that when the audio excerpt contains a high density of onsets (fast passages), the attention distribution becomes sharply peaked, concentrating on a short temporal segment. Conversely, for slower passages with fewer onsets, the attention spreads more evenly across the longer excerpt. This behavior aligns with the intuition that the sheet‑music snippets have roughly constant note‑head density, so the model learns to allocate its “focus” proportionally to the musical event density in the audio.
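One way to put a number on how "peaked" or "spread out" an attention distribution is would be its Shannon entropy. This measure is an assumption made here for illustration only and is not reported in the paper; the function name `attention_entropy` and the example weight vectors are hypothetical.

```python
import math


def attention_entropy(weights):
    """Shannon entropy (in nats) of a normalised attention distribution.

    Low entropy  -> weight concentrated on few frames (peaked).
    High entropy -> weight spread evenly across frames.
    """
    return -sum(w * math.log(w) for w in weights if w > 0.0)


# Made-up attention weights over four frames.
peaked = [0.97, 0.01, 0.01, 0.01]   # e.g. a fast, onset-dense excerpt
spread = [0.25, 0.25, 0.25, 0.25]   # e.g. a slow excerpt with few onsets

h_peaked = attention_entropy(peaked)
h_spread = attention_entropy(spread)
```

A uniform distribution over T frames reaches the maximum entropy log(T), so `h_spread` equals log(4) here, while `h_peaked` is much smaller. Under this assumed measure, the behavior described above would show up as lower entropy for fast passages and higher entropy for slow ones.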

The contributions of the work are threefold: (1) introducing a soft‑input‑attention layer that allows variable‑length temporal information to be processed without redesigning the whole network; (2) demonstrating that this simple addition yields substantial improvements in tempo‑invariant cross‑modal retrieval; (3) providing empirical evidence that the attention weights behave in an interpretable, musically meaningful way. The authors suggest future directions such as testing on real performance recordings, extending the attention mechanism to multi‑head or Transformer‑style architectures, and integrating the approach into real‑time retrieval systems.

In summary, by equipping a cross‑modal audio‑sheet‑music embedding model with a soft attention mechanism, the paper achieves more robust retrieval across a wide range of tempi, moving the field closer to practical, tempo‑invariant music search applications.
