Study of Automatic GPU Offloading Technology for Open IoT

IoT technologies have progressed. The Open IoT concept, which realizes various IoT services by integrating horizontally separated devices and services, has recently attracted attention. For the Open IoT era, we have proposed Tacit Computing, a technology that discovers devices holding the data users need on demand and uses them dynamically. However, existing Tacit Computing does not take performance or operation cost into account. In this paper, we therefore propose an automatic GPU offloading technology as an elemental technology of Tacit Computing. It uses a genetic algorithm to extract the loop statements suitable for offloading and thereby improve performance. We evaluate a C/C++ matrix manipulation program to verify the effectiveness of GPU offloading and confirm a performance improvement of more than 35 times within 1 hour of tuning time.

💡 Research Summary

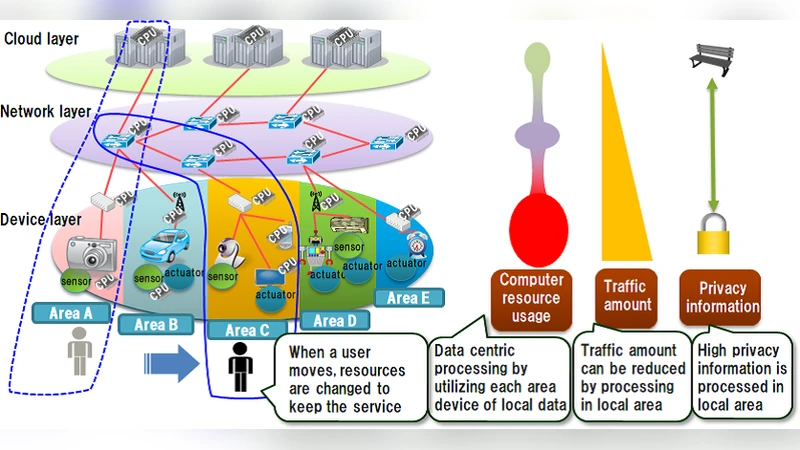

The paper addresses a critical gap in the emerging Open IoT paradigm, where heterogeneous devices and services are horizontally decoupled and dynamically composed to deliver a wide range of applications. While the authors’ previously proposed Tacit Computing framework enables on‑demand discovery of data‑rich devices and seamless device virtualization, it deliberately ignores execution performance and operational cost, leading to prohibitive CPU load for compute‑intensive tasks such as image analysis or large‑scale sensor analytics. To bridge this gap, the authors introduce an automatic GPU offloading technique that forms an essential building block for Tacit Computing.

The core contribution is a Genetic Algorithm (GA)‑driven method that automatically selects which loops in a C/C++ application should be offloaded to a GPU. The workflow proceeds as follows: (1) static source‑code analysis identifies all for‑loops and filters out those that are not parallelizable due to data dependencies, external calls, early exits, etc.; (2) each remaining loop is mapped to a binary gene (1 = offload, 0 = keep on CPU), forming a chromosome whose length equals the number of candidate loops; (3) an initial population of chromosomes is generated randomly; (4) for each chromosome, the corresponding #pragma acc kernels directives are inserted, the code is compiled with the PGI OpenACC compiler, and the resulting binary is executed on a validation machine equipped with an NVIDIA Quadro K5200 GPU. Execution time on a 2048 × 2048 matrix multiplication benchmark serves as the fitness function (fitness = 1/time). (5) Standard GA operators—roulette‑wheel selection with elite preservation, one‑point crossover (Pc = 0.9), and bit‑flip mutation (Pm = 0.05)—evolve the population over a limited number of generations (T ≤ 12). To accelerate the search, previously evaluated chromosomes are cached, avoiding redundant compilation and measurement.
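The search loop described above can be sketched in C++ as follows. This is a minimal illustration, not the authors' implementation: the function `simulated_time` and the `gain` vector are hypothetical stand-ins for the real "insert directives, compile, run, and time" step, and the names `run_ga` and `GaResult` are invented for this example. The operator settings (fitness = 1/time, Pc = 0.9, Pm = 0.05, elite preservation, result caching) follow the summary.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <map>
#include <random>
#include <vector>

// One gene per candidate loop: 1 = offload to GPU, 0 = keep on CPU.
using Chromosome = std::vector<int>;

// HYPOTHETICAL cost model replacing the real compile-and-measure step.
// gain[i] is the assumed time saved (seconds) by offloading loop i; a
// negative value models a loop whose transfer overhead exceeds its speed-up.
double simulated_time(const Chromosome& c, const std::vector<double>& gain,
                      double cpu_time) {
    double t = cpu_time;
    for (std::size_t i = 0; i < c.size(); ++i)
        if (c[i]) t -= gain[i];
    return t;
}

struct GaResult {
    Chromosome best;           // best offload pattern found
    double best_time;          // its (simulated) execution time
    std::size_t measurements;  // distinct patterns actually measured
};

GaResult run_ga(const std::vector<double>& gain, double cpu_time,
                int pop_size, int generations, unsigned seed) {
    assert(pop_size >= 2 && !gain.empty());
    const double pc = 0.9, pm = 0.05;  // crossover and mutation rates
    const std::size_t n = gain.size();
    std::mt19937 rng(seed);
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    std::map<Chromosome, double> cache;  // pattern -> measured time

    // Measure a pattern once; repeated patterns are served from the cache.
    auto measure = [&](const Chromosome& c) {
        auto it = cache.find(c);
        if (it != cache.end()) return it->second;
        double t = simulated_time(c, gain, cpu_time);
        assert(t > 0.0);  // fitness = 1/time needs a positive time
        cache.emplace(c, t);
        return t;
    };

    // Random initial population.
    std::vector<Chromosome> pop(static_cast<std::size_t>(pop_size), Chromosome(n));
    for (auto& c : pop)
        for (auto& g : c) g = unit(rng) < 0.5 ? 1 : 0;

    Chromosome best = pop[0];
    double best_time = measure(best);

    for (int gen = 0; gen < generations; ++gen) {
        std::vector<double> fitness(pop.size());
        for (std::size_t i = 0; i < pop.size(); ++i) {
            double t = measure(pop[i]);
            fitness[i] = 1.0 / t;
            if (t < best_time) { best_time = t; best = pop[i]; }
        }
        // Roulette-wheel selection: pick parents in proportion to fitness.
        std::discrete_distribution<std::size_t> roulette(fitness.begin(), fitness.end());
        std::vector<Chromosome> next;
        next.push_back(best);  // elite preservation
        while (next.size() < pop.size()) {
            Chromosome a = pop[roulette(rng)], b = pop[roulette(rng)];
            if (n > 1 && unit(rng) < pc) {  // one-point crossover
                std::uniform_int_distribution<std::size_t> cut(1, n - 1);
                auto k = static_cast<std::ptrdiff_t>(cut(rng));
                std::swap_ranges(a.begin() + k, a.end(), b.begin() + k);
            }
            for (auto& g : a)
                if (unit(rng) < pm) g ^= 1;  // bit-flip mutation
            next.push_back(a);
        }
        pop = std::move(next);
    }
    // Measure the final generation so its members can also win.
    for (const auto& c : pop) {
        double t = measure(c);
        if (t < best_time) { best_time = t; best = c; }
    }
    return {best, best_time, cache.size()};
}
```

Calling `run_ga({30.0, -5.0, 40.0, -2.0, 10.0}, 100.0, 8, 12, 42u)` searches five hypothetical loops for 12 generations. In a real setting, `simulated_time` would be replaced by a routine that writes the directives for the chosen loops, invokes the compiler, and times the resulting binary, which is why the cache matters: each avoided measurement saves a full compile-and-run cycle.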

Experimental results demonstrate that, within a total tuning time of less than one hour, the GA converges to a configuration that reduces the matrix multiplication runtime from 92.27 seconds (CPU‑only) to 2.43 seconds on the GPU, a speed‑up of roughly 38× (92.27 / 2.43 ≈ 37.97), consistent with the abstract's claim of more than 35×. Each fitness evaluation averages under two minutes, and the entire search completes in 12 generations, confirming the practicality of the approach for rapid deployment in an Open IoT setting. The authors also note that even with only 12 generations, substantial acceleration is achieved, and extending to 20 generations yields further improvements without saturation.

The paper situates this work within related literature on GPGPU programming models (CUDA, OpenCL, OpenACC) and automatic parallelization techniques, highlighting that existing compiler‑based auto‑parallelizers often neglect the overhead of CPU‑GPU data transfers and require expert tuning. By contrast, the GA‑based method systematically explores the combinatorial space of loop offloading decisions, balancing computational gain against transfer overhead.
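To make the trade-off between computation and transfer concrete, the sketch below shows what a single offloaded loop nest might look like for the matrix multiplication case. The `#pragma acc kernels` directive is the one named in the summary. The `copyin`/`copyout` data clauses and the function name `matmul` are assumptions added for illustration, to make the CPU-GPU transfers visible; the source does not state which data clauses, if any, the authors generate.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Multiply two n x n row-major matrices: c = a * b.
// With an OpenACC compiler the loop nest is a candidate for GPU execution;
// a compiler without OpenACC support ignores the pragma and runs on the CPU.
void matmul(const std::vector<double>& a, const std::vector<double>& b,
            std::vector<double>& c, int n) {
    const std::size_t elems = static_cast<std::size_t>(n) * static_cast<std::size_t>(n);
    assert(a.size() == elems && b.size() == elems && c.size() == elems);

    const double* pa = a.data();
    const double* pb = b.data();
    double* pc = c.data();

    // ASSUMED data clauses: inputs are copied to the GPU once before the
    // loops, and the result is copied back once afterwards.
    #pragma acc kernels copyin(pa[0:n*n], pb[0:n*n]) copyout(pc[0:n*n])
    for (int i = 0; i < n; ++i) {
        for (int j = 0; j < n; ++j) {
            double sum = 0.0;
            for (int k = 0; k < n; ++k)
                sum += pa[i * n + k] * pb[k * n + j];
            pc[i * n + j] = sum;
        }
    }
}
```

For a small loop, the two input copies and one output copy can cost more than the computation they accelerate, which is the kind of case where measured execution time, rather than static analysis alone, tells the search to keep the loop on the CPU.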

In conclusion, the study presents a viable, automated pathway to harness heterogeneous accelerators in Open IoT applications, reducing the expertise barrier for developers and enabling cost‑effective, high‑performance service provisioning. Future directions include refining GA parameters, extending the approach to other accelerators such as FPGAs, applying it to more complex kernels (FFT, machine‑learning workloads), and integrating the offloading engine directly into the Tacit Computing runtime for seamless, on‑the‑fly optimization.
