Video to Fully Automatic 3D Hair Model

Imagine taking a selfie video with your mobile phone and getting as output a 3D model of your head (face and 3D hair strands) that can be later used in VR, AR, and any other domain. State of the art hair reconstruction methods allow either a single photo (thus compromising 3D quality) or multiple views, but they require manual user interaction (manual hair segmentation and capture of fixed camera views that span full 360 degree). In this paper, we describe a system that can completely automatically create a reconstruction from any video (even a selfie video), and we don’t require specific views, since taking your -90 degree, 90 degree, and full back views is not feasible in a selfie capture. In the core of our system, in addition to the automatization components, hair strands are estimated and deformed in 3D (rather than 2D as in state of the art) thus enabling superior results. We provide qualitative, quantitative, and Mechanical Turk human studies that support the proposed system, and show results on a diverse variety of videos (8 different celebrity videos, 9 selfie mobile videos, spanning age, gender, hair length, type, and styling).

💡 Research Summary

The paper presents a fully automatic pipeline that reconstructs a complete 3‑D head model—including high‑fidelity hair strands—from a single selfie video captured on a mobile phone. Unlike prior work that requires multiple calibrated views or manual hair segmentation, this system works with any unconstrained video where the subject talks or moves naturally. The approach consists of four main stages.

-

Rough Head Geometry from Video (A). Each frame is first masked to remove background, then Structure‑from‑Motion (SfM) is applied to estimate per‑frame camera poses and dense depth maps. Using the silhouettes and poses, a visual hull of the head is built via shape‑from‑silhouette. A probability of hair presence is accumulated on each hull vertex across frames; vertices with high probability (>0.5) define a high‑confidence hair region. Frames with severe motion blur are discarded based on the area of the detected hair mask.

-

Automatic Hair Segmentation and Direction Estimation (B). Two convolutional networks are trained: one for pixel‑wise hair/skin segmentation, the other for hair‑direction classification into eight angular bins covering 0‑2π. The direction classifier resolves the orientation ambiguity of the 2‑D orientation maps obtained by filtering each frame with a bank of oriented filters. Tracing on these maps yields 2‑D hair strands, which are later used for 3‑D reconstruction.

-

Face and Full‑Head Reconstruction (C). Segmented frames are processed with IntraFace to obtain 49 inner facial landmarks per frame. The frame closest to a frontal pose is fed to a 3‑D Morphable Model (3‑DMM) based on the Basel Face Model, producing a neutral face geometry and texture. A generic full‑head mesh from the FaceWarehouse dataset is aligned to the face model, providing a complete head scaffold that includes the scalp and ears.

-

3‑D Strand Generation and Database Matching (D). Depth maps and the 2‑D strands are combined to lift the strands into 3‑D space, forming an initial strand set. This set is used to query a large synthetic hair‑style database. Matching proceeds in two steps: (i) Global deformation aligns the coarse mesh of the retrieved hairstyle to the visual hull, ensuring the overall shape fits the observed head; (ii) Local deformation refines each strand to better follow the input 2‑D strands, capturing personal details. The final hair texture is extracted from the video frames and applied to the refined strands. The final output is a unified head model comprising the 3‑D face, scalp, and detailed hair strands.

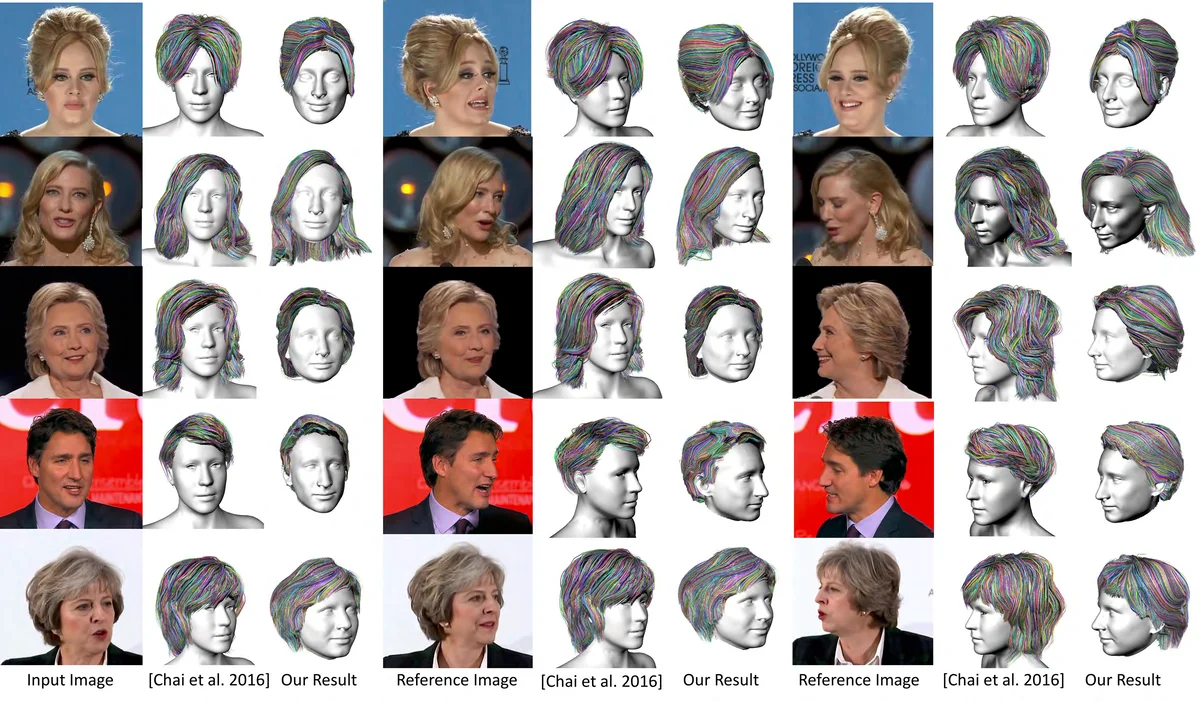

Evaluation. The authors test the system on eight celebrity videos and nine everyday selfie videos, covering a wide range of ages, genders, hair lengths, and styles. Quantitatively, the Intersection‑over‑Union (IoU) of the reconstructed hair region versus ground‑truth masks averages 80 %, compared to 60 % for the state‑of‑the‑art four‑view method (Zhang et al. 2017). Human perception studies on Amazon Mechanical Turk show a preference for the proposed method over the four‑view baseline in 72.4 % of cases and over single‑view baselines in 90.8 % of cases. The full pipeline runs in approximately 5–10 minutes on a modern GPU, requiring no user interaction.

Contributions and Limitations. The paper’s main contributions are: (1) Demonstrating that a single, unconstrained selfie video is sufficient for high‑quality 3‑D hair reconstruction; (2) Introducing a 3‑D strand‑based matching and deformation framework that outperforms 2‑D‑only approaches; (3) Leveraging a hair‑style database to compensate for missing view coverage, enabling reconstruction even when the video spans less than the full ±90° azimuth range. Limitations include reliance on relatively static head motion (large head rotations or extreme lighting can degrade performance) and the need for a curated synthetic hair database. Future work could address dynamic hair motion, improve robustness to challenging illumination, and expand the database with real captured hairstyles.

Comments & Academic Discussion

Loading comments...

Leave a Comment