Hybrid Job-driven Scheduling for Virtual MapReduce Clusters

It is cost-efficient for a tenant with a limited budget to establish a virtual MapReduce cluster by renting multiple virtual private servers (VPSs) from a VPS provider. To provide an appropriate scheduling scheme for this type of computing environment, we propose in this paper a hybrid job-driven scheduling scheme (JoSS for short) from a tenant’s perspective. JoSS provides not only job level scheduling, but also map-task level scheduling and reduce-task level scheduling. JoSS classifies MapReduce jobs based on job scale and job type and designs an appropriate scheduling policy to schedule each class of jobs. The goal is to improve data locality for both map tasks and reduce tasks, avoid job starvation, and improve job execution performance. Two variations of JoSS are further introduced to separately achieve a better map-data locality and a faster task assignment. We conduct extensive experiments to evaluate and compare the two variations with current scheduling algorithms supported by Hadoop. The results show that the two variations outperform the other tested algorithms in terms of map-data locality, reduce-data locality, and network overhead without incurring significant overhead. In addition, the two variations are separately suitable for different MapReduce-workload scenarios and provide the best job performance among all tested algorithms.

💡 Research Summary

The paper addresses the problem of efficiently scheduling MapReduce jobs in a virtual cluster built by renting multiple virtual private servers (VPSs) from a provider, a scenario common for users with limited budgets. In such a setting the tenant only knows the IP address and the datacenter (city) of each VPS; physical rack or node information is hidden. Consequently, traditional Hadoop schedulers that aim for node‑locality or rack‑locality cannot be directly applied.

To overcome this, the authors propose JoSS (Hybrid Job‑driven Scheduling), a three‑level scheduler that operates at the job, map‑task, and reduce‑task levels. JoSS first classifies each job by scale (large vs. small) based on its input size relative to the average datacenter capacity, and then further classifies small jobs by type (map‑heavy vs. reduce‑heavy) using the ratio of reduce‑input size to map‑input size. A formal proof is provided to determine the optimal threshold for this ratio.
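The two-step classification described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the `Job` fields, the function name `classify`, and the strict `>` comparisons are assumptions, and `ratio_threshold` is left as a parameter because the summary does not state the value derived in the paper.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    map_input_bytes: int     # total size of the job's input data
    reduce_input_bytes: int  # (estimated) size of the data shuffled to reducers


def classify(job, avg_datacenter_capacity_bytes, ratio_threshold):
    """Return 'large', 'small-map-heavy', or 'small-reduce-heavy'.

    Assumption: a job is 'large' when its input exceeds the average
    datacenter capacity, and a small job is 'reduce-heavy' when its
    reduce-input / map-input ratio exceeds the threshold.
    """
    if job.map_input_bytes > avg_datacenter_capacity_bytes:
        return "large"
    if job.map_input_bytes == 0:
        ratio = 0.0  # avoid division by zero for empty inputs
    else:
        ratio = job.reduce_input_bytes / job.map_input_bytes
    return "small-reduce-heavy" if ratio > ratio_threshold else "small-map-heavy"
```

Passing the threshold in as an argument keeps the sketch independent of the specific value proved optimal in the paper.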

For each class a dedicated policy is applied:

- Large jobs are distributed across datacenters in a round‑robin fashion to avoid starvation and to balance load.

- Map‑heavy small jobs are placed so that as many map tasks as possible achieve VPS‑locality (the map task runs on the same VPS that stores its input block).

- Reduce‑heavy small jobs are scheduled to minimize inter‑datacenter shuffle traffic by locating reduce tasks in the datacenter where the majority of their map outputs reside, thereby improving reduce‑data locality.
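The three policies above could look roughly like this in code. The helper names and data shapes are hypothetical, and the reduce placement picks the datacenter with the largest share of map output, which is only an approximation of "the majority" as described in the summary.

```python
from itertools import cycle


def round_robin_datacenters(datacenters):
    """Large jobs: yield datacenters in an endless round-robin order."""
    return cycle(datacenters)


def pick_map_vps(replica_vps, idle_vps):
    """Map-heavy small jobs: prefer an idle VPS that stores the input block.

    Returns None when no replica holder is idle, leaving the fallback
    choice to the caller.
    """
    for vps in replica_vps:
        if vps in idle_vps:
            return vps
    return None


def pick_reduce_datacenter(map_output_bytes_by_dc):
    """Reduce-heavy small jobs: choose the datacenter holding the most map output.

    Ties are broken by datacenter name so the result is deterministic.
    """
    return max(sorted(map_output_bytes_by_dc), key=map_output_bytes_by_dc.get)
```

For example, `pick_reduce_datacenter({"dc1": 10, "dc2": 30})` selects `"dc2"`, so that only the 10 units stored in `dc1` would have to cross datacenters during the shuffle.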

Two variants of JoSS are introduced to resolve conflicting objectives:

- JoSS‑T (Task‑fast) simplifies queue management and adopts a “first‑fit” assignment to accelerate task dispatch, reducing scheduling latency.

- JoSS‑J (VPS‑join) employs a more sophisticated matching algorithm that maximizes VPS‑locality for both map and reduce tasks, at the cost of slightly higher scheduling overhead.
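The trade-off between the two variants can be illustrated with a toy comparison. This is not the paper's algorithm: `first_fit` and `locality_first` are hypothetical stand-ins, with the former taking the head of the queue in constant time and the latter scanning the queue for a task whose input is stored on the requesting VPS.

```python
def first_fit(pending_tasks, vps):
    """Fast dispatch: hand out the first pending task, ignoring data placement."""
    return pending_tasks[0] if pending_tasks else None


def locality_first(pending_tasks, vps, replicas):
    """Locality-oriented dispatch: prefer a task whose input block is on `vps`.

    `replicas` maps a task id to the set of VPSs storing its input block.
    Falls back to the first pending task when nothing is local.
    """
    for task in pending_tasks:
        if vps in replicas.get(task, ()):
            return task
    return pending_tasks[0] if pending_tasks else None
```

The scan in `locality_first` costs time proportional to the queue length, which mirrors the higher scheduling overhead that the summary attributes to the locality-maximizing variant.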

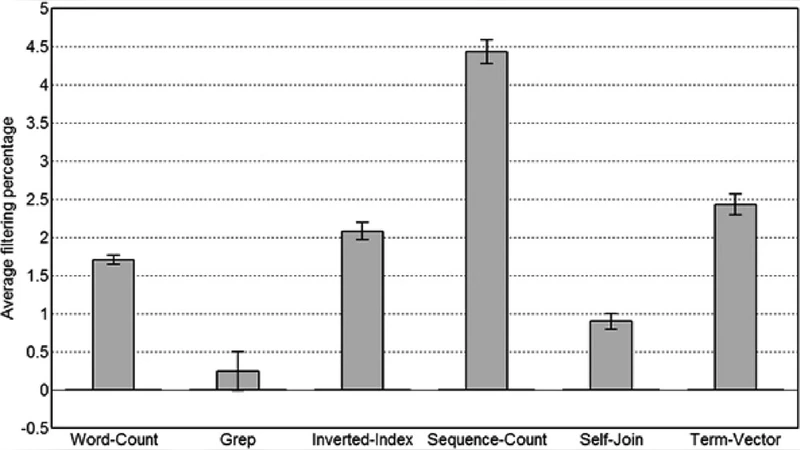

Both variants are implemented on Hadoop‑0.20.2 and evaluated against three native Hadoop schedulers: FIFO, Fair, and Capacity. Experiments use two synthetic workloads (one map‑dominant, one reduce‑dominant) and clusters composed of 2–4 geographically distributed datacenters, each hosting multiple VPSs with one map slot and one reduce slot. Metrics include map‑locality, reduce‑locality, inter‑datacenter network traffic, job makespan, and load balance.
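Two of the metrics listed above can be computed from simple logs, as in the sketch below. The record formats are assumptions made for illustration; the summary does not describe how the paper actually collects these measurements.

```python
def map_locality(assignments):
    """Fraction of map tasks that ran on a VPS storing their input block.

    `assignments` is a list of (vps_running_task, set_of_vps_holding_block).
    """
    if not assignments:
        return 0.0
    local = sum(1 for vps, holders in assignments if vps in holders)
    return local / len(assignments)


def inter_datacenter_traffic(transfers):
    """Total bytes moved between different datacenters.

    `transfers` is a list of (source_dc, destination_dc, num_bytes).
    """
    return sum(n for src, dst, n in transfers if src != dst)
```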

Results show that JoSS‑T reduces task‑assignment delay, achieving up to 12% shorter job completion times compared with the baseline schedulers, while JoSS‑J attains VPS‑locality above 85% and cuts inter‑datacenter shuffle traffic by more than 30%. Both variants avoid job starvation and maintain balanced resource utilization across datacenters.

The contributions are: (1) a tenant‑centric scheduling framework that simultaneously optimizes map‑ and reduce‑data locality in virtual clusters; (2) a rigorous classification scheme with provably optimal thresholds; (3) two complementary variants that prioritize either fast dispatch or maximal locality; (4) extensive empirical validation showing superior performance over existing Hadoop schedulers.

Limitations include the assumption of a single map and a single reduce slot per VPS, static data replication, and lack of direct physical topology information. Future work is suggested to extend JoSS to multi‑slot VPSs, incorporate dynamic replication and autoscaling, and explore cooperative schemes with cloud providers that expose richer topology data for even finer‑grained locality optimization.
