The HyperKron Graph Model for higher-order features

Graph models have long been used in lieu of real data, which can be expensive and hard to come by. A common class of models constructs a matrix of probabilities and samples an adjacency matrix by flipping a weighted coin for each entry. Examples include the Erdős-Rényi model, the Chung-Lu model, and the Kronecker model. Here we present the HyperKron Graph model: an extension of the Kronecker model, but with a distribution over hyperedges. We prove that we can efficiently generate graphs from this model in time proportional to the number of edges times a small log factor, and find that in practice the runtime is linear with respect to the number of edges. We illustrate a number of useful features of the HyperKron model, including non-trivial clustering and highly skewed degree distributions. Finally, we fit the HyperKron model to real-world networks and demonstrate the model’s flexibility with a complex application of the HyperKron model to networks with coherent feed-forward loops.

💡 Research Summary

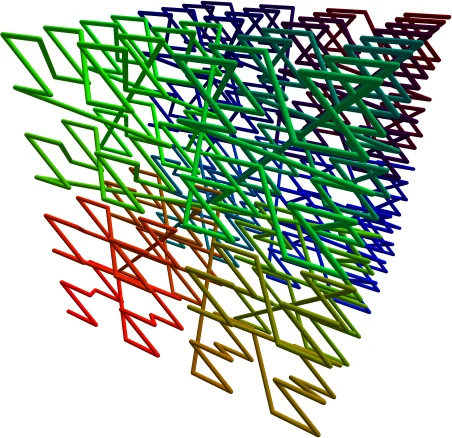

The paper introduces the HyperKron graph model, an extension of the classic Kronecker graph model designed to capture higher‑order structures such as motifs and feed‑forward loops. A traditional Kronecker model repeatedly Kronecker‑multiplies a small probability matrix (the initiator) and then samples each entry of the result independently; HyperKron replaces the matrix with a three‑dimensional n × n × n probability tensor. Taking the r‑fold Kronecker product of this initiator tensor yields a large nʳ × nʳ × nʳ tensor of hyper‑edge probabilities, where each entry (i, j, k) corresponds to a hyper‑edge on three vertices. The model then maps each sampled hyper‑edge to a predefined 3‑node motif, most commonly a triangle, and thereby inserts up to three undirected edges into the final simple graph (duplicate edges are merged).
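To make the definition concrete, here is a minimal O(N³) reference sampler for tiny instances. It is a sketch under our own assumptions, not the authors' code: the initiator is a nested list `T[a][b][c]`, every hyper‑edge becomes an undirected triangle with self‑loops dropped, and the helper names (`kron_power_entry`, `triangle_motif`, `naive_hyperkron`) and the initiator values are hypothetical.

```python
import itertools
import random


def kron_power_entry(T, n, r, i, j, k):
    """Probability of hyper-edge (i, j, k) in the r-fold Kronecker power of
    the n x n x n initiator T (a nested list indexed T[a][b][c])."""
    p = 1.0
    for _ in range(r):
        # The same base-n digit position of i, j and k selects one initiator
        # entry per Kronecker level; the entry is the product over levels.
        p *= T[i % n][j % n][k % n]
        i, j, k = i // n, j // n, k // n
    return p


def triangle_motif(i, j, k):
    """Undirected triangle on {i, j, k}; pairs with a repeated vertex are
    dropped, so a hyper-edge yields at most three edges."""
    pairs = ((i, j), (j, k), (i, k))
    return {(min(u, v), max(u, v)) for u, v in pairs if u != v}


def naive_hyperkron(T, n, r, seed=None):
    """O(N^3) reference sampler (N = n**r): one weighted coin per entry."""
    rng = random.Random(seed)
    N = n ** r
    edges = set()  # a set merges duplicate edges from different hyper-edges
    for i, j, k in itertools.product(range(N), repeat=3):
        if rng.random() < kron_power_entry(T, n, r, i, j, k):
            edges |= triangle_motif(i, j, k)
    return N, edges


# Hypothetical symmetric 2 x 2 x 2 initiator, r = 3, so N = 8 vertices.
T = [[[0.9, 0.4], [0.4, 0.2]],
     [[0.4, 0.2], [0.2, 0.1]]]
N, edges = naive_hyperkron(T, n=2, r=3, seed=1)
print(N, len(edges))
```

This brute-force loop is exactly the cost the efficient algorithm avoids, but it is useful as a correctness baseline for small r.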

A naive implementation flips one coin for each of the N³ tensor entries (N = nʳ), which takes O(N³) time and is infeasible for realistic network sizes. The authors therefore develop an efficient sampling algorithm that exploits the fact that many entries of the Kronecker‑powered tensor share identical probability values. Each set of entries with a common probability is treated as an Erdős‑Rényi block, since every entry in it is an independent coin with the same bias. Within each block, hyper‑edges are generated using a “grass‑hopping” technique: a geometric random variable determines the gap to the next successful entry, so the work is proportional to the number of edges rather than the block size.
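Grass-hopping inside one Erdős-Rényi block can be sketched as follows. It is a minimal illustration, not the authors' code: the block is treated as a run of `block_size` positions numbered from 0, each succeeding independently with probability p, and the name `grass_hop` is hypothetical. Turning each returned position into an (i, j, k) coordinate is a separate step.

```python
import math
import random


def grass_hop(block_size, p, rng):
    """Positions in [0, block_size) whose independent Bernoulli(p) coin comes
    up heads, found by jumping straight from one success to the next."""
    if p <= 0.0:
        return []
    if p >= 1.0:
        return list(range(block_size))
    log_q = math.log1p(-p)  # log(1 - p), accurate even for tiny p
    hits, pos = [], -1
    while True:
        # Failures before the next success ~ Geometric(p), drawn by inverting
        # its CDF with a uniform u in (0, 1].
        u = 1.0 - rng.random()
        pos += 1 + math.floor(math.log(u) / log_q)
        if pos >= block_size:
            return hits
        hits.append(pos)


rng = random.Random(42)
hits = grass_hop(block_size=10**6, p=1e-4, rng=rng)
print(len(hits))  # expected count is block_size * p = 100
```

The loop runs once per success plus one final overshoot, so its cost tracks the number of sampled hyper-edges rather than `block_size`.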

The second technical ingredient is a mapping from block indices to actual tensor coordinates. The authors use a Morton (Z‑order) code to interleave the three dimensions, allowing a fast conversion from a one‑dimensional index (derived from the multiset representation of the block) to the three‑dimensional coordinates (i, j, k) of a hyper‑edge. This requires unranking multiset permutations, which the paper details. The number of distinct probability values in the tensor is bounded by the multichoose count C(n³ + r − 1, r), the number of ways to choose r initiator entries with repetition. For a fixed initiator size n this count is at most (r + 1)^(n³ − 1), a polynomial in r = log_n N and hence polylogarithmic in N, whereas the tensor has N³ = n^(3r) entries. The number of Erdős‑Rényi blocks is therefore negligible compared with the size of the tensor. Consequently, the overall expected runtime is O(m log N) and empirically O(m), where m is the number of edges in the output graph.
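The index bookkeeping can be sketched as below. The conventions are our own assumptions and may differ from the paper's: initiator entries are numbered a = i·n² + j·n + k, an Erdős‑Rényi region is described by `counts[a]`, the number of Kronecker levels that use entry a, and positions inside a region are ordered lexicographically. All function names are hypothetical.

```python
from math import comb, factorial


def num_regions(n, r):
    """Multisets of r initiator entries chosen from n**3: C(n^3 + r - 1, r)."""
    return comb(n ** 3 + r - 1, r)


def num_perms(counts):
    """Orderings of a multiset where letter a occurs counts[a] times."""
    total = factorial(sum(counts))
    for c in counts:
        total //= factorial(c)
    return total


def unrank_multiset_perm(counts, rank):
    """The rank-th (0-based, lexicographic) ordering of the multiset."""
    counts = list(counts)
    seq = []
    for _ in range(sum(counts)):
        for a, c in enumerate(counts):
            if c == 0:
                continue
            counts[a] -= 1
            block = num_perms(counts)  # orderings that start with letter a
            if rank < block:
                seq.append(a)
                break
            rank -= block
            counts[a] += 1
    return seq


def morton_decode(seq, n):
    """Split each base-n^3 letter a = i_t*n^2 + j_t*n + k_t into the digits of
    i, j and k (most significant Kronecker level first)."""
    i = j = k = 0
    for a in seq:
        i = i * n + a // (n * n)
        j = j * n + (a // n) % n
        k = k * n + a % n
    return i, j, k


# n = 2, r = 3: a region where initiator entry 5 = (1,0,1) is used twice and
# entry 0 = (0,0,0) once; it contains 3!/(2!1!) = 3 tensor entries.
counts = [0] * 8
counts[5], counts[0] = 2, 1
for rank in range(num_perms(counts)):
    print(rank, morton_decode(unrank_multiset_perm(counts, rank), n=2))
```

Combined with a grass-hopping sampler, each successful position inside a region would be passed to `unrank_multiset_perm` and then `morton_decode` to obtain a hyper-edge. Every entry of a region has the same probability because it multiplies the same r initiator entries, only in a different order.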

The authors provide a theoretical analysis of several graph properties. They derive the expected number of hyper‑edges that share a given ordinary edge, which leads to closed‑form estimates of the average degree and clustering coefficient for sparse graphs. They also show how the degree distribution inherits the heavy‑tailed nature of the Kronecker model, while the triangle‑based motif insertion yields significantly higher transitivity than standard Kronecker graphs.
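The paper's closed-form estimates concern hyper‑edges that share an edge; a simpler consequence of the Kronecker structure already bounds the average degree, and it can be checked numerically. Because the entries of a Kronecker product sum to the product of the factors' sums, the expected number of sampled hyper‑edges is (Σ T)^r. Each hyper‑edge contributes at most three distinct edges, so E[m] ≤ 3(Σ T)^r and the expected average degree 2E[m]/N is at most 6(Σ T / n)^r. This is a sketch under our own assumptions, not the paper's derivation; the function names and initiator values are hypothetical.

```python
import itertools


def initiator_sum(T):
    """Sum of all entries of the initiator tensor."""
    return sum(v for plane in T for row in plane for v in row)


def expected_hyperedges(T, r):
    """E[#hyper-edges] = sum of the r-fold Kronecker power = (sum of T)^r."""
    return initiator_sum(T) ** r


def brute_force_hyperedges(T, n, r):
    """Same quantity by summing every entry of the power (O(n^(3r)) work)."""
    total = 0.0
    # One (a, b, c) triple of base-n digits per Kronecker level.
    for digits in itertools.product(range(n), repeat=3 * r):
        p = 1.0
        for t in range(r):
            a, b, c = digits[3 * t: 3 * t + 3]
            p *= T[a][b][c]
        total += p
    return total


def avg_degree_upper_bound(T, n, r):
    """Each hyper-edge adds at most 3 distinct edges, so m <= 3H and the
    expected average degree 2m/N is at most 6 * (sum of T / n)^r."""
    return 6.0 * (initiator_sum(T) / n) ** r


T = [[[0.9, 0.3], [0.3, 0.2]],
     [[0.3, 0.2], [0.2, 0.1]]]
print(expected_hyperedges(T, 3), brute_force_hyperedges(T, 2, 3))
print(avg_degree_upper_bound(T, 2, 3))
```

The bound grows like ((Σ T)/n)^r, so it stays bounded as r grows only when the initiator's entry sum is at most n.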

Empirical evaluation fits the HyperKron model to several real‑world networks (social, communication, and biological graphs). Using a simple 2 × 2 × 2 symmetric initiator tensor and r = 7 (producing N = 2⁷ = 128 vertices), the generated graphs exhibit clustering coefficients comparable to the real data, while preserving a power‑law‑like degree distribution. The authors note that the model does not reproduce higher‑order structures involving four‑node cliques, highlighting its focus on 3‑node motifs.
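A fully symmetric 2 × 2 × 2 initiator has only four free parameters, one for each multiset of index values {0,0,0}, {0,0,1}, {0,1,1}, {1,1,1}, so the entry depends only on how many of i, j, k equal 1. The sketch below builds such a tensor and checks its symmetry; the function names and the parameter values are hypothetical and are not the fitted values from the paper.

```python
import itertools


def symmetric_initiator(p0, p1, p2, p3):
    """2 x 2 x 2 tensor invariant under any permutation of (i, j, k): the
    entry depends only on how many of the three indices equal 1."""
    vals = (p0, p1, p2, p3)
    return [[[vals[i + j + k] for k in range(2)] for j in range(2)]
            for i in range(2)]


def is_symmetric(T):
    """Check T[i][j][k] == T[a][b][c] for every permutation (a, b, c)."""
    n = len(T)
    return all(T[i][j][k] == T[a][b][c]
               for i, j, k in itertools.product(range(n), repeat=3)
               for a, b, c in itertools.permutations((i, j, k)))


# Hypothetical parameter values, for illustration only.
T = symmetric_initiator(0.95, 0.45, 0.25, 0.05)
r = 7
print(is_symmetric(T), 2 ** r)  # N = 2**r vertices
```

Fitting then amounts to searching over these four numbers rather than all eight tensor entries.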

A key strength of HyperKron is its flexibility. By changing the motif associated with each hyper‑edge, the model can generate directed, signed, or more complex feed‑forward patterns. The paper demonstrates this by fitting coherent feed‑forward loops in a transcriptional regulatory network, showing that the same probabilistic framework can capture a variety of higher‑order interactions without redesigning the underlying generator.
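The flexibility described above amounts to swapping the function that turns a hyper‑edge into graph edges. In the sketch below, motifs are templates over the three hyper‑edge slots (X, Y, Z) = (i, j, k), signs are ±1, and the template and function names are hypothetical; the paper's exact encoding of feed‑forward loops may differ. The coherence check uses the standard definition: a feed‑forward loop is coherent when the sign of the direct X→Z regulation matches the overall sign of the indirect path X→Y→Z.

```python
# A motif template lists edges between hyper-edge slots 0, 1, 2 = (X, Y, Z)
# as (source_slot, target_slot, sign) with sign +1 (activation) or -1.
TRIANGLE = [(0, 1, +1), (1, 2, +1), (0, 2, +1)]
COHERENT_FFL_ALL_ACTIVATING = [(0, 1, +1), (1, 2, +1), (0, 2, +1)]
INCOHERENT_FFL_EXAMPLE = [(0, 1, +1), (1, 2, +1), (0, 2, -1)]


def apply_motif(hyperedge, template, directed):
    """Instantiate a template on hyper-edge (i, j, k); edges whose endpoints
    coincide are dropped, and undirected edges are stored as (min, max)."""
    edges = set()
    for a, b, sign in template:
        u, v = hyperedge[a], hyperedge[b]
        if u == v:
            continue
        if not directed and u > v:
            u, v = v, u
        edges.add((u, v, sign))
    return edges


def is_coherent_ffl(template):
    """Coherent FFL: the sign of the direct X->Z edge equals the product of
    the signs along the indirect path X->Y->Z."""
    sign = {(a, b): s for a, b, s in template}
    return sign[(0, 2)] == sign[(0, 1)] * sign[(1, 2)]


print(apply_motif((4, 7, 2), TRIANGLE, directed=False))
print(apply_motif((4, 7, 2), COHERENT_FFL_ALL_ACTIVATING, directed=True))
```

The sampler itself is unchanged; only the template passed to `apply_motif` and the directedness flag differ between the triangle and feed‑forward‑loop variants.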

In summary, the HyperKron model offers a mathematically tractable, computationally efficient method for synthesizing graphs that embed specific higher‑order motifs. Its combination of Kronecker‑based probability scaling, Erdős‑Rényi block sampling, and Morton‑code indexing yields near‑linear‑time generation (O(m log N) expected, linear in practice) while preserving realistic degree heterogeneity and clustering. This makes HyperKron a valuable tool for benchmarking graph algorithms, performing hypothesis testing with realistic null models, and exploring the impact of motif‑level structure on network dynamics.
