Dynamic Bayesian Games for Adversarial and Defensive Cyber Deception

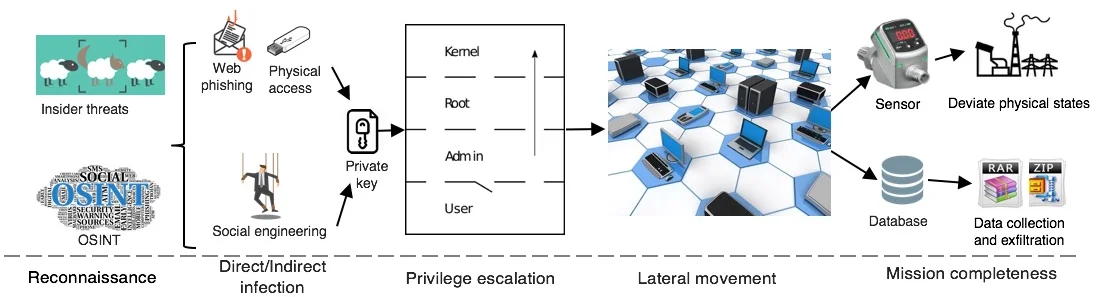

The pervasive integration of information and communications technologies (ICTs) brings efficiency, but it also brings security challenges: it makes cyber-physical systems vulnerable to targeted attacks that are deceptive, persistent, adaptive, and strategic. Attacks such as Stuxnet, Dyn, and the WannaCry ransomware have shown that off-the-shelf defensive methods, including firewalls and intrusion detection systems, are insufficient. Hence, it is essential to design up-to-date security mechanisms that can mitigate risk even after a successful infiltration and in the face of strategic responses from sophisticated attackers. In this chapter, we use game theory to model competitive interactions between defenders and attackers. First, we use the static Bayesian game to capture the stealthy and deceptive characteristics of the attacker. A random variable called the *type* characterizes a user's nature and objectives, e.g., a legitimate user or an attacker. The realization of the user's type is private information because of cyber deception. Then, we extend the one-shot simultaneous interaction to a one-shot interaction with an asymmetric information structure, i.e., the signaling game. Finally, we investigate the multi-stage transition through a case study of Advanced Persistent Threats (APTs) and the Tennessee Eastman (TE) process. Two-sided incomplete information is introduced because the defender can adopt defensive deception techniques such as honey files and honeypots to create sufficient uncertainty for the attacker. Throughout this chapter, the analysis of the Nash equilibrium (NE), Bayesian Nash equilibrium (BNE), and perfect Bayesian Nash equilibrium (PBNE) enables the prediction of the adversary's policy and the design of proactive and strategic defenses to deter attackers and mitigate losses.

💡 Research Summary

The chapter addresses the growing inadequacy of conventional security mechanisms—such as firewalls and intrusion detection systems—against sophisticated, deceptive, and persistent cyber‑physical attacks exemplified by Stuxnet, Dyn, and WannaCry. To capture the strategic interaction between attackers and defenders under information asymmetry, the authors employ a hierarchy of game‑theoretic models: static complete‑information games, static Bayesian games, signaling (one‑sided incomplete‑information) games, and multi‑stage dynamic Bayesian games with two‑sided incomplete information.

In the baseline static game, the defender (P₁) and attacker (P₂) each choose actions from finite sets A₁ and A₂, with payoffs given by matrices J₁ and J₂. The Nash equilibrium (NE) analysis shows that mixed strategies are required when no pure-strategy equilibrium exists. This model, however, assumes that both players know each other's type and payoff structure, which is unrealistic in modern cyber environments.
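As a rough illustration of why mixing can be necessary, the sketch below solves a 2×2 bimatrix game through the standard indifference conditions. The payoff matrices, the action labels (monitor/idle, attack/wait), and the function name `mixed_ne_2x2` are hypothetical and are not taken from the chapter.

```python
from fractions import Fraction


def mixed_ne_2x2(J1, J2):
    """Interior mixed NE of a 2x2 bimatrix game via indifference conditions.

    J1[i][j] and J2[i][j] are the payoffs of P1 and P2 when P1 plays row i
    and P2 plays column j. Returns (p, q), where p = Pr[P1 plays row 0] and
    q = Pr[P2 plays column 0], or None if no interior mixed NE exists.
    """
    # P1's mix p must make P2 indifferent between the two columns.
    denom_p = J2[0][0] - J2[0][1] - J2[1][0] + J2[1][1]
    # P2's mix q must make P1 indifferent between the two rows.
    denom_q = J1[0][0] - J1[0][1] - J1[1][0] + J1[1][1]
    if denom_p == 0 or denom_q == 0:
        return None
    p = Fraction(J2[1][1] - J2[1][0], denom_p)
    q = Fraction(J1[1][1] - J1[0][1], denom_q)
    if not (0 < p < 1 and 0 < q < 1):
        return None
    return p, q


# Hypothetical payoffs. Rows: P1 in {monitor, idle}; columns: P2 in {attack, wait}.
J1 = [[2, -1],
      [-4, 0]]
J2 = [[-2, 0],
      [4, 0]]

p, q = mixed_ne_2x2(J1, J2)
print(p, q)  # 2/3 1/7
```

With these illustrative numbers every pure profile gives one player a profitable deviation, so the only equilibrium has the defender monitoring with probability 2/3 and the attacker attacking with probability 1/7.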

To model hidden user identities, the authors introduce a random “type” variable θ₂ ∈ {θ_b (adversarial), θ_g (legitimate)} for the user P₂. The user knows his own type and selects a type‑dependent mixed strategy σ̄₂(a₂,θ₂). The defender knows only a prior distribution b₀¹ over the types. The defender's expected utility is weighted by this prior, which leads to the Bayesian Nash equilibrium (BNE). BNE captures optimal defender behavior when only probabilistic information about the user’s nature is available.
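A minimal sketch of the prior-weighted reasoning is given below. It enumerates pure-strategy BNE of a tiny static Bayesian game by brute force. The action names, payoff tables, and prior values are all assumptions made for illustration, and the chapter's own analysis also covers mixed strategies, which this sketch does not.

```python
from itertools import product

TYPES = ("theta_b", "theta_g")   # adversarial, legitimate
A1 = ("allow", "block")          # hypothetical defender actions
A2 = ("normal", "aggressive")    # hypothetical user actions

# Hypothetical payoffs, indexed as J[theta][(a1, a2)].
J1 = {
    "theta_g": {("allow", "normal"): 2, ("allow", "aggressive"): 1,
                ("block", "normal"): -2, ("block", "aggressive"): -2},
    "theta_b": {("allow", "normal"): 0, ("allow", "aggressive"): -5,
                ("block", "normal"): 0, ("block", "aggressive"): 2},
}
J2 = {
    "theta_g": {("allow", "normal"): 2, ("allow", "aggressive"): 0,
                ("block", "normal"): -1, ("block", "aggressive"): -2},
    "theta_b": {("allow", "normal"): 0, ("allow", "aggressive"): 3,
                ("block", "normal"): 0, ("block", "aggressive"): -1},
}


def defender_utility(a1, strategy2, prior):
    """Defender's expected utility: type-dependent payoffs weighted by the prior."""
    return sum(prior[t] * J1[t][(a1, strategy2[t])] for t in TYPES)


def pure_bne(prior):
    """Enumerate pure-strategy BNE as (a1, {type: a2}) pairs."""
    equilibria = []
    for a1 in A1:
        for choice in product(A2, repeat=len(TYPES)):
            s2 = dict(zip(TYPES, choice))
            # Every type of the user must best-respond to the defender's action.
            user_ok = all(
                J2[t][(a1, s2[t])] >= max(J2[t][(a1, a)] for a in A2)
                for t in TYPES
            )
            # The defender must best-respond in expectation over the types.
            defender_ok = defender_utility(a1, s2, prior) >= max(
                defender_utility(a, s2, prior) for a in A1
            )
            if user_ok and defender_ok:
                equilibria.append((a1, s2))
    return equilibria


print(pure_bne({"theta_b": 0.2, "theta_g": 0.8}))
print(pure_bne({"theta_b": 0.5, "theta_g": 0.5}))  # [] (no pure BNE)
```

With these made-up numbers, a prior of 0.2 on the adversarial type yields a single pure BNE in which the defender allows traffic. Raising the prior to 0.5 leaves no pure-strategy BNE, which hints at how the equilibrium depends on the defender's prior.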

The analysis then proceeds to a signaling game, where the attacker’s action serves as a signal observed by the defender. The defender updates his belief about the attacker’s type using Bayes’ rule and selects a defensive action accordingly. The equilibrium concept used is the Perfect Bayesian Nash equilibrium (PBNE), which requires consistency between beliefs, signaling strategies, and best‑response actions. This framework is particularly useful for modeling “sacrificial attacks” that aim to mislead the defender.
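The belief-revision step can be sketched as follows, assuming a two-signal, two-type setting. The signal names (probe/browse), the sender strategy `sigma`, and the defender payoffs are hypothetical. The sketch covers only the Bayes update and the defender's best response to the updated belief; a full PBNE check would also require the sender's strategy to be optimal given the defender's response.

```python
from fractions import Fraction as F


def posterior(prior, sigma, signal):
    """Bayes' rule: belief over types after observing `signal`.

    sigma[theta][signal] is the probability that type theta sends `signal`.
    Returns None for a zero-probability signal, where Bayes' rule does not
    apply and the belief has to be specified separately.
    """
    joint = {theta: prior[theta] * sigma[theta][signal] for theta in prior}
    total = sum(joint.values())
    if total == 0:
        return None
    return {theta: weight / total for theta, weight in joint.items()}


def best_response(belief, payoff):
    """Defender action maximizing expected payoff under `belief`.

    payoff[a1][theta] is the defender's payoff for action a1 against type theta.
    """
    def expected(a1):
        return sum(belief[theta] * payoff[a1][theta] for theta in belief)
    return max(payoff, key=expected)


# Hypothetical numbers for illustration only.
prior = {"theta_b": F(1, 5), "theta_g": F(4, 5)}
sigma = {
    "theta_b": {"probe": F(3, 4), "browse": F(1, 4)},
    "theta_g": {"probe": F(1, 10), "browse": F(9, 10)},
}
payoff = {
    "block": {"theta_b": 2, "theta_g": -2},
    "allow": {"theta_b": -5, "theta_g": 2},
}

for signal in ("probe", "browse"):
    belief = posterior(prior, sigma, signal)
    print(signal, belief["theta_b"], best_response(belief, payoff))
# probe 15/23 block
# browse 5/77 allow
```

In this example a "probe" signal raises the defender's belief in the adversarial type from 1/5 to 15/23 and makes blocking optimal, while "browse" lowers it to 5/77 and makes allowing optimal.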

Finally, the authors extend the model to a multi‑stage dynamic Bayesian game to represent Advanced Persistent Threats (APTs) and a Tennessee Eastman (TE) process control scenario. Here, both sides have incomplete information: the defender can deploy defensive deception techniques such as honeypots and honey‑files to inject uncertainty into the attacker’s perception, while the attacker continuously gathers intelligence and updates his belief about the system. At each stage, the defender observes signals (e.g., detection logs, traffic patterns), updates his belief, and chooses a defensive action that together with the attacker’s strategy satisfies the PBNE conditions. The case study demonstrates how dynamic belief updates and strategic deception can reduce expected loss and deter long‑term adversarial behavior.
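The stage-by-stage belief updates on both sides can be sketched as repeated applications of Bayes' rule. Everything specific here is an assumption for illustration: the observation labels (alert/quiet, slow/fast), the system types (honeypot/production), and the likelihood values. The likelihoods are also held fixed across stages, whereas in an equilibrium analysis they would come from the players' stage-dependent strategies.

```python
from fractions import Fraction as F


def bayes_step(belief, likelihood, observation):
    """One-stage Bayes update of a belief over the opponent's type."""
    joint = {t: belief[t] * likelihood[t][observation] for t in belief}
    total = sum(joint.values())
    if total == 0:
        # Zero-probability observation: keep the old belief (an assumption).
        return dict(belief)
    return {t: weight / total for t, weight in joint.items()}


def run_stages(belief, likelihood, observations):
    """Return the belief trajectory, starting with the prior."""
    history = [dict(belief)]
    for obs in observations:
        belief = bayes_step(belief, likelihood, obs)
        history.append(belief)
    return history


# Defender's belief about the user's type (hypothetical likelihoods).
defender_prior = {"theta_b": F(1, 10), "theta_g": F(9, 10)}
alert_model = {
    "theta_b": {"alert": F(1, 2), "quiet": F(1, 2)},
    "theta_g": {"alert": F(1, 10), "quiet": F(9, 10)},
}
defender_path = run_stages(defender_prior, alert_model, ["alert", "alert", "quiet"])
print([str(b["theta_b"]) for b in defender_path])
# ['1/10', '5/14', '25/34', '125/206']

# Attacker's belief about the system's type (hypothetical likelihoods).
attacker_prior = {"honeypot": F(3, 10), "production": F(7, 10)}
response_model = {
    "honeypot": {"slow": F(3, 5), "fast": F(2, 5)},
    "production": {"slow": F(1, 5), "fast": F(4, 5)},
}
attacker_path = run_stages(attacker_prior, response_model, ["slow"])
print(attacker_path[-1]["honeypot"])  # 9/16
```

Running both trajectories side by side illustrates the two-sided structure: the defender's suspicion grows with repeated alerts, while defensive deception shifts the attacker's belief about whether the target is a honeypot.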

Key contributions of the chapter include:

- Formalization of user type as a probabilistic variable to model stealthy, camouflaged attacks.

- Integration of Bayesian reasoning into both static and dynamic game settings, enabling defenders to act under uncertainty.

- Application of signaling theory to capture attacker deception and defender belief revision.

- Development of a two‑sided incomplete‑information dynamic game that jointly models defensive deception (e.g., honeypots) and attacker adaptation.

- Derivation of NE, BNE, and PBNE solutions that guide the design of proactive, strategic defense policies rather than reactive, rule‑based mechanisms.

Overall, the work demonstrates that dynamic Bayesian game theory provides a rigorous, quantitative foundation for designing and evaluating cyber‑deception strategies, offering actionable insights for practitioners seeking to protect cyber‑physical infrastructures against advanced, adaptive adversaries.
