Text-Independent Speaker Verification Using Long Short-Term Memory Networks

In this paper, an architecture based on Long Short-Term Memory Networks has been proposed for the text-independent scenario which is aimed to capture the temporal speaker-related information by operating over traditional speech features. For speaker …

Authors: Aryan Mobiny, Mohammad Najarian

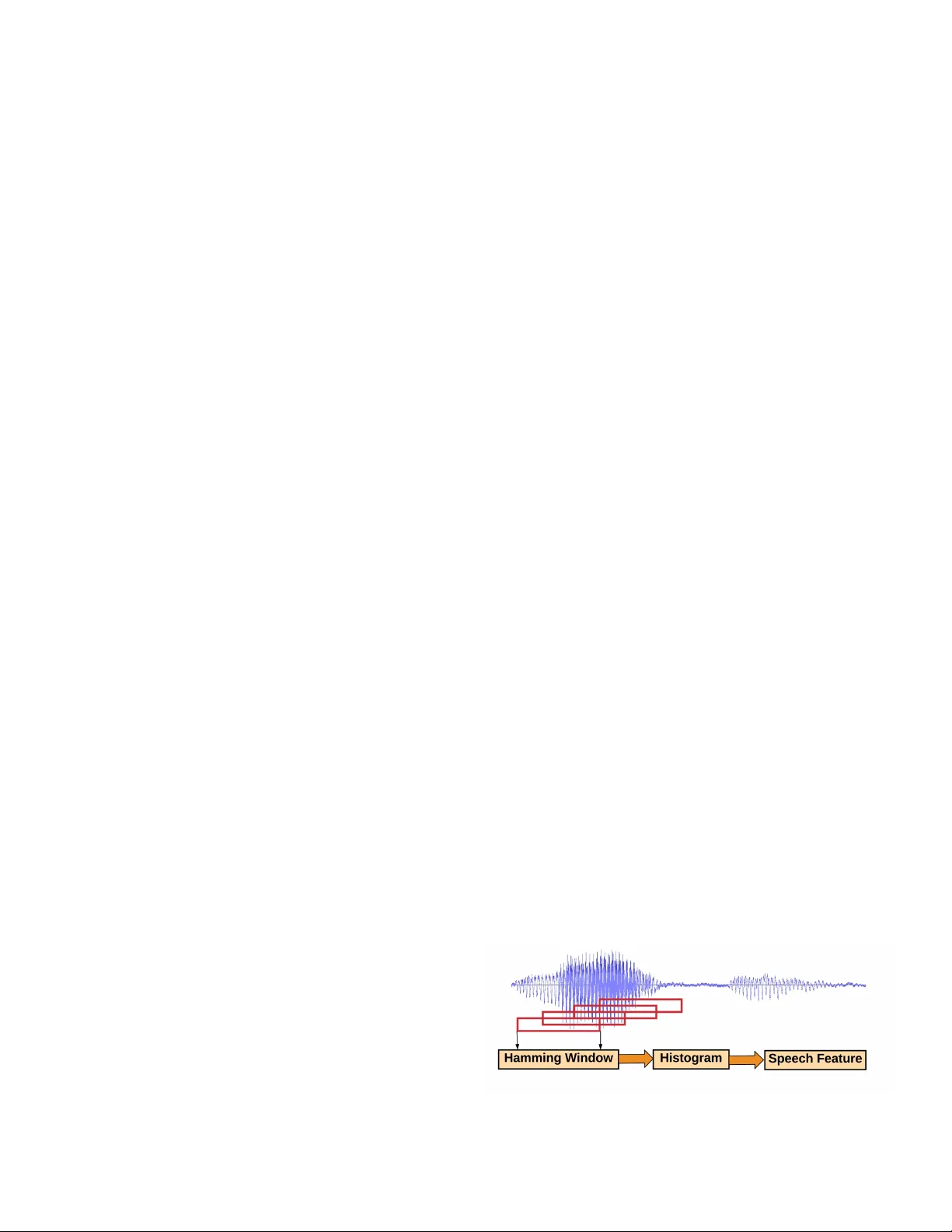

1 T e xt-Independent Speaker V erification Using Long Short-T erm Memory Networks Aryan Mobiny ∗ , Mohammad Najarian † ∗ Department of Electrical and Computer Engineering, Univ ersity of Houston Email: amobiny@uh.edu † Department of Industrial Engineering, Univ ersity of Houston Email: mnajarian@uh.edu Abstract —In this paper , an architectur e based on Long Short-T erm Memory Networks has been proposed f or the text-independent scenario which is aimed to capture the temporal speaker -r elated information by operating over traditional speech features. F or speaker v erification, at first, a background model must be created for speaker repr esentation. Then, in enrollment stage, the speak er models will be created based on the enrollment utterances. For this w ork, the model will be trained in an end-to-end fashion to combine the first two stages. The main goal of end-to-end training is the model being optimized to be consistent with the speaker verification protocol. The end- to-end training jointly learns the background and speaker models by creating the repr esentation space. The LSTM architectur e is trained to cr eate a discrimination space for validating the match and non-match pairs for speaker verification. The proposed architecture demonstrate its superiority in the text-independent compar ed to other traditional methods. I . I N T R O D U C T I O N The main goal of Speaker V erification (SV) is the process of verifying a query sample belonging to a speaker utterance by comparing to the exist- ing speaker models. Speaker verification is usually split into two text-independent and text-dependant categories. T ext-dependent includes the scenario in which all the speak ers are uttering the same phrase while in text-independent no prior information is considered for what the speakers are saying. The later setting is much more challenging as it can contain numerous v ariations for non-speaker in- formation that can be misleading while e xtracting solely speaker information is desired. The speaker verification, in general, consists of three stages: T raining, enrollment, and ev aluation. In training, the uni versal background model is trained using the gallery of speakers. In enrollment, based on the created background model, the ne w speakers will be enrolled in creating the speaker model. T ech- nically , the speakers’ models are generated using the univ ersal background model. In the e valuation phase, the test utterances will be compared to the speaker models for further identification or verifica- tion. Recently , by the success of deep learning in applications such as in biomedical purposes [1], [2], automatic speech recognition, image recogni- tion and network sparsity [3]–[6], the DNN-based approaches have also been proposed for Speaker Recognition (SR) [7], [8]. The traditional speaker verification models such as Gaussian Mixture Model-Univ ersal Background Model (GMM-UBM) [9] and i-vector [10] hav e been the state-of-the-art for long. The drawback of these approaches is the employed unsupervised fashion that does not optimize them for verification setup. Recently , supervised methods proposed for model adaptation to speaker verification such as the one presented in [11] and PLD A-based i-v ectors model [12]. Con volutional Neural Networks (CNNs) has also been used for speech recognition and speaker v erification [8], [13] inspired by their their superior po wer for action recognition [14] and scene understanding [15]. Capsule networks introduced by Hinton et al. [16] has shown quite remarkable performance in different tasks [17], [18], and demonstrated the potential and power to be used for similar purposes. In the present work, we propose the use of LSTMs by using MFCCs 1 speech features for di- rectly capturing the temporal information of the speaker -related information rather than dealing with 1 Mel Frequency Cepstral Coef ficients 2 non-speaker information which plays no role for speaker v erification. I I . R E L A T E D W O R K S There is a huge literature on speaker verification. Ho wev er, we only focus on the research efforts which are based on deep learning deep learning. One of the traditional successful works in speaker verification is the use of Locally Connected Net- works (LCNs) [19] for the text-dependent scenario. Deep networks hav e also been used as feature extractor for representing speaker models [20], [21]. W e in vestigate LSTMs in an end-to-end fashion for speaker v erification. As Con v olutional Neural Networks [22] hav e successfully been used for the speech recognition [23] some works use their architecture for speaker verification [7], [24]. The most similar work to ours is [20] in which the y use LSTMs for the text-dependent setting. On the contrary , we use LSTMs for the text-independent scenario which is a more challenging one. I I I . S P E A K E R V E R I FI C A T I O N U S I N G D E E P N E U R A L N E T W O R K S Here, we explain the speaker verification phases using deep learning. In dif ferent works, these steps hav e been adopted reg arding the procedure proposed by their research ef forts such as i- vector [10], [25], d-vector system [8]. A. Development In the de velopment stage which also called training, the speaker utterances are used for background model generation which ideally should be a uni versal model for speaker model representation. DNNs are employed due to their po wer for feature extraction. By using deep models, the feature learning will be done for creating an output space which represents the speaker in a uni versal model. B. Enr ollment In this phase, a model must be created for each speaker . F or each speaker , by collecting the spok en utterances and feeding to the trained network, dif- ferent output features will be generated for speaker utterances. From this point, different approaches hav e been proposed on how to integrate these enroll- ment features for creating the speaker model. The tradition one is aggregating the representations by av eraging the outputs of the DNN which is called d-vector system [8], [19]. C. Evaluation For ev aluation, the test utterance is the input of the network and the output is the utterance represen- tati ve. The output representati ve will be compared to dif ferent speaker model and the v erification criterion will be some similarity function. For ev aluation purposes, the traditional Equal Error Rate (EER) will often be used which is the operating point in that false reject rate and false accept rate are equal. I V . M O D E L The main goal is to implement LSTMs on top of speech extracted features. The input to the model as well as the architecture itself is explained in the follo wing subsections. A. Input The raw signal is extracted and 25ms windows with %60 overlapping are used for the generation of the spectrogram as depicted in Fig. 1. By selecting 1-second of the sound stream, 40 log-ener gy of filter banks per window and performing mean and vari- ance normalization, a feature window of 40 × 100 is generated for each 1-second utterance. Before feature extraction, voice activity detection has been done ov er the raw input for eliminating the silence. The deriv ati ve feature has not been used as using them did not make any improv ement considering the empirical e valuations. For feature extraction, we used SpeechPy library [26]. Fig. 1. The feature extraction from the raw signal. 3 B. Ar chitectur e The architecture that we use a long short-term memory recurrent neural network (LSTM) [27], [28] with a single output for decision making. W e input fix ed-length sequences although LSTMs are not limited by this constraint. Only the last hidden state of the LSTM model is used for decision making using the loss function. The LSTM that we use has two layers with 300 nodes each (Fig. 2). Fig. 2. The siamese architecture built based on two LSTM layers with weight sharing. C. V erification Setup A usual method which has been used in many other works [19], is training the network using the Softmax loss function for the auxiliary classification task and then use the extracted features for the main v erification purpose. A reasonable ar gument about this approach is that the Softmax criterion is not in align with the verification protocol due to optimizing for identification of individuals and not the one-vs-one comparison. T echnically , the Softmax optimization criterion is as belo w: softmax ( x ) S peak er = e x S peaker P Dev S pk e x Dev S pk (1) ( x S peak er = W S peak er × y + b x Dev S pk = W Dev S pk × y + b (2) in which Speaker and D ev S pk denote the sample speaker and an identity from speaker de velopment set, respectiv ely . As it is clear from the criterion, there is no indication to the one-to-one speak er com- parison for being consistent to speaker verification mode. T o consider this condition, we use the Siamese architecture to satisfy the verification purpose which has been proposed in [29] and employed in different applications [30]–[32]. As we mentioned before, the Softmax optimization will be used for initialization and the obtained weights will be used for fine- tuning. The Siamese architecture consists of two identical networks with weight sharing. The goal is to create a shared feature subspace which is aimed at discrim- ination between genuine and impostor pairs. The main idea is that when two elements of an input pair are from the same identity , their output distances should be close and far a way , otherwise. F or this objecti ve, the training loss will be contrastiv e cost function. The aim of contrastiv e loss C W ( X , Y ) is the minimization of the loss in both scenarios of ha ving genuine and impostor pairs, with the follo wing definition: C W ( X , Y ) = 1 N N X j =1 C W ( Y j , ( X 1 , X 2 ) j ) , (3) where N indicates the training samples, j is the sam- ple index and C W ( Y i , ( X p 1 , X p 2 ) i ) will be defined as follo ws: C W ( Y i , ( X 1 , X 2 ) j ) = Y ∗ C g en ( D W ( X 1 , X 2 ) j ) + (1 − Y ) ∗ C imp ( D W ( X 1 , X 2 ) j ) + λ || W || 2 (4) in which the last term is the regularization. C g en and C imp will be defined as the functions of D W ( X 1 , X 2 ) by the follo wing equations: ( C g en ( D W ( X 1 , X 2 ) = 1 2 D W ( X 1 , X 2 ) 2 C imp ( D W ( X 1 , X 2 ) = 1 2 max { 0 , ( M − D W ( X 1 , X 2 )) } 2 (5) in which M is the margin. V . E X P E R I M E N T S T ensorFLow has been used as the deep learning library [33]. For the de velopment phase, we used data augmentation by randomly sampling the 1- second audio sample for each person at a time. Batch normalization has also been used for av oiding 4 possible gradient explotion [34]. It’ s been shown that ef fecti ve pair selection can drastically improve the verification accuracy [35]. Speaker verification is performed using the protocol consistent with [36] for which the name identities start with E will be used for e v aluation. Algorithm 1: The utilized pair selection algo- rithm for selecting the main contributing impos- tor pairs Update : Freeze weights! Evaluate : Input data and get output distance vector; Search : Return max and min distances for match pairs : max g en & min g en ; Thresholding : Calculate th = th 0 × max g en min g en ; while impostor pair do if imp > max g en + th then discard; else feed the pair; A. Baselines W e compare our method with different base- line methods. The GMM-UBM method [9] if the first candidate. The MFCCs features with 40 co- ef ficients are extracted and used. The Universal Backgr ound Model (UBM) is trained using 1024 mixture components. The I-V ector model [10], with and without Pr obabilistic Linear Discriminant Anal- ysis (PLD A) [37], has also been implemented as the baseline. The other baseline is the use of DNNs with locally-connected layers as proposed in [19]. In the d-v ector system, after de velopment phase, the d-vectors extracted from the enrollment utterances will be aggregated to each other for generating the final representation. Finally , in the ev aluation stage, the similarity function determines the closest d- vector of the test utterances to the speaker models. B. Comparison to Dif fer ent Methods Here we compare the baseline approaches with the proposed model as provided in T able I. W e utilized the architecture and the setup as discussed in Section IV -B and Section IV -C, respectively . As can be seen in T able I, our proposed architecture outperforms the other methods. T ABLE I T H E A R C H I T E C T U R E U S E D F O R V E R I FI C ATI O N P U R P O S E . Model EER GMM-UBM [9] 27.1 I-vectors [10] 24.7 I-vectors [10] + PLD A [37] 23.5 LSTM [ours] 22.9 C. Effect of Utterance Duration One one the main adv antage of the baseline meth- ods such as [10] is their ability to capture rob ust speaker characteristics through long utterances. As demonstrated in Fig. 3, our proposed method out- performs the others for short utterances considering we used 1-second utterances. Ho wever , it is worth to hav e a fair comparison for longer utterances as well. In order to ha ve a one-to-one comparison, we modified our architecture to feed and train the system on longer utterances. In all experiments, the duration of utterances utilized for dev elopment, enrollment, and e v aluation are the same. Fig. 3. The effect of the utterance duration (EER). As can be observed in Fig. 3, the superiority of our method is only in short utterances and in longer utterances, the traditional baseline methods such as [10], still are the winners and LSTMs fails to capture effecti vely inter- and inter-speaker v ariations. 5 V I . C O N C L U S I O N In this work, an end-to-end model based on LSTMs has been proposed for text-independent speaker verification. It was sho wn that the model provided promising results for capturing the tem- poral information in addition to capture the within- speaker information. The proposed LSTM architec- ture has directly been used on the speech features extracted from speaker utterances for modeling the spatiotemporal information. One the observed traces is the superiority of traditional methods on longer utterances for more robust speaker modeling. More rigorous studies are needed to in vestigate the rea- soning behind the failure of LSTMs to capture long dependencies for speaker related characteristics. Ad- ditionally , it is expected that the combination of traditional models with long short-term memory ar- chitectures may improv e the accurac y by capturing the long-term dependencies in a more effecti ve way . The main adv antage of the proposed approach is its ability to capture informati ve features in short utterances. R E F E R E N C E S [1] W . Shen, M. Zhou, F . Y ang, C. Y ang, and J. T ian, “Multi-scale con volutional neural networks for lung nodule classification, ” in International Conference on Information Processing in Medical Imaging , pp. 588–599, Springer , 2015. [2] A. Mobiny , S. Moulik, I. Gurcan, T . Shah, and H. V an Nguyen, “Lung cancer screening using adapti ve memory-augmented recurrent networks, ” arXiv pr eprint arXiv:1710.05719 , 2017. [3] K. Simonyan and A. Zisserman, “V ery deep con volutional networks for large-scale image recognition, ” arXiv pr eprint arXiv:1409.1556 , 2014. [4] A. Krizhevsk y , I. Sutske ver , and G. E. Hinton, “Imagenet classi- fication with deep con volutional neural networks, ” in Advances in neural information pr ocessing systems , pp. 1097–1105, 2012. [5] G. Hinton, L. Deng, D. Y u, G. E. Dahl, A.-r . Mohamed, N. Jaitly , A. Senior , V . V anhoucke, P . Nguyen, T . N. Sainath, et al. , “Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups, ” IEEE Signal Processing Magazine , v ol. 29, no. 6, pp. 82–97, 2012. [6] A. T orfi and R. A. Shirv ani, “ Attention-based guided struc- tured sparsity of deep neural networks, ” arXiv preprint arXiv:1802.09902 , 2018. [7] Y . Lei, N. Scheffer , L. Ferrer , and M. McLaren, “ A novel scheme for speaker recognition using a phonetically-aware deep neural netw ork, ” in Acoustics, Speech and Signal Processing (ICASSP), 2014 IEEE International Confer ence on , pp. 1695– 1699, IEEE, 2014. [8] E. V ariani, X. Lei, E. McDermott, I. L. Moreno, and J. Gonzalez-Dominguez, “Deep neural networks for small foot- print text-dependent speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2014 IEEE International Confer ence on , pp. 4052–4056, IEEE, 2014. [9] D. A. Reynolds, T . F . Quatieri, and R. B. Dunn, “Speaker verification using adapted gaussian mixture models, ” Digital signal processing , vol. 10, no. 1-3, pp. 19–41, 2000. [10] N. Dehak, P . J. Kenn y , R. Dehak, P . Dumouchel, and P . Ouel- let, “Front-end factor analysis for speaker verification, ” IEEE T ransactions on A udio, Speech, and Language Pr ocessing , vol. 19, no. 4, pp. 788–798, 2011. [11] W . M. Campbell, D. E. Sturim, and D. A. Reynolds, “Support vector machines using gmm supervectors for speaker verifica- tion, ” IEEE signal pr ocessing letters , vol. 13, no. 5, pp. 308– 311, 2006. [12] D. Garcia-Romero and C. Y . Espy-W ilson, “ Analysis of i-vector length normalization in speaker recognition systems, ” in T welfth Annual Confer ence of the International Speech Communication Association , 2011. [13] O. Abdel-Hamid, A.-r . Mohamed, H. Jiang, L. Deng, G. Penn, and D. Y u, “Con volutional neural networks for speech recogni- tion, ” IEEE/ACM T ransactions on audio, speech, and language pr ocessing , vol. 22, no. 10, pp. 1533–1545, 2014. [14] S. Ji, W . Xu, M. Y ang, and K. Y u, “3d conv olutional neural networks for human action recognition, ” IEEE transactions on pattern analysis and machine intelligence , vol. 35, no. 1, pp. 221–231, 2013. [15] D. T ran, L. Bourdev , R. Fergus, L. T orresani, and M. Paluri, “Learning spatiotemporal features with 3d conv olutional net- works, ” in Computer V ision (ICCV), 2015 IEEE International Confer ence on , pp. 4489–4497, IEEE, 2015. [16] S. Sabour , N. Frosst, and G. E. Hinton, “Dynamic routing be- tween capsules, ” in Advances in Neural Information Pr ocessing Systems , pp. 3856–3866, 2017. [17] A. Mobiny and H. V an Nguyen, “Fast capsnet for lung cancer screening, ” arXiv preprint , 2018. [18] A. Jaiswal, W . AbdAlmageed, and P . Natarajan, “Capsule- gan: Generativ e adversarial capsule network, ” arXiv pr eprint arXiv:1802.06167 , 2018. [19] Y .-h. Chen, I. Lopez-Moreno, T . N. Sainath, M. V isontai, R. Alv arez, and C. Parada, “Locally-connected and conv olu- tional neural networks for small footprint speaker recognition, ” in Sixteenth Annual Conference of the International Speech Communication Association , 2015. [20] G. Heigold, I. Moreno, S. Bengio, and N. Shazeer , “End-to- end text-dependent speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Confer ence on , pp. 5115–5119, IEEE, 2016. [21] C. Zhang and K. K oishida, “End-to-end text-independent speaker verification with triplet loss on short utterances, ” in Pr oc. of Interspeech , 2017. [22] Y . LeCun, L. Bottou, Y . Bengio, and P . Haffner , “Gradient- based learning applied to document recognition, ” Pr oceedings of the IEEE , vol. 86, no. 11, pp. 2278–2324, 1998. [23] T . N. Sainath, A.-r . Mohamed, B. Kingsbury , and B. Ram- abhadran, “Deep con volutional neural networks for lvcsr , ” in Acoustics, speech and signal pr ocessing (ICASSP), 2013 IEEE international conference on , pp. 8614–8618, IEEE, 2013. [24] F . Richardson, D. Reynolds, and N. Dehak, “Deep neural network approaches to speaker and language recognition, ” IEEE Signal Pr ocessing Letters , vol. 22, no. 10, pp. 1671–1675, 2015. [25] P . Kenny , G. Boulianne, P . Ouellet, and P . Dumouchel, “Joint factor analysis versus eigenchannels in speaker recognition, ” IEEE T ransactions on Audio, Speech, and Language Pr ocess- ing , vol. 15, no. 4, pp. 1435–1447, 2007. [26] A. T orfi, “Speechpy-a library for speech processing and recog- nition, ” arXiv preprint , 2018. [27] S. Hochreiter and J. Schmidhuber, “Long short-term memory , ” Neural computation , vol. 9, no. 8, pp. 1735–1780, 1997. [28] H. Sak, A. Senior, and F . Beaufays, “Long short-term memory recurrent neural network architectures for large scale acoustic 6 modeling, ” in F ifteenth annual conference of the international speech communication association , 2014. [29] S. Chopra, R. Hadsell, and Y . LeCun, “Learning a similarity metric discriminatively , with application to face verification, ” in Computer V ision and P attern Recognition, 2005. CVPR 2005. IEEE Computer Society Conference on , vol. 1, pp. 539–546, IEEE, 2005. [30] X. Sun, A. T orfi, and N. Nasrabadi, “Deep siamese conv o- lutional neural networks for identical twins and look-alike identification, ” Deep Learning in Biometrics , p. 65, 2018. [31] R. R. V arior , M. Haloi, and G. W ang, “Gated siamese conv olu- tional neural network architecture for human re-identification, ” in European Confer ence on Computer V ision , pp. 791–808, Springer , 2016. [32] G. Koch, R. Zemel, and R. Salakhutdinov , “Siamese neural net- works for one-shot image recognition, ” in ICML Deep Learning W orkshop , vol. 2, 2015. [33] M. Abadi, A. Agarwal, P . Barham, E. Bre vdo, Z. Chen, C. Citro, G. S. Corrado, A. Da vis, J. Dean, M. De vin, S. Ghemaw at, I. Goodfello w , A. Harp, G. Irving, M. Isard, Y . Jia, R. Jozefow- icz, L. Kaiser , M. Kudlur , J. Levenber g, D. Man ´ e, R. Monga, S. Moore, D. Murray , C. Olah, M. Schuster , J. Shlens, B. Steiner , I. Sutske ver , K. T alwar , P . T ucker , V . V anhoucke, V . V asude van, F . V i ´ egas, O. V inyals, P . W arden, M. W attenberg, M. Wick e, Y . Y u, and X. Zheng, “T ensorFlow: Large-scale machine learning on heterogeneous systems, ” 2015. Software av ailable from tensorflow .org. [34] S. Ioffe and C. Sze gedy , “Batch normalization: Accelerating deep network training by reducing internal covariate shift, ” in International confer ence on machine learning , pp. 448–456, 2015. [35] Y .-R. Lin, Y . Chi, S. Zhu, H. Sundaram, and B. L. Tseng, “Facetnet: a frame work for analyzing communities and their ev olutions in dynamic networks, ” in Pr oceedings of the 17th international confer ence on W orld W ide W eb , pp. 685–694, A CM, 2008. [36] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxceleb: a large- scale speaker identification dataset, ” in INTERSPEECH , 2017. [37] P . K enny , “Bayesian speaker verification with hea vy-tailed priors., ” in Odyssey , p. 14, 2010.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment