Hybrid CTC-Attention based End-to-End Speech Recognition using Subword Units

In this paper, we present an end-to-end automatic speech recognition system, which successfully employs subword units in a hybrid CTC-Attention based system. The subword units are obtained by the byte-pair encoding (BPE) compression algorithm. Compar…

Authors: Zhangyu Xiao, Zhijian Ou, Wei Chu

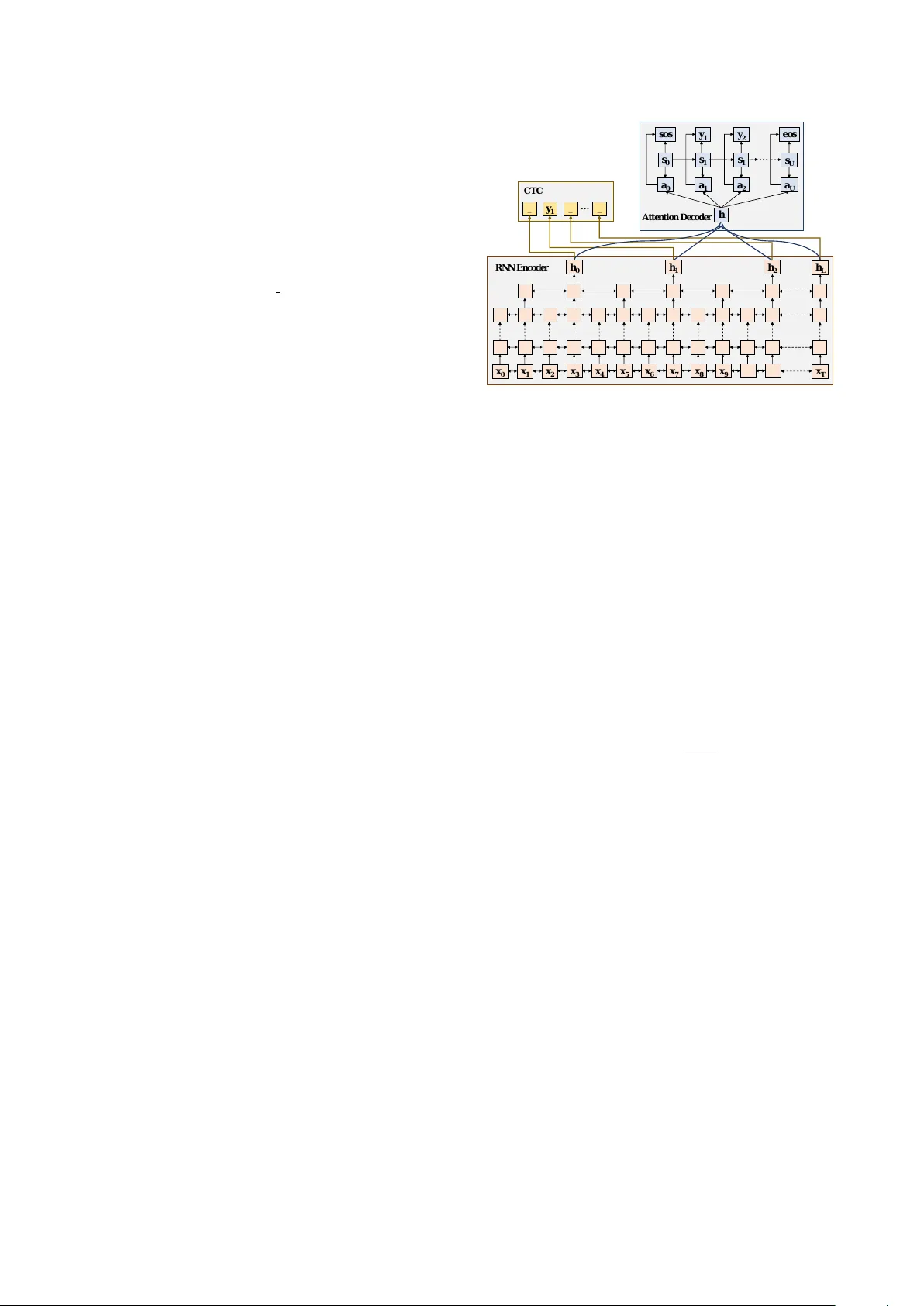

Hybrid CTC-Attention based End-to-End Speech Recognition using Subword Units Zhangyu Xiao 1 , Zhijian Ou 1 , W ei Chu 2 , Hui Lin 2 1 Department of Electronic Engineering, Tsinghua Uni versity , Beijing 100084, China 2 Shanghai Liulishuo Information T echnology Co., Ltd xiaozy13@mails.tsinghua.edu.cn, ozj@tsinghua.edu.cn, { wei.chu,hui.lin } @liulishuo.com Abstract In this paper , we present an end-to-end automatic speech recog- nition system, which successfully employs subword units in a hybrid CTC-Attention based system. The subword units are ob- tained by the byte-pair encoding (BPE) compression algorithm. Compared to using words as modeling units, using characters or subword units does not suf fer from the out-of-vocab ulary (OO V) problem. Furthermore, using subword units further of- fers a capability in modeling longer context than using charac- ters. W e evaluate dif ferent systems o ver the LibriSpeech 1000h dataset. The subword-based hybrid CTC-Attention system ob- tains 6.8% word error rate (WER) on the test clean subset with- out any dictionary or e xternal language model. This represents a significant improv ement (a 12.8% WER relative reduction) ov er the character-based hybrid CTC-Attention system. Index T erms : end-to-end speech recognition, hybrid ctc- attention, subword unit 1. Introduction T raditional large vocab ulary continuous speech recognition (L VCSR) systems consist of a complex pipeline of multiple modules, such as a GMM/DNN-HMM based acoustic model, a pronunciation lexicon and an external word-le vel language model [1, 2]. Building such a complex L VCSR system remains a complicated task, which requires expertise-intensi v e knowl- edge. In this paper , we are interested in building end-to-end speech recognition systems, which have shown promising re- sults on L VCSR tasks. An end-to-end system generally de- notes a simplified pipeline, which is usually based on neural network architectures and can be trained from scratch. Gener- ally , there are two main approaches for designing end-to-end L VCSR systems: Connectionist T emporal Classification (CTC) [3] and RNN encoder-decoder with attention mechanism [4]. Howe ver , these end-to-end L VCSR systems still have se v- eral drawbacks. On the one hand, CTC makes a strong inde- pendent assumption between labels, thus cannot perform well without a strong language model. Attention based methods solve this problem by training a decoder which emits labels de- pending on previous ones. On the other hand, attention based system are hard to train due to its excessi vely flexible attention alignments which might be unreasonable. In speech recognition tasks, the alignment between input features and output symbols is usually monotonic. CTC uses the so-called latent path to rep- resent this alignment. Recent work that combines CTC and at- tention loss in the end-to-end system [5] achie ves lower WERs This work is supported by NSFC grant 61473168 and a Liulishuo grant. Correspondence to: Z. Ou (ozj@tsinghua.edu.cn). than using either approach individually . This is our first obser- vation to impro ve end-to-end speech recognition system. Our second observ ation is concerned with dif ferent choices of the modeling units. End-to-end systems directly map acous- tic features to label sequences, which are composed of sym- bols like phonemes [6, 7], characters [4, 5, 8, 9, 10, 11], sub- words [12, 13] and words [14]. Phoneme based approaches need a carefully designed pronunciation lexicon to map words to phoneme sequences. Both phoneme based models [6, 7] and word based models [14] need a predefined dictionary , thus can- not handle the out-of-vocabulary (OO V) problem. In contrast, characters, or graphemes, are advantageous for end-to-end sys- tems, since all text can be easily segmented into character se- quence, and thus naturally enable open vocab ulary end-to-end speech recognition. Howe ver , using characters would increase the burden in learning longer context dependency . Using sub- word units would potentially overcome these drawbacks, and still keep the advantage for open v ocabulary end-to-end recog- nition. Recently , the subword based model has shown impres- siv e results in neural machine translation (NMT) [15, 16] be- cause of its ability to deal with infrequent words, like com- pounds, cognates as well as loanwords. For end-to-end speech recognition, there are also successful applications with subword units [12, 13]. In [13], both subword units and cross-word units are gen- erated with the byte-pair encoding (BPE) [15] method, and the neural network is trained based on the CTC loss using a sub- word and cross-word based language model. Cross-word units are taken into the unit set in order to model liaisons in oral En- glish con versations, such as speaking “gonna” instead of “go- ing to”. Howe ver , the CTC model employed in [13] performs poorly without an external language model. Using an external language model would require a predefined dictionary . In [12], the authors adopt the w ord-piece model (WPM) [17] to produce their subword units and employs the RNN-Transduer (RNN-T) neural architecture. It is sho wn in [12] that RNN-T with WPM significantly outperforms the character based RNN-T . The en- coder network is pre-trained with a CTC loss while the decoder network is initialized with a pre-trained LSTM language model. W ithout careful tuning of pre-training, it is nearly impossible to train an effectiv e RNN-T . Note that both BPE and WPM based subword models use a fixed decomposition of words [12, 13]. Learning a variable decomposition of the target sequence is studied in [18, 19]. In [18], the authors propose a method called GRAM-CTC to jointly learn the alignment between the input and output sequence as well as a better decomposition for the target sequence. In [19], the authors add an addtional objec- tiv e of learning a dynamic decomposition to the training loss function. Both variable decomposition approaches greatly in- crease the difficulty of training, and thus require more careful fine-tuning and higher computational cost. From the abov e two observations, we present an end-to-end L VCSR system in this paper , which successfully employs sub- word units in a hybrid CTC-Attention neural architecture. The combination of subword modeling and hybrid CTC-Attention has not been explored, to the best of our knowledge, which would contribute to a stronger end-to-end system. W e use the BPE method to construct the subword units, and e valu- ate different systems over the LibriSpeech 1000h dataset [20]. The subword-based hybrid CTC-Attention system obtains 6.8% word error rate (WER) on the test clean subset without any dic- tionary or external language model. This represents a signifi- cant improv ement (a 12.8% WER relati ve reduction) compared to the character-based hybrid CTC-Attention system. 2. Hybrid CTC-Attention Architectur e In this section, we introduce a hybrid CTC-Attention architec- ture [5] for end-to-end speech recognition. The overall archi- tecture can be found in Figure 1. Given an input acoustic fea- ture sequence x = ( x 1 , x 2 , . . . , x T ) and an output symbol se- quence y = ( y 1 , y 2 , . . . , y U ) , the hybrid CTC-Attention based framew ork models the transcription between x and y . Note that y u ∈ { 1 , . . . , K } , K is the number of different label units. In end-to-end speech recognition systems, the length of output label sequence is usually shorter than the length of feature se- quence (i.e., U < T ) . The hybrid CTC-Attention architecture uses a shared RNN encoder to produce a high-lev el representation h = ( h 1 , h 2 , . . . , h L ) for the input sequence x , where L is the down- sampled frame index: h = E ncoder ( x ) Then a CTC model and an attention based decoder generates objectiv es simultaneously based on the high-leve l feature h . In our experiments, the RNN Encoder is implemented by stacking multiple Bi-directional Long Short-T erm Memory (BLSTM) layers. A detailed description of CTC and attention based de- coder will be presented in Section 2.1 and Section 2.2 respec- tiv ely . Then the hybrid CTC-Attention objectiv e will be given in Section 2.3. 2.1. Connectionist T emporal Classification (CTC) CTC provides a method to train RNNs without any prior alignment between input and output sequences of different lengths. CTC introduces a latent variable, CTC path π = ( π 1 , π 2 , . . . , π L ) , as the frame-lev el label of the input sequence. A special “blank” symbol is used to separate adjacent identical labels and represents a null emission. By removing repetitions of identical labels and blank symbols, dif ferent paths can be mapped to a particular label sequence. Based on the RNN en- coder output h , CTC calculates the conditional probability of the label for each frame and assumes that the labels at different frames are conditionally independent. So the probability of a CTC path can be computed as follows: p ( π | x ) = L Y l =1 q π l l where q π l l denotes the softmax probability of outputting label π l at frame l . q l = q 1 l , · · · q K +1 l is often called the softmax h 0 h 1 h L x T x 0 h 2 h a 0 s 0 so s s 1 y 1 a 2 y 2 a U s U eos … _ y 1 _ _ … Attention Dec oder CTC RN N Encoder x 1 x 2 x 3 x 4 x 5 x 6 x 7 x 8 x 9 a 1 s 1 Figure 1: The hybrid CTC-Attention model consists of thr ee modules: RNN Encoder , CTC Loss and Attention Decoder . RNN Encoder is implemented by stac king multiple BLSTM layers, in which the top two layers subsample the hidden states fr om lay- ers below by a factor of 2. CTC and Attention Decoder share the same output deep featur es fr om RNN Encoder and compute objective functions simultaneously . output. The likelihood of the label sequence is the sum of prob- abilities of all compatible CTC paths: p ( y | x ) = X π ∈ φ ( y ) p ( π | x ) where φ ( y ) denotes the set of all the CTC paths which can be mapped to the label sequence y . A forward-backward algorithm can be employed to ef fi- ciently sum over all the possible paths. The likelihood of y can then be computed with the forward variable α u l and the back- ward v ariable β u l as follows: p ( y | x ) = X u α u l β u l q π l l where u is the label index and l is the frame index. The CTC loss is defined as the negati ve log likelihood of the output label sequence: L C T C = − ln( p ( y | x )) By computing the deriv ate of the CTC loss with respect to the softmax output q l , the parameters of the RNN Encoder can be trained with standard back-propagation. 2.2. Attention based Decoder The attention based decoder is an RNN which con verts the high- lev el features h generated by the shared encoder into the output label sequence with the attention mechanism. The decoder cal- culates the likelihood of the label sequence, based on the condi- tional probability of the label y u giv en the input feature h and the previous labels y 1: u − 1 , using the chain rule: p ( y | x ) = Y u p ( y u | h, y 1: u − 1 ) At each step u , the decoder generates a conte xt vector c u based on all the input features h and attention weight a u,l : c u = X l a u,l h l The attention weight a u = ( a u, 1 , a u, 2 , . . . , a u,L ) is obtained from location based attention energies e u,l as follows: a u,l = sof tmax ( e u,l ) e u,l = ω T tanh ( W s u − 1 + V h l + M f u,l + b ) f u = F ∗ a u − 1 where ω , W , V , M , b are trainable parameters, s u − 1 is the de- coder’ s RNN state. ∗ denotes the one-dimensional con volution along the frame axis, l , with the con volution parameter F , to produce the features f u = ( f u, 1 , f u, 2 , . . . , f u,L ) . W ith the context v ector c u , we can predict the RNN hidden state s u and the next output y u as follows: s u = LS T M ( s u − 1 , y u − 1 , c u ) y u = F ull y C onnected ( s u , c u ) where the LS T M function here is implemented as a uni- directional LSTM layer and the F ully C onnected function in- dicates a feed-forward fully-connected network. In the attention based decoder module, a special start-of- sequence symbol h sos i and end-of-sequence symbol h eos i has been added to the output sequence. When h eos i is emitted, the decoder stops the generation of new output labels. Finally , the attention loss is defined as the negati ve log lik e- lihood of the target sequence. 2.3. Hybrid CTC-Attention Objective W ith the aim to take advantage of both models, the CTC loss and attention loss can be combined [5]. W e show the ov erall architecture of the hybrid model in Figure 1. Both CTC and attention based methods their own draw- backs. CTC makes the conditional independent assumption between the labels, thus requiring a strong external language model to compensate for the long term dependency between the labels. The attention mechanism produces each output using a weighted sum over all the input without any constraint or guid- ance which can be provided by alignments. Thus it is usually difficult to train the attention based decoder . Note that the forw ard-backward algorithm in CTC can learn a monotonic alignment between acoustic features and label se- quences, which can help the encoder to conv erge more quickly . Moreov er , the attention based decoder can learn the dependen- cies among the tar get sequence. Hence, combining CTC and attention loss not only can help the conv ergence of the attention based decoder , but also enable the hybrid model to utilize label dependencies. The hybrid CTC-Attention objecti ve is defined as a weighted sum of CTC loss and attention based loss: L hybr id = λL C T C + (1 − λ ) L Attention where λ ∈ (0 , 1) is a tunable hyper-parameter . 3. Using Subword Units T raditional phoneme-based speech recognition systems require an external pronunciation lexicon to link phonemes and words. Thus, out-of-v ocabulary (OO V) words (such as name entities or rare words) cannot be recognized. In addition, the pronuncia- tion lexicon also complicates the decoding procedure. End-to-end speech recognition can directly map acoustic frames to characters, words or subwords. For word-based end- to-end system, the drawback is that OO V words can not be rec- ognized and a large lexicon is needed, which suffers from ex- pensiv e computation cost due to a large softmax output. For T able 1: An utterance in the training data is segmented into wor ds, character s and subwor ds r espectively . The special sym- bol ‘ ’ denotes the word boundary so that the original wor d sequence can be restor ed fr om character and subwor d based sequence. Basic Unit Segmented Sequence word that neither of them had crossed the threshold since the dark day character t h a t n e i t h e r o f t h e m h a d c r o s s e d t h e t h r e s h o l d s i n c e t h e d a r k d a y subword that ne i ther of them had cro s sed the th re sh old sin ce the d ar k day character-based end-to-end system, the drawback is that the de- coder’ s computation cost is increased and it is difficult to learn word-le vel dependenc y in the target sequence. Subwords are chosen as the model units in our speech recognition system. Subword units are obtained by the byte- pair encoding (BPE) algorithm [15], which iteratively merges the most frequent pairs of units (initially all are characters) and adds it into the set of subword units. W e define the initial sub- word set as the character vocabulary (‘ A ’,‘B’, . . . ,‘Z’) plus an additional symbol ‘ ’ indicating the end boundary of a word. For example, if ‘ AB’ is the most frequent pair of units in the current set, then ‘ A ’ and ‘B’ are merged to produce a new unit ‘ AB’, which will be added into the subw ord set. The iteration is ended until a giv en number of merging operations is reached. Since the BPE algorithm maintains all the characters in the subword set, rare words can be represented by subword units. Once we obtain the subword set, we break the w ord-based train- ing transcripts into subword sequences, by greedily segmenting the longest subwords from left to right in a sentence. T able 1 shows an example of three different segmentation of an utterance from the training transcripts. The original tran- script is the word based sequence. W e obtain the character se- quence by simply dividing words into characters one by one and and adding the word boundary symbol ‘ ’. It can be easily seen from T able 1 that the character based sequence representation is long, which is not good for decoding. Using a greedy search- ing algorithm, the word sequence can be segmented into sub- word units, out of the 500 subword units generated by BPE. W e can see that frequent words, such as ‘that’ and ‘of ’, and single character like ‘s’ and ‘d’, occur in the subword sequence. The subword based segmentation keeps the representation flexibil- ity as the character based segmentation, but has a much shorter sequence length, which is good for decoding. When encountering an OO V word such as “cyberlife”, phoneme and w ord based systems mark this word with a special h unk i symbol, making recognition errors. In character based systems, the decoder attempts to output character sequence (‘c’, ‘y’, · · · , ‘e’, ‘ ’) one by one. A substitution error would occur easily if any of these characters was wrong. In subword based systems, since the subw ord ‘cyber’ and ‘life’ are frequent w ords or word roots, the decoder can predict the subword decompo- sition (‘cyber’, ‘life ’) with only two decoding steps and can recognize the rare word ‘cyberlife’ more easily . The subword sequence is used as the target output to train our hybrid CTC-Attention system. In decoding, a subword se- quence is first produced by the decoder and then con verted to the corresponding w ord sequence by removing the word bound- ary symbol ‘ ’. T able 2: W or d Error Rates (WERs) on the LibriSpeech subsets test clean and test other . The hybrid CTC-Attention (CTC+Att) model outperforms the pure CTC and Attention (Att) based model, when using characters (c har). The combined par ameter λ is set to 1.0 for pur e CTC and 0.0 for pure attention based ex- periments. W e extract 500 and 1000 subword units with the BPE algorithm. The hybrid CTC+Att model with 500 and 1000 sub- wor d units achieve the WERs 6.8% and 7.6% on the test clean set respectively , repr esenting 12.8% and 2.6% r elative impro ve- ments over the char baseline. Note that no language models are applied in our experiments. Model output unit λ WER test clean test other CTC char 1.0 20.9 39.8 Att char 0.0 10.5 30.9 CTC+Att char 0.2 7.8 21.9 CTC+Att subword 500 0.2 6.8 19.5 CTC+Att subword 500 0.5 7.6 21.0 CTC+Att subword 1000 0.2 7.6 21.2 wav2letter [8] 4-gram LM char - 7.2 - 4. Experiments 4.1. Experimental Setup The hybrid CTC-Attention based systems are experimented with the Chainer [21] backend of the ESPNET toolkit [22], using characters or subwords. CTC and attention-based sys- tems are implemented by setting λ to 1 and 0 , respectively . For comparison, no lexicon and language model are used in all the recognition systems. W e train and test different systems over LibriSpeech dataset [20], consisting of 1000 hours of read audio books. The de v and test subsets of LibriSpeech are classified into two categories: simple (‘clean’) and hard (‘other’) subsets. W e monitor con ver- gence with LibriSpeech subsets dev clean and de v other . For ev aluation, we report the word error rates (WERs) on the sub- sets test clean, test other . The acoustic features are 40 dimen- sional filterbanks generated by Kaldi [23], with mean subtrac- tion and variance normalization on a per -speaker basis. The RNN encoder is a 8-layer BLSTM with 320 LSTM cell units per-direction, and each BLSTM layer is followed by a Batch Normalization layer [24]. Each of the top two lay- ers subsamples the hidden state with a factor of 2 from the output of the layer belo w . The attention decoder is a 1 layer uni-directional LSTM with 320 units. 10 conv olution filters of width 100 are used to compute location based attention ener- gies. The Adadelta algorithm with gradient clipping is adopted as our optimizer , with hyper-parameter = 10 − 8 . For decod- ing, we use the beam search algorithm with the beam size 20. All the experiments are performed with 4 T esla K80 GPU. It takes about 2 days to train the hybrid model over the 1000h dataset. Subword units are extracted using all the transcripts of training data by BPE algorithm. The number of subword units is set to 500. 4.2. Results and Discussions Results of v arious systems are sho wn in T able 2, from which we hav e the following main comments. First, for all the different systems using characters, the hy- brid system outperforms both the CTC and the attention based systems greatly , since it benefits from both loss. T able 3: Experiments with different sizes of training data. W e randomly select 100h and 500h from the 1000h LibriSpeech dataset. All experiments use the same setup which corr esponds to the subwor d set size of 500 and λ of 0.2 in T able 2. hours WER test clean test other 100 34.7 45.4 500 10.4 26.9 1000 6.8 19.5 Second, when using 500 subword units extracted from the training transcripts, the subword-based hybrid system obtains 6.8% WER on test clean and 19.5% on test other . This repre- sents a significant improvement (12.8% and 7% respectiv ely) ov er the character-based hybrid system. Third, we examine the effects of dif ferent λ . The best tuned λ is 0 . 2 , the same as in [5]. Using a larger λ = 0 . 5 , the CTC loss forms a greater proportion in the hybrid loss. The WER degrades because CTC performs badly without an external lan- guage model. Also note that the pure attention based system produces inferior performance without an auxiliary CTC Loss. Forth, we examine the ef fects of using different number of subword units. The performance of the hybrid system using 1000 subword units is slightly better than the character based hybrid system. When increasing the number of subword units, the occurrences of subword units will become sparser . F or ex- ample, the least frequent unit ‘q’ occurred 97 times in the 500 subword set; for the 1000 subword set, the least frequent unit becomes ‘toge’ and occurs only 3 times in the training data. As a result, the model performance deteriorates due to the data sparseness problem. A larger number of subwords, such as 10k, is more suitable for tasks with larger training corpus, like ma- chine translation [16]. Finally , we examine the performances of the subword based hybrid system, using dif ferent amount of training data. T wo subsets of training data are drawn randomly from the 1000h Librispeech training set, having 100h and 500h respectively . W e use the experimental setup which corresponds to the sub- word set size of 500 and λ of 0.2 in T able 2. The results are shown in T able 3. The poor results from using small-sized train- ing dataset indicate that we need a large-sized training dataset in order to successfully train subword systems. It can be seen that the WER decreases rapidly when increasing the size of the training data. Thus, the subword systems potentially can per- form ev en better when trained over much larger scaled data. 5. Conclusions and Future W ork In this work, we present a hybrid CTC-Attention based end- to-end speech recognition system using subword units, which works without any dictionary and language model. Compared to the character -based hybrid system, the proposed subword- based hybrid system significantly reduces WERs in both clean and noisy conditions. Giv en the demonstrated benefits of using subword units, it is worthwhile to further study techniques for better subword unit construction and decompositions. Another future work is to apply the proposed system to larger scale speech recognition tasks with tens of thousands hours of speech data. 6. References [1] L. R. Rabiner , “ A tutorial on hidden markov models and selected applications in speech recognition, ” Proceedings of the IEEE , 1989. [2] G. Hinton, L. Deng, D. Y u, G. E. Dahl, A.-r. Mohamed, N. Jaitly , A. Senior, V . V anhoucke, P . Nguyen, T . N. Sainath et al. , “Deep neural netw orks for acoustic modeling in speech recognition: The shared views of four research groups, ” IEEE Signal Pr ocessing Magazine , vol. 29, no. 6, pp. 82–97, 2012. [3] A. Grav es, S. Fern ´ andez, F . Gomez, and J. Schmidhuber , “Con- nectionist temporal classification: labelling unsegmented se- quence data with recurrent neural networks, ” in International Confer ence on Machine learning (ICML) , 2006. [4] D. Bahdanau, J. Chorowski, D. Serdyuk, P . Brakel, and Y . Ben- gio, “End-to-end attention-based large vocab ulary speech recog- nition, ” in International Conference on Acoustics, Speech and Sig- nal Processing (ICASSP) , 2016. [5] S. Kim, T . Hori, and S. W atanabe, “Joint ctc-attention based end- to-end speech recognition using multi-task learning, ” in Interna- tional Confer ence on Acoustics, Speech and Signal Processing (ICASSP) , 2017. [6] A. Graves and N. Jaitly , “T owards end-to-end speech recognition with recurrent neural networks, ” in International Confer ence on Machine Learning (ICML) , 2014. [7] Y . Miao, M. Go wayyed, and F . Metze, “Eesen: End-to-end speech recognition using deep rnn models and wfst-based decoding, ” in Automatic Speech Recognition and Understanding (ASRU) , 2015. [8] R. Collobert, C. Puhrsch, and G. Synnaev e, “W av2letter: an end- to-end convnet-based speech recognition system, ” arXiv preprint arXiv:1609.03193 , 2016. [9] W . Chan, N. Jaitly , Q. Le, and O. V inyals, “Listen, attend and spell: A neural network for large vocabulary conv ersational speech recognition, ” in International Confer ence on Acoustics, Speech and Signal Processing (ICASSP) , 2016. [10] A. Maas, Z. Xie, D. Jurafsky , and A. Ng, “Le xicon-free con versa- tional speech recognition with neural networks, ” in Confer ence of the North American Chapter of the Association for Computational Linguistics (NAA CL) , 2015. [11] Amodei, Dario et al. , “Deep speech 2: End-to-end speech recog- nition in english and mandarin. ” International Conference on Ma- chine Learning (ICML) , 2016. [12] K. Rao, H. Sak, and R. Prabhav alkar, “Exploring architectures, data and units for streaming end-to-end speech recognition with rnn-transducer , ” in Automatic Speech Recognition and Under- standing W orkshop (ASRU) , 2017. [13] T . Zenkel, R. Sanabria, F . Metze, and A. W aibel, “Subword and crossword units for ctc acoustic models, ” arXiv pr eprint arXiv:1712.06855 , 2017. [14] H. Soltau, H. Liao, and H. Sak, “Neural speech recognizer: Acoustic-to-word lstm model for large vocabulary speech recog- nition, ” arXiv preprint , 2016. [15] R. Sennrich, B. Haddow , and A. Birch, “Neural machine trans- lation of rare words with subword units, ” Annual Meeting of the Association for Computational Linguistics (ACL) , 2016. [16] Y . W u, M. Schuster , Z. Chen, Q. V . Le, M. Norouzi, W . Macherey , M. Krikun, Y . Cao, Q. Gao, K. Macherey et al. , “Google’s neural machine translation system: Bridging the gap between human and machine translation, ” arXiv preprint , 2016. [17] M. Schuster and K. Nakajima, “Japanese and korean voice search, ” in International Conference on Acoustics, Speech and Signal Processing (ICASSP) , 2012. [18] H. Liu, Z. Zhu, X. Li, and S. Satheesh, “Gram-ctc: Automatic unit selection and target decomposition for sequence labelling, ” International Conference on Machine Learning (ICML) , 2017. [19] W . Chan, Y . Zhang, Q. Le, and N. Jaitly , “Latent sequence decom- positions, ” arXiv preprint , 2016. [20] V . Panayotov , G. Chen, D. Pove y , and S. Khudanpur , “Lib- rispeech: an asr corpus based on public domain audio books, ” in International Confer ence on Acoustics, Speech and Signal Pr o- cessing (ICASSP) , 2015. [21] S. T okui, K. Oono, S. Hido, and J. Clayton, “Chainer: a next- generation open source framework for deep learning, ” in W ork- shop on Machine Learning Systems (LearningSys), NIPS , 2015. [22] S. W atanabe, T . Hori, S. Karita, T . Hayashi, J. Nishitoba, Y . Unno, N. E. Y . Soplin, J. Heymann, M. W iesner , N. Chen et al. , “Espnet: End-to-end speech processing toolkit, ” arXiv preprint arXiv:1804.00015 , 2018. [23] D. Pove y , A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P . Motlicek, Y . Qian, P . Schwarz et al. , “The kaldi speech recognition toolkit, ” in Automatic Speech Recognition and Understanding W orkshop (ASRU) , 2011. [24] S. Iof fe and C. Szegedy , “Batch normalization: Accelerating deep network training by reducing internal cov ariate shift, ” Interna- tional Conference on Machine Learning (ICML) , 2015.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment