A MapReduce based Big-data Framework for Object Extraction from Mosaic Satellite Images

We propose a framework for stitching vector representations of large-scale raster mosaic images in a distributed computing model. In this way, the resource limits and scalability problems of a centralized system can be eliminated. The resulting product can be used in applications that require spatial and temporal analysis of big satellite map images. The study also shows that big data frameworks are suited not only to text-based data mining and machine learning algorithms, but also to image processing algorithms. The effectiveness of the product will be demonstrated through scalability and performance tests on real-world Landsat-8 satellite images.

💡 Research Summary


The paper presents a novel big‑data framework that leverages the MapReduce programming model to perform scalable stitching of large‑scale satellite image mosaics and subsequent object extraction. The authors begin by outlining two fundamental challenges in traditional satellite image processing: (1) the exponential growth of computational resources (CPU, memory) required when stitching many high‑resolution raster tiles on a single machine, and (2) the spatio‑temporal inconsistencies that arise when overlapping mosaics are captured at different times, leading to pixel‑level mismatches and failed stitching. In addition, they note that certain objects (e.g., rivers, road networks) cannot be reliably extracted automatically because their boundaries are not well defined in raster imagery.

To address these issues, the authors propose a distributed architecture built on Hadoop (MapReduce) with auxiliary services such as HBase, Apache Lucene, and PostgreSQL/PostGIS. The workflow consists of five major stages:

- Pre‑processing and Metadata Extraction – Raw Landsat‑8 tiles are cleaned of ancillary textures (USGS, NASA) and their geographic corner coordinates (NW, NE, SW, SE) are harvested. This metadata is stored in HBase for fast lookup.

- Mosaic Selection via Polygon Coverage – A user‑defined polygon (the region of interest) is submitted. A MapReduce job evaluates whether the polygon is fully covered by a subset of available tiles, effectively solving a spatial “polygon covering” problem at scale. Only the tiles that guarantee full coverage are passed downstream, reducing unnecessary data movement.

-

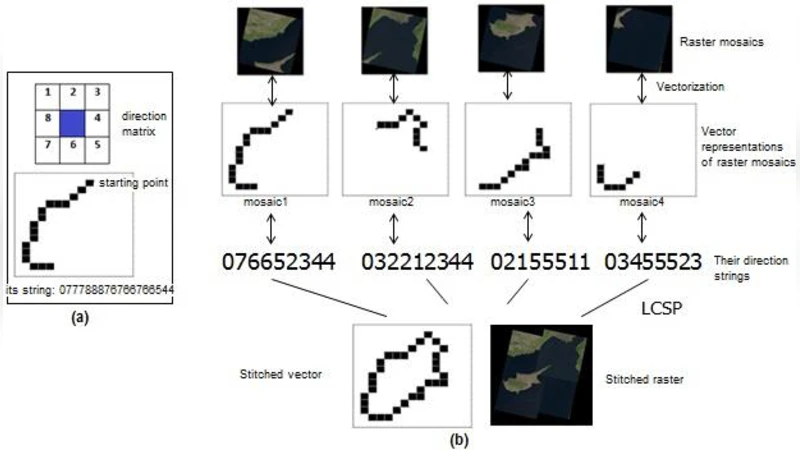

Vectorisation and Stitching – Selected raster tiles are converted into vector representations (points, lines, polygons) in the first Mapper layer. Two alternative stitching algorithms are then applied in subsequent Map/Reduce phases:

- Point‑set registration – Feature points are extracted, matched, and a transformation matrix is estimated (e.g., via RANSAC) to align the vectors.

- LCSP‑based quasi‑vector stitching – The coordinate sequences of adjacent tiles are treated as strings; the longest common subsequence is identified to infer alignment. Both approaches aim to produce a seamless vector mosaic while keeping network traffic low because only geometric primitives, not full pixel arrays, are shuffled between nodes.

- Object Boundary Definition and Refinement – Users interact with reference maps (e.g., Google Earth) to sketch coarse object boundaries. The system automatically refines these sketches into precise OGC‑compliant geometries (Point, LineString, Polygon) using heuristic refinement techniques. This semi‑automatic step bridges the gap between fully manual digitisation and purely algorithmic extraction.

- Spatial Database Ingestion and Analysis – The final vector objects are stored in a spatial relational database (PostGIS or Oracle‑Spatial). This enables downstream spatial and topological queries, such as intersection, containment, and temporal change detection, making the output reusable by other researchers.
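The mosaic-selection stage can be illustrated with a small, single-process sketch of a map step and a reduce step. This is not the authors' algorithm: it assumes axis-aligned tile footprints (real Landsat‑8 scenes are rotated), it tests coverage of the polygon's bounding box as a conservative stand-in for the polygon itself, and the names `TILES`, `map_tile`, `reduce_cover`, and `select_tiles` are hypothetical.

```python
# Hypothetical tile index: id -> (min_x, min_y, max_x, max_y). It stands in for
# the corner-coordinate metadata that the summary says is kept in HBase.
TILES = {
    "t1": (0.0, 0.0, 2.0, 2.0),
    "t2": (2.0, 0.0, 4.0, 2.0),
    "t3": (0.0, 2.0, 2.0, 4.0),
    "t4": (2.0, 2.0, 4.0, 4.0),
    "t5": (10.0, 10.0, 12.0, 12.0),  # far from the test regions below
}


def bbox(polygon):
    """Bounding box of a polygon given as a list of (x, y) vertices."""
    xs, ys = zip(*polygon)
    return min(xs), min(ys), max(xs), max(ys)


def overlaps(a, b):
    """True if two boxes share interior area (touching edges do not count)."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def map_tile(tile_id, box, roi_box):
    """Map step: emit the tile only if it overlaps the region of interest."""
    if overlaps(box, roi_box):
        yield "roi", (tile_id, box)


def reduce_cover(roi_box, candidates):
    """Reduce step: check that the candidate boxes jointly cover roi_box.

    Tile edges split roi_box into grid cells; each cell lies entirely inside
    or outside any given tile, so testing the cell centre is sufficient.
    """
    x0, y0, x1, y1 = roi_box
    xs = sorted({x0, x1} | {x for _, b in candidates
                            for x in (b[0], b[2]) if x0 < x < x1})
    ys = sorted({y0, y1} | {y for _, b in candidates
                            for y in (b[1], b[3]) if y0 < y < y1})
    for xa, xb in zip(xs, xs[1:]):
        for ya, yb in zip(ys, ys[1:]):
            cx, cy = (xa + xb) / 2, (ya + yb) / 2
            if not any(b[0] <= cx <= b[2] and b[1] <= cy <= b[3]
                       for _, b in candidates):
                return False, []
    return True, sorted(tid for tid, _ in candidates)


def select_tiles(polygon, tiles):
    """Run the map step over all tiles, then the single-key reduce step."""
    roi_box = bbox(polygon)
    emitted = [kv for tid, box in tiles.items()
               for kv in map_tile(tid, box, roi_box)]
    candidates = [value for _, value in emitted]
    return reduce_cover(roi_box, candidates)


if __name__ == "__main__":
    print(select_tiles([(1, 1), (3, 1), (3, 3), (1, 3)], TILES))
    print(select_tiles([(1, 1), (5, 1), (5, 3), (1, 3)], TILES))
```

The sketch returns every tile that overlaps the region, not a minimal covering subset; choosing a smallest subset is a harder problem that the summary does not describe in detail.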

The authors emphasize that, unlike most prior work which focuses on low‑level raster operations (edge detection, noise removal) in a distributed setting, their contribution lies in moving the heavy lifting to the vector domain, thereby mitigating bandwidth bottlenecks and allowing high‑level GIS operations to be performed directly on the cluster. They also claim that the framework demonstrates the applicability of big‑data platforms beyond text mining and machine‑learning pipelines, extending them to computationally intensive image processing tasks.

The paper outlines an experimental plan rather than presenting concrete results. Planned evaluations include scalability tests on real Landsat‑8 datasets (e.g., stitching the four tiles covering Cyprus) and performance comparisons between the two stitching strategies in terms of runtime and geometric accuracy (e.g., IoU, F‑score). The authors acknowledge that the current stage of the research is limited to design, literature review, and early prototyping; future work will involve detailed benchmarking, accuracy assessment, and possibly exploring in‑memory frameworks such as Spark for iterative image operations.
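The accuracy metrics named above have standard definitions, sketched here for reference. The sketch assumes that both the stitched output and a reference geometry have been rasterised onto a common grid and are represented as sets of cell indices; the summary does not say how the authors intend to compute these scores, and the helper `box_cells` is purely illustrative.

```python
def iou(pred, truth):
    """Intersection over union of two sets of grid cells."""
    union = pred | truth
    return len(pred & truth) / len(union) if union else 1.0


def f_score(pred, truth, beta=1.0):
    """F-beta score; beta=1 gives the usual F1 (harmonic mean of P and R)."""
    tp = len(pred & truth)   # cells present in both
    fp = len(pred - truth)   # cells only in the prediction
    fn = len(truth - pred)   # cells only in the reference
    b2 = beta * beta
    denom = (1 + b2) * tp + b2 * fn + fp
    return (1 + b2) * tp / denom if denom else 1.0


def box_cells(x0, y0, x1, y1):
    """Illustrative helper: grid cells of a half-open axis-aligned box."""
    return {(x, y) for x in range(x0, x1) for y in range(y0, y1)}


if __name__ == "__main__":
    truth = box_cells(0, 0, 4, 4)   # 16 reference cells
    pred = box_cells(1, 0, 5, 4)    # same shape, shifted one cell in x
    print(iou(pred, truth), f_score(pred, truth))
```

In this toy case a one-cell shift of a 4×4 object leaves 12 shared cells out of 20 in the union, so the IoU is 0.6 and the F1 score is 0.75.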

In summary, the proposed MapReduce‑based framework offers a promising pathway to handle the ever‑growing volume of satellite imagery by (i) selecting only the necessary mosaics through a distributed polygon‑coverage algorithm, (ii) converting raster data to lightweight vector forms, (iii) stitching these vectors using either point‑set registration or LCSP‑inspired alignment, and (iv) delivering the results as standard GIS objects ready for spatial analysis. While the concept is sound and addresses clear scalability bottlenecks, the lack of empirical validation, limited discussion of vectorisation‑induced geometric error, and reliance on user‑driven boundary input are notable gaps that should be addressed in subsequent phases to fully demonstrate the framework’s practicality for real‑time or near‑real‑time geospatial applications.
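The LCSP‑inspired alignment in (iii) can be illustrated with a textbook longest-common-subsequence routine applied to two vertex sequences. This is a minimal sketch under stated assumptions, not the paper's method: coordinates are snapped to a fixed precision so that near-identical vertices compare equal, and the merge rule in `stitch` (keep the first sequence up to the last shared vertex, then continue with the second) is one plausible way to use the match. The names `snap`, `lcs_pairs`, and `stitch` are hypothetical.

```python
def snap(points, ndigits=3):
    """Round coordinates so nearly equal vertices compare equal (assumption)."""
    return [(round(x, ndigits), round(y, ndigits)) for x, y in points]


def lcs_pairs(a, b):
    """Return index pairs (i, j) of one longest common subsequence of a and b."""
    n, m = len(a), len(b)
    # dp[i][j] = LCS length of the suffixes a[i:] and b[j:]
    dp = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n - 1, -1, -1):
        for j in range(m - 1, -1, -1):
            if a[i] == b[j]:
                dp[i][j] = dp[i + 1][j + 1] + 1
            else:
                dp[i][j] = max(dp[i + 1][j], dp[i][j + 1])
    # Walk the table from the start to recover the matched positions.
    pairs, i, j = [], 0, 0
    while i < n and j < m:
        if a[i] == b[j]:
            pairs.append((i, j))
            i += 1
            j += 1
        elif dp[i + 1][j] >= dp[i][j + 1]:
            i += 1
        else:
            j += 1
    return pairs


def stitch(a, b, ndigits=3):
    """Join two vertex sequences at their shared vertices, or return None."""
    qa, qb = snap(a, ndigits), snap(b, ndigits)
    pairs = lcs_pairs(qa, qb)
    if not pairs:
        return None  # no shared vertices, nothing to align on
    i, j = pairs[-1]
    return qa[: i + 1] + qb[j + 1:]


if __name__ == "__main__":
    left = [(0.0, 0.0), (1.0, 1.0), (2.0, 1.0), (3.0, 2.0)]
    right = [(2.0004, 1.0), (3.0, 2.0), (4.0, 2.0), (5.0, 3.0)]
    print(stitch(left, right))
```

The dynamic-programming table costs time and memory proportional to the product of the two sequence lengths, which is one reason to restrict the comparison to vertices near the shared tile edge.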
