Inference Compilation and Universal Probabilistic Programming

We introduce a method for using deep neural networks to amortize the cost of inference in models from the family induced by universal probabilistic programming languages, establishing a framework that combines the strengths of probabilistic programmi…

Authors: Tuan Anh Le, Atilim Gunes Baydin, Frank Wood

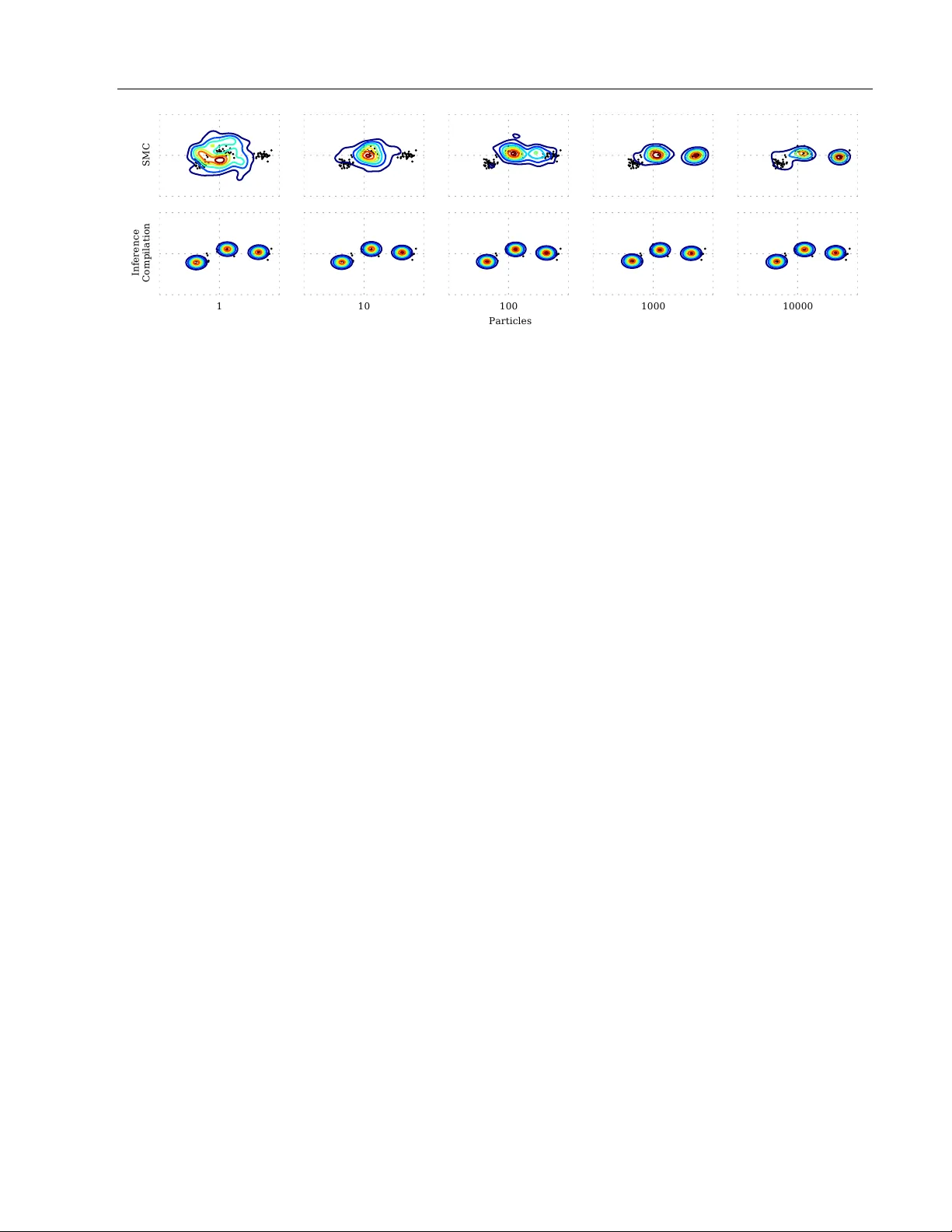

Tuan Anh Le, Atılım Güneş Baydin, Frank Wood
Department of Engineering Science, University of Oxford
{tuananh, gunes, fwood}@robots.ox.ac.uk

Abstract

We introduce a method for using deep neural networks to amortize the cost of inference in models from the family induced by universal probabilistic programming languages, establishing a framework that combines the strengths of probabilistic programming and deep learning methods. We call what we do "compilation of inference" because our method transforms a denotational specification of an inference problem in the form of a probabilistic program written in a universal programming language into a trained neural network denoted in a neural network specification language. When at test time this neural network is fed observational data and executed, it performs approximate inference in the original model specified by the probabilistic program. Our training objective and learning procedure are designed to allow the trained neural network to be used as a proposal distribution in a sequential importance sampling inference engine. We illustrate our method on mixture models and Captcha solving and show significant speedups in the efficiency of inference.

1 INTRODUCTION

Probabilistic programming uses computer programs to represent probabilistic models (Gordon et al., 2014). Probabilistic programming systems such as STAN (Carpenter et al., 2015), BUGS (Lunn et al., 2000), and Infer.NET (Minka et al., 2014) allow efficient inference in a restricted space of generative models, while systems such as Church (Goodman et al., 2008), Venture (Mansinghka et al., 2014), and Anglican (Wood et al., 2014), which we call universal, allow inference in unrestricted models.
Universal probabilistic programming systems are built upon Turing complete programming languages which support constructs such as higher order functions, stochastic recursion, and control flow.

Figure 1: Our approach to compiled inference. Given only a probabilistic program $p(\mathbf{x}, \mathbf{y})$, during compilation we automatically construct a neural network architecture comprising an LSTM core and various embedding and proposal layers specified by the probabilistic program and train this using an infinite stream of training data $\{\mathbf{x}^{(m)}, \mathbf{y}^{(m)}\}$ generated from the model. When this expensive compilation stage is complete, we are left with an artifact of weights $\phi$ and neural architecture specialized for the given probabilistic program. During inference, the probabilistic program and the compilation artifact are used in a sequential importance sampling procedure, where the artifact parameterizes the proposal distribution $q(\mathbf{x} \mid \mathbf{y}; \phi)$.

Appearing in Proceedings of the 20th International Conference on Artificial Intelligence and Statistics (AISTATS) 2017, Fort Lauderdale, Florida, USA. JMLR: W&CP volume 54. Copyright 2017 by the authors.

There has been a spate of recent work addressing the production of artifacts via "compiling away" or "amortizing" inference (Gershman and Goodman, 2014). This body of work is roughly organized into two camps. The one in which this work lives, arguably the camp organized around "wake-sleep" (Hinton et al., 1995), is about offline unsupervised learning of observation-parameterized importance-sampling distributions for Monte Carlo inference algorithms.
In this camp, the approach of Paige and Wood (2016) is closest to ours in spirit; they propose learning autoregressive neural density estimation networks offline that approximate inverse factorizations of graphical models so that at test time, the trained "inference network" starts with the values of all observed quantities and progressively proposes parameters for latent nodes in the original structured model. However, inversion of the dependency structure is impossible in the universal probabilistic program model family, so our approach instead focuses on learning proposals for "forward" inference methods in which no model dependency inversion is performed. In this sense, our work can be seen as being inspired by that of Kulkarni et al. (2015) and Ritchie et al. (2016b), where program-specific neural proposal networks are trained to guide forward inference. Our aim, though, is to be significantly less model-specific. At a high level, what characterizes this camp is the fact that the artifacts are trained to suggest sensible yet varied parameters for a given, explicitly structured and therefore potentially interpretable model.

The other related camp, emerging around the variational autoencoder (Kingma and Welling, 2014; Burda et al., 2016), also amortizes inference in the manner we describe, but additionally learns the generative model at the same time, within the structural regularization framework of a parameterized non-linear transformation of the latent variables. Approaches in this camp generally produce recognition networks that non-linearly transform observational data at test time into parameters of a variational posterior approximation, albeit one with less conditional structure, excepting the recent work of Johnson et al. (2016).
A chief advantage of this approach is that the learned model, as opposed to the recognition network, is simultaneously regularized both towards being simple to perform inference in and towards explaining the data well.

In this work, we concern ourselves with performing inference in generative models specified as probabilistic programs while recognizing that alternative methods exist for amortizing inference while simultaneously learning model structure. Our contributions are twofold:

(1) We work out ways to handle the complexities introduced when compiling inference for the class of generative models induced by universal probabilistic programming languages and establish a technique to embed neural networks in forward probabilistic programming inference methods such as sequential importance sampling (Doucet and Johansen, 2009).

(2) We develop an adaptive neural network architecture, comprising a recurrent neural network core and embedding and proposal layers specified by the probabilistic program, that is reconfigured on-the-fly for each execution trace and trained with an infinite stream of training data sampled from the generative model. This establishes a framework combining deep neural networks and generative modeling with universal probabilistic programs (Figure 1).

We begin by providing background information and reviewing related work in Section 2. In Section 3 we introduce inference compilation for sequential importance sampling, the objective function, and the neural network architecture. Section 4 demonstrates our approach on two examples, mixture models and Captcha solving, followed by the discussion in Section 5.

2 BACKGROUND

2.1 Probabilistic Programming

Probabilistic programs denote probabilistic generative models as programs that include `sample` and `observe` statements (Gordon et al., 2014).
Both `sample` and `observe` are functions that specify random variables in this generative model using probability distribution objects as an argument, while `observe`, in addition, specifies the conditioning of this random variable upon a particular observed value in a second argument. These observed values induce a conditional probability distribution over the execution traces whose approximations and expected values we want to characterize by performing inference.

An execution trace of a probabilistic program is obtained by successively executing the program deterministically, except when encountering `sample` statements, at which point a value is generated according to the specified probability distribution and appended to the execution trace. We assume the order in which the `observe` statements are encountered is fixed. Hence we denote the observed values by $\mathbf{y} := (y_n)_{n=1}^{N}$ for a fixed $N$ in all possible traces.

Depending on the probabilistic program and the values generated at `sample` statements, the order in which the execution encounters `sample` statements as well as the number of encountered `sample` statements may be different from one trace to another. Therefore, given a scheme which assigns a unique address to each `sample` statement according to its lexical position in the probabilistic program, we represent an execution trace of a probabilistic program as a sequence

$$(x_t, a_t, i_t)_{t=1}^{T}, \tag{1}$$

where $x_t$, $a_t$, and $i_t$ are respectively the sample value, address, and instance (call number) of the $t$th entry in a given trace, and $T$ is a trace-dependent length. Instance values $i_t = \sum_{j=1}^{t} \mathbb{1}(a_t = a_j)$ count the number of sample values obtained from the specific `sample` statement at address $a_t$, up to time step $t$. For each trace, a sequence $\mathbf{x} := (x_t)_{t=1}^{T}$ holds the $T$ sampled values from the `sample` statements.
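The trace representation of Eq. 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Anglican implementation: the `Trace` class, the `program` function, and the string addresses `"count"` and `"item"` are hypothetical names, and addresses are passed in explicitly rather than derived from lexical position.

```python
import random
from collections import defaultdict


class Trace:
    """Records (value, address, instance) triples, mirroring Eq. 1."""

    def __init__(self, rng):
        self.rng = rng
        self.entries = []                # list of (x_t, a_t, i_t)
        self._counts = defaultdict(int)  # address -> number of visits so far

    def sample(self, address, sampler):
        """Draw a value at a sample statement and append it to the trace."""
        self._counts[address] += 1       # instance i_t: visits to this address
        value = sampler(self.rng)
        self.entries.append((value, address, self._counts[address]))
        return value


def program(trace):
    """Hypothetical program with stochastic control flow: the number of
    draws at address "item" depends on the value drawn at "count"."""
    n = trace.sample("count", lambda rng: rng.randint(1, 4))
    return [trace.sample("item", lambda rng: rng.gauss(0.0, 1.0))
            for _ in range(n)]


trace = Trace(random.Random(0))
items = program(trace)
for value, address, instance in trace.entries:
    print(address, instance, value)
```

The trace length varies from run to run because it depends on the value drawn at `"count"`, while the instance counter distinguishes repeated visits to the same address.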
The joint probability density of an execution trace is

$$p(\mathbf{x}, \mathbf{y}) := \prod_{t=1}^{T} f_{a_t}(x_t \mid x_{1:t-1}) \prod_{n=1}^{N} g_n(y_n \mid x_{1:\tau(n)}), \tag{2}$$

where $f_{a_t}$ is the probability distribution specified by the `sample` statement at address $a_t$ and $g_n$ is the probability distribution specified by the $n$th `observe` statement. $f_{a_t}(\cdot \mid x_{1:t-1})$ is called the prior conditional density given the sample values $x_{1:t-1}$ obtained before encountering the $t$th `sample` statement. $g_n(\cdot \mid x_{1:\tau(n)})$ is called the likelihood density given the sample values $x_{1:\tau(n)}$ obtained before encountering the $n$th `observe` statement, where $\tau$ is a mapping from the index $n$ of the `observe` statement to the index of the last `sample` statement encountered before this `observe` statement during the execution of the program.

Figure 2: Results from counting and localizing objects detected in the PASCAL VOC 2007 dataset (Everingham et al., 2010). We use the corresponding categories of object detectors (i.e., person, cat, bicycle) from the MatConvNet (Vedaldi and Lenc, 2015) implementation of the Fast R-CNN (Girshick, 2015). The detector output is processed by using a high detection threshold and summarized by representing the bounding box detector output by a single central point. Inference using a single trained neural network was able to accurately identify both the number of detected objects and their locations for all categories. MAP results from 100 particles.

Inference in such models amounts to computing an approximation of $p(\mathbf{x} \mid \mathbf{y})$ and its expected values $I_\zeta = \int \zeta(\mathbf{x}) \, p(\mathbf{x} \mid \mathbf{y}) \, d\mathbf{x}$ over chosen functions $\zeta$.
While there are many inference algorithms for universal probabilistic programming languages (Wingate et al., 2011; Ritchie et al., 2016a; Wood et al., 2014; Paige et al., 2014; Rainforth et al., 2016), we focus on algorithms in the importance sampling family, in the context of which we will develop our scheme for amortized inference. This is related to, but different from, the approaches that adapt proposal distributions for the importance sampling family of algorithms (Gu et al., 2015; Cheng and Druzdzel, 2000).

2.2 Sequential Importance Sampling

Sequential importance sampling (SIS) (Arulampalam et al., 2002; Doucet and Johansen, 2009) is a method for performing inference over execution traces of a probabilistic program (Wood et al., 2014) whereby a weighted set of samples $\{(w^k, \mathbf{x}^k)\}_{k=1}^{K}$ is used to approximate the posterior and the expectations of functions as

$$\hat{p}(\mathbf{x} \mid \mathbf{y}) = \sum_{k=1}^{K} w^k \, \delta(\mathbf{x}^k - \mathbf{x}) \Big/ \sum_{j=1}^{K} w^j \tag{3}$$

$$\hat{I}_\zeta = \sum_{k=1}^{K} w^k \, \zeta(\mathbf{x}^k) \Big/ \sum_{j=1}^{K} w^j, \tag{4}$$

where $\delta$ is the Dirac delta function.

SIS requires designing proposal distributions $q_{a,i}$ corresponding to the addresses $a$ of all `sample` statements in the probabilistic program and their instance values $i$. A proposal execution trace $x^k_{1:T^k}$ is built by executing the program as usual, except when a `sample` statement at address $a_t$ is encountered at time $t$, a proposal sample value $x^k_t$ is sampled from the proposal distribution $q_{a_t,i_t}(\cdot \mid x^k_{1:t-1})$ given the proposal sample values until that point. We obtain $K$ proposal execution traces $\mathbf{x}^k := x^k_{1:T^k}$ (possibly in parallel) to which we assign weights

$$w^k = \prod_{n=1}^{N} g_n(y_n \mid x^k_{1:\tau^k(n)}) \cdot \prod_{t=1}^{T^k} \frac{f_{a_t}(x^k_t \mid x^k_{1:t-1})}{q_{a_t,i_t}(x^k_t \mid x^k_{1:t-1})} \tag{5}$$

for $k = 1, \ldots, K$, with $T^k$ denoting the length of the $k$th proposal execution trace.
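Equations 4 and 5 can be illustrated on a one-variable model. The sketch below assumes a toy model chosen purely for illustration, $x \sim \mathcal{N}(0, 1)$ and $y \sim \mathcal{N}(x, 1)$, with one `sample` and one `observe` statement and a fixed Gaussian proposal; all function names are hypothetical. Weights are kept in log space for numerical stability, which is an implementation choice not discussed in the text.

```python
import math
import random


def normal_logpdf(x, mean, std):
    """Log density of a univariate normal distribution."""
    return (-0.5 * math.log(2.0 * math.pi) - math.log(std)
            - 0.5 * ((x - mean) / std) ** 2)


def importance_sample(y, num_particles, prop_mean, prop_std, rng):
    """Return samples x^k and log-weights log w^k (Eq. 5) for the assumed
    model x ~ N(0, 1), y ~ N(x, 1) with proposal N(prop_mean, prop_std)."""
    xs, log_ws = [], []
    for _ in range(num_particles):
        x = rng.gauss(prop_mean, prop_std)
        log_w = (normal_logpdf(y, x, 1.0)                   # likelihood g
                 + normal_logpdf(x, 0.0, 1.0)               # prior f
                 - normal_logpdf(x, prop_mean, prop_std))   # proposal q
        xs.append(x)
        log_ws.append(log_w)
    return xs, log_ws


def estimate(xs, log_ws, zeta):
    """Self-normalized estimate of E[zeta(x) | y] (Eq. 4)."""
    shift = max(log_ws)                       # avoid underflow in exp
    ws = [math.exp(lw - shift) for lw in log_ws]
    return sum(w * zeta(x) for w, x in zip(ws, xs)) / sum(ws)


rng = random.Random(1)
y_observed = 2.0
xs, log_ws = importance_sample(y_observed, 20000, 0.0, 1.0, rng)  # prior as proposal
posterior_mean = estimate(xs, log_ws, lambda x: x)
print(posterior_mean)
```

For this conjugate model the exact posterior is $\mathcal{N}(y/2, 1/2)$, so with $y = 2$ the estimate should be close to 1. A proposal closer to the posterior would reach the same accuracy with fewer particles, which is the motivation for learning the proposal.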
3 APPROACH

We achieve inference compilation in universal probabilistic programming systems through proposal distribution adaptation, approximating $p(\mathbf{x} \mid \mathbf{y})$ in the framework of SIS. Assuming we have a set of adapted proposals $q_{a_t,i_t}(x_t \mid x_{1:t-1}, \mathbf{y})$ such that their joint $q(\mathbf{x} \mid \mathbf{y})$ is close to $p(\mathbf{x} \mid \mathbf{y})$, the resulting inference algorithm remains unchanged from the one described in Section 2.2, except for the replacement of $q_{a_t,i_t}(x_t \mid x_{1:t-1})$ by $q_{a_t,i_t}(x_t \mid x_{1:t-1}, \mathbf{y})$.

Inference compilation amounts to minimizing a function, specifically the loss of a neural network architecture, which makes the proposal distributions good in the sense that we specify in Section 3.1. The process of generating training data for this neural network architecture from the generative model is described in Section 3.2. At the end of training, we obtain a compilation artifact comprising the neural network components (the recurrent neural network core and the embedding and proposal layers corresponding to the original model denoted by the probabilistic program) and the set of trained weights, as described in Section 3.3.

3.1 Objective Function

We use the Kullback–Leibler divergence $D_{KL}(p(\mathbf{x} \mid \mathbf{y}) \,\|\, q(\mathbf{x} \mid \mathbf{y}; \phi))$ as our measure of closeness between $p(\mathbf{x} \mid \mathbf{y})$ and $q(\mathbf{x} \mid \mathbf{y}; \phi)$. To achieve closeness over many possible $\mathbf{y}$'s, we take the expectation of this quantity under the distribution of $p(\mathbf{y})$ and ignore the terms excluding $\phi$ in the last equality:

$$\mathcal{L}(\phi) := \mathbb{E}_{p(\mathbf{y})}\left[ D_{KL}(p(\mathbf{x} \mid \mathbf{y}) \,\|\, q(\mathbf{x} \mid \mathbf{y}; \phi)) \right] \tag{6}$$

$$= \int_{\mathbf{y}} p(\mathbf{y}) \int_{\mathbf{x}} p(\mathbf{x} \mid \mathbf{y}) \log \frac{p(\mathbf{x} \mid \mathbf{y})}{q(\mathbf{x} \mid \mathbf{y}; \phi)} \, d\mathbf{x} \, d\mathbf{y}$$

$$= \mathbb{E}_{p(\mathbf{x}, \mathbf{y})}\left[ -\log q(\mathbf{x} \mid \mathbf{y}; \phi) \right] + \text{const.} \tag{7}$$

This objective function corresponds to the negative entropy criterion.
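For readers who want the intermediate step between Eq. 6 and Eq. 7, the following worked derivation splits the logarithm and identifies the term that does not depend on $\phi$. The symbol $H$ for differential entropy is introduced here for illustration and is not part of the original notation.

```latex
\begin{align*}
\mathcal{L}(\phi)
  &= \int p(\mathbf{y}) \int p(\mathbf{x} \mid \mathbf{y})
     \left[ \log p(\mathbf{x} \mid \mathbf{y})
          - \log q(\mathbf{x} \mid \mathbf{y}; \phi) \right]
     d\mathbf{x} \, d\mathbf{y} \\
  &= \mathbb{E}_{p(\mathbf{x}, \mathbf{y})}
     \left[ -\log q(\mathbf{x} \mid \mathbf{y}; \phi) \right]
   + \mathbb{E}_{p(\mathbf{x}, \mathbf{y})}
     \left[ \log p(\mathbf{x} \mid \mathbf{y}) \right] \\
  &= \mathbb{E}_{p(\mathbf{x}, \mathbf{y})}
     \left[ -\log q(\mathbf{x} \mid \mathbf{y}; \phi) \right]
   - \mathbb{E}_{p(\mathbf{y})}
     \left[ H\big( p(\mathbf{x} \mid \mathbf{y}) \big) \right]
\end{align*}
```

The second step uses $p(\mathbf{y}) \, p(\mathbf{x} \mid \mathbf{y}) = p(\mathbf{x}, \mathbf{y})$. The entropy term depends only on the model, so it plays the role of the constant in Eq. 7, and minimizing $\mathcal{L}(\phi)$ requires only samples from the joint distribution.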
Individual adapted proposals $q_{a_t,i_t}(x_t \mid \eta_t(x_{1:t-1}, \mathbf{y}, \phi)) =: q_{a_t,i_t}(x_t \mid x_{1:t-1}, \mathbf{y})$ depend on $\eta_t$, the output of the neural network at time step $t$, parameterized by $\phi$. Considering the factorization

$$q(\mathbf{x} \mid \mathbf{y}; \phi) = \prod_{t=1}^{T} q_{a_t,i_t}(x_t \mid \eta_t(x_{1:t-1}, \mathbf{y}, \phi)), \tag{8}$$

the neural network architecture must be able to map to a variable number of outputs, and incorporate sampled values in a sequential manner, concurrent with the running of the inference engine. We describe our neural network architecture in detail in Section 3.3.

3.2 Training Data

Since Eq. 7 is an expectation over the joint distribution, we can use the following noisy unbiased estimate of its gradient to minimize the objective:

$$\frac{\partial}{\partial \phi} \mathcal{L}(\phi) \approx \frac{1}{M} \sum_{m=1}^{M} \frac{\partial}{\partial \phi} \left( -\log q(\mathbf{x}^{(m)} \mid \mathbf{y}^{(m)}; \phi) \right) \tag{9}$$

$$(\mathbf{x}^{(m)}, \mathbf{y}^{(m)}) \sim p(\mathbf{x}, \mathbf{y}), \quad m = 1, \ldots, M. \tag{10}$$

Here, $(\mathbf{x}^{(m)}, \mathbf{y}^{(m)})$ is the $m$th training (probabilistic program execution) trace generated by running an unconstrained probabilistic program corresponding to the original one. This unconstrained probabilistic program is obtained by a program transformation which replaces each `observe` statement in the original program by `sample` and ignores its second argument.

Universal probabilistic programming languages support stochastic branching and can generate execution traces with a changing (and possibly unbounded) number of random choices. We must, therefore, keep track of information about the addresses and instances of the samples $x^{(m)}_t$ in the execution trace, as introduced in Eq. 1.
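The gradient estimate of Eqs. 9 and 10 can be tried out on a model small enough to check by hand. The sketch below is an illustration under strong simplifying assumptions rather than the paper's setup: the model is the toy pair $x \sim \mathcal{N}(0, 1)$, $y \sim \mathcal{N}(x, 1)$, the proposal is a linear-Gaussian $q(x \mid y; \phi) = \mathcal{N}(a y + b, e^{s})$ instead of a neural network, and plain SGD with hand-written gradients stands in for Adam. The names `sample_joint` and `train` are hypothetical.

```python
import math
import random


def sample_joint(rng):
    """Unconstrained program: the observe statement has been replaced by
    sample, so one run yields a pair (x, y) drawn from p(x, y)."""
    x = rng.gauss(0.0, 1.0)  # sample statement: x ~ N(0, 1)
    y = rng.gauss(x, 1.0)    # former observe statement: y ~ N(x, 1)
    return x, y


def train(num_steps=3000, batch_size=64, lr=0.02, seed=0):
    """Minimize E[-log q(x | y; phi)] over phi = (a, b, s) with SGD."""
    rng = random.Random(seed)
    a, b, s = 0.0, 0.0, 0.0  # q(x | y) = N(a * y + b, std = exp(s))
    for _ in range(num_steps):
        grad_a = grad_b = grad_s = 0.0
        sigma = math.exp(s)
        for _ in range(batch_size):  # fresh minibatch, discarded afterwards
            x, y = sample_joint(rng)
            z = (x - (a * y + b)) / sigma
            # Per-sample gradients of -log q(x | y; phi).
            grad_a += -z / sigma * y
            grad_b += -z / sigma
            grad_s += 1.0 - z * z
        a -= lr * grad_a / batch_size
        b -= lr * grad_b / batch_size
        s -= lr * grad_s / batch_size
    return a, b, math.exp(s)


a, b, sigma = train()
print(a, b, sigma)
```

For this model the exact posterior is $\mathcal{N}(y/2, 1/2)$, so the learned values should approach $a = 0.5$, $b = 0$, and a standard deviation of $\sqrt{0.5} \approx 0.707$. No observed data set is used at any point; every minibatch is generated from the model itself.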
To this end, we generate our training data in the form of minibatches (Cotter et al., 2011) sampled from the generative model $p(\mathbf{x}, \mathbf{y})$:

$$\mathcal{D}_{\text{train}} = \left\{ \left( x^{(m)}_t, a^{(m)}_t, i^{(m)}_t \right)_{t=1}^{T^{(m)}}, \left( y^{(m)}_n \right)_{n=1}^{N} \right\}_{m=1}^{M}, \tag{11}$$

where $M$ is the minibatch size, and, for a given trace $m$, the sample values, addresses, and instances are respectively denoted $x^{(m)}_t$, $a^{(m)}_t$, and $i^{(m)}_t$, and the values sampled from the distributions in `observe` statements are denoted $y^{(m)}_n$.

During compilation, training minibatches are generated on-the-fly from the probabilistic generative model and streamed to a stochastic gradient descent (SGD) procedure, specifically Adam (Kingma and Ba, 2015), for optimizing the neural network weights $\phi$.

Minibatches of this infinite stream of training data are discarded after each SGD update; we therefore have no notion of a finite training set and associated issues such as overfitting to a set of training data and early stopping using a validation set (Prechelt, 1998). We do sample a validation set that remains fixed during training to compute validation losses for tracking the progress of training in a less noisy way than that admitted by the training loss.

3.3 Neural Network Architecture

Our compilation artifact is a collection of neural network components and their trained weights, specialized in performing inference in the model specified by a given probabilistic program. The neural network architecture comprises a non-domain-specific recurrent neural network (RNN) core and domain-specific observation embedding and proposal layers specified by the given program. We denote the set of the combined parameters of all neural network components $\phi$.
RNNs are a popular class of neural network architecture which are well-suited for sequence-to-sequence modeling (Sutskever et al., 2014) with a wide spectrum of state-of-the-art results in domains including machine translation (Bahdanau et al., 2014), video captioning (Venugopalan et al., 2014), and learning execution traces (Reed and de Freitas, 2016). We use RNNs in this work owing to their ability to encode dependencies over time in the hidden state. In particular, we use the long short-term memory (LSTM) architecture which helps mitigate the vanishing and exploding gradient problems of RNNs (Hochreiter and Schmidhuber, 1997).

The overall architecture (Figure 3) is formed by combining the LSTM core with a domain-specific `observe` embedding layer $f^{\text{obs}}$, and several `sample` embedding layers $f^{\text{smp}}_{a,i}$ and proposal layers $f^{\text{prop}}_{a,i}$ that are distinct for each address–instance pair $(a, i)$. As described in Section 3.2, each probabilistic program execution trace can be of different length and composed of a different sequence of addresses and instances. To handle this complexity, we define an adaptive neural network architecture that is reconfigured for each encountered trace by attaching the corresponding embedding and proposal layers to the LSTM core, creating new layers on-the-fly on the first encounter with each $(a, i)$ pair.

Evaluation starts by computing the `observe` embedding $f^{\text{obs}}(\mathbf{y})$. This embedding is computed once per trace and repeatedly supplied as an input to the LSTM at each time step. Another alternative is to supply this embedding only once in the first time step, an approach preferred by Karpathy and Fei-Fei (2015) and Vinyals et al. (2015) to prevent overfitting (also see Section 4.2).

At each time step $t$, the input $\rho_t$ of the LSTM is constructed as a concatenation of

1. the `observe` embedding $f^{\text{obs}}(\mathbf{y})$,
2. the embedding of the previous sample $f^{\text{smp}}_{a_{t-1},i_{t-1}}(x_{t-1})$, using zero for $t = 1$, and
3. the one-hot encodings of the current address $a_t$, instance $i_t$, and proposal type $\text{type}(a_t)$ of the `sample` statement

for which the artifact will generate the parameter $\eta_t$ of the proposal distribution $q_{a_t,i_t}(\cdot \mid \eta_t)$. The parameter $\eta_t$ is obtained via the proposal layer $f^{\text{prop}}_{a_t,i_t}(h_t)$, mapping the LSTM output $h_t$ through the corresponding proposal layer. The LSTM network has the capacity to incorporate inputs in its hidden state. This allows the parametric proposal $q_{a_t,i_t}(x_t \mid \eta_t(x_{1:t-1}, \mathbf{y}, \phi))$ to take into account all previous samples and all observations.

During training (compilation), we supply the actual sample values $x^{(m)}_{t-1}$ to the embedding $f^{\text{smp}}_{a_{t-1},i_{t-1}}$, and we are interested in the parameter $\eta_t$ in order to calculate the per-sample gradient $\frac{\partial}{\partial \phi} \left( -\log q_{a^{(m)}_t, i^{(m)}_t}(x^{(m)}_t \mid \eta_t(x_{1:t-1}, \mathbf{y}, \phi)) \right)$ to use in SGD.

During inference, the evaluation proceeds by requesting proposal parameters $\eta_t$ from the artifact for specific address–instance pairs $(a_t, i_t)$ as these are encountered. The value $x_{t-1}$ is sampled from the proposal distribution in the previous time step.

The neural network artifact is implemented in Torch (Collobert et al., 2011), and it uses a ZeroMQ-based protocol for interfacing with the Anglican probabilistic programming system (Wood et al., 2014). This setup allows distributed training (e.g., Dean et al. (2012)) and inference with GPU support across many machines, which is beyond the scope of this paper. The source code for our framework and for reproducing the experiments in this paper can be found on our project page. [1]

[1] https://probprog.github.io/inference-compilation/

Figure 3: The neural network architecture. $f^{\text{obs}}$: `observe` embedding; $f^{\text{smp}}_{a_{t-1},i_{t-1}}$: `sample` embeddings; $x_{t-1}$: previous sample value; $a_t$, $i_t$, $\text{type}(a_t)$: one-hot encodings of current address, instance, proposal type; $\rho_t$: LSTM input; $h_t$: LSTM output; $f^{\text{prop}}_{a_t,i_t}$: proposal layers; $\eta_t$: proposal parameters. Note that the LSTM core can possibly be a stack of multiple LSTMs.

4 EXPERIMENTS

We demonstrate our inference compilation framework on two examples. In our first example we demonstrate an open-universe mixture model. In our second, we demonstrate Captcha solving via probabilistic inference (Mansinghka et al., 2013). [2]

[2] A video of inference on real test data for both examples is available at: https://youtu.be/m-FYEXVyQjQ

4.1 Mixture Models

Mixture modeling, e.g. the Gaussian mixture model (GMM) shown in Figure 5, is about density estimation, clustering, and counting. The inference problems posed by a GMM, given a set of vector observations, are to identify how many clusters there are, where they are, and how big they are, and optionally, which data points belong to each cluster.

We investigate inference compilation for a two-dimensional GMM in which the number of clusters is unknown. Inference arises from observing the values of $y_n$ (Figure 5, line 9) and inferring the posterior number of clusters $K$ and the set of cluster mean and covariance parameters $\{\mu_k, \Sigma_k\}_{k=1}^{K}$. We assume that the input data to this model has been translated to the origin and normalized to lie within $[-1, 1]$ in both dimensions.

Figure 4: Typical inference results for an isotropic Gaussian mixture model with number of clusters fixed to $K = 3$. Rows: SMC (top) and inference compilation (bottom); columns: 1, 10, 100, 1000, and 10000 particles. Shown in all panels: kernel density estimation of the distribution over maximum a posteriori values of the means $\{\max_{\mu_k} p(\mu_k \mid \mathbf{y})\}_{k=1}^{3}$ over 50 independent runs. This figure illustrates the uncertainty in the estimate of where cluster means are for each given number of particles, or equivalently, fixed amount of computation. The top row shows that, given more computation, inference, as expected, slowly becomes less noisy in expectation. In contrast, the bottom row shows that the proposal learned and used by inference compilation produces a low-noise, highly accurate estimate given even a very small amount of computation. Effectively, the encoder learns to simultaneously localize all of the clusters highly accurately.

In order to make good proposals for such inference, the neural network must be able to count, i.e., extract and represent information about how many clusters there are and, conditioned on that, to localize the clusters. Towards that end, we select a convolutional neural network as the observation embedding, whose input is a two-dimensional histogram image of binned observed data $\mathbf{y}$.

In presenting observational data $\mathbf{y}$ assumed to arise from a mixture model to the neural network, there are some important considerations that must be accounted for. In particular, there are symmetries in mixture models (Nishihara et al., 2013) that must be broken in order for training and inference to work. First, there are $K!$ (factorial) ways to label the classes. Second, there are $N!$ ways the individual data points could be permuted. Even in experiments like ours with $K < 6$ and $N \approx 100$, this presents a major challenge for neural network training.
We break the first symmetry by, at training time, sorting the clusters by the Euclidean distance of their means from the origin and relabeling all points with a permutation that labels points from the cluster nearest the origin as coming from the first cluster, the next closest as the second, and so on. This is only approximately symmetry breaking, as many different clusters may be very nearly the same distance away from the origin. Second, we avoid the $N!$ symmetry by only predicting the number, means, and covariances of the clusters, not the individual cluster assignments. The net effect of the sorting is that the proposal mechanism will learn to propose the nearest cluster to the origin first, as it receives training data always sorted in this manner.

Figure 5: Pseudo algorithm for generating Gaussian mixtures of a variable number of clusters. At test time we observe data $y_n$ and infer $K, \{\mu_k, \Sigma_k\}_{k=1}^{K}$.

     1: procedure GaussianMixture
     2:   K ∼ p(K)                              ▷ sample number of clusters
     3:   for k = 1, ..., K do
     4:     μ_k, Σ_k ∼ p(μ_k, Σ_k)              ▷ sample cluster parameters
     5:   Generate data:
     6:   π ← uniform(1, K)
     7:   for n = 1, ..., N do
     8:     z_n ∼ p(z_n | π)                    ▷ sample class label
     9:     y_n ∼ p(y_n | z_n = k, μ_k, Σ_k)    ▷ sample data
    10:   return y_n

Figure 4, where we fix the number of clusters to 3, shows that we are able to learn a proposal that makes inference dramatically more efficient than sequential Monte Carlo (SMC) (Doucet and Johansen, 2009). Figure 2 shows one kind of application for which such an efficient inference engine can be used: simultaneous object counting (Lempitsky and Zisserman, 2010) and localization for computer vision, where we achieve counting by setting the prior $p(K)$ over the number of clusters to be a uniform distribution over $\{1, 2, \ldots, 5\}$.
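The two preprocessing steps used for the mixture model, sorting clusters by distance from the origin and binning observations into a histogram image, can be sketched as follows. This is a minimal illustration and not the authors' code: the priors over means, the fixed isotropic standard deviation of 0.05, the 10 × 10 bin grid, and all function names are assumptions made for the example.

```python
import math
import random


def sample_gmm(rng, num_points=100, max_clusters=5):
    """Sample cluster means and labelled 2-D points (assumed priors)."""
    k = rng.randint(1, max_clusters)  # K uniform over {1, ..., 5}
    means = [(rng.uniform(-0.8, 0.8), rng.uniform(-0.8, 0.8))
             for _ in range(k)]
    labels = [rng.randrange(k) for _ in range(num_points)]
    points = [(rng.gauss(means[z][0], 0.05), rng.gauss(means[z][1], 0.05))
              for z in labels]
    return means, labels, points


def sort_clusters(means, labels):
    """Relabel clusters so that index 0 is the one nearest the origin."""
    order = sorted(range(len(means)), key=lambda j: math.hypot(*means[j]))
    new_index = {old: new for new, old in enumerate(order)}
    return [means[j] for j in order], [new_index[z] for z in labels]


def histogram_2d(points, bins=10, low=-1.0, high=1.0):
    """Bin points in [low, high)^2 into a bins x bins image of counts.
    The result does not depend on the order of the points."""
    image = [[0] * bins for _ in range(bins)]
    width = (high - low) / bins
    for px, py in points:
        if low <= px < high and low <= py < high:
            col = min(bins - 1, int((px - low) / width))
            row = min(bins - 1, int((py - low) / width))
            image[row][col] += 1
    return image


rng = random.Random(0)
means, labels, points = sample_gmm(rng)
means, labels = sort_clusters(means, labels)
image = histogram_2d(points)
```

Sorting fixes one labelling out of the $K!$ equivalent ones, and the histogram removes the dependence on the order of the $N$ data points, since permuting `points` leaves `image` unchanged.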
4.2 Captcha Solving

We also demonstrate our inference compilation framework by writing generative probabilistic models for Captchas (von Ahn et al., 2003) and comparing our results with the literature. Captcha solving is well suited for a generative probabilistic programming approach because its latent parameterization is low-dimensional and interpretable by design. Using conventional computer vision techniques, the problem has been previously approached using segment-and-classify pipelines (Starostenko et al., 2015; Bursztein et al., 2014; Gao et al., 2014, 2013), and state-of-the-art results have been obtained by using deep convolutional neural networks (CNNs) (Goodfellow et al., 2014; Stark et al., 2015), at the cost of requiring very large (in the order of millions) labeled training sets for supervised learning.

Figure 6: Pseudo algorithm and a sample trace of the Facebook Captcha generative process. Variations include sampling font styles, coordinates for letter placement, and language-model-like letter identity distributions $p(\lambda \mid \lambda_{1:t-1})$ (e.g., for meaningful Captchas). Noise parameters $\pi$ may or may not be a part of inference. At test time we observe image $\gamma$ and infer $\nu, \Lambda$.

    procedure Captcha
      ν ∼ p(ν)                ▷ sample number of letters
      κ ∼ p(κ)                ▷ sample kerning value
      Generate letters:
      Λ ← {}
      for i = 1, ..., ν do
        λ ∼ p(λ)              ▷ sample letter identity
        Λ ← append(Λ, λ)
      Render:
      γ ← render(Λ, κ)
      π ∼ p(π)                ▷ sample noise parameters
      γ ← noise(γ, π)
      return γ

    Sample trace:
      t = 1: a = "ν", i = 1, x = 7
      t = 2: a = "κ", i = 1, x = −1
      t = 3: a = "λ", i = 1, x = 6
      t = 4: a = "λ", i = 2, x = 23
      t = 5: a = "λ", i = 3, x = 18
      t = 6: a = "λ", i = 4, x = 53
      t = 7: a = "λ", i = 5, x = 17
      t = 8: a = "λ", i = 6, x = 43
      t = 9: a = "λ", i = 7, x = 9
      Noise stages shown: displacement field, stroke, ellipse
We start by writing generative models for each of the types surveyed by Bursztein et al. (2014), namely Baidu 2011, Baidu 2013, eBay, Yahoo, reCaptcha, and Wikipedia. Figure 6 provides an overall summary of our modeling approach. The actual models include domain-specific letter dictionaries, font styles, and various types of renderer noise for matching each Captcha style. In particular, implementing the displacement fields technique of Simard et al. (2003) proved instrumental in achieving our results. Note that the parameters of stochastic renderer noise are not inferred in the example of Figure 6. Our experiments have shown that we can successfully train artifacts that also extract renderer noise parameters, but excluding these from the list of addresses for which we learn proposal distributions improves robustness when testing with data not sampled from the same model. This corresponds to the well-known technique of adding synthetic variations to training data for transformation invariance, as used by Simard et al. (2003), Varga and Bunke (2003), Jaderberg et al. (2014), and many others.

For the compilation artifacts we use a stack of two LSTMs of 512 hidden units each and an observe-embedding CNN consisting of six convolutions and two linear layers organized as [2 × Convolution]-MaxPooling-[3 × Convolution]-MaxPooling-Convolution-MaxPooling-Linear-Linear, where convolutions are 3 × 3 with successively 64, 64, 64, 128, 128, 128 filters, max-pooling layers are 2 × 2 with step size 2, and the resulting embedding vector is of length 1024. All convolutions and linear layers are followed by ReLU activation. Depending on the particular style, each artifact has approximately 20M trainable parameters. Artifacts are trained end-to-end using Adam (Kingma and Ba, 2015) with initial learning rate α = 0.0001, hyperparameters β1 = 0.9, β2 = 0.999, and minibatches of size 128.

Table 1 reports inference results with test images sampled from the model, where we achieve very high recognition rates across the board. The reported results are obtained after approximately 16M training traces. With the resulting artifacts, running inference on a test Captcha takes < 100 ms, whereas durations ranging from 500 ms (Starostenko et al., 2015) to 7.95 s (Bursztein et al., 2014) have been reported with segment-and-classify approaches. We also compared our approach with the one by Mansinghka et al. (2013). Their method is slow since it must be run anew for each Captcha, taking on the order of minutes to solve one Captcha in our implementation of their method. The probabilistic program must also be written in a way amenable to Markov chain Monte Carlo inference, such as having auxiliary indicator random variables for rendering letters to overcome multimodality in the posterior.

Table 1: Captcha recognition rates.

| Method | Baidu 2011 | Baidu 2013 | eBay | Yahoo | reCaptcha | Wikipedia | Facebook |
|---|---|---|---|---|---|---|---|
| Our method | 99.8% | 99.9% | 99.2% | 98.4% | 96.4% | 93.6% | 91.0% |
| Bursztein et al. (2014) | 38.68% | 55.22% | 51.39% | 5.33% | 22.67% | 28.29% | |

Rates reported by other methods, each on a subset of these styles: Starostenko et al. (2015): 91.5%, 54.6%; Gao et al. (2014): 34%, 55%, 34%; Gao et al. (2013): 51%, 36%; Goodfellow et al. (2014): 99.8%; Stark et al. (2015): 90%.

We subsequently investigated how the trained models would perform on Captcha images collected from the web. We identified Wikipedia and Facebook as two major services still making use of textual Captchas, and collected and labeled test sets of 500 images each.³ Recognition rates were initially low (< 10%); after several iterations of model modifications (involving tuning of the prior distributions for font size and renderer noise), we achieved 81% and 42% recognition rates on the real Wikipedia and Facebook datasets, respectively, considerably higher than the threshold of 1% needed to deem a Captcha scheme broken (Bursztein et al., 2011). The fact that we had to tune our priors highlights the issues of model bias and "synthetic gap" (Zhang et al., 2015) when training models with synthetic data and testing with real data.⁴

³ Facebook Captchas are collected from a page for accessing groups. Wikipedia Captchas appear on the account creation page.

In our experiments we also investigated feeding the observe embeddings to the LSTM at all time steps versus only in the first time step. We empirically verified that both methods produce equivalent results, but the latter takes significantly (approx. 3 times) longer to train. This is because we are training f_obs end-to-end from scratch, and the former setup results in more frequent gradient updates for f_obs per training trace.⁵

In summary, we only need to write a probabilistic generative model that produces Captchas sufficiently similar to those that we would like to solve. Using our inference compilation framework, we get the inference neural network architecture, training data, and labels for free. If you can create instances of a Captcha, you can break it.

5 DISCUSSION

We have explored making use of deep neural networks for amortizing the cost of inference in probabilistic programming. In particular, we transform an inference problem given in the form of a probabilistic program into a trained neural network architecture that parameterizes proposal distributions during sequential importance sampling. The amortized inference technique presented here provides a framework within which to integrate the expressiveness of universal probabilistic programming languages for generative modeling and the processing speed of deep neural networks for inference.
This merger addresses several fundamental challenges associated with its constituents: fast and scalable inference on probabilistic programs, interpretability of the generative model, an infinite stream of labeled training data, and the ability to correctly represent and handle uncertainty.

⁴ Note that the synthetic/real boundary is not always clear: for instance, we assume that the Captcha results in Goodfellow et al. (2014) closely correspond to our results with synthetic test data because the authors have access to Google's true generative process of reCaptcha images for their synthetic training data. Stark et al. (2015) both train and test their model with synthetic data.

⁵ Both Karpathy and Fei-Fei (2015) and Vinyals et al. (2015), who feed CNN output to an RNN only once, use pretrained embedding layers.

Our experimental results show that, for the family of models on which we focused, the proposed neural network architecture can be successfully trained to approximate the parameters of the posterior distribution in the sample space with nonlinear regression from the observe space. There are two aspects of this architecture that we are currently working on refining. Firstly, the structure of the neural network is not wholly determined by the given probabilistic program: the invariant LSTM core maintains long-term dependencies and acts as the glue between the embedding and proposal layers that are automatically configured for the address–instance pairs (a_t, i_t) in the program traces. We would like to explore architectures where there is a tight correspondence between the neural artifact and the computational graph of the probabilistic program.
Secondly, domain-specific observe embeddings such as the convolutional neural network that we designed for the Captcha-solving task are hand-picked from a range of fully-connected, convolutional, and recurrent architectures and trained end-to-end together with the rest of the architecture. Future work will explore automating the selection of potentially pretrained embeddings.

A limitation that comes with not learning the generative model itself, as is done by the models organized around the variational autoencoder (Kingma and Welling, 2014; Burda et al., 2016), is the possibility of model misspecification (Shalizi et al., 2009; Gelman and Shalizi, 2013). Section 3.2 explains that our training setup is exempt from the common problem of overfitting to the training set. But as demonstrated by the fact that we needed alterations in our Captcha model priors for handling real data, we do have a risk of overfitting to the model. Therefore we need to ensure that our generative model is ideally as close as possible to the true data generation process and remember that misspecification in terms of broadness is preferable to a misspecification where we have a narrow, but uncalibrated, model.

Acknowledgements

We would like to thank Hakan Bilen for his help with the MatConvNet setup and showing us how to use his Fast R-CNN implementation and Tom Rainforth for his helpful advice. Tuan Anh Le is supported by EPSRC DTA and Google (project code DF6700) studentships. Atılım Güneş Baydin and Frank Wood are supported under DARPA PPAML through the U.S. AFRL under Cooperative Agreement FA8750-14-2-0006, Sub Award number 61160290-111668.

References

M. S. Arulampalam, S. Maskell, N. Gordon, and T. Clapp. A tutorial on particle filters for online nonlinear/non-Gaussian Bayesian tracking. IEEE Transactions on Signal Processing, 50(2):174–188, 2002.

D. Bahdanau, K. Cho, and Y. Bengio. Neural machine translation by jointly learning to align and translate. In International Conference on Learning Representations (ICLR), 2014.

Y. Burda, R. Grosse, and R. Salakhutdinov. Importance weighted autoencoders. In International Conference on Learning Representations (ICLR), 2016.

E. Bursztein, M. Martin, and J. Mitchell. Text-based CAPTCHA strengths and weaknesses. In Proceedings of the 18th ACM Conference on Computer and Communications Security, pages 125–138. ACM, 2011.

E. Bursztein, J. Aigrain, A. Moscicki, and J. C. Mitchell. The end is nigh: generic solving of text-based CAPTCHAs. In 8th USENIX Workshop on Offensive Technologies (WOOT 14), 2014.

B. Carpenter, A. Gelman, M. Hoffman, D. Lee, B. Goodrich, M. Betancourt, M. A. Brubaker, J. Guo, P. Li, and A. Riddell. Stan: a probabilistic programming language. Journal of Statistical Software, 2015.

J. Cheng and M. J. Druzdzel. AIS-BN: An adaptive importance sampling algorithm for evidential reasoning in large Bayesian networks. Journal of Artificial Intelligence Research, 2000.

R. Collobert, K. Kavukcuoglu, and C. Farabet. Torch7: A MATLAB-like environment for machine learning. In BigLearn, NIPS Workshop, EPFL-CONF-192376, 2011.

A. Cotter, O. Shamir, N. Srebro, and K. Sridharan. Better mini-batch algorithms via accelerated gradient methods. In Advances in Neural Information Processing Systems, pages 1647–1655, 2011.

J. Dean, G. Corrado, R. Monga, K. Chen, M. Devin, M. Mao, M. Ranzato, A. Senior, P. Tucker, K. Yang, Q. V. Le, and A. Y. Ng. Large scale distributed deep networks. In F. Pereira, C. J. C. Burges, L. Bottou, and K. Q. Weinberger, editors, Advances in Neural Information Processing Systems 25, pages 1223–1231. Curran Associates, Inc., 2012.

A. Doucet and A. M. Johansen. A tutorial on particle filtering and smoothing: Fifteen years later. Handbook of Nonlinear Filtering, 12(656–704):3, 2009.

M. Everingham, L. Van Gool, C. K. Williams, J. Winn, and A. Zisserman. The Pascal visual object classes (VOC) challenge. International Journal of Computer Vision, 88(2):303–338, 2010.

H. Gao, W. Wang, J. Qi, X. Wang, X. Liu, and J. Yan. The robustness of hollow CAPTCHAs. In Proceedings of the 2013 ACM SIGSAC Conference on Computer & Communications Security, pages 1075–1086. ACM, 2013.

H. Gao, W. Wang, Y. Fan, J. Qi, and X. Liu. The robustness of "connecting characters together" CAPTCHAs. Journal of Information Science and Engineering, 30(2):347–369, 2014.

A. Gelman and C. R. Shalizi. Philosophy and the practice of Bayesian statistics. British Journal of Mathematical and Statistical Psychology, 66(1):8–38, 2013.

S. J. Gershman and N. D. Goodman. Amortized inference in probabilistic reasoning. In Proceedings of the 36th Annual Conference of the Cognitive Science Society, 2014.

R. Girshick. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision, pages 1440–1448, 2015.

I. J. Goodfellow, Y. Bulatov, J. Ibarz, S. Arnoud, and V. Shet. Multi-digit number recognition from street view imagery using deep convolutional neural networks. In International Conference on Learning Representations (ICLR), 2014.

N. D. Goodman, V. K. Mansinghka, D. M. Roy, K. Bonawitz, and J. B. Tenenbaum. Church: A language for generative models. In Uncertainty in Artificial Intelligence, 2008.

A. D. Gordon, T. A. Henzinger, A. V. Nori, and S. K. Rajamani. Probabilistic programming. In Future of Software Engineering, FOSE 2014, pages 167–181. ACM, 2014.

S. Gu, Z. Ghahramani, and R. E. Turner. Neural adaptive sequential Monte Carlo. In Advances in Neural Information Processing Systems, pages 2611–2619, 2015.

G. E. Hinton, P. Dayan, B. J. Frey, and R. M. Neal. The wake-sleep algorithm for unsupervised neural networks. Science, 268(5214):1158–1161, 1995.

S. Hochreiter and J. Schmidhuber. Long short-term memory. Neural Computation, 9(8):1735–1780, 1997.

M. Jaderberg, K. Simonyan, A. Vedaldi, and A. Zisserman. Synthetic data and artificial neural networks for natural scene text recognition. In Workshop on Deep Learning, NIPS, 2014.

M. J. Johnson, D. Duvenaud, A. B. Wiltschko, S. R. Datta, and R. P. Adams. Structured VAEs: Composing probabilistic graphical models and variational autoencoders. arXiv preprint arXiv:1603.06277, 2016.

A. Karpathy and L. Fei-Fei. Deep visual-semantic alignments for generating image descriptions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 3128–3137, 2015.

D. Kingma and J. Ba. Adam: A method for stochastic optimization. In International Conference on Learning Representations (ICLR), 2015.

D. P. Kingma and M. Welling. Auto-encoding variational Bayes. In International Conference on Learning Representations (ICLR), 2014.

T. D. Kulkarni, P. Kohli, J. B. Tenenbaum, and V. K. Mansinghka. Picture: a probabilistic programming language for scene perception. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015.

V. Lempitsky and A. Zisserman. Learning to count objects in images. In Advances in Neural Information Processing Systems, pages 1324–1332, 2010.

D. J. Lunn, A. Thomas, N. Best, and D. Spiegelhalter. WinBUGS - a Bayesian modelling framework: concepts, structure, and extensibility. Statistics and Computing, 10(4):325–337, 2000.

V. Mansinghka, T. D. Kulkarni, Y. N. Perov, and J. Tenenbaum. Approximate Bayesian image interpretation using generative probabilistic graphics programs. In Advances in Neural Information Processing Systems, pages 1520–1528, 2013.

V. Mansinghka, D. Selsam, and Y. Perov. Venture: a higher-order probabilistic programming platform with programmable inference. arXiv preprint arXiv:1404.0099, 2014.

T. Minka, J. Winn, J. Guiver, S. Webster, Y. Zaykov, B. Yangel, A. Spengler, and J. Bronskill. Infer.NET 2.6, 2014. Microsoft Research Cambridge. http://research.microsoft.com/infernet.

R. Nishihara, T. Minka, and D. Tarlow. Detecting parameter symmetries in probabilistic models. arXiv preprint arXiv:1312.5386, 2013.

B. Paige and F. Wood. Inference networks for sequential Monte Carlo in graphical models. In Proceedings of the 33rd International Conference on Machine Learning, volume 48 of JMLR, 2016.

B. Paige, F. Wood, A. Doucet, and Y. W. Teh. Asynchronous anytime sequential Monte Carlo. In Advances in Neural Information Processing Systems, pages 3410–3418, 2014.

L. Prechelt. Early stopping - but when? In Neural Networks: Tricks of the Trade, pages 55–69. Springer, 1998.

T. Rainforth, C. A. Naesseth, F. Lindsten, B. Paige, J.-W. van de Meent, A. Doucet, and F. Wood. Interacting particle Markov chain Monte Carlo. In Proceedings of the 33rd International Conference on Machine Learning, volume 48 of JMLR: W&CP, 2016.

S. Reed and N. de Freitas. Neural programmer-interpreters. In International Conference on Learning Representations (ICLR), 2016.

D. Ritchie, A. Stuhlmüller, and N. D. Goodman. C3: Lightweight incrementalized MCMC for probabilistic programs using continuations and callsite caching. In AISTATS 2016, 2016a.

D. Ritchie, A. Thomas, P. Hanrahan, and N. Goodman. Neurally-guided procedural models: Amortized inference for procedural graphics programs using neural networks. In Advances in Neural Information Processing Systems, pages 622–630, 2016b.

C. R. Shalizi et al. Dynamics of Bayesian updating with dependent data and misspecified models. Electronic Journal of Statistics, 3:1039–1074, 2009.

P. Y. Simard, D. Steinkraus, and J. C. Platt. Best practices for convolutional neural networks applied to visual document analysis. In Proceedings of the Seventh International Conference on Document Analysis and Recognition – Volume 2, ICDAR '03, pages 958–962, Washington, DC, 2003. IEEE Computer Society.

F. Stark, C. Hazırbaş, R. Triebel, and D. Cremers. Captcha recognition with active deep learning. In GCPR Workshop on New Challenges in Neural Computation, Aachen, Germany, 2015.

O. Starostenko, C. Cruz-Perez, F. Uceda-Ponga, and V. Alarcon-Aquino. Breaking text-based CAPTCHAs with variable word and character orientation. Pattern Recognition, 48(4):1101–1112, 2015.

I. Sutskever, O. Vinyals, and Q. V. Le. Sequence to sequence learning with neural networks. In Advances in Neural Information Processing Systems, pages 3104–3112, 2014.

T. Varga and H. Bunke. Generation of synthetic training data for an HMM-based handwriting recognition system. In Seventh International Conference on Document Analysis and Recognition, 2003, pages 618–622. IEEE, 2003.

A. Vedaldi and K. Lenc. MatConvNet – convolutional neural networks for MATLAB. In Proceeding of the ACM International Conference on Multimedia, 2015.

S. Venugopalan, H. Xu, J. Donahue, M. Rohrbach, R. Mooney, and K. Saenko. Translating videos to natural language using deep recurrent neural networks. arXiv preprint arXiv:1412.4729, 2014.

O. Vinyals, A. Toshev, S. Bengio, and D. Erhan. Show and tell: A neural image caption generator. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 3156–3164, 2015.

L. von Ahn, M. Blum, N. J. Hopper, and J. Langford. CAPTCHA: Using hard AI problems for security. In International Conference on the Theory and Applications of Cryptographic Techniques, pages 294–311. Springer, 2003.

D. Wingate, A. Stuhlmüller, and N. Goodman. Lightweight implementations of probabilistic programming languages via transformational compilation. In Proceedings of the 14th International Conference on Artificial Intelligence and Statistics, pages 770–778, 2011.

F. Wood, J. W. van de Meent, and V. Mansinghka. A new approach to probabilistic programming inference. In Proceedings of the Seventeenth International Conference on Artificial Intelligence and Statistics, pages 1024–1032, 2014.

X. Zhang, Y. Fu, S. Jiang, L. Sigal, and G. Agam. Learning from synthetic data using a stacked multichannel autoencoder. In 2015 IEEE 14th International Conference on Machine Learning and Applications (ICMLA), pages 461–464, Dec 2015. doi: 10.1109/ICMLA.2015.199.
