Impact of Link Failures on the Performance of MapReduce in Data Center Networks

In this paper, we use Mixed Integer Linear Programming (MILP) models to determine the impact of link failures on the performance of shuffling operations in MapReduce when different data center network (DCN) topologies are used. For a set of non-fatal single- and multi-link failures, the results indicate that different DCNs experience completion time degradations ranging between 5% and 40%. The best performance under link failures is achieved by a server-centric PON-based DCN.

💡 Research Summary

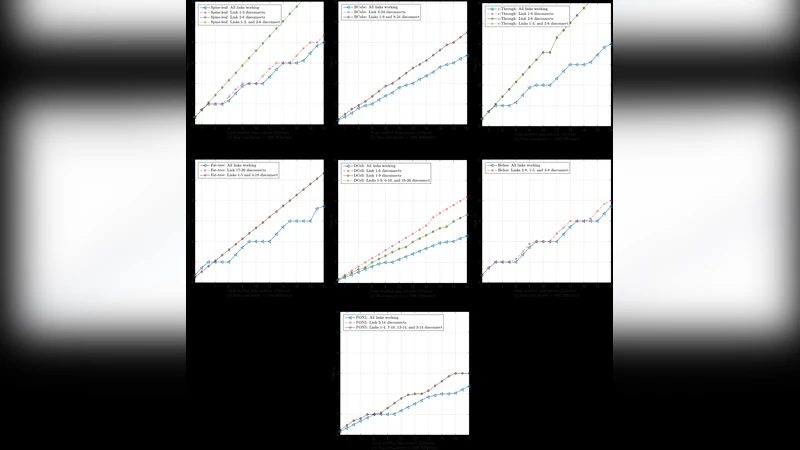

The paper investigates how link failures within data‑center networks (DCNs) affect the performance of the shuffle phase of MapReduce, a critical step where intermediate data are transferred from map tasks to reduce tasks. To quantify this impact, the authors formulate mixed‑integer linear programming (MILP) models that simultaneously minimize shuffle completion time and energy consumption under various failure scenarios. Seven representative DCN topologies are examined: Spine‑and‑Leaf, Fat‑Tree, BCube, DCell, c‑Through, Helios, and a server‑centric Passive Optical Network (PON) design. For each topology, ten map workers and six reduce workers are placed according to the configuration shown in Figure 1, and the Indy GraySort benchmark is used to generate shuffle traffic equal in size to the input data (ranging from 1 GB to 20 GB). The maximum server transmission rate is limited to 1000 MB/s, and power‑draw figures for electronic and optical equipment are taken from prior work.
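
As a rough sanity check on the scale of the shuffle, the setup above implies a simple lower bound on completion time when only server interfaces limit throughput. This sketch is not the paper's MILP; it assumes map and reduce workers sit on distinct servers, shuffle data is split evenly across mappers and reducers, the 1000 MB/s cap applies to both sending and receiving, and links never congest. The function name `shuffle_lower_bound_s` is hypothetical.

```python
# Back-of-envelope shuffle timing for the setup described above.
# ASSUMPTIONS (illustrative, not the paper's MILP): map and reduce workers run
# on distinct servers, every mapper emits an equal share of the shuffle data,
# that data is partitioned evenly over the reducers, and the per-server cap
# applies to both sending and receiving. Links are treated as uncongested, so
# the result is only a lower bound on completion time.

MAP_WORKERS = 10
REDUCE_WORKERS = 6
MAX_RATE_MB_S = 1000.0  # per-server rate cap in MB/s


def shuffle_lower_bound_s(shuffle_mb: float,
                          mappers: int = MAP_WORKERS,
                          reducers: int = REDUCE_WORKERS,
                          rate_mb_s: float = MAX_RATE_MB_S) -> float:
    """Lower bound (seconds) on shuffle completion time."""
    per_mapper_out_mb = shuffle_mb / mappers   # each mapper sends this much
    per_reducer_in_mb = shuffle_mb / reducers  # each reducer receives this much
    # The most heavily loaded server interface sets the floor.
    bottleneck_mb = max(per_mapper_out_mb, per_reducer_in_mb)
    return bottleneck_mb / rate_mb_s


if __name__ == "__main__":
    # Shuffle volume equals input size; 1 GB is taken as 1000 MB here.
    for input_gb in (1, 5, 10, 20):
        t = shuffle_lower_bound_s(input_gb * 1000)
        print(f"{input_gb:>2} GB -> at least {t:.3f} s")
```

With ten mappers and six reducers, each reducer must absorb one sixth of the shuffle data versus one tenth sent per mapper, so the reducer side sets this bound. Link contention and failures, which the MILP models explicitly, can only push the real completion time above it.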

Two failure scenarios are considered: (i) single‑link failures targeting the most heavily utilized links (e.g., links 17‑20 in Fat‑Tree and the primary links in BCube), and (ii) multi‑link failures in which two or three links are removed simultaneously. For each failure case, the MILP model recomputes the optimal routing paths so that all intermediate data still reach their destinations while still minimizing energy consumption.
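
Rerouting around removed links can be illustrated with a fewest-hop breadth-first search on a toy fabric. This is a deliberately simpler stand-in for the paper's MILP, which chooses routes jointly with rate and energy decisions; the two-spine, three-leaf topology, the node names, and the helpers `build_adj`, `failed_set`, and `reroute` are all hypothetical.

```python
from collections import deque


def build_adj(links):
    """Undirected adjacency lists from (a, b) link pairs."""
    adj = {}
    for a, b in links:
        adj.setdefault(a, []).append(b)
        adj.setdefault(b, []).append(a)
    return adj


def failed_set(*links):
    """Normalise failed links so (a, b) and (b, a) denote the same link."""
    return frozenset(frozenset(link) for link in links)


def reroute(adj, src, dst, failed=frozenset()):
    """Fewest-hop path from src to dst that avoids failed links, or None."""
    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:  # walk predecessors back to src
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in adj.get(node, []):
            if nxt in prev or frozenset((node, nxt)) in failed:
                continue  # already visited, or the link is down
            prev[nxt] = node
            queue.append(nxt)
    return None  # failure disconnected src from dst


# Hypothetical two-spine, three-leaf fabric; server h1 on L1, server h2 on L3.
LINKS = [("S1", "L1"), ("S1", "L2"), ("S1", "L3"),
         ("S2", "L1"), ("S2", "L2"), ("S2", "L3"),
         ("h1", "L1"), ("h2", "L3")]
ADJ = build_adj(LINKS)

if __name__ == "__main__":
    print(reroute(ADJ, "h1", "h2"))                             # via S1
    print(reroute(ADJ, "h1", "h2", failed_set(("L1", "S1"))))   # via S2
    print(reroute(ADJ, "h1", "h2",
                  failed_set(("L1", "S1"), ("L1", "S2"))))      # None
```

Losing one leaf-to-spine link only shifts traffic to the other spine at the same hop count, while losing both uplinks of leaf L1 disconnects h1, which is the kind of fatal failure the study excludes by considering only non-fatal cases.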

Results show a wide range of performance degradations depending on topology. Spine‑and‑Leaf suffers only modest increases (2%–6%) when links 1‑3 are cut, thanks to its abundant alternative paths. Fat‑Tree is affected more severely when a ToR‑to‑server link fails, because that link is a single point of failure whose loss can only be recovered through software‑level replication. BCube and DCell, both server‑centric designs with dual NICs per server, are more resilient to such failures; however, DCell exhibits the worst degradation (up to 40%) when its most utilized link (1‑6) is removed, indicating high sensitivity to workload placement. c‑Through and Helios, which rely on optical switching, show average degradations of 37% and 5%, respectively, under three‑link failures, reflecting the limited redundancy of their core optical switches.
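
The percentages above are relative increases in shuffle completion time over the failure-free case. A minimal sketch of that metric, assuming degradation is defined as 100 × (T_fail - T_base) / T_base and that a topology's average is the plain mean over its failure scenarios; the helper names and the timings in the example are hypothetical, not data from the paper.

```python
def degradation_pct(t_base_s: float, t_fail_s: float) -> float:
    """Relative increase (%) in completion time caused by a failure."""
    if t_base_s <= 0:
        raise ValueError("failure-free completion time must be positive")
    return 100.0 * (t_fail_s - t_base_s) / t_base_s


def mean_degradation_pct(t_base_s: float, t_fail_list: list[float]) -> float:
    """Average degradation over several failure scenarios."""
    if not t_fail_list:
        raise ValueError("need at least one failure scenario")
    values = [degradation_pct(t_base_s, t) for t in t_fail_list]
    return sum(values) / len(values)


if __name__ == "__main__":
    # Hypothetical timings in seconds, not measurements from the paper.
    baseline = 50.0
    scenarios = [51.0, 53.0]  # two hypothetical single-link failures
    print([degradation_pct(baseline, t) for t in scenarios])  # [2.0, 6.0]
    print(mean_degradation_pct(baseline, scenarios))          # 4.0
```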

The server‑centric PON architecture offers the best overall performance under link failures. Even with up to four simultaneous link failures, the average increase in shuffle completion time remains around 22%, and the design maintains competitive energy consumption because its passive optical components draw little power. This resilience stems from the dense inter‑server connectivity provided by the AWGR‑based passive optical interconnect, which offers many alternative routes without requiring active electronic switching.
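
One rough way to quantify "many alternative routes" is to count link-disjoint paths between two servers, computed here as a unit-capacity maximum flow (Edmonds-Karp). This graph metric is an illustrative choice rather than something the paper reports, and the three toy topologies are hypothetical abstractions: a single-homed server as in Fat-Tree, a dual-homed server as with two NICs per server, and a small full mesh standing in for dense inter-server connectivity.

```python
from collections import defaultdict, deque


def link_disjoint_paths(links, src, dst):
    """Count link-disjoint src->dst paths in an undirected graph.

    Uses Edmonds-Karp max flow with capacity 1 per direction on every link;
    the max-flow value equals the number of link-disjoint paths.
    """
    if src == dst:
        raise ValueError("src and dst must differ")
    cap = defaultdict(int)   # residual capacity per directed arc
    adj = defaultdict(set)   # neighbour sets
    for a, b in links:
        cap[(a, b)] += 1
        cap[(b, a)] += 1
        adj[a].add(b)
        adj[b].add(a)

    paths = 0
    while True:
        # BFS for an augmenting path in the residual graph.
        prev = {src: None}
        queue = deque([src])
        while queue and dst not in prev:
            u = queue.popleft()
            for v in adj[u]:
                if v not in prev and cap[(u, v)] > 0:
                    prev[v] = u
                    queue.append(v)
        if dst not in prev:
            return paths
        # Push one unit of flow along the path, updating residuals.
        v = dst
        while prev[v] is not None:
            u = prev[v]
            cap[(u, v)] -= 1
            cap[(v, u)] += 1
            v = u
        paths += 1


# Hypothetical toy topologies (not the paper's exact designs).
SINGLE_HOMED = [("h1", "T1"), ("h2", "T2"),
                ("T1", "A1"), ("T1", "A2"), ("T2", "A1"), ("T2", "A2")]
DUAL_HOMED = [("h1", "T1"), ("h1", "T2"), ("h2", "T1"), ("h2", "T2")]
FULL_MESH = [("h1", "h2"), ("h1", "h3"), ("h1", "h4"),
             ("h2", "h3"), ("h2", "h4"), ("h3", "h4")]

if __name__ == "__main__":
    for name, links in [("single-homed", SINGLE_HOMED),
                        ("dual-homed", DUAL_HOMED),
                        ("full mesh", FULL_MESH)]:
        print(name, link_disjoint_paths(links, "h1", "h2"))
```

The single-homed server yields exactly one path, so its access link is a single point of failure, as with the Fat-Tree ToR-to-server link; dual-homing doubles that count, and denser inter-server meshes raise it further. Path counts ignore capacity and energy, so they indicate redundancy only, not completion time.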

The authors conclude that DCN topology selection has a direct and substantial impact on MapReduce performance under failure conditions. While traditional electronic topologies can be vulnerable to single points of failure, server‑centric designs, especially those based on passive optical networking, offer both higher fault tolerance and better energy efficiency. As future work, the paper proposes extending the MILP framework with switch‑reconfiguration delays, switch failures, and variable MapReduce replication factors to give a more realistic assessment of data‑center resilience.
