From MVPs to pivots: a hypothesis-driven journey of two software startups

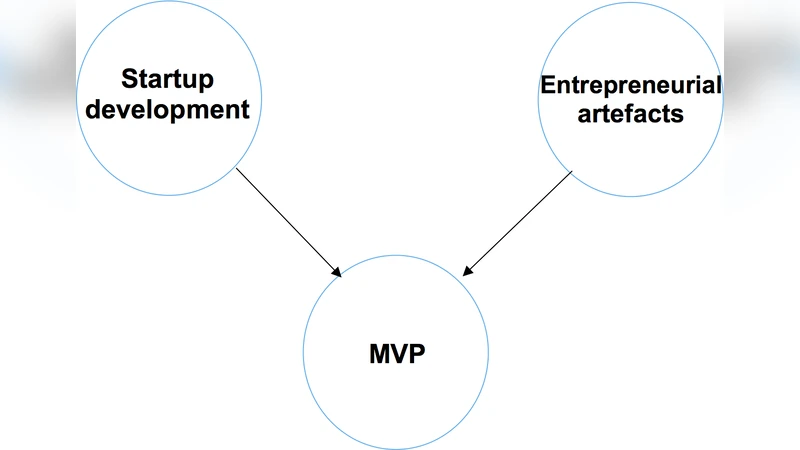

Software startups have emerged as an interesting multiperspective research area. Inspired by Lean Startup, a startup journey can be viewed as a series of experiments that validate a set of business hypotheses an entrepreneurial team makes, explicitly or implicitly, about their startup. Little is known about how startups evolve through business hypothesis testing. This study proposes a novel approach that views startup evolution as a Minimum Viable Product (MVP) creating process. We identified relationships among business hypotheses and MVPs via ethnography and post-mortem analysis in two software startups. We observe that the relationship between hypotheses and MVPs is incomplete and non-linear in these two startups. We also find that entrepreneurs do learn from testing their hypotheses. However, there are hypotheses not tested by MVPs and, conversely, MVPs not related to any business hypothesis. The approach we propose visualizes the flow of entrepreneurial knowledge across pivots via MVPs.

💡 Research Summary

The paper investigates how software startups operationalize the hypothesis‑driven approach advocated by Lean Startup, using Minimum Viable Products (MVPs) as the primary unit of analysis. By conducting an ethnographic, post‑mortem case study of two distinct startups—Startuppuccino (an Italian university‑based educational platform) and MUML AS (a Norwegian hyper‑local news service)—the authors map the relationships among business hypotheses, MVPs, and pivots.

Theoretical grounding draws on Lean Startup’s Build‑Measure‑Learn loop, the concept of MVP as a boundary object, and various experiment‑driven development frameworks (e.g., HYPEX, double‑loop sense‑making). The research design combines semi‑structured interviews, participant observation, and artifact analysis (project plans, meeting notes, technical documents, Kanban boards). Narrative coding extracts “business hypothesis,” “product idea,” and “MVP” elements, timestamps each occurrence, and constructs parent‑child links among hypotheses based on semantic meaning.
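As a rough illustration of the coding scheme described above, the sketch below stores each coded hypothesis with a timestamp and an optional parent link, then walks the parent links to recover a chain of derived hypotheses. The class name, field names, IDs, statements, and dates are all hypothetical placeholders; the paper does not publish a data model, so this is only one plausible way to represent the parent-child structure.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Hypothesis:
    """One coded business hypothesis (all fields are illustrative assumptions)."""
    hid: str                       # identifier assigned during coding
    statement: str                 # paraphrased hypothesis text
    first_seen: date               # timestamp of first occurrence in the data
    parent: Optional[str] = None   # id of the hypothesis it was derived from


def lineage(hid: str, by_id: Dict[str, Hypothesis]) -> List[str]:
    """Return the chain of ids from the root hypothesis down to `hid`."""
    chain: List[str] = []
    current: Optional[str] = hid
    while current is not None:
        chain.append(current)
        current = by_id[current].parent
    chain.reverse()
    return chain


# Placeholder data, not taken from either case study.
hypotheses = [
    Hypothesis("HA", "placeholder statement A", date(2020, 1, 10)),
    Hypothesis("HB", "placeholder statement B", date(2020, 2, 5), parent="HA"),
    Hypothesis("HC", "placeholder statement C", date(2020, 3, 1), parent="HB"),
]
by_id = {h.hid: h for h in hypotheses}

print(lineage("HC", by_id))
```

A single `parent` field assumes each hypothesis derives from at most one earlier hypothesis; if a hypothesis can have several parents, a list of parent ids and a graph traversal would be needed instead.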

In the Startuppuccino case, ten hypotheses were identified, most focusing on customer problems and value propositions. Some hypotheses (e.g., H02, H03) led to new insights that generated subsequent hypotheses (e.g., H04), illustrating a hierarchical knowledge flow. However, several hypotheses were never explicitly tested via an MVP, and certain MVPs (e.g., explainer videos, wireframes) were created without a clearly stated hypothesis, serving primarily as feedback tools. The startup underwent three pivots, each reshaping the hypothesis set and MVP strategy.

MUML AS presented eight hypotheses, centered on market entry and revenue models. The MVPs comprised prototype apps and beta versions, some produced as technical risk mitigations rather than hypothesis tests. After a nine‑month outsourcing contract, a strategic pivot incorporated the external development team as a co‑founder, demonstrating how external constraints (budget, partner performance) can trigger pivots independent of hypothesis validation.

Key findings: (1) The hypothesis‑MVP mapping is incomplete and non‑linear; not every hypothesis is tested, and not every MVP maps to a hypothesis. (2) Pivots are driven by both hypothesis outcomes and external factors, and after pivots, many prior hypotheses are revised rather than discarded. (3) Entrepreneurs do learn from MVP experiments, but learning is often informal, poorly documented, and not systematically fed back into subsequent hypothesis formulation.

These results challenge the simplistic view of Lean Startup’s Build‑Measure‑Learn cycle as a tidy linear process. The authors suggest introducing explicit hypothesis‑MVP tracking matrices and formal learning logs to improve traceability and knowledge reuse. They also recommend incorporating “constraint‑hypotheses” that capture external pressures, making pivot decisions more transparent.
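A hypothesis-MVP tracking matrix of the kind suggested here could be sketched as follows. This is a minimal illustration under stated assumptions: the function name, the IDs, and the definition of the coverage ratio (share of hypotheses tested by at least one MVP) are not taken from the paper.

```python
from typing import Dict, List, Set, Tuple


def tracking_report(
    hypotheses: List[str],
    mvps: List[str],
    tests: Set[Tuple[str, str]],
) -> Tuple[Dict[str, Dict[str, bool]], List[str], List[str], float]:
    """Build a hypothesis-by-MVP matrix and flag gaps.

    `tests` holds (mvp_id, hypothesis_id) pairs meaning "this MVP tested
    this hypothesis". Returns the matrix, the untested hypotheses, the MVPs
    linked to no hypothesis, and an assumed coverage ratio.
    """
    matrix = {h: {m: (m, h) in tests for m in mvps} for h in hypotheses}
    untested = [h for h in hypotheses if not any(matrix[h].values())]
    orphan_mvps = [m for m in mvps if not any(matrix[h][m] for h in hypotheses)]
    # Assumed definition: fraction of hypotheses tested by at least one MVP.
    coverage = (
        (len(hypotheses) - len(untested)) / len(hypotheses) if hypotheses else 0.0
    )
    return matrix, untested, orphan_mvps, coverage


# Placeholder ids, not data from either startup.
hypotheses = ["HA", "HB", "HC", "HD"]
mvps = ["M1", "M2", "M3"]
tests = {("M1", "HA"), ("M1", "HB"), ("M2", "HB")}

matrix, untested, orphan_mvps, coverage = tracking_report(hypotheses, mvps, tests)
print("untested hypotheses:", untested)
print("MVPs without a hypothesis:", orphan_mvps)
print("coverage ratio:", coverage)
```

The two gap lists correspond to the two kinds of incompleteness the study reports: hypotheses never tested by an MVP, and MVPs built without a stated hypothesis.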

Limitations include the small sample (two cases) and potential observer bias due to the researchers’ active participation in the startups. Future work should expand to a broader set of startups across domains, employ quantitative metrics (e.g., hypothesis‑to‑MVP coverage ratios, learning impact scores), and test the generalizability of the proposed visualisation framework.

Overall, the study contributes a nuanced, empirically grounded view of how hypotheses, MVPs, and pivots interact in real‑world software startups, highlighting the need for more structured learning mechanisms and better alignment between business hypotheses and product artefacts.
