Interactive Launch of 16,000 Microsoft Windows Instances on a Supercomputer

Simulation, machine learning, and data analysis require a wide range of software which can be dependent upon specific operating systems, such as Microsoft Windows. Running this software interactively on massively parallel supercomputers can present many challenges. Traditional methods of scaling Microsoft Windows applications to run on thousands of processors have typically relied on heavyweight virtual machines that can be inefficient and slow to launch on modern manycore processors. This paper describes a unique approach using the Lincoln Laboratory LLMapReduce technology in combination with the Wine Windows compatibility layer to rapidly and simultaneously launch and run Microsoft Windows applications on thousands of cores on a supercomputer. Specifically, this work demonstrates launching 16,000 Microsoft Windows applications in 5 minutes running on 16,000 processor cores. This capability significantly broadens the range of applications that can be run at large scale on a supercomputer.

Research Summary

The paper addresses a long‑standing challenge in high‑performance computing: how to run Windows‑only applications at massive scale on Linux‑based supercomputers. Traditional solutions rely on full hardware virtualization, which spins up a separate Windows OS instance for each core or node. While functional, this approach incurs prohibitive overhead in memory, storage, and especially launch latency, making it unsuitable for interactive workloads that need thousands of instances to start within a few minutes.

To overcome these limitations, the authors combine three complementary technologies. First, they employ Wine, an open‑source compatibility layer that translates Windows system calls and DLL interfaces (e.g., NTDLL, KERNEL32, USER32, GDI32) into their POSIX equivalents. Because Wine runs entirely in user space, it eliminates the need for a full guest kernel, drastically reducing the per‑instance memory footprint (from gigabytes for a VM to a few megabytes) and removing the boot phase altogether. Second, they use LLMapReduce, a tool developed at MIT Lincoln Laboratory that automates the creation of SLURM array jobs from a directory of input files. LLMapReduce scans the input set, generates a single batch script containing thousands of task entries, and submits it as one job, thereby collapsing the serial job‑submission bottleneck that plagues conventional schedulers. Third, the underlying SLURM scheduler provides a highly scalable, multi‑threaded core‑scheduling engine capable of handling tens of thousands of concurrent tasks with low latency.
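The array-job idea described above can be sketched in a few lines of Python. This is an illustrative sketch only, not LLMapReduce's actual implementation: the function name `build_array_script` and the file names are hypothetical. It relies only on the standard SLURM `--array` directive, the `SLURM_ARRAY_TASK_ID` environment variable, and the usual `wine <program> <args>` invocation.

```python
import shlex


def build_array_script(input_files, exe_path):
    """Return the text of a SLURM array batch script (hypothetical helper).

    One array task is created per input file, and each task starts a single
    Wine process on the input selected by its SLURM_ARRAY_TASK_ID.
    """
    if not input_files:
        raise ValueError("need at least one input file")

    lines = [
        "#!/bin/bash",
        # Array indices run from 0 to N-1, one per input file.
        f"#SBATCH --array=0-{len(input_files) - 1}",
        "#SBATCH --ntasks=1",
        "",
        "# One entry per array task; the task ID selects its input file.",
        "INPUTS=(",
    ]
    lines += [f"  {shlex.quote(str(p))}" for p in input_files]
    lines += [
        ")",
        # Doubled braces emit literal ${...} in the generated bash script.
        f'wine {shlex.quote(str(exe_path))} "${{INPUTS[$SLURM_ARRAY_TASK_ID]}}"',
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Assumed example inputs; a real run would list files from a directory.
    print(build_array_script(["a.dat", "b.dat", "c.dat"], "app.exe"))
```

The point of the design is that the scheduler receives one submission describing every task, rather than thousands of separate submissions made one after another.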

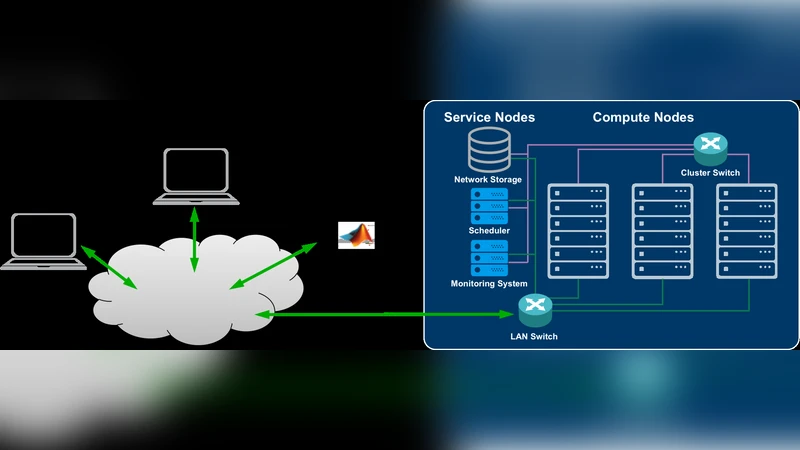

The experimental platform is the MIT SuperCloud TX‑Green system, a petascale cluster composed of 648 compute nodes, each equipped with an Intel Xeon Phi 7210 (Knights Landing) processor featuring 64 cores, 192 GB of RAM, and 16 GB of on‑package MCDRAM. The nodes are interconnected via a non‑blocking 10 GbE core switch and share a 10‑PB Lustre file system. The authors scale from a single Wine instance on one node up to 16,384 simultaneous instances across 256 nodes (64 per node), the figure reported in rounded form as 16,000. Two primary performance metrics are measured: (1) the time required to copy the Windows executable and its supporting libraries from central storage to each node’s local SSD, and (2) the launch time of the Wine processes themselves. Because each node performs its copy in parallel with the others, the I/O time stays within a few seconds even at the highest scale. The launch time results are striking: 16,000 Windows applications are started in roughly 300 seconds (5 minutes). According to the summary's comparison, this is an order of magnitude faster than launching comparable numbers of Windows VMs on Microsoft Azure (which can take tens of seconds to minutes per VM) or Linux VMs provisioned via Eucalyptus (hundreds of seconds).
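The scale figures above can be checked with simple arithmetic. The sketch below uses only numbers quoted in this summary (256 nodes, 64 cores per node, 16,000 instances, 300 seconds); the helper name `launch_stats` is hypothetical, and the resulting rate is an average derived here, not a figure reported by the paper.

```python
def launch_stats(nodes, cores_per_node, instances, launch_seconds):
    """Return (total cores, average launches per second) for a run."""
    total_cores = nodes * cores_per_node
    launches_per_second = instances / launch_seconds
    return total_cores, launches_per_second


total_cores, rate = launch_stats(
    nodes=256, cores_per_node=64, instances=16_000, launch_seconds=300
)
print(total_cores)     # 16384
print(round(rate, 1))  # 53.3
```

So 256 nodes supply 16,384 cores, enough for one instance per core, and the five-minute launch corresponds to an average of roughly 53 application starts per second across the system.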

The implications are significant. By eliminating heavyweight virtualization, the approach frees up memory and storage, allowing more instances per node and reducing overall system cost. The use of Wine preserves binary compatibility, so existing Windows binaries can run unchanged, avoiding costly code rewrites. LLMapReduce’s one‑line job submission dramatically simplifies user interaction, making large‑scale interactive sessions feasible for simulation, machine learning, and data‑analysis workloads that depend on Windows‑only tools.

Nevertheless, the method has limitations. Wine does not yet implement every Windows API, particularly newer DirectX graphics calls and certain hardware‑specific drivers, which may prevent some applications from running or cause performance degradation. Additionally, the performance gains demonstrated are tied to the many‑core Xeon Phi architecture; porting the same workflow to conventional x86‑64 clusters may require further tuning of memory affinity, network topology, and SLURM configuration.

Future work outlined by the authors includes extending compatibility (e.g., integrating GPU acceleration, improving DirectX support), scaling to even larger core counts (hundreds of thousands), and exploring hybrid models that combine lightweight containers with Wine for specialized workloads.

In summary, the paper presents a novel, practical solution for launching thousands of Windows applications on a Linux‑based supercomputer. By combining Wine’s lightweight compatibility layer (which translates API calls rather than emulating hardware), LLMapReduce’s bulk job orchestration, and SLURM’s scalable scheduling, the authors achieve rapid, interactive deployment of 16,000 Windows instances in five minutes, a performance level the summary describes as previously unattainable with traditional VM‑based methods. This work opens the door for a broader class of Windows‑dependent scientific and engineering applications to benefit from the massive parallelism of modern supercomputers.
